DreamDojo vs. Digital Twins: How Nvidia’s World Models Will Revolutionize Robot Training

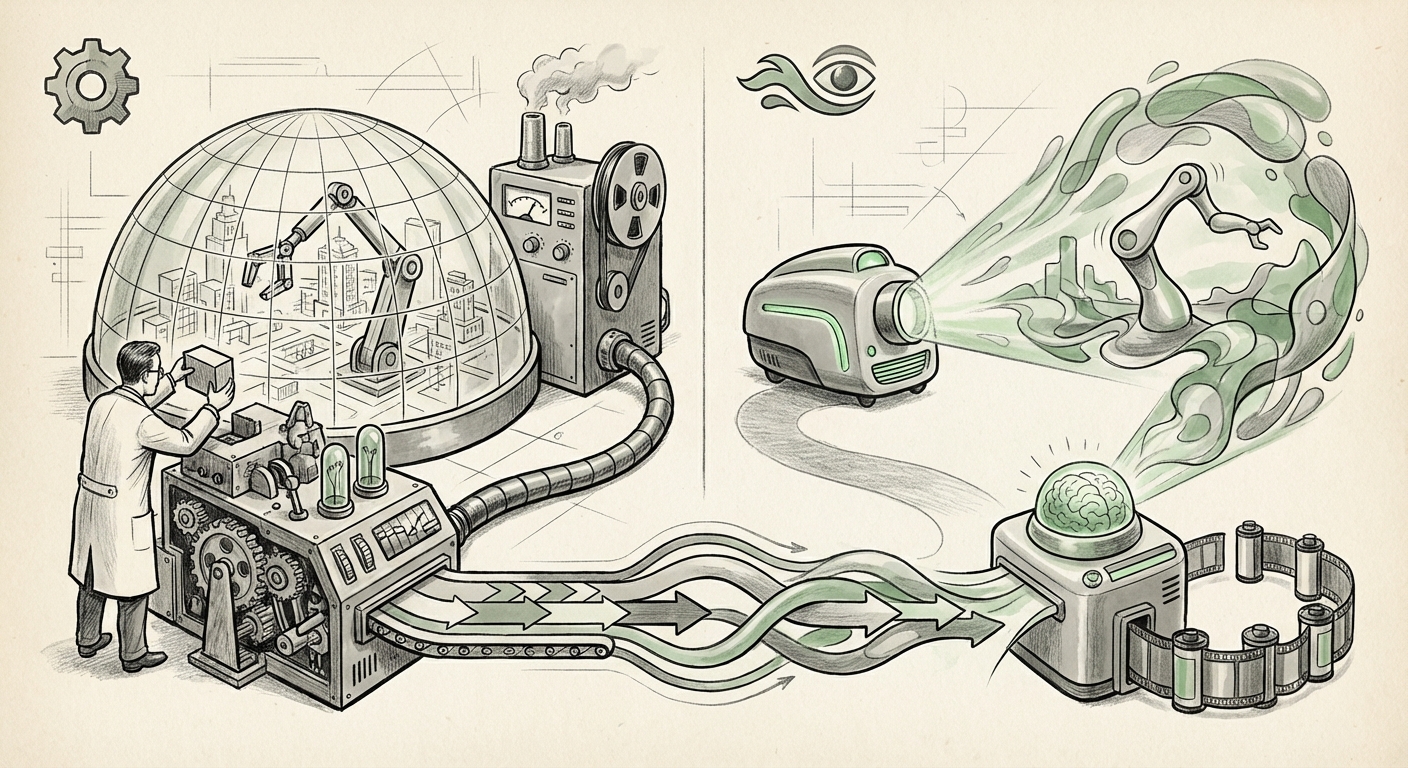

The path to creating truly intelligent, autonomous robots has always been constrained by the gap between theory and reality. For years, robotics has relied on Digital Twins: physics-accurate 3D simulations used to train agents cheaply before deploying them in the real world. However, building these twins is expensive and time-consuming, and they often fail to capture the messy complexity of reality.

Nvidia’s introduction of **DreamDojo**, an open-source world model designed for robot training, signals a potentially monumental shift. DreamDojo proposes a radical alternative: instead of relying on manually coded physics engines, the system learns to predict future states directly from raw video data. This moves robotics simulation from the domain of engineering design into the realm of large-scale generative AI.

This development is not just an incremental update; it represents a fundamental re-architecting of how embodied intelligence is developed. To understand its true disruptive potential, we must contextualize DreamDojo within the current technological landscape of foundation models and the move toward data-driven reality modeling.

The Old Way: The Tyranny of the Digital Twin

Imagine trying to teach a robot to pick up a glass of water in a factory setting. Traditionally, engineers must first build a hyper-realistic 3D model of that specific factory, including the exact friction of the floor, the reflectivity of the glass, and the precise mass distribution. This environment is the Digital Twin. Training happens here for millions of trials.

While precise within its modeled assumptions, this method has serious limitations:

- High Setup Cost: Every new environment (a kitchen, a warehouse aisle, a construction site) requires building a new, complex 3D environment from scratch.

- Physics Fidelity: Even the best simulators can struggle with subtle real-world phenomena, leading to the classic "Sim2Real" gap, where a policy that performs well in simulation fails once it is deployed on a physical robot.

Broader trends make the limitations of this manual approach clearer. Researchers are actively questioning the sustainability of physics-based modeling for complex, real-world scenarios. Comparisons of the **"World Model for Robotics"** and the **"Digital Twin"** highlight that while Digital Twins aim for perfect, explicit physics (like an engineering blueprint), world models aim for predictive competence (like instinct or intuition).

Actionable Insight for Engineers:

For simulation specialists, the emphasis is shifting from mastering Unreal Engine or Unity to mastering data pipelines and model architectures. The core skill moves from rendering accurate surfaces to curating high-quality, diverse video data that captures essential dynamics.

The DreamDojo Difference: Learning Physics from Pixels

DreamDojo operates on a different premise, echoing breakthroughs seen in large language models (LLMs). Instead of being explicitly programmed with the rules of gravity or collision, DreamDojo observes massive amounts of video data—real-world or simulated—and builds an internal, compressed representation of *how the world works*. This is the World Model.

When given an initial video frame, the model generates plausible future frames. It doesn't just render images; it predicts the trajectory and interaction of objects based on the dynamics it has internalized. Crucially, this is achieved without requiring a full 3D engine rendering pipeline for every prediction.
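To make the idea of learning dynamics from observation concrete, here is a deliberately tiny sketch. It is not DreamDojo's architecture or API. It swaps video frames for a two-number state (position, velocity) of a falling object, and every function name is hypothetical. The point is only that the update rule is estimated from recorded transitions rather than written in by hand, and that prediction is autoregressive: each predicted state becomes the input for the next step.

```python
# Toy illustration only: a 1-D "world" learned from observed states.
# Nothing here reflects DreamDojo's actual model or interface.

def simulate_ground_truth(p0, v0, steps, dt=0.1, g=-9.8):
    """Stand-in for recorded data: (position, velocity) of a falling object."""
    states = [(p0, v0)]
    for _ in range(steps):
        p, v = states[-1]
        states.append((p + v * dt, v + g * dt))
    return states


def fit_dynamics(trajectories):
    """Estimate the rule p' = p + k*v, v' = v + c from observed transitions.

    k is fit by least squares, c is the mean velocity change per step.
    The learner never sees dt or g directly.
    """
    num = den = dv_sum = 0.0
    n = 0
    for traj in trajectories:
        for (p, v), (p2, v2) in zip(traj, traj[1:]):
            num += (p2 - p) * v
            den += v * v
            dv_sum += v2 - v
            n += 1
    return num / den, dv_sum / n


def rollout(state, k, c, steps):
    """Autoregressive prediction: each predicted state feeds the next step."""
    out = [state]
    for _ in range(steps):
        p, v = out[-1]
        out.append((p + k * v, v + c))
    return out


# Fit on two recorded trajectories, then predict from an unseen start state.
training_data = [
    simulate_ground_truth(10.0, 0.0, 20),
    simulate_ground_truth(5.0, 2.0, 20),
]
k, c = fit_dynamics(training_data)
predicted = rollout((3.0, 1.0), k, c, 15)
```

A real world model replaces the two fitted numbers with a large neural network and the two-number state with video frames, but the rollout loop has the same shape.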

This aligns perfectly with the cutting edge of general AI research. Research into **"Foundation Models for Embodied AI Training"** demonstrates that pre-training large models on vast, unstructured datasets (like video) allows the model to develop robust, generalized representations of the world, which can then be quickly fine-tuned for specific tasks. DreamDojo is Nvidia applying this foundation model philosophy directly to physical control.

Imagine an LLM that has read every book ever written; it can generate coherent text because it understands grammar and context implicitly. DreamDojo is the robotics equivalent: it "reads" the dynamics of the world through video and generates valid future motions implicitly.

The Generative Control Revolution

This capability validates a wider technological trend. The power of generative AI isn't limited to creating art or text; it is now being harnessed for control. In areas like autonomous driving or optimizing city traffic flow—topics covered under the scope of **"Generative AI in Real-World Control Systems"**—models that can accurately predict complex, dynamic outcomes are essential.

For robotics, this means a robot can be trained in a world model that is refined as new observations arrive, making it potentially more adaptable than a static Digital Twin. If new data shows that a certain object is lighter than expected, the world model can be updated from that data, so the correction flows into training without an engineer re-measuring and hand-tuning simulator parameters, as a hand-built 3D physics simulation typically requires.
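As a rough picture of what adapting from new observations can look like, the sketch below uses a single learned parameter and a least-mean-squares update. This is an assumption-heavy toy, not how DreamDojo updates itself: the model is `accel = w * force`, where `w` stands in for 1/mass, and the function names and learning rate are hypothetical. Note that adaptation here is incremental, with each observation nudging the estimate.

```python
# Toy online adaptation: the model believes an object weighs 2.0 kg,
# but observations come from an object that weighs 0.5 kg.

def online_update(w, force, observed_accel, lr=0.1):
    """One least-mean-squares step on the model accel = w * force."""
    error = observed_accel - w * force
    return w + lr * error * force


def adapt(w, true_mass, force=2.0, steps=50):
    """Repeatedly observe the real response and correct the model."""
    for _ in range(steps):
        observed = force / true_mass  # what the robot actually measures
        w = online_update(w, force, observed)
    return w


initial_w = 1.0 / 2.0             # prior belief: mass = 2.0 kg
adapted_w = adapt(initial_w, true_mass=0.5)
estimated_mass = 1.0 / adapted_w  # approaches 0.5 kg as updates accumulate
```

A large video model would adapt through fine-tuning or conditioning on recent frames rather than a one-parameter update, but the loop of predict, compare, and correct is the same idea.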

Implications for Business and Automation Scalability

For business leaders and technology investors, the shift from manually engineered simulation to data-driven world models has profound economic consequences:

1. Democratization of Robotics Training

DreamDojo is open source. This is a critical strategic move by Nvidia. By releasing the core world modeling framework openly, they are lowering the barrier to entry for creating complex robotic training pipelines. Startups and smaller manufacturing firms that could never afford to license or build proprietary simulation suites can now leverage Nvidia's foundational AI research.

The competition is no longer just about who has the best physical robot arm, but who has the best data and the best world model.

2. Near-Instantaneous Domain Transfer

If the world model truly captures the underlying dynamics, training robots in one environment (e.g., a simulation mimicking an Amazon warehouse) might require significantly less fine-tuning to operate successfully in a completely different environment (e.g., a grocery store warehouse). The model carries its learned "understanding" of gravity, friction, and grasping across domains.

3. Massive Cost Reduction in Iteration

The real-world cost of robotics development lies in endless physical testing and the GPU cycles needed for traditional simulation. DreamDojo promises synthetic data generation at a fraction of the cost and time of rendering fully articulated 3D scenes. This could shorten the time-to-deployment for new robotic tasks from months to potentially weeks.

A Look at the Ecosystem Battle

As noted in research assessing the **"Open Source Robotics Simulation Ecosystem,"** environments like MuJoCo and Isaac Gym have defined the past decade. DreamDojo does not necessarily replace them immediately, but it offers a complementary layer. Where existing tools excel at precise, explicitly computed physics, DreamDojo excels at filling in the gaps when precision is secondary to realistic, adaptable prediction. The future likely involves integrating DreamDojo's generative predictions *within* existing simulation frameworks to enhance fidelity cheaply.

Navigating the New Realities: Challenges Ahead

While the promise of learning dynamics from video is compelling, the transition brings new risks that must be managed:

Reliability and Safety

If a world model is trained on imperfect video data, its predictions—and thus the resulting robot behavior—will inherit those imperfections. In industrial or safety-critical applications (like autonomous surgery or heavy machinery), engineers need verifiable guarantees. Generative, learning-based models are inherently probabilistic, whereas traditional physics engines are deterministic.

This requires robust validation techniques. We need new methods to probe the boundaries of what the world model *thinks* is true, ensuring it doesn't hallucinate unsafe outcomes during high-stakes deployment.
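One commonly used way to probe where a learned model should not be trusted is ensemble disagreement: train several models on resampled data and treat a large spread in their predictions as a warning sign. The sketch below is a toy that assumes a one-dimensional regression problem in place of video prediction. The function names and the 0.1 threshold are illustrative assumptions, and the technique is a heuristic, not a formal safety guarantee.

```python
import random
import statistics


def fit_line(points):
    """Ordinary least squares for y = a*x + b."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points)
    sxy = sum((x - mx) * (y - my) for x, y in points)
    a = sxy / sxx
    return a, my - a * mx


def train_ensemble(points, members=20, seed=0):
    """Fit each member on a bootstrap resample of the training data."""
    rng = random.Random(seed)
    return [fit_line([rng.choice(points) for _ in points]) for _ in range(members)]


def disagreement(ensemble, x):
    """Spread of the members' predictions at input x."""
    return statistics.pstdev([a * x + b for a, b in ensemble])


def is_trusted(ensemble, x, threshold=0.1):
    """Flag inputs where the members disagree more than the threshold."""
    return disagreement(ensemble, x) < threshold


# Training inputs only cover the range [0, 1).
rng = random.Random(42)
data = [(i / 50, 2.0 * (i / 50) + rng.gauss(0.0, 0.1)) for i in range(50)]
ensemble = train_ensemble(data)

inside = disagreement(ensemble, 0.5)    # within the training range
outside = disagreement(ensemble, 10.0)  # far outside the training range
```

Disagreement grows as the query moves away from the training data, which is exactly the region where a world model is most likely to produce confident-looking but wrong predictions.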

Data Dependency and Bias

DreamDojo is only as good as the video data it consumes. If the training data lacks examples of rare but critical events (e.g., a slippery surface, an object dropped unexpectedly), the robot will be brittle when encountering them. Overcoming this requires clever data augmentation or careful curation of edge cases—a modern form of bias management specific to embodied AI.
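A simple starting point for edge-case curation is to audit how often each kind of event appears in the dataset and to oversample the rare ones. The sketch below assumes each video clip carries a hypothetical `event` tag; the tag names and helper functions are invented for illustration. Oversampling only helps for events that appear at least once, so the audit also reports events with no examples at all, which have to be collected or simulated.

```python
from collections import Counter


def missing_events(clips, required_events):
    """Events that must be covered but have no example in the dataset."""
    present = {clip["event"] for clip in clips}
    return set(required_events) - present


def rebalance(clips, min_count):
    """Repeat clips of rare event types until each type has min_count examples."""
    counts = Counter(clip["event"] for clip in clips)
    balanced = list(clips)
    for event, n in counts.items():
        pool = [clip for clip in clips if clip["event"] == event]
        i = 0
        while n < min_count:
            balanced.append(pool[i % len(pool)])  # cycle through existing clips
            n += 1
            i += 1
    return balanced


# Hypothetical dataset: many routine grasps, a single dropped-object clip.
clips = [{"id": i, "event": "normal_grasp"} for i in range(8)]
clips.append({"id": 8, "event": "object_dropped"})

required = {"normal_grasp", "object_dropped", "slippery_surface"}
gaps = missing_events(clips, required)   # events with no footage at all
balanced = rebalance(clips, min_count=4)
```

Plain repetition is the crudest form of rebalancing. In practice it would be combined with augmentation so the model does not simply memorize the few rare clips.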

Actionable Takeaways for Future AI Strategy

Nvidia’s DreamDojo is a clear signal that the bottleneck in robotics is shifting from hardware to software intelligence capable of abstracting reality. For organizations looking to embed advanced AI into physical processes, here are the immediate next steps:

- Invest in Data Infrastructure: Start treating operational video feeds not just as surveillance or analytics, but as high-value training currency. The better your real-world video data, the faster you can bootstrap a DreamDojo-style world model for your specific domain.

- Explore Generative Control Early: Begin piloting small-scale tasks using world model-based reinforcement learning. Understand the trade-off between the speed of generative simulation and the absolute precision offered by traditional simulators.

- Engage the Open Source Community: Because DreamDojo is open source, early adopters can influence its development roadmap. Contributing domain-specific datasets or testing results can help shape the technology and keeps your team close to its latest iterations.
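For the second takeaway above, a small pilot can start with planning inside a model before any policy is trained. The sketch below shows model-predictive planning by exhaustive search, one simple way to use a world model for control. It is not a reinforcement learning algorithm and not a DreamDojo API. The `learned_model` function is a hypothetical stand-in for a learned predictor, and the one-dimensional task is invented for illustration.

```python
from itertools import product


def learned_model(position, action):
    """Hypothetical stand-in for a learned world model: predicts next position."""
    return position + 0.5 * action


def plan(position, goal, horizon=3, actions=(-1, 0, 1)):
    """Imagine every action sequence in the model and return the best first action."""
    best_first, best_cost = None, float("inf")
    for seq in product(actions, repeat=horizon):
        p, cost = position, 0.0
        for a in seq:
            p = learned_model(p, a)  # imagined step, no real robot involved
            cost += abs(goal - p)    # accumulated distance to the goal
        if cost < best_cost:
            best_first, best_cost = seq[0], cost
    return best_first


def run(position, goal, steps):
    """Replan at every step, executing only the first planned action.

    For simplicity the real environment is assumed to match the model exactly.
    """
    for _ in range(steps):
        position = learned_model(position, plan(position, goal))
    return position


final_position = run(0.0, goal=2.0, steps=6)
```

The trade-off mentioned in that takeaway shows up directly here: the planner is only as good as `learned_model`, so any prediction error in the world model becomes a control error on the real system.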

The move from manually building digital worlds to allowing AI to learn its own predictive worlds is perhaps the most significant leap in embodied AI since the introduction of deep reinforcement learning. DreamDojo isn't just a tool for training better robots; it's a blueprint for a future where intelligence adapts to the world faster than we can design it.