The AI Triumvirate: Tracking Capital, Geopolitics, and Model Architecture Shifts

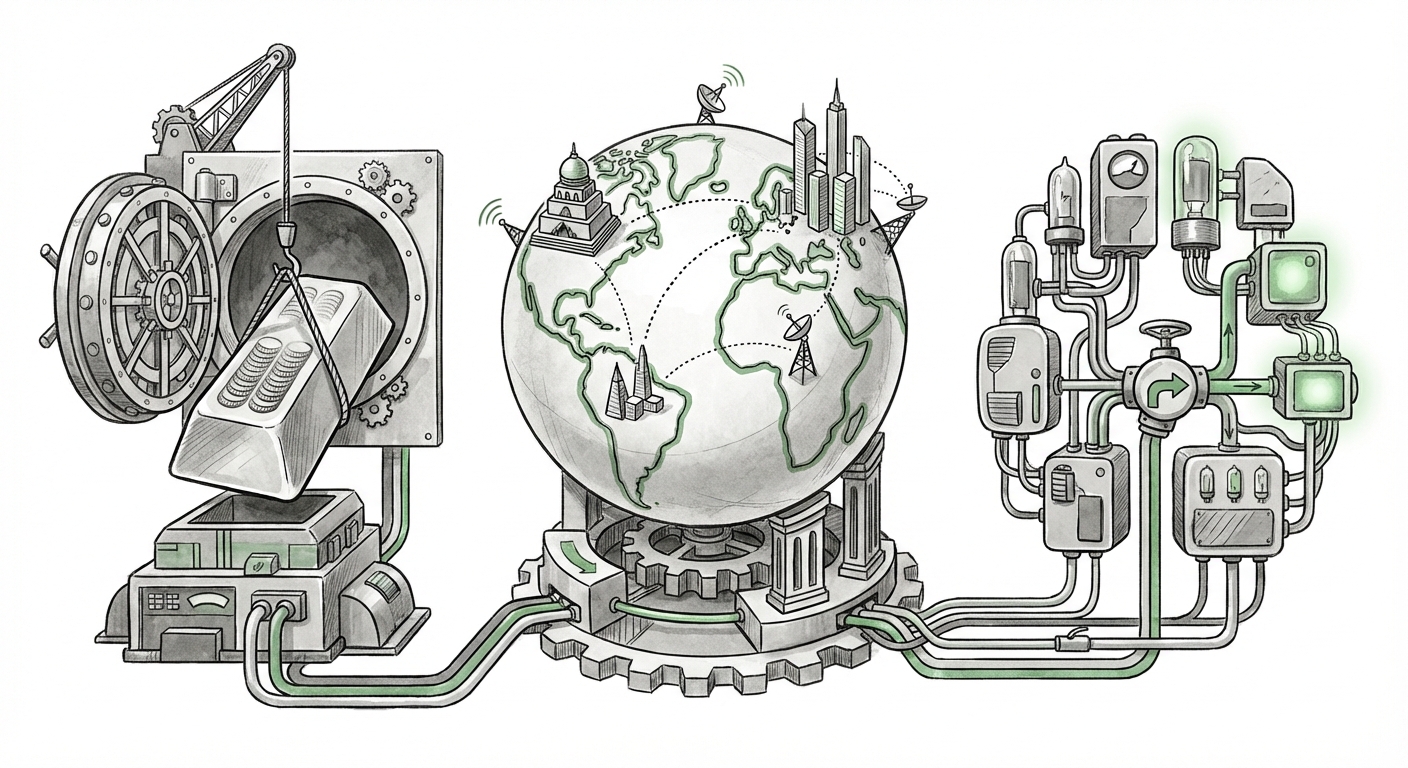

The artificial intelligence ecosystem is currently defined by three seismic forces operating in parallel: the relentless flow of massive capital, the urgent pivot toward national strategic autonomy, and fundamental leaps in how AI models are constructed. A quick glance at recent industry movements—from colossal funding rounds to high-level international summits—reveals that AI is no longer just a technological curiosity; it is the central axis around which future economic and geopolitical power will revolve.

As analysts, our job is to move beyond the headlines to understand the interplay between these forces. The story of AI right now is not singular; it is a complex triangulation between **money, power, and physics.**

Pillar 1: The Capital Conundrum – Funding the Frontier

When a foundational AI lab like OpenAI secures funding measured in tens of billions of dollars, it signals more than confidence; it reveals the staggering operational cost of staying at the cutting edge. Training and deploying the next generation of large language models (LLMs) requires specialized hardware (GPUs), enormous energy resources, and elite engineering talent, a combination that effectively gatekeeps the frontier.

The Cost of Being First

The sheer scale of recent financing rounds suggests that the leading labs still treat brute-force scaling as the main pathway toward Artificial General Intelligence (AGI). This race for scale creates a concentration risk. Industry data on venture capital funding in AI foundation models points the same way: investment is aggregating around incumbents who can absorb these costs or strike exclusive hardware partnerships.

What does this mean for the future? If only a handful of entities can afford to train the leading models, the AI innovation pipeline narrows. For CTOs and business leaders, the implication is a growing reliance on licensing these few powerful models, with the attendant risks of vendor lock-in and of standardizing on the design choices and priorities of a few highly capitalized vendors. We must keep asking whether this capital flow is fostering innovation or creating an oligopoly.

For further context on this funding dynamic, analysts often look toward market intelligence reports that track the valuation and burn rates of these hyper-scaled AI startups. The data suggests that while innovation is fast, the required resources often exceed traditional VC models, forcing hybrid structures between private equity and strategic partnerships.

Supporting Context: For a deeper dive into how VC investment patterns are skewing toward high-cost AI infrastructure, reference trends showing consolidation in deep tech financing. [PitchBook: AI funding trends 2024 summary analysis]

Pillar 2: Geopolitical AI – The Rise of National Strategies

The presence of high-level international meetings, such as India’s AI Summit, marks the transition of AI from a purely corporate endeavor to a critical component of national security and economic strategy. Nations are rapidly understanding that whoever controls the AI infrastructure, data pipelines, and regulatory frameworks will hold significant global leverage.

From Adoption to Sovereignty

For developing and emerging economies, this pivot is about more than just using existing tools; it’s about achieving digital sovereignty. They aim to build foundational models in their own languages, catering to local needs (like agriculture, local administration, or specialized healthcare), and ensuring data localization.

India, in particular, is positioning itself as a leader of this "Global South" approach to AI. By focusing governmental efforts on a robust National AI Strategy, it seeks to avoid being merely a consumer of models developed in Silicon Valley or Beijing. This involves investing in local compute capabilities and fostering a domestic talent pool.

Practical Implication: Businesses must recognize that the regulatory landscape will fragment along national lines. A model that is compliant and useful in the EU (under the AI Act) may require significant localization and re-training to integrate seamlessly into an Indian government portal. Understanding national roadmaps is now a prerequisite for global expansion.

Supporting Context: To understand the structural commitment behind these summits, examining official government strategic documents reveals the scope of national investment. [MeitY on India AI Initiative provides direct insight into stated goals.]

Pillar 3: Architectural Evolution – The Physics of Progress

While capital dictates who can build, architectural breakthroughs dictate how effectively they can build. The relentless pursuit of larger models is running into energy and economic limits. The "Next Frontier" is thus less about simply adding more parameters and more about smarter computation.

The Efficiency Imperative: Mixture of Experts (MoE)

The industry’s growing adoption of techniques like Mixture of Experts (MoE) architectures is perhaps the most significant technical development right now. Imagine a traditional dense model as a single, brilliant professor who must answer every type of question thrown at them, from quantum physics to baking recipes. Every parameter is engaged for every query, which is slow and energy-hungry.

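An MoE model, conversely, is like a large university. It has many smaller, specialized "expert" sub-networks. When a question comes in (e.g., a complex coding query), a learned routing system only wakes up the necessary few experts (the computer science team) to handle that specific task. The rest of the university stays quiet. In practice the routing typically happens per token rather than per question, and experts do not map neatly onto human subjects, but the analogy captures the core idea of sparse activation.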
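The routing step can be sketched in a few lines. This is a minimal illustration of top-k gating, not any particular lab's implementation: the function names (`top_k_route`, `moe_layer`), the toy "experts" that merely scale their input, and the choice of `k=2` are all assumptions made for the example.

```python
import math

def softmax(scores):
    """Convert raw router scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts and renormalise their weights to sum to 1."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]

def moe_layer(token, router_weights, experts, k=2):
    """Run one token through only the k selected experts and mix their outputs."""
    # One router score per expert: dot product of the token with that expert's router vector.
    logits = [sum(t * w for t, w in zip(token, w_vec)) for w_vec in router_weights]
    routes = top_k_route(logits, k)
    output = [0.0] * len(token)
    for idx, weight in routes:
        expert_out = experts[idx](token)  # experts not in `routes` are never called
        output = [o + weight * e for o, e in zip(output, expert_out)]
    return output, [idx for idx, _ in routes]

# Hypothetical toy experts: each one just scales the input by a different factor.
experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
router_weights = [[0.0, 0.0], [5.0, 0.0], [1.0, 0.0], [4.0, 0.0]]

output, used = moe_layer([1.0, 0.0], router_weights, experts, k=2)
print("experts used:", used)
```

Only two of the four experts are evaluated for this token; the other two stay idle, which is the "quiet university" in the analogy above.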

Why This Matters: This approach allows models to be very large in terms of total capacity (many parameters) while keeping the compute spent per token during actual use (inference) much lower than a dense model of the same size. The full set of experts still has to be stored in memory, so the savings are in computation rather than footprint. This technical shift democratizes high performance slightly, as it can allow smaller labs to field competitive models without the colossal compute budget of an OpenAI or Google.
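The gap between stored and active parameters is simple arithmetic. The sketch below counts only the feed-forward weights of a single MoE layer and ignores biases, the router, and attention; the dimensions are illustrative values chosen for the example, not figures from any specific model.

```python
def moe_ffn_params(d_model, d_ff, num_experts, top_k):
    """Count feed-forward weights in one MoE layer (biases and router ignored)."""
    per_expert = 2 * d_model * d_ff   # up-projection plus down-projection
    total = num_experts * per_expert  # what must be stored in memory
    active = top_k * per_expert       # what is actually computed per token
    return total, active

# Illustrative dimensions only.
total, active = moe_ffn_params(d_model=1024, d_ff=4096, num_experts=8, top_k=2)
print(f"stored: {total:,}  used per token: {active:,}  ratio: {active / total:.0%}")
# stored: 67,108,864  used per token: 16,777,216  ratio: 25%
```

With eight experts and two active per token, each token touches a quarter of the layer's expert weights, while all eight experts still need to be held in memory.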

Actionable Insight for Engineers: Expect a shift in focus from pure pre-training scale to fine-tuning and efficiency. Mastery of MoE, quantization, and efficient inference engines will become critical differentiators for ML teams in the next 18 months.

Supporting Context: Technical deep dives into modern transformer architectures clarify why efficiency is the new frontier. [Hugging Face Blog on MoE Architectures offers an excellent technical breakdown.]

The Unseen Friction: Governance and Control

These three pillars (Capital, Geopolitics, and Architecture) do not exist in a vacuum. They are constrained, and often complicated, by a fourth, often invisible, force: **Governance and Safety.**

When companies secure funding that rivals the GDP of small nations, the public and regulatory scrutiny intensifies. The debate shifts from *can* we build powerful AI, to *should* we, and *who* gets to decide the guardrails?

The rapid deployment of powerful models forces governments worldwide to scramble for frameworks. The tension between fostering innovation (backed by massive capital) and ensuring safety (demanded by society) creates regulatory uncertainty. For enterprises, this means compliance is not a single checkbox; it’s a moving target managed across multiple jurisdictions, from the comprehensive risk-based approach of the EU AI Act to the more agile, sector-specific guidance emerging from the US and Asia.

Ultimately, the next round of massive investment will likely include a significant allocation toward alignment, safety auditing, and transparency reporting, simply because regulators will demand it before allowing the next major capability leap.

Supporting Context: The global conversation around AI governance highlights the complexity of setting rules for technology that evolves monthly. [Brookings Institution on Global AI Governance discusses the challenges of international alignment.]

Synthesis: The Future Landscape of AI

The current AI moment is defined by high stakes and high velocity. What we are witnessing is the formation of a new technological structure built upon this triumvirate:

- Capital Centralization: The initial gold rush for foundational models concentrates power, but the economic necessity for efficiency (Pillar 3) will eventually allow for more agile players to gain traction in specialized applications.

- The Bifurcation of Power: Nations will increasingly build distinct AI ecosystems based on their strategic goals—one focused on pure capability (US/China axis) and another focused on sovereign utility and broad national impact (India, EU).

- The Architecture Arms Race: Technical efficiency will become the primary competitive battleground over sheer model size, leading to novel hardware-software co-design.

Actionable Insights for Stakeholders

For Business Leaders: Do not blindly follow the largest model releases. Instead, analyze your workload against the efficiency curve. Can a smaller, highly optimized MoE model running locally or on private cloud infrastructure provide 90% of the required performance at 10% of the cost? Prioritize vendor diversification based on architectural openness.

For Policymakers: The pace of hardware acquisition and model development outstrips legislative cycles. Focus governance efforts not on banning specific technologies, but on mandating transparency in training data sources and ensuring interpretability standards for high-risk deployments, especially in sovereign sectors like finance and defense.

For Technologists: The days of easily deploying monolithic models are fading. Invest deeply in prompt engineering, vector databases, and retrieval-augmented generation (RAG) systems that allow powerful base models to be grounded in proprietary, local, and compliance-friendly data. Your value lies in the application layer that connects the massive compute power to real-world, contextual problems.
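As a rough sketch of what grounding a model in local data involves, the example below retrieves the most relevant document for a question and builds a prompt around it. Everything here is a stand-in: the word-count `embed` function replaces a learned embedding model, the in-memory list replaces a vector database, the sample documents are invented, and the final call to a language model is left out.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: lowercase word counts. A real system would use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(query, documents, k=2):
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"

# Invented sample documents standing in for proprietary, compliance-relevant data.
DOCS = [
    "Customer records must be stored in the Mumbai data centre.",
    "The cafeteria opens at 8 am on weekdays.",
    "Model outputs for loan decisions require a human review step.",
]

prompt = build_prompt("Where must customer records be stored?", DOCS, k=1)
print(prompt)
```

The base model never needs retraining in this pattern; the organization controls what it sees by controlling the document store, which is where data localization and compliance requirements can be enforced.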

The convergence of unprecedented funding, intense national interest, and sophisticated architectural improvements ensures that the pace of AI transformation will only accelerate. The next phase belongs not just to those with the biggest budgets, but to those who can strategically navigate the friction between capital ambition, geopolitical demands, and the fundamental laws of computational efficiency.