The AI Gold Rush's Hidden Cost: Why Model Expenses Are Exploding Beyond Revenue Projections

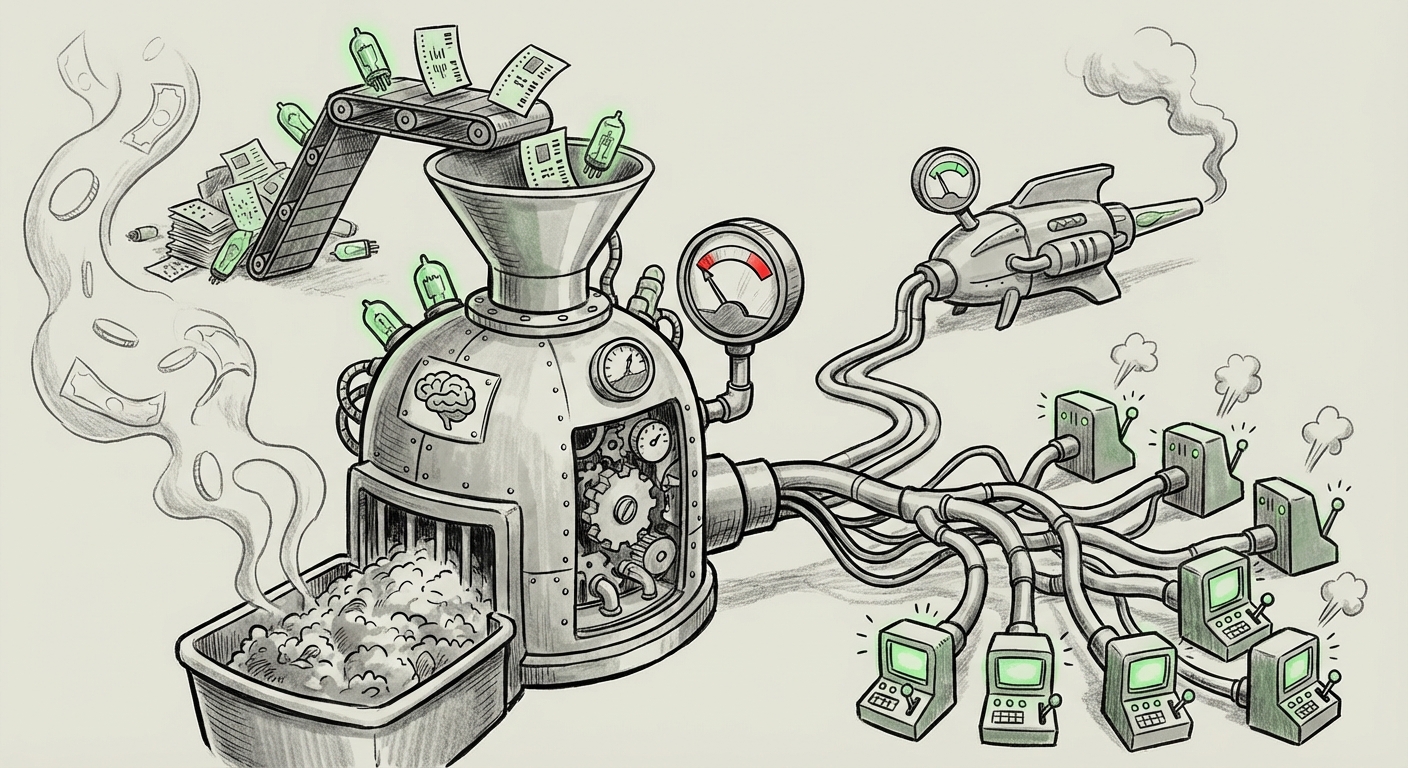

The narrative around generative AI has been one of explosive growth, rapid adoption, and seemingly limitless potential. Companies like OpenAI, which sit at the bleeding edge of foundational model development, are driving this revolution. However, recent reports that OpenAI has significantly raised its cash burn forecast, warning investors that operating costs are climbing faster than revenue, tell a far more sober story about the economic realities underpinning the AI boom.

This isn't just a minor accounting adjustment; it's a critical juncture. It signals that the computational appetite of Artificial General Intelligence (AGI) research is exceeding even the most ambitious projections. To truly understand the future of AI, we must look beyond the headlines and dissect the core drivers of this massive outflow, focusing on infrastructure, deployment economics, and the emerging race for efficiency.

The Unstoppable Thirst for Compute: Hardware as the Primary Bottleneck

The most immediate culprit behind spiraling AI costs is the hardware itself. Training state-of-the-art models, the gargantuan runs behind GPT-4 and its successors, requires astronomical amounts of specialized processing power, overwhelmingly in the form of NVIDIA's Graphics Processing Units (GPUs), such as the H100 and its Blackwell-generation successors.

When a company like OpenAI updates its cash burn forecast, the change often reflects plans to secure the next massive tranche of compute clusters. From a supply-chain perspective, demand for these chips remains insatiable. Cloud providers (Microsoft Azure, AWS, Google Cloud) are placing orders worth billions of dollars, often forcing leading-edge AI developers to compete fiercely for limited supply. This competition drives capital expenditure (CapEx) up dramatically.

The infrastructure reality is stark: if the training cost for the next frontier model is projected in the tens of billions of dollars, while valuation models assumed fewer or cheaper training cycles or faster monetization, the financial runway shortens considerably. We are witnessing the economic equivalent of a space race, where the primary constraint is not scientific creativity but the ability to procure the necessary, and extraordinarily expensive, launch vehicles.

Implication for the Future: The End of 'Bigger is Always Better'

For hardware investors and cloud strategists, this high cost validates the current investment cycle in specialized AI silicon. However, for AI developers, it creates a hard ceiling. If training one model costs $100 billion, only a handful of entities globally can afford to play at the very top tier. This concentrates power and dictates that future R&D must prioritize computational efficiency just as much as capability improvement.

The Hidden Drain: Inference Economics Outpacing Training

Initially, AI economics centered on the immense, one-time cost of training a massive model. But as these models move from the lab into widespread commercial deployment, the true long-term drain is revealed: inference. Inference is the act of running the trained model every time a user asks a question, generates an image, or drafts an email, and its cost is incurred on every single request.

For a model used by millions of people, the cumulative cost of serving every one of their queries can quickly dwarf the initial training cost. Reports on the Total Cost of Ownership (TCO) of running large language models (LLMs) at scale consistently show that while training is a massive upfront investment, inference becomes the persistent, grinding operating expense (OpEx).
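To make the training-versus-inference comparison concrete, here is a back-of-envelope sketch that computes how many days of serving it takes for cumulative inference spend to overtake a one-time training bill. It assumes a flat cost per query and constant daily traffic; the function names are hypothetical, and every dollar figure and query volume is an illustrative placeholder, not a reported number for OpenAI or any other company.

```python
def daily_inference_cost(queries_per_day: float, cost_per_query: float) -> float:
    """Recurring serving cost (OpEx) per day, assuming a flat cost per query."""
    return queries_per_day * cost_per_query


def days_until_inference_exceeds_training(training_cost: float,
                                          queries_per_day: float,
                                          cost_per_query: float) -> float:
    """Days of serving after which cumulative inference spend equals the one-time training cost."""
    per_day = daily_inference_cost(queries_per_day, cost_per_query)
    if per_day <= 0:
        return float("inf")  # no traffic means inference spend never catches up
    return training_cost / per_day


if __name__ == "__main__":
    # Hypothetical placeholder inputs, not real figures for any company.
    training_cost = 1_000_000_000   # $1B one-time training run
    queries_per_day = 500_000_000   # 500M queries per day
    cost_per_query = 0.005          # $0.005 of GPU time per query

    per_day = daily_inference_cost(queries_per_day, cost_per_query)
    days = days_until_inference_exceeds_training(training_cost, queries_per_day, cost_per_query)
    print(f"Inference spend: ${per_day:,.0f} per day")
    print(f"Cumulative inference passes the training bill after ~{days:,.0f} days")
```

With these placeholder inputs, serving costs $2.5 million per day and overtakes the $1 billion training run in about 400 days. Because the break-even point is inversely proportional to traffic, doubling daily queries halves that window, so adoption that outruns projections shows up in operating costs quickly.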

Why is this happening faster than expected? One explanation is that the adoption curve is steeper than anticipated; another is that the initial models deployed, while powerful, were architecturally inefficient for mass serving. If OpenAI is raising its cash burn forecast, a plausible reading is that its user base, or the complexity of the tasks those users perform, demands more GPU time per query than the company modeled when setting its initial revenue targets. In other words, the *utility* it provides may cost more to deliver than the price it can charge for it.

Implication for the Future: The Rise of Efficient Application Layers

Businesses relying on third-party LLMs must urgently pivot from simply consuming APIs to optimizing their usage. For SaaS founders, this means aggressively implementing strategies like prompt engineering to reduce token count, utilizing smaller, fine-tuned models for specific tasks, and caching results. The era of carelessly feeding massive context windows into foundational models for every task is economically unsustainable.
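Caching is the simplest of these strategies to illustrate. The following is a minimal sketch, assuming an exact-match policy: a repeated prompt to the same model (after whitespace normalization) returns the stored answer instead of triggering a new paid call. `ResponseCache` and `fake_call_model` are hypothetical names, and the stub stands in for whatever provider SDK you actually use.

```python
import hashlib
from collections import OrderedDict
from typing import Callable


def fake_call_model(model: str, prompt: str) -> str:
    """Hypothetical stand-in for a paid LLM API call."""
    return f"[{model}] answer to: {prompt}"


class ResponseCache:
    """Exact-match LRU cache for model responses, keyed on model name + normalized prompt."""

    def __init__(self, max_entries: int = 10_000) -> None:
        self.max_entries = max_entries
        self._store: "OrderedDict[str, str]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Collapse runs of whitespace so trivially different prompts share one entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str,
                    call_model: Callable[[str, str], str]) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            self._store.move_to_end(key)  # mark as most recently used
            return self._store[key]
        self.misses += 1
        response = call_model(model, prompt)  # the only path that spends tokens
        self._store[key] = response
        if len(self._store) > self.max_entries:
            self._store.popitem(last=False)  # evict the least recently used entry
        return response


if __name__ == "__main__":
    cache = ResponseCache(max_entries=1_000)
    cache.get_or_call("small-model", "What is our refund policy?", fake_call_model)
    cache.get_or_call("small-model", "What is  our refund policy? ", fake_call_model)
    print(f"hits={cache.hits}, misses={cache.misses}")
```

Exact matching is deliberately conservative: it never returns an answer to a different question, but it also misses paraphrases. Semantic caching on embeddings catches more repeats at the risk of serving a subtly wrong answer, so it needs its own quality checks.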

The Counter-Revolution: The Race for Efficiency and Small Models

In response to the unsustainable economics of frontier models, the industry is seeing a powerful counter-revolution focused on efficiency. If you cannot afford to build the biggest engine, you must build the most efficient one.

Researchers are intensely focused on several key areas to slash inference costs:

- Quantization: This process reduces the numerical precision of the model's weights (and sometimes activations), e.g., from 16-bit to 4-bit values, drastically shrinking the memory footprint and speeding up inference, often with surprisingly little loss in output quality.

- Model Distillation: Training a smaller, cheaper "student" model to mimic the output quality of a giant, expensive "teacher" model.

- Sparsity and Mixture of Experts (MoE): An MoE layer routes each token to a small subset of specialized "expert" subnetworks, so only a fraction of a massive model's parameters is active at any step, dramatically cutting the computation required per user request.
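The memory side of quantization is simple arithmetic, and the core idea fits in a few lines. The sketch below assumes the simplest possible scheme, symmetric per-tensor quantization with one shared scale; production 4-bit methods typically use per-group scales and calibration data, so treat this as an illustration of the principle rather than a recipe. The 70-billion-parameter model size is hypothetical.

```python
def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Memory needed to store the weights alone (ignores activations and KV cache)."""
    return num_params * bits_per_param / 8 / 1e9


def quantize_symmetric(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats onto signed integer levels in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer level, then clamp to the representable range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integer levels."""
    return [level * scale for level in q]


if __name__ == "__main__":
    params = 70_000_000_000  # hypothetical 70B-parameter model
    print(f"16-bit weights: {weight_memory_gb(params, 16):.0f} GB")
    print(f" 4-bit weights: {weight_memory_gb(params, 4):.0f} GB")

    weights = [0.12, -0.5, 0.33, 0.9, -0.07]
    q, scale = quantize_symmetric(weights, bits=4)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"levels={q}, scale={scale:.4f}, worst error={worst:.4f}")
```

For the hypothetical 70B model, the weights shrink from 140 GB to 35 GB, which for the weights alone is the difference between needing two 80 GB accelerators and fitting on one. The rounding error per weight is bounded by half the scale, which is why a few large outlier values, by inflating the scale, are the main obstacle to low-bit quantization.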

The existence of highly capable open-source models that can run locally or on smaller cloud instances (as discussed in analyses of emerging efficiency breakthroughs) shows that raw parameter count is no longer the sole determinant of value. OpenAI's high burn rate suggests that it is still heavily invested in scaling the *largest* models, and perhaps that it is finding it harder than competitors to move key functionality onto radically more efficient architectures.

Implication for the Future: Democratization vs. Centralization

This efficiency drive is crucial for the long-term democratization of AI. If the only way to achieve high performance is through multi-billion dollar training runs, AI power remains centralized. Efficiency breakthroughs allow smaller companies and individual developers to compete effectively, fostering a healthier, more diverse ecosystem.

The Investor Reckoning: Demanding Profitability Timelines

The financial pressure cooker facing OpenAI also reflects a broader shift in venture capital (VC) sentiment. While early-stage AI funding was characterized by a "growth at all costs" mentality, the mood is hardening. Investors are now acutely aware of the capital intensity required to sustain this level of research and deployment.

When major funding rounds occur, the questions are shifting from "How big can you make it?" to "When will you stop needing this much money?" The escalating cash burn forecast forces this conversation into the mainstream. Investors are scrutinizing the path to profitability, especially for models that require constant, expensive iteration.

This trend suggests a necessary maturity in the AI sector. The initial frenzy, which rewarded sheer technological possibility, is now yielding to rigorous business fundamentals. We may see a bifurcation in the market:

- The "AGI Titans": A few heavily capitalized players (like OpenAI/Microsoft, Google DeepMind) focused purely on frontier capability, financed by strategic corporate backing rather than pure VC metrics.

- The "Efficiency Experts": Startups and established firms that focus on delivering high-value, narrowly scoped AI solutions using smaller, cost-effective models that can achieve profitability quickly.

Implication for the Future: Strategic Partnerships Over Pure Play

For any company that depends on massive compute budgets, deep strategic partnerships with hyperscalers (like Microsoft or Amazon) become non-negotiable. Pure-play AI startups that lack a clear, near-term revenue model built on low operating costs will find it increasingly difficult to secure their next funding round.

Actionable Insights for Technology Leaders

What does this economic pressure cooker mean for businesses planning their AI roadmaps today? The time for passive experimentation is over; strategic implementation and financial diligence are paramount.

1. Audit Your Inference Load Ruthlessly

Every business integrating LLMs into customer-facing products must establish a clear Cost-Per-Query (CPQ) metric. If your CPQ is too high, redesign the workflow. Can a simpler, open-source model handle 80% of the requests? Can you use a smaller model for the initial screening before escalating to a frontier model?
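The screening-then-escalation idea translates directly into a blended CPQ. The sketch below assumes every request first goes to a small model, a fixed share is escalated to a frontier model (so escalated requests pay for both calls), and both models see the same token counts. The `ModelTier` class, the model names, and all per-token prices are hypothetical placeholders; substitute your provider's actual rates and your measured token counts.

```python
from dataclasses import dataclass


@dataclass
class ModelTier:
    name: str
    input_price_per_1k: float   # $ per 1,000 input tokens (hypothetical)
    output_price_per_1k: float  # $ per 1,000 output tokens (hypothetical)

    def cost_per_query(self, input_tokens: int, output_tokens: int) -> float:
        return (input_tokens * self.input_price_per_1k
                + output_tokens * self.output_price_per_1k) / 1000


def cascade_cpq(small: ModelTier, large: ModelTier,
                input_tokens: int, output_tokens: int,
                escalation_rate: float) -> float:
    """Average cost per query when every request hits `small` first and a
    fraction `escalation_rate` is re-sent to `large` (both calls are paid)."""
    if not 0.0 <= escalation_rate <= 1.0:
        raise ValueError("escalation_rate must be between 0 and 1")
    return (small.cost_per_query(input_tokens, output_tokens)
            + escalation_rate * large.cost_per_query(input_tokens, output_tokens))


if __name__ == "__main__":
    small = ModelTier("small-open-model", 0.0002, 0.0006)
    frontier = ModelTier("frontier-model", 0.005, 0.015)
    tokens_in, tokens_out = 1_500, 300

    baseline = frontier.cost_per_query(tokens_in, tokens_out)
    blended = cascade_cpq(small, frontier, tokens_in, tokens_out, escalation_rate=0.2)
    print(f"Frontier-only CPQ: ${baseline:.5f}")
    print(f"Cascade CPQ (20% escalated): ${blended:.5f}")
    print(f"Savings: {1 - blended / baseline:.0%}")
```

With these placeholder prices and the 80/20 split from the question above, the blended CPQ comes out at roughly a quarter of the frontier-only figure. The escalation rate is the lever to watch: every escalated request is paid for twice, so a small model that escalates too often erodes the savings.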

2. Diversify Your Compute Strategy

Do not anchor your entire strategy to a single cloud provider or a single hardware vendor (e.g., NVIDIA). Explore cloud offerings optimized for efficiency, investigate partnerships around specialized silicon (such as custom AI accelerators), and be prepared to deploy models across different infrastructure stacks so that no single supplier holds too much pricing leverage.

3. Invest in Model Optimization Skills

The new premium hires will not just be those who know how to prompt, but those who understand model quantization, fine-tuning techniques, and optimizing inference pipelines. Efficiency engineering is now a core AI competency, directly tied to your bottom line.

Conclusion: The Maturation of the AI Economy

OpenAI’s increased cash burn forecast is not a sign of failure; it is a sign of the immense, almost incomprehensible scale of the resources required to push the boundaries of intelligence. It forces the entire technology sector to transition from the romantic phase of "Can we build it?" to the pragmatic phase of "Can we afford to run it?"

The future of AI will not be defined solely by the largest model in the world, but by the most efficient deployed model that solves a real business problem at scale. The race is shifting from sheer brute-force training towards engineering elegance. The companies that master efficiency in the coming years will not only survive the high costs of the current boom but will define the profitable, scalable AI applications of the next decade.