The AI Agent Divide: Why Software Development Leads the Revolution While Other Industries Lag

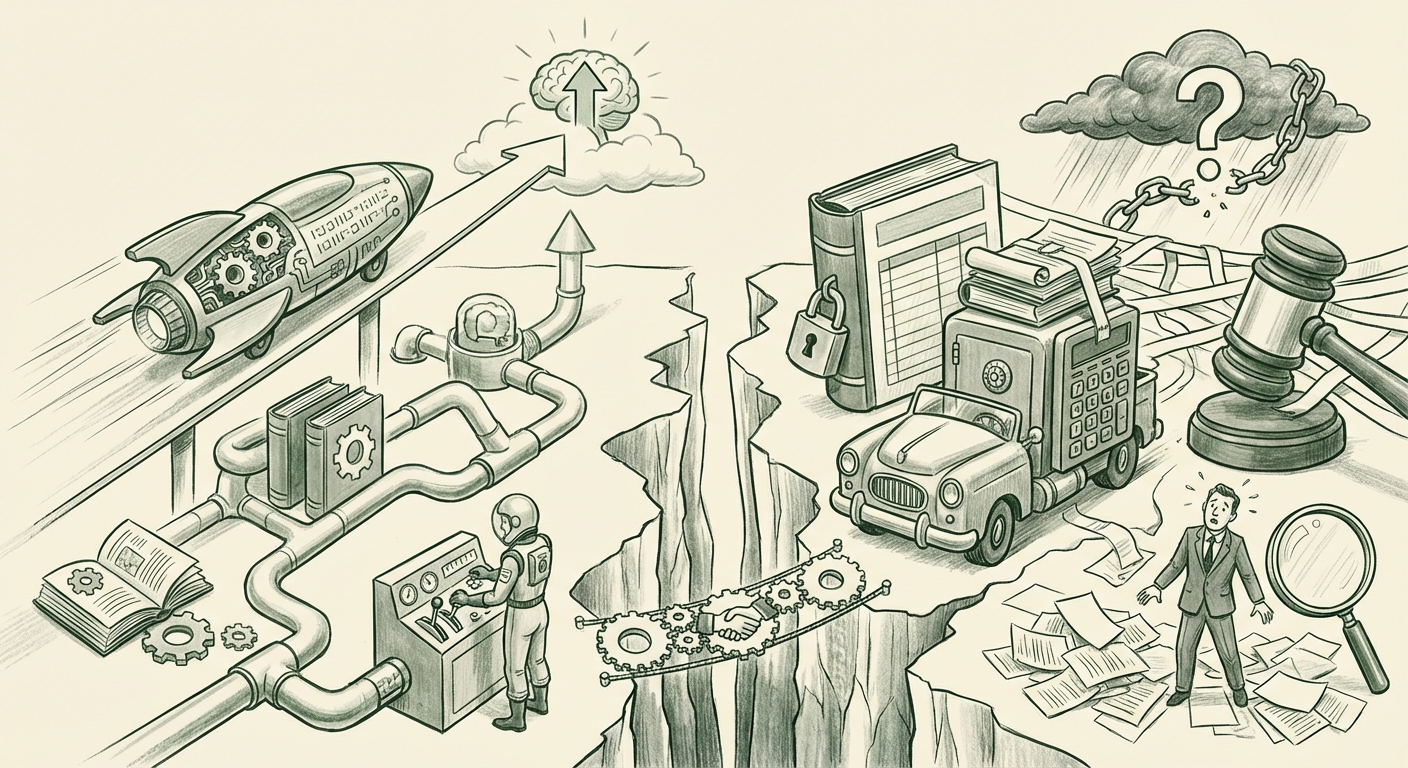

AI agents (systems designed to take a high-level goal, create a plan, use tools, and execute tasks autonomously) were promised as the great equalizer, poised to revolutionize every white-collar job. However, recent data, including findings from Anthropic, suggests a stark reality: for now, this revolution is confined almost entirely to software development.

This "Bifurcation of Utility" is not a failure of AI, but a clear indicator of where the technology has found its first, easiest, and most productive foothold. Understanding this divide—why coding thrives while finance, legal, and marketing agents remain largely theoretical—is key to predicting the next wave of enterprise AI adoption.

The Coding Crucible: Why Software Engineering Became the Agent's Killer App

If we look at the evidence supporting the success of agents in engineering, we see a perfect storm of ideal conditions. Software development, more than any other domain, is inherently compatible with current Large Language Model (LLM) capabilities.

1. Testability and Low Consequence of Failure (Iteration Speed)

In coding, the goal is explicit: write a function that sorts data or calls an API correctly. The result is immediately verifiable through automated checks such as compilation and unit tests. If an agent generates faulty code, the developer spends a few minutes debugging the output and adjusts the prompt. This rapid feedback loop allows agents to iterate quickly, refining their plans and tool use with each attempt.
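The feedback loop described here can be sketched in a few lines. This is a minimal illustration, not a real agent: the three `attempt_*` functions are hypothetical stand-ins for successive agent outputs, and `passes_tests` plays the role of a unit-test suite. In practice a model call would produce the candidates.

```python
from typing import Callable, Iterable, List, Optional, Tuple

Candidate = Callable[[List[int]], List[int]]

# Hypothetical stand-ins for successive agent attempts at "sort a list".
def attempt_1(xs: List[int]) -> List[int]:
    return xs                        # wrong: returns the input unchanged

def attempt_2(xs: List[int]) -> List[int]:
    return sorted(xs, reverse=True)  # wrong: sorts in descending order

def attempt_3(xs: List[int]) -> List[int]:
    return sorted(xs)                # correct

def passes_tests(candidate: Candidate) -> bool:
    """Run a tiny test suite; any exception counts as a failure."""
    cases = [([3, 1, 2], [1, 2, 3]), ([], []), ([2, 2, 1], [1, 2, 2])]
    try:
        return all(candidate(list(inp)) == expected for inp, expected in cases)
    except Exception:
        return False

def iterate_until_green(
    candidates: Iterable[Candidate], max_attempts: int = 5
) -> Optional[Tuple[int, Candidate]]:
    """Return (attempt number, candidate) for the first candidate that passes."""
    for attempt_number, candidate in enumerate(candidates, start=1):
        if attempt_number > max_attempts:
            break
        if passes_tests(candidate):
            return attempt_number, candidate
    return None  # nothing passed within the budget: hand back to a human

result = iterate_until_green([attempt_1, attempt_2, attempt_3])
```

The point is that every failed attempt is cheap and unambiguous: the tests either pass or they do not, so the loop knows exactly when to stop.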

Reports on coding productivity commonly credit agentic assistance with cutting down boilerplate creation and error correction, which translates into measurable productivity gains. Because a failed attempt costs little, the stakes are low enough for high-speed trial and error.

2. Digital Native Workflows

Developers already operate within highly structured digital environments. Agents can seamlessly access, read, and write to GitHub repositories, use IDE debugging tools, manage package dependencies, and interact with cloud environments—all through established APIs. These tools are the agents' native language.

The ecosystem provides the tools needed for an agent to plan and execute without needing extensive physical-world integration or bespoke training on undocumented processes.

3. Structured Language and Logic

Code is, fundamentally, a highly structured, unambiguous language. Unlike the subtle nuances of negotiating a merger or crafting marketing copy, Python or JavaScript adheres to strict syntax and logic. LLMs excel at pattern recognition and syntactic generation, making them naturally adept at generating valid code blocks.

The Chasm: Barriers Slowing Agents in Other White-Collar Sectors

If coding is the path of least resistance, other industries represent significant friction. Research into the barriers preventing agent deployment in sectors like legal, finance, and marketing highlights systemic, structural challenges that go far beyond simple model performance.

The Trust and Consequence Gap

The primary barrier is the cost of an error. In finance, a hallucination leading to an incorrect trade or compliance violation can result in multimillion-dollar fines or catastrophic market risk. In law, an agent fabricating a case citation can destroy a client's case.

As analyses of regulatory hurdles show, these industries demand high levels of explainability (the concern of explainable AI, or XAI) and near-zero error rates. Humans must trust the agent’s output implicitly before it can operate autonomously. Currently, the "trust gap" requires heavy human supervision, which negates much of the productivity promise of an autonomous agent.

Integrating Complex, Ambiguous Workflows

While a developer works with defined languages and tools, a marketing manager or a financial analyst works with messy, proprietary, and often undocumented processes. Consider an agent tasked with "Launch a new Q3 marketing campaign." This requires understanding brand guidelines (unstructured text), integrating with legacy CRM systems (complex APIs), interpreting real-time, sentiment-heavy social media data, and navigating budget approvals (human-centric bureaucracy).

Distinguishing between an AI agent and simpler automation, like Robotic Process Automation (RPA), is crucial here. RPA follows fixed, pre-programmed steps. A true agent must adapt its plan when these steps fail or when the input data is ambiguous. This adaptability is currently brittle outside of the structured world of code.
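The difference in control flow can be illustrated with a deliberately small example. The first function below behaves like an RPA step: one fixed assumption, and a hard failure when the input deviates. The second tries alternative strategies and escalates when none work. This is only a sketch of the pattern: a real agent would re-plan with a model rather than walk a fixed fallback list, and the function names and date formats here are assumptions chosen for illustration.

```python
from datetime import date, datetime
from typing import Optional

def rpa_parse_invoice_date(raw: str) -> date:
    """Fixed, pre-programmed step: assumes ISO format and raises otherwise."""
    return datetime.strptime(raw, "%Y-%m-%d").date()

# Assumed alternative formats (day-first); a real deployment would need
# to know which conventions its documents actually use.
FALLBACK_FORMATS = ["%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y"]

def adaptive_parse_invoice_date(raw: str) -> Optional[date]:
    """Try each known strategy; return None to signal 'escalate to a human'."""
    cleaned = raw.strip()
    for fmt in FALLBACK_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date()
        except ValueError:
            continue  # this strategy failed, move on to the next one
    return None
```

Even in this toy form, the brittleness is visible: the adaptive version only handles the variations someone anticipated, which is exactly the problem with ambiguous, undocumented business inputs.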

Regulatory and Data Security Headwinds

Highly regulated industries possess strict rules regarding data residency, privacy (like GDPR or HIPAA), and audit trails. Deploying third-party LLMs or agents that must process sensitive client information requires significant, costly infrastructure hardening and legal vetting that a small coding project often bypasses.

This tension between the speed of innovation and the need for stringent governance creates a significant bottleneck, explaining why deployment remains slow in these critical sectors.

Bridging the Divide: The Path to General-Purpose Agentic AI

For the revolution to spread beyond the confines of the IDE, the industry must focus on two key strategic areas: architectural evolution and domain-specific specialization.

Actionable Insight 1: Architecting for Grounding and Context

The next generation of successful enterprise agents won't just rely on their base LLM knowledge; they will succeed based on their ability to remain "grounded" in proprietary reality. This requires advanced techniques beyond simple Retrieval-Augmented Generation (RAG):

- Advanced Memory Modules: Agents need scalable, multi-layered memory systems that differentiate between transient working memory and long-term, curated organizational knowledge bases.

- Action Verification Layers: Before an agent executes a high-stakes action (e.g., sending an email to a client or modifying a financial ledger), a secondary verification model or human-in-the-loop checkpoint must validate the proposed action against known rulesets.

- Specialized Tooling: Instead of general-purpose access, agents will be granted access only to narrowly defined, audited toolkits specific to their function (e.g., a Legal Agent gets access only to approved case law databases, not the open internet).
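As a rough sketch of how an action verification layer and a narrow toolkit might fit together, the snippet below checks each proposed action against a policy before anything executes. All tool names, the `Policy` fields, and the ledger threshold are hypothetical, chosen only to mirror the examples above; a production system would have far richer rules.

```python
from dataclasses import dataclass
from typing import Any, Dict, FrozenSet

@dataclass
class ProposedAction:
    tool: str               # name of the tool the agent wants to call
    args: Dict[str, Any]    # arguments it wants to pass

@dataclass(frozen=True)
class Policy:
    allowed_tools: FrozenSet[str]   # the agent's audited toolkit
    needs_human: FrozenSet[str]     # high-stakes tools requiring sign-off
    max_ledger_amount: float        # hypothetical hard limit per entry

def verify(action: ProposedAction, policy: Policy) -> str:
    """Return 'allow', 'escalate' (human-in-the-loop), or 'reject'."""
    if action.tool not in policy.allowed_tools:
        return "reject"     # outside the toolkit, e.g. open web access
    if action.tool == "post_ledger_entry":
        if abs(action.args.get("amount", 0)) > policy.max_ledger_amount:
            return "reject"  # violates a known rule outright
    if action.tool in policy.needs_human:
        return "escalate"   # valid, but a person must approve it
    return "allow"

policy = Policy(
    allowed_tools=frozenset({"search_case_law", "send_email", "post_ledger_entry"}),
    needs_human=frozenset({"send_email", "post_ledger_entry"}),
    max_ledger_amount=10_000.0,
)
decision = verify(ProposedAction("send_email", {"to": "client@example.com"}), policy)
```

The design choice worth noting is that the verifier sits outside the model: the agent can propose anything, but only actions that pass an explicit, inspectable check are ever executed.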

Actionable Insight 2: The Rise of Specialized Agents

The hope for a single, general-purpose "AI Employee" capable of switching seamlessly between writing code, managing HR complaints, and balancing books is likely distant. The near-term future, as suggested by trends in enterprise adoption, points toward highly specialized agents.

We will likely see a surge in agents designed for hyper-specific tasks that reduce ambiguity:

- The Compliance Agent: Focused solely on reading regulatory documents and flagging internal documents that violate specific clauses.

- The Contract Summarization Agent: Trained exclusively on thousands of previous contract types to extract key risk variables.

- The Financial Reconciliation Agent: Constrained to interact only with secure, internal ledger APIs.

By limiting the scope, engineers drastically reduce the probability of catastrophic hallucination and make the process auditable, satisfying regulatory demands.
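To make the auditability point concrete, here is a toy version of a compliance agent's output contract. The matching logic is plain phrase search, standing in for whatever model a real agent would use; the clause IDs and documents are invented for illustration. What matters is the shape of the output: the agent can only emit flags, and each flag cites the clause and the exact excerpt that triggered it.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass(frozen=True)
class Clause:
    clause_id: str       # hypothetical regulatory clause identifier
    banned_phrase: str   # simplified stand-in for the clause's meaning

@dataclass(frozen=True)
class Flag:
    doc_id: str
    clause_id: str
    excerpt: str         # the evidence a human reviewer can check

def flag_documents(docs: Dict[str, str], clauses: List[Clause]) -> List[Flag]:
    """Return one flag per (document, clause) match, with supporting text."""
    flags: List[Flag] = []
    for doc_id, text in docs.items():
        lowered = text.lower()
        for clause in clauses:
            idx = lowered.find(clause.banned_phrase.lower())
            if idx == -1:
                continue
            # Keep a little surrounding context so the flag is reviewable.
            start = max(0, idx - 20)
            end = min(len(text), idx + len(clause.banned_phrase) + 20)
            flags.append(Flag(doc_id, clause.clause_id, text[start:end]))
    return flags

docs = {
    "memo-1": "We can offer guaranteed returns to early investors.",
    "memo-2": "Quarterly results were in line with forecasts.",
}
flags = flag_documents(docs, [Clause("REG-4.2", "guaranteed returns")])
```

Because the agent has no way to act beyond producing these records, a reviewer can audit every decision it made by reading the list.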

Implications for Business Strategy Today

For business leaders, the current state of AI agents provides a clear roadmap, not an immediate universal solution. Do not wait for the fully autonomous general agent; leverage the technology where it is demonstrably effective now.

For IT/Development Leaders: Double down on agentic tooling in your engineering pipeline. Measure the productivity gains rigorously, as this is your current competitive advantage in software delivery speed. Explore self-healing infrastructure managed by agents.

For Business Unit Leaders (Non-Tech): Focus on identifying processes that, while complex, possess underlying structured elements. Can you isolate the data retrieval portion of a task? Can you automate the *drafting* phase (like first-pass legal summaries or internal report outlines) rather than the final *decision-making* phase?

The shift from viewing LLMs as sophisticated autocomplete tools to viewing them as autonomous agents capable of tool use and planning is arguably the most significant technological transformation since cloud computing. However, as the Anthropic findings suggest, technology adoption follows the path of least resistance first.

The current dominance in software development is not the endpoint; it is the proving ground. The hard work ahead is not just making the models smarter, but making them trustworthy, verifiable, and safely integrated into the highly regulated, ambiguous, and high-consequence workflows that define the vast majority of the global economy.