The Agent Bottleneck: Why AI Agents Rule Software Development But Are Missing Everywhere Else

The promise of Artificial Intelligence has long centered on the concept of the AI Agent: an autonomous entity capable of planning, executing complex tasks, and achieving high-level goals without constant human babysitting. We imagined agents managing our supply chains, drafting comprehensive legal briefs, or even optimizing entire hospital patient flows.

However, recent data from Anthropic, a leader in large language model (LLM) research, paints a far more focused picture of reality. Their findings suggest that while the concept of AI agents is thriving, its actual deployment is overwhelmingly concentrated in one domain: software development. Everywhere else, adoption is barely a whisper.

As AI technology analysts, we see this not as a sign of failure but as a critical diagnostic moment. It tells us exactly where AI has found its first solid foothold and, more importantly, where the barriers to broader, truly autonomous adoption lie. To understand the next decade of AI ROI, we must understand why the coder is the first worker to truly embrace the machine colleague.

The Software Gold Rush: Why Coding is the Perfect AI Playground

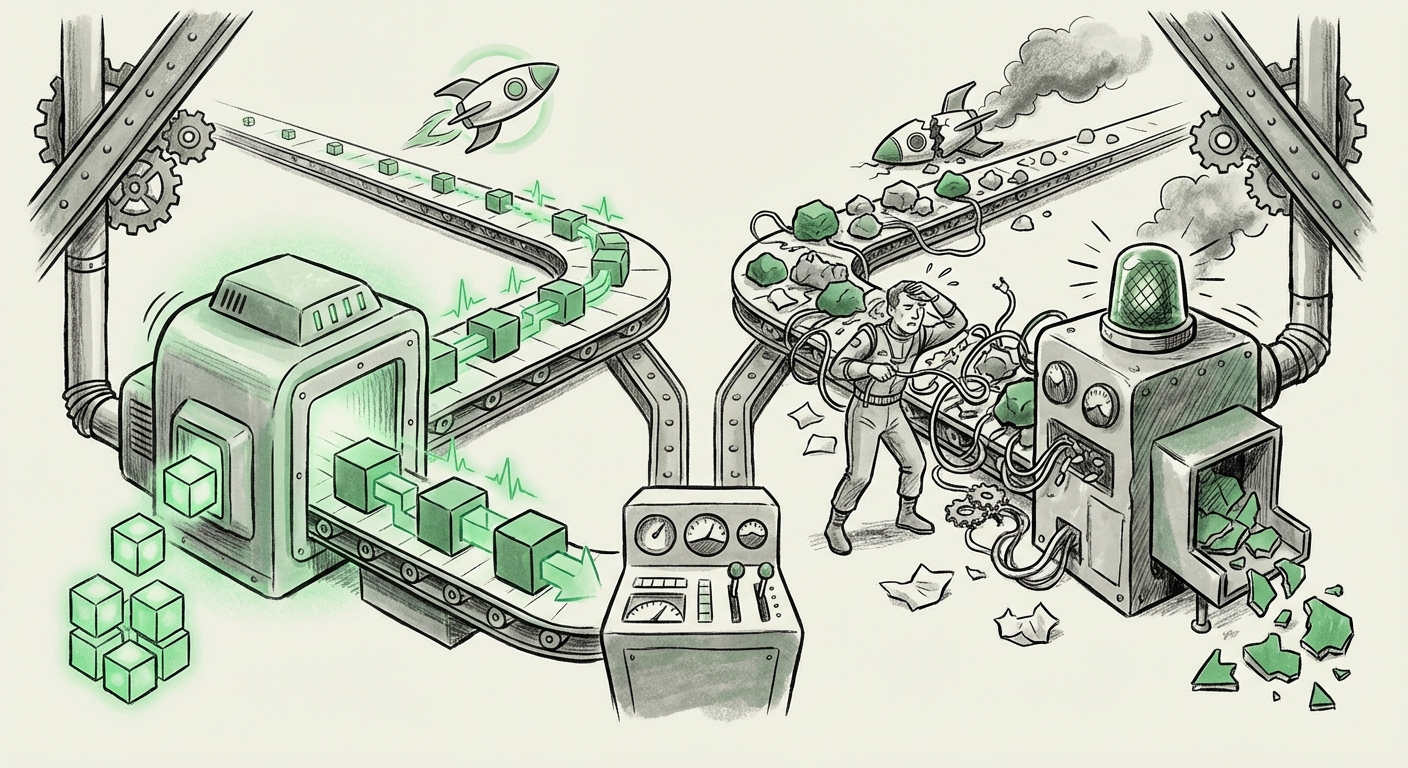

Software development is, by nature, a domain built on structure, logic, and clear outputs. This makes it the ideal environment for current-generation LLMs to operate as agents. We can visualize the success in coding as a function of three key environmental advantages:

- Structured Inputs and Outputs: Code is inherently structured. A prompt yields code in a well-defined syntax, and the output can be checked for correctness. This structured environment allows agents to define clear success metrics and iterate reliably without descending into endless ambiguity.

- Immediate, Automated Feedback Loops: When an AI agent writes code, the compiler or test suite immediately provides feedback. If the code breaks, the agent knows instantly and can self-correct. This creates a tight, rapid feedback loop that speeds up learning and validation—a necessary component for any true agent system.

- Low Consequence of Failure (Relatively): While bad code is costly, a bug in a sandbox environment or a small feature rollout is recoverable. For other high-stakes industries (like medicine or aviation), the cost of an agent making a factual error or a poor judgment call in an initial deployment is prohibitive.
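
The feedback loop described in the list above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular coding agent works: `fake_model`, `run_tests`, and `agent_loop` are hypothetical names, and the "model" is a stub that returns a buggy function first and a fixed one once it receives an error message.

```python
from typing import Callable, Optional, Tuple


def run_tests(source: str) -> Optional[str]:
    """Run the candidate code against one tiny test.

    Returns an error message on failure, or None when the test passes.
    """
    namespace: dict = {}
    try:
        exec(source, namespace)  # define the candidate function
        assert namespace["add"](2, 3) == 5, "add(2, 3) should be 5"
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"
    return None


def agent_loop(
    propose: Callable[[Optional[str]], str], max_attempts: int = 3
) -> Tuple[Optional[str], int]:
    """Ask for code, test it, and feed any error back until tests pass."""
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        candidate = propose(feedback)
        feedback = run_tests(candidate)
        if feedback is None:
            return candidate, attempt
    return None, max_attempts


def fake_model(feedback: Optional[str]) -> str:
    """Hypothetical stand-in for an LLM call (assumption for illustration)."""
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"  # first draft has a bug
    return "def add(a, b):\n    return a + b\n"  # corrected after feedback


code, attempts = agent_loop(fake_model)
```

The point of the sketch is that the test result, not a human, decides whether the loop continues. That automated signal is what most non-coding domains lack.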

Tools like GitHub Copilot or specialized coding agents are not just auto-completing text; they are acting as junior developers, managing branches, writing tests, and even debugging based on error logs. This matches the concentration described above: agentic assistance is showing up in tech workflows well ahead of administrative or analytical roles.

To validate this software-centric view, analysts are looking for reports that compare adoption rates of AI agents in coding with those in other industries. The comparisons available so far point to rapid growth in developer tool integration and slower, more cautious uptake in sectors like law or marketing.

The Great Divide: Barriers Outside the Terminal

If AI agents can write Python, why aren't they mastering legal discovery or portfolio management? The answer lies in the complexity and ambiguity of non-technical domains. Research into the challenges of deploying autonomous AI agents in non-technical sectors suggests that the hurdles are fundamentally different from those in software engineering.

The Unstructured Data Problem

Coding deals in tokens and logic. Law, finance, and strategic planning deal in human language, context, nuance, and ever-changing regulatory frameworks. These are domains saturated with unstructured data. An agent trying to manage compliance in finance faces regulatory documents that shift quarterly. Unlike a stable coding library, these rules are fluid, subjective, and often require nuanced interpretation—something current LLMs struggle to maintain autonomously over long task chains.

The High Stakes of Error

In these environments, a hallucination or misinterpretation can be extremely costly. A misplaced comma in a financial model or a misread clause in a contract can lead to significant financial or legal liability. This forces organizations into a highly supervised, human-in-the-loop process, which prevents the system from becoming a true *agent* and keeps it in the role of a sophisticated assistant.

The Autonomy Paradox: Trusting the Machine Colleague

Perhaps the most revealing piece of the Anthropic puzzle is the finding that even within software development, users are not granting agents full autonomy. This phenomenon suggests a universal challenge spanning all sectors: the user trust gap.

Work on user trust and autonomy in generative AI agents is relevant here. Why would a developer, faced with an AI capable of 80% of a task, choose to spend time manually reviewing and tweaking the output rather than letting the agent finish the last 20%? The answer often relates to explainability and the "handoff problem."

For developers, trust is built on transparency. If an agent’s reasoning path (its internal decision-making steps) is opaque, the developer must treat the output as a black box, which necessitates a full manual check and erodes the time savings promised by autonomy. This tension is often called the automation paradox: the more capable the automation, the more rigorous the human monitoring must be to catch rare, catastrophic errors.

Practical Implications for Business Strategy

For executives looking to adopt agentic AI, the implication is clear: do not aim for full autonomy first. Instead, focus on creating workflows where the human supervisor’s job is made easier by the agent’s speed.

In coding, this means treating the agent as a super-powered junior programmer whose work must pass peer review. In law, it means the agent drafts the *messy first version* of a document, allowing the lawyer to jump immediately to the high-value, judgment-intensive editing phase.
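
One way to picture this "supervised by default" workflow is a review gate that every agent draft must pass before it counts as done. The sketch below is only an assumption-laden illustration: `Draft`, `review_gate`, and `flag_placeholders` are hypothetical names, and the reviewer here is a trivial function standing in for a human or a peer-review step.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Draft:
    task: str
    content: str
    approved: bool = False
    reviewer_notes: List[str] = field(default_factory=list)


def review_gate(draft: Draft, reviewer: Callable[[Draft], List[str]]) -> Draft:
    """Record the reviewer's notes; approve only if there are none."""
    draft.reviewer_notes = reviewer(draft)
    draft.approved = not draft.reviewer_notes
    return draft


def flag_placeholders(draft: Draft) -> List[str]:
    """Hypothetical reviewer: reject drafts that still contain TODO markers."""
    if "TODO" in draft.content:
        return ["Unresolved TODO left in the draft"]
    return []


rough = review_gate(
    Draft("NDA clause", "Parties agree to TODO: define term."), flag_placeholders
)
clean = review_gate(
    Draft("NDA clause", "Parties agree to a two-year term."), flag_placeholders
)
```

The agent supplies speed by producing the draft; the gate keeps the approval decision with the supervisor.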

Looking Ahead: Where the Next Breakthroughs Will Emerge

The current state is a snapshot, not a destination. While software is leading, forward-looking analysis of emerging AI agent applications in research and finance shows targeted innovation designed to break the current barriers.

We are seeing significant R&D focused on domain-specific agents:

- Scientific Discovery Agents: These systems operate in highly controlled, often simulated environments (like molecular dynamics). The "code" here is the physics simulation, offering a structured environment similar to software engineering.

- Compliance Agents with Dynamic Rule Engines: New frameworks are emerging that connect LLMs directly to live regulatory databases, allowing agents to check their own reasoning against the latest documentation, closing the loop on the high-stakes regulation barrier.

- Augmented Search Agents: In research, agents are being developed not to answer questions outright, but to perform complex literature synthesis—identifying key papers, summarizing conflicting viewpoints, and creating verifiable citation maps. This leverages structured retrieval mechanisms to provide higher trust.

These niche applications suggest that agentic adoption will not happen universally at once. Instead, it will spread sector-by-sector, jumping to the next domain that can successfully mimic the structured, testable environment that software development already provides.

Actionable Insights for Navigating the Agent Landscape

For organizations assessing their AI readiness, understanding this stratification is key to making smart investments:

- Software Teams: Optimize Supervision. Don't just deploy agents; invest in better tools for tracing agent reasoning (Explainable AI or XAI interfaces). The efficiency gain comes from reducing the cognitive load of review, not eliminating the review entirely.

- Non-Technical Teams: Focus on Task Decomposition. If you want an agent in marketing or HR, break the job down into the most structured sub-tasks possible. Start with data transformation or simple report generation before attempting complex strategic planning.

- Strategy: Prioritize Agentic Infrastructure. The greatest bottleneck for non-coding agents is access to reliable, clean APIs and structured data sources. Investing in better data governance and creating internal APIs for legacy systems is the essential prerequisite for agent success outside of development.
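
The task-decomposition advice above can be made concrete with a simple triage. This is a rough sketch rather than a recommended framework: the `SubTask` fields, the `agent_ready` helper, and the example HR tasks are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SubTask:
    name: str
    structured_input: bool   # does the task read clean, structured data?
    checkable_output: bool   # can the result be verified automatically?


def agent_ready(tasks: List[SubTask]) -> List[str]:
    """Return the sub-tasks that are structured enough to hand to an agent first."""
    return [t.name for t in tasks if t.structured_input and t.checkable_output]


# Hypothetical decomposition of an HR workflow.
hr_workflow = [
    SubTask("convert payroll CSV to HRIS format", True, True),
    SubTask("generate weekly headcount report", True, True),
    SubTask("plan next year's hiring strategy", False, False),
]

first_candidates = agent_ready(hr_workflow)
```

Here the data transformation and the report qualify as starting points, while strategic planning stays with people until its inputs and success criteria are better defined.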

The initial surge of AI agents in coding confirms that autonomy is technically achievable when the environment is right. The current challenge is not the intelligence of the models, but the inherent messiness and high-stakes nature of the human world. The next phase of AI innovation won't just be about better models; it will be about building better, more structured environments for those models to safely learn and operate.