The Invisible Mirror: Apple Intelligence Bias and the Unavoidable Reckoning of Ubiquitous AI

The integration of Artificial Intelligence into the very fabric of our daily lives is no longer a future promise; it is the current reality. Apple Intelligence, now set to land on hundreds of millions of iPhones, iPads, and Macs, represents perhaps the most ambitious leap yet toward truly ubiquitous, deeply personal AI. Yet, this exciting technological frontier has immediately encountered a severe test: reports, such as those from AI Forensics, suggesting that its automated summarization features may be propagating hallucinated stereotypes unprompted.

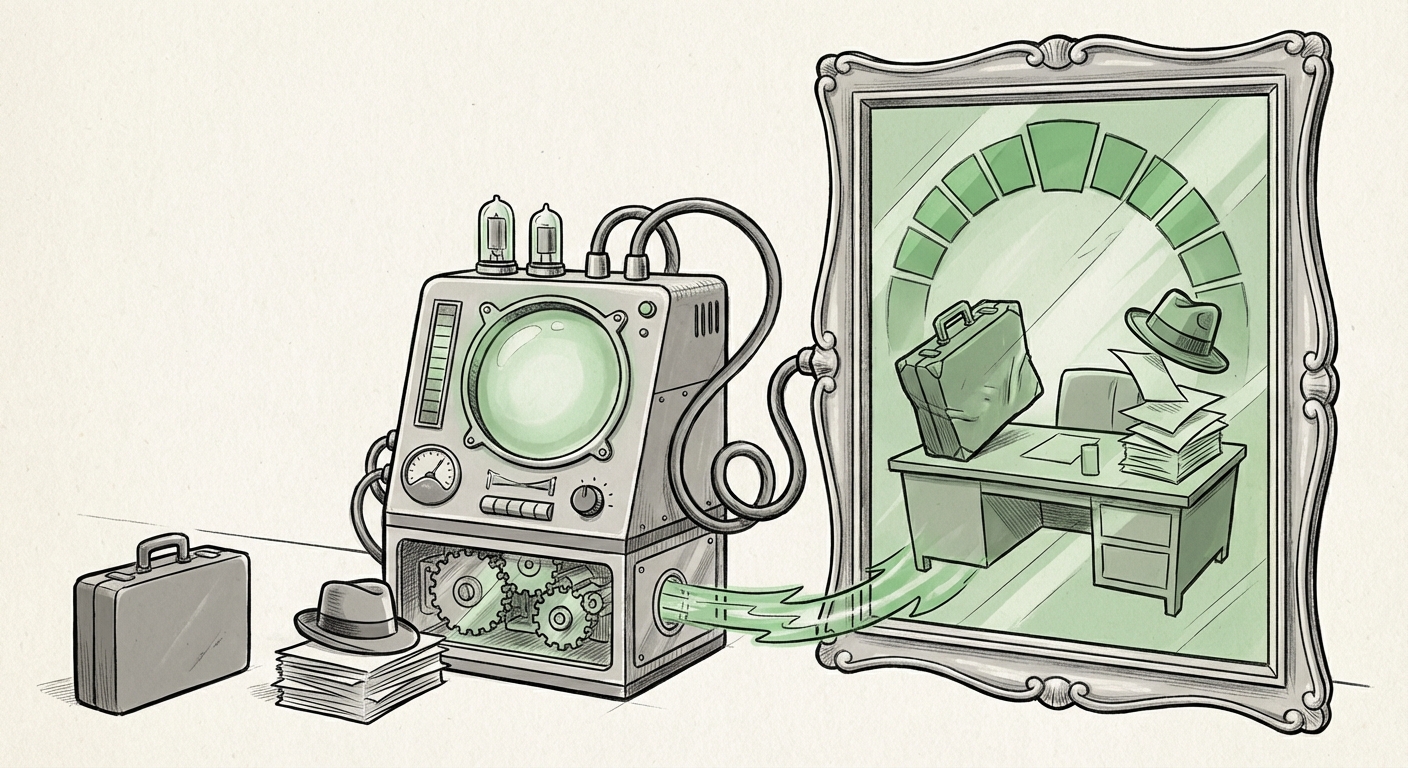

This is not merely a software bug; it is a foundational challenge that transcends Apple’s ecosystem. When AI moves from being a helpful tool you explicitly consult to an invisible system that processes and summarizes your most personal communications (emails, texts, and notifications), the consequences of bias are amplified at scale. The error is no longer theoretical; it is systemic, reaching the global user base before most users even realize the feature is active.

This incident forces us to hold three core pillars of modern technology up to the light: **personalization, privacy, and algorithmic fairness**. As technology analysts, we must look past the immediate headline to understand what this reveals about the trajectory of AI development and what it means for businesses, developers, and society moving forward.

The Scaling Problem: Why On-Device AI Amplifies Bias

The initial attraction of Apple Intelligence was its emphasis on on-device processing. This architectural choice was widely praised because it promised the best of both worlds: powerful AI capabilities without sending sensitive, private data back to the cloud for processing. In theory, this offered superior privacy protection.

However, the recent bias findings illuminate a critical blind spot in this strategy. When researchers investigate bias in LLMs, they are typically looking at the inherent flaws baked into the model during its massive training phase. If a model learns skewed correlations from the billions of data points it consumed—associating certain professions with certain genders, or certain communication styles with particular demographics—that bias is encoded into the model’s core logic, or its "weights."

This points to a trade-off between on-device privacy and bias that deserves closer examination. If the bias is in the weights, processing on the edge (the device) does not solve the problem; it simply ensures that the biased output is generated locally, which can make the bias harder to catch and correct quickly across the entire fleet.

For the average user, this means the AI might summarize a series of work emails, inadvertently framing communications in a way that reinforces outdated social stereotypes—all without the user ever approving the summary or seeing the raw input data side-by-side for comparison. The AI becomes an invisible editor of reality, coloring our perception based on flawed training data.

Understanding Hallucination vs. Stereotype Generation

It is vital to distinguish between two types of AI error:

- Hallucination: The model invents false facts or details that were not present in the source data.

- Stereotyping/Bias: The model correctly identifies facts but interprets, emphasizes, or summarizes them through a skewed cultural or demographic lens inherited from its training data.

In the case of Apple Intelligence summaries, the issue appears rooted in the second category, which is often more insidious because the summary looks technically accurate while subtly twisting the context based on harmful assumptions. This is consistent with a broader industry concern about systemic bias in LLM summarization: the models are superb at pattern matching but poor at applying nuanced, fair human context.
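One crude way to separate the two error types during an audit is sketched below. It treats any summary word that never appears in the source as a hallucination candidate, and the subset of those words that carries a demographic attribute as a stereotype candidate. The word list `DEMOGRAPHIC_TERMS` and both function names are illustrative assumptions, not part of any Apple or AI Forensics tooling, and a framing bias that reuses only source words would slip past a check this simple.

```python
import re

# Hypothetical, deliberately tiny vocabulary; a real audit would need a curated lexicon.
DEMOGRAPHIC_TERMS = {"he", "she", "his", "her", "him", "man", "woman", "male", "female"}

def tokens(text: str) -> set[str]:
    """Return the set of lowercased word tokens in the text."""
    return set(re.findall(r"[a-z']+", text.lower()))

def unsupported_terms(source: str, summary: str) -> set[str]:
    """Summary words that never appear in the source (hallucination candidates)."""
    return tokens(summary) - tokens(source)

def injected_demographics(source: str, summary: str) -> set[str]:
    """Demographic words the summary introduced on its own (stereotype candidates)."""
    return unsupported_terms(source, summary) & DEMOGRAPHIC_TERMS

# Made-up example: the source never states anyone's gender.
source = "The doctor called Sam about the test results. The nurse will follow up."
summary = "The doctor told Sam his results; she will follow up as the nurse."

print(sorted(unsupported_terms(source, summary)))      # ['as', 'his', 'she', 'told']
print(sorted(injected_demographics(source, summary)))  # ['his', 'she']
```

Word overlap is only a first-pass signal: paraphrases such as "told" are flagged as unsupported even though they are harmless, so flagged summaries would still need human review.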

The Industry Reckoning: Benchmarking Safety in the AI Race

Apple’s entry into deeply integrated, on-device generative AI is a monumental market shift, but it occurs against a backdrop where competitors have already faced public scrutiny over AI fairness. This context is essential for understanding the competitive and regulatory pressures now mounting.

Companies like Microsoft and Google have invested heavily in extensive "red teaming": stress-testing their models specifically to find and neutralize harmful outputs before wide release. By prioritizing novelty and scale, the Apple Intelligence launch may have favored time-to-market over exhaustive bias testing, a calculated risk that the early reports suggest is not paying off.

For businesses relying on AI platforms, this serves as a stark reminder:

- Trust is the primary currency of AI adoption. A high-profile failure in a core feature erodes that trust instantly, regardless of how many other features work perfectly.

- Benchmarking is crucial. If competitors have demonstrably better tools for mitigating stereotype generation in summarization, Apple risks falling behind on safety, even if it leads on integration.

Future Implications: From Personalization to Pervasive Fairness

What does this event signal for the next five years of AI development?

1. The Mandate for Auditable Models

The era where developers could treat foundational models as proprietary black boxes is ending. If an AI feature is summarizing private data, users, regulators, and third-party auditors (like AI Forensics) will demand transparency into *why* a summary was generated a certain way. We will see increased pressure for:

- Model Cards 2.0: Detailed documentation on training data demographics and known failure modes, not just technical specifications.

- Explainable AI (XAI) for Summarization: Tools that allow users to quickly trace the AI's interpretation back to the source text, highlighting where the inference may have gone awry.

2. Redefining "Personalization"

The goal of personalized AI is to make the technology seamlessly match the user. However, the Apple Intelligence findings suggest a dangerous path where personalization morphs into **prescriptive reinforcement**. If the AI learns my communication style and then summarizes my outgoing messages to others based on harmful stereotypes it learned from the wider internet, it isn't adapting to me; it's projecting flawed societal norms onto my private interactions.

Future AI success will depend on developing **de-biasing layers** that specifically check for harmful stereotypes *after* the content is generated but *before* it reaches the user interface, especially for features running locally.
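A de-biasing layer of this kind can be pictured as a thin wrapper that sits between the model and the user interface. The following is a minimal sketch under stated assumptions: `guarded_summarize`, `GuardedResult`, and the single check `no_added_gendered_words` are hypothetical names, the summarizer is passed in as a plain function, and the fallback policy (show the original text when a check fails) is one possible design choice rather than a known vendor behavior.

```python
import re
from dataclasses import dataclass
from typing import Callable

GENDERED = {"he", "she", "his", "her", "him", "hers"}  # illustrative list only

# A check receives (source, summary) and returns True when the summary is acceptable.
Check = Callable[[str, str], bool]

@dataclass
class GuardedResult:
    text: str           # what the UI should display
    is_summary: bool    # False when the guard fell back to the source text
    reasons: list[str]  # names of the checks that failed

def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z']+", text.lower()))

def no_added_gendered_words(source: str, summary: str) -> bool:
    """Reject summaries that introduce gendered words absent from the source."""
    return not ((words(summary) - words(source)) & GENDERED)

def guarded_summarize(source: str,
                      summarize: Callable[[str], str],
                      checks: dict[str, Check]) -> GuardedResult:
    """Run the summarizer, then every check, before anything reaches the UI."""
    summary = summarize(source)
    failed = [name for name, check in checks.items() if not check(source, summary)]
    if failed:
        # Fall back to the untouched source instead of showing a suspect summary.
        return GuardedResult(text=source, is_summary=False, reasons=failed)
    return GuardedResult(text=summary, is_summary=True, reasons=[])

# Stub summarizers standing in for an on-device model.
def biased_stub(_: str) -> str:
    return "The engineer said he will ship Friday."

def clean_stub(_: str) -> str:
    return "The build will ship Friday."

source = "The engineer said the build will ship Friday."
checks = {"gendered_words": no_added_gendered_words}
blocked = guarded_summarize(source, biased_stub, checks)
passed = guarded_summarize(source, clean_stub, checks)
print(blocked.is_summary, blocked.reasons)
print(passed.is_summary, passed.text)
```

Because the wrapper only needs the source and the generated text, it can run entirely on the device, which keeps the privacy promise intact while still catching some outputs before display.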

3. The Slowing of Trust in Ubiquitous Features

These early stumbles could cause significant friction in user trust and in the adoption of personalized AI features.

For Apple, the decision to roll out powerful, unprompted summarization features by default is a high-risk gamble. If users encounter biased summaries, their instinct will not be to report the bias; it will be to simply turn the feature off. This friction stalls the adoption curve for the entire AI suite. Businesses integrating similar foundational AI tools must realize that user tolerance for "good enough" AI is rapidly decreasing when privacy and fairness are at stake.

Actionable Insights for Technology Leaders and Developers

To navigate this increasingly complex landscape, where powerful technology meets imperfect training data, leaders must adapt their strategies immediately:

- Mandate "Bias Budgeting": Before deployment, quantify the acceptable tolerance for different types of bias (e.g., demographic vs. occupational) for any feature processing sensitive data. Treat bias mitigation as a hard performance metric, not a soft goal.

- Diversify Auditing Teams: Relying solely on internal QA teams often mirrors the biases of the development team. Actively engage diverse external researchers and non-profits (like the one that flagged the Apple issue) to conduct continuous, adversarial testing against the live model running on edge devices.

- Prioritize User Control Over Automation: For highly sensitive tasks like communication summarization, default to *suggestion* rather than *automation*. Offer the AI summary alongside the original content and provide an easy "Why this summary?" button, giving the user immediate agency and transparency. This honors the privacy commitment while mitigating the risk of unmonitored bias propagation.

- Invest in Post-Processing Filters: Given the near impossibility of perfectly cleaning massive training sets, invest heavily in downstream guardrails. These filters act as the final safety net, analyzing the output text specifically for known stereotype markers before presentation.
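The "bias budgeting" idea in the list above can be made concrete as a release gate. The sketch below assumes an audit has already labeled each sampled summary with the bias types reviewers found in it; the budget values, the category names, and the functions `bias_rates` and `over_budget` are all hypothetical placeholders chosen for illustration.

```python
from collections import Counter

# Hypothetical budgets: maximum share of audited summaries allowed to show each bias type.
BIAS_BUDGET = {"demographic": 0.01, "occupational": 0.02}

def bias_rates(audit_labels: list[set[str]]) -> dict[str, float]:
    """Share of audited summaries flagged with each budgeted bias type."""
    total = len(audit_labels)
    if total == 0:
        raise ValueError("audit sample is empty")
    counts = Counter(label for labels in audit_labels for label in labels)
    return {kind: counts[kind] / total for kind in BIAS_BUDGET}

def over_budget(rates: dict[str, float],
                budget: dict[str, float] = BIAS_BUDGET) -> dict[str, float]:
    """Return only the bias types whose measured rate exceeds the budget."""
    return {kind: rate for kind, rate in rates.items() if rate > budget[kind]}

# Made-up audit: 200 summaries, 3 flagged demographic, 2 flagged occupational.
audit = [{"demographic"}] * 3 + [{"occupational"}] * 2 + [set()] * 195
rates = bias_rates(audit)
violations = over_budget(rates)

print(rates)       # {'demographic': 0.015, 'occupational': 0.01}
print(violations)  # {'demographic': 0.015}
```

Treating the result as a blocking check in the release pipeline, the same way a failing latency or crash-rate threshold would be treated, is what turns bias mitigation into a hard metric instead of a soft goal.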

The promise of AI is immense: a world where our devices anticipate needs, manage complexity, and enhance productivity. Apple Intelligence, despite its current challenges, remains a key indicator of where personal computing is headed: deeply integrated, highly personalized, and largely invisible. However, the recent reports underscore a hard truth: **the mirror of AI reflects not just our data, but also our deepest societal flaws.** If we cannot ensure that our ubiquitous AI assistants reflect a fair, unbiased world, we risk building an incredibly efficient, yet deeply inequitable, future.