The Great Content Firewall: Why Hollywood vs. ByteDance's Seedance 2.0 Defines AI’s Future

The world of Artificial Intelligence is characterized by dizzying speed and relentless innovation. Yet, the latest conflict brewing between Hollywood and ByteDance—the parent company of TikTok—shows that technological advancement cannot outpace fundamental legal and economic frameworks. The Motion Picture Association (MPA) has called ByteDance’s new AI video generator, Seedance 2.0, a machine built for "systemic infringement." This isn't just a minor disagreement; it's a declaration of war that will fundamentally reshape how future AI models are trained, financed, and deployed.

For AI developers, this moment demands a strategic pivot. For content owners, it’s the last stand to protect the value of their creative assets. Understanding this flashpoint requires looking beyond the headlines to the complex interplay of law, technology, and massive financial risk.

The Accusation: Systemic Infringement as the Foundation

When the MPA, representing giants like Netflix, Disney, and Paramount, levels an accusation of "systemic infringement," they are not suggesting a single instance of theft. They are arguing that the entire product—Seedance 2.0—is inherently illegal because its very existence depends on having ingested, analyzed, and learned from copyrighted material without permission or payment. Imagine building a skyscraper where every piece of concrete was secretly taken from someone else's foundation; the building may look new, but its legal standing is compromised from the ground up.

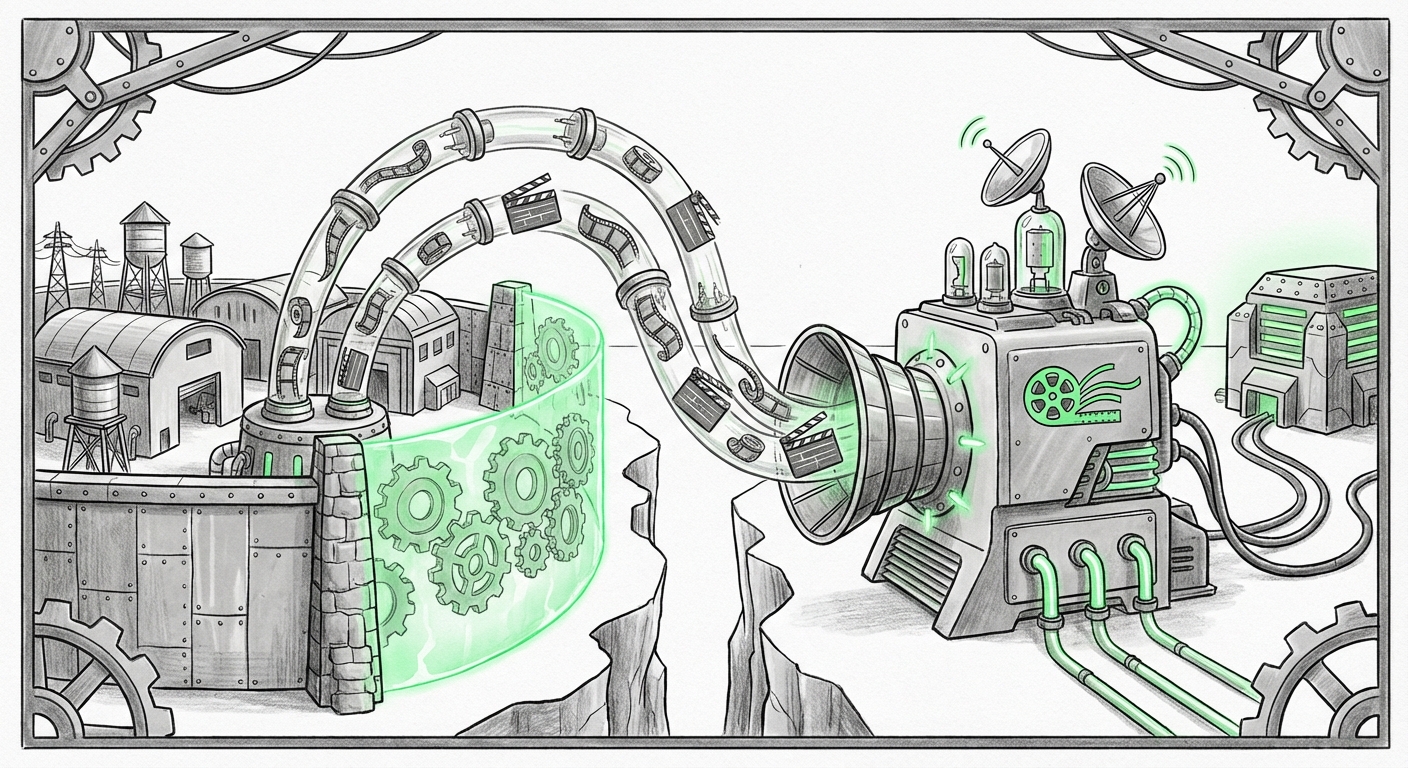

The core issue revolves around the training data pipeline. Generative AI models, especially large video generators, require colossal datasets to learn patterns, lighting, character movement, and narrative structure. If this data is sourced primarily from the commercial works protected by Hollywood studios, the argument goes, the resulting AI output—even if novel—is an unauthorized derivative of protected intellectual property (IP).

Corroborating the Legal Strategy

This isn't an isolated threat; it fits Hollywood's larger legal playbook. Studios are increasingly framing AI training as **unauthorized reproduction** rather than mere inspiration, which shifts the burden heavily onto AI firms. If a court agrees that scraping billions of copyrighted works for commercial training violates reproduction rights, the resulting financial penalties could bankrupt many current AI startups.

The Technology Under Fire: How Sophisticated is Seedance 2.0?

The urgency of the MPA's response suggests that Seedance 2.0 possesses alarming capabilities. To a layperson, AI art generation seems like magic. To an engineer, it’s complex pattern recognition, but the closer the pattern recognition gets to perfect mimicry, the harder it is to defend the model legally.

The key question is the technical depth of ByteDance's offering. If Seedance 2.0 can generate video that convincingly mimics the look, feel, or performance style of specific actors or directors, even without using their exact faces, it touches upon personality rights and established brand aesthetics. For Hollywood, this means the potential for cheap, AI-driven "knock-offs" that dilute their existing, highly valuable IP.

The technological challenge is bridging the gap between abstract learning and concrete output. If an AI can be shown to generate output statistically similar to copyrighted training material, the "Fair Use" defense begins to crumble when the goal is commercial video production. Fair use weighs factors such as the purpose of the use and its effect on the market for the original, and it is most often associated with commentary, criticism, and research rather than with commercial substitutes.
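To make "statistically similar" less abstract, here is a minimal sketch of one way an engineer might compare a generated frame against a reference frame: a simplified average-hash fingerprint with a bit-level comparison. It is an illustration only, not how any court, studio, or Seedance 2.0 itself measures similarity. The names `average_hash` and `similarity` are hypothetical, and the sketch assumes frames have already been converted to grayscale and downsampled to a small grid of equal size.

```python
from typing import List

# A grayscale frame: rows of pixel intensities in the range 0-255.
# Assumption: frames are already downsampled to the same small grid.
Frame = List[List[int]]


def average_hash(frame: Frame) -> int:
    """Fingerprint a frame: one bit per pixel, set when the pixel is above the frame mean."""
    pixels = [p for row in frame for p in row]
    if not pixels:
        raise ValueError("frame must contain at least one pixel")
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


def similarity(frame_a: Frame, frame_b: Frame) -> float:
    """Return the fraction of matching fingerprint bits, from 0.0 to 1.0."""
    n_bits = sum(len(row) for row in frame_a)
    if n_bits != sum(len(row) for row in frame_b):
        raise ValueError("frames must have the same number of pixels")
    distance = hamming_distance(average_hash(frame_a), average_hash(frame_b))
    return 1.0 - distance / n_bits


# Toy 2x2 frames, invented purely for illustration.
reference = [[10, 200], [220, 30]]
near_copy = [[12, 198], [215, 35]]   # same bright/dark layout, slightly different values
unrelated = [[200, 10], [30, 220]]   # inverted layout

print(similarity(reference, near_copy))  # 1.0
print(similarity(reference, unrelated))  # 0.0
```

A high score on a check like this shows only that two frames share a coarse brightness layout. Whether that amounts to legally relevant similarity is a question for courts, not for a hash function.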

The Unified Front: Industry-Wide Defense of Creative Value

What makes the Seedance situation so perilous for ByteDance is that Hollywood is acting as a unified entity. This isn't just the MPA; it is a coordinated effort reflecting the combined concerns of the WGA (Writers Guild of America) and SAG-AFTRA (the actors' union) following their historic labor disputes.

The industry's evolving response to AI shows a clear trend: creators and studios are embedding IP protections directly into their operational DNA. Post-strike agreements often include clauses that grant actors and writers veto power or compensation rights over the use of their likeness or script styles in AI training.

This closing of ranks is powerful. It sends a clear market signal: any AI company seeking mainstream adoption and commercial partnership (like API access) must first clear its training data inventory with these gatekeepers. The cost of exclusion from Hollywood’s distribution ecosystem is astronomical.

The Regulatory Ice Age: The Role of Government Oversight

While the courts and studios fight the current battle, the long-term outcome will be shaped by regulators. The ambiguity surrounding whether mass ingestion of copyrighted data constitutes Fair Use is the AI industry’s greatest legal shield—and Hollywood’s primary target.

The US Copyright Office's ongoing guidance on AI-generated works and training data will carry significant weight, although courts and Congress have the final say. If the Office moves toward stricter definitions of "transformative use" or requires transparent data sourcing, companies like ByteDance could face massive regulatory headwinds that make their current models legally toxic. This regulatory scrutiny moves the debate from "Can we train on it?" to "Should we be allowed to build a business on what we trained on?"

A restrictive ruling would force a massive, costly restructuring of existing models, potentially requiring developers to 'scrub' or replace their foundational training data—a technological feat equivalent to rebuilding the engine while flying the plane.

Future Implications: What This Means for AI Development

The crackdown on Seedance 2.0 serves as a stark warning about the future viability of "scrape-first, ask-forgiveness-later" AI development.

1. The Rise of Licensed and Synthetic Data

The most immediate implication is a massive acceleration toward **licensed data acquisition**. Companies like Adobe have successfully positioned themselves with "clean data" models (like Firefly), promising enterprises that their outputs are free from major copyright risk. Future successful generative video platforms will likely look less like open-source playgrounds and more like tightly curated, licensed libraries, perhaps even involving studios directly in data contribution agreements.

Furthermore, we will see greater investment in **synthetic data generation**—using AI to create data that *looks* like real data but has no direct lineage to copyrighted works, thereby sidestepping the core legal problem.

2. Shifting Business Models: From Volume to Verification

For businesses relying on generative AI, the priority shifts from achieving the highest possible model capability (which often requires massive, unverified data pools) to achieving the highest level of **legal verification**. Investors will begin to scrutinize the data provenance of foundational models much more closely than before. A fully licensed model whose output is 10% less impressive can now be economically superior to a more capable model that carries existential legal risk.
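The trade-off can be sketched as a simple expected-value comparison. Every number below is hypothetical and chosen only to illustrate the reasoning; none is an estimate of real revenue, litigation odds, or damages. The function name `risk_adjusted_value` is likewise an assumption, and the sketch assumes revenue scales with output quality, so a model that is 10% less impressive earns 10% less.

```python
def risk_adjusted_value(expected_revenue: float,
                        p_adverse_ruling: float,
                        liability: float) -> float:
    """Expected revenue minus the probability-weighted legal liability."""
    if not 0.0 <= p_adverse_ruling <= 1.0:
        raise ValueError("p_adverse_ruling must be between 0 and 1")
    return expected_revenue - p_adverse_ruling * liability


# Hypothetical figures in arbitrary units, for illustration only.
licensed = risk_adjusted_value(expected_revenue=90.0,   # 10% less capable
                               p_adverse_ruling=0.0,
                               liability=0.0)
scraped = risk_adjusted_value(expected_revenue=100.0,
                              p_adverse_ruling=0.25,
                              liability=200.0)

print(licensed)  # 90.0
print(scraped)   # 50.0
```

Under these made-up inputs the licensed model comes out ahead despite its weaker output. The conclusion flips if the probability or size of the liability is small enough, which is why data provenance becomes a due-diligence question rather than a footnote.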

3. The Bifurcation of the AI Landscape

We are witnessing the creation of a **bifurcated AI ecosystem**:

- The Enterprise Tier: High-cost, legally compliant models used by corporations, advertising agencies, and established media companies.

- The Open/Shadow Tier: Models developed rapidly in less regulated environments, often relying on scraped data, which may be used for non-commercial or experimental projects, but which will struggle to achieve major platform integration or mainstream enterprise adoption.

Actionable Insights for Technology Leaders

For those building or investing in generative technology, the message from Hollywood is clear:

- Audit Your Training Data Now: Do not wait for a subpoena. Conduct a thorough audit of your data ingestion pipeline. Identify high-value copyrighted material and prepare contingency plans for removal or licensing negotiations.

- Embrace Data Provenance Tools: Invest in technologies that can track where every piece of training data originated. Transparency is becoming the new currency for regulatory trust.

- Engage IP Holders Early: Instead of waiting for litigation, proactively engage content owners. Look for partnership opportunities where you can offer compensation or shared revenue in exchange for clear rights to use their data for training.
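For teams wondering what a first-pass audit might look like in practice, here is a minimal sketch of a provenance manifest and an audit pass over it. It is a starting point under stated assumptions, not a compliance tool: the `ProvenanceRecord` fields, the `CLEARED_LICENSES` allow-list, and the example URLs are all hypothetical, and a real pipeline would need legal review of what counts as cleared.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical allow-list; what actually counts as "cleared" is a legal decision.
CLEARED_LICENSES = {"licensed", "public-domain", "cc0", "synthetic"}


@dataclass(frozen=True)
class ProvenanceRecord:
    content_hash: str        # SHA-256 of the raw training item
    source: Optional[str]    # where the item came from, if known
    license: Optional[str]   # license label, if known


def make_record(content: bytes, source: Optional[str],
                license: Optional[str]) -> ProvenanceRecord:
    """Build a record whose hash ties the metadata to the exact bytes ingested."""
    return ProvenanceRecord(hashlib.sha256(content).hexdigest(), source, license)


def audit(records: List[ProvenanceRecord]) -> Dict[str, List[ProvenanceRecord]]:
    """Split records into cleared items and items needing removal or licensing review."""
    report: Dict[str, List[ProvenanceRecord]] = {"cleared": [], "needs_review": []}
    for record in records:
        cleared = (
            record.source is not None
            and record.license is not None
            and record.license.lower() in CLEARED_LICENSES
        )
        report["cleared" if cleared else "needs_review"].append(record)
    return report


# Example manifest with placeholder content and URLs.
records = [
    make_record(b"clip-a", "https://example.com/licensed/clip-a", "licensed"),
    make_record(b"clip-b", None, None),                                   # unknown origin
    make_record(b"clip-c", "https://example.com/scraped/clip-c", "unknown"),
]
report = audit(records)
print(len(report["cleared"]), len(report["needs_review"]))  # 1 2
```

Anything with a missing source or an unrecognized license lands in `needs_review`, which is the list to take into removal planning or licensing negotiations.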

The fight against Seedance 2.0 is more than a skirmish over video generation; it is the opening salvo in redefining digital ownership in the age of machine learning. If Hollywood succeeds in holding the line against systemic infringement, it lays the groundwork for a future where AI innovation and creative compensation can coexist. If it fails, the multi-trillion-dollar creative economy risks living on borrowed time.