Nvidia DreamDojo: The End of 3D Engines and the Dawn of Predictive Robotics

The world of Artificial Intelligence is accelerating at a pace that constantly forces us to rewrite the rules of engagement. Rarely, however, does a single development signal such a fundamental technical pivot as the recent unveiling of Nvidia’s DreamDojo. This open-source project is not just another tool; it represents a profound architectural shift in how we train intelligent agents, especially robots.

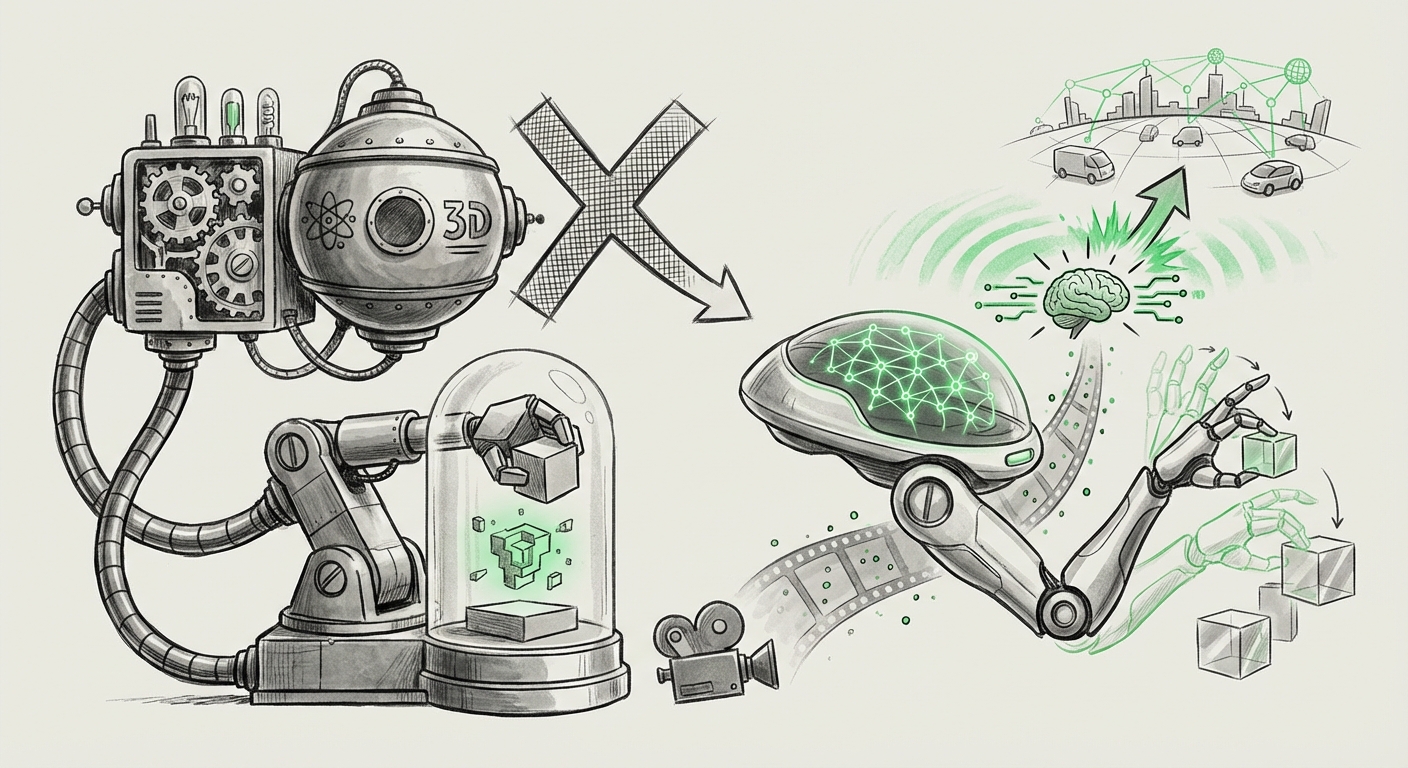

The core innovation? Moving robot training out of complex, heavy, and often brittle 3D physics engines and directly into AI-driven world models capable of generating simulated futures purely from video data. This move validates a long-held hypothesis in AI research and sets the stage for a new era of scalable, efficient, and generalized embodied intelligence.

The World Model Revolution: Learning the Rules of Reality

To understand the significance of DreamDojo, we must first understand what a "world model" is. In simple terms, imagine an AI that watches hours of video—a robot picking up a cup, a car driving down a street, a ball bouncing. Instead of just learning *what* those things look like, a world model learns the underlying *rules* that govern their motion and interaction. It learns the 'physics' implicitly.

Traditionally, teaching a robot to manipulate objects has required building a highly detailed 3D simulation (like a sophisticated video game environment). Engineers painstakingly program friction, gravity, and material properties into the simulation software. While accurate within the scope of what was modeled, this process is slow and restrictive. If the real-world task involves a novel object or environment that was not faithfully modeled in 3D, a policy trained in simulation often fails on the physical robot, a problem known as the "sim-to-real gap."

Bypassing the 3D Engine Constraint

DreamDojo attacks this problem head-on. It uses neural networks to create a predictive model trained *directly on observational data* (videos). Once trained, this model can take a current snapshot (a frame of video) and predict what the next several frames (the near future) will look like, without ever invoking a conventional physics simulator. This capability is crucial for embodied AI, allowing the agent to internally rehearse possible outcomes before committing to an action in the real world.
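As a rough illustration of what internally rehearsing outcomes can look like, the sketch below plans with a stand-in world model by random shooting: it samples candidate action sequences, rolls each one forward in imagination, and keeps the sequence whose predicted end state lands closest to a goal. Everything here is an assumption made for illustration. The names `predict_next`, `rollout_cost`, and `plan`, the 1-D state, and the idea that the model takes an action as input are hypothetical and are not DreamDojo's API.

```python
import random


def predict_next(state, action):
    """Stand-in for a learned world model: predicts the next state.

    Hypothetical toy dynamics on a 1-D position. A real model would map
    video frames (and robot actions) to predicted future frames.
    """
    return state + 0.1 * action


def rollout_cost(state, actions, goal):
    """Imagine a sequence of actions and score the final predicted state."""
    for action in actions:
        state = predict_next(state, action)
    return abs(goal - state)


def plan(state, goal, horizon=5, candidates=200, seed=0):
    """Random-shooting planner: sample action sequences, keep the best one."""
    rng = random.Random(seed)
    best_actions, best_cost = None, float("inf")
    for _ in range(candidates):
        actions = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        cost = rollout_cost(state, actions, goal)
        if cost < best_cost:
            best_actions, best_cost = actions, cost
    return best_actions, best_cost


if __name__ == "__main__":
    actions, cost = plan(state=0.0, goal=0.3)
    print("first action to execute:", actions[0])
    print("predicted distance to goal:", cost)
```

In a real system, `predict_next` would be replaced by the learned video model, and the agent would typically execute only the first action before planning again from the new observation.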

This development is consistent with academic work in this space. Research on **"World Models in Robotics"** has long argued that learned predictive models can be more efficient over time than high-fidelity, explicit simulation. DreamDojo seems to be a practical, scalable realization of this research, shifting the heavy computation from simulating and rendering geometry to running a neural network that predicts future frames.

The Trend of Scaling: Data-Driven Simulation Over Physics Engines

The push away from traditional 3D simulation is a clear industry trend, driven by the insatiable demand for data and speed required by modern foundation models. We are seeing a migration across industries—from self-driving cars to factory automation—toward systems that favor breadth of experience over perfect, narrow accuracy.

This shift is directly linked to the challenge of **"Scaling AI Simulation for Robotics."** Running millions of highly detailed physics simulations is computationally expensive and time-consuming. Neural simulators, like the one underpinning DreamDojo, can generate synthetic experiences at high throughput because a forward pass is dominated by dense matrix multiplications, which GPUs execute in parallel across large batches, rather than by the contact and collision solving that a physics engine performs at every step.
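The batching idea can be sketched in a few lines. The example below is a toy under stated assumptions, not how DreamDojo is implemented: the transition matrix `W`, the two-number state `[position, velocity]`, and the helper names `matmul` and `step_batch` are all hypothetical. It only shows that when a simulator step is a matrix product, many simulated environments advance in one operation.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows (pure Python, for clarity)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


# Hypothetical learned transition: next_state = state @ W.
# Each state is [position, velocity]; W encodes position += 0.1 * velocity.
W = [
    [1.0, 0.0],
    [0.1, 1.0],
]


def step_batch(states):
    """Advance every simulated environment with a single matrix product."""
    return matmul(states, W)


if __name__ == "__main__":
    # Three independent environments, stepped together.
    states = [[0.0, 1.0], [2.0, -1.0], [5.0, 0.0]]
    for state in step_batch(states):
        print(state)
```

A real neural simulator stacks many such layers with nonlinearities and runs them on a GPU, but the shape of the computation is the same: adding environments adds rows to the batch and does not add another simulation loop.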

For CTOs and investors, this means the cost-per-training-hour drops dramatically. If a robot needs ten million attempts to learn a complex grasp, running those attempts inside a fast, data-driven world model drastically reduces the time-to-market and capital expenditure required for deployment. It effectively decouples the speed of learning from the speed of rendering.

Corroboration: The Neural Simulator Landscape

This move is consistent with the broader recognition that "Neural Simulators are Overcoming the Fidelity Gap." While early neural simulators struggled with maintaining physical consistency over long prediction horizons, advances in architectures (often leveraging diffusion models or advanced recurrent networks) mean they are now becoming sophisticated enough to handle the complexities of real-world physics in a data-efficient manner. DreamDojo appears to be Nvidia’s major effort to standardize this neural simulation layer for the robotics community.

The Power of Open Source in Embodied AI

Nvidia’s decision to make DreamDojo open source adds another critical dimension to this analysis. In the realm of Large Language Models (LLMs), the open-source movement has proven to be a powerful engine for rapid iteration, debugging, and security auditing. Applying this philosophy to embodied AI is transformative.

When examining the trend of **"Open Source Foundation Models for Embodied AI,"** we see that access to pre-trained world models is a massive accelerant. Before DreamDojo, developing a world model meant significant internal research investment. Now, startups, academic labs, and even hobbyists can leverage Nvidia’s massive training foundation to immediately begin training task-specific policies.

- Democratization: Smaller players can now compete on task performance without needing the simulation infrastructure of tech giants.

- Generalization: Open sourcing allows the global community to feed in diverse real-world data, helping the world model learn edge cases that a single company might miss, thus making the overall system more robust.

- Safety and Trust: Open codebases allow for greater scrutiny, which is vital when deploying autonomous systems in public or industrial spaces.

Implications: From Reactive Control to Proactive Intelligence

The implications of this shift extend far beyond simply making robots train faster. We are moving from reactive control loops to proactive, predictive intelligence. This is evident in other highly dynamic fields like autonomous driving, where the ability to anticipate a pedestrian’s path three seconds in advance is the difference between a successful maneuver and an accident.

The success of DreamDojo hinges on its ability to generalize these predictive skills, aligning with the importance of **"Predictive Modeling for Autonomous Systems Beyond Vision."** If the world model can accurately predict the chaotic dynamics of a warehouse floor from visual input alone, the same predictive approach could, in principle, be adapted to other domains where anticipating the trajectory of dynamic variables is key, such as financial markets or weather, provided suitable training data exists for them.

Practical Implications for Industry

For businesses looking to adopt automation, the barrier to entry is changing:

- Focus on Data Quality, Not Simulation Fidelity: The new bottleneck will shift from needing perfect 3D models to acquiring high-quality, diverse video data to feed the world model during its initial training phase.

- Rapid Prototyping: Deploying new robotic tasks, such as a new assembly line function, could shrink from months to weeks if most of the policy training can happen inside the virtual DreamDojo environment.

- Skill Transfer: A robot trained to stack blocks in simulation might quickly adapt to packaging irregularly shaped items because the world model has learned the underlying geometric relationships, not just the specific textures of the blocks.

The Road Ahead: Challenges and Actionable Insights

While DreamDojo is a landmark achievement, the path to fully autonomous, world-model-driven robotics is not without obstacles. The primary challenges remain the long predictive horizon and the fidelity gap. While short-term predictions (the next few frames) are often excellent, maintaining physical consistency over hundreds of simulated steps remains an open research problem. Each predicted frame is fed back in as input for the next prediction, so small errors compound rapidly.
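To make the accumulation concrete, here is a minimal sketch under deliberately simple assumptions: the true dynamics keep a quantity constant, and a hypothetical learned model is off by 1% on every step. The gains `TRUE_GAIN` and `MODEL_GAIN` and the helper names are invented for illustration and say nothing about DreamDojo's actual error rates.

```python
def rollout(x0, gain, steps):
    """Autoregressive rollout: each prediction is the input to the next step."""
    xs = [x0]
    for _ in range(steps):
        xs.append(gain * xs[-1])
    return xs


TRUE_GAIN = 1.0    # hypothetical "real" dynamics: the value stays constant
MODEL_GAIN = 1.01  # hypothetical learned model with a 1% error per step


def relative_error(steps):
    """Relative gap between the model's rollout and the truth after `steps`."""
    truth = rollout(1.0, TRUE_GAIN, steps)[-1]
    predicted = rollout(1.0, MODEL_GAIN, steps)[-1]
    return abs(predicted - truth) / abs(truth)


if __name__ == "__main__":
    for steps in (1, 10, 100):
        print(steps, "steps ->", round(relative_error(steps), 4))
```

In this toy setting the error grows as 1.01 to the power of the number of steps, minus one: about 1% after one step, roughly 10% after ten, and well over 100% after a hundred. Real models fail in less tidy ways, but the compounding mechanism is the same.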

Furthermore, the open-source nature requires careful management. Ensuring that the core model doesn't inadvertently learn undesirable or unsafe behaviors from heterogeneous community data is paramount.

Actionable Insights for Technology Leaders

- Invest in Data Pipelines: Assume your next-generation robotics training will rely on massive video datasets. Prioritize infrastructure for data capture, cleaning, and labeling that feeds into world models.

- Experiment with Neural Simulation: Do not wait for DreamDojo to be "perfect." Start experimenting with integrating lightweight neural simulation layers into your current simulation stacks (like Nvidia Isaac Sim) to test latency improvements and speed gains now.

- Embrace Open Foundations: Actively contribute to or adopt open-source embodied AI projects. The future capabilities of your proprietary robots will be enhanced by the generalized knowledge embedded in these communal foundation models.

Conclusion: A New Foundation for Intelligence

Nvidia’s DreamDojo is more than a technical curiosity; it is a strategic declaration. It signals the end of an era where robotics training was shackled to the slow, deterministic constraints of graphical physics engines. By harnessing the power of AI to model reality directly from observation, we unlock simulation at the speed of computation, not the speed of rendering.

This transition to data-driven world models is the necessary foundation for achieving true general-purpose robotics. As these models become more sophisticated and open, the deployment of intelligent, adaptable machines across every sector—from logistics and healthcare to manufacturing—will accelerate far beyond current projections. The future of robotics is no longer built in a virtual workshop; it is dreamed by an AI that has learned the rules of reality itself.