The Trinity of AI Power: Capital, Compute, and Global Strategy Reshaping the Future

The current landscape of Artificial Intelligence is defined not just by incremental software updates, but by seismic shifts in finance, geopolitical positioning, and foundational technology. Recent events—a massive capital injection into a leading AI lab, a major emerging economy staking its claim on the global AI stage, and the relentless march toward superior model architecture—paint a clear picture of where the industry is heading.

As analysts, we must look beyond the headlines. These developments are interconnected, forming a powerful trinity that determines who leads the next decade of technological progress. We analyze the significance of these three pillars and what they mean for business strategy and societal readiness.

I. The Gravity of Capital: Why OpenAI's Funding Matters More Than Just Dollars

When a leading AI lab secures a staggering capital infusion, often at a valuation that defies traditional metrics, it signals a profound shift in investment logic. This isn't just about hiring engineers; it’s about **buying access to the future's most constrained resource: compute.**

The Compute Arms Race

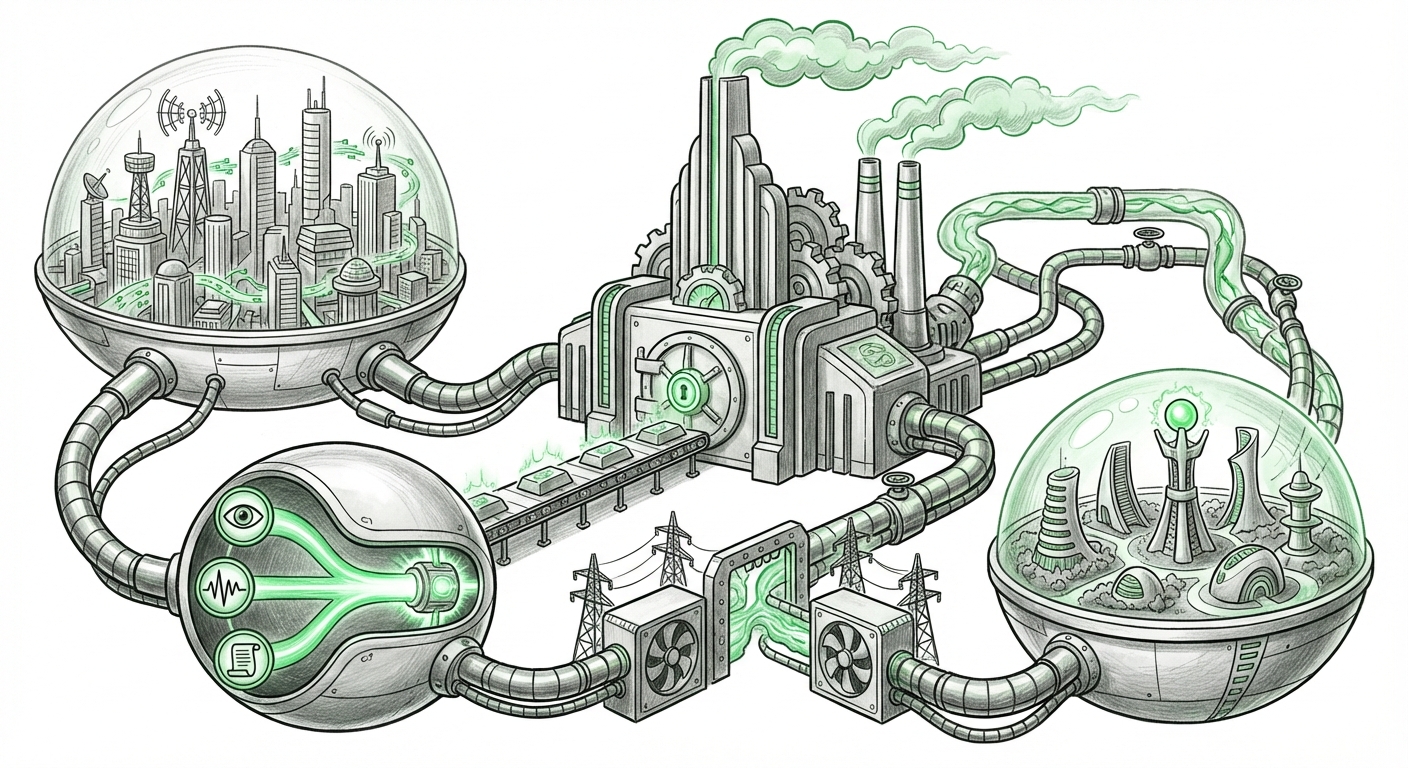

For a general audience, imagine building the world’s largest factory. You need land, materials, and specialized machinery. In AI, the "land" is the market, the "materials" are the data, and the specialized machinery is the GPU cluster. The recent funding surges directly translate into purchasing power for these clusters.

Broader funding trends make one thing clear: while the market is hot, capital gravitates most strongly toward companies that can deploy it at massive scale. This creates a bifurcated ecosystem:

- The Titans: Companies with massive funding can secure supply contracts years in advance, effectively locking out smaller competitors from cutting-edge hardware necessary for training the next generation of trillion-parameter models.

- The Specialists: Smaller startups must pivot to efficiency, focusing on fine-tuning existing open-source models or developing specialized, vertical applications where compute requirements are lower.
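
To make the gap between the two tiers concrete, here is a back-of-the-envelope sketch. It relies on the commonly cited rule of thumb that training a dense transformer costs roughly 6 × parameters × tokens floating-point operations. The model sizes, token counts, per-accelerator throughput, and utilization below are illustrative assumptions, not figures from any lab or vendor.

```python
"""Rough training-compute estimate using the common ~6 * N * D FLOPs heuristic."""

SECONDS_PER_HOUR = 3600


def training_flops(params: float, tokens: float) -> float:
    """Approximate total training FLOPs for a dense transformer."""
    return 6.0 * params * tokens


def accelerator_hours(total_flops: float, peak_flops_per_s: float, utilization: float) -> float:
    """Accelerator-hours needed at a sustained utilization in (0, 1]."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    return total_flops / (peak_flops_per_s * utilization) / SECONDS_PER_HOUR


# Assumed hardware figures, chosen as round numbers for illustration only.
ASSUMED_PEAK_FLOPS = 1e15   # 1 PFLOP/s per accelerator (hypothetical)
ASSUMED_UTILIZATION = 0.4   # sustained fraction of peak (hypothetical)

# Hypothetical workloads: (parameter count, training tokens).
scenarios = {
    "titan pre-training (1e12 params, 2e13 tokens)": (1e12, 2e13),
    "specialist fine-tune (7e9 params, 1e9 tokens)": (7e9, 1e9),
}

for name, (n_params, n_tokens) in scenarios.items():
    flops = training_flops(n_params, n_tokens)
    hours = accelerator_hours(flops, ASSUMED_PEAK_FLOPS, ASSUMED_UTILIZATION)
    print(f"{name}: {flops:.2e} FLOPs, ~{hours:,.0f} accelerator-hours")
```

Under these assumed numbers, the pre-training run needs on the order of 83 million accelerator-hours while the fine-tune needs about 29, which is why the two tiers end up with such different procurement strategies.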

Implications for Business Strategy

For established enterprises, this means strategic partnerships with compute providers (cloud hyperscalers) or direct investment into foundational model research are no longer optional luxuries—they are strategic necessities to avoid technological obsolescence. If you cannot afford the training cost, you must master the deployment cost.

We must heed the warnings from financial analysts tracking these trends, as unsustainable valuations often precede market corrections. However, in AI infrastructure, the cost of *not* investing today can be far higher tomorrow.

II. Geopolitics Meets Silicon: India's Assertive AI Stance

The global AI narrative has long centered on Silicon Valley and a few Western/Eastern economic blocs. However, recent high-profile summits and national strategy announcements from countries like India signal a decisive move toward establishing digital sovereignty in the AI era.

Building the Indigenous AI Stack

India’s strategy, which centers on developing its Digital Public Infrastructure (DPI) and fostering Indic language models, is a direct response to Western dominance.

This focus is vital for several reasons:

- Cultural Relevance: Models trained predominantly on English data often fail in nuanced local contexts. Indigenous development ensures AI tools are effective for India's vast, multilingual population.

- Data Sovereignty: Keeping the training data, governance frameworks, and model weights within national borders is a crucial step in data security and regulatory control.

- Economic Leapfrogging: By becoming a producer, not just a consumer, of AI technology, India aims to capture significant economic value in services, healthcare, and governance, similar to its success with IT outsourcing.

Future World Order

This geopolitical positioning impacts every global tech firm. Companies entering the Indian market must now contend with strong governmental preference for utilizing or integrating locally built AI solutions. The future of AI is not singular; it is becoming a landscape of powerful regional ecosystems, each with its own preferred standards and trusted models.

III. The Next Frontier: Technical Leaps Beyond Scale

While billions are spent on larger models, the true technical frontier is often defined by *efficiency* and *capability*. Recent model announcements hint at this, and technical deep dives suggest that the next generation is focused less on size and more on integration.

The Multimodal Mandate

The technical challenge currently exciting researchers revolves around seamless multimodality. We are moving past models that handle text and images separately. The current objective is to create unified intelligence.

Imagine an AI that can watch a complex engineering video, understand the spoken commands, read the displayed schematics, and then generate optimized code based on that holistic input. This requires architectural innovations that allow different data types (vision, sound, text, sensor data) to influence a single reasoning core simultaneously.
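
One simple pattern for letting several data types influence a single reasoning core is to project each modality into a shared token space, concatenate the tokens, and run attention over the combined sequence. The sketch below illustrates that idea only; it is not the architecture of any particular model. The encoder outputs are random stand-ins, and the names `fuse`, `self_attention`, and `SHARED_DIM` are hypothetical.

```python
import math
import random


def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both as lists of rows."""
    columns = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in columns] for row in a]


def softmax(row):
    """Numerically stable softmax over one list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def random_matrix(rows, cols, rng):
    """Stand-in for learned weights or encoder outputs (random, for illustration)."""
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def fuse(modalities, projections):
    """Project each modality to the shared width, then concatenate along the token axis."""
    tokens = []
    for name, features in modalities.items():
        tokens.extend(matmul(features, projections[name]))
    return tokens


def self_attention(tokens):
    """Single-head scaled dot-product attention with identity Q/K/V maps (a simplification)."""
    scale = math.sqrt(len(tokens[0]))
    transposed = [list(col) for col in zip(*tokens)]
    scores = matmul(tokens, transposed)
    weights = [softmax([s / scale for s in row]) for row in scores]
    return matmul(weights, tokens), weights


rng = random.Random(0)
SHARED_DIM = 8  # assumed width of the shared token space

# Hypothetical encoder outputs: (number of tokens) x (modality-specific feature size).
inputs = {
    "vision": random_matrix(4, 16, rng),
    "audio": random_matrix(3, 12, rng),
    "text": random_matrix(5, 10, rng),
}
projections = {
    name: random_matrix(len(feats[0]), SHARED_DIM, rng) for name, feats in inputs.items()
}

tokens = fuse(inputs, projections)
mixed, weights = self_attention(tokens)
print(len(tokens), "tokens of width", len(tokens[0]))
```

Because every row of `weights` spans all twelve tokens, each output token is a mixture of vision, audio, and text inputs at once. Production systems add learned query, key, and value projections, multiple heads, and positional information, all of which are omitted here.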

Actionable Insight: Efficiency vs. Brute Force

For engineers, the actionable insight is twofold:

- For Researchers: Focus shifts to novel attention mechanisms, sparsity techniques, and distillation methods that shrink large models into deployable forms while retaining high performance.

- For Developers: Look for breakthroughs in efficiency that enable powerful AI to run locally on edge devices (smartphones, industrial sensors), reducing latency and reliance on distant cloud servers.
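
As an illustration of the distillation idea mentioned above, the following sketch computes a standard knowledge-distillation loss: the KL divergence between temperature-softened teacher and student output distributions, scaled by the square of the temperature. The logits and the temperature value are made-up examples, and a real training loop would usually combine this term with an ordinary task loss.

```python
import math


def log_softmax(logits, temperature):
    """Log-probabilities of temperature-scaled logits, computed stably."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    log_total = peak + math.log(sum(math.exp(z - peak) for z in scaled))
    return [z - log_total for z in scaled]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by temperature squared."""
    if len(teacher_logits) != len(student_logits):
        raise ValueError("teacher and student must score the same classes")
    log_p = log_softmax(teacher_logits, temperature)
    log_q = log_softmax(student_logits, temperature)
    kl = sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))
    return kl * temperature ** 2


# Hypothetical logits over three classes.
teacher = [4.0, 1.0, 0.0]
close_student = [3.5, 1.2, 0.1]
far_student = [0.0, 1.0, 4.0]

print("close student loss:", distillation_loss(teacher, close_student))
print("far student loss:  ", distillation_loss(teacher, far_student))
```

A student whose outputs track the teacher's gets a loss near zero, while one that ranks the classes differently is penalized heavily. That signal is what lets a small model absorb the behavior of a large one.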

IV. The Unseen Bottleneck: Infrastructure as the Ultimate Gatekeeper

None of the above—neither the massive funding nor the advanced models—is possible without physical silicon. The third, often invisible, pillar underpinning the entire AI structure is the **compute supply chain**.

Reports on global semiconductor supply and AI hardware demand point to an intense bottleneck. Every leading AI lab is fighting for a finite supply of the most advanced accelerators. This physical limitation dictates the pace of AI development more strictly than any budget line item.

The Infrastructure Reality Check

For CEOs and CIOs, this means AI readiness is fundamentally tied to infrastructure procurement:

- Lead Time is Everything: Securing high-end AI infrastructure now requires commitment cycles of 18 to 24 months. Decisions made today lock in capabilities for years.

- Geopolitical Risk: Since chip manufacturing is heavily concentrated geographically, supply chain stability is now a direct component of AI risk management. Diversification of cloud partners and compute types is paramount.

- Cooling and Power: The sheer energy density of modern AI clusters means that data center readiness (power delivery and cooling systems) is becoming the new limiting factor, moving beyond chip availability itself.
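
The power constraint in the last point can be sized with simple arithmetic. The sketch below estimates facility power from accelerator count using power usage effectiveness (PUE, the ratio of total facility power to IT equipment power), then inverts the calculation for a fixed site budget. The wattage, host overhead, PUE, and site size are assumed placeholder values, not vendor or operator figures.

```python
def facility_power_mw(accelerators, watts_per_accelerator, host_overhead=0.3, pue=1.3):
    """Total facility draw in megawatts.

    host_overhead: assumed extra IT power (CPUs, networking, storage) as a
                   fraction of accelerator power.
    pue:           assumed power usage effectiveness (facility power / IT power).
    """
    it_watts = accelerators * watts_per_accelerator * (1.0 + host_overhead)
    return it_watts * pue / 1e6


def max_accelerators(site_mw, watts_per_accelerator, host_overhead=0.3, pue=1.3):
    """Largest whole number of accelerators a site's power budget can support."""
    watts_each = watts_per_accelerator * (1.0 + host_overhead) * pue
    return int(site_mw * 1e6 // watts_each)


# Hypothetical cluster: 10,000 accelerators at an assumed 700 W each.
print(f"{facility_power_mw(10_000, 700):.2f} MW needed")

# Hypothetical site with a 20 MW grid connection.
print(max_accelerators(20, 700), "accelerators fit")
```

With these assumed inputs the cluster draws about 11.8 MW, and a 20 MW site tops out near 16,900 accelerators regardless of how many chips can be bought.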

If capital is the fuel, and new models are the vehicle, then compute infrastructure is the road network—and right now, the road network is congested and under construction.

Synthesizing the Future: A Multipolar, High-Stakes Era

The recent confluence of events confirms that the AI revolution is transitioning from an academic novelty to a mature, capital-intensive industrial sector defined by geopolitical competition.

What does this mean for the future? We are entering an era of AI multipolarity. The leading edge will be defined by who can marshal the most capital for compute access, creating a clear advantage for established tech giants and their backers. Simultaneously, national strategies, particularly from large, digitally sophisticated nations like India, will carve out distinct, sovereign AI spheres.

The technical trajectory confirms that while scale remains important, the value will increasingly accrue to those who can deploy smarter, more efficient, and contextually relevant multimodal systems. For businesses, the mandate is clear: Accelerate infrastructure planning, prioritize partnerships that de-risk supply chain exposure, and aggressively experiment with application-layer AI that leverages the specialized capabilities these new frontiers promise. Ignoring any one of these three pillars—Capital, Geopolitics, or Compute—is a direct risk to future relevance.