The AI Super-Cycle: Unpacking Capital Leaps, Geopolitical Shifts, and the Next Frontier of Models

The Artificial Intelligence landscape is moving at a velocity that defies traditional industry cycles. What we are witnessing is not merely iterative improvement but a fundamental re-architecting of the technology sector, driven by unprecedented capital flow, strategic geopolitical maneuvering, and relentless technical innovation. Recent developments, highlighted by massive funding rounds, significant national commitments like those from India, and the pursuit of fundamentally new model architectures, signal that we are firmly in an AI Super-Cycle.

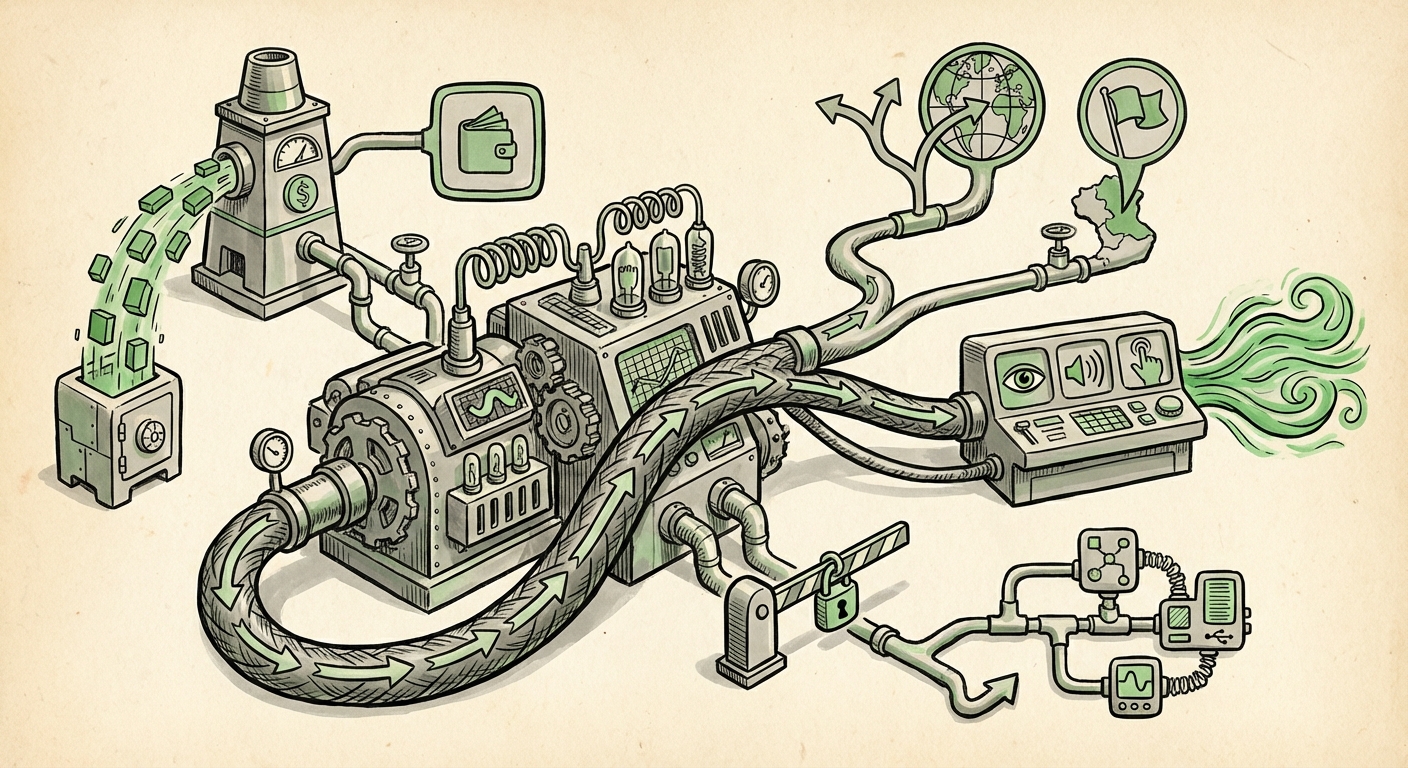

For technologists and business leaders alike, understanding the interplay between these three forces—Money, Map (Geopolitics), and Mechanics (Models)—is critical to forecasting the next decade of digital transformation.

1. The Trillion-Dollar Race: Valuations and the Capital Barrier to Entry

The most visible sign of this Super-Cycle is the sheer volume of money pouring into foundational AI research and deployment. When leading labs secure funding rounds that push their valuations into the stratosphere, it signals two critical truths about the state of modern AI.

Firstly, frontier models are prohibitively expensive to build. Training the largest models, with parameter counts reported in the hundreds of billions and beyond, requires specialized, cutting-edge hardware (primarily GPUs and similar accelerators) and massive, dedicated energy resources. This cost acts as an almost insurmountable barrier to entry. Only a handful of well-capitalized entities, those backed by mega-investors or tech giants, can afford to operate at the very edge of capability.
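The cost claim above can be made concrete with back-of-the-envelope arithmetic. The sketch below uses a widely cited rule of thumb that training a dense Transformer takes roughly 6 × N × D floating-point operations for N parameters and D training tokens. Every input here (model size, token count, per-GPU throughput, utilization, hourly price) is an illustrative assumption, not a figure from any lab or from the reporting this article draws on.

```python
def training_cost_estimate(
    n_params: float,
    n_tokens: float,
    peak_flops_per_gpu: float,
    utilization: float,
    usd_per_gpu_hour: float,
) -> dict:
    """Rough training cost from the ~6 * N * D FLOPs rule of thumb."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    # Approximate total compute for one training run.
    total_flops = 6.0 * n_params * n_tokens
    # A GPU rarely sustains its peak rate; scale by assumed utilization.
    effective_flops_per_sec = peak_flops_per_gpu * utilization
    gpu_hours = total_flops / effective_flops_per_sec / 3600.0
    return {
        "total_flops": total_flops,
        "gpu_hours": gpu_hours,
        "cost_usd": gpu_hours * usd_per_gpu_hour,
    }


if __name__ == "__main__":
    # Hypothetical inputs chosen only to show the order of magnitude.
    est = training_cost_estimate(
        n_params=1e12,            # assumed: 1 trillion parameters
        n_tokens=1e13,            # assumed: 10 trillion training tokens
        peak_flops_per_gpu=1e15,  # assumed: 1 PFLOP/s peak per accelerator
        utilization=0.4,          # assumed: 40% of peak sustained
        usd_per_gpu_hour=2.0,     # assumed: rental price per GPU-hour
    )
    print(f"GPU-hours: {est['gpu_hours']:,.0f}")
    print(f"Cost (USD): {est['cost_usd']:,.0f}")
```

Under these assumed inputs, a single run comes to roughly 42 million GPU-hours and about $83 million, before counting failed runs, experimentation, staff, or data costs. The point is the order of magnitude, not the specific number.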

Secondly, capital compounds the lead of incumbents. The continued massive funding for entities like OpenAI, sometimes accompanied by complex secondary market sales, reinforces their dominance. The capital these labs raise is not just paper wealth; it translates into purchasing power for compute clusters, which helps them stay well ahead of smaller competitors in model training runs.

Implication for Business: Businesses are increasingly reliant on renting capability rather than building it themselves. Strategic vendor lock-in becomes a significant risk. The choice of which foundational model provider to partner with is less a feature preference and more a long-term bet on which technology stack will command the necessary infrastructure.

2. The Geopolitical Chessboard: India's Ascent and the Digital Sovereignty Push

AI is no longer just a technical pursuit; it is the central pillar of 21st-century economic and military power. The global AI competition is heating up, moving beyond the US-China dynamic to include emerging powerhouses seeking technological sovereignty. India's recent high-profile AI summit and strategic push underscore this shift.

India’s strategy is particularly fascinating because it leverages a distinctive strength: Digital Public Infrastructure (DPI). Systems like Aadhaar (digital identity) and UPI (payment infrastructure) have digitized services for a massive population, generating structured data at a scale few other nations can match. The ambition is to create AI models trained on this data and tailored for Indian languages, contexts, and use cases, thereby offering a credible alternative to models optimized primarily for Western or East Asian data.

This ambition clashes directly with the capital concentration mentioned earlier. Can a nation build models that compete with Google or OpenAI using a national strategy, or will it become reliant on licensing foreign technology? The success or failure of national AI initiatives like India's will define the next era of global technological alignment.

Actionable Insight for Policy Makers: Countries must decide whether to invest heavily in national compute capacity (the expensive route) or focus on becoming a prime consumer and customizer of foreign frontier models, accepting technology dependency in exchange for speed of adoption.

3. The Next Generation: Multimodality and the Quest for True Reasoning

While today’s celebrated models excel at language generation, the technical community is already looking past the current Transformer-based Large Language Models (LLMs). The "Next Frontier" is defined by three technical demands: efficiency, multimodality, and reasoning.

Efficiency: Making AI Ubiquitous

The cost of running inference (using the model) for enormous LLMs is often too high for ubiquitous deployment, especially on local devices like smartphones or industrial sensors. Research is intensely focused on efficiency: creating smaller, faster models (Small Language Models or SLMs) that can perform specialized tasks locally, without network round-trip latency or high cloud costs. This is a precondition for embedding AI in everyday devices.
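A quick way to see why model size and numeric precision matter for local deployment is to estimate the memory needed just to hold the weights. The sketch below is a simplification under stated assumptions: it counts weights only (no activations or caches), treats 1 GB as 1e9 bytes, and uses a hypothetical `headroom` fraction for how much of a device's memory the model may occupy. The model sizes and device memory in the usage example are illustrative, not measurements.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(n_params: float, precision: str) -> float:
    """Memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[precision] / 1e9


def fits_on_device(
    n_params: float,
    precision: str,
    device_memory_gb: float,
    headroom: float = 0.5,
) -> bool:
    """True if the weights fit in the share of device memory left for the model.

    `headroom` is an assumed fraction; the rest is reserved for the OS,
    other apps, and runtime buffers.
    """
    return weight_memory_gb(n_params, precision) <= device_memory_gb * headroom


if __name__ == "__main__":
    # Hypothetical 3-billion-parameter SLM on a phone with 8 GB of memory.
    for precision in ("fp16", "int4"):
        size = weight_memory_gb(3e9, precision)
        ok = fits_on_device(3e9, precision, device_memory_gb=8.0)
        print(f"3B @ {precision}: {size:.1f} GB, fits: {ok}")
    # Hypothetical 70-billion-parameter model at fp16, for contrast.
    print(f"70B @ fp16: {weight_memory_gb(70e9, 'fp16'):.1f} GB")
```

In this example the 3-billion-parameter model needs about 6 GB at 16-bit precision but about 1.5 GB at 4-bit, which is the difference between not fitting and fitting within the assumed budget. The 70-billion-parameter model needs about 140 GB for weights alone.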

Multimodality: Seeing, Hearing, and Doing

The next generation is inherently multimodal. Current models often handle different data types (text, images, video) through separate encoders stitched together. True next-generation models will be designed natively to process and reason across sensory inputs simultaneously. Imagine an AI system that watches a factory floor video, reads the maintenance manual, and dictates the repair steps—all seamlessly integrated. This requires fundamental architectural changes beyond simple scaling.
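The "separate encoders stitched together" pattern described above is often called late fusion. The toy sketch below shows only the shape of that design: each modality is encoded on its own, and the results are joined afterward. The encoders are hypothetical stand-ins (simple bucketing of character codes and pixel values), not real models, and all function names are illustrative.

```python
from typing import List


def encode_text(text: str, dim: int = 4) -> List[float]:
    """Hypothetical stand-in for a text encoder: buckets character codes."""
    vec = [0.0] * dim
    for i, ch in enumerate(text):
        vec[i % dim] += ord(ch) / 1000.0
    return vec


def encode_image(pixels: List[List[int]], dim: int = 4) -> List[float]:
    """Hypothetical stand-in for an image encoder: buckets pixel intensities."""
    vec = [0.0] * dim
    flat = [p for row in pixels for p in row]
    for i, p in enumerate(flat):
        vec[i % dim] += p / 255.0
    return vec


def late_fusion(text: str, pixels: List[List[int]]) -> List[float]:
    """Encode each modality separately, then concatenate the embeddings."""
    return encode_text(text) + encode_image(pixels)


if __name__ == "__main__":
    fused = late_fusion("valve stuck", [[255, 0], [0, 255]])
    print(len(fused))  # 4 text dimensions followed by 4 image dimensions
```

Note that the text and image never influence each other's encoding here; they only meet after each encoder has finished. A natively multimodal design would instead let the modalities interact inside one shared model.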

Future Implication: When models achieve true multimodal reasoning, the scope of automation explodes beyond clerical tasks into complex physical and diagnostic domains. This is where AI truly begins to interact with the physical world in sophisticated ways.

4. The Great Platform War: Competition and Governance

The massive capital required to build frontier models has created intense competition among the tech behemoths. Microsoft’s deep partnership with OpenAI creates a powerful ecosystem advantage, pushing competitors such as Google to accelerate their own timelines and to deploy significant capital elsewhere, including investments in rivals like Anthropic.

This strategic funding race is about securing preferred access to the most capable models and the intellectual property behind them.

However, this rapid ascent is met with increasing friction from regulators. As models become more capable and ubiquitous, concerns over safety, bias, economic disruption, and even existential risk intensify. The political conversation is shifting rapidly from "What can AI do?" to "What should AI be allowed to do?"

Regulatory frameworks, such as the EU AI Act, are attempting to catch up. The key challenge lies in regulating frontier capabilities without stifling the innovation that drives economic growth. For any organization deploying AI, navigating this growing thicket of governance—from data privacy to model transparency—is becoming as complex as the engineering itself.

Practical Implications for Your Business:

- Build vs. Buy Reassessment: Unless you are a hyperscaler, focus on building proprietary value *on top* of foundational models, rather than trying to replicate them.

- Geopolitical Risk Mapping: Understand where your data originates and where your chosen model provider is headquartered. Supply chain security now includes AI model access.

- Compliance Readiness: Begin auditing current AI use cases against emerging global standards for transparency and explainability, preparing for future compliance mandates.
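The risk-mapping and compliance steps above can start as a simple inventory. The sketch below is one possible shape for it, assuming a record per AI use case with the provider's headquarters, the data's country of origin, and whether transparency documentation exists. The field names, the two checks, and the example entries are all hypothetical; real criteria would come from your own legal and security review.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AIUseCase:
    name: str
    provider: str
    provider_hq: str             # country code of the provider's headquarters
    data_origin: str             # country code where the data originates
    has_transparency_docs: bool  # e.g. documented model behavior and limits


def review_flags(use_case: AIUseCase) -> List[str]:
    """Return items that warrant a closer look for this use case."""
    flags = []
    if use_case.provider_hq != use_case.data_origin:
        flags.append("cross-border: data origin differs from provider HQ")
    if not use_case.has_transparency_docs:
        flags.append("missing transparency/explainability documentation")
    return flags


if __name__ == "__main__":
    # Hypothetical inventory entries for illustration only.
    inventory = [
        AIUseCase("support-chatbot", "ExampleAI", "US", "IN", False),
        AIUseCase("doc-search", "LocalLab", "IN", "IN", True),
    ]
    for uc in inventory:
        print(uc.name, review_flags(uc))
```

Even a list this small makes the audit repeatable: new use cases are added as records, and the checks can grow as regulatory requirements become clearer.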

The convergence of near-limitless funding, ambitious national strategies, and groundbreaking technical evolution is creating a volatile, yet opportunity-rich environment. The AI Super-Cycle demands agility, deep technical literacy, and a keen awareness of the global political stage upon which this technology is being built.

Conclusion: Navigating the AI Super-Cycle

We are witnessing a technological moment comparable to the invention of the internet or the microprocessor. The scale of investment is cementing the lead of a few players, while nations like India are scrambling to ensure local relevance. Meanwhile, researchers push the boundaries toward systems that understand the world not just through text, but through sight, sound, and interaction.

For the average user and the enterprise architect, the key takeaway is momentum. AI adoption will not slow down. Businesses that treat AI as a core strategic imperative—integrating financial forecasting with geopolitical awareness and technical roadmap planning—will be those best equipped to harness the revolutionary potential unleashed by this capital-fueled, technologically advanced, and globally contested AI Super-Cycle.