The $500 Billion AI Reality Check: Why the Stargate Project Stall Signals a New Era of Infrastructure Battle

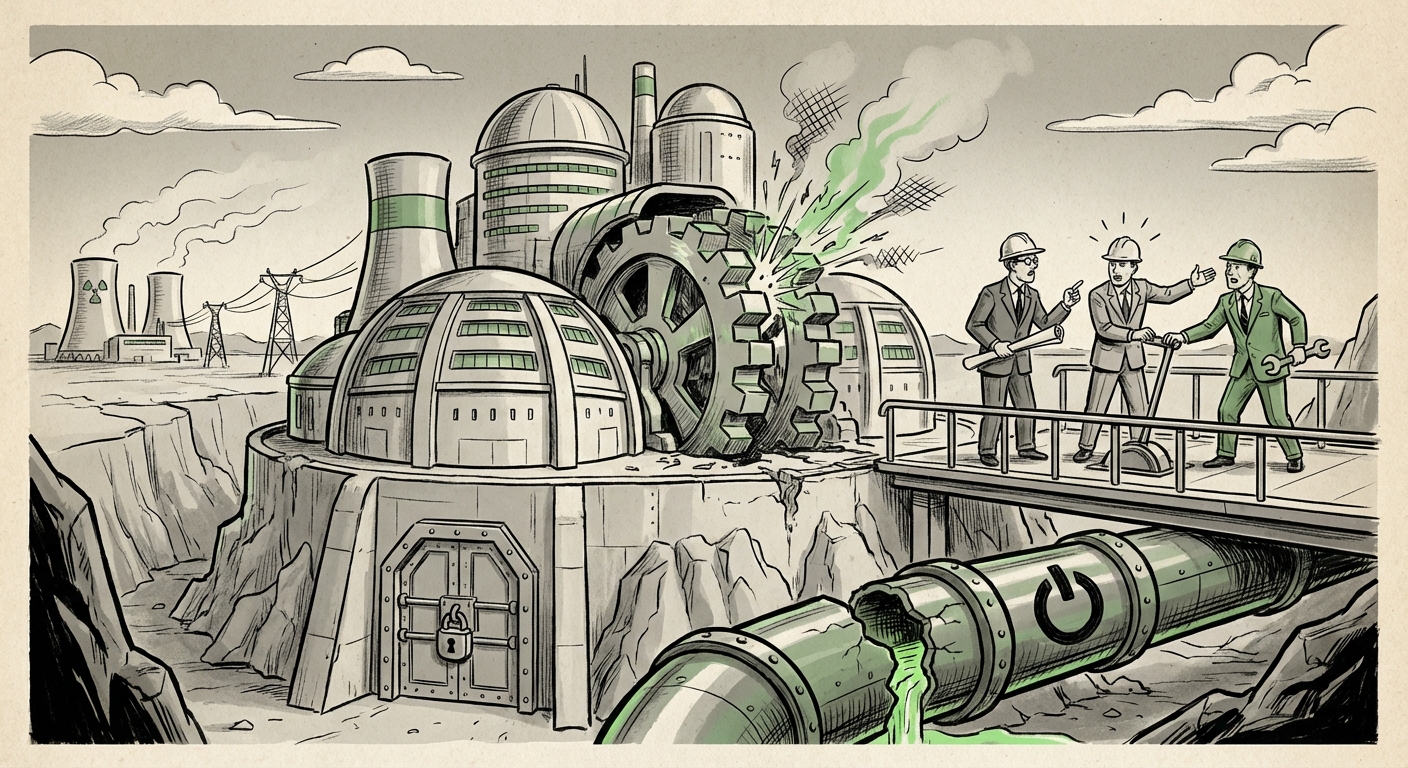

The race to build Artificial General Intelligence (AGI) is no longer just a contest of algorithms; it has become a brutal battle for physical resources. The reported stalling of the "Stargate" project—a supposed $500 billion endeavor intended to create the world's largest AI data centers—is more than just a footnote in tech news. It serves as a profound, flashing warning sign about the architectural bottlenecks confronting the entire AI industry.

When OpenAI, the perceived vanguard of AI development, partners with giants like Oracle for cloud infrastructure and SoftBank for strategic capital, we expect a smooth, forward march. However, reports that this monumental project is grinding to a halt over disputes among the partners highlight a harsh reality: the future of AI compute requires marrying bleeding-edge science with messy, traditional realities like debt financing, energy procurement, and multi-party governance.

The Sheer Ambition: Corroborating the Compute Demand

To understand why the stall is significant, we must first grasp the scale being attempted. A $500 billion infrastructure commitment implies a compute cluster that dwarfs existing hyperscale deployments. For context, even multibillion-dollar investments by Microsoft and Google in new data centers are often dedicated to general cloud expansion; a project of Stargate's magnitude would be aimed squarely at training and running models far larger than GPT-4.

This demand confirms a core technological trend: AI scaling is transitioning from an incremental software upgrade to a fundamental civil engineering challenge.

We are moving past the era where a few thousand GPUs sufficed for groundbreaking work. Frontier training runs now reportedly use tens of thousands of specialized processors, and increasingly hundreds of thousands, working in tight concert; a project on Stargate's scale would push that figure higher still. This level of orchestration demands physical infrastructure that is resilient, extremely fast (requiring cutting-edge networking), and massive. The planned capital expenditures of the major players support the premise that the industry needs such clusters, even if Stargate itself falters.

This context frames the situation: the ambition is real, driven by demonstrable technological needs. The problem isn't demand; it’s execution.
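To make the scale concrete, here is a rough back-of-envelope sketch in Python. Every input is an illustrative assumption chosen for round numbers, not a reported figure for Stargate: the share of the budget spent on accelerators, the all-in cost per accelerator, the power draw per accelerator, and the facility's power usage effectiveness (PUE) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ClusterAssumptions:
    """Illustrative placeholders only; none of these are reported figures."""
    budget_usd: float                # total capital budget
    hardware_share: float            # fraction of budget spent on accelerators
    cost_per_accelerator_usd: float  # all-in cost per accelerator (chip, server, network share)
    watts_per_accelerator: float     # IT power per accelerator, including its host share
    pue: float                       # power usage effectiveness: facility power / IT power


def estimate_cluster(a: ClusterAssumptions) -> dict:
    """Convert a capital budget into an accelerator count and a power requirement."""
    accelerators = int(a.budget_usd * a.hardware_share / a.cost_per_accelerator_usd)
    it_power_gw = accelerators * a.watts_per_accelerator / 1e9
    facility_power_gw = it_power_gw * a.pue
    return {
        "accelerators": accelerators,
        "it_power_gw": it_power_gw,
        "facility_power_gw": facility_power_gw,
    }


if __name__ == "__main__":
    # Hypothetical inputs: half of a $500B budget goes to accelerators at
    # $50k each, drawing 1.5 kW apiece, in a facility with a PUE of 1.2.
    result = estimate_cluster(ClusterAssumptions(500e9, 0.5, 50_000, 1_500, 1.2))
    for name, value in result.items():
        print(f"{name}: {value:,.1f}")
```

Under these assumed inputs the budget works out to about five million accelerators and roughly 9 GW of facility power. The exact numbers matter less than the structure of the calculation: the power requirement scales linearly with the accelerator count, which is why energy procurement ends up on the critical path.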

The Financial Friction: Lenders Grow Cautious in the AI Gold Rush

The most immediate reason for a project of this size to stall is often financial. The original reports pointed toward lender hesitancy. This signals a critical juncture for AI infrastructure financing. Previously, venture capital poured money into the software layer (the models themselves), accepting high risk for exponential return. Now, the focus shifts to the physical backbone—data centers, power grids, and cooling systems.

Building $500 billion worth of physical assets requires something different: massive, long-term, low-interest debt from banks and institutional investors. These lenders are far more risk-averse than VCs. They ask hard questions:

- Return Longevity: How long will today's leading GPU architecture remain dominant before a competitor renders the hardware obsolete?

- Energy Security: Can the power supply for this facility be guaranteed for 10-15 years, despite rising global energy costs and regulatory pressure?

- Valuation Stability: Is the valuation of the AI entity (OpenAI) stable enough to guarantee repayment, or is it built on hype that could deflate?

This financing hurdle suggests that the "AI Bubble" dynamic is hitting the real economy. The capital structure of AI development is being forced to mature, demanding traditional infrastructure-level due diligence. For businesses, this means that while access to foundational AI models might remain centralized, the physical means to train them will depend on traditional, regulated, and slow-moving financial instruments. This mismatch in pace creates a natural point of failure in fast-moving partnerships.
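The lender's "return longevity" question can be illustrated with a small sketch. It uses the standard annuity formula for a fully amortizing loan; the loan size, interest rate, tenor, and hardware lifetime in the example are hypothetical values picked for illustration, not terms from any reported deal.

```python
def annual_debt_payment(principal: float, annual_rate: float, years: int) -> float:
    """Level annual payment on a fully amortizing loan (standard annuity formula)."""
    if annual_rate == 0:
        return principal / years
    growth = (1 + annual_rate) ** years
    return principal * annual_rate * growth / (growth - 1)


def balance_after(principal: float, annual_rate: float, years: int,
                  elapsed_years: int) -> float:
    """Outstanding loan balance after `elapsed_years` level annual payments."""
    if annual_rate == 0:
        return principal * (1 - elapsed_years / years)
    payment = annual_debt_payment(principal, annual_rate, years)
    growth = (1 + annual_rate) ** elapsed_years
    return principal * growth - payment * (growth - 1) / annual_rate


if __name__ == "__main__":
    # Hypothetical terms: $100B borrowed at 6% over 15 years, financing
    # hardware assumed to be competitive for only 5 years.
    loan, rate, tenor, hardware_life = 100e9, 0.06, 15, 5
    payment = annual_debt_payment(loan, rate, tenor)
    remaining = balance_after(loan, rate, tenor, hardware_life)
    print(f"Annual payment: ${payment / 1e9:.1f}B")
    print(f"Balance when hardware ages out: ${remaining / 1e9:.1f}B")
```

With these assumed terms the annual payment is a little over $10 billion, and about three quarters of the principal is still outstanding when the hardware reaches the end of its assumed useful life. That gap between asset life and loan tenor is the mismatch a risk-averse lender would want explained.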

The Governance Gridlock: Who Holds the Keys to AGI?

Perhaps the most illuminating aspect of the Stargate stall is the internal dispute between OpenAI, Oracle, and SoftBank over responsibilities. This isn't just about who pays the electricity bill; it’s about fundamental control in the race for AGI.

Consider the roles:

- OpenAI: Controls the foundational IP and the destination (the resulting AGI). They want guaranteed, unfettered access.

- Oracle: Provides the physical cloud infrastructure and operational expertise. They want control over the deployment environment, security protocols, and potentially service revenue streams from the resulting platform.

- SoftBank: Provides massive financial backing, often tied to equity and strategic placement in physical assets (real estate, energy deals). They demand clear paths to monetization and risk mitigation.

When these interests collide at the scale of half a trillion dollars, the partnership fractures. If OpenAI insists on proprietary control over the deployment architecture, Oracle may balk at the operational risk without commensurate control. Disputes of this kind tend to center on "data gravity" and "governance rights." Who owns the resulting hardware architecture? If the system fails, whose liability is it? These governance questions become considerably harder the closer the project gets to realizing truly autonomous, potentially unpredictable, AGI systems.

This friction implies that future successful infrastructure deals will require unprecedented clarity in legal frameworks before a single server rack is ordered. The lessons from past tech joint ventures are being applied with extreme scrutiny here, slowing down the entire process.

The Necessary Strategic Rethink: Diversification Over Centralization

The original reports note that OpenAI must "fundamentally rethink its strategy." This suggests the monolithic, centralized "AI Super-Factory" model might be inherently brittle: too complex to finance, too difficult to govern, and too energy-intensive to power reliably.

What does this strategic rethink look like? It points toward architectural diversification:

- Hardware Specialization and Decoupling: Instead of relying solely on one vendor's centralized GPU cluster, the industry may pivot toward custom silicon (ASICs) tailored for inference or specific training phases, potentially spreading the workload across diverse, smaller, specialized facilities rather than one mega-site.

- Geographic Distribution: To mitigate energy risk and satisfy national security concerns (a growing factor in AI deployment), compute power must be spread globally, tied to regional power sources (like specific nuclear or geothermal plants). This fragments governance but stabilizes energy supply.

- Federated and Distributed Training: Research is accelerating on methods that allow models to train collaboratively across disparate, smaller data centers without requiring all data or processing to be centralized in one physical location.

If the Stargate model is too heavy, the future of AI infrastructure will be lighter, more modular, and potentially managed by consortiums rather than single hyperscalers.
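As one concrete illustration of the federated and distributed training idea listed above, the sketch below implements a toy version of federated averaging (FedAvg): each site runs a few gradient steps on its own data, and a coordinator averages the resulting weights in proportion to each site's sample count. The one-parameter model, the data, and the hyperparameters are all hypothetical; this is a minimal sketch of the general technique, not a description of how any lab trains its models.

```python
def local_update(w: float, data: list, lr: float = 0.01, steps: int = 10) -> float:
    """Run a few gradient-descent steps on one site's private data for y ~ w * x."""
    for _ in range(steps):
        # Gradient of mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(global_w: float, sites: list, rounds: int = 20,
                      lr: float = 0.01, steps: int = 10) -> float:
    """Train across sites by exchanging only weights, never raw data."""
    for _ in range(rounds):
        # Each site starts from the current global weight and trains locally.
        updates = [(local_update(global_w, data, lr, steps), len(data))
                   for data in sites]
        # The coordinator averages the weights, weighted by sample count.
        total = sum(n for _, n in updates)
        global_w = sum(w * n for w, n in updates) / total
    return global_w


if __name__ == "__main__":
    # Two hypothetical data centers holding disjoint samples of y = 3x.
    site_a = [(1.0, 3.0), (2.0, 6.0)]
    site_b = [(3.0, 9.0), (4.0, 12.0), (5.0, 15.0)]
    w = federated_average(0.0, [site_a, site_b])
    print(f"Learned weight: {w:.6f}")
```

Only the scalar weight crosses the site boundary in each round, which is the property that makes the approach attractive when data or compute cannot be pooled in one location. Real systems face harder problems that this toy omits, including communication cost, stragglers, and sites whose data distributions differ.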

Future Implications: What This Means for AI Adoption and Society

The stall is not a failure of AI ambition, but a necessary—albeit painful—speed bump for its industrialization. The implications cascade across technology, business, and geopolitics:

For Businesses: Compute Access Becomes the New Moat

If the path to building AGI-scale clusters becomes fraught with financial and political hurdles, access to *existing* powerful infrastructure (via Microsoft Azure, AWS, or Google Cloud) becomes the ultimate moat. Businesses that previously relied on building proprietary hardware now face a stark choice: pay premium prices for guaranteed access to existing hyperscalers, or wait years for the next generation of decentralized infrastructure to mature.

Actionable Insight: Focus R&D budgets on model efficiency (inference optimization) rather than brute-force training scale, as training costs are becoming increasingly hard to shoulder.

For Energy and Utilities: The New Frontier

The massive power demands foreshadowed by projects like Stargate will force an unprecedented alignment between AI firms and energy providers. Power Purchase Agreements (PPAs) will become strategic assets. Regions with surplus clean power will see themselves as the new geography of technological power, potentially leading to 'Compute State' competition.

Actionable Insight: Energy firms should actively pursue modular nuclear or sustainable energy projects specifically earmarked for data center capacity, as AI is shaping up to be a high-value anchor customer, provided the financing questions above are resolved.

For Geopolitics: The Centralization Risk

If only a handful of entities (backed by deep government relationships or unparalleled balance sheets) can overcome the Stargate hurdle, AI development will centralize further. This concentration of world-class compute power in a few hands raises significant regulatory and geopolitical concerns. Control over the world’s most advanced training facilities is effectively control over the next generation of global economic and military advantage.

Conclusion: Maturation Through Friction

The reported stall of the Stargate project is a critical reality check. It pulls the curtain back on the transition from theoretical AI science to applied, multi-trillion-dollar industrial development. The messy disputes over control, the difficulty in securing traditional financing for speculative future returns, and the sheer physical limits of energy and real estate are the new challenges defining the AI landscape.

The future of AI infrastructure will likely not be defined by a single, monolithic monument to compute power, but rather by a highly complex, fragmented, and legally dense web of specialized partnerships. Success will belong not just to the best coders, but to the savviest dealmakers who can navigate the convergence of physics, finance, and federal regulations.