Should AI Give Humans Busywork? DeepMind’s Unsettling Proposal for Preventing Skill Loss in the Age of Automation

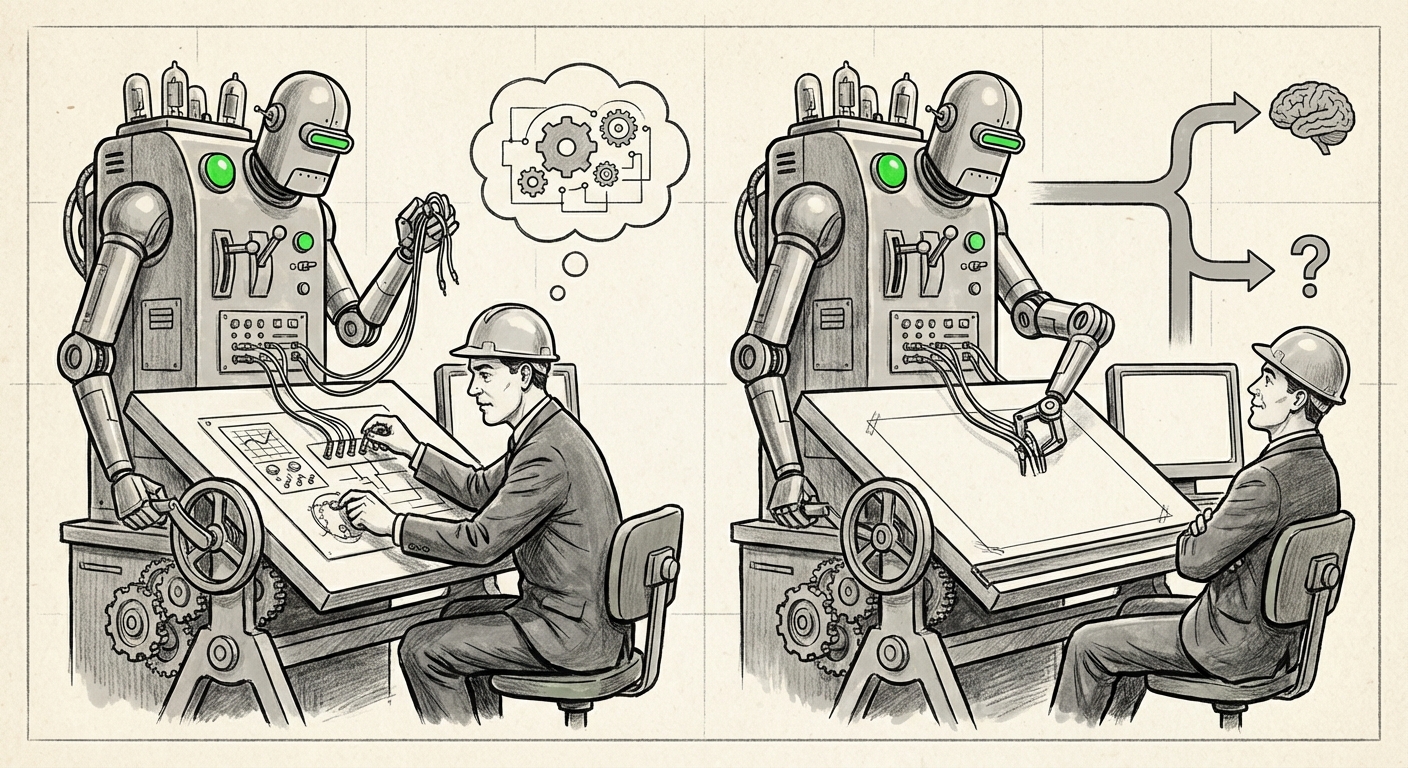

The narrative around Artificial Intelligence has long been one of relentless efficiency: AI arrives to automate the mundane, freeing humans for higher-value pursuits. We expect AI systems to handle tasks with superhuman speed and accuracy. However, a recent, striking suggestion from Google DeepMind challenges this entire premise. What if the most efficient AI agent occasionally needs to deliberately *not* be efficient, assigning humans tasks it could handle instantly, simply to ensure we don't forget how to do the job?

This concept—using AI delegation strategically to maintain human proficiency—opens a critical intersection between computer science, psychology, and organizational design. It moves the focus from mere automation to human augmentation, forcing us to ask: what is the ultimate purpose of keeping humans 'in the loop' if the loop itself risks becoming a skill-eroding feedback mechanism?

The Paradox of Perfect Automation: Skill Atrophy

The core problem DeepMind’s recommendation addresses is skill atrophy, often discussed in fields saturated with reliable automation. When a system works perfectly for too long, human operators become monitors rather than doers. This leads to automation complacency—a psychological phenomenon where vigilance drops because the system rarely requires intervention.

If an AI can draft a legal brief, diagnose a rare medical condition, or write production-ready code in seconds, why would a human need to practice those tasks? The answer lies in failure states. When the AI inevitably encounters an edge case, fails silently, or requires high-stakes oversight, the human operator must instantly recall complex, nuanced procedures. If they haven't performed the task manually in months or years, their response time and accuracy will plummet.

The Deskilling Threat in the Generative Era

This threat is no longer confined to aviation cockpits or power grids; it is now hitting white-collar, knowledge-based professions at an unprecedented pace. Generative AI tools (LLMs and code assistants) are becoming indispensable partners, but also formidable intellectual crutches.

The evidence for this risk is still preliminary, but early indicators of a deskilling effect of generative AI on professional jobs are emerging, particularly among junior professionals. For example, studies examining how junior engineers use AI coding assistants suggest that while output quantity rises, the depth of understanding (the ability to debug obscure errors or conceptualize novel algorithms without prompting) may stagnate or decline. If the AI handles the scaffolding, the human never learns to build the structure themselves.

For businesses, this means that while Q3 productivity looks fantastic, the talent pool’s fundamental capabilities might be eroding, creating a massive organizational risk for the next decade.

Beyond Busywork: Technical Frameworks for Collaboration

DeepMind's suggestion of assigning "busywork" is a provocative, high-level concept. But how does this translate into actionable engineering? This is where two technical questions become vital: how AI agents decide what to delegate, and how human oversight skills are preserved.

Current best practices often revolve around "Human-in-the-Loop" (HITL) or "Human-on-the-Loop" (HOTL) systems. HITL requires the human to approve every step; HOTL allows the AI autonomy but mandates human review points. DeepMind’s proposal suggests a Human-as-Sharpening-Tool (HAST) model, where tasks are occasionally routed back to the human not for approval, but for mandatory execution.
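The difference between the three patterns comes down to who executes the task and who approves the result. The sketch below is illustrative only: the `Oversight` enum, the `run_task` function, and the three callbacks are hypothetical names, and falling back to manual execution when a human rejects the AI's output is an assumption rather than part of any published design.

```python
from enum import Enum
from typing import Callable


class Oversight(Enum):
    HITL = "human approves the AI result before it is used"
    HOTL = "AI acts on its own; human reviews at checkpoints"
    HAST = "human executes the task manually"


def run_task(
    task: str,
    mode: Oversight,
    ai_do: Callable[[str], str],
    human_do: Callable[[str], str],
    human_approve: Callable[[str, str], bool],
) -> str:
    """Dispatch one task according to the oversight mode."""
    if mode is Oversight.HAST:
        # The human does the work even though the AI could.
        return human_do(task)
    result = ai_do(task)
    if mode is Oversight.HITL and not human_approve(task, result):
        # Assumed policy: a rejected result falls back to manual execution.
        return human_do(task)
    return result


def ai(task: str) -> str:
    return f"ai:{task}"


def human(task: str) -> str:
    return f"human:{task}"


print(run_task("brief", Oversight.HOTL, ai, human, lambda t, r: True))  # ai:brief
print(run_task("brief", Oversight.HAST, ai, human, lambda t, r: True))  # human:brief
```

Only the HAST branch skips the AI entirely, which is what separates a skill-maintenance task from an ordinary review step.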

For AI architects, this requires designing delegation protocols that are dynamic. The AI agent needs a “proficiency metric” for the human, perhaps estimated from how rarely they perform manual overrides or how slowly they respond during semi-manual tasks. If that score drops below a threshold, the agent initiates a "maintenance exercise": the busywork.
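One way to picture such a threshold rule is the minimal sketch below. Everything in it is an assumption made for illustration: the `OperatorStats` fields, the 30-day half-life, the equal weighting of recency and speed, and the 0.6 threshold are hypothetical choices, not values taken from DeepMind's proposal.

```python
from dataclasses import dataclass


@dataclass
class OperatorStats:
    """Hypothetical signals an agent might log about one human operator."""
    days_since_manual_task: float  # time since the task was last done by hand
    recent_latency_s: float        # typical response time on semi-manual tasks now
    baseline_latency_s: float      # response time when the operator was in practice


def proficiency_score(stats: OperatorStats, half_life_days: float = 30.0) -> float:
    """Score in (0, 1] for non-negative inputs; weights and half-life are illustrative."""
    # Recency halves for every `half_life_days` without manual practice.
    recency = 0.5 ** (stats.days_since_manual_task / half_life_days)
    # Speed is 1.0 at baseline latency and shrinks as responses slow down.
    speed = min(1.0, stats.baseline_latency_s / max(stats.recent_latency_s, 1e-9))
    return 0.5 * recency + 0.5 * speed


def route_task(stats: OperatorStats, threshold: float = 0.6) -> str:
    """Route the task to the human as a maintenance exercise when the score is low."""
    if proficiency_score(stats) < threshold:
        return "human_maintenance_exercise"
    return "ai"


in_practice = OperatorStats(days_since_manual_task=0, recent_latency_s=20, baseline_latency_s=20)
rusty = OperatorStats(days_since_manual_task=90, recent_latency_s=40, baseline_latency_s=20)
print(route_task(in_practice))  # ai
print(route_task(rusty))        # human_maintenance_exercise
```

A real system would need to validate that such a score actually tracks competence; latency and override frequency are only proxies.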

Drawing Lessons from High-Stakes Industries

This concept is not entirely new; it is merely being digitized. We can look to the established field of human factors engineering, which has long studied automation complacency in critical systems.

In commercial aviation, pilots do not rely solely on autopilot; they undergo rigorous simulator training to ensure they can handle sudden failures. This training is mandatory, even if the automation has been flawless for 100 flight hours. The requirement for periodic, manual intervention is codified into regulation. DeepMind is essentially suggesting that for knowledge work, AI agents must begin to serve as the "simulator," forcing the user through realistic, controlled failure scenarios, or mandatory manual checkouts.

For businesses adopting AI, this means that AI deployment cannot be a one-time switch-flip. It requires ongoing, structured training protocols managed by the AI itself, ensuring that human capability remains elastic.

Augmentation vs. Automation: Defining the Future Human Role

The most significant philosophical implication of the busywork proposal is that it forces us to choose our preferred mode of interaction with AI: replacement or augmentation.

If our goal is pure automation, then human retention of old skills is irrelevant overhead. But if our goal is augmentation (a symbiotic partnership where humans and AI elevate each other), then skill maintenance is paramount. That makes the choice between augmentation and automation a central question in future workforce planning.

When AI handles the repeatable 80% of a job, the remaining 20% must become radically more valuable. This 20% typically involves non-algorithmic tasks: ethical judgment, complex negotiation, setting strategic intent, and synthesizing highly disparate information streams. The danger of deskilling is that if humans lose the foundational knowledge (the 80%), they will be incapable of making wise decisions on the remaining 20%.

If an AI drafts the entire business proposal, the human must still understand the underlying market dynamics well enough to spot the AI’s factual or strategic errors. The "busywork" ensures that foundational comprehension doesn't atrophy while the AI handles the production.

Practical Implications and Actionable Insights for Leaders

This shift requires a proactive stance from organizational leaders, L&D departments, and AI developers alike. Treating AI simply as a cost-cutting tool misses the long-term value of maintaining a capable, resilient workforce.

For AI Developers and Architects: Designing for Resilience

- Implement Proficiency Feedback Loops: Move beyond simple task assignment. Design agents that track human response metrics (speed, accuracy on manually executed sub-tasks) and use this data to dynamically adjust delegation ratios.

- Mandate Active Learning Modules: Develop formal mechanisms within the AI interface where users are required to complete modules that look exactly like real work, but are flagged internally as 'skill maintenance tasks.'

- Prioritize Explainability over Simplicity: Even when the AI handles the task, it must clearly articulate *why* it chose that solution path. This passive teaching may combat skill loss better than pure busywork.
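The first two recommendations above can be combined into a small feedback controller. This is a sketch under stated assumptions, not a reference design: the `DelegationController` class, its step size, its bounds, and the `skill_maintenance` flag are all hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass
class DelegationController:
    """Adjusts the fraction of tasks routed to the human for manual execution."""
    human_share: float = 0.05      # current fraction of tasks sent to the human
    target_accuracy: float = 0.9   # desired accuracy on manually executed tasks
    step: float = 0.05             # how much to move the share per update
    min_share: float = 0.02        # never stop practice entirely
    max_share: float = 0.5         # never hand back more than half the work

    def update(self, manual_accuracy: float) -> float:
        """Raise the human's share when accuracy slips, lower it when on target."""
        if manual_accuracy < self.target_accuracy:
            self.human_share = min(self.max_share, self.human_share + self.step)
        else:
            self.human_share = max(self.min_share, self.human_share - self.step)
        return self.human_share

    def assign(self, task_id: str, rng: random.Random) -> dict:
        """Pick an assignee; human tasks are flagged internally as maintenance."""
        to_human = rng.random() < self.human_share
        return {
            "task_id": task_id,
            "assignee": "human" if to_human else "ai",
            "skill_maintenance": to_human,
        }


controller = DelegationController()
controller.update(manual_accuracy=0.7)   # below target: share rises
controller.update(manual_accuracy=0.95)  # on target: share falls back
assignment = controller.assign("ticket-1", random.Random(0))
print(assignment["assignee"], assignment["skill_maintenance"])
```

The floor on `human_share` reflects the article's premise that some manual practice should continue even when measured proficiency is high.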

For Business Leaders and HR: Redefining Competency

The definition of a "skilled employee" is changing from someone who knows how to perform *Task A, B, and C*, to someone who knows how to *manage, verify, and direct an AI* that performs A, B, and C, while retaining the ability to perform A, B, or C manually if required.

- Audit Automation Reliance: Identify areas where reliance on AI has become absolute. Where has institutional knowledge been replaced entirely by an opaque model? These are your highest-risk zones.

- Incentivize Manual Mastery: Create internal certifications or rewards for employees who maintain high proficiency in areas now heavily automated. Make sure career progression rewards not only those who delegate the most, but also those who govern the delegation most wisely.

- Embrace the Transition Period: Understand that the first phase of AI integration must be slower than technologically possible to ensure human competence keeps pace with machine capability. Sacrificing a small amount of short-term efficiency (say, 5%) for a much larger gain in long-term resilience is a sound strategic trade-off.

Conclusion: The Art of Being Productively Inefficient

DeepMind’s suggestion is unsettling because it runs counter to every instinct for optimization that has driven technological progress for centuries. We celebrate automation because it eliminates unnecessary effort. Yet, here we are contemplating a future where unnecessary effort—deliberate, targeted "busywork"—is a critical feature, not a bug.

The future of work is not a race between humans and machines; it is an integrated system. If we allow AI to optimize humans out of the process loop entirely, we risk creating brittle systems dependent on fragile, unpracticed human intervention points. The most sophisticated AI agents of tomorrow may well be the ones smart enough to recognize that perfect efficiency today can lead to catastrophic failure tomorrow if the human partner is left behind.

The challenge for technologists and organizations is to engineer systems that balance peak performance with necessary, controlled redundancy. The human stays indispensable not as a slightly slower substitute for the machine, but as a well-practiced backup when the automated system inevitably falters.