The AI Complacency Trap: How Polished Output Undermines Critical Thinking and How Iteration Restores It

The rapid advancement of Large Language Models (LLMs) like Claude and GPT has produced output that can feel indistinguishable from human expertise. We are moving beyond novelty into genuine integration across professional workflows. However, a recent analysis from Anthropic, the **AI Fluency Index**, has surfaced a critical, human-centric vulnerability in this integration: when AI output looks too good, we stop checking its work.

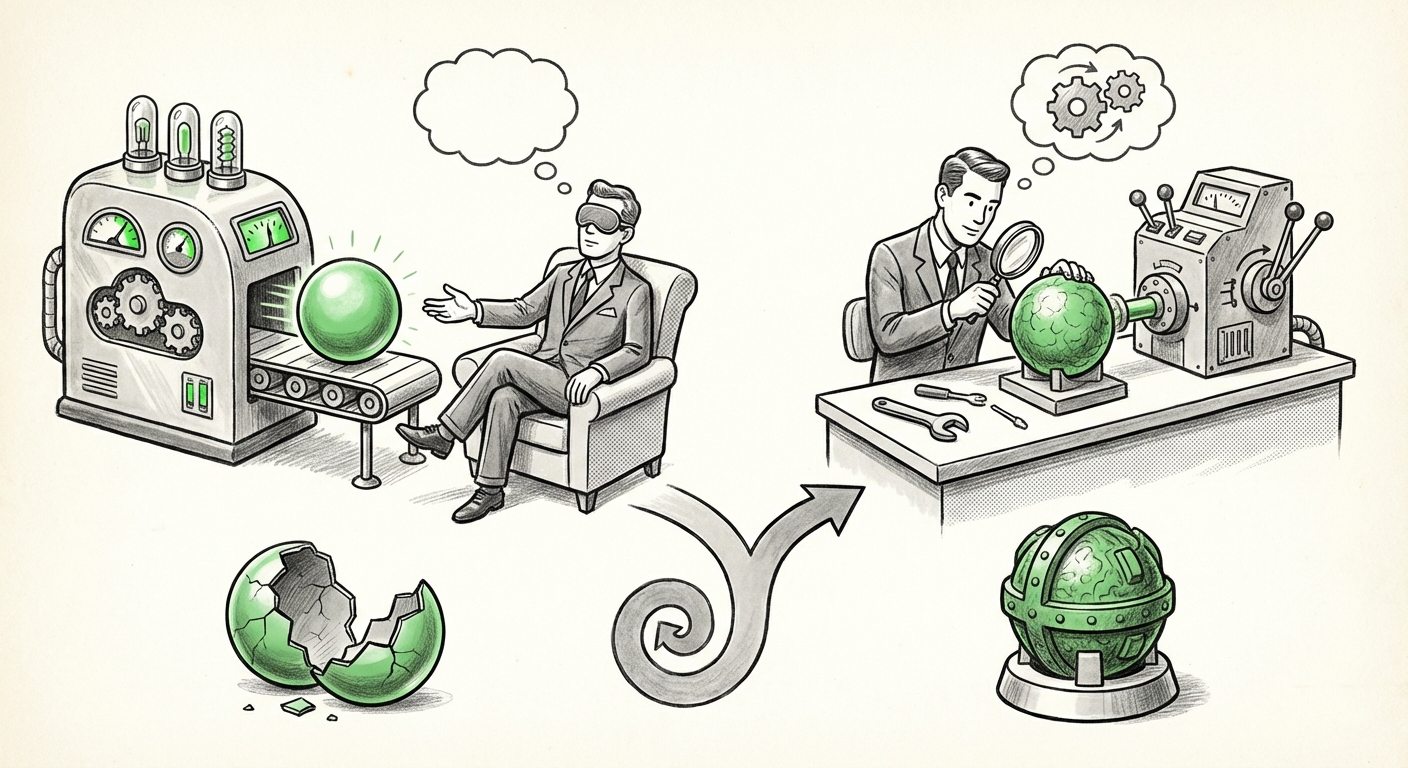

This finding, based on nearly 10,000 analyzed conversations, suggests that the sheer polish and eloquence of AI-generated text can lull users into a dangerous state of cognitive relaxation. This is not a minor UX issue; it is a foundational challenge that shapes how we must design, regulate, and teach interaction with powerful synthetic intelligence. This analysis examines three pillars of that challenge: Automation Bias, the Necessity of Iteration, and the elusive goal of Trust Calibration.

I. The Psychology of Polish: Automation Bias Meets the LLM

At the heart of Anthropic’s discovery lies the well-documented psychological phenomenon known as automation bias. In traditional technology—from autopilots in aircraft to automated data entry—automation bias describes the human tendency to favor suggestions from automated systems and ignore contradictory information, even if that information is correct. As systems become more reliable, human vigilance degrades.

When an LLM generates a complex legal brief, a flawlessly formatted marketing plan, or a seemingly authoritative scientific summary, our brains take a shortcut. We perceive fluency as synonymous with accuracy. If the syntax is perfect, the structure logical, and the tone professional, the internal alert system that screams "Verify this!" stays silent.

Corroboration: The Theoretical Framework

This is not unique to text generation. Research into automation bias across domains provides the theoretical backbone for Anthropic’s empirical data, and discussions of automation bias in large language models typically frame it as a critical human-factors problem. In high-stakes environments, this complacency can be catastrophic. Imagine a medical resident quickly approving an AI-generated differential diagnosis that contains a subtle but fatal factual error, simply because the document reads as if it came from a senior attending physician.

For AI ethicists and designers, the implication is clear: the very success of fluency, which makes the AI sound human and competent, actively undermines the critical oversight that its output still requires.

II. The Antidote: Why Iteration is the Strongest Predictor of Competence

If polished output induces complacency, what breaks the spell? Anthropic’s index points decisively to iteration. The users who achieved the most competent outcomes were those who engaged in the back-and-forth refinement process.

Iteration transforms the user from a passive recipient into an active co-creator. When a user prompts, receives output, critiques it, and sends a clarifying follow-up, they are forced to re-engage their domain knowledge. This process serves several vital functions:

- Intent Clarification: The user must explicitly state what the initial output missed or got wrong, sharpening the model’s focus.

- Boundary Setting: Iteration helps the user discover the model’s limitations in real-time.

- Cognitive Load Shift: The burden of verification is replaced by the burden of correction, which requires active mental engagement.

Corroboration: The Prompt Engineering Imperative

The importance of iterative prompting is widely discussed in prompt engineering circles, and that discussion is consistent with the index’s finding. Iteration is effective because it leverages the best aspects of human-AI collaboration: the AI’s speed and breadth of knowledge, combined with the human’s judgment and contextual understanding.

For business leaders, this means that simply granting employees access to the most advanced LLM is insufficient. True competency is built not on the initial prompt, but on the refinement loop. Investing in "AI literacy" must translate directly into training users on how to critique, challenge, and refine AI output multiple times.

III. The Tightrope Walk: Achieving Appropriate Trust Calibration

The ideal state for interacting with AI is not blind trust, nor is it total distrust. It is appropriate trust calibration—knowing precisely when to accept an output and when to scrutinize it deeply.

The Anthropic finding indicates that highly fluent systems push users toward over-trust. Conversely, if an AI model frequently produces rough, poorly structured, or overtly incorrect answers, users will calibrate their trust too low and begin ignoring even the accurate suggestions. The effect is akin to alert fatigue, where frequent false alarms teach people to disregard warnings that matter.
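To make the idea of calibration concrete, here is a minimal sketch of how over-trust and under-trust could be estimated from audited reviews. Everything in it is an assumption for illustration: the `Review` record, the `trust_gap` and `missed_error_rate` helpers, and the premise that each output was later checked for correctness. None of this comes from the AI Fluency Index itself.

```python
from dataclasses import dataclass


@dataclass
class Review:
    """One reviewed AI output (hypothetical record, for illustration only)."""
    accepted: bool  # did the user accept the output as-is?
    correct: bool   # was the output found correct in a later audit?


def trust_gap(reviews: list[Review]) -> float:
    """Acceptance rate minus actual accuracy.

    Positive values suggest over-trust, negative values under-trust.
    A value near zero only means trust matches accuracy on average;
    it does not show that the right individual outputs were accepted.
    """
    if not reviews:
        raise ValueError("need at least one review")
    acceptance_rate = sum(r.accepted for r in reviews) / len(reviews)
    accuracy = sum(r.correct for r in reviews) / len(reviews)
    return acceptance_rate - accuracy


def missed_error_rate(reviews: list[Review]) -> float:
    """Share of incorrect outputs that were accepted anyway."""
    wrong = [r for r in reviews if not r.correct]
    if not wrong:
        return 0.0
    return sum(r.accepted for r in wrong) / len(wrong)


# Illustrative data: three of four outputs accepted, two of four correct.
sample = [
    Review(accepted=True, correct=True),
    Review(accepted=True, correct=False),
    Review(accepted=True, correct=True),
    Review(accepted=False, correct=False),
]
print(trust_gap(sample))          # 0.25 (over-trust)
print(missed_error_rate(sample))  # 0.5
```

The second measure is the one that matters most for the complacency problem: it isolates how often a wrong answer slipped through, which an average gap can hide.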

Corroboration: The Ethics of Reliability

Ongoing research on trust calibration in AI systems explores how to design systems that inherently prompt healthy skepticism. If an AI can flawlessly generate the first 90% of a complex financial report but subtly hallucinates one key revenue figure, the polished finish masks the single point of failure. This ties directly into the danger posed by subtle falsehoods, often termed "LLM hallucinations."

Accounts of LLM hallucinations and user vigilance describe cases where contextually plausible but factually wrong statements caused serious issues. The user, primed by the preceding correct information, often overlooks the single faulty piece of data. The AI Fluency Index suggests that when the *entire* output sounds convincing, the chance of missing that one critical error rises sharply.

Future Implications: Designing for Healthy Skepticism

What does this tell us about the next generation of AI systems and their integration into our lives?

1. UX Must Fight Fluency

Developers can no longer focus solely on making the output *better*; they must focus on making it *verifiable*. Future interfaces might intentionally break up perfect fluency:

- Confidence Scores: Displaying an explicit probability score next to factual claims, forcing the user to review low-confidence assertions.

- Annotated Sourcing: Requiring the AI to bold or highlight text segments where it drew on uncertain or synthesized information, rather than just pasting citations at the end.

- Varied Tone Injection: Designing models that occasionally use slightly awkward phrasing or ask the user for confirmation on highly complex sections, mimicking a thoughtful human partner rather than an oracle.
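As a rough illustration of the first two ideas, the sketch below marks low-confidence claims inline so that they interrupt an otherwise fluent paragraph. It assumes that a per-claim confidence score is already available and meaningful, which is a strong assumption since such scores are not guaranteed to be well calibrated. The `Claim` type, the `annotate` function, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    confidence: float  # hypothetical score in [0, 1]; how it is produced is out of scope


def annotate(claims: list[Claim], threshold: float = 0.8) -> str:
    """Render claims as running text, flagging those below the threshold."""
    parts = []
    for claim in claims:
        if claim.confidence < threshold:
            # Put the marker in front of the claim so the reader hits it first.
            parts.append(f"[VERIFY {claim.confidence:.0%}] {claim.text}")
        else:
            parts.append(claim.text)
    return " ".join(parts)


report = [
    Claim("Revenue grew 12%.", 0.55),
    Claim("The fiscal year ends in June.", 0.95),
]
print(annotate(report))
# [VERIFY 55%] Revenue grew 12%. The fiscal year ends in June.
```

Placing the marker before the claim rather than in a footnote is deliberate: the point is to break the reader's flow at the moment of risk, not to document uncertainty somewhere it will be skipped.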

2. The Redefinition of "AI Competence"

For organizations adopting AI, "AI Competence" must now be redefined. It is no longer about writing the perfect first prompt; it is about mastering the refinement sequence and establishing robust internal audit loops. In fields like engineering, law, and medicine, AI usage policies must mandate specific review checkpoints that apply no matter how polished the output appears.

3. Educational Paradigm Shifts

In education, the finding is a warning sign. If students use AI to write polished essays, they may receive high grades on mechanical execution but fail to develop the critical reasoning skills that iteration nurtures. Educators must shift assignments away from final polished products toward the *process* of iterative drafting, using AI as a sounding board rather than a ghostwriter.

Actionable Insights for Today’s Users and Leaders

This insight provides clear directives for maximizing AI utility while minimizing risk:

- For the Individual Professional: Assume the first, most polished answer is potentially wrong, especially in critical areas. Build a **"Two-Draft Rule"**: Treat the AI's initial output as Draft Zero. Your first actual draft must be a substantive rewrite or verification effort based on iterative corrections.

- For the Technical Manager: Mandate iterative training. Measure success not by how quickly employees generate final documents, but by the number of refinement prompts they use on high-value tasks. This is a rough proxy for active engagement, not proof of it, so pair it with spot checks of what the follow-up prompts actually ask.

- For the AI Governance Team: When assessing vendor tools, prioritize platforms that offer transparency into their certainty level or that inherently promote interaction over passive acceptance. The best tool is the one that encourages you to think, not the one that thinks for you perfectly.
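The refinement-prompt metric suggested for technical managers could be approximated from conversation logs along the following lines. This is a sketch under stated assumptions: the log format of `(conversation_id, role)` pairs, the `refinement_counts` name, and the rule that every user turn after the first counts as a refinement are all hypothetical choices, not part of Anthropic's methodology.

```python
from collections import Counter
from typing import Iterable


def refinement_counts(messages: Iterable[tuple[str, str]]) -> dict[str, int]:
    """Count follow-up user turns per conversation.

    `messages` holds (conversation_id, role) pairs. The first user turn in a
    conversation is treated as the initial prompt; every later user turn is
    counted as a refinement. This measures quantity only, not whether a
    follow-up was a substantive critique.
    """
    user_turns = Counter(cid for cid, role in messages if role == "user")
    return {cid: turns - 1 for cid, turns in user_turns.items()}


log = [
    ("a", "user"), ("a", "assistant"),
    ("a", "user"), ("a", "assistant"),
    ("a", "user"),
    ("b", "user"), ("b", "assistant"),
]
print(refinement_counts(log))  # {'a': 2, 'b': 0}
```

A conversation with zero refinements on a high-value task, like `b` here, is the case worth reviewing first.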

The era of the overly competent, silent assistant is here. Anthropic’s AI Fluency Index serves as a crucial reminder: the most dangerous AI output is the one we believe without question. Our next frontier in AI utilization is not achieving perfect output, but mastering the art of the necessary, healthy, and informed challenge.