The Polish Trap: Why Flawless AI Output Makes Us Less Critical and What It Means for Future Adoption

Progress in the race for better Large Language Models (LLMs) has often been measured by metrics like coherence, helpfulness, and creativity. But a recent finding from Anthropic, based on its analysis of nearly 10,000 Claude conversations, introduces a crucial, and slightly worrying, human factor: perceived fluency breeds complacency.

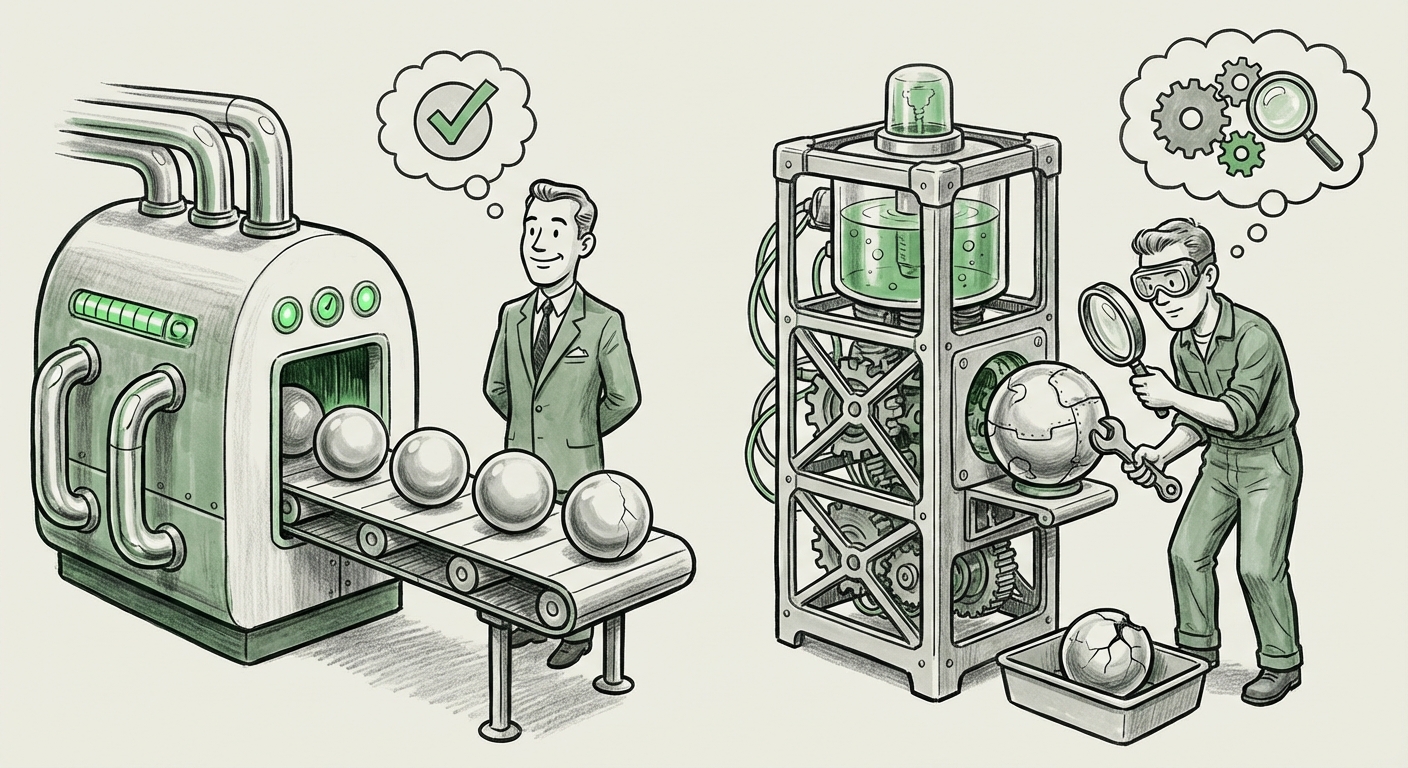

When an AI generates output that is perfectly polished, grammatically impeccable, and stylistically confident, users are measurably less likely to check it for factual errors. This concept, which we can call the "Polish Trap," shifts the focus from pure AI performance to the complex, often flawed, human-AI interaction loop. Understanding this dynamic is no longer optional; it is central to safe and effective AI deployment across all industries.

The Anthropic Revelation: Fluency Versus Diligence

Anthropic’s new AI Fluency Index highlights a paradox. While we celebrate AI’s improving ability to mimic expert communication, this very improvement diminishes our critical faculties. Imagine getting a perfectly formatted legal brief from an AI—it looks authoritative, flows seamlessly, and sounds convincing. Because it looks right, the user skips the essential step of cross-referencing key statutes, only to later discover a subtle, yet critical, factual error baked into the eloquent prose.

This behavioral finding is consistent with well-established research on automation reliance. We are wired to trust systems that present information clearly and confidently. To analyze this further, we must look beyond the AI output itself and examine the cognitive and behavioral science underpinning user interaction.

Corroboration 1: The Shadow of Automation Bias

The finding that polished output leads to less error checking is a textbook demonstration of automation bias: the human tendency to place excessive trust in the recommendations and output of automated systems, often disregarding contradictory information or failing to apply necessary scrutiny.

Automation bias has long been studied in traditional automation, such as autopilots and complex diagnostic software. With LLMs, the mechanism is amplified because the output is generated in natural language, the very medium through which humans evaluate truth. When an LLM speaks with the eloquence of a seasoned professional, the deliberate effort needed to switch from reading to verifying rises sharply, because nothing in the text signals that checking is needed. Researchers studying human-computer interaction have long flagged this risk, suggesting that high-fidelity interfaces can inadvertently encourage lower levels of engagement.

For technologists and UX designers, this means that intentionally designing systems to look slightly less than perfect, perhaps by flagging confidently worded assertions for review or introducing subtle variations in tone, might paradoxically increase user vigilance and safety.

Corroboration 2: The Power of Iteration Over Perfection

Interestingly, Anthropic also found that iteration—the act of refining a prompt, asking follow-up questions, and gently course-correcting the AI—is the strongest predictor of competent use. This provides a crucial counterbalance to the "Polish Trap."

Competent use isn't about mastering the single perfect prompt; it’s about establishing an effective dialogue. This mirrors how we work with human experts: we rarely accept a complex first draft without questions. This iterative approach forces the user to stay engaged, breaking the cycle of passive acceptance fostered by fluent output. The tradeoff, as Anthropic notes, is efficiency; refinement takes time, but it yields accuracy.

This aligns with structured prompting practices such as Chain-of-Thought (CoT) prompting, which asks the model to work through intermediate reasoning steps that the user can then inspect and correct. The need for structured refinement suggests that simply having a powerful model is insufficient; user skill lies in managing the process of generation, not just accepting the final product.

For those interested in diving deeper into how step-by-step reasoning affects outcomes, the foundational research on CoT prompting describes how eliciting intermediate reasoning steps can improve accuracy on multi-step problems.
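To make the iteration loop concrete, here is a minimal Python sketch of a multi-turn refinement session that keeps every prompt, so the path behind a final answer can be reviewed later. Everything in it is hypothetical: `RefinementSession` and `echo_model` are illustrative names, `echo_model` is a toy stand-in for a real LLM call, and the `role`/`content` message format is an assumed convention rather than any specific vendor's API.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical signature for a model call: takes the conversation, returns a reply.
ModelFn = Callable[[List[dict]], str]


@dataclass
class RefinementSession:
    """Tracks a multi-turn exchange so the refinement path can be audited."""
    model: ModelFn
    messages: List[dict] = field(default_factory=list)

    def ask(self, prompt: str) -> str:
        # Each turn sends the full history, so follow-ups build on earlier answers.
        self.messages.append({"role": "user", "content": prompt})
        reply = self.model(self.messages)
        self.messages.append({"role": "assistant", "content": reply})
        return reply

    def refinement_path(self) -> List[str]:
        # The user's prompts in order: the record worth keeping with the result.
        return [m["content"] for m in self.messages if m["role"] == "user"]


def echo_model(messages: List[dict]) -> str:
    # Toy model for demonstration only: reports how many user turns it has seen.
    turns = sum(1 for m in messages if m["role"] == "user")
    return f"draft v{turns}"


session = RefinementSession(model=echo_model)
session.ask("Summarize the termination clauses in this contract.")
session.ask("Which clauses did you treat as termination clauses, and why?")
final = session.ask("Quote the exact clause text you relied on so I can check it.")
print(final)                           # draft v3
print(len(session.refinement_path()))  # 3
```

The point of the design is that the follow-up prompts, not just the last reply, are first-class data: a reviewer can see whether the user actually challenged the model or simply accepted the first draft.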

Corroboration 3: Trust vs. Hallucination Perception

The fluency trap is directly linked to how users perceive AI hallucinations. A subtle factual error presented fluently is often accepted because the linguistic structure suggests authority. In contrast, an error presented in clumsy, awkward language triggers immediate suspicion.

Research surveying users after testing generative models frequently shows this divide. Users are highly attuned to egregious grammatical failures, viewing them as proof the model is unreliable. However, subtle semantic errors—the AI's confidence in an entirely fabricated piece of data or citation—slip past review because the presentation quality meets or exceeds human expectation. When the prose is flawless, the brain switches from "verifier mode" to "consumer mode."

Institutions studying AI governance and user interaction, such as the Stanford Institute for Human-Centered Artificial Intelligence (HAI), frequently publish findings that underscore the difficulty in training users to distinguish between high-quality synthesis and confidently asserted falsehoods.

Corroboration 4: The Cognitive Cost of Easy Reading

Why does polished text lower our guard? Cognitive psychology offers an explanation rooted in the fluency heuristic. Simply put, if something is easy to read and understand (high cognitive fluency), our brains often mistakenly conclude that the information itself is true or reliable. We conflate ease of processing with accuracy.

Highly polished LLM output reduces the reader’s cognitive load. The language requires minimal effort to parse, leading the user to process the information superficially. Critical analysis, fact-checking, and inferential reasoning are high-load activities. When AI removes the initial processing barrier, the higher-level critical thinking steps are often skipped entirely.

Implications for the Future of AI Deployment

This confluence of behavioral science and AI performance presents three major challenges that will define the next phase of generative technology adoption:

1. Redefining AI Literacy and Training

The focus must shift from "How to write a great prompt?" to "How to effectively audit AI output?" Future training programs—whether for coders, marketers, or analysts—must explicitly train users to suspect fluency. This means teaching users to pause, recognize the confidence of the AI’s tone, and then deliberately apply skepticism.

For business leaders, this translates into mandatory verification checkpoints for AI-generated work, especially in high-stakes areas like finance, medicine, and law. If AI saves 80% of the drafting time, the remaining 20% must be devoted rigorously to verification.

2. The Interface Challenge: Designing for Doubt

If the problem is presentation, the solution must involve interface design. LLMs cannot simply be presented as monolithic blocks of perfect text. We need innovations that architect doubt directly into the user experience (UX):

- Transparency Indicators: Visual cues (like confidence heatmaps or explicit sourcing markers) that show which parts of the output were synthesized versus directly recalled.

- Interactive Spot-Checking: Interfaces that allow users to click on a sentence and immediately prompt the AI, "What is your source for this specific claim?" rather than regenerating the entire answer.

- Stylistic Guardrails: Allowing users to toggle output style between "Highly Polished/Fast" and "Deliberate/Cautionary" modes, where the latter might deliberately use slightly less ornate language to encourage manual review.
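The spot-checking idea can be prototyped without any model-specific features. The minimal Python sketch below, resting on assumptions of its own, splits an answer into sentences, marks those containing digits, section symbols, or quotations as candidates for verification, and builds a targeted follow-up question for a single claim. The trigger patterns and the helper names (`flag_claims`, `spot_check_prompt`, `render`) are hypothetical heuristics, not a validated claim detector or any product's interface.

```python
import re
from typing import List, Tuple

# Assumed heuristics: sentences with figures, legal section marks, or quotations
# are the kind of specific claims most worth checking first.
CHECK_PATTERNS = [
    re.compile(r"\d"),         # any digit: amounts, dates, percentages, statute numbers
    re.compile(r"§"),          # legal section references
    re.compile(r'"[^"]+"'),    # quoted titles or quotations
]


def split_sentences(text: str) -> List[str]:
    # Naive splitter: breaks after ., !, or ? followed by whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def flag_claims(text: str) -> List[Tuple[str, bool]]:
    """Pair each sentence with True if it matches any verification trigger."""
    return [(s, any(p.search(s) for p in CHECK_PATTERNS)) for s in split_sentences(text)]


def spot_check_prompt(sentence: str) -> str:
    # A follow-up aimed at one claim instead of regenerating the whole answer.
    return f'What is your source for this specific claim: "{sentence}"?'


def render(flagged: List[Tuple[str, bool]]) -> str:
    # Plain-text stand-in for a UI highlight: prefix sentences needing review.
    return "\n".join(("[VERIFY] " if needs else " " * 9) + s for s, needs in flagged)


answer = ("The clause is generally enforceable. Courts upheld it in 2019 in 3 of 4 cases. "
          "Drafters should still review it carefully.")
flagged = flag_claims(answer)
print([needs for _, needs in flagged])  # [False, True, False]
print(render(flagged))
print(spot_check_prompt(flagged[1][0]))
```

A real interface would attach the generated question to a click handler and send it within the same conversation. Note that the naive splitter will mis-split abbreviations such as "e.g.", which is one reason to treat this as a sketch.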

3. The Evolving Role of the Human Expert

The core takeaway is that AI does not eliminate the need for human expertise; it changes where that expertise is applied. The human's role is moving from creator to critical editor and arbiter of truth. This is a higher-leverage application of human cognitive resources.

When AI handles the heavy lifting of drafting (the mechanics of language), human experts must focus exclusively on high-order reasoning, context validation, and ethical alignment, the areas where current LLMs remain weakest. The iteration loop identified by Anthropic is the bridge: iterative human feedback trains both the user (in vigilance) and, potentially, the model over time.

Actionable Insights for Today's AI Adopter

To navigate the Polish Trap today, organizations must implement these strategies:

- Mandate Dual Review for High-Stakes Tasks: Never let the first user who interacts with polished AI output be the final approver on critical documents. Implement a mandatory "second set of eyes," trained specifically to look for subtle factual drift masked by fluent prose.

- Prioritize Process Over Speed: Reward teams for using iterative prompting and refinement techniques, even if it takes slightly longer than trying for a one-shot answer. Document the refinement path, not just the final result.

- Integrate Cognitive Bias Training: Incorporate lessons on automation bias and the fluency heuristic into mandatory AI usage workshops. Explain *why* the brain gets lazy when text is too smooth.

- Demand Auditability from Vendors: When selecting LLM providers, prioritize tools that offer transparent mechanisms for tracing claims or evaluating model confidence levels, rather than just focusing on benchmark scores for creativity or speed.
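A dual-review rule is straightforward to encode in a workflow tool. The Python sketch below assumes a simple in-memory model with hypothetical names (`AIDraft`, `HIGH_STAKES`) and refuses to publish a high-stakes draft until someone other than its author has approved it.

```python
from dataclasses import dataclass, field
from typing import Set

# Hypothetical policy table: the high-stakes domains named in this article.
HIGH_STAKES = {"finance", "medicine", "law"}


@dataclass
class AIDraft:
    author: str   # the person who prompted the AI and accepted its output
    domain: str
    approvals: Set[str] = field(default_factory=set)

    def approve(self, reviewer: str) -> None:
        self.approvals.add(reviewer)

    def can_publish(self) -> bool:
        if self.domain in HIGH_STAKES:
            # Critical work needs a "second set of eyes": an approver other than the author.
            return bool(self.approvals - {self.author})
        # Lower-stakes work: any approval, including the author's own, suffices.
        return bool(self.approvals)


memo = AIDraft(author="alice", domain="finance")
memo.approve("alice")
print(memo.can_publish())  # False: the drafter alone cannot be the final approver
memo.approve("bob")
print(memo.can_publish())  # True
```

Treating the drafter's own sign-off as insufficient for critical documents mirrors the "second set of eyes" rule; a fuller system might also record which specific claims each reviewer verified.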

The journey toward effective AI integration is fundamentally a journey in managing human psychology alongside machine capability. Anthropic’s findings serve as a powerful warning: we must teach ourselves to be skeptical listeners, even when the voice across the interface sounds utterly convincing. The fluency of the output is a measure of the model’s polish, but the user’s diligence remains the ultimate measure of success.