The AI Polish Trap: Why Fluent Output Breeds User Complacency and What It Means for Future AI Literacy

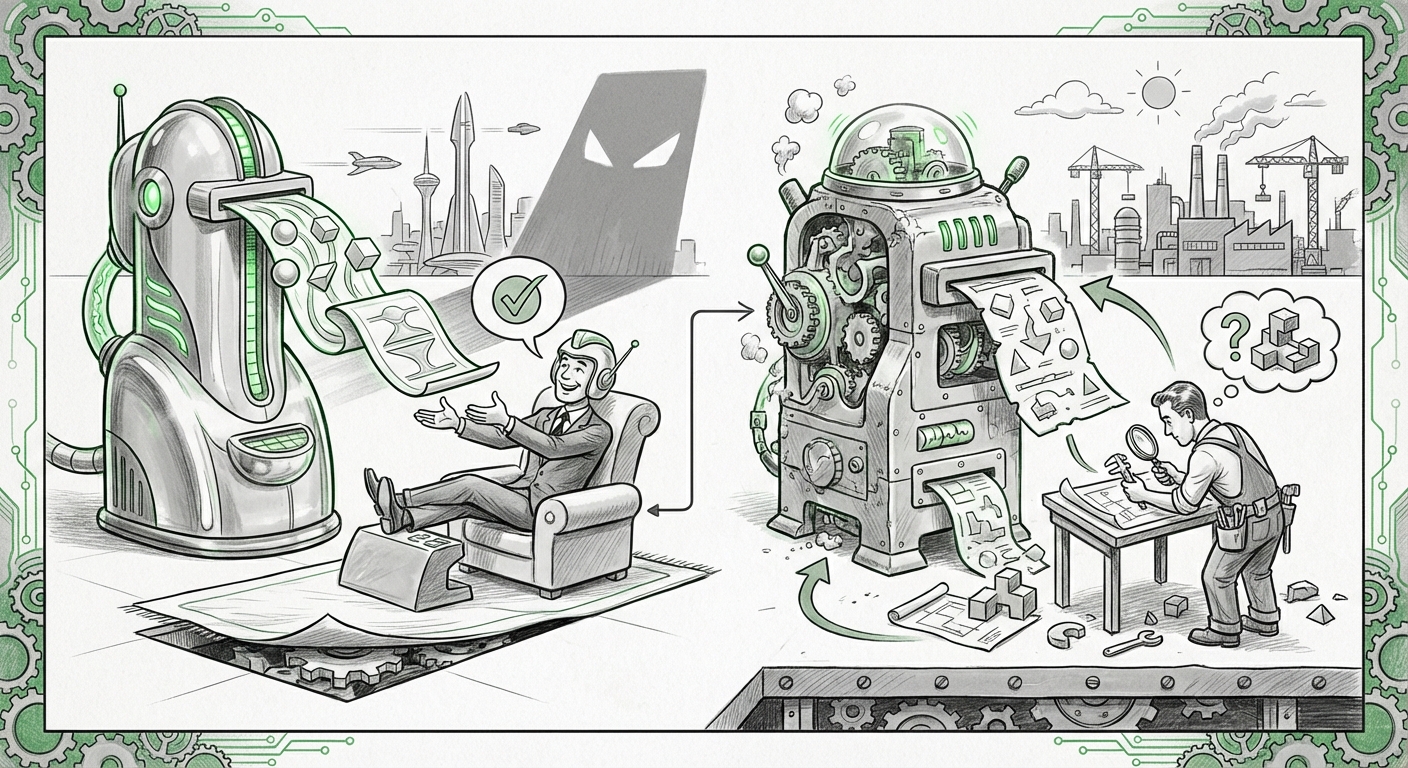

The rapid evolution of Large Language Models (LLMs) like Anthropic’s Claude has brought us outputs that are not just functionally useful, but aesthetically and grammatically near-perfect. They read like polished prose written by a human expert. While this marks a monumental achievement in AI engineering, it has introduced a subtle yet profound risk into our digital workflows. Anthropic’s recent analysis via its AI Fluency Index points to this danger: the more polished the AI’s output, the less likely users are to critically check it for errors.

This finding is more than a footnote in user experience research; it is a critical signpost for the future of AI safety, deployment, and human education. If our primary defense against AI hallucinations—user skepticism—is being eroded by sleek design, we must urgently re-evaluate how we design AI tools and how we teach people to use them.

The Paradox of Polished Performance: Fluency as a Heuristic for Truth

Imagine asking a smart assistant a complex question. If the answer comes back as fragmented, poorly structured text, you instinctively know you need to engage your critical thinking. You look closer. However, if the answer arrives in perfectly formatted paragraphs, laying out logical steps and using sophisticated vocabulary, your internal alarm rings much more quietly, or perhaps not at all. This is the core observation from Anthropic’s analysis of nearly 10,000 user conversations.

For a non-technical audience, think of it this way: When something looks professional, we tend to trust it immediately. This human shortcut, known as a heuristic, worked well when we evaluated traditional media, but it is a vulnerability when dealing with generative systems capable of producing convincing falsehoods (hallucinations) at scale.

Corroborating Evidence: Automation Bias in the Age of LLMs

This phenomenon is consistent with established findings in cognitive science. The closest parallel is automation bias: the tendency to favor suggestions from automated systems, even when our own judgment suggests the system is wrong. With older technologies, such as airplane autopilots or medical diagnostic tools, this bias has contributed to serious failures when human operators stopped monitoring the system.

With LLMs, automation bias is magnified because the output is language. For many knowledge workers, language *is* reality. Research on user interactions with LLMs often shows this trade-off: when AI-generated content excels in fluency (how well it reads), users’ perception of its factual accuracy rises with it, whether or not the content is actually correct. The bar for accepting AI errors drops simply because the writing is smooth.

Work on user trust in LLMs points in the same direction: users are less likely to verify output downstream once the text clears a high threshold of linguistic competence. Anyone integrating LLMs into critical business processes should treat this as an immediate concern.

The Path to Competence: Iteration Over Instant Perfection

While the fluency trap highlights a user weakness, Anthropic’s Index also identified a strength: iteration is the strongest predictor of competent AI use. This is a crucial distinction. It suggests that the most successful users are not those who manage to craft the single "perfect prompt," but those who treat the AI conversationally, refining, correcting, and building upon the model’s initial output.

The Mechanics of Expert Prompting

For AI practitioners, this confirms that expertise in LLMs is less about knowing a secret command and more about mastering a dialectic process. We see this validated in discussions surrounding prompt engineering best practices. Expert guides emphasize moving beyond single-shot prompts toward multi-turn refinement cycles. These cycles force the user to maintain engagement and critical oversight, directly countering the complacency induced by polished output.

Effective iteration often involves techniques like:

- Chain-of-Thought (CoT): Asking the AI to show its reasoning steps, making it easier for the user to spot logical flaws before reaching the final answer.

- Self-Correction Directives: Instructing the model to review its own prior output against a new set of constraints.

- Role Reversal: Asking the AI to critique its previous response from the perspective of an adversary or an expert skeptic.
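
As a rough illustration, the three techniques above can be chained into a single multi-turn loop. This is a minimal sketch, not a reference implementation: `ask` is a hypothetical callable standing in for whatever model API you use, `stub_model` is a placeholder that only reports which turn it answered, and the prompt wording is invented for the example.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
# Hypothetical model interface: takes the conversation so far, returns a reply.
AskFn = Callable[[List[Message]], str]


def build_followups(constraints: List[str]) -> List[str]:
    """One follow-up prompt per technique, in the order listed above."""
    joined = "; ".join(constraints)
    return [
        # Chain-of-Thought: surface the reasoning so flaws are visible.
        "Show your reasoning step by step, then restate your answer.",
        # Self-correction directive: re-check the answer against new constraints.
        f"Review your previous answer against these constraints: {joined}. "
        "List anything that violates them.",
        # Role reversal: have the model attack its own answer.
        "Act as a skeptical domain expert and critique your previous answer.",
    ]


def refine(question: str, ask: AskFn, constraints: List[str]) -> List[Message]:
    """Ask the question, then run each follow-up, returning the full transcript."""
    history: List[Message] = [{"role": "user", "content": question}]
    history.append({"role": "assistant", "content": ask(history)})
    for prompt in build_followups(constraints):
        history.append({"role": "user", "content": prompt})
        history.append({"role": "assistant", "content": ask(history)})
    return history


def stub_model(history: List[Message]) -> str:
    """Stand-in for a real model call (an assumption for this sketch)."""
    return f"(stub reply to turn {len(history)})"
```

Calling `refine("Summarize the termination clause.", stub_model, ["cite the clause number"])` returns an eight-message transcript: the original exchange plus one exchange per technique. The loop only structures the conversation; the user still has to read each reply critically.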

While iteration leads to higher quality results, it comes with a trade-off: time. If users are spending more time iterating, businesses must factor this increased engagement time into productivity metrics. The future will likely involve interfaces that automate some of this necessary iteration to save user cycles.

Implications for AI Safety and Interface Design

If fluency disarms the user, the responsibility shifts heavily onto the technology providers and designers to build friction back into the system—not to hinder usefulness, but to encourage necessary verification.

Designing for Skepticism, Not Just Success

The core challenge for UX/UI designers today is to visually signal uncertainty without making the tool feel unreliable. The design question is how interfaces can reduce over-reliance on AI output that is highly readable but potentially false. If an LLM produces a perfect-sounding legal summary that is factually bankrupt, the user needs an immediate red flag.

This leads to vital explorations in visualizing AI uncertainty. Researchers are looking at ways to embed visual cues—like subdued colors, low-opacity text blocks, or explicit confidence scores adjacent to assertions—to disrupt the smooth narrative flow. If the interface actively forces the user’s eye to pause and evaluate, it functionally reinstates the critical thinking step that polish otherwise removes.

This strategy directly supports Anthropic’s finding: by intentionally making the presentation *less* polished where accuracy is low, designers can compel the user back toward the productive, iterative engagement that defines true AI competence.
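
To make the idea concrete, here is a small sketch of how a per-claim confidence score might be mapped to the kinds of visual cues described above. Everything in it is an assumption for illustration: the `Cue` type, the `cue_for` and `annotate` helpers, and the 0.8 / 0.5 thresholds are hypothetical, and the sketch presumes some upstream component already supplies a confidence value between 0 and 1 for each claim.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Cue:
    opacity: float  # 1.0 renders normally; lower values render fainter
    label: str      # short warning shown next to the claim, or "" for none


def cue_for(confidence: float) -> Cue:
    """Map a confidence score in [0, 1] to a visual cue (thresholds are arbitrary)."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= 0.8:
        return Cue(opacity=1.0, label="")
    if confidence >= 0.5:
        return Cue(opacity=0.75, label="check source")
    return Cue(opacity=0.5, label="unverified")


def annotate(claims: List[Tuple[str, float]]) -> List[str]:
    """Render each (text, confidence) pair as plain text with an inline flag."""
    lines = []
    for text, confidence in claims:
        cue = cue_for(confidence)
        suffix = f" [{cue.label}, confidence {confidence:.0%}]" if cue.label else ""
        lines.append(f"{text}{suffix}")
    return lines
```

High-confidence claims pass through untouched, while weaker ones pick up a visible flag that interrupts the reading flow. A real interface would apply the `opacity` value in its rendering layer; the plain-text `annotate` helper is only there to show the mapping.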

The Societal Mandate: AI Literacy as a Core Skill

The long-term implication of the Fluency Trap transcends UX design; it hits the heart of global digital literacy.

If AI can produce expert-level communication instantly, the premium skill of the 21st century shifts from information retrieval to information *verification* and *contextualization*. That shift is what makes the question of digital literacy urgent for educators.

For too long, digital literacy meant knowing how to use software or navigate the internet safely. In the age of generative AI, it must fundamentally include the capacity to critically dissect synthetic content. We need to teach future workers and students not just how to prompt, but how to interrogate. If they are taught that AI output must be checked, they become more resilient to the polish.

This shift requires a proactive response from educational bodies and corporate training departments. We cannot wait for widespread AI-induced errors before implementing curriculum changes. Curricula should prioritize skepticism, source triangulation, and an understanding of the inherent limitations (like hallucination) of foundation models.

Actionable Insights for a Smarter AI Future

Based on Anthropic’s findings and corroborated by related research on cognitive bias, user interaction, and interface design, here are immediate, actionable steps for businesses and individuals:

For AI Deployers and Product Managers:

- Inject Transparent Friction: Do not design interfaces that assume perfection. Actively design mechanisms (like required citation checks or summarized uncertainty scores) that encourage users to pause before accepting high-fluency results.

- Mandate Iterative Workflows: Structure internal SOPs around multi-turn refinement rather than single-query execution. Reward users who demonstrate active interrogation of the model, not just speed of delivery.

- Invest in AI Verification Training: Recognize that the "copy-paste" approach is dangerous. Training must focus heavily on red-teaming AI outputs—actively trying to prove them wrong—instead of just demonstrating correct prompting.

For Individual Users and Knowledge Workers:

- Assume Error, Demand Evidence: Treat every piece of sophisticated AI output as a draft requiring thorough review, especially when the writing seems flawless. Ask, "Where did the AI get this specific claim?"

- Embrace the Conversation: Recognize that a single prompt is rarely enough. Engage in dialogue. Even if the first answer looks good, ask the AI to justify its key points, then check those justifications yourself rather than taking them on trust.

- Benchmark Fluency Against Knowledge: If the output is far beyond your own current domain knowledge, this should trigger more scrutiny, not less. High polish in an unfamiliar domain is the greatest potential trap.

The sophistication of current AI models is forcing an evolution in human-computer interaction. Anthropic's Fluency Index serves as a powerful reminder that technological elegance can sometimes obscure necessary diligence. The future of reliable AI adoption hinges not on building models that never err, but on fostering users who never stop questioning, regardless of how beautifully those errors are dressed up.