The Hardware Cartel: AMD's 6GW Blitz and Equity Stakes Reshape the AI Supply War

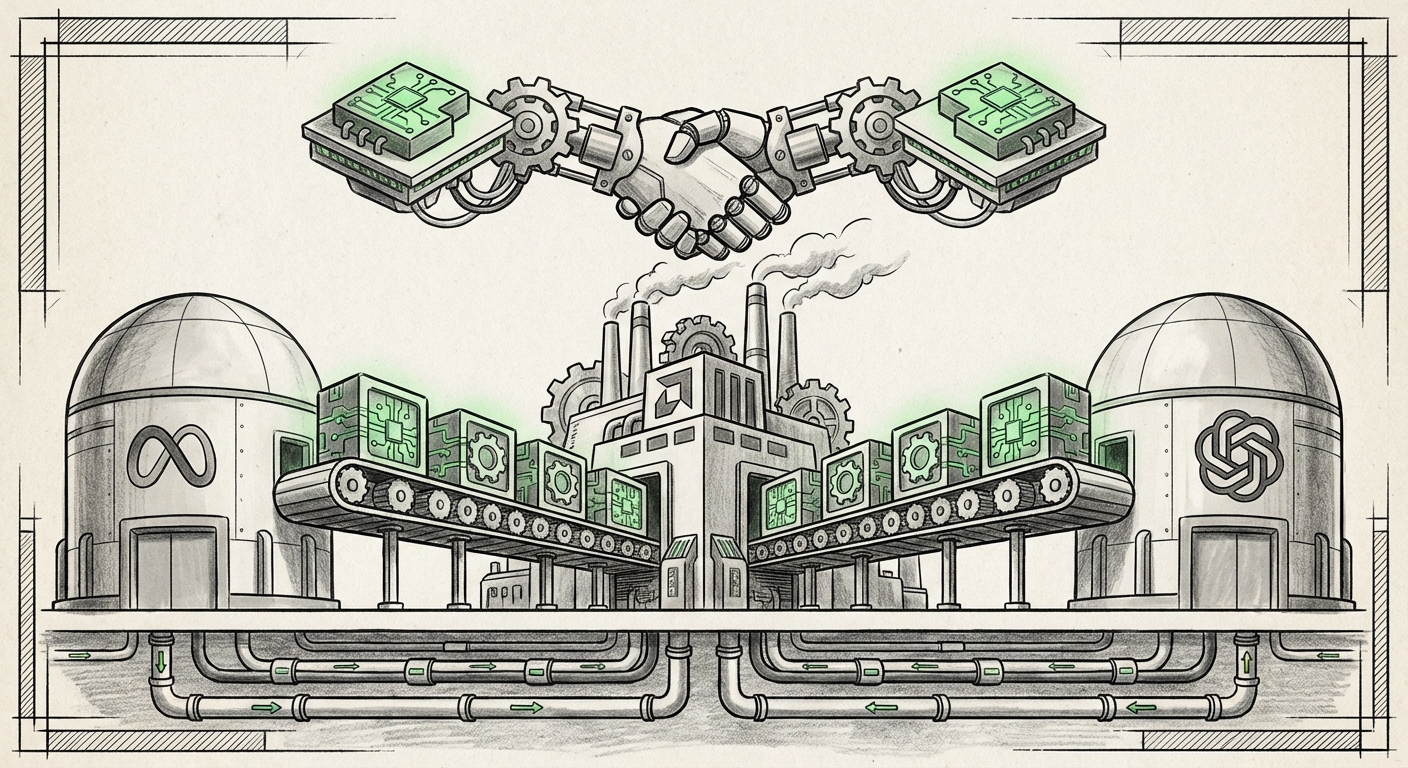

The artificial intelligence landscape is usually discussed in terms of algorithms, model sizes, and breakthrough capabilities. But beneath every ChatGPT query or generated image lies a brutal, multi-billion-dollar war for silicon supremacy. Recent reports that Advanced Micro Devices (AMD) structured its massive partnership deals with Meta and OpenAI on near-identical blueprints, including enormous power commitments and unusual equity stakes, signal a profound shift in this infrastructure battleground.

This is not merely a story about AMD catching up; it is about the emergence of a standardized, aggressive strategy by AMD to disrupt the established dominance of Nvidia. For technology leaders and investors, understanding the implications of these "copy-pasted" deals is critical to predicting the future velocity and structure of AI deployment.

The Three Pillars of AMD’s New Offensive

The deals reportedly signed with two of the world’s most influential AI builders—Meta (the infrastructure giant) and OpenAI (the innovation leader)—share three defining characteristics:

1. The Scale: Six Gigawatts of GPU Power

The commitment of up to six gigawatts (GW) of accelerator capacity is staggering. Note that the figure measures electrical power, not compute: a large nuclear reactor produces on the order of 1 GW, so 6 GW is comparable to the output of several such reactors, dedicated solely to running AI workloads. This scale indicates that Meta and OpenAI intend to move beyond experimental deployment and integrate these accelerators directly into their core, always-on services.

This commitment serves two masters: it promises AMD a large, multi-year revenue stream, and, crucially, it signals to the market that AMD's Instinct MI series accelerators are ready for prime time. Beyond the headline number, the key open question is timing: will the capacity be phased in over two years or five? The answer dictates how quickly AMD can genuinely challenge Nvidia's current supply advantage.
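The scale and timing questions reduce to simple arithmetic. The sketch below converts a power budget into a rough accelerator count and an annual rollout rate. The per-chip wattage and overhead multiplier are hypothetical placeholders, not figures from either deal; real all-in power per accelerator varies with chip generation, host servers, networking, and cooling.

```python
def accelerators_supported(total_power_gw: float, chip_watts: float, overhead_factor: float) -> int:
    """Rough number of accelerators a facility power budget can feed.

    chip_watts: hypothetical board power of one accelerator.
    overhead_factor: hypothetical multiplier covering host CPUs, networking and cooling.
    """
    all_in_watts = chip_watts * overhead_factor
    return int(total_power_gw * 1e9 // all_in_watts)


def annual_rollout_gw(total_power_gw: float, years: float) -> float:
    """GW brought online per year, assuming deployment is spread evenly."""
    return total_power_gw / years


if __name__ == "__main__":
    # Hypothetical inputs: 1,000 W per chip, 1.5x facility overhead.
    print(accelerators_supported(6, chip_watts=1000, overhead_factor=1.5))
    for years in (2, 5):
        print(years, "years ->", annual_rollout_gw(6, years), "GW per year")
```

Under those assumptions, 6 GW feeds about four million accelerators, and a two-year schedule means bringing 3 GW online each year versus 1.2 GW per year on a five-year schedule.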

2. The Focus: The Unsung Hero of Inference

Crucially, the partnership heavily emphasizes inference over training. Training a large language model (LLM) like GPT-4 takes months on thousands of the most powerful chips available. Once trained, however, running that model (answering user queries in real time) requires consistent, efficient inference hardware. As AI moves from research labs to consumer mobile apps and enterprise chatbots, the cost and efficiency of inference become the primary economic drivers. AMD is positioning its hardware, particularly the high-memory variants of the MI series, as the optimal choice for serving this next wave of AI applications.
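One reason memory capacity matters for inference is that a model's weights must sit in accelerator memory to be served efficiently. The sketch below estimates the minimum number of accelerators needed just to hold a model's weights. The 20% headroom reserved for the KV cache and activations is a hypothetical assumption, and real deployments need more memory as batch sizes and context lengths grow. For illustration it compares 192 GB (the HBM capacity AMD lists for the MI300X) with 80 GB (the capacity of Nvidia's H100 SXM).

```python
import math


def accelerators_for_weights(params_billion: float, bytes_per_param: float,
                             hbm_gb: float, headroom: float = 0.2) -> int:
    """Minimum accelerators needed to hold a model's weights in on-package memory.

    params_billion * bytes_per_param gives weight size in GB (1e9 params, 1e9 bytes per GB).
    headroom: hypothetical fraction of each accelerator's memory kept free for the
    KV cache and activations.
    """
    weights_gb = params_billion * bytes_per_param
    usable_gb = hbm_gb * (1 - headroom)
    return math.ceil(weights_gb / usable_gb)


if __name__ == "__main__":
    # A 70B-parameter model in 16-bit precision (2 bytes per parameter) is ~140 GB of weights.
    for label, hbm in (("192 GB accelerator", 192), ("80 GB accelerator", 80)):
        print(label, accelerators_for_weights(70, 2, hbm))
```

Under these assumptions, the 70B model fits on one 192 GB accelerator but needs three 80 GB accelerators; fewer chips per model replica tends to lower serving cost.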

3. The Novelty: Strategic Equity Stakes

The most unusual element, reportedly mirrored from the OpenAI deal, is an equity component. In the OpenAI agreement, AMD granted OpenAI a warrant for up to 160 million AMD shares, roughly 10% of the company, vesting in tranches tied to deployment milestones; the Meta deal reportedly follows the same template. In standard tech procurement, a customer buys hardware; it does not typically receive a piece of the supplier. This moves the relationship from transactional to profoundly strategic. For AMD, it ties Meta and OpenAI to its roadmap and provides insulation against competitive bids. For the customer, it secures supply longevity, potentially grants insight or influence over future silicon design, and lets it share in AMD's upside if the bet pays off.

This kind of "hardware-for-equity" negotiation is a tactic of either desperation or immense foresight. Given current supply constraints, the message is clear: "We will secure your supply not just with contracts, but with partnership structure."

Why This Strategy is Necessary: Breaking the Nvidia Monolith

AMD is not playing nice; it is playing aggressively because the stakes demand it. Nvidia currently dictates the terms of AI compute. Its CUDA software ecosystem is deeply entrenched, creating a massive barrier to entry for any competitor. To overcome this "software moat," AMD must offer compelling incentives that go beyond raw chip performance.

The 6GW commitment and the equity offer serve as powerful market signals designed to alleviate customer risk:

- Supply Security: Major AI labs cannot afford to have their entire future hinge on one vendor’s manufacturing cadence. By securing massive, dedicated blocks of AMD capacity, Meta and OpenAI hedge against potential Nvidia supply shortages or price gouging.

- Architectural Diversity: Relying on one hardware architecture (Nvidia’s) is a long-term risk. If a future AI breakthrough requires a specific memory topology or interconnect standard that AMD supports better, these deep partnerships ensure Meta and OpenAI are positioned to pivot quickly.

This isn't just about selling chips; it's about creating a viable, industrialized alternative ecosystem.

Future Implications: What This Means for the AI Economy

The scaling of AI infrastructure—moving from training clusters to continent-spanning inference networks—will have profound effects on how businesses operate and how society interacts with AI.

The Decentralization of AI Compute Power

If AMD successfully embeds itself as the indispensable second source, the AI hardware market will evolve from a monopoly to a duopoly, or perhaps a triopoly if custom silicon (ASICs) from hyperscalers like Google and Amazon gains more ground. A true duopoly fosters competition on price and innovation. This pressure should eventually trickle down, making sophisticated AI models cheaper to run for smaller enterprises and startups.

For enterprise customers trying to deploy AI, this is fantastic news. The intense competition should drive down the cost per inference, finally allowing AI ROI calculations to look attractive outside the top tech giants.

The Rise of Specialized Hardware Optimization

The specific mention of inference implies that hardware optimization will become hyper-focused. We are moving past general-purpose training behemoths. Future hardware procurement decisions will be less about "who has the fastest chip overall" and more about "who has the best chip for running sparse models at 99.99% uptime." This level of specialization will require deeper collaboration between software teams (like Meta’s) and hardware providers (like AMD).

The equity stake can be viewed as a direct investment in this collaborative optimization, helping AMD's R&D roadmaps align more closely with Meta's deployment realities.

The Financialization of Hardware Procurement

The equity component introduces a fascinating financial dynamic. It recasts critical hardware supply, traditionally booked as a capital expenditure, as a strategic asset, similar to an investment in a burgeoning startup. Companies securing supply this way are betting on the overall success of the AI wave and using their procurement leverage to gain a financial foothold in their suppliers' growth.

This trend may be emulated. We could see more tech giants demanding convertible notes or equity warrants attached to multi-year, multi-billion-dollar cloud or hardware contracts, fundamentally altering how large-scale technology purchasing is structured.

Actionable Insights for Business and Technology Leaders

How should businesses navigate this rapidly changing hardware landscape?

- Mandate Software Portability Now: If your AI strategy relies heavily on vendor-specific software (like CUDA), you are vulnerable to the strategies of the chip makers. Start allocating engineering resources to test software stacks that support alternative architectures, such as AMD's ROCm platform or emerging open standards. Do not wait until your current supplier raises prices or experiences a bottleneck.

- Re-evaluate Inference TCO: If you are planning large-scale deployment of generative AI applications this year or next, model the Total Cost of Ownership (TCO) using non-Nvidia hardware estimates as well. The price of high-end inference is likely to face significant downward pressure from this increased competition.

- Watch the Software Maturity Curve: AMD's hardware prowess is clear, but adoption hinges on software ease of use. Closely monitor the maturity of AMD's ROCm platform. As it closes the gap with CUDA, the financial case for switching or diversifying grows much stronger. Investigate partnerships with smaller software optimization firms that bridge this gap.

- Scrutinize Partnership Structures: For any major procurement over the next 18 months, ask vendors about long-term commitment mechanisms beyond standard purchase orders. Are they offering favorable financing, guaranteed allocation, or technology roadmap input? These non-monetary benefits often outweigh marginal price differences in times of scarcity.
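The portability advice above amounts to a design rule: application code should depend on a narrow interface rather than on one vendor's API. The sketch below illustrates the pattern with a hypothetical `InferenceBackend` protocol and stub backends; the class names and the `generate` method are illustrative only and do not correspond to any real library. In practice, frameworks such as PyTorch already provide much of this layer (PyTorch's ROCm builds, for example, expose AMD GPUs through the same `torch.cuda` interface).

```python
from typing import Protocol


class InferenceBackend(Protocol):
    """Hypothetical interface that application code targets instead of a vendor API."""
    name: str

    def generate(self, prompt: str) -> str: ...


class CudaBackend:
    name = "cuda"

    def generate(self, prompt: str) -> str:
        # Stub: a real backend would call a CUDA-based serving stack here.
        return f"[{self.name}] {prompt}"


class RocmBackend:
    name = "rocm"

    def generate(self, prompt: str) -> str:
        # Stub: a real backend would call a ROCm-based serving stack here.
        return f"[{self.name}] {prompt}"


BACKENDS = {"cuda": CudaBackend, "rocm": RocmBackend}


def get_backend(preferred: list[str], available: set[str]) -> InferenceBackend:
    """Return the first backend in preference order that is available on this host."""
    for name in preferred:
        if name in available and name in BACKENDS:
            return BACKENDS[name]()
    raise RuntimeError(f"none of {preferred} is available")


def answer(backend: InferenceBackend, prompt: str) -> str:
    # Application logic depends only on the interface, never on a specific vendor.
    return backend.generate(prompt)


if __name__ == "__main__":
    backend = get_backend(preferred=["rocm", "cuda"], available={"cuda"})
    print(answer(backend, "Summarize this contract."))
```

Because `answer` only sees the protocol, switching or diversifying vendors becomes a configuration change rather than a rewrite of application logic.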
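Modeling inference TCO across vendors starts with a common unit such as cost per million tokens served. The sketch below compares two hypothetical options; every price, throughput, and utilization figure is a placeholder to be replaced with real quotes and benchmarks for your own models, and a full TCO model would also add power, networking, and engineering costs.

```python
from dataclasses import dataclass


@dataclass
class InferenceOption:
    name: str
    hourly_cost_usd: float    # all-in cost per accelerator-hour (hypothetical)
    tokens_per_second: float  # sustained output throughput per accelerator (hypothetical)
    utilization: float        # fraction of each hour spent serving real traffic, in (0, 1]

    def cost_per_million_tokens(self) -> float:
        tokens_per_hour = self.tokens_per_second * 3600 * self.utilization
        return self.hourly_cost_usd / tokens_per_hour * 1_000_000


def cheapest(options: list[InferenceOption]) -> InferenceOption:
    """Return the option with the lowest cost per million tokens."""
    return min(options, key=lambda o: o.cost_per_million_tokens())


if __name__ == "__main__":
    # Placeholder figures for two hypothetical vendors.
    options = [
        InferenceOption("vendor_a", hourly_cost_usd=4.0, tokens_per_second=2000, utilization=0.5),
        InferenceOption("vendor_b", hourly_cost_usd=3.0, tokens_per_second=1800, utilization=0.5),
    ]
    for o in options:
        print(f"{o.name}: ${o.cost_per_million_tokens():.2f} per million tokens")
    print("cheapest:", cheapest(options).name)
```

With these placeholder inputs, the option with lower raw throughput still wins on cost per token because its hourly price is proportionally lower, which is exactly the kind of comparison the TCO recommendation calls for.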

Conclusion: A New Era of Hardware Leverage

AMD's reported strategy of applying a standardized, high-volume, equity-backed template to the titans of AI is a clear declaration of war on Nvidia's near-monopoly. This is not a quiet competition; it is a calculated power play to secure the foundation of the next generation of computing.

For the AI ecosystem, this signals an impending transition: the era of singular dependence is ending, replaced by a competitive landscape that will reward customers who build flexible, diversified infrastructure capable of leveraging the best performance-for-cost across multiple silicon providers. The infrastructure buildout is accelerating, and the deals being struck today—complete with equity carrots—will define the technological winners and losers of the next decade.

Further Reading on Context and Corroboration

- Analysis of Nvidia H100 Supply Constraints Driving Diversification (Context for why Meta seeks alternatives)

- The State of AMD ROCm and Software Interoperability in 2024 (Context for inference adoption risk/reward)

- Hyperscalers and the Future of Custom AI Silicon vs. Commercial Chips (Broader context on diversification)

- Original Report on the AMD-Meta Deal (Core source)