# AI Model Theft Allegations: The New Arms Race in LLM Intellectual Property and What It Means for the Future

## The Accusation: When Queries Become Theft

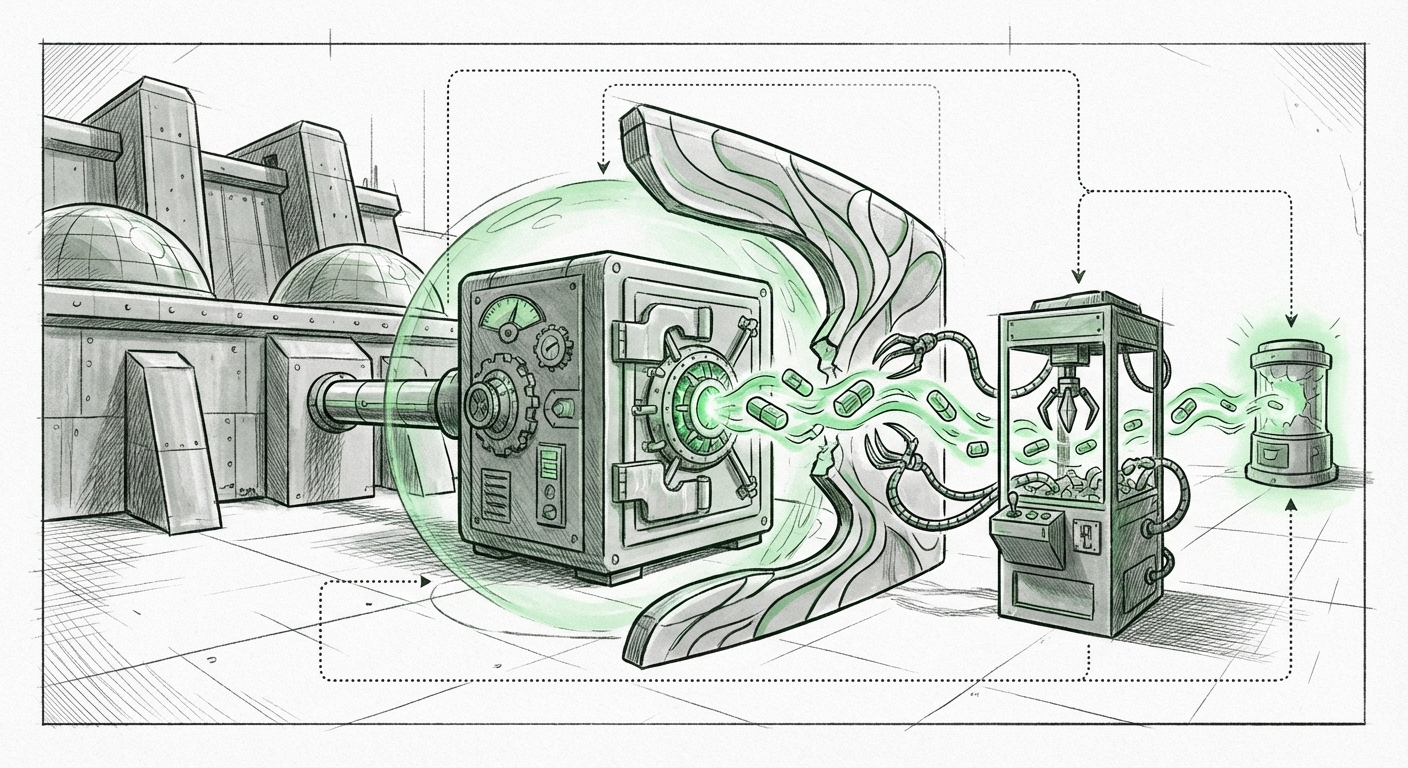

The world of Artificial Intelligence development is less about physical factories and more about proprietary knowledge: the data, the architecture, and the billions of dollars spent fine-tuning foundational models. When a company like Anthropic, a leader in frontier AI development, accuses competitors of systematic data exfiltration, it shifts the conversation immediately from healthy competition to industrial espionage.

The core allegation is startling: several Chinese AI labs, including DeepSeek, Moonshot, and MiniMax, allegedly executed approximately 16 million queries against Anthropic’s Claude model. The intent, according to Anthropic, was not simply to use the chatbot, but to *extract its capabilities* and use that distilled knowledge to train their own models more efficiently.

To put this in simple terms, imagine buying a highly specialized, custom-built race car (Claude). Instead of trying to build your own from scratch, you have your mechanics drive the race car millions of times, meticulously recording every turn, acceleration, and braking response to perfectly clone its performance profile onto your own, lesser car. This is sophisticated model extraction, and if true, it strikes at the very heart of AI intellectual property (IP).

### Why 16 Million Queries Matter

For a casual user, sending a few dozen prompts to an LLM is normal. But 16 million queries suggest a highly structured, automated, and malicious campaign. This volume allows an attacker to probe the model’s boundaries, identify its strengths and weaknesses, and, critically, uncover the unique patterns it learned during its proprietary training process.

This isn't just about plagiarism; it’s about bypassing the immense computational cost and data curation efforts required to reach the frontier. If a competitor can rapidly "reverse engineer" the intelligence locked inside a rival’s expensive model, the incentive structure of AI research is fundamentally broken.
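To get a feel for the scale, a back-of-the-envelope calculation helps. The sketch below takes the alleged 16 million queries and spreads them evenly over a few campaign lengths and account counts. Only the 16 million total comes from the allegation; the durations, the account count, and the function names `sustained_rate` and `per_account_daily` are illustrative assumptions, not reported facts.

```python
SECONDS_PER_DAY = 86_400
TOTAL_QUERIES = 16_000_000  # total cited in the allegation


def sustained_rate(total_queries: int, days: int) -> float:
    """Average queries per second if the total is spread evenly over `days`."""
    return total_queries / (days * SECONDS_PER_DAY)


def per_account_daily(total_queries: int, days: int, accounts: int) -> float:
    """Average queries per account per day under an even split across accounts."""
    return total_queries / (days * accounts)


if __name__ == "__main__":
    # Hypothetical campaign lengths; the real timeline is not given in this article.
    for days in (30, 90, 365):
        print(f"{days:>3} days: {sustained_rate(TOTAL_QUERIES, days):.2f} queries/second")

    # Hypothetical account count, to show how a large total can look small per account.
    rate = per_account_daily(TOTAL_QUERIES, days=90, accounts=1_000)
    print(f"1,000 accounts over 90 days: {rate:.0f} queries/account/day")
```

Under these assumptions, a 30-day campaign averages about 6 queries per second, which no human user could sustain. Split across 1,000 accounts over 90 days, the same total comes to fewer than 200 queries per account per day, which may not stand out on volume alone.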
## The Technical Battlefield: Model Extraction and Defense

The technical implications of this event are profound, setting the stage for the next phase of AI security. Understanding how this alleged theft might have worked clarifies the necessary defensive postures for future LLMs.

### The Mechanism of Extraction (What Attackers Try to Do)

Model extraction techniques aim to create a "surrogate model" that mimics the output behavior of the target model without accessing its internal weights or training data. This is often achieved through **Query-Based Attacks**.

1. **Capability Mapping:** Attackers feed the target model (Claude) a massive dataset of specialized inputs.
2. **Output Collection:** They meticulously record the corresponding outputs.
3. **Surrogate Training:** They use these input/output pairs to train their own, smaller model. If they gather enough high-quality data showing *how* Claude reasons through complex ethical dilemmas or code generation, their new model quickly gains those high-level skills.

For a technical audience, this reveals that relying solely on secure model weights is insufficient; the *inference API* itself has become a significant security boundary.

### Countermeasures: The AI Security Arms Race

The defense against this type of attack revolves around making the extracted data useless or unreliable. Based on broader industry discussions, we can infer that Anthropic and others must employ sophisticated methods:

* **Rate Limiting & Anomaly Detection:** Standard defense, but 16 million queries suggest this was either bypassed or occurred over a long period.
* **Output Perturbation (Data Poisoning):** This is a proactive defense. The model is subtly trained or instructed to introduce tiny, imperceptible errors or "noise" into its responses when it detects a certain query pattern associated with attack campaigns. For example, if the model sees a sequence of prompts designed to test legal reasoning, it might slightly alter the syntax or introduce minor logical inconsistencies in its output, making the scraped data unreliable for training a new model.
* **Digital Watermarking:** Embedding invisible signals within the generated text. If a model output is copied and used to train a new system, these watermarks could potentially be detected in the resulting model’s behavior, offering forensic evidence of the original source.

For developers, this means that deploying a powerful model now requires treating the public API as a hostile environment, constantly monitoring for statistical patterns indicative of systematic extraction.

## The Legal and IP Quagmire: Who Owns Intelligence?

This accusation thrusts long-simmering intellectual property debates into the harsh light of the courtroom and the international stage.

Currently, IP law surrounding AI models is murky. While the training *data* (e.g., copyrighted books or code) is heavily litigated, the resulting *model weights*, the complex numerical configurations that represent learned intelligence, have often been treated as trade secrets.

If Anthropic can prove that the queries were specifically designed to extract *non-public reasoning capabilities* developed through Anthropic’s proprietary alignment and safety research, the case moves beyond simple data scraping into the theft of trade secrets. This has massive implications:

1. **Contract Law:** Were there any terms of service agreements violated when accessing the API?
2. **Trade Secret Protection:** Does the specific *method* of reasoning constitute a secret worth protecting, even if the final output seems generic?
3. **International Jurisdiction:** Since the accused parties are based in China, enforcing US legal rulings becomes immensely complex, tying technical disputes to geopolitical realities.

For businesses building on foundational models, this raises a red flag: if your core competitive advantage is encoded in an API endpoint, how secure is it, really?

## Geopolitical Friction: The AI Cold War Heats Up

The involvement of a US AI leader (Anthropic) and prominent Chinese labs directly frames this event within the ongoing technological competition between the US and China.

AI capability is increasingly viewed as a matter of national security and economic dominance. Access to state-of-the-art LLMs shortens development cycles drastically, allowing nations to rapidly deploy AI across defense, finance, and critical infrastructure. Allegations of IP theft, whether confirmed or not, exacerbate existing tensions surrounding technological decoupling and export controls.

* **For Policymakers:** This incident provides ammunition for calls for stricter controls on access to frontier models and clearer definitions of what constitutes "dual-use" AI technology that requires export licensing.
* **For Chinese Labs:** It puts immense pressure on them to demonstrate *independent innovation* rather than relying on methods that appear to mimic or siphon off Western research efforts. Proving their models were trained solely on domestic or publicly available data becomes paramount.

The market now watches closely to see how evidence emerges, as this will set a precedent for how global AI innovation is policed.

## Practical Implications and Actionable Insights

This conflict is not abstract; it demands immediate action from industry players.

### For AI Development Firms (The Builders)

1. **Harden Your APIs:** Assume your inference endpoints are under continuous, sophisticated attack. Implement advanced anomaly detection that looks not just at volume, but at the *statistical signature* of the prompts being sent.
2. **Invest in Internal Defense Research:** Allocate R&D resources specifically to watermarking and output perturbation techniques. Security must be built into the model's core functionality, not bolted on afterward.
3. **Documentation is Key:** Maintain meticulous logs detailing your proprietary testing suites and unique data sets. This documentation will be essential if you ever need to prove that an external model is infringing upon your learned capabilities.

### For Businesses Using LLMs (The Adopters)

1. **Understand Your Supply Chain Risk:** If your business relies on a fine-tuned model built by a third party, ask them about their security protocols against model extraction. Are they using proprietary models, or open-source weights? The security posture of the underlying model is now part of *your* operational risk.
2. **Prioritize Performance Over Perfect Replication:** Be cautious about trying to perfectly replicate the performance of the absolute cutting edge if that edge is built upon opaque, highly protected IP. Focus on integrating the *functionality* you need rather than attempting to clone the entire intelligence stack.
3. **Legal Readiness:** Review your vendor contracts to understand liability should proprietary models be compromised via your usage, or if the underlying model technology is found to be built on stolen IP.

## The Future Trajectory: Sealed Vaults and Open Science

The era of loosely guarded APIs is ending. If the allegations hold weight, the next generation of LLMs will likely be housed in increasingly sophisticated security architectures. We may see a bifurcation in the market:

* **Sealed Vaults:** Frontier models will be locked down, accessible only through highly curated, heavily monitored interfaces, perhaps even running locally within secure enterprise enclaves where query patterns are fully visible to the host organization.
* **Verified Open Source:** Conversely, researchers may move toward truly open, verifiable architectures where the weights are public, making blatant extraction obvious, but allowing for community-driven security auditing.

This conflict underscores a fundamental tension: AI progress thrives on shared knowledge and rapid iteration, yet the immense value locked within frontier models demands stringent protection. The outcome of this specific legal and competitive battle will define the IP rules for the entire AI industry moving forward. The race is no longer just about who trains the best model, but who can build the strongest vault around it.

TLDR: Anthropic has accused Chinese AI firms of systematically using millions of queries to steal the capabilities of its Claude model, exposing a critical vulnerability in LLM security. This event signals the escalation of AI competition into the realm of IP warfare, forcing developers to adopt complex defense mechanisms like data poisoning while raising serious international legal and geopolitical questions about foundational model ownership.