DeepSeek, Blackwell Chips, and the Geopolitical Firestorm Shaking Silicon Valley's AI Supremacy

The Artificial Intelligence landscape, long dominated by the triumvirate of Google, OpenAI, and Anthropic, is facing a potential tremor from the East. Recent reports suggest that DeepSeek, a formidable player emerging from China, is on the verge of releasing its next-generation large language model (LLM). What makes this anticipation so acute isn't just the implied performance jump, but the whispers surrounding the training hardware: Nvidia’s next-generation Blackwell chips.

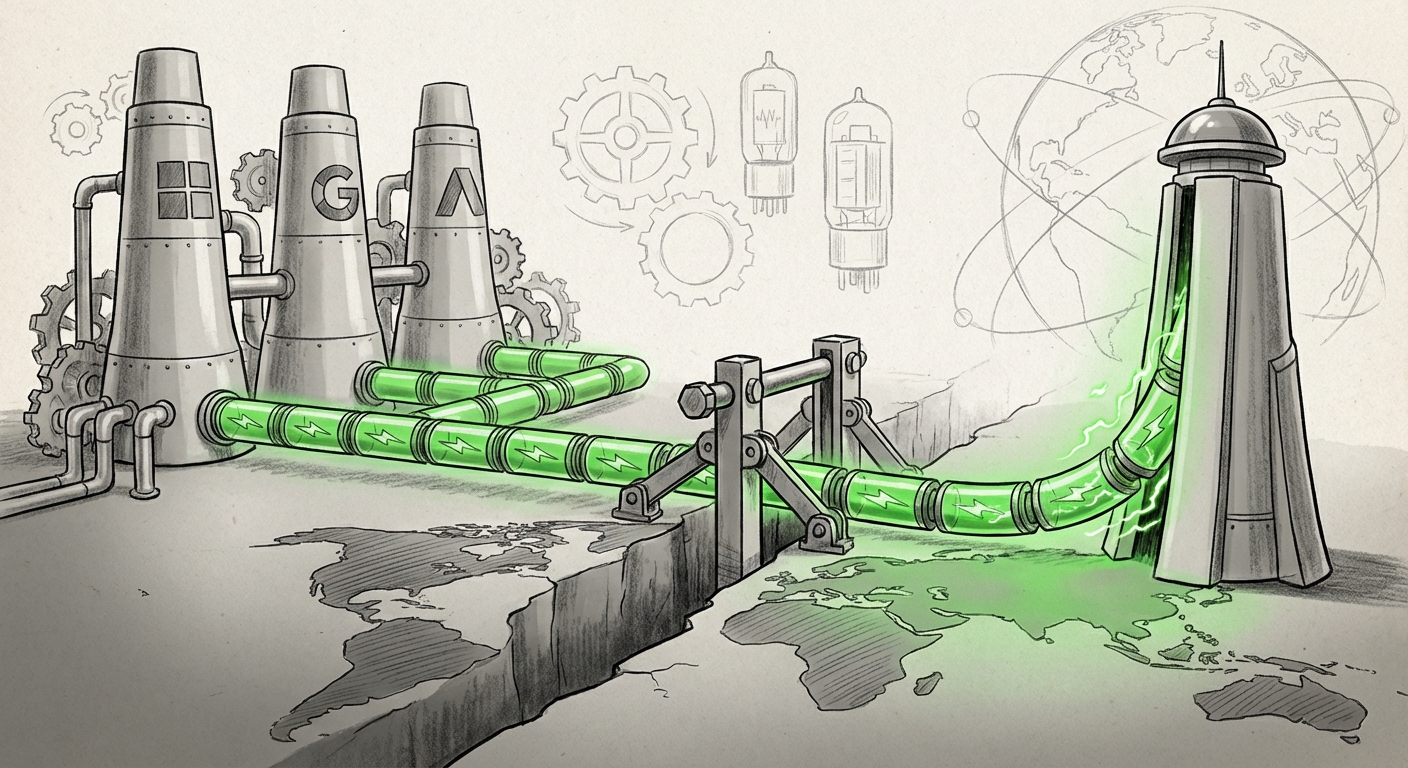

This situation creates a perfect storm—a convergence of cutting-edge AI capability, high-stakes hardware access, and international regulatory friction. For technology leaders, investors, and policymakers alike, understanding this moment is critical. This isn't just another software update; it’s a stress test on the entire global AI supply chain and competitive structure.

The Performance Imperative: Why Giants Are Bracing

For major AI labs to be reportedly "bracing" for a competitor's release, the threat must be existential, or at least significantly disruptive to market share. DeepSeek has previously made waves, particularly in specialized domains. For example, the release of models like **DeepSeek Coder 33B** demonstrated that Chinese developers are not merely copying, but innovating to close the gap with proprietary leaders like GPT-4 in specific tasks.

Public leaderboards already offer quantitative evidence that these competitors are trading blows. The next frontier model from DeepSeek is expected to challenge the current state-of-the-art in general reasoning, multimodal capabilities, and context window size. If DeepSeek achieves performance parity or better, at a potentially lower operational cost or with a more accessible open-source licensing structure, the business model for established players relying on expensive API access comes under severe pressure.

For the technical audience: A new high-performing, non-US-centric frontier model forces immediate re-evaluation of fine-tuning strategies, inference engine optimization, and the Total Cost of Ownership (TCO) for deploying AI solutions.
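To make the TCO point concrete, here is a minimal sketch of a break-even comparison between a hosted API and a self-hosted open-weight deployment. Every number and name in it is a hypothetical placeholder, not a published price for any real model or provider, and the cost model (a fixed monthly cost plus a per-token price) is a deliberate simplification.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DeploymentOption:
    """A deployment choice described by two hypothetical cost inputs."""
    name: str
    price_per_million_tokens: float  # USD per 1M tokens (assumed value)
    fixed_monthly_cost: float        # USD per month for hosting, ops, compliance (assumed value)


def monthly_tco(option: DeploymentOption, tokens_per_month: float) -> float:
    """Fixed cost plus usage cost for one month."""
    usage_cost = option.price_per_million_tokens * tokens_per_month / 1_000_000
    return option.fixed_monthly_cost + usage_cost


def break_even_tokens(a: DeploymentOption, b: DeploymentOption) -> Optional[float]:
    """Monthly token volume at which a and b cost the same.

    Returns None when the two cost lines never cross at a positive volume.
    """
    price_gap = a.price_per_million_tokens - b.price_per_million_tokens
    fixed_gap = b.fixed_monthly_cost - a.fixed_monthly_cost
    if price_gap == 0:
        return None
    tokens = fixed_gap / price_gap * 1_000_000
    return tokens if tokens > 0 else None


# Hypothetical inputs chosen only to illustrate the arithmetic.
hosted_api = DeploymentOption("hosted API", 10.0, 0.0)
self_hosted = DeploymentOption("self-hosted open-weight", 2.0, 4000.0)

if __name__ == "__main__":
    volume = break_even_tokens(hosted_api, self_hosted)
    print(f"Break-even volume: {volume:,.0f} tokens per month")
    for option in (hosted_api, self_hosted):
        print(f"{option.name}: ${monthly_tco(option, volume):,.2f} at break-even")
```

Under these assumed inputs the two options cost the same at 500 million tokens per month. Below that volume the hosted API is cheaper, and above it the self-hosted option wins. A real evaluation would also need to account for latency, engineering time, and compliance overhead, which this sketch folds into a single fixed figure.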

The Open vs. Closed Debate Intensifies

If DeepSeek releases a powerful model that is semi-open or open-weight, it democratizes access to frontier-level performance outside the walled gardens of the US tech giants. This accelerates open-source adoption globally, bypassing the traditional gatekeepers and shifting the competitive ground from proprietary cloud service dominance to community-driven innovation and deployment speed.

The Hardware Anomaly: Blackwell and the Geopolitical Choke Point

The most explosive detail in this scenario is the alleged use of Nvidia’s Blackwell architecture for training. This is where technology transcends engineering and enters the realm of international policy.

Nvidia’s H100 and, more recently, the B200/Blackwell chips are the undisputed currency of modern AI training. They represent the pinnacle of high-performance computing. However, the US government has implemented strict export controls aimed at preventing advanced semiconductor technology from reaching entities in China, specifically targeting the enablement of sophisticated AI training that could have military or strategic implications.

If DeepSeek has successfully trained its new model on Blackwell architecture, it would point to one of two major developments:

- Massive Pre-emptive Stockpiling: The lab secured a huge volume of these highly advanced chips *before* the latest, tighter restrictions were fully enforced, demonstrating foresight in supply chain management.

- Circumvention or Alternative Sourcing: The lab has obtained the chips through complex international trade routes, or is supplementing them with domestic alternatives that are rapidly approaching parity. Either would be a significant challenge to US technological supremacy.

For regulators and policy analysts, this event would act as an immediate audit of existing controls. If reports that Nvidia is confirming new limitations on H200 shipments are accurate, and DeepSeek has nonetheless trained on Blackwell, the existing choke points would appear to be permeable.

The AI Supply Chain Under Siege

The demand for high-end AI accelerators dwarfs current global production capacity. This scarcity creates a "GPU Hunger Games" scenario, where access to compute dictates who can build the next generation of AI.

This environment is forcing major operational shifts across the industry:

- The Rise of Domestic Competitors: Chinese hardware makers, like Huawei with their Ascend series, are under immense pressure and investment to offer viable, government-supported alternatives to Nvidia. If DeepSeek can train a frontier model using domestic infrastructure, it signals a crucial turning point toward true technological self-sufficiency.

- Cost Inflation: For US-based labs, the continued struggle for next-generation silicon drives up the already astronomical cost of training, potentially slowing their own release cadence or forcing higher prices for end-users.

- The Cloud Provider Dilemma: Major cloud providers must balance demand from domestic and international clients, all while navigating the legal uncertainties surrounding AI hardware acquisition and deployment locations.

Practical Implication: Businesses relying on the US tech stack must monitor the performance of these alternative supply chains. If Chinese models prove superior or even equal, but are sourced from a different supply ecosystem, diversification away from a single US hardware dependency becomes a strategic necessity.

Navigating the Regulatory Divide

The development of DeepSeek occurs within a fundamentally different regulatory context than that faced by its Western counterparts. Understanding the interplay between national policy and model development is key to predicting long-term market trajectory.

In the US, the narrative often centers on safety, alignment, and responsible deployment, leading to self-imposed caution and ongoing dialogues with the government about "frontier model" risk management. In contrast, China has adopted a state-sponsored push for AI dominance, prioritizing rapid advancement and integration into national strategic goals.

This regulatory divergence means that Chinese labs might iterate faster on raw capability, unburdened (or at least differently burdened) by the immediate political pressures surrounding open-sourcing powerful general intelligence.

What This Means for Future AI Deployment

The future of AI will likely be multi-polar, not centralized:

- Divergent Model Ecosystems: We will see distinct ecosystems evolve. Western models may focus heavily on verifiable safety and compliance for highly regulated industries (finance, defense), while models emerging from other regions might prioritize raw capability, speed, and efficiency in different languages and cultural contexts.

- Sovereign AI Clouds: Governments worldwide are accelerating efforts to build "Sovereign AI" infrastructure (computing power controlled entirely within their borders) to reduce reliance on US Big Tech for critical AI infrastructure. A successful DeepSeek release would strengthen the case for the need for, and viability of, these independent compute stacks.

Actionable Insights for Stakeholders

The impending release from DeepSeek is a catalyst forcing immediate strategic reconsideration across the board.

For Enterprise Technology Leaders:

Stress-Test Your Multi-Model Strategy: Do not rely on a single API provider. Begin internal evaluations of models accessible via alternative channels. If DeepSeek offers a substantial performance leap, your competitive edge may soon depend on integrating that capability quickly, even if it means managing slightly different data governance protocols.
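One way to avoid depending on a single API provider is to put a thin, provider-agnostic layer between application code and the model. The sketch below tries providers in priority order and falls back when one fails. It assumes each provider can be wrapped as a plain function from prompt to text. The provider names and stub functions are hypothetical stand-ins, not real client libraries.

```python
from typing import Callable, List, Sequence, Tuple

# Assumption: every provider is wrapped as a callable that takes a prompt and returns text.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an exception."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Provider]],
) -> Tuple[str, str]:
    """Try each (name, provider) pair in order.

    Returns the name of the first provider that succeeds together with its output.
    """
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stubs standing in for real API clients.
def flaky_provider(prompt: str) -> str:
    raise TimeoutError("primary endpoint timed out")


def echo_provider(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    used, text = complete_with_fallback(
        "Summarize this contract.",
        [("primary", flaky_provider), ("backup", echo_provider)],
    )
    print(used, "->", text)
```

Keeping the provider list as configuration rather than code means a newly released model can be added to an internal evaluation, or promoted to primary, without touching call sites. Data governance checks would sit inside each wrapper, since they are likely to differ per provider.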

Watch the Talent Migration: Look for engineers and researchers who specialize in optimizing non-Nvidia hardware (like AMD or specialized domestic Chinese GPUs). Their expertise will become highly valuable as companies seek resilience against supply chain bottlenecks.

For Investors and Venture Capital:

Look Beyond the Hyperscalers: Investigate the infrastructure companies (software, middleware, optimized compilers) that bridge the gap between powerful but potentially constrained hardware and the final LLM deployment. These "plumbing" providers offer high leverage regardless of which specific frontier model wins.

Geopolitical Risk vs. Performance Premium: Assess the discount or premium applied to models based on their geopolitical origin. A model that is 90% as good as GPT-5 but available immediately and cheaply presents a massive investment opportunity compared to waiting 12 months for the next fully US-vetted iteration.

For Policymakers:

Re-evaluate Compute Controls: The scenario suggests that controls on advanced chips like Blackwell may create friction but will not halt progress in determined ecosystems. Policy must shift from purely blocking hardware sales to focusing on *software enablement* and maintaining a clear, attractive domestic environment for the world's best AI talent.

Conclusion: The Era of Decentralized AI Power

The anticipation surrounding DeepSeek’s next model, fueled by rumors of cutting-edge hardware usage, serves as a powerful signal: the golden age of undisputed US leadership in AI foundation models may be reaching its inflection point. This isn't just about one company winning; it’s about the decentralization of AI capability.

The response from Google, OpenAI, and Anthropic will be fascinating to observe. Will they double down on closed, proprietary safety measures, or will competitive pressure force them toward faster, more flexible deployment strategies? The market seems to be demanding resilience, performance, and diversity in the foundational building blocks of the next technological era. The future of AI will be defined not only by the models we build but by who is allowed to build them, and on what hardware they choose to run.