Deepseek, Blackwell, and the Global AI Compute Race: The Next Market Shakeup

The world of Large Language Models (LLMs) has long been dominated by a handful of well-funded Western giants: OpenAI (backed by Microsoft), Google (DeepMind), and Anthropic. These labs control the narrative, the funding, and, most crucially, the access to the world’s most powerful AI training chips, primarily those from Nvidia. However, faint rumblings from the East suggest a gathering storm that could redefine the competitive landscape.

Reports indicate that Deepseek, a highly capable Chinese AI firm, is on the cusp of releasing its next-generation model. What could make this release market-shaking is not just the expected performance leap, but the unconfirmed rumors surrounding the hardware used to train it: **Nvidia’s Blackwell chips.** If true, this would signal a profound challenge to the existing order, centered not just on coding brilliance, but on **Compute Sovereignty**.

Part 1: Validating the Threat – Deepseek’s Trajectory

For Google, OpenAI, and Anthropic to be "bracing" for a competitor, that competitor must have a proven track record of rapidly closing the gap. Deepseek is not a newcomer; its recent models have demonstrated impressive capability, often going punch for punch with larger, better-known models. This credibility stems from smart architectural choices, like leveraging Mixture-of-Experts (MoE) designs, which activate only a subset of the model’s parameters for each token and so deliver high performance without the per-token computational cost of a traditional dense model of the same size.
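To make the Mixture-of-Experts idea above concrete, here is a minimal sketch of top-k expert routing in plain Python. It is an illustration under simplifying assumptions (scalar inputs, toy experts, gate scores passed in directly), not a description of how Deepseek or any other lab implements MoE. The names `route_top_k`, `moe_forward`, and `active_fraction` are hypothetical.

```python
import math


def softmax(scores):
    """Convert raw gate scores into probabilities."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(gate_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]


def moe_forward(x, experts, gate_logits, k=2):
    """Run only the selected experts and blend their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_logits, k))


def active_fraction(num_experts, k):
    """Share of expert parameters touched per token (equal-sized experts assumed)."""
    return k / num_experts
```

With 8 equally sized experts and `k=2`, `active_fraction(8, 2)` shows that each token touches only a quarter of the expert parameters, which is the source of the compute savings relative to a dense model of the same total size.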

Analysts keenly track benchmarks like MMLU (general knowledge) and HumanEval (coding ability). When a model like Deepseek V2 shows up near the top of global leaderboards, it suggests two things:

- The fundamental research coming from these labs is world-class.

- They are optimizing compute usage better than expected.

The fear among established labs is that Deepseek’s next release, trained on potentially superior hardware, won't just keep pace; it might **leapfrog** current market leaders. For product managers and strategists, this means a potential erosion of the technological moat built by US-based companies.

Part 2: The Geopolitics of Silicon – The Blackwell Context

The core tension point in this story is the mention of Nvidia’s Blackwell chips. These next-generation processors are the absolute cutting edge, designed to accelerate the massive workloads required for training frontier AI models. Yet, the US government has placed strict export controls on certain advanced chips, specifically aiming to limit the access of specific entities (often Chinese firms) to hardware capable of building highly advanced AI capabilities.

These restrictions are a direct attempt to manage the technological balance of power. However, technology moves faster than regulation. The rumor that Deepseek has access to these processors, which may have been acquired before stricter rules were finalized or sourced through complex international channels, is explosive. (For background on the regulatory framework behind this tension, see Reuters’ reporting on Nvidia’s export compliance updates regarding China.)

Compute Sovereignty: The New Arms Race

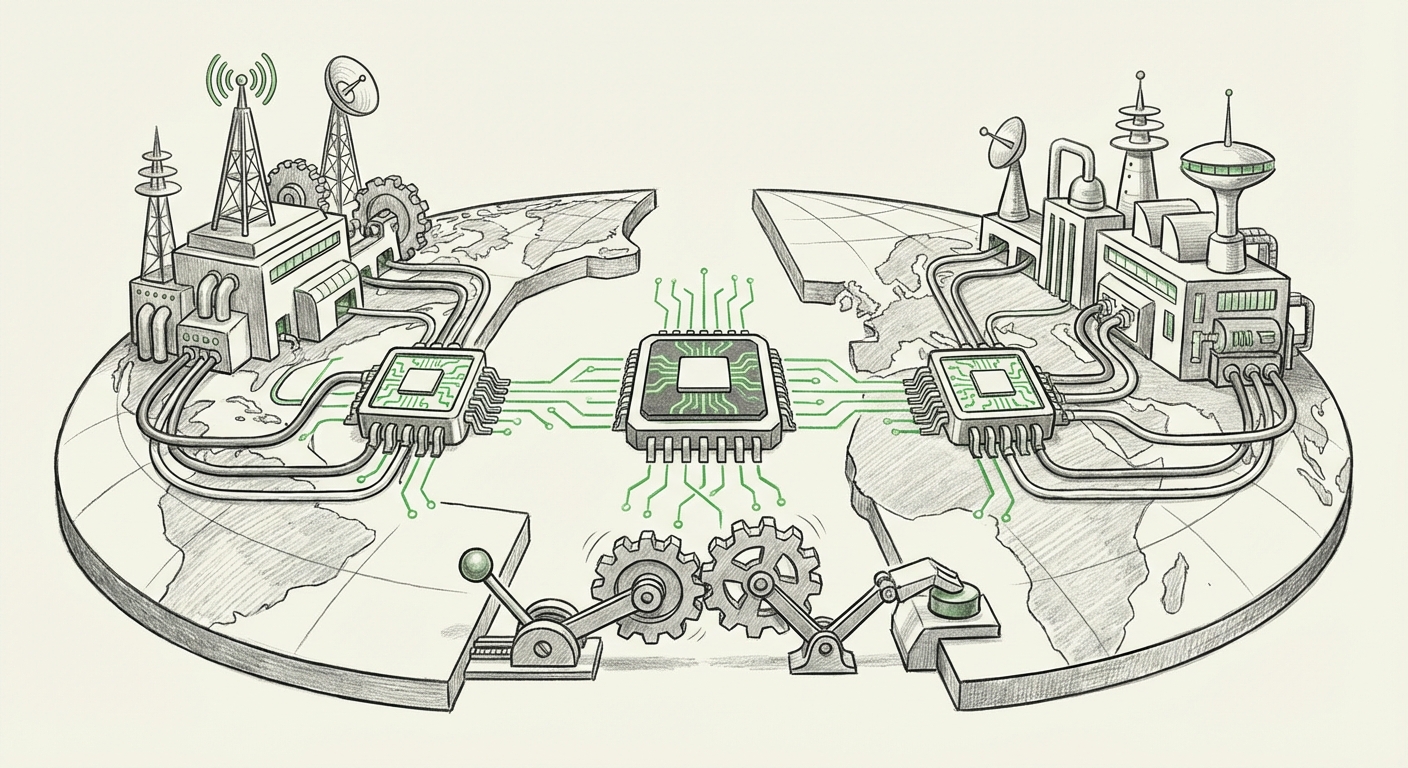

For AI researchers and hardware analysts, this signals a critical divergence in infrastructure. While OpenAI and Google are locked in a battle to secure delivery timelines for their allocated H200s and future Blackwells, non-US labs might have successfully built parallel, high-performance compute clusters. If Deepseek has managed to train a frontier model efficiently on Blackwells, it would suggest that **Compute Sovereignty**, the ability to develop cutting-edge AI independent of US supply chains, is achievable.

Part 3: The Shifting Global Ecosystem

This development isn't isolated; it's part of a macro shift where AI innovation is rapidly decentralizing away from Silicon Valley’s epicenter. Western perception often centers AI development around the "Big Three" labs, but global leaders like Mistral (France), Cohere (Canada), and major players in Asia are aggressively challenging this dominance.

For Business Development teams and VCs, understanding the funding dynamics is key. How are these non-US firms affording multi-billion dollar compute budgets when US firms are already straining their resources? Often, it involves a combination of massive domestic investment, state support, and a willingness to explore entirely new data acquisition and model fine-tuning strategies.

A successful release from Deepseek would act as a powerful validator for the entire international AI ecosystem. It would show that the talent and the financial backing exist to compete at the very highest level, even under restrictive trade environments. This pressure forces US companies to innovate faster on *efficiency* (e.g., better MoE implementations) just to maintain their current lead.

What This Means for the Future of AI and How It Will Be Used

The impending release of Deepseek’s new model, set against the backdrop of global hardware constraints, carries several implications for technology and business.

1. Acceleration of Model Diversity and Specialization

When compute access is uneven, labs are forced to be smarter about *how* they train. We will see an explosion of models optimized for specific hardware footprints or computational budgets. If Deepseek’s model proves highly efficient, other global labs will pivot immediately to replicate that efficiency—perhaps favoring Mixture-of-Experts architectures over dense models for longer. This means the era of "one monolithic giant model" might fade, replaced by a tapestry of highly specialized, extremely powerful, yet leaner models.

2. The Re-Emergence of Hardware Agnosticism

The reliance on a single vendor (Nvidia) and a single geographical pipeline (US/Taiwan) creates a massive systemic risk. The rumors about Blackwell usage underscore the urgency for alternatives. Google has long championed its TPUs, and other players are investing heavily in custom silicon or exploring open-source hardware solutions. For enterprise adoption, this means IT leaders must adopt a **multi-vendor compute strategy** to ensure resilience against geopolitical trade shocks or supply constraints.

3. Deepening Geopolitical Tech Friction

The race is no longer just for AI capability; it is for **AI infrastructure control**. The ability to train and deploy frontier models is now seen as a national security asset. If Deepseek’s success is tied to using restricted hardware, expect US and allied governments to tighten export controls further, potentially impacting everything from cloud service providers to academic research collaboration. The result could be a fragmented "AI Internet," where models and data operate differently based on where they were trained.

4. Accessibility and Open Source

Paradoxically, intense competition often fuels democratization. To compete against proprietary titans, open-source or highly accessible models become crucial. If Deepseek releases a powerful, commercially viable model that sets a new performance baseline, it raises the bar for open-source projects like Llama or Mistral’s openly released weights. This pressure is beneficial for smaller businesses and researchers, forcing higher capability at lower costs.

Practical Implications and Actionable Insights

How should technology leaders, investors, and developers respond to this intensifying global competition?

For Business Leaders and Product Managers:

- Diversify Model Strategy: Do not stake your entire product roadmap on a single foundational model provider. Begin integration testing with leading non-US models (like those from Mistral or potential Deepseek releases) to prepare for necessary swaps if pricing, availability, or licensing terms shift unfavorably.

- Focus on Fine-Tuning over Foundation: Given the speed of foundation model updates, the real differentiator will be your proprietary fine-tuning data and domain expertise. Invest heavily in making your data pipeline robust—this moat is harder for international competitors to replicate quickly.

- Monitor Regulatory Impact: Keep a close eye on updates regarding chip exports. Future shifts could impact the cloud services you rely on or the hardware you might purchase for on-premise deployment.

For AI Researchers and ML Engineers:

- Embrace Efficiency Benchmarks: Pay close attention to Deepseek’s reported performance metrics. If they achieve better results with fewer parameters or less compute time, immediately explore techniques like sparse modeling, efficient quantization, and advanced MoE routing.

- Explore Alternative Compute: Start researching non-Nvidia solutions. Whether it’s cloud providers using AMD Instinct accelerators, custom ASICs, or cloud access in non-sanctioned regions, ensuring compute flexibility is paramount for long-term training goals.
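As one concrete instance of the efficiency techniques named above, the sketch below shows symmetric 8-bit weight quantization in plain Python. It is a simplified illustration (one scale per tensor, no calibration data) rather than any particular lab's or library's method. The function names `quantize_int8` and `dequantize` are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight is stored in one byte instead of four (for 32-bit floats), at the cost of a rounding error of at most half the scale per weight. Practical schemes refine this with per-channel or per-block scales.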

Conclusion: The Era of Asymmetric AI Development

The anticipation surrounding Deepseek’s next release is a clear signal that the AI oligopoly is under siege. It’s a story not just about superior algorithms, but about the strategic acquisition and deployment of the world's most precious resource: cutting-edge compute.

If Deepseek has truly leveraged restricted Blackwell hardware to build a model competitive with the US incumbents, it reveals that the bottleneck in AI advancement is rapidly moving from the realm of pure theoretical computer science into the sphere of geopolitical logistics and supply chain mastery. The next few quarters will determine if the centralized US approach maintains its lead, or if this asymmetric, globally distributed challenge forces a fundamental, faster evolution across the entire AI industry.