Google's Educator Gambit: How Training 6 Million Teachers on Gemini Will Redraw the AI Map

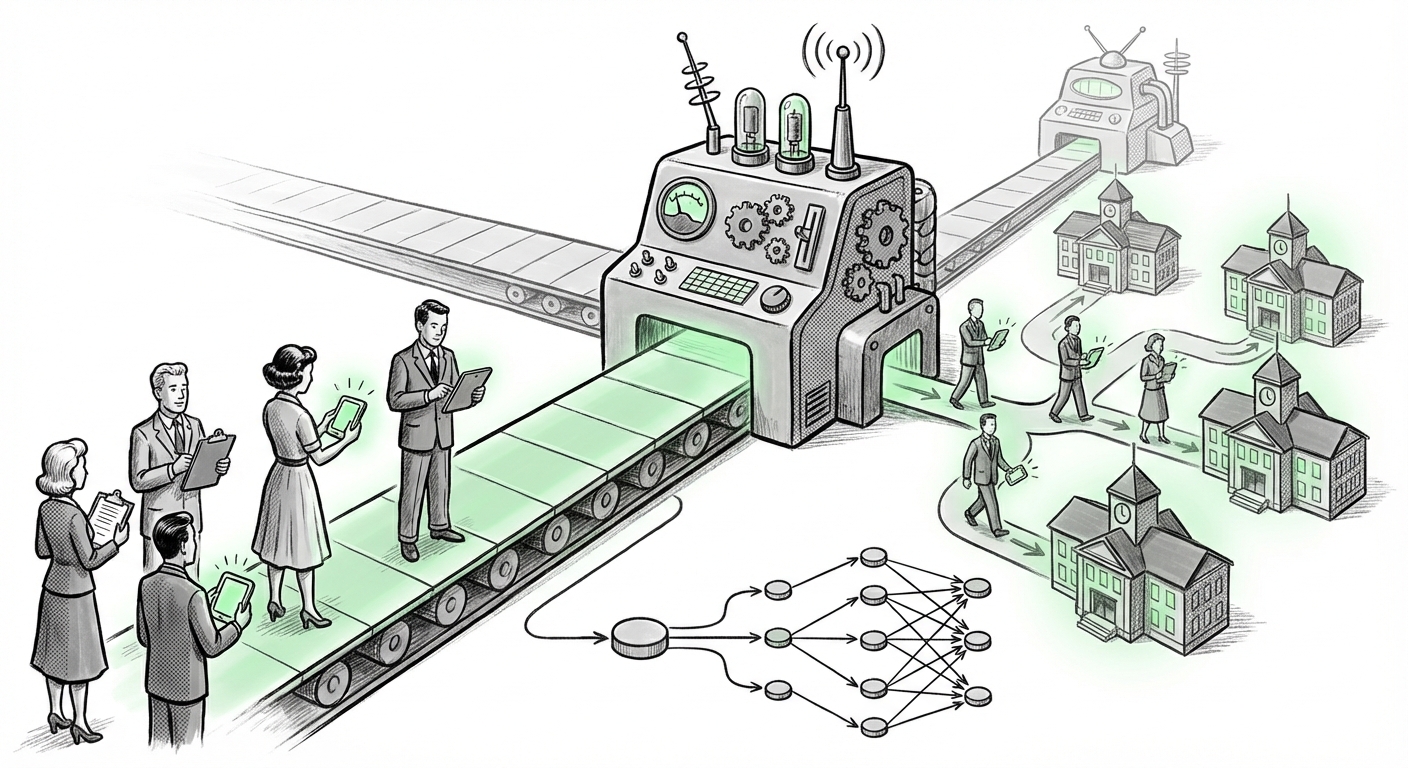

The technology landscape is defined by battles for infrastructure, but increasingly, the real war is fought over adoption. When a tech giant like Google announces it will provide free, large-scale training in its flagship AI, Gemini, to every one of the approximately six million K-12 educators in the United States, this is not a simple act of corporate generosity. It is a calculated, strategic maneuver designed to embed Google’s AI platform in the foundational layer of the U.S. education system.

As an AI technology analyst, my immediate reaction is to look past the press release and analyze the underlying competitive, regulatory, and practical shifts this move signals. Google isn't just trying to sell software; it is trying to shape how the next generation learns about and interacts with artificial intelligence. This analysis sets the move against its market context and explores what it means for the future of AI deployment in high-stakes environments like education.

The Strategy: Platform Entrenchment Through Fluency

The core thesis behind Google’s educational outreach is simple: Familiarity breeds dependency. By making Gemini training free and accessible, Google addresses two massive adoption hurdles simultaneously:

- Cost Barrier: Training budgets are often the first to be cut. Removing the financial barrier to entry for professional development makes this an easy 'yes' for overburdened school districts.

- Skill Gap: Many educators feel unprepared for Generative AI. Google is positioning itself as the primary, reliable guide through this transition.

This is an investment in human capital oriented around Google’s tools. When a teacher spends 10 hours learning the best prompts for Gemini to create differentiated worksheets, they are implicitly agreeing to use Gemini for those tasks moving forward. This grassroots momentum can often force the hand of district IT departments and procurement officers.

The Competitive Arena: Eyeing the Microsoft Heartland

This move cannot be analyzed in a vacuum. The EdTech market has long been dominated by the Microsoft ecosystem—Windows, Office 365, and Teams. Google's strength has been in Chromebooks and Google Workspace (Docs, Sheets). The introduction of powerful generative AI like Gemini and Microsoft Copilot marks the new battleground.

If Microsoft is actively bundling Copilot features into its existing, deeply entrenched Office 365 Education licenses, Google needs a way to break through the centralized procurement structure. Offering free, high-quality training on Gemini is a classic flanking maneuver. Google cultivates a direct relationship with the end user, the teacher, who then demands Gemini’s integration into their daily workflow. This creates an internal push that procurement teams must address, effectively undercutting a potentially more expensive bundled competitor.

The Readiness Question: Systemic Hurdles to AI Adoption

While the supply of training is massive, the demand side—the educational system itself—presents significant friction points. Are schools ready to absorb, validate, and integrate this influx of AI knowledge?

Research into AI literacy standards for K-12 teachers shows that while enthusiasm is high, formal, mandated guidelines are still nascent. Many states and districts are scrambling to define what "AI competent" means. Google’s timely offering allows it to fill this vacuum. If Google’s training aligns closely with emerging best practices, the company becomes the de facto standard-setter for AI instruction, whether officially sanctioned or not.

For administrators and policy makers, this trend highlights the speed mismatch between technology development and educational regulation. The technology is advancing in release cycles measured in months, while curriculum updates and professional development cycles move glacially.

The Critical Balancing Act: Privacy and FERPA Compliance

No discussion of placing AI into the hands of millions of educators interacting with student data can ignore privacy. In the U.S., the Family Educational Rights and Privacy Act (FERPA) governs student records. The question of privacy and data governance for AI tools that touch student data is paramount.

The allure of "free" training must be weighed against the risks of data leakage or using student interactions to further train commercial models. For Google, ensuring their Gemini platform meets stringent, documented FERPA compliance is non-negotiable for mass adoption. If the training materials emphasize secure, anonymized inputs, Google positions itself as the trustworthy steward of this sensitive data realm. Conversely, any misstep regarding PII—even through teacher error—could derail the entire initiative and invite intense regulatory scrutiny.
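To make "secure, anonymized inputs" concrete, here is a minimal Python sketch of the kind of pre-processing a teacher or district tool might apply before any text reaches an AI assistant. Everything in it is an assumption for illustration: the `redact` helper, the placeholder labels, and the three regular expressions (including the guessed 7-to-9-digit student ID format) are hypothetical, and none of it comes from Google's training materials. Pattern matching of this kind is not sufficient for FERPA compliance on its own.

```python
import re

# Hypothetical patterns for illustration only; real student records need a
# vetted de-identification process, not three regular expressions.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")
STUDENT_ID = re.compile(r"\b\d{7,9}\b")  # assumed ID format: 7 to 9 digits


def redact(text: str, student_names: list[str]) -> str:
    """Replace known names and simple identifier patterns with placeholders."""
    # Names come from a roster the caller supplies; each gets a numbered tag.
    for i, name in enumerate(student_names, start=1):
        text = re.sub(re.escape(name), f"[STUDENT_{i}]", text, flags=re.IGNORECASE)
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    text = STUDENT_ID.sub("[ID]", text)
    return text


note = (
    "Maria Lopez (ID 20481537) missed the quiz; "
    "email her parent at lopez.family@example.com."
)
print(redact(note, ["Maria Lopez"]))
```

The point of the sketch is the workflow rather than the patterns: identifying details are stripped locally, and only the placeholder version is ever pasted into a prompt.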

For businesses and society, this serves as a crucial case study: Widespread enterprise AI deployment hinges not just on capability, but on ironclad data contracts that explicitly forbid the use of sensitive institutional data for model retraining.

The Real-World Payoff: Teacher Workload and Pedagogical Shift

Why would a teacher dedicate precious out-of-hours time to learn a new system? The answer lies in utility, as suggested by pilot programs and academic reviews concerning the impact of generative AI tools on teacher workload.

Teachers report spending staggering amounts of time on administrative tasks: drafting parent communications, creating differentiated quizzes for varied learning levels, and writing feedback on assignments. Research suggests that tools like Gemini can automate 30 to 40 percent of these preparatory tasks. When training demonstrates how Gemini can instantly draft three versions of an assignment tailored to 6th-, 8th-, and 10th-grade reading levels, the value proposition becomes irresistible.
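The three-reading-level workflow described above is, in practice, a templating exercise. The following Python sketch shows one way a teacher-facing tool might generate the three prompts. The `build_prompts` function and the prompt wording are hypothetical, and the sketch deliberately stops short of calling any AI service, since the article does not describe how Gemini is accessed.

```python
def build_prompts(assignment: str, grade_levels: list[int]) -> dict[int, str]:
    """Return one rewrite prompt per target reading level."""
    return {
        grade: (
            f"Rewrite the assignment below for a grade {grade} reading level. "
            "Keep the learning objective and the number of questions unchanged.\n\n"
            f"{assignment}"
        )
        for grade in grade_levels
    }


# Example assignment text is invented for illustration.
prompts = build_prompts(
    "Explain how photosynthesis converts light into chemical energy.",
    [6, 8, 10],
)
for grade, prompt in prompts.items():
    print(f"--- grade {grade} ---\n{prompt}\n")
```

Each prompt would then be pasted or sent to the assistant, with the teacher reviewing all three drafts before anything reaches students.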

This shifts the teacher's role from content generator to content curator and critical evaluator. The future teacher isn't replaced by the AI; they are augmented by it. They become experts in prompt engineering specific to pedagogical outcomes, focusing their energy on high-value human interactions like emotional support, complex ethical discussions, and individualized mentorship.

Actionable Insights for Stakeholders

This strategic move by Google has immediate ramifications across the technology and education sectors:

For Technology Vendors (Competitors):

The response must be swift. If you are a competing cloud or productivity provider, you must either offer a direct counter-incentive (e.g., free Copilot certification tracks) or rapidly deepen your product integration within existing education contracts to create switching costs.

For School Administrators (Buyers):

Do not treat this free training as a mandate, but as an opportunity for due diligence. Use the training period to pressure-test Gemini’s security protocols, draft specific data use agreements (DUAs) that clearly forbid student data reuse, and establish clear ethical guidelines for AI usage *before* mass deployment.

For Educators (Users):

Embrace the training with a critical eye. Treat Gemini not as an answer engine, but as a powerful, sophisticated co-pilot. Learn its strengths in synthesis and drafting, but rigorously audit its outputs for factual accuracy and cultural bias. Your expertise remains the essential filter.

The Broader Future Implication: AI as Infrastructure

Google’s initiative solidifies a critical trend in the deployment of frontier AI models: the move from consumer novelty to essential institutional infrastructure. We are witnessing the standardization of an AI layer across key societal sectors.

When infrastructure becomes standardized, the battle shifts from the core technology to the peripherals—the integrations, the specialized data layers, and the user experience specific to that domain (like lesson planning). By securing the educators, Google ensures that Gemini will be the AI infrastructure underpinning classroom operations for years to come, making the transition to future, more powerful models (Gemini 2.0, 3.0, etc.) seamless for millions of active users.

This isn't just about AI in schools; it’s about Google winning the next generation of digital literacy. If you learn to write using a specific word processor, you are likely to keep using that brand throughout your professional life. By ensuring 6 million teachers become fluent in Gemini’s language, Google is effectively writing the introductory chapter of the AI era for an entire generation of students.