The End of Lag: How OpenAI's Speed and Voice Upgrades Unlock True AI Agents

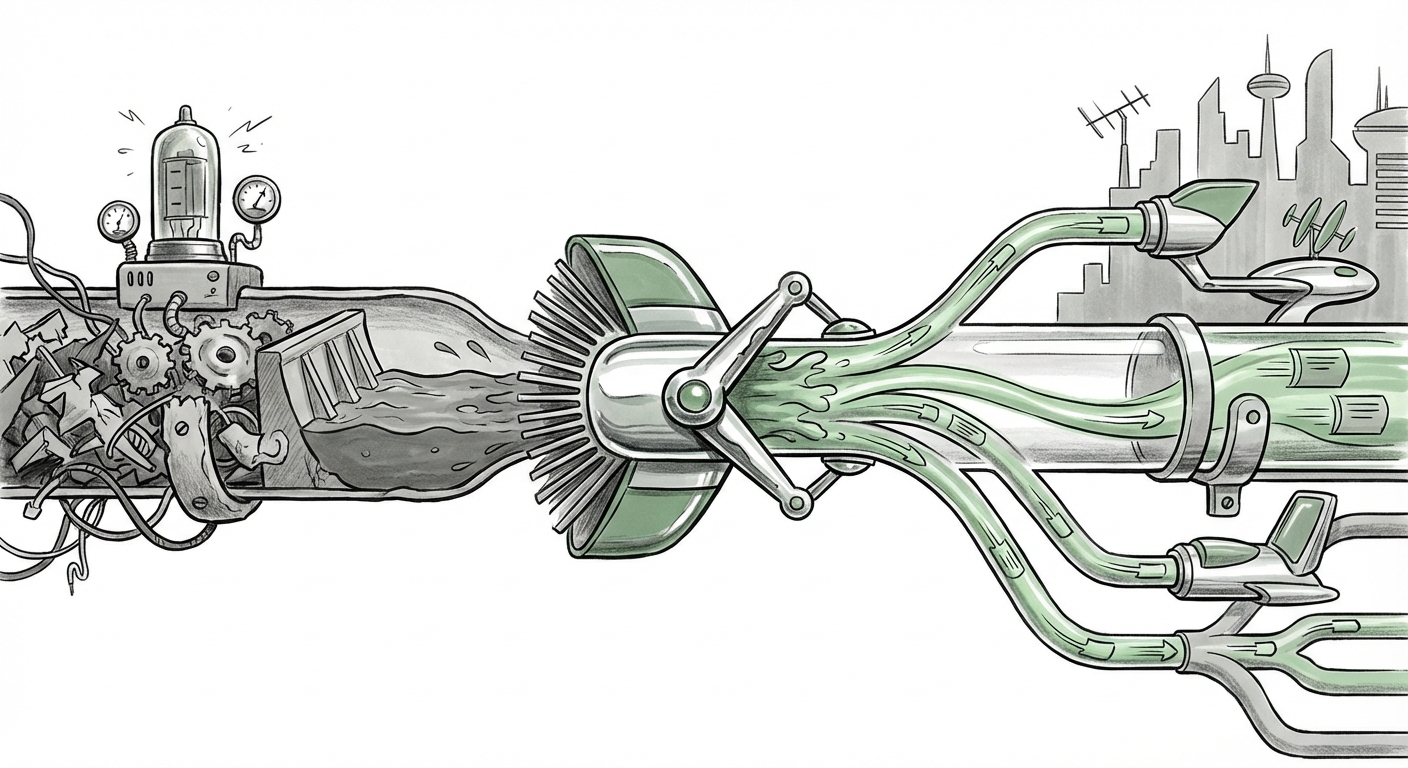

The evolution of Artificial Intelligence is rarely marked by single, revolutionary breakthroughs; more often it advances through incremental yet profoundly significant engineering victories. The recent API upgrades unveiled by OpenAI, sharply focused on reducing latency (delay) in voice interactions and dramatically increasing the operational speed of AI agents, represent one of these critical inflection points. These aren't mere feature tweaks; they are foundational shifts moving AI from a novelty tool to an essential, real-time partner in business and daily life.

As an analyst, I see this development not just as an improvement in response time, but as the key that unlocks the next generation of autonomous software. To understand the gravity of this release, we must examine the technical hurdles overcome, the market demand driving this change, and what this means for the infrastructure powering the digital world.

The Friction Point: Why Speed and Reliability Matter

For years, interacting with sophisticated AI models felt like talking to someone who pauses awkwardly before answering. This lag, even when it is measured in hundreds of milliseconds rather than seconds, breaks the illusion of a natural conversation. When an AI agent is tasked with managing a complex workflow, such as booking travel, diagnosing a software bug, or handling a sensitive customer complaint, that pause is where trust erodes and errors creep in.

The Voice Barrier: Moving Past the Talking Head

Voice interaction is the most intuitive way for humans to communicate, but it is notoriously complex for computers. Processing speech requires a multi-stage pipeline:

- Audio Capture and Noise Reduction: Cleaning up the sound input.

- Speech-to-Text (STT): Converting audio waves into written text, which is then split into tokens for the model.

- LLM Processing: The core AI model interprets the text and generates a response.

- Text-to-Speech (TTS): Converting the text response back into natural-sounding audio.

Each step adds delay, and in a naive pipeline those delays stack, because every stage waits for the previous one to finish. If the upgrade focuses on "voice reliability," it suggests OpenAI has optimized this entire chain, potentially allowing for streaming responses, in which audio output begins before the model has finished generating its full reply, mimicking human conversational pacing.
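To make that stacking concrete, here is a minimal Python sketch comparing time-to-first-audio for a strictly sequential pipeline and a streaming one. The `VoiceTurn` fields and every millisecond value are hypothetical, chosen only for illustration rather than measured against OpenAI's API, and the model assumes TTS can start speaking once a short opening phrase has been generated.

```python
from dataclasses import dataclass


@dataclass
class VoiceTurn:
    """Hypothetical timings for one conversational turn, in milliseconds."""
    capture_ms: int           # audio capture + noise reduction after the user stops
    stt_ms: int               # speech-to-text for the full utterance
    llm_first_token_ms: int   # delay before the model emits its first token
    llm_ms_per_token: int     # steady-state time per additional token
    reply_tokens: int         # length of the full reply
    first_phrase_tokens: int  # tokens needed before TTS can start speaking
    tts_first_chunk_ms: int   # TTS time to produce its first audio chunk
    tts_total_ms: int         # TTS time to synthesize the whole reply


def llm_ms(turn: VoiceTurn, n_tokens: int) -> int:
    """Time for the model to generate the first n_tokens of its reply."""
    return turn.llm_first_token_ms + (n_tokens - 1) * turn.llm_ms_per_token


def time_to_first_audio(turn: VoiceTurn, streaming: bool) -> int:
    """Silence the user hears between finishing speaking and the first audio."""
    front_end = turn.capture_ms + turn.stt_ms
    if streaming:
        # Start speaking once the opening phrase exists; generation continues.
        return front_end + llm_ms(turn, turn.first_phrase_tokens) + turn.tts_first_chunk_ms
    # Sequential: wait for the whole reply, then synthesize all of it.
    return front_end + llm_ms(turn, turn.reply_tokens) + turn.tts_total_ms


turn = VoiceTurn(capture_ms=50, stt_ms=300, llm_first_token_ms=250,
                 llm_ms_per_token=20, reply_tokens=120, first_phrase_tokens=12,
                 tts_first_chunk_ms=150, tts_total_ms=1200)
print(time_to_first_audio(turn, streaming=False))  # 4180
print(time_to_first_audio(turn, streaming=True))   # 970
```

Under these made-up numbers, streaming cuts the silence before the first word from about 4.2 seconds to under 1 second, even though the model and TTS do the same total work.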

This kind of real-time conversational reliability turns simple voice commands into true, flowing dialogue. For industries like customer service or telepresence, it means the difference between frustrating robotic calls and effective, human-like assistance.

The Agent Speed Metric: From Thoughts to Actions

Beyond voice, the focus on agent speed speaks directly to the rise of autonomous AI agents in business operations. An AI agent isn't just answering a single question; it’s performing a sequence of actions: checking a database, running code, making an external API call, and then summarizing the result. Because these steps usually run one after another, their delays add up, and if each step takes too long, the entire workflow grinds to a halt.
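A rough way to see why per-step speed matters: in a sequential agent loop, every step pays for a model call plus the tool it triggers, so latencies accumulate. The sketch below is a hypothetical Python model; the step names mirror the examples above, and all timings are invented for illustration.

```python
# Hypothetical workflow: each step = one model call that picks the action,
# followed by the tool (database query, code run, external API) it triggers.
STEPS = [
    ("check database", 120),
    ("run code", 400),
    ("call external API", 600),
    ("summarize result", 0),  # model-only step, no tool call
]


def workflow_ms(steps, model_call_ms):
    """Total wall-clock time when steps run strictly one after another."""
    return sum(model_call_ms + tool_ms for _name, tool_ms in steps)


slow = workflow_ms(STEPS, model_call_ms=3000)
fast = workflow_ms(STEPS, model_call_ms=1000)
print(slow, fast)  # 13120 5120
```

In this made-up example, cutting each model call from 3 seconds to 1 second shrinks the four-step workflow from about 13 seconds to about 5; because the model cost is paid once per step, the saving grows linearly with the number of steps an agent takes.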

When developers report faster API connections, they are signaling that the overhead (the time spent connecting, authenticating, and receiving the first data back from the server) has shrunk. This efficiency gain allows developers to build sophisticated, multi-layered agents that can execute complex tasks in seconds rather than minutes. This is the crucial step toward making AI agents viable replacements for tedious, repetitive digital labor.
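One common way client code cuts connection overhead is to reuse a persistent connection instead of opening a new one for every call. The Python sketch below models that trade-off; the function name, round-trip time, and setup cost are hypothetical illustration values, not figures reported for any OpenAI endpoint.

```python
def network_wait_ms(num_calls: int, rtt_ms: int, setup_round_trips: int,
                    reuse_connection: bool) -> int:
    """Round-trip time an agent spends on the network, ignoring server compute.

    Assumptions (hypothetical, for illustration):
    - every API call costs one round trip for the request/response itself;
    - opening a new connection costs `setup_round_trips` extra round trips
      (for example, a TCP handshake plus a TLS handshake).
    """
    connections_opened = 1 if reuse_connection else num_calls
    setup_time = connections_opened * setup_round_trips * rtt_ms
    request_time = num_calls * rtt_ms
    return setup_time + request_time


# 20 API calls in one agent run, 80 ms round trip, 2 round trips of setup.
print(network_wait_ms(20, 80, 2, reuse_connection=False))  # 4800
print(network_wait_ms(20, 80, 2, reuse_connection=True))   # 1760
```

In Python, clients such as `requests.Session` and `httpx.Client` keep connections open and reuse them across calls, which is the practical form of the `reuse_connection=True` case.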

The Engineering Undercurrent: Infrastructure as the New Frontier

These performance boosts are not conjured from thin air. They reveal intense optimization at the infrastructure level, a topic of active debate among engineers working on agent latency and LLM serving infrastructure.

Optimizing the Highway of Data

Serving large language models (LLMs) is computationally expensive. Making them faster requires optimizing hardware utilization and minimizing data movement. Key infrastructural areas being addressed likely include:

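- Inference Optimization: Techniques like model quantization (storing weights at lower numeric precision, such as 8-bit integers instead of 16-bit floats, to shrink memory use with little loss of accuracy) and speculative decoding (letting a small, fast draft model propose several tokens that the large model then verifies in a single pass) are likely being built into the core API serving layer.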

- Geographic Proximity: Faster connections often mean better placement of inference servers closer to end-users, reducing the physical distance data must travel.

- Batching Efficiency: Smart schedulers that group incoming requests onto GPUs keep expensive hardware from sitting idle, which raises throughput and shortens the time requests wait in a queue, lowering perceived latency for everyone.
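Quantization in its simplest form maps floating-point weights to 8-bit integers plus one scale factor. The plain-Python sketch below shows symmetric per-tensor int8 quantization to illustrate the idea only; production systems often use per-channel or group-wise schemes running in GPU kernels, and nothing here describes OpenAI's actual serving stack.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: each w is approximated as scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range [-127, 127].
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from int8 codes."""
    return [scale * v for v in q]


weights = [0.42, -1.27, 0.003, 0.9, -0.5]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q)                       # integer codes: 1 byte each instead of 2 (fp16) or 4 (fp32)
print(max_error <= scale / 2)  # True: rounding error stays within half a step
```

Note how the tiny weight 0.003 rounds to zero: that is the "little loss of accuracy" being traded for a model that is several times smaller in memory.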
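Speculative decoding pairs a small, fast draft model with the large target model: the draft proposes several tokens, the target checks them, and every token that matches is kept. Below is a toy, greedy-only Python sketch; `target` and `draft` are hypothetical stand-in functions rather than real models, and the verification loop simulates what a real server does in a single batched forward pass. The property it demonstrates is that the output is identical to ordinary greedy decoding with the target, produced in fewer target passes.

```python
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]  # a "model": sequence -> next token id


def greedy_decode(model: NextToken, prompt: List[int], n_new: int) -> List[int]:
    """Baseline: one target-model call per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(model(seq))
    return seq


def speculative_decode(target: NextToken, draft: NextToken, prompt: List[int],
                       n_new: int, k: int = 4) -> Tuple[List[int], int]:
    """Greedy speculative decoding. Returns (sequence, verification rounds)."""
    seq = list(prompt)
    goal = len(prompt) + n_new
    rounds = 0
    while len(seq) < goal:
        # 1. The cheap draft model guesses up to k tokens ahead.
        proposal: List[int] = []
        for _ in range(min(k, goal - len(seq))):
            proposal.append(draft(seq + proposal))
        # 2. The target checks each guess. A real server scores all k
        #    positions in ONE forward pass; this loop just simulates that.
        rounds += 1
        accepted: List[int] = []
        for guess in proposal:
            expected = target(seq + accepted)
            accepted.append(expected)  # always keep the target's own token
            if guess != expected:
                break  # first disagreement: discard the rest of the draft
        else:
            # Every guess matched, so the same pass yields one bonus token.
            if len(seq) + len(accepted) < goal:
                accepted.append(target(seq + accepted))
        seq.extend(accepted)
    return seq, rounds


def target(seq: List[int]) -> int:
    # Hypothetical stand-in for the large model.
    return (seq[-1] * 3 + len(seq)) % 10


def draft(seq: List[int]) -> int:
    # Hypothetical small model: agrees with target except at every third position.
    guess = target(seq)
    return guess if len(seq) % 3 else (guess + 1) % 10


out, rounds = speculative_decode(target, draft, prompt=[1], n_new=20)
print(out == greedy_decode(target, [1], 20))  # True
print(rounds)  # 7 target passes instead of 20
```

The speed-up depends entirely on how often the draft agrees with the target; a draft that is usually wrong gains nothing, which is why real deployments pick draft models trained to imitate the target.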

This push confirms a macro trend: the next competitive battleground in AI is not just model size (how many parameters) but model serving efficiency (how quickly the answer can be delivered). Companies that master inference at scale, such as those running advanced NVIDIA hardware or custom silicon, will hold a significant advantage.

Future Implications: The Age of Sub-Second Interaction

What happens when the delay disappears? The implications ripple across every digital sector.

1. The Death of the Menu System

Consider telephony. Today’s Interactive Voice Response (IVR) systems are infamous for making users navigate endless, brittle menus. With reliable, low-latency voice AI, the IVR system can be replaced entirely by an agent that understands context, recognizes frustration, and resolves the issue immediately. For a bank, this means instant fraud verification via voice; for a retail store, it means instantly locating inventory across multiple warehouses simply by asking.

2. Hyper-Personalized Education and Coaching

In education, personalized tutoring has always been hampered by the awkwardness of the back-and-forth. If a student asks a complex physics question and hears a relevant, nuanced answer almost instantly, the learning process accelerates dramatically. The AI tutor can now closely match the pace of the student, fostering deeper engagement.

3. Shifting Economic Models

As performance increases, the conversation inevitably turns to cost and to how API pricing relates to these performance gains. If an agent can complete three times the work in the same amount of time, any cost billed by time (reserved compute, monitoring, the staff supervising the workflow) is spread over three times as many tasks, so the marginal cost per task drops significantly. This efficiency gain can lead to two potential outcomes:

- Lower Prices: Developers can offer these high-speed services more cheaply, democratizing access.

- Increased Complexity: Developers keep the cost stable but use the speed to build far more powerful, complex agents that were previously economically infeasible.

The sweet spot is likely both: initial high-value applications justify current pricing, while long-term, high-volume usage benefits from efficiency savings.
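The cost arithmetic depends on which costs scale with time. Under per-token pricing, a faster model does not by itself reduce token spend; the saving comes from time-based costs being divided across more tasks. The Python sketch below makes that split explicit; the function and every dollar figure are hypothetical.

```python
def cost_per_task(token_cost, hourly_fixed_cost, tasks_per_hour):
    """Per-task cost = usage-priced tokens + time-based costs spread over throughput.

    token_cost: API spend per task (unchanged by speed under per-token pricing)
    hourly_fixed_cost: costs billed by the hour (reserved compute, monitoring, staff)
    tasks_per_hour: how many tasks the agent completes per hour
    """
    return token_cost + hourly_fixed_cost / tasks_per_hour


before = cost_per_task(token_cost=0.01, hourly_fixed_cost=30.0, tasks_per_hour=60)
after = cost_per_task(token_cost=0.01, hourly_fixed_cost=30.0, tasks_per_hour=180)
print(f"{before:.3f} -> {after:.3f}")  # 0.510 -> 0.177
```

With these invented figures, tripling throughput cuts the cost per task by roughly two thirds, but only because the time-based share dominates; a workload whose cost is almost entirely tokens would see little change.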

Actionable Insights for Stakeholders

For those building or investing in the AI ecosystem, these API upgrades provide clear directives:

For Business Leaders: Re-evaluate Use Cases

If your previous pilot projects involving voice or rapid agent sequences were shelved due to poor performance or frustration, now is the time to revisit them. Any application requiring genuine back-and-forth, especially customer-facing roles or internal process automation, may now be viable. The focus shifts from "Can we build it?" to "How quickly can we deploy the *best* version of it?"

For Developers: Embrace Synchronicity

Stop designing applications around the expectation of lag. Developers should now program on the assumption that the AI response *will* feel instantaneous. This means designing user interfaces that flow seamlessly from voice input to action output, treating the LLM less like a database query and more like a true co-pilot whose presence is felt but whose processing time is invisible.
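In practice, making processing time feel invisible usually means consuming the model's output as a stream and acting on each piece as it arrives. Here is a minimal asyncio sketch, assuming a hypothetical `fake_model_stream` in place of a real streaming client; real SDKs expose their own streaming interfaces, which are not shown here.

```python
import asyncio
from typing import AsyncIterator, Callable


async def fake_model_stream(reply: str, delay_s: float = 0.0) -> AsyncIterator[str]:
    """Hypothetical stand-in for a streaming model API: yields the reply word by word."""
    for word in reply.split():
        await asyncio.sleep(delay_s)  # simulate generation/network delay per chunk
        yield word + " "


async def render_incrementally(stream: AsyncIterator[str],
                               on_chunk: Callable[[str], None]) -> str:
    """Hand each chunk to the UI (or a TTS engine) the moment it arrives,
    instead of blocking until the full reply is ready."""
    parts = []
    async for chunk in stream:
        on_chunk(chunk)
        parts.append(chunk)
    return "".join(parts).rstrip()


shown = []
full = asyncio.run(render_incrementally(fake_model_stream("Your flight is booked"), shown.append))
print(shown)  # ['Your ', 'flight ', 'is ', 'booked ']
print(full)   # Your flight is booked
```

The design choice is that the consumer never waits for the whole reply: the user sees (or hears) the first word as soon as it exists, which is what hides the remaining generation time.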

For Infrastructure Analysts: Watch the Serving Layer

The competition for efficient inference hosting will only intensify. Tracking which cloud providers and specialized hardware solutions come up most often in LLM infrastructure optimization work will be key to predicting future leaders in model deployment, regardless of who wins the model architecture race.

Conclusion: The Threshold of Ubiquity

OpenAI’s commitment to perfecting speed and reliability in its API is more than a quarterly update; it is a clear signal that the era of laggy, frustrating AI interactions is rapidly ending. When an AI system communicates as fast and fluidly as a human peer, its utility grows dramatically. We are moving from a phase where users had to adapt their behavior to the machine, to one where the machine adapts seamlessly to human interaction patterns.

This technical polishing removes one of the last major barriers to widespread, trust-based adoption of autonomous AI agents. The next few years will not be about what AI *can* do, but about how fast and reliably it can do it for us, making the invisible infrastructure that supports sub-second AI response the true silent hero of the coming technological wave.