The Speed and Sound of Intelligence: Analyzing OpenAI's API Leap in Voice Reliability and Agent Performance

The recent rollout of API upgrades from OpenAI, targeting enhanced voice reliability and significantly improved agent speed, serves as a clear directional signal for the entire artificial intelligence industry. These are not minor feature tweaks; they represent a direct assault on the two biggest barriers preventing truly useful, real-time AI deployment: conversational clunkiness and sluggish workflow execution.

For developers and enterprise leaders alike, the takeaway is plain: the era of the slow, text-only, single-step prompt is ending. We are rapidly moving toward systems that can think, speak, and act almost instantaneously. For an analyst tracking these foundational shifts, it is crucial to contextualize these improvements against industry benchmarks and competitor movements to understand their true long-term implications.

The End of the Lag: Why Voice Reliability Matters Now

For years, AI voice interaction felt inherently unnatural. Whether it was the time gap between speaking and receiving a response (latency) or the uncanny, robotic tone of the output (reliability/naturalness), voice AI lagged far behind text chat interfaces. OpenAI’s focus on a new audio model suggests a deep dive into optimizing the entire voice pipeline—from speech recognition to final audio synthesis.

If an AI assistant takes too long to process speech, the user feels they are talking to a machine stuck in thought. If the voice output jitters, mispronounces words, or sounds too synthesized, trust erodes rapidly. The goal here is co-presence—the feeling that you are having a smooth, human-like conversation.
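To make the latency point concrete, here is a minimal sketch of a time-to-first-audio budget for a cascaded voice pipeline (speech recognition, then a language model, then speech synthesis). The stage names and millisecond figures are hypothetical placeholders, not measurements of any OpenAI model. The point is that in a cascaded design the user hears nothing until every stage has produced its first output, which is why optimizing the whole pipeline matters.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    first_output_ms: float  # hypothetical delay before this stage emits its first usable output


def time_to_first_audio(stages: list[Stage]) -> float:
    """In a cascaded pipeline each stage waits on the one before it,
    so the delays before the first audible sound add up."""
    return sum(stage.first_output_ms for stage in stages)


def within_budget(stages: list[Stage], budget_ms: float) -> bool:
    """True if the pipeline's time to first audio fits the latency target."""
    return time_to_first_audio(stages) <= budget_ms


# Hypothetical figures for illustration only.
pipeline = [
    Stage("speech_recognition", 300.0),
    Stage("language_model_first_token", 400.0),
    Stage("speech_synthesis_first_chunk", 200.0),
]

if __name__ == "__main__":
    print(f"time to first audio: {time_to_first_audio(pipeline):.0f} ms")
    print("within 500 ms target:", within_budget(pipeline, 500.0))
```

Shortening any single stage helps, but streaming partial output between stages or collapsing stages altogether shrinks the sum further; which approach a given audio model takes is not something this sketch establishes.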

Corroboration: The Multimodal Race

To understand the significance, we must look externally. If OpenAI sets a new standard for low audio latency, competitors must respond. Our research therefore began with a basic question: how does real-time audio latency compare across leading AI models in 2024? This benchmark scrutiny is vital. If other major players such as Google or Anthropic have already achieved near-zero latency, or have it on their near-term roadmaps, OpenAI's upgrade shows it is fighting hard to maintain parity; if they have not, OpenAI is leaping ahead in the race for multimodal supremacy. For AI engineers and product managers, this comparison dictates the foundational model choice for any application that relies on natural human interaction, such as in-car assistants, educational tutoring systems, or high-touch customer service.

(Note: The actual depth of this race, which we would verify through current industry reports, determines whether this OpenAI launch is an industry-defining moment or a necessary catch-up.)
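Benchmark comparisons like this are usually reported as latency percentiles rather than averages, because the occasional slow response (the tail) is what users notice in conversation. A minimal sketch using Python's standard `statistics` module follows; the model labels and timing samples are invented placeholders, not real measurements of any provider.

```python
import statistics


def latency_summary(samples_ms: list[float]) -> dict[str, float]:
    """Return median (p50) and 95th-percentile (p95) latency for one model."""
    # quantiles(n=100) returns the 99 cut points p1..p99; index 49 is p50, index 94 is p95.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94]}


# Hypothetical time-to-first-audio samples (ms) for two unnamed models.
measurements = {
    "model_a": [310, 295, 330, 305, 900, 315, 300, 320, 298, 312],
    "model_b": [450, 440, 460, 455, 470, 445, 452, 448, 465, 458],
}

if __name__ == "__main__":
    for model, samples in measurements.items():
        summary = latency_summary(samples)
        print(f"{model}: p50={summary['p50']:.0f} ms, p95={summary['p95']:.0f} ms")
```

In these made-up numbers, `model_a` has the lower median but the worse tail, exactly the kind of trade-off an average would hide.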

The Agent Economy: Speed Unlocks Complexity

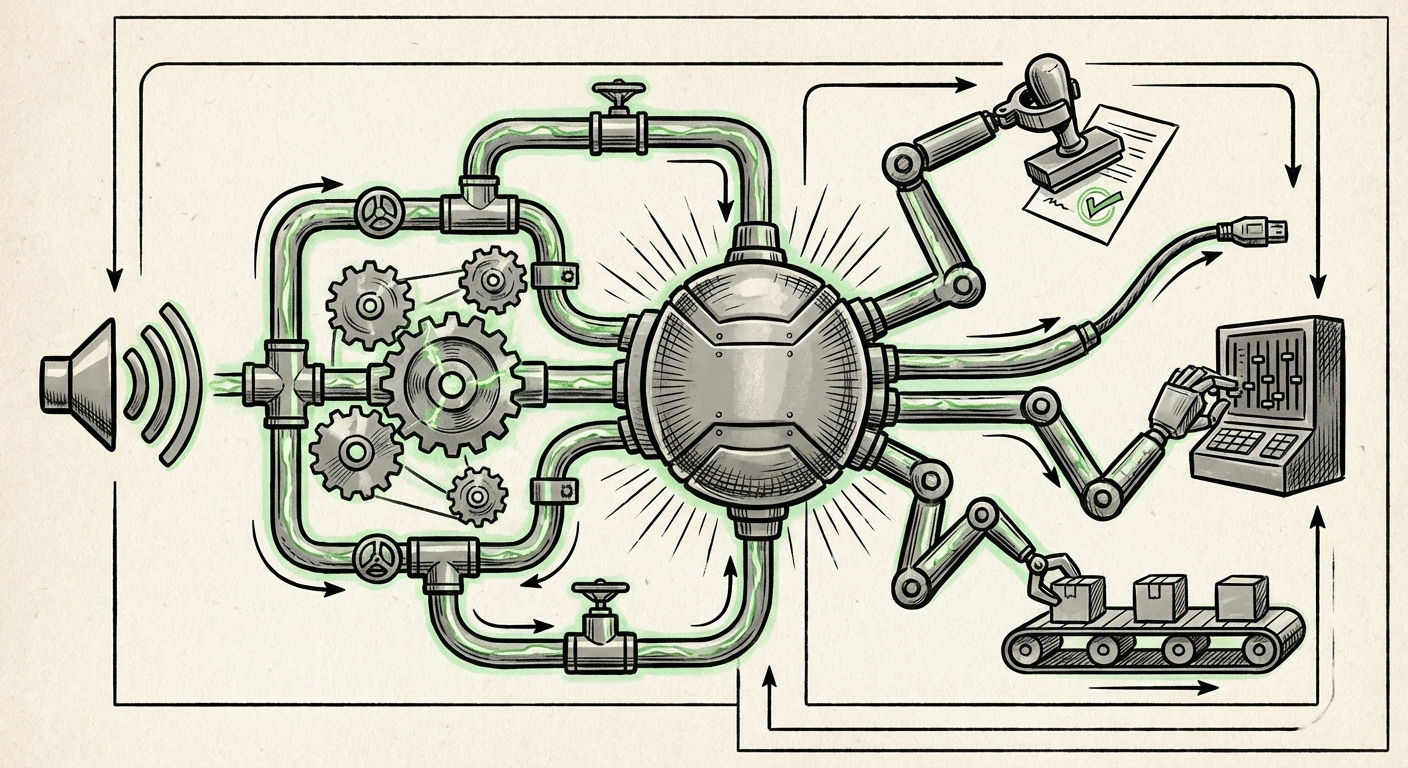

Perhaps the most transformative aspect of these upgrades is the boost to agent speed. An AI agent is not just a chatbot; it’s a system designed to perform multi-step tasks autonomously—booking travel, debugging codebases, managing inventory, or running complex data analysis chains. These processes require the AI to think, decide on a tool (like running code or searching the web), execute that tool, analyze the result, and then decide the next step. Each step adds latency.

When an agent is slow, complex tasks become impractical. A five-minute wait for a routine financial reconciliation is unacceptable in a business setting, and a 10-second delay in an interactive programming session breaks the developer's flow state. By improving the speed of the underlying API, OpenAI directly reduces the time-to-completion for these sequential reasoning tasks.
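Because each step of an agent loop waits on the previous one, per-call latency compounds. The sketch below models a sequential agent run as the sum of model time and tool time for each step. The step count and millisecond figures are hypothetical, chosen only to show how a faster model call shortens the whole task even when tool execution time stays the same.

```python
from dataclasses import dataclass


@dataclass
class Step:
    model_ms: float  # time for the model to reason and choose the next action (hypothetical)
    tool_ms: float   # time to execute the chosen tool, e.g. a search or code run (hypothetical)


def completion_time_ms(steps: list[Step]) -> float:
    """Steps run strictly in sequence, so total time is the sum of every model call and tool call."""
    return sum(step.model_ms + step.tool_ms for step in steps)


def with_faster_model(steps: list[Step], speedup: float) -> list[Step]:
    """Apply a speedup factor to model time only; tool time is outside the model provider's control."""
    return [Step(step.model_ms / speedup, step.tool_ms) for step in steps]


# A hypothetical 8-step task: 2 s of model time and 0.5 s of tool time per step.
task = [Step(model_ms=2000.0, tool_ms=500.0) for _ in range(8)]

if __name__ == "__main__":
    baseline = completion_time_ms(task)                        # 8 * 2500 = 20000 ms
    faster = completion_time_ms(with_faster_model(task, 2.0))  # 8 * 1500 = 12000 ms
    print(f"baseline: {baseline / 1000:.1f} s, 2x faster model: {faster / 1000:.1f} s")
```

Doubling model speed here cuts the task from 20 s to 12 s rather than to 10 s, because tool time does not shrink. End-to-end gains therefore depend on where the latency actually sits in a given workflow.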

The Business Utility of Velocity

This velocity improvement is what fuels enterprise adoption of autonomous AI agents. Business strategists are looking for solutions that deliver immediate ROI by replacing tedious, multi-step human workflows. If the new API stack allows agents to complete complex financial audits in minutes instead of hours, the business case becomes compelling. This isn't about making simple tasks faster; it's about making previously impractical, complex tasks commercially viable.

For the consumer, this translates into agents that feel proactive rather than reactive. Imagine a personal assistant that can monitor your calendar, spot a conflict, check flight prices, book the necessary changes, and confirm the revised schedule—all within the span of a single, short phone call. That requires speed.

Under the Hood: Infrastructure and Operational Efficiency

API optimization isn't just about user experience; it reflects a fundamental shift in how AI models are served and scaled. Faster API responses typically come from optimizing the inference engine, the serving stack that actually runs the model, rather than from the network connection alone.

Cloud Trends and Economic Impact

This focus on efficiency feeds directly into the broader conversation about LLM serving costs and cloud computing trends. Every millisecond saved on inference means less time occupying expensive, high-powered GPUs. For OpenAI, this means serving more requests with the same hardware, improving both margins and capacity.
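The link between inference time and serving cost can be shown with simple arithmetic. This sketch assumes a GPU billed at a flat hourly rate that handles a fixed number of requests concurrently; the price, concurrency, and latency values are hypothetical and are not OpenAI's actual figures.

```python
def cost_per_request(gpu_hourly_usd: float, concurrency: int, seconds_per_request: float) -> float:
    """Cost of one request on a GPU billed by the hour.

    Throughput (requests/second) = concurrency / seconds_per_request,
    so cost per request = hourly price / (3600 * throughput).
    """
    requests_per_second = concurrency / seconds_per_request
    return gpu_hourly_usd / (3600 * requests_per_second)


if __name__ == "__main__":
    # Hypothetical: a $4/hour GPU serving 16 requests at once.
    before = cost_per_request(gpu_hourly_usd=4.0, concurrency=16, seconds_per_request=2.0)
    after = cost_per_request(gpu_hourly_usd=4.0, concurrency=16, seconds_per_request=1.5)
    print(f"before: ${before:.6f}/request, after: ${after:.6f}/request")
    print(f"requests per GPU-hour: {3600 * 16 / 2.0:.0f} -> {3600 * 16 / 1.5:.0f}")
```

Under these assumptions, cost per request scales linearly with time per request: cutting inference time by a quarter (2.0 s to 1.5 s) cuts cost per request by a quarter on the same hardware.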

For cloud architects and venture capitalists tracking the AI investment landscape, this efficiency is critical. It suggests a maturing infrastructure layer where the focus shifts from simply having the largest model to having the fastest and most cost-effective model at scale. If OpenAI can deliver GPT-4-quality output at GPT-3.5-level speeds (or better), it dramatically lowers the barrier to entry for developers building massive-scale applications.

This infrastructural maturity signals a move away from research novelty toward sustainable product integration.

Trust and Transaction: The Reliability Paradox

Speed is useless if the output is unreliable. In the context of agents, reliability often means successful **tool use**—the model knowing *when* and *how* to call an external function (like accessing a database or sending an email) and correctly interpreting the returned data.

Most developers who have built agents know the frustration of one that fails to call a tool correctly or misinterprets a function's output. Preventing this requires rigorous testing.
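One common defensive pattern is to validate a model's proposed tool call before executing it, so a malformed call is caught and can be retried rather than silently misbehaving. The sketch assumes the model emits a JSON object with `name` and `arguments` fields; the tool registry, tool names, and parameter types are hypothetical and not tied to any specific vendor API.

```python
import json

# Hypothetical tool registry: tool name -> required parameter names and expected Python types.
TOOLS: dict[str, dict[str, type]] = {
    "search_flights": {"origin": str, "destination": str, "date": str},
    "send_email": {"to": str, "subject": str, "body": str},
}


def validate_tool_call(raw: str) -> tuple[bool, str]:
    """Check that a model-proposed call names a known tool and supplies its required, correctly typed arguments."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, f"invalid JSON: {exc.msg}"
    if not isinstance(call, dict):
        return False, "tool call must be a JSON object"
    name = call.get("name")
    if not isinstance(name, str) or name not in TOOLS:
        return False, f"unknown tool: {name!r}"
    args = call.get("arguments")
    if not isinstance(args, dict):
        return False, "arguments must be an object"
    for param, expected in TOOLS[name].items():
        if param not in args:
            return False, f"missing argument: {param}"
        if not isinstance(args[param], expected):
            return False, f"argument {param} should be {expected.__name__}"
    return True, "ok"


good = '{"name": "search_flights", "arguments": {"origin": "SFO", "destination": "JFK", "date": "2024-06-01"}}'
bad = '{"name": "search_flights", "arguments": {"origin": "SFO"}}'

if __name__ == "__main__":
    print(validate_tool_call(good))
    print(validate_tool_call(bad))
```

The returned message can be fed back to the model as an error observation, giving it a chance to correct the call instead of acting on bad input.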

Validating the Claims: Developer Sentiment

Our analysis must therefore include tool-use reliability benchmarks run before and after model updates. Technical consultants and hands-on developers rely on independent test suites to confirm that backend speed boosts haven't introduced new reasoning errors or destabilized multi-step reasoning chains. If independent testing confirms that agent reliability has held steady or improved alongside speed, then OpenAI has solved a fundamental engineering challenge: optimizing performance without sacrificing grounded accuracy.
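A simple version of that check runs the same fixed suite of tool-use tasks against the model before and after an update and flags any drop in success rate beyond a tolerance. The task names, pass/fail results, and 2-percentage-point tolerance below are hypothetical; a real suite would be far larger.

```python
def success_rate(results: dict[str, bool]) -> float:
    """Fraction of tasks in the suite that the agent completed correctly."""
    return sum(results.values()) / len(results)


def regressed_tasks(before: dict[str, bool], after: dict[str, bool]) -> list[str]:
    """Tasks that passed before the update but fail after it."""
    return sorted(task for task, passed in before.items() if passed and not after.get(task, False))


def check_update(before: dict[str, bool], after: dict[str, bool], tolerance: float = 0.02) -> bool:
    """Accept the update only if the success rate did not fall by more than `tolerance`."""
    return success_rate(after) >= success_rate(before) - tolerance


# Hypothetical results for the same four tool-use tasks on the old and new model versions.
before = {"lookup_order": True, "refund": True, "reschedule": False, "db_query": True}
after = {"lookup_order": True, "refund": False, "reschedule": True, "db_query": True}

if __name__ == "__main__":
    print(f"success: {success_rate(before):.0%} -> {success_rate(after):.0%}")
    print("regressions:", regressed_tasks(before, after))
    print("update accepted:", check_update(before, after))
```

Here the aggregate rate is unchanged at 75%, yet `refund` broke. That is why per-task diffs are worth reporting alongside the headline number.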

This validation builds the necessary trust for developers to move beyond simple experimental agents into mission-critical enterprise deployment.

Practical Implications: What Businesses Must Do Now

These API upgrades are a call to action for businesses currently experimenting with AI. The technological friction that justified a 'wait-and-see' approach is rapidly dissolving. Here are actionable insights:

- Audit Existing Voice Channels: If your customer service or internal help desk still relies on clunky, slow voice bots, prioritize testing the new voice reliability features now. Truly low-latency conversational AI can markedly improve customer experience metrics.

- Accelerate Agent Framework Implementation: Review your current workflow automation plans. If complexity was a blocker due to perceived execution time, those roadblocks are being removed. Focus development efforts on end-to-end agent workflows rather than chained simple API calls.

- Re-evaluate Cost Models: With improved serving efficiency, the cost per token or per interaction for high-volume tasks is likely to fall, or at least hold steady, while performance improves. Base your ROI projections on the faster throughput.

- Shift Focus to Agent Guardrails: With speed largely addressed, the next critical engineering challenge becomes control. Developers must invest heavily in robust safety layers, grounding checks, and definitive exit conditions for autonomous agents. Speed demands rigor in governance.
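The guardrail advice can be made concrete with a minimal agent loop that enforces definitive exit conditions: a step budget, a wall-clock deadline, and an allowlist of tools. The policy callable and the `(tool_name, argument)` action format are hypothetical stand-ins for a model call; this is a sketch of the control structure under those assumptions, not a production framework.

```python
import time
from typing import Callable

# Hypothetical action format: (tool_name, argument); tool_name "finish" ends the run.
Action = tuple[str, str]


def run_agent(
    decide_next_action: Callable[[list[str]], Action],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 10,
    deadline_s: float = 30.0,
) -> tuple[str, list[str]]:
    """Run an agent loop that always terminates, returning (exit_reason, observation history)."""
    history: list[str] = []
    start = time.monotonic()
    for _ in range(max_steps):
        if time.monotonic() - start > deadline_s:
            return "deadline_exceeded", history
        tool_name, arg = decide_next_action(history)
        if tool_name == "finish":
            return "finished", history
        if tool_name not in tools:
            # Refuse anything outside the allowlist instead of improvising.
            return f"blocked_tool:{tool_name}", history
        history.append(tools[tool_name](arg))
    return "step_budget_exhausted", history


def stubborn_policy(history: list[str]) -> Action:
    """Hypothetical policy that never finishes, used to show the step budget stopping it."""
    return ("echo", f"step {len(history)}")


if __name__ == "__main__":
    reason, observations = run_agent(stubborn_policy, {"echo": lambda s: s}, max_steps=3)
    print(reason, observations)
```

Each exit path returns an explicit reason, so monitoring can distinguish a completed task from one that was cut off by a budget or blocked by the allowlist.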

The Future is Fluent and Fast

What OpenAI has unveiled in these API upgrades is more than a software update; it marks the next phase of generative AI adoption. We are moving past the era of AI as a novelty generator and into the age of AI as a truly responsive digital partner.

The integration of seamless, reliable voice communication coupled with the acceleration of complex agentic reasoning means AI is breaking out of the screen interface. It is becoming ambient—available instantly through voice, and capable of executing complex business logic behind the scenes at speeds that mimic real-time human reaction.

For the technology landscape, this tightening of the feedback loop—faster thinking, faster talking, faster doing—will compress the timeline for innovation. The competitive advantage will no longer belong only to those who have the best underlying model, but to those who can deploy that model with the lowest latency and highest reliability in the real world. The groundwork for truly autonomous enterprise systems is being poured, and it requires both impeccable sound and breakneck speed.