The Latency Leap: Why OpenAI's Focus on Speed and Voice Signals the Dawn of Real-Time AI Agents

For years, generative AI was defined by the blinking cursor and the text box. We typed a prompt, waited a few seconds, and received a perfectly crafted paragraph or piece of code. This was powerful, but fundamentally asynchronous—it was a correspondence, not a conversation.

The recent flurry of API upgrades from OpenAI, specifically targeting breakthroughs in voice reliability and agent speed, signals that the industry has reached an inflection point. We are moving beyond static text generation into the era of dynamic, real-time, and embodied interaction. This is the transition from AI as a clever tool to AI as a true, immediate partner.

The Latency Bottleneck: Why Speed Matters More Than Ever

Imagine trying to hold a real conversation with someone who pauses for five seconds after every single word they say. It’s maddening. That frustrating delay, known as latency, has been the Achilles' heel of conversational AI. For interaction to feel human, response time has to approach the natural gap between turns in a spoken conversation; a commonly cited target is under about 200 milliseconds (ms).
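To make that target concrete, here is a minimal sketch of how a team might check measured response times against a 200 ms budget. The helper names (`measure_latency_ms`, `summarize`) and the nearest-rank p95 calculation are illustrative assumptions, not part of any OpenAI API; `call` stands in for whatever request your application actually makes.

```python
import math
import statistics
import time

TARGET_MS = 200  # conversational target discussed above (an assumed budget)


def measure_latency_ms(call, runs=20):
    """Time `call` repeatedly and return per-run latencies in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()  # hypothetical: replace with a real request to your service
        samples.append((time.perf_counter() - start) * 1000)
    return samples


def summarize(samples, target_ms=TARGET_MS):
    """Report median and nearest-rank p95, and whether p95 meets the target."""
    ordered = sorted(samples)
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    p95 = ordered[p95_index]
    return {
        "p50_ms": statistics.median(ordered),
        "p95_ms": p95,
        "within_target": p95 <= target_ms,
    }


if __name__ == "__main__":
    # Stand-in workload; a real audit would call the voice or chat endpoint.
    print(summarize(measure_latency_ms(lambda: time.sleep(0.01), runs=5)))
```

Judging by the tail (p95) rather than the average is a deliberate choice here: one slow reply in twenty is enough to break the feeling of a conversation.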

When OpenAI prioritizes API speed, they are tackling this core engineering challenge. This isn't just about making chatbots feel snappier; it unlocks entirely new use cases. The pursuit of near-zero latency is a critical industry trend, as developers search for ways to make complex models respond without perceptible delay. Work on LLM inference optimization points the same way: computational efficiency is key to scaling these real-time capabilities.

Implications of Low Latency: The Rise of the Digital Co-Pilot

For the business user or the developer building a new application, reduced latency translates directly into trust and usability:

- Natural Voice Interfaces: Customer service bots, in-car assistants, and real-time translation services become seamless. You can interrupt, clarify, and react naturally, just as you would with a human operator.

- Instantaneous Feedback Loops: In fields like coding or creative design, the AI can offer suggestions or correct errors *as the user is working*, effectively acting as an omnipresent teaching assistant.

- Reduced Cognitive Load: When responses are fast, users don't have to hold the context in their short-term memory while waiting; the interaction flows uninterrupted, making the technology feel less like a machine and more like an extension of thought.

The Agentic Evolution: From Answering to Doing

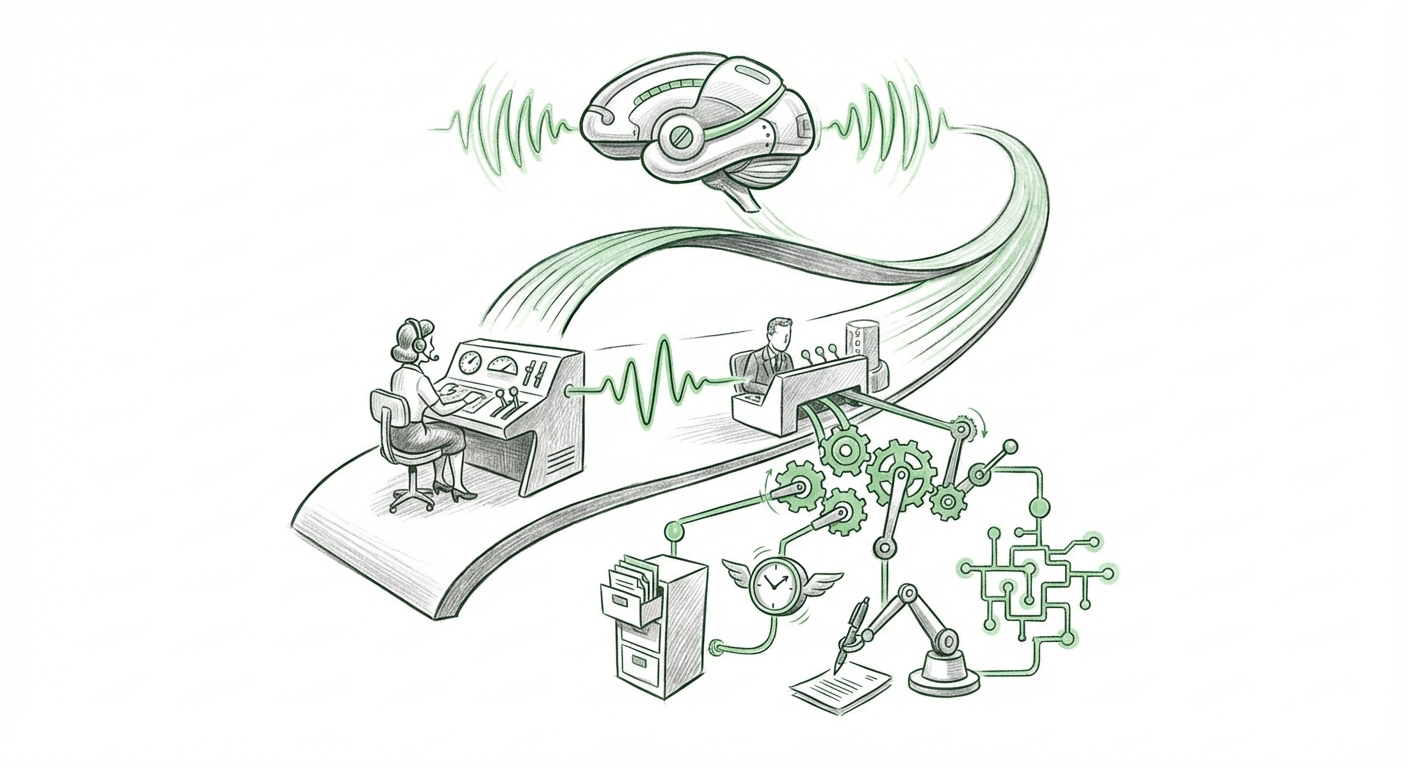

The second pillar of these upgrades—enhanced agent speed—is perhaps the most disruptive. An "AI Agent" is an AI system designed to take a high-level goal ("Book me a vacation to Rome in October") and break it down into smaller, manageable steps (check flight prices, compare hotel reviews, find itinerary logistics), executing those steps autonomously.

Speed is vital for agents because their workflows often involve a chain of reasoning, tool use (like browsing the web or running code), and error correction. Delays compound across that chain: if each step takes too long, the entire workflow collapses under the weight of time, making the agent impractical for business use. Faster responses at each step mean the agent can cycle through its planning and execution loop more quickly.
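A rough back-of-the-envelope model shows how per-step delay adds up over a sequential agent loop. This is a simplified sketch under stated assumptions: every step makes one model call and one tool call, steps run strictly in sequence, and a fixed fraction of steps is retried once. The function name and all numbers are hypothetical, chosen only for illustration.

```python
def workflow_seconds(steps, model_s, tool_s, retry_rate=0.0):
    """Estimate wall-clock time for a strictly sequential agent loop.

    steps      -- number of plan/act steps in the workflow
    model_s    -- assumed seconds per model call
    tool_s     -- assumed seconds per tool call (search, code run, etc.)
    retry_rate -- assumed fraction of steps that must be run a second time
    """
    expected_attempts = steps * (1 + retry_rate)
    return expected_attempts * (model_s + tool_s)


if __name__ == "__main__":
    # Same 10-step task, same tools; only the model call time differs.
    slow = workflow_seconds(steps=10, model_s=3.0, tool_s=1.0, retry_rate=0.2)
    fast = workflow_seconds(steps=10, model_s=0.5, tool_s=1.0, retry_rate=0.2)
    print(f"slow model: {slow:.0f} s, fast model: {fast:.0f} s")
```

With these made-up inputs the slower model turns a ten-step task into most of a minute of waiting, while the faster one finishes in well under half that time, even though the tool calls are unchanged.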

This focus confirms that the industry’s goal is not just building better models, but building better *workers*. As AI agent frameworks mature, the value proposition shifts from content creation to task automation, and the foundation for true workflow automation is being laid.

What This Means for Enterprise Strategy

For CTOs and Product Managers, the message is clear: begin auditing current processes that require sequential decision-making. These agents will soon be capable of handling complex, multi-step tasks:

- Sales Operations: An agent could monitor lead qualification data, automatically draft personalized follow-up emails based on scoring, and schedule introductory calls across multiple time zones.

- Software Development: Agents can manage minor bug fixes, create documentation drafts from completed code blocks, and manage version control updates, freeing senior developers for architectural work.

- Financial Auditing: Agents can sift through large datasets, flag discrepancies based on programmed rules, and generate preliminary compliance reports faster than human teams.

The Multimodal Convergence: Beyond Text and Speech

Voice reliability and agent speed are critical components in the broader push toward true multimodality. Multimodality means an AI can seamlessly process and generate information across different forms—text, voice, images, and video—at the same time.

When voice becomes fast and reliable, it integrates perfectly with the visual understanding that models already possess. This creates rich, contextual interactions. For example, you could hold up your phone, ask a question about an object in your field of view (voice command), and the AI could process the visual data, generate an answer, and speak it back instantly.

As leading analysts suggest, the future of generative AI is deeply multimodal. OpenAI’s move ensures that the *interaction layer*—the part humans touch—is as sophisticated as the underlying reasoning engine. This is vital for creating intuitive user experiences, especially in augmented reality (AR) and immersive training simulations.

The Competitive Heat: Raising the Barrier to Entry

These API enhancements are not happening in isolation. They represent a direct response to, and often a proactive move within, a fierce competitive race. As platforms like Google’s Gemini and Anthropic’s Claude continue to push their own capabilities, API performance becomes a key differentiator for attracting the vast developer ecosystem.

When we look at comparisons between major players, factors like audio quality, response time, and API stability often decide which foundational model developers choose. Published comparisons regularly benchmark these metrics, and industry-leading voice capabilities help OpenAI maintain its developer mindshare.

This competitive pressure forces rapid innovation, benefiting the end-user immensely. For developers, this rapid iteration means that the tools available today are markedly more capable than those available just six months ago. The message is clear: the platform you choose must be capable of supporting real-time, autonomous action, or you risk being left behind.

Actionable Insights: Preparing for the Real-Time Revolution

What should businesses and technologists do now that AI is shedding its textual shackles and moving toward dynamic interaction?

For Technical Teams (Engineers & Architects):

1. Audit Latency in Current Pipelines: Identify any existing AI workflows where response time is currently masked by buffering or manual delays. Begin prototyping with the new, faster APIs to understand the true limits of real-time agent execution in your specific application context.

2. Embrace Agentic Design Patterns: Shift focus from prompt engineering (getting one good answer) to flow engineering (designing robust, self-correcting multi-step agent loops). Understand tool integration and error handling deeply.
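As one way to picture "flow engineering," the sketch below runs a sequence of steps, validates each result, and retries a failed step a bounded number of times before giving up. Everything here is an assumption made for illustration: the `Step` class, the `run_flow` function, and the idea that each step is a plain Python callable. Real agent frameworks structure this differently, and the callables would wrap actual model or tool calls.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Step:
    name: str
    run: Callable[[dict], Any]       # hypothetical tool/model call; receives shared state
    validate: Callable[[Any], bool]  # returns True when the result is acceptable


def run_flow(steps, max_attempts=3):
    """Run steps in order, retrying any step whose result fails validation."""
    state = {}  # results of completed steps, keyed by step name
    log = []    # (step name, attempt number, succeeded) for later auditing
    for step in steps:
        for attempt in range(1, max_attempts + 1):
            try:
                result = step.run(state)
                ok = step.validate(result)
            except Exception as exc:  # treat any error as a failed attempt
                result, ok = exc, False
            log.append((step.name, attempt, ok))
            if ok:
                state[step.name] = result
                break
        else:
            # No attempt succeeded: stop rather than build on a bad result.
            raise RuntimeError(
                f"step {step.name!r} failed after {max_attempts} attempts"
            )
    return state, log


if __name__ == "__main__":
    flow = [
        Step("fetch", lambda s: [3, 1, 2], lambda r: len(r) > 0),
        Step("sort", lambda s: sorted(s["fetch"]), lambda r: r == sorted(r)),
    ]
    print(run_flow(flow))
```

The attempt log matters as much as the retry itself: it shows which steps fail most often, which is where both reliability work and latency work tend to pay off.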

For Business Leaders (Strategy & Product):

1. Identify High-Friction, Sequential Tasks: Map out internal or external processes that require humans to manually coordinate several digital steps (e.g., onboarding, complex customer support tiers). These are prime targets for immediate agent deployment.

2. Invest in Voice UX Training: If you plan to leverage superior voice reliability, train your teams on designing for conversation, not just command-and-response. The naturalness of the interaction will define user adoption.

Conclusion: The Shift from Information Retrieval to Embodied Intelligence

The recent API upgrades are more than just feature releases; they are strategic milestones. By aggressively tackling voice reliability and core inference speed, OpenAI is signaling the end of the "waiting game" era in generative AI. The future belongs to systems that can perceive, reason, and act—all in real time, and often through the most natural interface available: our voice.

This move towards embodied interaction—where AI feels present and immediately responsive—will redefine software itself. We are rapidly approaching a time when AI isn't just something we use on a screen, but a dynamic collaborator that operates instantly alongside us, making complex workflows feel simple, and turning conversation into decisive action.