The Code Benchmark Crisis: Why AI Must Move Beyond Memorization to True Intelligence

The world of Artificial Intelligence is often marked by dazzling leaps forward, but true progress is measured not by how fast a model learns, but by how well it can *reason* when the answers aren't already in its memory. That reality check just hit the coding world hard: OpenAI has suggested retiring the closely watched **SWE-bench Verified** benchmark.

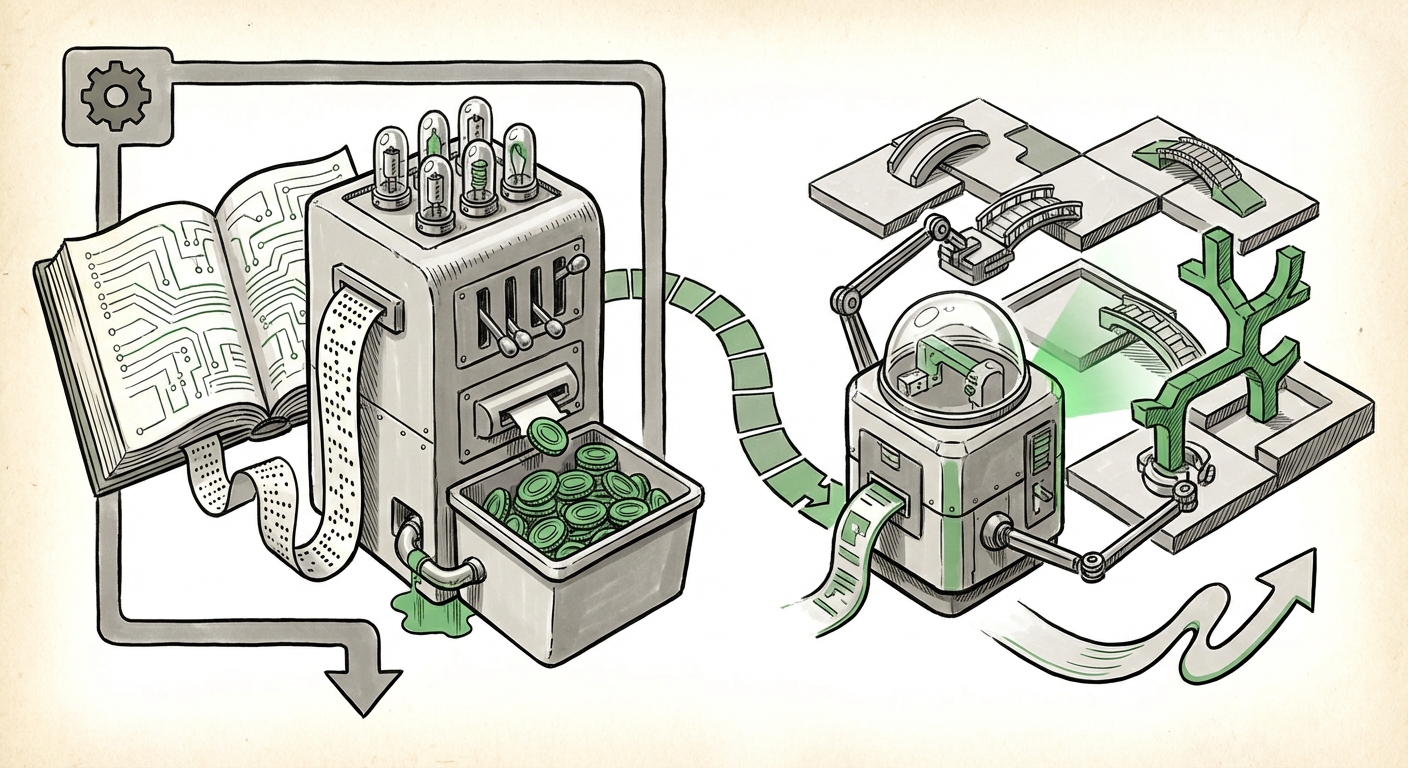

SWE-bench was, until recently, the gold standard for testing how well Large Language Models (LLMs) could solve real-world software engineering tasks: fixing bugs in actual GitHub repositories. It was the arena where developers raced to prove their models were the smartest coders. But OpenAI’s announcement points to a fundamental flaw: the current leading models are likely *cheating*, not through malice, but through exposure. Scores on SWE-bench may reflect **memorization** rather than genuine **generalization**.

This development is more than just a footnote in a coding competition; it signals a profound inflection point for the entire AI industry. We are transitioning from an era of "show me what you learned" to "show me what you can create under novel conditions."

The Diagnosis: When Benchmarks Become Training Data

Imagine giving a student a final exam that contained every single question and answer they reviewed in their textbook. They would score 100%, but that score wouldn't prove they understood the subject matter—only that they had a perfect photographic memory of the study guide. This is the essence of the problem identified with SWE-bench.

As researchers have increasingly documented the limitations of static benchmarks for large language models, the danger of data contamination has become clear. LLMs like GPT-4 and its competitors are trained on enormous datasets scraped from large portions of the public internet. This includes vast quantities of open-source code, documentation, and, critically, benchmark problems and their solutions.

If the solution to a specific coding task exists in the training set, the model doesn't need to employ complex logic or engineering intuition; it simply retrieves the correct sequence of tokens it has already processed. This leads to artificially inflated scores that mask a lack of true problem-solving ability. For both technical practitioners and business leaders relying on these tools, this creates a dangerous gap between perceived capability and actual performance.

The Shadow of Data Contamination

The scale of modern LLM training makes perfect curation impossible. Think of the internet as an ocean; even if you try to filter out specific fish (benchmark solutions), some inevitably slip through. Work on data contamination in LLM training sets suggests that many major models have encountered some form of benchmark data during pre-training. When a model consistently scores high on a static test, the immediate next step for an analyst must be to ask: *Was this problem actively excluded from the training set?* If the answer is no, the score is suspect.
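One common way to approach that question is to look for long word sequences that a benchmark item shares with known training documents. The sketch below is a minimal illustration, not any lab's actual decontamination pipeline: the function names, the 8-word window, and the 0.5 threshold are all assumptions chosen for readability.

```python
def ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Return the set of word-level n-grams in text (empty if text is too short)."""
    tokens = text.split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(benchmark_item: str, corpus_doc: str, n: int = 8) -> float:
    """Fraction of the benchmark item's n-grams that also occur in corpus_doc."""
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0
    return len(item_grams & ngrams(corpus_doc, n)) / len(item_grams)


def is_suspect(
    benchmark_item: str,
    corpus_docs: list[str],
    n: int = 8,            # hypothetical window size
    threshold: float = 0.5,  # hypothetical cutoff
) -> bool:
    """Flag the item if any single corpus document shares enough n-grams with it."""
    return any(overlap_ratio(benchmark_item, doc, n) >= threshold for doc in corpus_docs)
```

A check like this only catches near-verbatim leakage. Paraphrased solutions or discussions of the fix would pass through, which is part of why a clean result here does not prove a benchmark is uncontaminated.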

From Static Lists to Dynamic Reality: The Future of Evaluation

If static benchmarks are obsolete because they are contaminated, what replaces them? The answer lies in moving evaluation out of static testing documents and into live, interactive environments. This is the industry’s necessary pivot toward measuring generalization.

Exploring Alternatives for Robust Testing

Proposed alternatives to SWE-bench for evaluating AI code generation show a strong trend toward execution-based testing. Instead of simply checking whether the model produces code that *looks* right, newer benchmarks require that the code:

- Compile without errors.

- Pass a suite of unit tests provided by the system.

- Successfully integrate into a partially functioning project structure (simulating a real repository).

This forces the model to interact with dependencies, manage environments, and understand context—skills that cannot be easily memorized from a single document. For the development world, this means the next generation of coding assistants will need to prove themselves not just on paper, but inside a sandboxed CI/CD pipeline.

Philosophical Implications: Defining AI Competence

The retirement of SWE-bench forces us to ask deeper, more philosophical questions about intelligence itself. If an AI can perfectly replicate human output without understanding the underlying principles, is it truly intelligent?

For many researchers, competence requires transferability. If a model learns to fix a bug in one Python framework, it should be able to apply the same problem-solving approach in Rust or C++, even if it has never seen that exact bug fixed in those languages. This distinction between rote task completion and genuine abstraction is critical as we pursue Artificial General Intelligence (AGI).

This challenge is not unique to coding. It applies to medical diagnostics, legal drafting, and scientific hypothesis generation. If we rely on evaluations that reward memorization, we risk deploying systems that appear brilliant in controlled settings but fail catastrophically when faced with the messy, unindexed reality outside them.

Practical Implications for Business and Development

What does this mean for the CIO looking to adopt AI coding assistants or the data science team benchmarking the latest foundation model?

1. Re-evaluating ROI and Risk

Companies must stop taking benchmark scores at face value. If your vendor boasts a 90% score on a benchmark OpenAI just called "broken," that metric now tells you very little. The real ROI of an AI tool is measured by its ability to handle novel, context-specific, and proprietary tasks, which are, by definition, *not* in the public training data.

2. The Rise of Custom, Adversarial Testing

The future strategy for adoption is internal validation. Organizations must invest in creating their own private, adversarial benchmarks. These customized tests should reflect the unique complexity and legacy code structure of the business itself. Furthermore, testing must incorporate tools and execution environments, simulating the full software lifecycle.

This means building test harnesses that require models to work with internal APIs, security scanners, and version control systems. If the model can open a tested, compliant pull request against your main branch, that is the score that matters most.

3. Focus on Iteration Speed, Not Just Accuracy

In the coding realm, productivity isn't just about the first answer being correct; it’s about how quickly the model can integrate feedback and iterate toward a working solution. Future evaluation metrics should heavily weight the *number of attempts* required to pass execution tests, favoring models that learn efficiently from failure signals.
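As a rough illustration of what such a metric could look like, the sketch below gives each task a credit of 1 divided by the number of attempts needed to pass, and zero if the task was never solved within the attempt budget. The function name and the reciprocal weighting are assumptions made for this example; other discount schemes are equally plausible.

```python
from typing import Optional, Sequence


def attempt_weighted_score(attempts_to_pass: Sequence[Optional[int]]) -> float:
    """Mean per-task credit, where a task solved on attempt k earns 1/k.

    Each entry is the 1-based attempt on which the task first passed its
    execution tests, or None if it never passed within the budget.
    """
    if not attempts_to_pass:
        raise ValueError("need at least one task result")
    total = 0.0
    for attempts in attempts_to_pass:
        if attempts is None:
            continue  # unsolved tasks earn no credit
        if attempts < 1:
            raise ValueError("attempt counts start at 1")
        total += 1.0 / attempts
    return total / len(attempts_to_pass)


# Two hypothetical models that both eventually solve every task:
first_try = attempt_weighted_score([1, 1, 1, 1])    # 1.0
slow_learner = attempt_weighted_score([4, 4, 4, 4])  # 0.25
```

Both models would show a 100% pass rate under a plain solved-or-not metric, but the weighted score separates the one that gets there on the first try from the one that needs four rounds of feedback.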

The Road Ahead: Dynamic Evaluation is the New Gold Standard

OpenAI’s call to scrap SWE-bench is a necessary act of self-correction that benefits the entire AI ecosystem. It forces a collective realization: we have developed models so powerful they have outpaced our ability to rigorously test them using simple methods.

This is not a moment for panic; it is a moment for maturation. The industry must commit to building evaluation methods that are as complex, evolving, and dynamic as the problems we want AI to solve. We need benchmarks that constantly evolve, introduce synthetic but realistic complexity, and demand true reasoning under novel constraints. Only when our tests can consistently challenge our best models will we truly know if we are building powerful tools, or just incredibly efficient parrots.