The Benchmark Crisis: Why AI’s Coding Tests Are Broken and What Comes Next for Software Engineering

The artificial intelligence landscape thrives on measuring progress. We cheer when a model sets a new high score on an established test, and we treat these metrics as proof that we are steadily climbing the ladder toward general intelligence. However, the very structure that validates our progress is now threatening to collapse.

The recent news that OpenAI is advocating for the retirement of the widely used **SWE-bench**, a benchmark designed to test an AI’s ability to resolve real-world software engineering issues, is not a minor adjustment; it’s a flashing warning light for the entire field. It signals a critical inflection point: the metrics we use to judge AI coding prowess are becoming obsolete, polluted by the very technology they seek to measure.

The Illusion of Progress: Memorization vs. Mastery

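For years, benchmarks like SWE-bench served a vital purpose. They took actual issues from open-source GitHub repositories and challenged models to fix them: given the repository and the issue text, the model had to produce a code patch, which was then graded by running the project's own tests. A high score meant the AI could read a problem description, locate the relevant code, and write a change that fixed the problem without breaking anything else. This was the gold standard for assessing practical coding ability.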
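The grading rule behind this kind of benchmark can be sketched in a few lines. This is a minimal illustration, not the official SWE-bench harness: it assumes test outcomes have already been collected into a dict of test name to pass/fail, and it borrows the `FAIL_TO_PASS` / `PASS_TO_PASS` idea from the SWE-bench dataset fields. The test names are hypothetical.

```python
from typing import Dict, Iterable


def is_resolved(
    results: Dict[str, bool],
    fail_to_pass: Iterable[str],
    pass_to_pass: Iterable[str],
) -> bool:
    """Return True if a candidate patch fixes the issue without regressions.

    results maps each test name to True (passed) or False (failed or errored)
    after the patch is applied. A test missing from results counts as a failure.
    """
    # Every test that reproduced the bug must now pass...
    fixed = all(results.get(t, False) for t in fail_to_pass)
    # ...and every test that passed before the patch must still pass.
    no_regressions = all(results.get(t, False) for t in pass_to_pass)
    return fixed and no_regressions


# Hypothetical outcomes for one task instance after applying a patch.
results = {
    "tests/test_parser.py::test_empty_input": True,
    "tests/test_parser.py::test_basic": True,
    "tests/test_parser.py::test_unicode": False,
}
print(is_resolved(results,
                  ["tests/test_parser.py::test_empty_input"],
                  ["tests/test_parser.py::test_basic"]))    # True
print(is_resolved(results,
                  ["tests/test_parser.py::test_empty_input"],
                  ["tests/test_parser.py::test_unicode"]))  # False
```

Because the verdict is all-or-nothing, it is only as trustworthy as the tests placed in those two lists.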

The problem, as highlighted by OpenAI, is twofold:

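- Data Contamination: Because SWE-bench tasks are built from public GitHub issues and the pull requests that resolved them, both the problems and their accepted fixes sit on the open web. Leading models have been trained on massive swaths of the internet, making it highly likely that many of these problems, and perhaps their exact solutions, were ingested during training.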

- Flawed Tasks: Many of the benchmark tasks themselves were not robust enough. Some had underspecified issue descriptions or overly narrow tests that rejected correct solutions; conversely, others had weak tests that let clever but incomplete answers pass on superficial checks.
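A toy illustration of the flawed-task problem follows, using a made-up function and made-up tests rather than anything from SWE-bench. When the only check is a single example, a patch that hard-codes that example passes just as well as a genuine fix. Only a broader test tells them apart.

```python
def slugify_fixed(title: str) -> str:
    """A genuine fix: lowercase, then join whitespace-separated words with hyphens."""
    return "-".join(title.lower().split())


def slugify_hardcoded(title: str) -> str:
    """An 'incomplete' patch that only special-cases the tested input."""
    if title == "Hello World":
        return "hello-world"
    return title


def weak_test(fn) -> bool:
    # A single example: both patches pass.
    return fn("Hello World") == "hello-world"


def stronger_test(fn) -> bool:
    # Several inputs, including extra whitespace and mixed case.
    cases = {
        "Hello World": "hello-world",
        "  Many   Spaces Here ": "many-spaces-here",
        "ALLCAPS": "allcaps",
    }
    return all(fn(inp) == out for inp, out in cases.items())


for fn in (slugify_fixed, slugify_hardcoded):
    print(fn.__name__, weak_test(fn), stronger_test(fn))
# slugify_fixed True True
# slugify_hardcoded True False
```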

When a model aces a test because it has memorized the answers, its score measures data recall, not reasoning ability. For technical leaders and software engineers, this distinction is everything. A model that memorizes a fix for Bug X is useless when faced with Bug Y, which requires genuine problem-solving.

The Systemic Issue: Data Contamination Across the Board

This contamination isn't unique to coding benchmarks. Research on LLM evaluation has shown that it affects almost every kind of standardized test, from academic exams to general knowledge and reasoning suites like MMLU. The bigger the training corpora grow, the higher the probability that models have already seen the test questions. We are entering an era where a high benchmark score might signal a stale, overly public test set rather than superior underlying intelligence.
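One common, if crude, way to screen for contamination is to measure what fraction of a test item's word n-grams also appear in a training corpus. The sketch below makes several simplifying assumptions: whitespace tokenization, an in-memory corpus, and an `n` you choose yourself. Real audits run over tokenized corpora at far larger scale, paraphrased leaks can slip past exact n-gram matching, and the threshold for calling an item "contaminated" is a judgment call.

```python
from typing import Iterable, Set, Tuple


def ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """Lowercased, whitespace-tokenized word n-grams of text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(test_item: str, corpus: Iterable[str], n: int = 8) -> float:
    """Fraction of the test item's n-grams that occur anywhere in the corpus."""
    item_grams = ngrams(test_item, n)
    if not item_grams:
        return 0.0  # item shorter than n tokens: nothing to compare
    corpus_grams: Set[Tuple[str, ...]] = set()
    for doc in corpus:
        corpus_grams |= ngrams(doc, n)
    return len(item_grams & corpus_grams) / len(item_grams)


# Hypothetical training document and two hypothetical benchmark items.
training_docs = ["fix the off by one error in the date parser when the month is december"]
print(overlap_ratio("Fix the off by one error in the date parser when the month is December",
                    training_docs, n=5))  # 1.0
print(overlap_ratio("Add retry logic to the HTTP client for transient network failures",
                    training_docs, n=5))  # 0.0
```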

If the entire scientific community stops trusting the public scores, how do we advance?

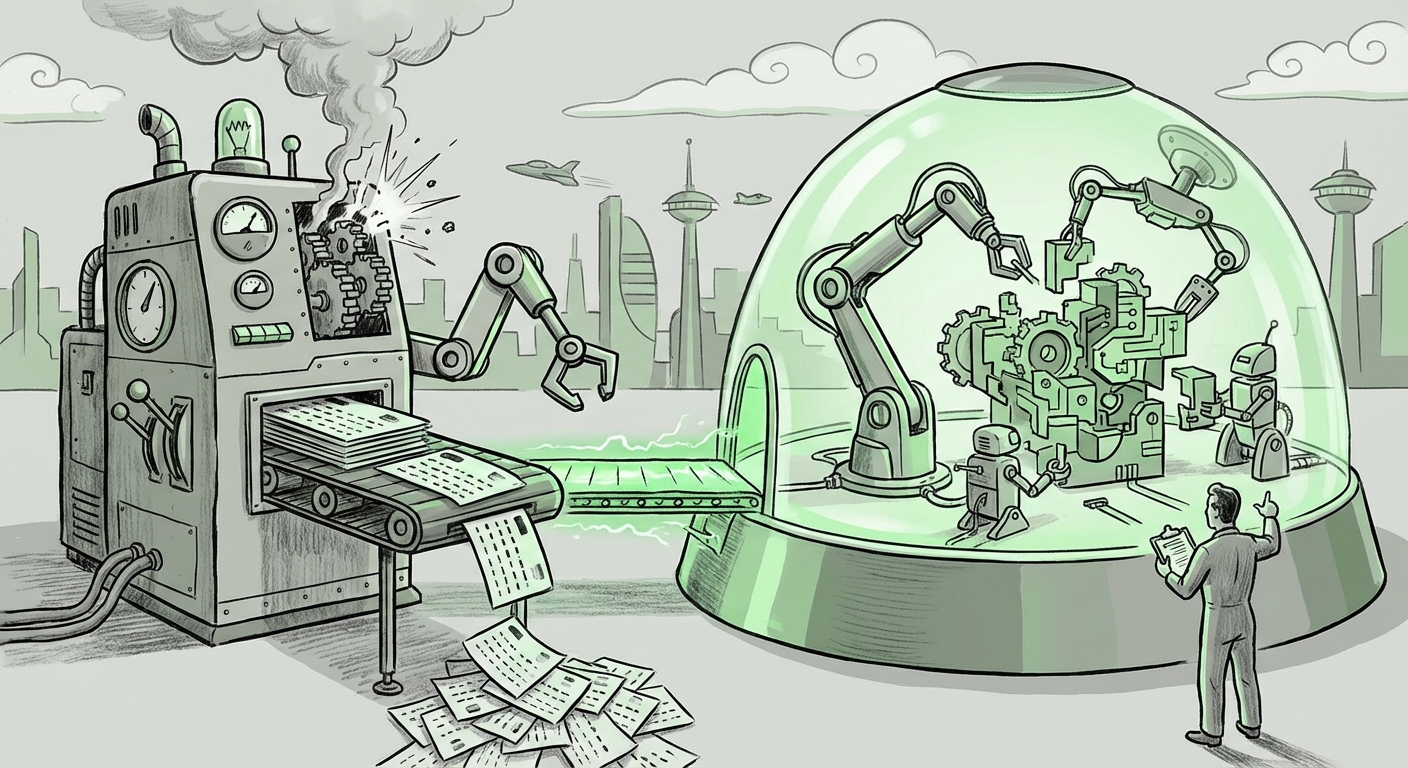

The Pivot: From Static Answers to Dynamic Execution

The call to retire SWE-bench forces the industry to look beyond simple, static accuracy scores toward methodologies that better simulate the engineering workflow. The shift is away from evaluating syntactic correctness (does the code compile?) and toward assessing semantic integration and procedural competence (does the code solve the underlying business problem reliably and efficiently?).
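The gap between those two questions is easy to demonstrate in Python. The built-in `compile()` only confirms that source text parses, while executing the code against test cases checks what it actually does. The candidate function below is a made-up example with a deliberate bug, and `exec` runs it in-process, which is acceptable only for trusted snippets; untrusted model output belongs in a sandbox.

```python
CANDIDATE = '''
def average(xs):
    # Bug: integer division truncates the mean.
    return sum(xs) // len(xs)
'''


def compiles(source: str) -> bool:
    """Syntactic check only: does the source parse into a code object?"""
    try:
        compile(source, "<candidate>", "exec")
        return True
    except SyntaxError:
        return False


def behaves_correctly(source: str) -> bool:
    """Semantic check: run the code and compare its outputs to expectations."""
    namespace: dict = {}
    exec(source, namespace)  # trusted code only; sandbox anything generated
    average = namespace["average"]
    cases = [([1, 2], 1.5), ([2, 4, 6], 4.0), ([5], 5.0)]
    return all(average(xs) == expected for xs, expected in cases)


print(compiles(CANDIDATE))           # True
print(behaves_correctly(CANDIDATE))  # False
```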

The Rise of Agentic Benchmarks

Next-generation software engineering benchmarks point toward frameworks that require models to operate like autonomous agents. Future benchmarks cannot rely on a single input/output pair. They must test:

- Iteration and Debugging: Can the AI run the code, read the compiler error, and attempt a repair?

- Dependency Management: Can the AI correctly install necessary libraries and resolve environment conflicts?

- External Tool Use: Can the AI effectively use Git, documentation (via web search simulation), or static analysis tools?
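The iterate-and-debug capability from the first bullet can be sketched as a simple loop. `propose_fix` is a hypothetical stand-in for a model call; here it is a hard-coded stub so the example runs on its own. Each attempt runs in a fresh Python process via `subprocess`, and the captured stderr is what the "model" reads before its next try.

```python
import subprocess
import sys
from typing import Callable, List, Tuple


def run_python(source: str, timeout: float = 10.0) -> Tuple[bool, str]:
    """Run source in a fresh interpreter; return (succeeded, stderr)."""
    proc = subprocess.run([sys.executable, "-c", source],
                          capture_output=True, text=True, timeout=timeout)
    return proc.returncode == 0, proc.stderr


def repair_loop(source: str, propose_fix: Callable[[str, str], str],
                max_attempts: int = 3) -> Tuple[bool, List[str]]:
    """Run the code, and on failure ask for a fix; at most max_attempts runs."""
    history = [source]
    for attempt in range(max_attempts):
        ok, stderr = run_python(source)
        if ok:
            return True, history
        if attempt + 1 < max_attempts:
            source = propose_fix(source, stderr)  # the model sees the traceback
            history.append(source)
    return False, history


def stub_fix(source: str, stderr: str) -> str:
    """Hypothetical model stub: adds a missing import when it sees a NameError."""
    if "NameError" in stderr and "math" in stderr:
        return "import math\n" + source
    return source


ok, history = repair_loop("print(math.sqrt(16))", stub_fix)
print(ok, len(history))  # True 2
```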

This shift sharpens the divide between "real-world" and "synthetic" coding benchmarks for LLMs. Synthetic benchmarks (like short, self-contained coding challenges) are easily memorized. Real-world emulation requires dynamic sandboxing: a live environment where the model must interact with a virtual terminal, manage files, and handle unexpected failures. These agentic setups are much harder to contaminate because the exact sequence of required interactions is often novel, even if the initial task is known.
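A minimal sketch of one sandboxed step: write the files for a task into a throwaway directory, run a command there, and turn a hang into a reportable result instead of a stalled harness. The `StepResult` type and `run_in_scratch_dir` helper are hypothetical names for illustration. A temporary directory plus a subprocess is not a security boundary; production harnesses isolate untrusted code in containers or VMs.

```python
import subprocess
import sys
import tempfile
from dataclasses import dataclass
from pathlib import Path
from typing import Dict, List, Optional


@dataclass
class StepResult:
    returncode: Optional[int]  # None when the step was killed for timing out
    stdout: str
    stderr: str
    timed_out: bool


def run_in_scratch_dir(files: Dict[str, str], command: List[str],
                       timeout: float = 5.0) -> StepResult:
    """Write files into a fresh temp directory and run command inside it."""
    with tempfile.TemporaryDirectory() as workdir:
        for name, content in files.items():
            path = Path(workdir) / name
            path.parent.mkdir(parents=True, exist_ok=True)
            path.write_text(content)
        try:
            proc = subprocess.run(command, cwd=workdir, capture_output=True,
                                  text=True, timeout=timeout)
            return StepResult(proc.returncode, proc.stdout, proc.stderr, False)
        except subprocess.TimeoutExpired:
            # subprocess.run kills the child before raising.
            return StepResult(None, "", "", True)


ok = run_in_scratch_dir({"main.py": "print('hello')"}, [sys.executable, "main.py"])
print(ok.returncode, ok.stdout.strip())  # 0 hello
hang = run_in_scratch_dir({"main.py": "while True: pass"},
                          [sys.executable, "main.py"], timeout=1.0)
print(hang.timed_out)  # True
```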

Consider tools like Cognition's Devin, which is marketed as an autonomous AI software engineer. The true measure of such a tool isn't whether it can write a function, but whether it can carry a month-long feature branch to completion with minimal human intervention. This necessitates evaluation systems built around prolonged, interactive tasks.

Implications for Business and Technology Strategy

For CTOs, VPs of Engineering, and technology strategists, the breakdown of old benchmarks carries immediate, practical consequences:

1. Reassessing ROI on AI-Generated Code

If a vendor claims their model achieves 90% on SWE-bench, that number should now be treated with extreme skepticism. Businesses must demand evidence of in-house evaluation using private, proprietary codebases that the model has never seen. Trust should be built on performance within your specific tech stack (e.g., proprietary frameworks, internal libraries) rather than generalized public leaderboards.
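One concrete way to apply that skepticism to your own in-house results is to report a pass rate with a confidence interval, since private suites are usually small. The sketch below uses the Wilson score interval for a binomial proportion; the 18-of-20 result is hypothetical, chosen to mirror the 90% headline above.

```python
import math
from typing import Tuple


def wilson_interval(passed: int, total: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a pass rate (z = 1.96 gives roughly 95% coverage)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = passed / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2))
    return center - half, center + half


# Hypothetical result: 18 of 20 private tasks resolved, i.e. a "90%" score.
lo, hi = wilson_interval(18, 20)
print(f"90% observed, 95% CI roughly {lo:.2f} to {hi:.2f}")
# prints: 90% observed, 95% CI roughly 0.70 to 0.97
```

The same 90% observed over 200 tasks narrows to roughly 0.85 to 0.93, which is why suite size matters as much as the headline number.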

2. The Value of Specialized, Private Datasets

This crisis underscores the strategic value of proprietary, clean data. Companies that invest in curating high-quality internal datasets that reflect their real-world complexity will have a major advantage in fine-tuning and benchmarking models safely, shielded from the contamination issues plaguing public tests. Taken to its logical end, the critique of public coding benchmarks suggests that the most trustworthy evaluation will likely happen behind closed doors.
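One simple way to keep a private benchmark clean relative to a particular model is a temporal holdout: score the model only on tasks created after its training-data cutoff. A minimal sketch, assuming each internal task records a creation date and that the vendor discloses a cutoff; the task IDs, dates, and cutoff below are hypothetical. This reduces, but does not eliminate, the chance of overlap.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class Task:
    task_id: str
    created: date  # when the issue or ticket was filed internally
    prompt: str


def post_cutoff(tasks: List[Task], cutoff: date) -> List[Task]:
    """Keep only tasks created strictly after the model's training cutoff."""
    return [t for t in tasks if t.created > cutoff]


tasks = [
    Task("INT-101", date(2023, 5, 2), "Fix race in cache invalidation"),
    Task("INT-245", date(2024, 11, 18), "Migrate billing job to new queue API"),
    Task("INT-310", date(2025, 2, 7), "Handle null region in tax lookup"),
]
held_out = post_cutoff(tasks, cutoff=date(2024, 6, 30))
print([t.task_id for t in held_out])  # ['INT-245', 'INT-310']
```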

3. Shifting Hiring and Training Focus

If AI tools become excellent at generating boilerplate or fixing common bugs (the low-hanging fruit captured by flawed benchmarks), the value of human engineers shifts upward. We stop paying for debugging known issues and start paying for architectural oversight, novel problem design, and complex integration tasks—the very areas dynamic, agent-based testing aims to measure.

Actionable Insights: How to Navigate the New Reality

The deprecation of old standards is not a crisis of capability; it’s a crisis of measurement. Here is what organizations and researchers must do:

For Developers and ML Engineers: Embrace Agentic Frameworks

Start building local, dynamic test harnesses for your LLMs. Use tools that force the model to execute code and observe runtime behavior. If you are evaluating code generation, you must execute the code, run unit tests, and check for side effects. This mimics the real debugging cycle.
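For the side-effect check, one simple approach is to snapshot a working directory before and after running generated code and diff the two. The sketch below hashes every file with SHA-256 and reports anything added, removed, or modified. `generated_cleanup` is a hypothetical piece of model output that was supposed to delete only `.tmp` files.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Callable, Dict, List


def snapshot(root: Path) -> Dict[str, str]:
    """Map each file's path (relative to root) to a SHA-256 of its contents."""
    return {
        str(p.relative_to(root)): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(root.rglob("*")) if p.is_file()
    }


def side_effects(root: Path, action: Callable[[Path], None]) -> Dict[str, List[str]]:
    """Run action(root) and report which files it added, removed, or changed."""
    before = snapshot(root)
    action(root)
    after = snapshot(root)
    return {
        "added": sorted(after.keys() - before.keys()),
        "removed": sorted(before.keys() - after.keys()),
        "modified": sorted(k for k in before.keys() & after.keys()
                           if before[k] != after[k]),
    }


def generated_cleanup(root: Path) -> None:
    """Hypothetical model output: meant to delete only *.tmp files."""
    for p in list(root.iterdir()):
        if p.suffix in (".tmp", ".cfg"):  # bug: also deletes config files
            p.unlink()


with tempfile.TemporaryDirectory() as d:
    root = Path(d)
    (root / "scratch.tmp").write_text("junk")
    (root / "app.cfg").write_text("debug = false")
    (root / "main.py").write_text("print('hi')")
    print(side_effects(root, generated_cleanup))
    # {'added': [], 'removed': ['app.cfg', 'scratch.tmp'], 'modified': []}
```

Comparing the reported diff against the intended one (only `scratch.tmp` removed) is what exposes the unintended deletion of `app.cfg`.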

For Business Leaders: Demand Transparency in Evaluation

When procuring AI tools, do not accept headline benchmark scores at face value. Ask vendors specific questions:

- "How do you ensure your evaluation set was not part of the training data?"

- "Can you demonstrate the model solving a problem that requires more than five steps of iterative reasoning?"

- "What is the pass rate on our internal, proprietary code integration test suite?"

For the Research Community: Focus on Novelty and Security

The next generation of benchmarks must prioritize adversarial robustness. That means testing models against deliberately confusing or corrupted starter code and introducing tasks that require reasoning about system dependencies rather than simple pattern matching. We need benchmarks that are constantly evolving, perhaps even generated collaboratively and kept cryptographically hidden until testing begins.
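Keeping a test set cryptographically hidden until testing begins can be as simple as a hash commitment: publish a salted SHA-256 digest of the hidden set before evaluation, then reveal the set and the salt afterward so anyone can verify it was not altered. This is a sketch of the standard commit-reveal pattern, not any existing benchmark's protocol; the canonical JSON serialization and the task fields are assumptions.

```python
import hashlib
import json
import secrets
from typing import List, Tuple


def commit(test_set: List[dict]) -> Tuple[str, bytes]:
    """Return (public digest, secret salt) for a hidden test set."""
    salt = secrets.token_bytes(32)  # random salt stops guessing of small sets
    payload = json.dumps(test_set, sort_keys=True).encode("utf-8")
    return hashlib.sha256(salt + payload).hexdigest(), salt


def verify(digest: str, salt: bytes, revealed: List[dict]) -> bool:
    """After the reveal, check the revealed set against the published digest."""
    payload = json.dumps(revealed, sort_keys=True).encode("utf-8")
    return hashlib.sha256(salt + payload).hexdigest() == digest


# Hypothetical hidden task.
tasks = [{"id": "t1", "prompt": "Resolve the dependency conflict", "tests": ["test_a"]}]
digest, salt = commit(tasks)           # publish digest now; keep tasks and salt private
print(verify(digest, salt, tasks))     # True
tampered = [{**tasks[0], "tests": []}]
print(verify(digest, salt, tampered))  # False
```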

The Road Ahead: A More Honest Appraisal

OpenAI’s call to retire SWE-bench is a moment of necessary humility for the AI community. It acknowledges that models are outpacing our ability to measure them reliably, creating a gap between perceived competence and actual utility. While early LLMs succeeded by absorbing the internet, the next generation of AI will be defined by its ability to reason, adapt, and execute in novel, controlled environments.

The excitement around AI coding assistants remains high, but the focus must pivot. The race is no longer about simply writing code; it’s about proving that the AI understands what it’s writing. By moving toward dynamic, agent-based, and contamination-resistant evaluation, we ensure that the AI revolution in software engineering is built on genuine skill, not just excellent memorization.