The Great Benchmark Reckoning: Why OpenAI Retiring SWE-bench Signals the End of Static AI Testing

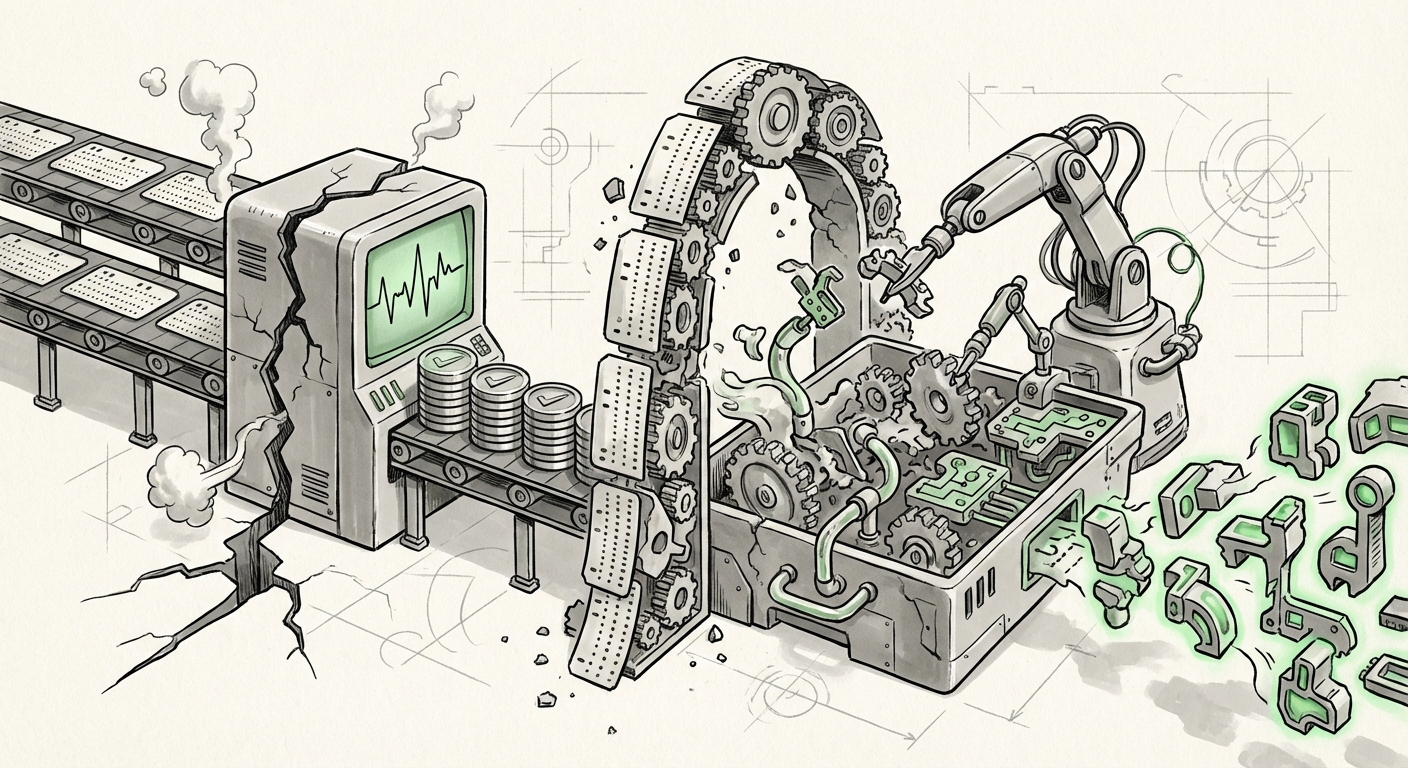

The race for Artificial Intelligence supremacy has long been measured by leaderboards. These benchmarks—standardized tests like HumanEval or, more recently, SWE-bench for coding—act as the finish line for competing models. High scores are public declarations of superiority. However, the very systems designed to measure progress are now showing signs of systemic failure. OpenAI’s recent call to retire the widely used SWE-bench highlights a crucial, perhaps inevitable, inflection point: we are moving away from validating AI through static, easily memorized tests toward methods that demand genuine, real-world utility.

For both researchers and business leaders, this development is profound. It signals that the era of simply "acing the test" is over. The AI lifecycle is demanding validation that mirrors its intended application—a transition from synthetic performance to functional reliability.

The Breakdown of the Static Leaderboard: Why SWE-bench Failed

SWE-bench was designed to test a model’s ability to solve real-world software engineering issues pulled from GitHub repositories. On the surface, it sounds perfect. Yet, OpenAI’s assessment points to two fatal flaws that plague most widely adopted benchmarks:

- Data Contamination (Memorization): The core issue is that leading models, having been trained on massive swaths of the public internet, have inevitably ingested the benchmark problems and their associated solutions. When a model "solves" a SWE-bench task, it is often recalling a pre-learned solution path rather than synthesizing a novel fix. This measures recall, not reasoning.

- Flawed Task Design: OpenAI noted that many tasks within the benchmark were "flawed enough to reject correct solutions." In other words, the grading itself was brittle: valid fixes could be marked wrong because they did not match exactly what a task’s tests expected, which further undermines the measure’s validity.
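One common way to probe for the memorization problem described above is to look for long word sequences that a benchmark item shares with training text. The sketch below is a minimal illustration, not OpenAI's method: the function names, the n-gram length, and the 0.5 threshold are all assumptions chosen for the example.

```python
def ngrams(text: str, n: int = 8) -> set[tuple[str, ...]]:
    """Return the set of word-level n-grams in `text` (lowercased)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(benchmark_item: str, corpus_doc: str, n: int = 8) -> float:
    """Fraction of the benchmark item's n-grams that also occur in the corpus document."""
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0  # item is shorter than n words, so there is nothing to compare
    return len(item_grams & ngrams(corpus_doc, n)) / len(item_grams)


def flag_contaminated(items: dict[str, str], corpus: list[str],
                      n: int = 8, threshold: float = 0.5) -> list[str]:
    """Return IDs of items whose overlap with any corpus document meets the threshold.

    The threshold and n are illustrative assumptions, not established cutoffs.
    """
    return [
        item_id
        for item_id, text in items.items()
        if any(overlap_ratio(text, doc, n) >= threshold for doc in corpus)
    ]
```

A high overlap does not prove the model memorized the item, and a low one does not rule it out (paraphrased leaks slip through), so a check like this is best read as a warning signal rather than a verdict.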

This situation is not unique to coding. As we dive deeper into complex reasoning, the specter of data leakage haunts every major academic leaderboard. This forces us to confront the uncomfortable truth about modern LLMs: high benchmark scores might only indicate highly effective statistical pattern-matching engines. As articulated in critiques about [Link to Analysis on LLM Emergent Capabilities vs. Recall], distinguishing true understanding from sophisticated interpolation is becoming the central challenge of AI science.

The Echo Across AI Disciplines

The critique of SWE-bench is a microcosm of a larger industry trend. We have seen similar exhaustion points in other domains. For instance, concerns around **[Link to Article Discussing MMLU Data Contamination]** show that even general knowledge benchmarks risk becoming obsolete as models grow larger and data sources overlap more frequently with test sets. This systemic failure of static leaderboards pushes the entire field toward a more rigorous methodology.

The Pivot: The Rise of Dynamic and Adversarial Evaluation

If static tests can be memorized, the solution must be dynamic. The industry consensus, supported by this benchmark retirement, is shifting toward evaluation systems that are inherently resistant to data contamination. This is the move toward dynamic and adversarial evaluation.
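A simple way to picture a test that resists memorization is a task template whose parameters are drawn fresh each time, with the expected answer computed rather than stored. The following is a toy sketch under that assumption; the `Task` class, `make_task`, and the arithmetic task itself are hypothetical and far simpler than a real software engineering task.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    prompt: str
    step: int
    limit: int
    expected: int


def make_task(seed: int) -> Task:
    """Build one task instance from a seed; the same seed always yields the same task."""
    rng = random.Random(seed)
    step = rng.randint(3, 9)
    limit = rng.randint(50, 500)
    prompt = f"Return the sum of all positive multiples of {step} that are below {limit}."
    # The reference answer is computed at generation time, so there is no
    # fixed answer key that could leak into a training corpus.
    expected = sum(range(step, limit, step))
    return Task(prompt, step, limit, expected)


def make_suite(seeds: list[int]) -> list[Task]:
    """Generate a suite from seeds that are kept private until evaluation time."""
    return [make_task(seed) for seed in seeds]
```

Because the seeds can be rotated for every evaluation run, a model cannot score well by recalling a specific instance; it has to solve the underlying problem.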

Imagine an opponent that constantly changes the rules of the game—that is the goal of the next generation of benchmarks. These systems share characteristics that make them superior measures of utility:

- Live Environments: Instead of responding to a static text prompt, the model must interact with a functional sandbox, perhaps executing code, managing memory, or interacting with a simulated user interface. Success is judged by observable outcomes, not by matching fixed text strings, which demands genuine functionality.

- Adversarial Creation: New tests are generated *after* the model is deployed, or they are specifically designed by other AI systems to exploit known weaknesses in the target model. This constant need to adapt mirrors real-world deployment challenges. Reports on **[Link to Article Describing a New Adversarial Benchmarking Tool]** suggest these systems are computationally expensive but necessary for true progress.

- Interactive Problem Solving: The evaluation requires multi-turn communication and adaptation, forcing the model to manage context over extended periods, a far better proxy for a software engineering task than a single-shot prompt-response.
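The difference between grading against fixed text strings and grading by observable outcomes, as the list above describes, can be sketched in a few lines. This is an illustrative toy, not how any particular benchmark is implemented: the `clamp` task, the test cases, and both grader functions are assumptions, and `exec()` stands in for what would need to be a properly isolated sandbox.

```python
def grade_by_text(candidate_src: str, reference_src: str) -> bool:
    """Brittle grading: pass only if the candidate is textually identical to the reference."""
    return candidate_src.strip() == reference_src.strip()


def grade_by_behavior(candidate_src: str, func_name: str,
                      cases: list[tuple[tuple, object]]) -> bool:
    """Outcome-based grading: run the candidate and compare its results on test cases.

    NOTE: exec() is NOT a sandbox. A real harness would run untrusted code in an
    isolated process or container with time and resource limits.
    """
    namespace: dict = {}
    try:
        exec(candidate_src, namespace)
        func = namespace[func_name]
        return all(func(*args) == expected for args, expected in cases)
    except Exception:
        # Syntax errors, missing functions, and runtime errors all count as failures.
        return False


REFERENCE = """
def clamp(x, lo, hi):
    return max(lo, min(x, hi))
"""

# A different but equally valid implementation of the same behavior.
CANDIDATE = """
def clamp(x, lo, hi):
    if x < lo:
        return lo
    if x > hi:
        return hi
    return x
"""

CASES = [((5, 0, 10), 5), ((-1, 0, 10), 0), ((11, 0, 10), 10)]
```

Here `grade_by_text(CANDIDATE, REFERENCE)` rejects the candidate even though it is correct, while `grade_by_behavior(CANDIDATE, "clamp", CASES)` accepts it. Behavioral grading is only as good as its test cases, though, which is why overly narrow tests can still reject valid solutions.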

For technology strategists and investors, this means the AI competitive advantage will no longer be found in who has the largest training corpus, but in who has the most sophisticated and robust *evaluation infrastructure*.

Practical Implications: What This Means for Software Engineering Automation

The domain most immediately affected is software development. SWE-bench was supposed to be the gatekeeper for trusting LLMs with mission-critical code. Its invalidation forces a necessary pause and recalibration among CTOs and engineering leaders.

Shifting Trust from Score to Sandbox

When a vendor reports 90% accuracy on SWE-bench, businesses should now read that number with caution: the model may have seen and memorized solutions to many of these specific, potentially outdated problems. The real question is how it performs in an environment it has never encountered before.

This shift means that the **[Link to Article on Enterprise Adoption of AI Coding Tools]** will become more nuanced. Companies cannot simply adopt the latest tool based on public scores; they must integrate internal, private validation loops that mimic their proprietary codebase complexity. Benchmarks are becoming less about public bragging rights and more about internal quality assurance gates.
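Private suites tend to be small, so a raw pass rate can look better than the evidence supports. One hedged way to turn an internal suite into a quality gate is to require that the lower end of a confidence interval clears the bar. The sketch below uses the Wilson score interval; the choice of that interval, the function names, and the 0.7 requirement are assumptions made for illustration, not something the source prescribes.

```python
import math


def wilson_lower_bound(passed: int, total: int, z: float = 1.96) -> float:
    """Lower end of the Wilson score interval for a pass rate (z=1.96 is roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = passed / total
    denom = 1 + z * z / total
    center = p + z * z / (2 * total)
    margin = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total))
    return (center - margin) / denom


def passes_gate(passed: int, total: int, required: float = 0.7) -> bool:
    """Gate on the pessimistic estimate rather than the raw pass rate.

    `required` is a hypothetical threshold; each team would set its own.
    """
    return wilson_lower_bound(passed, total) >= required
```

With these assumptions, 9 passes out of 10 tasks gives a lower bound of about 0.60 and fails the gate, while 90 out of 100 gives about 0.83 and passes, even though both are a 90% raw pass rate.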

The future of software engineering automation relies on models that can debug in an unfamiliar environment, not just recall textbook fixes. This places a premium on models trained not just on static code, but on error logs, debugging sessions, and dependency management failures—the messy reality of production software.

Measuring True Reasoning vs. Pattern Matching: The Philosophical Hurdle

The SWE-bench debate forces us to confront the philosophical core of current AI progress. If a model produces perfect code but cannot explain why a certain function is superior in a novel context, have we achieved intelligence, or just hyper-efficient automation?

For AI ethicists and advanced researchers, this is a call to redefine the metrics of success. We are still searching for quantitative measures of qualitative concepts like "creativity," "abstraction," and "generalization." If an LLM passes a math proof benchmark, does it understand calculus, or did it reconstruct patterns from millions of similar textbook problems?

This challenge demands a move towards evaluation that probes the model's internal state and conceptual understanding. We must develop tests that require genuine analogical leaps—situations where the necessary knowledge must be assembled from disparate concepts learned across entirely different training domains.

Actionable Insights for the Next Phase of AI Deployment

For organizations betting their future on generative AI, the message from the benchmark retirement is clear: **Validation must adapt faster than the models evolve.**

- Prioritize Internal Benchmarks: Treat public leaderboards as interesting starting points, not deployment criteria. Invest engineering resources immediately into building private, dynamic evaluation suites modeled on your most complex, proprietary tasks.

- Demand Transparency in Evaluation: When selecting foundation models, ask vendors not just for their HumanEval scores, but for details on their evaluation methodology. Are they using live execution? Are their tests adversarial? How frequently are they refreshed?

- Focus on Failure Analysis: The value is increasingly found in studying where LLMs fail in dynamic tests. Understanding why a model’s answer was rejected or why it failed to debug a runtime error provides far more insight than knowing it passed 95% of the time.

- Embrace Continuous Learning Loops: Accept that measured AI performance loses meaning if models are tested against the same static data. Adopt an MLOps philosophy where evaluation datasets are treated as living entities, constantly purged of seen examples and injected with novel, complex challenges.
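The idea of an evaluation set that is purged of seen examples and topped up with new ones can be sketched as a small refresh step. This is a minimal illustration under stated assumptions: tasks are plain strings, "seen" is tracked by a hash of normalized text, and the names `fingerprint` and `refresh_eval_set` are hypothetical.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Hash of the task text after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def refresh_eval_set(current: list[str], seen_fingerprints: set[str],
                     fresh_candidates: list[str]) -> list[str]:
    """Drop tasks already exposed to a model, then add unseen, non-duplicate candidates."""
    kept = [task for task in current if fingerprint(task) not in seen_fingerprints]
    present = {fingerprint(task) for task in kept}
    for task in fresh_candidates:
        fp = fingerprint(task)
        if fp not in seen_fingerprints and fp not in present:
            kept.append(task)
            present.add(fp)
    return kept
```

Exact-hash matching only catches verbatim or trivially reformatted repeats. A production pipeline would likely need fuzzier matching to catch paraphrased tasks, which is beyond this sketch.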

The retirement of SWE-bench is not a failure of AI; it is a testament to its rapid success. The models became too good, too quickly, for the tests designed to measure them. This moment compels the industry to mature its evaluation standards. The next generation of AI progress won't be announced on a static leaderboard; it will be proven in the unpredictable, messy, and ultimately more valuable environment of real-world application.