The Sora Inflection Point: Why AI Video Models Are Becoming Physics Engines

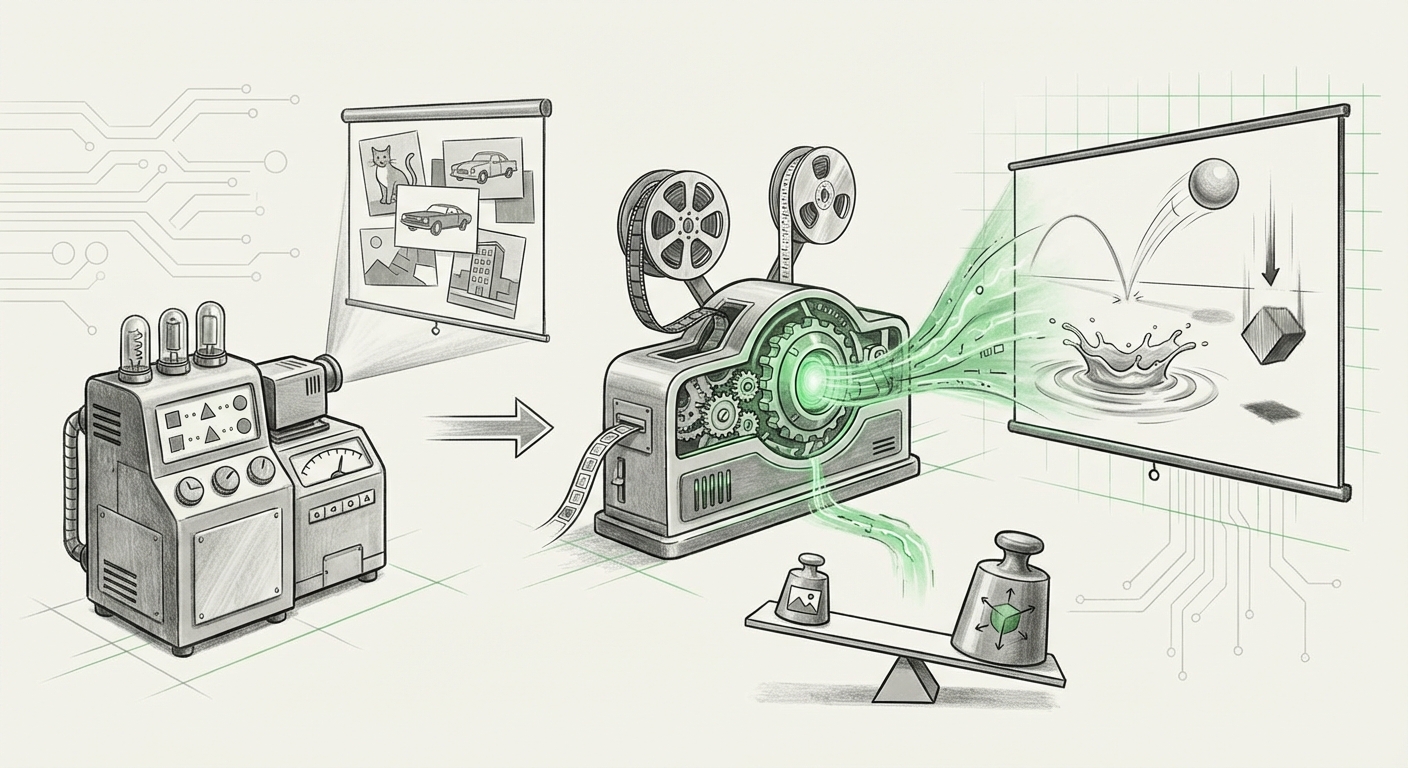

The introduction of OpenAI’s Sora model was not just another incremental improvement in generative AI; it signaled a shift in what these models appear to learn. For years, AI models excelled at recognizing patterns: identifying cats, translating text, or filling in missing words. Sora suggests a new frontier: modeling the rules that govern how objects interact in three-dimensional space over time. This is the core concept of a "World Model," and its emergence suggests that these models are beginning to act like rudimentary, learned physics engines.

To fully grasp this moment, we must look beyond the impressive visual fidelity and examine the theoretical scaffolding supporting this capability, drawing insights from leading researchers and the technical challenges overcome by massive scaling.

From Pixels to Prediction: The Birth of the World Model

What exactly is a World Model? Simply put, it is an AI’s internal simulation of reality. If you can accurately predict what will happen next in a video—where a ball will bounce, how smoke will dissipate, or how light will reflect off water—you are demonstrating an understanding of causality and physics, even if you don't know the Navier-Stokes equations by name.

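This concept is championed by researchers like Yann LeCun, who argues that systems must learn predictive models of the world they inhabit before anything like human-level intelligence can emerge. The underlying idea, closely related to predictive coding, is that an agent learns by constantly trying to predict its sensory input. LeCun himself favors prediction in an abstract representation space rather than in raw pixels, so whether a pixel-generating model fully qualifies is contested, but Sora appears to pursue the predictive objective on a grand scale using vast video datasets.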

The Theoretical Weight of Sora

When we look at the outputs, we see objects maintaining physical consistency across frames, a hurdle that previous models largely failed to clear. A dropped object doesn't just disappear; it falls. Water splashes realistically. This continuity hints that the model is storing and manipulating latent representations of object permanence and momentum, which is consistent with the hypothesis that high-scale training on diverse video pushes the model to internalize approximate physical laws.

This is fundamentally different from older generative video models, which largely produced statistically plausible frames one after another without a stable account of how the scene evolves. Sora seems to be modeling dynamics.

The Technical Engine: Transformers and Unprecedented Scale

How did we leap from blurry, short video clips to minute-long, high-fidelity scenes? The answer lies in architectural evolution and the relentless pursuit of scaling laws.

The foundational breakthrough for models like Sora often involves leveraging the **Transformer architecture**, the powerhouse behind Large Language Models (LLMs), and adapting it for spatiotemporal data. Transformers are excellent at understanding long-range dependencies—how a word at the beginning of a sentence relates to one at the end. In video, this translates to understanding how an action initiated in Frame 1 relates to the resulting scene in Frame 50.
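To make the long-range dependency point concrete, here is a minimal sketch of single-head scaled dot-product self-attention in NumPy. It assumes one token per frame, random embeddings, and random projection weights, all of which are illustrative choices rather than anything known about Sora's internals.

```python
import numpy as np

def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a token sequence."""
    q = tokens @ w_q
    k = tokens @ w_k
    v = tokens @ w_v
    # Every token scores every other token, regardless of distance in time.
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)  # row-wise softmax
    return weights @ v, weights

rng = np.random.default_rng(0)
num_frames, dim = 50, 16  # hypothetical sizes: one token per frame
frame_tokens = rng.normal(size=(num_frames, dim))
w_q, w_k, w_v = (rng.normal(size=(dim, dim)) / np.sqrt(dim) for _ in range(3))

output, attn = self_attention(frame_tokens, w_q, w_k, w_v)
# attn[49, 0] is the weight the token for Frame 50 places on Frame 1.
print(output.shape, attn.shape)
```

Because the attention matrix is dense, Frame 50 can draw on Frame 1 in a single step, which is the property that makes the architecture attractive for temporal coherence.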

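When applied to video, the data must be tokenized, much like text. Researchers have had to figure out how to efficiently compress the vast dimensionality of video (height, width, and time) into tokens the Transformer can process effectively. OpenAI’s technical report outlines the recipe at a high level: a compression network maps raw video into a lower-dimensional latent representation, that representation is cut into spacetime patches that serve as tokens, and a diffusion model built on the Transformer structure (a diffusion transformer) is trained to turn noisy patches into clean ones.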
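As a rough illustration of spatiotemporal tokenization, the sketch below cuts a video tensor into non-overlapping spacetime patches and flattens each one into a token vector. It works on raw pixels for simplicity, and the function name `patchify` and the patch sizes are assumptions chosen for the example, not details of any production model.

```python
import numpy as np

def patchify(video, pt, ph, pw):
    """Split a (T, H, W, C) video into flattened spacetime patches.

    Returns an array of shape (num_patches, pt * ph * pw * C).
    """
    t, h, w, c = video.shape
    if t % pt or h % ph or w % pw:
        raise ValueError("video dimensions must be divisible by the patch size")
    # Split each axis into (number of patches, patch length).
    x = video.reshape(t // pt, pt, h // ph, ph, w // pw, pw, c)
    # Bring the three patch-grid axes to the front, patch contents to the back.
    x = x.transpose(0, 2, 4, 1, 3, 5, 6)
    return x.reshape(-1, pt * ph * pw * c)

# Hypothetical clip: 16 frames of 64x64 RGB.
video = np.zeros((16, 64, 64, 3), dtype=np.float32)
tokens = patchify(video, pt=4, ph=16, pw=16)
print(tokens.shape)  # (64, 3072): a 4x4x4 grid of patches, 4*16*16*3 values each
```

Each row of `tokens` plays the role a word embedding plays in a language model, so sequence length grows with clip duration and resolution, which is why compression before patching matters.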

The Role of Data Volume

The volume of high-quality, diverse video data required to teach an AI the "rules of the world" is enormous. Empirical scaling laws suggest that performance improves predictably, typically as a power law, with model size, data volume, and compute. Sora’s leap suggests that the data threshold for emergent world modeling capabilities in the video domain may finally have been crossed. This contrasts with earlier generative systems from Google Research, such as the text-to-video model **Imagen Video**, which showed promise but often struggled with long-term coherence and physical plausibility, and **DreamFusion**, which generated static 3D assets from text rather than video.
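Scaling laws of this kind are usually written as a power law, for example loss = a · compute^(−b), which becomes a straight line in log-log space. The sketch below fits such a curve with NumPy. The data points are synthetic and the constants are made up purely for illustration; they are not measurements from Sora or any real model.

```python
import numpy as np

# Synthetic (made-up) data: loss = a * compute**(-b) with mild multiplicative noise.
rng = np.random.default_rng(0)
compute = np.logspace(18, 23, 12)  # hypothetical training budgets in FLOPs
true_a, true_b = 50.0, 0.05        # illustrative constants, not real values
loss = true_a * compute ** (-true_b) * np.exp(rng.normal(0, 0.01, compute.size))

# A power law is linear in log-log space: log(loss) = log(a) - b * log(compute).
slope, intercept = np.polyfit(np.log(compute), np.log(loss), deg=1)
fitted_b, fitted_a = -slope, np.exp(intercept)

def predict_loss(c):
    """Extrapolate the fitted power law to a new compute budget."""
    return fitted_a * c ** (-fitted_b)

print(f"fitted exponent b = {fitted_b:.3f}")
print(f"extrapolated loss at 1e24 FLOPs = {predict_loss(1e24):.3f}")
```

The practical appeal is the extrapolation step: if the trend holds, a lab can estimate the payoff of a larger run before paying for it, although a smooth loss curve says nothing by itself about when a qualitative capability appears.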

For the technical audience, this suggests that multimodal scaling (feeding the model not just text, but richly temporal, visual data) is a key driver for developing models that move beyond simple pattern matching into complex environmental simulation.

The Rippling Effect: Implications for Business and Content Creation

If AI can simulate physics and generate realistic scenes on demand, the economic and creative landscapes will undergo seismic changes. This isn't just about making better movie trailers; it’s about democratizing simulation and redefining digital labor.

Transforming Pre-Production and VFX

Consider the traditional visual effects (VFX) pipeline. It is painstakingly manual, requiring artists to model environments, apply physics simulations (like fluid dynamics or cloth movement), and then render these scenes over days or weeks. If Sora, or its successors, can generate these foundational shots based on text prompts—even imperfectly at first—it drastically compresses the ideation and blocking phases of production. Studios will rely less on expensive preliminary 3D work for concept visualization and storyboarding.

We are already seeing industry observers and media analysts discuss the potential upheaval in Hollywood and specialized digital labor. The ability to generate photorealistic, dynamic scenes instantly lowers the barrier to entry for high-fidelity visual content. This immediate impact on workflow creates an urgency for adaptation across film, advertising, and game development.

The Future of Digital Labor and Content Pipelines

The debate, as highlighted by concerns within creative guilds, is whether these tools augment or replace human artists. For now, they are powerful augmentation tools, allowing a single director or artist to visualize complex sequences previously requiring entire departments. However, the long-term trajectory suggests a shift in required skills: less emphasis on rendering minutiae and more emphasis on prompt engineering, art direction, and curating AI-generated outputs.

The Next Frontier: Comparing Learned vs. Explicit Physics

The most fascinating tension lies in comparing Sora’s "learned" physics engine against traditional, explicit simulation methods, such as those underpinning scientific modeling, and against other learned scene representations like **Neural Radiance Fields (NeRFs)**.

NeRFs are fantastic for capturing and rendering static or slowly moving scenes with stunning realism by learning a volumetric radiance field (density and view-dependent color) from posed images. However, a standard NeRF represents appearance rather than dynamics, so it is generally poor at *predicting* novel physics or complex, unseen interactions. Sora, trained observationally, appears to handle these dynamic interactions better. Yet, there remains a crucial gap:

- Explicit Simulators (Traditional/Differentiable): Know the rules (e.g., gravity is 9.8 m/s²). Highly accurate for known physics, but costly to set up and run, and unable to generalize beyond their programmed rules and parameters.

- Sora (Learned World Model): Learns rules implicitly from data. Incredibly flexible and fast for generation but prone to subtle physical errors (e.g., objects phasing through each other, odd fluid behavior) because its "knowledge" is statistical correlation, not axiomatic truth.
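The contrast between the two approaches can be sketched with a toy falling ball. The explicit simulator has gravity hard-coded, while the "learned" model is a simple linear next-state predictor fitted by least squares to noisy observations, with no access to the constant. The time step, the noise level, and the linear model are assumptions made for illustration; a real world model is vastly more complex.

```python
import numpy as np

G, DT = 9.8, 0.01  # gravity (m/s^2) as in the text; the time step is an assumption

def explicit_step(state):
    """Explicit simulator: the rule (constant gravity) is written into the code."""
    y, v = state
    v_next = v - G * DT
    return np.array([y + v_next * DT, v_next])

def rollout(step_fn, state, steps):
    """Apply a step function repeatedly and collect the visited states."""
    states = [state]
    for _ in range(steps):
        states.append(step_fn(states[-1]))
    return np.array(states)

# "Observed video": the true trajectory of (height, velocity) plus sensor noise.
rng = np.random.default_rng(0)
start = np.array([10.0, 0.0])
truth = rollout(explicit_step, start, 100)
observed = truth + rng.normal(0, 1e-3, truth.shape)

# Learned model: fit next_state ~ [y, v, 1] @ W purely from observed pairs.
inputs = np.hstack([observed[:-1], np.ones((len(observed) - 1, 1))])
W, *_ = np.linalg.lstsq(inputs, observed[1:], rcond=None)

def learned_step(state):
    """Learned dynamics: no gravity constant, only fitted correlations."""
    return np.append(state, 1.0) @ W

learned = rollout(learned_step, start, 100)
drift = np.abs(learned[:, 0] - truth[:, 0])
print(f"max height error of learned model: {drift.max():.4f} m")
```

The learned predictor tracks the fall closely inside the regime it observed, but small fitting errors compound over a rollout and nothing guarantees sensible behavior for situations missing from the data, which mirrors the statistical-versus-axiomatic distinction drawn above.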

The research goal, as evidenced by explorations into Neural Simulators, is to merge these approaches: using large-scale foundation models to learn the structure of reality, then grounding those structures with verifiable, differentiable physical constraints. This hybrid model would offer both the creative flexibility of Sora and the reliability needed for scientific or engineering applications.

Actionable Insights for Moving Forward

Whether you are leading a technology roadmap or building a creative portfolio, the Sora moment demands proactive response:

- Invest in Multimodal Literacy: Technical teams must rapidly understand how Transformer scaling applies to non-text data. Understanding spatiotemporal tokenization and diffusion pipelines is becoming core competence, not niche expertise.

- Re-evaluate Simulation Costs: For businesses relying on complex 3D modeling (automotive design, architecture walkthroughs, film pre-viz), start piloting Sora-like tools immediately. The cost curve for generating initial, high-fidelity dynamic mocks is about to drop precipitously.

- Focus on Curation and Direction: For creative professionals, the value shifts from execution mastery to conceptual clarity. The ability to articulate complex, consistent visual narratives through precise prompting—guiding the AI’s learned world model—will be the premium skill.

The move toward World Models signals that AI is evolving from a sophisticated calculator into a nascent reasoner. Sora is showing us a future where the digital sandbox is governed by models that possess an intuitive, if imperfect, grasp of physics, opening up avenues for embodiment, robotics, and digital creation previously confined to science fiction.