The $500 Billion Stall: Why the Stargate AI Supercluster Project Halted and What It Means for AGI Funding

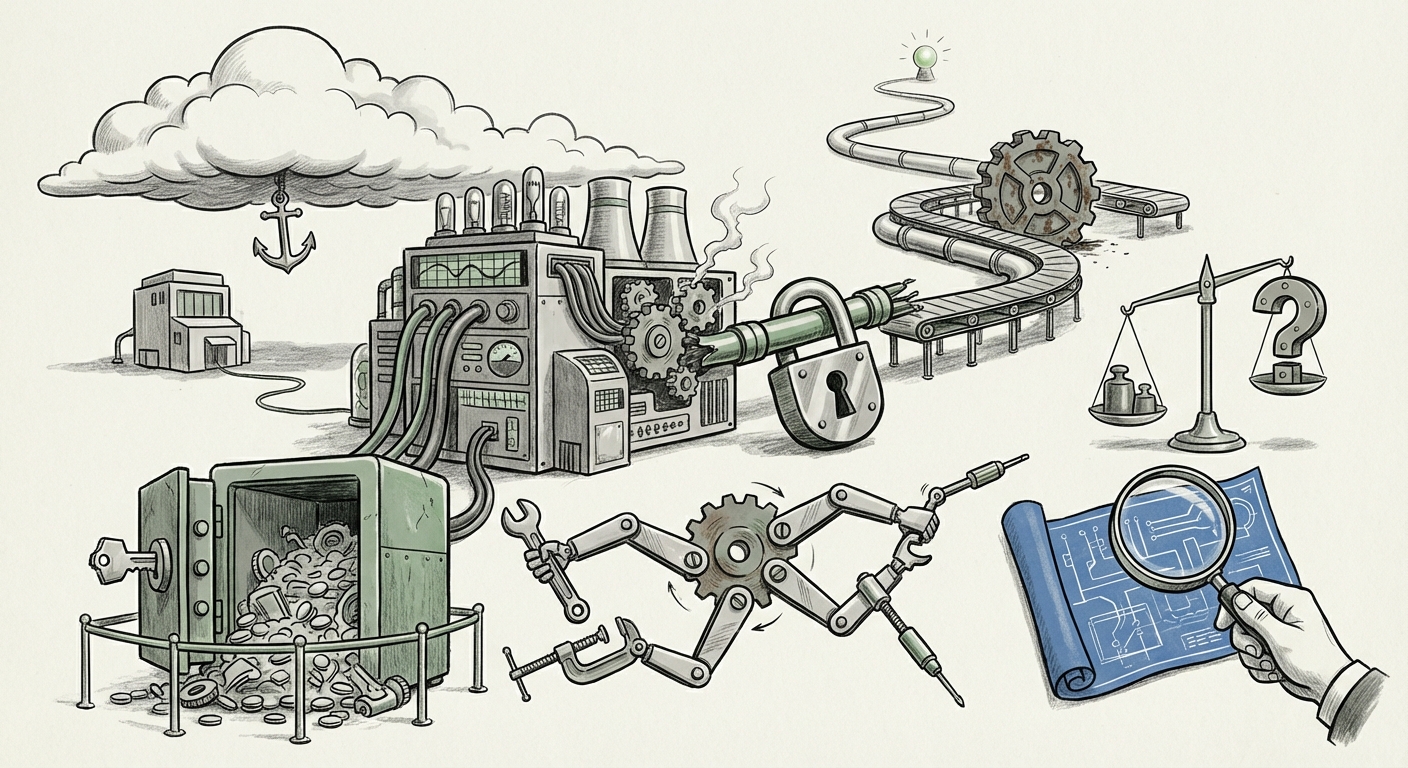

The race to Artificial General Intelligence (AGI) isn't just about smarter algorithms; it’s fundamentally a battle over physical infrastructure. This week brought a sobering development to that race: the reported stall of the "Stargate" project—a rumored, staggering $500 billion initiative designed to create massive, dedicated AI data centers, purportedly involving key players like OpenAI, Oracle, and SoftBank.

If confirmed, this delay reveals that the path to AGI is not a smooth technological upgrade but a messy, high-stakes negotiation fraught with geopolitical complexity, financial risk, and deep strategic disagreements. This isn't just a construction delay; it’s a potential inflection point for how the world funds and builds the next generation of AI.

The Unseen Mountain of Compute Cost

To understand the significance of the $500 billion price tag, one must grasp the sheer scale of modern AI training. Training a frontier model like GPT-4 reportedly cost more than $100 million and consumed tens of thousands of high-end GPUs. Analysts estimating the cost of training GPT-5 and subsequent models expect costs to grow exponentially, not linearly, from one generation to the next.

The Stargate concept was essentially a sovereign AI cloud—a custom-built ecosystem designed to run OpenAI’s future models without the overhead, latency, or competitive scrutiny of existing hyperscalers. For OpenAI, this promised complete control over their destiny. For the partners, it promised unprecedented revenue streams.

The reported stall suggests that the financial model underpinning this vision has hit turbulence. It also fits a broader market reassessment of projected AI infrastructure spending. Lenders and equity partners are starting to ask difficult questions: Is the ROI guaranteed if the next generation of models requires three times the compute but delivers only incremental performance gains over the current version?

The Practical Hurdle for Business Leaders

For businesses looking to adopt cutting-edge AI, this infrastructure turbulence is a crucial signal. While AI capability advances rapidly, the physical means to access it, namely the data centers, are becoming a primary bottleneck, not just in terms of availability but in terms of **who controls the keys to the kingdom.** If the largest proposed project can't get off the ground because of its complexity, bespoke builds for specific enterprise needs will face the same scaling challenges on a smaller scale.

The Alliance Fracture: OpenAI, Oracle, and the Microsoft Shadow

The core of the reported dispute involves responsibility and control. OpenAI, having already secured a monumental partnership with Microsoft Azure, was looking to diversify its infrastructure dependency. This is a natural strategic move, aiming to avoid becoming entirely reliant on one provider.

However, this diversification creates tension. Reporting on tensions in the OpenAI-Microsoft partnership suggests that when one partner seeks to build a massive, competing infrastructure pool (Stargate), it raises alarm bells for the existing primary backer. Microsoft has invested billions and built specialized Azure capacity *for* OpenAI. If OpenAI shifts massive future compute needs to an Oracle/SoftBank-backed entity, the value proposition for Microsoft changes overnight.

Oracle, being a formidable cloud provider itself, likely viewed Stargate as a massive opportunity to chip away at the dominance of AWS and Azure. The disputes likely centered on:

- Governance and Control: Who gets final say on hardware procurement (Nvidia vs. custom chips)?

- Data Sovereignty: Where will these centers physically reside, and under which regulatory framework?

- Revenue Sharing: How will profits from the resulting AGI services be split among the three major entities?

This dynamic is key to understanding the leverage cloud providers hold in AI deals. Hyperscalers are no longer just renting servers; they are underwriting national-scale compute projects. When an AI lab tries to build its own parallel universe, the existing partner feels threatened, leading to friction that can derail even plans worth hundreds of billions of dollars.

SoftBank’s Vision Fund Pivot and Investor Scrutiny

The involvement of SoftBank, specifically Masayoshi Son’s Vision Fund, adds a significant geopolitical and investment lens. SoftBank has historically been defined by making paradigm-shifting bets, often involving huge amounts of capital to accelerate market adoption. Their interest in Stargate signaled a belief that AI infrastructure represented the single greatest investment opportunity of the decade.

However, recent analyses of the hurdles SoftBank's Vision Fund faces in deploying capital suggest a more cautious approach has taken hold. After a period of intense spending, the economic climate has shifted. Investors are demanding clearer pathways to profitability and less speculative CapEx. Building a $500 billion data center complex requires long-term confidence that the returns will materialize before the debt matures. If lenders are hesitant, as the initial report suggests, it means the risk assessment on the entire AGI scaling roadmap has become significantly less favorable.
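The question of whether returns arrive before the debt matures can be made concrete with a simple payback calculation. The sketch below is illustrative only: the function name `payback_year`, the 10-year maturity, the first-year cash flow, and the growth rates are all hypothetical inputs, not figures from any report on Stargate.

```python
def payback_year(capex, first_year_cash_flow, growth_rate, max_years=50):
    """Return the first year in which cumulative cash flow covers capex.

    Returns None if the investment is not recovered within max_years.
    All inputs are hypothetical and ignore interest and discounting.
    """
    cumulative = 0.0
    cash_flow = first_year_cash_flow
    for year in range(1, max_years + 1):
        cumulative += cash_flow
        if cumulative >= capex:
            return year
        cash_flow *= 1 + growth_rate  # next year's cash flow grows at a fixed rate
    return None


# Hypothetical scenario, in billions of dollars.
CAPEX = 500.0
DEBT_MATURITY_YEARS = 10

for growth in (0.20, 0.10):
    year = payback_year(CAPEX, first_year_cash_flow=30.0, growth_rate=growth)
    status = "before" if year is not None and year <= DEBT_MATURITY_YEARS else "after"
    print(f"growth {growth:.0%}: payback in year {year}, {status} debt maturity")
```

With these made-up inputs, 20% annual growth recovers the outlay in year 9, while 10% growth pushes payback to year 11, past the assumed maturity. A gap of that kind between optimistic and conservative demand forecasts is what would make a lender hesitate.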

This reveals a maturing market. The "build it and they will come" mentality, which often characterized early tech booms, is being replaced by rigorous **enterprise demand assessment**. Are enterprises truly ready to sign contracts committing to the capacity Stargate promises?

The Demand Check: Is Enterprise Appetite Keeping Pace with Lab Ambition?

This is perhaps the most critical takeaway for strategic planners. An infrastructure project of this magnitude is not just for OpenAI’s internal research; it must be justified by massive, dedicated enterprise contracts. This brings us to the fourth crucial factor, after compute cost, partner friction, and investor caution: enterprise demand for AI compute capacity.

While headlines scream about AI breakthroughs, many companies are still struggling to integrate current-generation models (GPT-4, Claude 3) effectively into their workflows. ROI on these foundational models can be murky, leading to caution when committing to multi-year, highly specialized infrastructure leases. If the promised ROI from next-gen AI doesn't immediately materialize for large corporate clients, then the market for dedicated, sovereign AI clouds shrinks dramatically.

The stall may signal a necessary pause where infrastructure development must align more closely with proven, demonstrable enterprise consumption patterns. We might see a shift from building monolithic "mega-clusters" to developing smaller, geographically diverse, and modular AI compute units that scale based on immediate customer acquisition, rather than purely on research ambition.

Future Implications: The Decentralization of Compute Power

What does the reported stall of Stargate mean for the future of AI?

1. Hyper-Scaling Becomes Collaborative, Not Monolithic

The dream of one entity owning a single, world-dominating compute cluster is likely being replaced by a more distributed, risk-sharing model. Future mega-projects will look less like singular fiefdoms and more like consortiums where costs, governance, and hardware choices are spread across multiple interested parties—perhaps even nation-states looking to secure compute sovereignty.

2. The Rise of Specialized AI Silicon

Depending on Nvidia GPUs for every step of AI development creates a single point of failure. When disputes arise over infrastructure, control over the silicon (whether Nvidia GPUs, Google's custom TPUs, or in-house accelerators developed by cloud providers such as Microsoft) becomes a bargaining chip. The pressure will intensify for companies like OpenAI to diversify hardware partners and reduce their reliance on a single, monolithic stack.

3. Increased Focus on Efficiency Over Raw Size

The market reaction to the Stargate stall will likely push researchers to prioritize algorithmic efficiency. If building the next giant cluster is prohibitively difficult, the incentive increases dramatically to achieve similar performance gains with fewer parameters or less training time. The goal shifts from brute force compute to elegant, efficient computation.
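To see why fewer parameters translate directly into less compute, a common rule of thumb for dense transformer training is that total compute is roughly 6 × parameters × training tokens. The sketch below applies that approximation; the rule itself is not from this article, and the model sizes, per-GPU throughput, and utilization figure are hypothetical placeholders.

```python
def training_flops(params, tokens):
    """Approximate training compute using the C ~ 6 * N * D rule of thumb."""
    return 6 * params * tokens


def gpu_days(flops, flops_per_gpu_per_sec, utilization):
    """Convert total FLOPs into GPU-days at a given sustained utilization."""
    seconds = flops / (flops_per_gpu_per_sec * utilization)
    return seconds / 86_400  # seconds in a day


# Hypothetical models trained on the same number of tokens.
baseline = training_flops(params=1e12, tokens=1e13)
efficient = training_flops(params=5e11, tokens=1e13)

# Hypothetical accelerator: 1e15 FLOP/s peak at 40% sustained utilization.
for name, flops in (("baseline", baseline), ("efficient", efficient)):
    days = gpu_days(flops, flops_per_gpu_per_sec=1e15, utilization=0.4)
    print(f"{name}: {flops:.1e} FLOPs, about {days:,.0f} GPU-days")
```

Under this approximation, halving the parameter count at a fixed token budget halves the compute bill, which is why an algorithmic advance that preserves quality at a smaller size is worth as much as a proportionally larger cluster.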

Actionable Insights for Today’s Leaders

For organizations looking to navigate this turbulent infrastructure landscape, several actions are prudent:

- Diversify Cloud Strategy Now: Do not allow a single cloud provider to become the sole gatekeeper for your most critical AI workloads. Even if you primarily use Azure, begin stress-testing workloads on GCP or AWS to build negotiating leverage and continuity options.

- Scrutinize Long-Term CapEx Commitments: Be wary of infrastructure commitments that rely on the successful launch of "next-generation" models that haven't been fully defined. Focus on pay-as-you-go or capacity reservation models that allow for greater flexibility.

- Invest in Model Optimization: Direct R&D budgets toward techniques like quantization, distillation, and fine-tuning to maximize the utility of currently available compute resources, rather than waiting for the next $100 billion cluster to come online.

- Monitor Geopolitical Compute Alliances: As projects stall due to corporate friction, watch for governments (US, EU, Japan) stepping in to back similar infrastructure plays, potentially creating state-backed AI clouds that operate under different economic pressures than venture-funded endeavors.
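As an illustration of the model optimization point in the list above, here is a minimal sketch of symmetric 8-bit quantization, one of the simplest forms of the technique. It is a toy in pure Python with made-up weights and hypothetical helper names (`quantize_int8`, `dequantize`); production systems would rely on a framework's quantization tooling rather than anything like this.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Toy weights chosen for illustration only.
weights = [0.82, -1.27, 0.05, 0.40, -0.63]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", q)
print("max round-trip error:", max_error)
```

Each weight is stored in one byte instead of the four bytes a 32-bit float needs, so the same memory holds roughly four times as many parameters, at the cost of a rounding error bounded by half the scale factor.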

The $500 billion Stargate proposal was the ultimate expression of AI ambition—the desire to build a dedicated, unparalleled engine for intelligence. Its reported grounding serves as a vital reality check. Scaling AGI is not just a technological hurdle; it is a colossal financial, political, and strategic challenge that demands unprecedented levels of cooperation, which, as this incident suggests, is often the hardest component to secure.