The $500 Billion Friction: Why the Stargate AI Project Stall Signals a New Era of Compute Realism

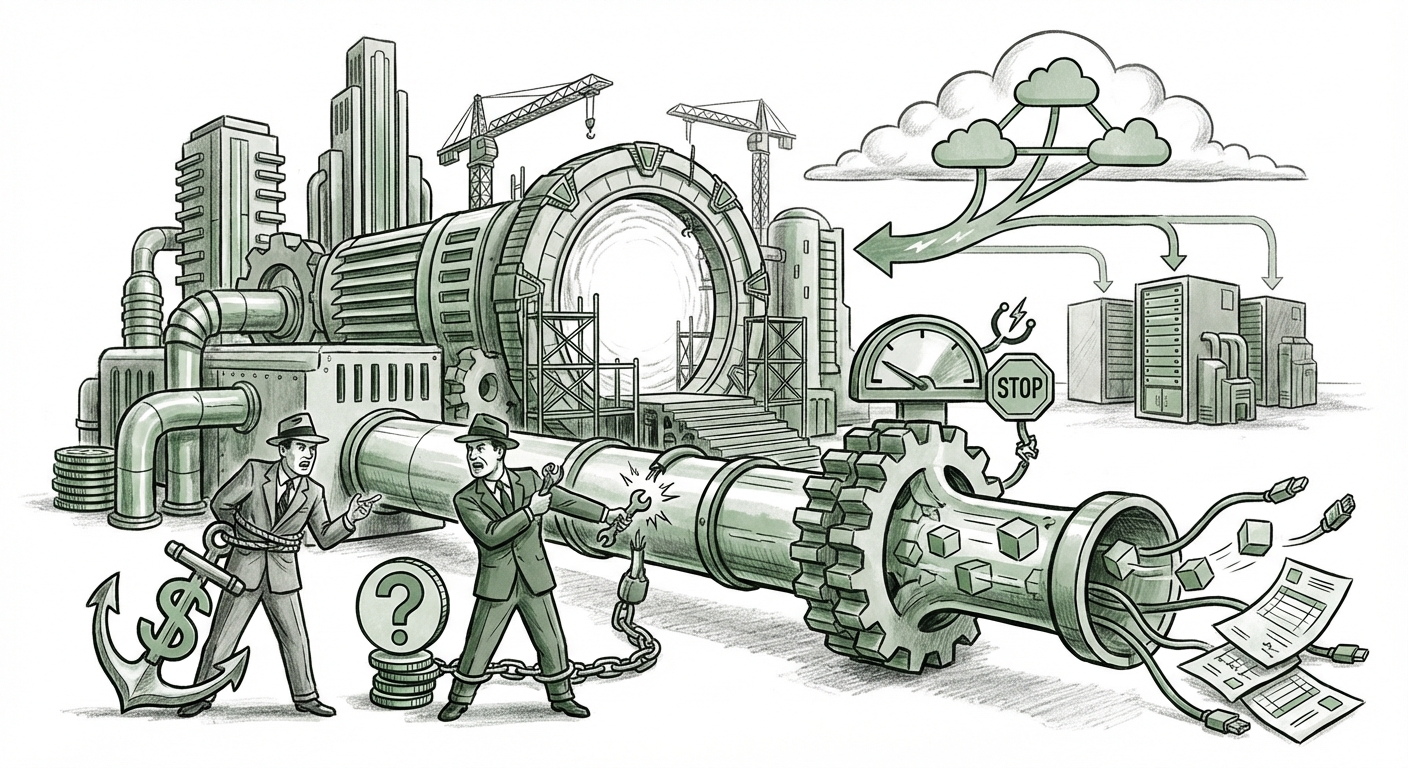

The ambition driving frontier Artificial Intelligence development is staggering. We are no longer talking about incremental software updates; we are discussing infrastructure projects that rival the scale of national budgets. The reported stall of the so-called "Stargate" project—a proposed $500 billion AI data center initiative involving OpenAI, Oracle, and SoftBank—serves as a crucial stress test for the entire AI ecosystem.

This isn't merely a boardroom dispute; it is a warning signal that exposes the friction points where technological hunger meets physical reality, financial risk, and corporate rivalry. As an analyst, I see this setback not as a failure, but as a necessary confrontation with the complexities of building the next generation of intelligence infrastructure.

The Unseen Foundation: Compute Bottlenecks as the New Scarcity

To understand why three massive entities might struggle to agree on a $500 billion plan, one must first understand the underlying scarcity. Building an AI data center isn't like building a standard office block; it requires specialized, cutting-edge components for which global manufacturing capacity has very little slack.

The core of the Stargate concept involves deploying hundreds of thousands of the most advanced AI accelerators available: chips like Nvidia's H100s and the newer Blackwell-generation B200s. These chips are the *gold* of the digital age, and the supply chain cannot keep up with demand.

For the business leader: Imagine trying to commission a fleet of 10,000 custom-built supercars, but the factory can only physically produce 1,000 per year. Disputes immediately arise over who gets priority access, who pays for the waiting time, and how the resulting cars will be utilized. The friction between OpenAI, Oracle, and SoftBank likely stems from this fundamental reality: the physical pie is too small for the projected plans.
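The arithmetic behind the supercar analogy can be sketched in a few lines of Python. This is a minimal illustration, not a model of any real chip allocation: the function names, the three unnamed buyers, and the pro-rata splitting rule are all assumptions made for the example. Only the 10,000 and 1,000 figures come from the analogy itself.

```python
def years_to_fulfil(order_size: int, annual_capacity: int) -> int:
    """Whole years needed to deliver an order at a fixed yearly output."""
    if annual_capacity <= 0:
        raise ValueError("annual_capacity must be positive")
    # Integer ceiling division: a partial final year still counts as a year.
    return -(-order_size // annual_capacity)


def pro_rata_allocation(requests: dict[str, int], supply: int) -> dict[str, int]:
    """Split a limited supply in proportion to each buyer's request.

    Pro-rata splitting is an assumed rule chosen for illustration; real
    contracts may prioritize by payment, timing, or strategic importance.
    Units lost to rounding down are left unallocated.
    """
    total = sum(requests.values())
    if total <= supply:
        return dict(requests)  # Enough supply: everyone gets what they asked for.
    return {buyer: supply * wanted // total for buyer, wanted in requests.items()}


# Figures from the analogy: 10,000 cars ordered, 1,000 built per year.
print(years_to_fulfil(10_000, 1_000))  # 10

# Hypothetical split of one year's output among three unnamed buyers.
requests = {"buyer_a": 5_000, "buyer_b": 3_000, "buyer_c": 2_000}
print(pro_rata_allocation(requests, 1_000))
```

Under these assumptions the full order takes ten years to deliver, and in any single year each buyer receives only a tenth of what it asked for, which is exactly the kind of shortfall that invites disputes over priority.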

Reporting on the hardware market is consistent with this constraint. Industry reports have repeatedly cited supply constraints for these high-demand GPUs, along with the long lead times required to secure power contracts and construct facilities large enough to house them. This tangible bottleneck underscores the severity of the challenge OpenAI faces in its quest for dedicated, massive-scale computing.

The Politics of Partnership: When Cloud Loyalty Collides with Ambition

OpenAI’s current operational backbone is heavily intertwined with Microsoft Azure. This relationship functions under a highly favorable, though somewhat captive, arrangement. The Stargate proposal, involving competitors like Oracle and strategic entities like SoftBank, represents an attempt to achieve true compute independence—a necessity if OpenAI intends to train models significantly larger and more complex than GPT-4.

However, establishing a new, multi-party infrastructure consortium creates political and operational nightmares:

- Responsibility Allocation: Who manages the physical security? Who owns the failure recovery protocols? Who guarantees uptime if the system relies on Oracle’s cloud services while SoftBank provides the financing structure?

- Data Sovereignty and Control: In a traditional hyperscaler relationship (like OpenAI/Microsoft), the lines of control, while complex, are ultimately dictated by the master agreement. A three-way split introduces ambiguity over who has the ultimate veto or priority access during peak demand.

- Competitive Friction: Oracle is a competitor to Microsoft in the cloud space. Having a massive, critical workload that requires deep integration with Oracle services while maintaining a strategic partnership with Microsoft creates unavoidable strategic tension.

This dynamic contrasts sharply with the established AI investment models. When Microsoft invests billions in OpenAI, the structure is relatively clear: capital in exchange for optimized access and strategic alignment. Stargate attempts to create a new model—a specialized, neutral infrastructure fund—and the complexities of sharing risk and reward among rivals appear to be overwhelming the initial negotiations.

The $500 Billion Question: Financial Viability and ROI

Perhaps the most potent reason for the stall is lender hesitation. A half-trillion dollars is not just a large number; it represents a financial commitment based on projections of future AI service revenue that are inherently uncertain. Lenders and financial partners are naturally cautious when the required capital expenditure (CapEx) dwarfs the currently visible, contracted revenue streams.

The Cost Curve Problem: Training models is increasingly expensive. Some estimates for the next generation of frontier models suggest computation costs could exceed $10 billion per run. Lenders need assurance on two fronts: that the infrastructure built today will not become obsolete or underutilized by the time the next, more efficient generation of chips arrives, and that the anticipated capabilities will justify the massive upfront investment.
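One rough way to see why lenders hesitate is to compare the revenue needed to recover the capital outlay with the revenue already under contract. The sketch below is a deliberately simplified back-of-the-envelope calculation: the five-year useful life and the $25 billion of contracted revenue are hypothetical placeholders, not reported figures, and the calculation ignores interest, operating costs, and residual hardware value.

```python
def required_annual_revenue(capex: float, useful_life_years: float) -> float:
    """Revenue per year needed to recover capex over the hardware's useful life.

    Straight-line recovery only; financing costs and opex are ignored.
    """
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return capex / useful_life_years


def coverage_ratio(contracted_annual_revenue: float, capex: float,
                   useful_life_years: float) -> float:
    """Share of the required annual revenue that is already contracted."""
    return contracted_annual_revenue / required_annual_revenue(capex, useful_life_years)


CAPEX = 500e9            # The headline $500 billion figure.
USEFUL_LIFE_YEARS = 5    # Hypothetical: assumed life before chips are outdated.
CONTRACTED = 25e9        # Hypothetical: assumed contracted revenue per year.

print(required_annual_revenue(CAPEX, USEFUL_LIFE_YEARS))     # 100000000000.0
print(coverage_ratio(CONTRACTED, CAPEX, USEFUL_LIFE_YEARS))  # 0.25
```

With these placeholder inputs, the project would need $100 billion a year just to recover its cost, and contracted revenue would cover a quarter of that. A shorter useful life, which is what faster chip generations imply, pushes the required figure higher still.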

If OpenAI must "fundamentally rethink its strategy," it likely means shifting from an aggressive "build it now and figure out the service revenue later" approach to a more disciplined, staged financing model. This realism is essential; even world-changing technology cannot defy basic financial discipline indefinitely.

What This Means for the Future of AI Infrastructure

The friction surrounding Stargate acts as a powerful corrective lens, focusing the industry on three critical future pathways for AI scaling:

1. The Resilience of the Hyperscaler Oligopoly

The stall reinforces the dominance of the existing cloud giants. Microsoft, Amazon (AWS), and Google Cloud possess the regulatory approvals, existing supply chain leverage, operational muscle, and—crucially—the necessary *balance sheets* to absorb these massive, multi-year infrastructure gambles. For most AI developers, the path of least resistance and highest reliability will continue to be renting time on Azure, AWS, or GCP. Building independent infrastructure at this scale is proving too complex politically and logistically.

2. Decentralization Through Modularization, Not Monoliths

The stall of the singular, monolithic "Stargate" concept may lead to a pivot toward decentralized compute strategies. Instead of one massive, single-purpose facility controlled by a consortium, we may see:

- Regional Pods: Smaller, highly customized AI compute pods deployed across different jurisdictions, perhaps involving sovereign wealth funds interested in technology sovereignty rather than pure profit maximization.

- Specialized Hardware Stacks: Projects focusing only on inference (running already-trained models) rather than the astronomical cost of training, which requires the absolute latest, most expensive chips.

3. The Rise of AI Infrastructure as an Asset Class

For financiers, Stargate’s troubles signal that pure, non-contracted AI infrastructure is extremely high-risk. However, it simultaneously validates AI infrastructure as a necessary asset class. The future will see creative financial instruments—perhaps securitizing future GPU access or long-term power purchase agreements (PPAs) explicitly tied to AI workloads—that allow more varied capital sources (pension funds, insurance companies) to participate without taking on the operational headaches of OpenAI or Oracle.

Practical Implications and Actionable Insights

How should businesses and technology leaders interpret this friction?

For Large Enterprises (The Consumers of AI):

Actionable Insight: Avoid "Compute Lock-In" Ambiguity. Do not commit all future AI workloads to a single provider until infrastructure stability is guaranteed. Maintain flexibility across the major cloud providers. If your core application relies on custom infrastructure only available to the builder (like the proposed Stargate), you are exposed to the strategic whims and partner disputes of that builder.

For Startups and Mid-Sized AI Developers:

Actionable Insight: Optimize for Efficiency, Not Scale (Yet). Focus relentlessly on optimizing your models, through techniques such as quantization and smaller parameter counts, so that they run on existing, proven hardware stacks. The most successful startups will be those that can deliver massive value using 10,000 GPUs rather than needing 1,000,000. The race for the biggest model may be slowing; the race for the most efficient model is accelerating.
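To make the efficiency point concrete, here is a small sketch of how quantization shrinks the memory needed just to hold a model's weights. The 70-billion-parameter model is a hypothetical example, and the calculation covers weights only: it ignores activations, caches, and the small metadata overhead that real quantization schemes add.

```python
def weight_memory_gb(num_params: int, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    if num_params < 0 or bits_per_weight <= 0:
        raise ValueError("num_params must be >= 0 and bits_per_weight > 0")
    bytes_total = num_params * bits_per_weight / 8  # 8 bits per byte
    return bytes_total / 1e9


HYPOTHETICAL_PARAMS = 70_000_000_000  # Assumed model size, for illustration only.

for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(HYPOTHETICAL_PARAMS, bits):.0f} GB")
```

For this assumed model, moving from 16-bit to 4-bit weights cuts the footprint from 140 GB to 35 GB, which can be the difference between needing several accelerators and fitting on one.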

For Hardware and Data Center Providers:

Actionable Insight: Focus on Standardization and Throughput. The industry needs standardization to de-risk massive contracts. Providers who can offer modular, pre-qualified, deployable AI "racks" with guaranteed power and cooling capacity, rather than bespoke $500 billion blueprints, will capture the immediate wave of distributed AI buildout.

Conclusion: Reality Check for the AI Superstructure

The dream of the Stargate project—a dedicated, self-funded, unified super-brain factory—has collided with the hard realities of supply chain constraints, competitive corporate landscapes, and the unforgiving math of financial returns. This stall is a powerful reminder that even organizations backed by the world’s most ambitious technology leaders are tethered to physics and finance.

What emerges from this necessary strategic rethink will be a more mature, diversified, and perhaps more sustainable path forward for AI scaling. The next phase won't be defined by the size of the ambition alone, but by the robustness of the partnerships and the grounded reality of the supply chain that underpins it all.