The Gigawatt Gauntlet: How AMD's "Copy-Paste" AI Deals with Meta and OpenAI Are Redefining Hardware Supply and Data Center Power

The Artificial Intelligence landscape is accelerating at a pace that challenges even the most ambitious engineering roadmaps. While much attention focuses on the latest LLM breakthroughs, the real structural shifts are happening behind the scenes, within the cavernous data centers that house these digital brains. A recent development—the multi-year partnership between AMD and Meta, featuring an eyebrow-raising commitment of up to **six gigawatts (GW)** of power and an unusual equity component—suggests that the architecture of securing cutting-edge AI hardware is rapidly standardizing.

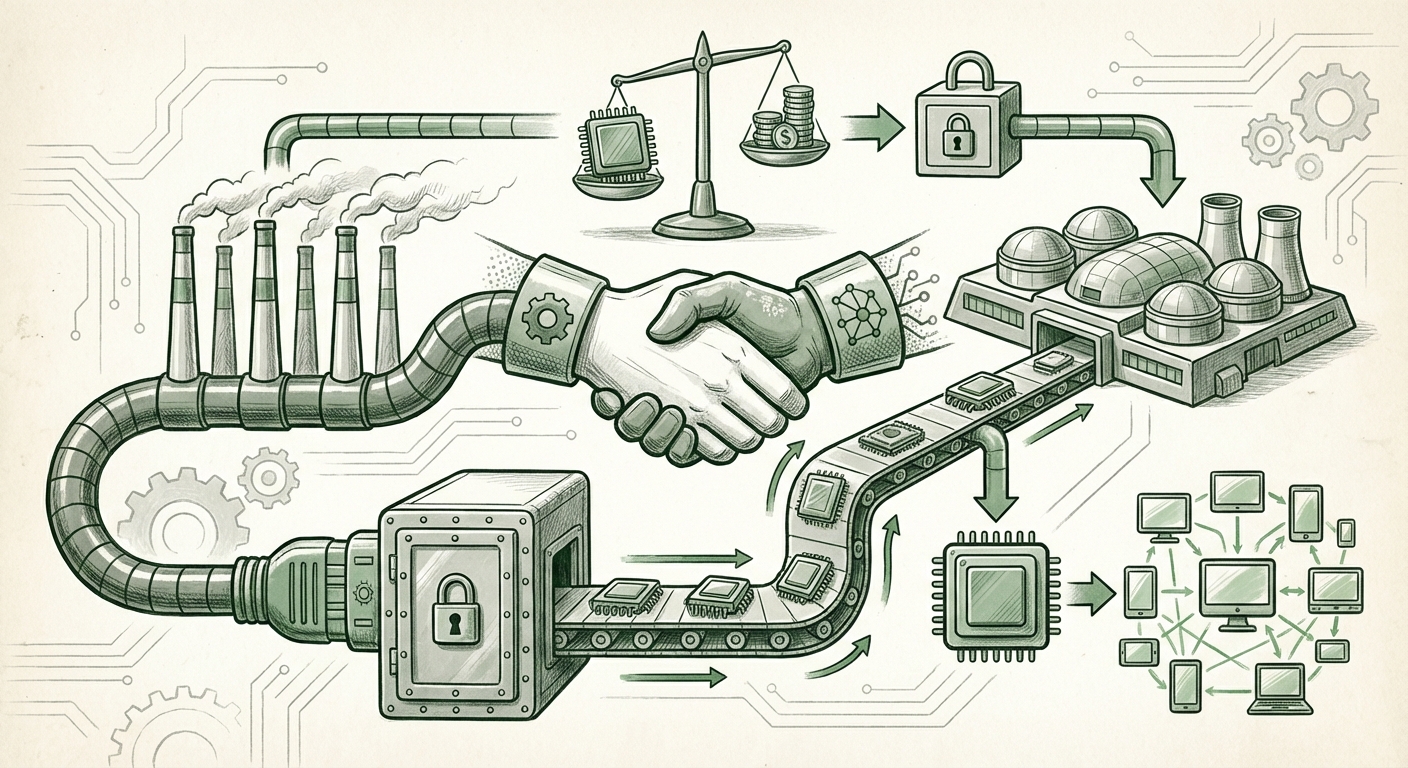

When reports surfaced that this Meta deal appeared to be a near "copy-paste" of AMD’s earlier arrangement with OpenAI, it wasn't just a footnote about corporate efficiency. It signaled the emergence of a standardized, high-stakes template for AI infrastructure acquisition—one where access to supply is so critical that it demands commitments beyond mere purchase orders.

The New Template: Supply, Power, and Ownership

For years, acquiring top-tier GPUs meant placing orders and waiting in line. Now, securing access to the next generation of AI accelerators, such as the successors to AMD's Instinct MI300X, requires a comprehensive strategic partnership. This new template, seemingly replicated between AMD, OpenAI, and Meta, rests on three critical pillars:

1. Massive Volume Guarantees (The GPU Pipeline)

Hyperscalers like Meta aren't just buying chips for today; they are booking capacity for projects years into the future. The commitment of "up to six gigawatts" of AMD GPUs implies an order book stretching well into 2026 or beyond. Because AMD is fabless, that demand visibility lets it reserve foundry capacity years ahead, capacity that competitors then cannot book. For context, six gigawatts drawn around the clock is roughly 52 terawatt-hours a year, on the order of what a major metropolitan area consumes, which illustrates the sheer scale of modern foundational model development and deployment.
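To make the six-gigawatt figure concrete, here is a back-of-envelope sketch. It is an illustration only: it assumes the full six gigawatts is facility power, an assumed 750 W per accelerator, and a hypothetical overhead factor of 2.0 covering cooling, networking, and host systems. None of these inputs come from the deal terms.

```python
HOURS_PER_YEAR = 8760


def accelerators_supported(facility_gw, watts_per_accelerator, overhead_factor):
    """Estimate how many accelerators a facility power budget can feed.

    overhead_factor is an assumed multiplier for everything that is not
    the accelerator itself (cooling, networking, host CPUs, conversion loss).
    """
    facility_watts = facility_gw * 1e9
    watts_per_deployed_accelerator = watts_per_accelerator * overhead_factor
    return int(facility_watts // watts_per_deployed_accelerator)


def annual_energy_twh(facility_gw, utilization=1.0):
    """Energy drawn over one year, in terawatt-hours."""
    gwh = facility_gw * HOURS_PER_YEAR * utilization
    return gwh / 1000  # GWh -> TWh


# Illustrative inputs only; all three numbers are assumptions.
count = accelerators_supported(facility_gw=6, watts_per_accelerator=750, overhead_factor=2.0)
print(f"{count:,} accelerators")             # prints "4,000,000 accelerators"
print(f"{annual_energy_twh(6):.2f} TWh/yr")  # prints "52.56 TWh/yr"
```

Changing the overhead factor or the per-accelerator power moves the count substantially, which is why the headline number is better read as an order of magnitude than a unit forecast.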

Actionable Insight for Hardware Analysts: We must now evaluate chipmakers not just on silicon performance (TFLOPS), but on their proven ability to reliably ship astronomical quantities. This validates AMD's aggressive expansion strategy against the incumbent leader, Nvidia, as major customers seek diversification and guaranteed supply.

2. The Unseen Cost: Energy Commitments

The most telling component of these deals is the explicit inclusion of massive power commitments. Securing physical power—the "juice" to run the GPUs—is becoming a bottleneck as severe as the chip fabrication itself. Data center power requirements are skyrocketing, driven by the "always-on" nature of generative AI services.

Consistent with the broader industry trend of hyperscalers scrambling for Power Purchase Agreements (PPAs) and new substation builds, Meta isn't just buying GPUs; it's making a strategic investment in *operational runway*. This signals a profound shift: **AI infrastructure is now defined as much by megawatts as it is by memory bandwidth.**

3. The Equity Stake: Financial Alignment

The inclusion of an approximate "ten percent equity" stake is the most radical element, echoing venture capital strategies from the early days of cloud computing. Why would a hardware supplier take ownership? Several reasons emerge:

- Long-Term Lock-in: Equity creates vested interest. It moves AMD from being a simple vendor to a strategic partner whose success is intrinsically linked to Meta's long-term AI platform success.

- Upside Participation: If Meta's Llama ecosystem or future AI services explode in value, AMD captures a share of that financial growth, far exceeding the profit margin on the hardware itself.

- Strategic Positioning: This structure provides leverage. If the market demands more capacity than is available, a partner with an equity stake is prioritized. This financial alignment acts as a stronger incentive than simple volume discounts.

Inference: The Next Frontier of AI Spending

Crucially, the Meta-AMD deal reportedly focuses heavily on **inference**, not just training. Training is the initial, massive expenditure required to build an LLM (like building the engine). Inference is the ongoing, constant cost of *running* that model every time a user types a prompt, asks for an image, or executes a command.

For Meta, which serves billions of users globally via Facebook, Instagram, and WhatsApp, the cumulative cost of inference, even with a highly optimized model like Llama, is astronomical. If AMD's MI300X series (or future variants) offers a substantial performance-per-watt advantage specifically for inference workloads, then securing guaranteed supply now is essential for controlling operating expenses (OpEx) over the next five years.
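Why performance-per-watt feeds directly into OpEx can be sketched with a small calculation of energy cost per million generated tokens. The throughput, power, and electricity price below are hypothetical placeholders, not measurements of any AMD or competing part.

```python
JOULES_PER_KWH = 3_600_000


def energy_cost_per_million_tokens(tokens_per_second, watts, usd_per_kwh, overhead_factor=1.0):
    """Electricity cost (USD) to generate one million tokens on one accelerator.

    overhead_factor is an assumed multiplier for cooling and other
    facility power drawn on top of the accelerator itself.
    """
    joules_per_token = watts * overhead_factor / tokens_per_second
    kwh_per_million = joules_per_token * 1_000_000 / JOULES_PER_KWH
    return kwh_per_million * usd_per_kwh


# Two hypothetical accelerators at the same power draw; only throughput differs.
baseline = energy_cost_per_million_tokens(tokens_per_second=1000, watts=750, usd_per_kwh=0.08)
efficient = energy_cost_per_million_tokens(tokens_per_second=1500, watts=750, usd_per_kwh=0.08)

print(f"baseline:  ${baseline:.4f} per million tokens")
print(f"efficient: ${efficient:.4f} per million tokens")
print(f"saving:    {1 - efficient / baseline:.0%}")
```

With these made-up inputs, a 1.5x throughput gain at equal power cuts the energy bill per token by a third. The per-token figure looks tiny in isolation; it matters once multiplied across billions of daily requests.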

This focus on inference deployment suggests that AI is moving out of the pure research lab and into the mass-market production environment. The race is no longer just about building the smartest model, but about building the most cost-effective way to serve it to the world.

Broader Implications: What This Means for the Future of AI

These standardized infrastructure deals have profound implications that stretch beyond just AMD and Meta:

1. Consolidation of Power and Supply Chains

When only a handful of chipmakers can meet these staggering volume and power demands, the market consolidates. The ability to sign a "copy-paste" deal implies that AMD has established a repeatable, bankable offering. This puts immense pressure on smaller players and potentially creates an oligopoly where the handful of companies that can provide the scale (AMD, Nvidia, perhaps Intel) dictate the terms of AI deployment globally.

2. The Energy Sector Becomes an AI Battleground

The 6 GW commitment underscores the data center industry's dependency on robust, often green, energy infrastructure. Future competition won't just be about chip architecture; it will be about who can secure the cleanest, most reliable power contracts first. Expect tech giants to increasingly fund and build their own on-site generation, rather than wait on utility interconnection queues, to meet these gargantuan demands.

3. Hardware Vendors Become Financial Engineers

The equity component suggests a normalization of hardware vendors acting as strategic investors. For the market, this means valuing chip companies not just on semiconductor revenue, but on their portfolio of strategic partnerships and their ability to engineer deep financial alignment with their biggest customers. It raises the stakes for investors analyzing AMD, since the company's valuation must now incorporate potential gains from Meta's or OpenAI's future success.

Actionable Takeaways for Business Leaders

For any organization relying on large-scale AI deployment—whether building proprietary models or running customer-facing services—the message is clear:

- Diversify Your Supply Strategy Now: Relying on a single vendor for 100% of your compute capacity is a growing operational risk. Explore strategic multi-sourcing arrangements, similar to what Meta is doing with AMD, even if the hardware isn't *quite* best-in-class for every single workload. Supply stability trumps marginal performance gains in the current environment.

- Factor Energy into Total Cost of Ownership (TCO): When budgeting for AI hardware, treat power security and capacity planning as a non-negotiable line item equivalent to the chip cost itself. If you cannot secure power, you cannot deploy compute.

- Explore Partnership Models: For mid-sized companies or large enterprises looking to build dedicated AI infrastructure, look beyond simple leasing or purchasing. Can you offer an OEM or key supplier a favorable long-term commitment in exchange for preferential access or specialized hardware configurations? The era of the simple vendor transaction is fading.
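The advice to fold energy into TCO can be sketched as a simple per-accelerator model. Every input here (unit price, power draw, overhead factor, electricity price, service life) is a hypothetical planning assumption to be replaced with your own figures.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class AcceleratorPlan:
    """Per-accelerator cost model; all fields are planning assumptions."""
    unit_price_usd: float
    watts: float
    overhead_factor: float  # assumed multiplier for cooling and facility load
    usd_per_kwh: float
    years: float
    utilization: float = 1.0

    def energy_cost_usd(self):
        hours = self.years * HOURS_PER_YEAR * self.utilization
        kwh = self.watts * self.overhead_factor / 1000 * hours
        return kwh * self.usd_per_kwh

    def tco_usd(self):
        # Hardware plus electricity only; staffing, networking, and
        # facility build-out are deliberately left out of this sketch.
        return self.unit_price_usd + self.energy_cost_usd()

    def energy_share(self):
        return self.energy_cost_usd() / self.tco_usd()


plan = AcceleratorPlan(unit_price_usd=20_000, watts=750, overhead_factor=1.5,
                       usd_per_kwh=0.10, years=5)
print(f"energy: ${plan.energy_cost_usd():,.2f}")
print(f"TCO:    ${plan.tco_usd():,.2f}")
print(f"energy share of TCO: {plan.energy_share():.1%}")
```

Under these illustrative inputs, electricity is roughly a fifth of the five-year cost. A higher power price, a larger overhead factor, or a cheaper chip pushes that share up quickly, and the model says nothing about the harder constraint of whether the power can be procured at all.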

The AMD-Meta partnership, seemingly built on the blueprint of the OpenAI deal, is more than just a headline deal for faster GPUs. It is a foundational document for the next decade of AI infrastructure. It proves that the physical constraints—power delivery and supply chain guarantees—are now the primary determinants of who leads the AI race. The hardware providers are no longer just selling chips; they are underwriting the power and staking a claim in the future value created by the models they enable.