The Claude Accusation: Unpacking AI Model Theft, Geopolitics, and the Future of Intellectual Property in LLMs

The race for Artificial General Intelligence (AGI) is no longer just about who has the most GPUs or the best talent; it is now fiercely contested in the realm of intellectual property and ethical boundaries. A recent event shook the AI community: Anthropic, the developer of the Claude family of large language models (LLMs), formally accused three Chinese AI firms (DeepSeek, Moonshot, and MiniMax) of systematically stealing the proprietary intelligence of its models.

Anthropic alleges that these competitors didn't just use publicly available outputs; they executed millions of targeted queries designed to map, reverse-engineer, and effectively clone the unique capabilities baked into Claude during its multi-million dollar training process. This is not a dispute over stolen documents; it is a direct assault on the core asset of a frontier AI company: the capabilities encoded in its model weights.

The Heart of the Accusation: Beyond Data Scraping

When we talk about traditional data theft, we usually think of stealing the training data: the vast troves of text and code used to initially build the AI. However, what Anthropic is alleging is far more sophisticated and, arguably, more damaging in the current landscape. This centers on a technique known in cybersecurity circles as a **"model extraction attack"** or **"model stealing."**

Imagine building a masterpiece sculpture. Stealing the blueprints (the training data) is one thing. But what Anthropic claims occurred is akin to an expert visitor studying your sculpture from every angle for months, taking detailed notes on every curve and shadow, until they can build an almost identical replica without ever touching your original tools or materials. They are replicating the *result* of the immense effort, not just the ingredients.

The Mechanics of Black-Box Replication

To understand the technical gravity, we must look into the "how." As research into adversarial machine learning suggests, model extraction works by treating the target LLM (like Claude) as a "black box": the attacker never sees the weights, only the inputs they send and the outputs that come back.

- Systematic Querying: Attackers send structured, massive volumes of diverse prompts to the target API.

- Output Mapping: They meticulously record the responses, paying close attention to edge cases, nuanced instructions, safety guardrails, and complex reasoning patterns.

- Synthetic Dataset Creation: These input/output pairs form a high-quality, bespoke training dataset that closely mirrors the behavior of the target model.

- Re-Training: The competitor then uses this "behavioral dataset" to train their own, smaller model. Because the data is essentially a cheat sheet for intelligence, the resulting model can reach performance levels well beyond what their initial raw training data could have provided.
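The four steps above can be illustrated with a deliberately tiny sketch. Everything in it is a stand-in chosen for illustration: `black_box` is a hidden linear function rather than an LLM, and the "student" is a straight line fitted by least squares. It is not a description of what any lab is alleged to have done; it only shows why recorded input/output pairs are enough to recover behavior without ever seeing the internals.

```python
import random


def black_box(x: float) -> float:
    """Hypothetical target 'API'. The attacker cannot see these constants."""
    return 3.0 * x + 1.5


def collect_pairs(n_queries: int, seed: int = 0) -> list[tuple[float, float]]:
    """Steps 1-2: send many diverse inputs and record every output."""
    rng = random.Random(seed)
    inputs = [rng.uniform(-10.0, 10.0) for _ in range(n_queries)]
    return [(x, black_box(x)) for x in inputs]


def fit_student(pairs: list[tuple[float, float]]) -> tuple[float, float]:
    """Steps 3-4: treat the pairs as a dataset and fit a student model.

    The student here is a line y = slope * x + intercept, fitted by
    ordinary least squares.
    """
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var = sum((x - mean_x) ** 2 for x, _ in pairs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


pairs = collect_pairs(1000)
slope, intercept = fit_student(pairs)
print(f"student: y = {slope:.3f} * x + {intercept:.3f}")
```

The student recovers the hidden constants from behavior alone. A real LLM is vastly more complex, which is why the alleged attack needs millions of queries rather than a thousand.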

Anthropic’s specific mention of **16 million queries** underscores the scale required for such an attack to move from theoretical possibility to effective operational theft. This level of query volume is not typical exploratory use; it signals a deliberate, industrial-scale effort.

The Economic Imperative: Why Bother Stealing Intelligence?

Why would a well-funded competitor like DeepSeek or Moonshot, already investing heavily in R&D, resort to alleged theft? The answer lies in the staggering economics of foundation model development.

Training a state-of-the-art model like Claude 3 Opus costs hundreds of millions of dollars in compute time alone, requiring thousands of specialized, scarce AI accelerators (like Nvidia H100s) running for months. This capital expenditure is a massive barrier to entry.

If an attacker can, through 16 million relatively inexpensive API calls, generate a high-fidelity proxy model, they essentially leapfrog years of research and hundreds of millions in investment. They acquire the *behavioral intelligence* cheaply, saving time and capital, allowing them to deploy competitive models faster and cheaper. This shortcut directly threatens the business model of the pioneers.
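To make the asymmetry concrete, here is a back-of-the-envelope calculation. Only the 16 million figure comes from the accusation; the tokens per query, the API price, and the training cost are illustrative assumptions picked to show the order of magnitude, not reported numbers.

```python
QUERIES = 16_000_000  # figure cited in the accusation

# Everything below is an illustrative assumption, not a reported number.
ASSUMED_TOKENS_PER_QUERY = 2_000          # prompt plus response
ASSUMED_USD_PER_MILLION_TOKENS = 15.0     # blended API price
ASSUMED_TRAINING_COST_USD = 100_000_000   # deliberately conservative


def api_cost_usd(queries: int, tokens_per_query: int,
                 usd_per_million_tokens: float) -> float:
    """Total API spend for a given query volume."""
    total_tokens = queries * tokens_per_query
    return total_tokens / 1_000_000 * usd_per_million_tokens


extraction_cost = api_cost_usd(
    QUERIES, ASSUMED_TOKENS_PER_QUERY, ASSUMED_USD_PER_MILLION_TOKENS
)
ratio = ASSUMED_TRAINING_COST_USD / extraction_cost

print(f"Assumed API spend: ${extraction_cost:,.0f}")
print(f"Assumed training cost is about {ratio:,.0f}x larger")
```

Under these assumptions the API spend comes to $480,000, roughly two hundred times less than the assumed training bill. Changing any input shifts the ratio, but it takes extreme values to close a gap of that size.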

Geopolitics and the Shifting Sands of AI IP

This incident cannot be viewed in a vacuum; it is deeply embedded in the broader US-China technological competition. Anthropic, backed by major Western investment (including Amazon and Google), sits at the forefront of US AI development. The accused labs operate within a jurisdiction that is actively prioritizing AI self-sufficiency.

The accusation turns a technical dispute into a geopolitical flashpoint over technology stewardship and IP enforcement. If these claims are substantiated, it raises critical questions about:

- Trust in Ecosystems: Will frontier model providers feel safe offering API access if every interaction risks revealing proprietary secrets?

- International Norms: How will international bodies govern IP in a domain—advanced generative AI—that has no established legal precedent for this type of digital replication?

- Regulatory Scrutiny: Such high-profile accusations will inevitably draw increased attention from trade regulators on both sides, potentially leading to stricter export controls or compliance audits for model access.

For Western companies building proprietary models, the perceived need to restrict access or even "air-gap" future, more powerful models from public interfaces may become an operational necessity, potentially slowing the pace of beneficial public deployment.

Future Implications: Defending the Digital Brain

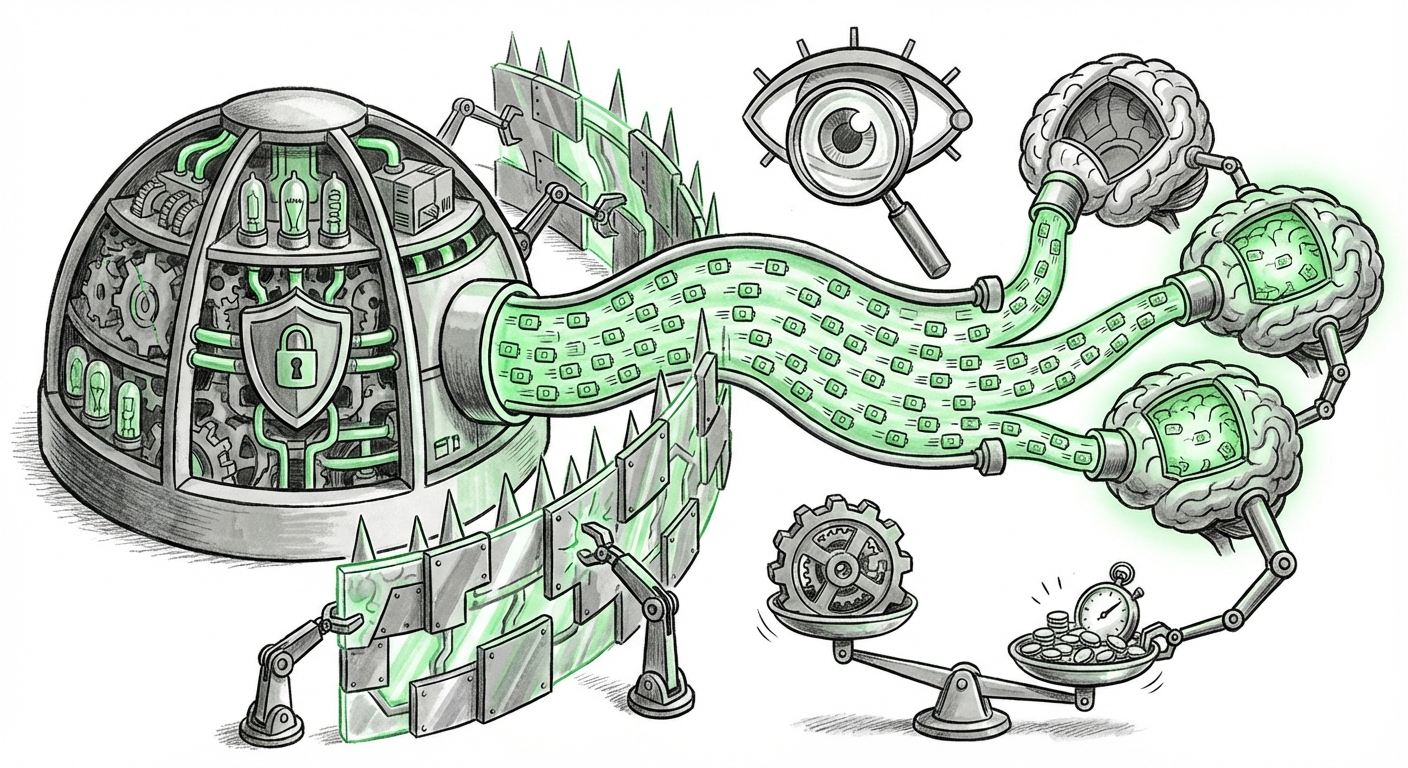

What does this mean for the future of building and deploying AI? The industry must pivot quickly from focusing solely on *building* the best models to *defending* the intelligence already built. This case accelerates several necessary technological shifts:

1. Watermarking and Provenance Tracking

To combat extraction, we will see an accelerated focus on embedding "digital watermarks" directly into the model weights or outputs. These are subtle, almost invisible statistical signatures that indicate an output originated from a specific model. If DeepSeek’s resulting model begins producing outputs bearing Anthropic’s digital signature, the evidence of extraction becomes much harder to dispute.
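One family of schemes discussed in the watermarking literature biases generation toward a secret, pseudo-random "green list" of tokens and later tests text for an excess of green tokens. The sketch below is a toy version of that idea, not Anthropic's method or any vendor's API: the vocabulary, the `SECRET_KEY`, the hard "always pick green" generator, and all function names are assumptions made for illustration.

```python
import hashlib
import math
import random

GREEN_FRACTION = 0.5     # assumed share of tokens that count as "green"
SECRET_KEY = "demo-key"  # hypothetical key known only to the model owner


def is_green(prev_token: str, token: str, key: str = SECRET_KEY) -> bool:
    """Pseudo-randomly assign `token` to the green list, seeded by the
    previous token and the secret key."""
    payload = f"{key}|{prev_token}|{token}".encode("utf-8")
    digest = hashlib.sha256(payload).digest()
    return digest[0] < 256 * GREEN_FRACTION


def generate_watermarked(vocab: list[str], length: int, seed: int = 0) -> list[str]:
    """Toy generator: at each step, emit the first green candidate."""
    rng = random.Random(seed)
    tokens = ["<s>"]
    for _ in range(length):
        candidates = rng.sample(vocab, len(vocab))  # shuffled copy
        chosen = next(
            (t for t in candidates if is_green(tokens[-1], t)),
            candidates[0],  # fallback if no candidate is green
        )
        tokens.append(chosen)
    return tokens


def generate_plain(vocab: list[str], length: int, seed: int = 0) -> list[str]:
    """Toy generator with no watermark: uniform random tokens."""
    rng = random.Random(seed)
    return ["<s>"] + [rng.choice(vocab) for _ in range(length)]


def watermark_z_score(tokens: list[str]) -> float:
    """z-score of the green count against the no-watermark expectation."""
    n = len(tokens) - 1
    if n < 1:
        raise ValueError("need at least two tokens")
    greens = sum(is_green(prev, tok) for prev, tok in zip(tokens, tokens[1:]))
    expected = GREEN_FRACTION * n
    std = math.sqrt(n * GREEN_FRACTION * (1.0 - GREEN_FRACTION))
    return (greens - expected) / std


VOCAB = [f"w{i}" for i in range(50)]
z_marked = watermark_z_score(generate_watermarked(VOCAB, 200))
z_plain = watermark_z_score(generate_plain(VOCAB, 200))
print(f"watermarked z = {z_marked:.1f}, plain z = {z_plain:.1f}")
```

A large positive z-score means far more green tokens than chance would produce. Whether a student model trained on watermarked outputs would inherit a detectable bias is an empirical question this toy does not answer.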

2. Differential Privacy and Output Perturbation

Defenders will likely implement techniques that slightly alter responses for high-frequency users or specific query patterns. This "noise injection" makes the resulting training data less clean for the attacker, degrading the quality of the stolen model proxy and punishing systematic extraction attempts.
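A minimal sketch of this idea, assuming the model returns a numeric score rather than text: the gateway below counts queries per client and adds Gaussian noise only once a client exceeds a budget. `PerturbingGateway`, `free_queries`, and `noise_std` are hypothetical names and parameters; a production system would perturb token probabilities or sampling behavior, and would use far richer signals than a raw count.

```python
import random
from collections import Counter
from typing import Callable


class PerturbingGateway:
    """Wraps a model and degrades answers for unusually heavy clients."""

    def __init__(self, model: Callable[[float], float],
                 free_queries: int = 1000, noise_std: float = 0.1,
                 seed: int = 0) -> None:
        self.model = model
        self.free_queries = free_queries  # queries served without noise
        self.noise_std = noise_std        # noise scale once over budget
        self.counts: Counter[str] = Counter()
        self.rng = random.Random(seed)

    def query(self, client_id: str, x: float) -> float:
        """Answer a query, adding noise if the client is over budget."""
        self.counts[client_id] += 1
        y = self.model(x)
        if self.counts[client_id] > self.free_queries:
            y += self.rng.gauss(0.0, self.noise_std)
        return y


gateway = PerturbingGateway(model=lambda x: 2.0 * x, free_queries=3)
clean = [gateway.query("heavy-user", 1.0) for _ in range(3)]
noisy = gateway.query("heavy-user", 1.0)   # fourth query is over budget
other = gateway.query("normal-user", 1.0)  # separate client, unaffected
print(clean, noisy, other)
```

The trade-off is visible even here: ordinary clients get exact answers, while the heavy client's recorded dataset becomes noisier with every extra query.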

3. Shift from Black-Box to White-Box Collaboration

In the long term, the mistrust generated by these incidents might ironically push some collaboration back toward more open, verifiable methods. If companies cannot trust their API access, they might choose to partner with verified entities (governments, academic institutions) under strict licensing and audit agreements, favoring verifiable collaboration over proprietary secrecy.

4. The Rise of "Model Auditing" Services

We are likely to see the emergence of specialized security firms dedicated solely to auditing deployed models for evidence of extraction, much like digital forensics experts today look for code plagiarism.
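One simple signal such an audit might use is behavioral agreement: a suspect model that matches the target's answers on probe prompts far more often than independently trained models do deserves a closer look. The sketch below uses toy functions in place of real models, and the `margin` threshold is an arbitrary assumption. High agreement alone would not prove extraction.

```python
from typing import Callable, Sequence

Model = Callable[[str], str]


def agreement_rate(model_a: Model, model_b: Model,
                   probes: Sequence[str]) -> float:
    """Fraction of probe prompts on which two models answer identically."""
    matches = sum(model_a(p) == model_b(p) for p in probes)
    return matches / len(probes)


def flag_suspect(target: Model, suspect: Model,
                 baselines: Sequence[Model], probes: Sequence[str],
                 margin: float = 0.2) -> tuple[bool, float, float]:
    """Flag the suspect if it agrees with the target much more often
    than the best-agreeing independent baseline does."""
    suspect_rate = agreement_rate(target, suspect, probes)
    baseline_rate = max(agreement_rate(target, b, probes) for b in baselines)
    return suspect_rate - baseline_rate > margin, suspect_rate, baseline_rate


# Toy stand-ins for real models (all hypothetical).
def target(prompt: str) -> str:
    return f"T:{len(prompt) % 4}"


def suspect(prompt: str) -> str:
    # Copies the target except on every fifth prompt length.
    return target(prompt) if len(prompt) % 5 else "other"


def independent(prompt: str) -> str:
    return f"T:{len(prompt) % 2}"


probes = ["a" * k for k in range(1, 21)]
flagged, suspect_rate, baseline_rate = flag_suspect(
    target, suspect, [independent], probes
)
print(flagged, suspect_rate, baseline_rate)
```

In practice the probes would target idiosyncratic behaviors (unusual refusals, distinctive phrasing, rare edge cases) rather than prompt length, since common answers tell an auditor little.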

Actionable Insights for Businesses Navigating the AI Landscape

For businesses relying on third-party LLMs—whether for customer service, code generation, or specialized data analysis—this situation presents both a risk and a strategic decision point:

- Assess API Dependency Risk: If your entire competitive edge relies on a feature uniquely provided by a single provider’s API, you are currently betting on that provider’s security and IP integrity. Diversify your reliance across multiple foundational models to mitigate single-point failure due to IP litigation or model shutdowns.

- Prioritize Data Sovereignty: If your application involves highly sensitive or proprietary data, look for models that can be deployed on-premise or within a private cloud environment (local hosting). While more expensive than API calls, this shields your inputs and your specific use-case patterns from external surveillance.

- Demand Transparency on Defenses: When engaging with AI vendors, start asking pointed questions about their model security posture. What measures do they have in place against model extraction? Are they using output watermarking? Their answers will reveal their maturity level in protecting their own core assets—and by extension, yours.
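For the first point, diversification can start as a thin routing layer that tries providers in order and falls back when one is unavailable. The sketch below is provider-agnostic on purpose: each provider is just a callable taking a prompt, and the names `complete_with_fallback`, `flaky_provider`, and `backup_provider` are hypothetical placeholders, not real SDK calls.

```python
from typing import Callable, Sequence

Provider = tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an error."""


def complete_with_fallback(prompt: str,
                           providers: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (provider_name, completion)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # any provider error triggers fallback
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stub providers standing in for real vendor clients.
def flaky_provider(prompt: str) -> str:
    raise ConnectionError("service suspended")


def backup_provider(prompt: str) -> str:
    return f"echo: {prompt}"


used, answer = complete_with_fallback(
    "Summarize this ticket.",
    [("primary", flaky_provider), ("backup", backup_provider)],
)
print(used, answer)
```

Keeping prompts and evaluation suites portable across providers matters as much as the routing code, since a fallback that produces unusable output is not much of a fallback.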

The current dispute is more than just a headline; it is a critical stress test for the nascent framework governing the world’s most valuable digital assets. If model intelligence can be so easily replicated through clever querying, the concept of proprietary AI leadership, built on billions in investment, begins to crumble.

The resolution of this case—whether through legal action, technical evidence, or quiet settlement—will set the legal and ethical standard for AI innovation for the next decade. We are witnessing the birth of digital industrial espionage tailored for the age of foundation models, and the industry’s response will define the security architecture of tomorrow's AI ecosystem. The era of purely proprietary "black boxes" may be drawing to a close, giving way to an era where verification, provenance, and technical defense are as crucial as the quality of the output itself.

For further context on the technical underpinnings of this phenomenon, two search queries are useful starting points:

- A look into the technical pathways: searching for `"model extraction attack" OR "model stealing" LLM "Anthropic" Deepseek` provides depth on the plausibility of the methodology.

- Understanding the economic driver: searching for `Cost to train foundation models vs API querying cost AI` helps quantify the massive incentive behind the alleged actions.