Beyond the Hype: The Hard Realities of Deploying Open-Source LLMs in Production

The era of Large Language Models (LLMs) has officially arrived, but for many organizations, the real work is just beginning. We’ve moved past the initial excitement of testing powerful models like GPT-4 in controlled environments. Now, the central challenge is the migration to production—making these models reliable, cost-effective, and scalable engines for real business value. This challenge is most acutely felt in the rapidly expanding world of open-source LLMs.

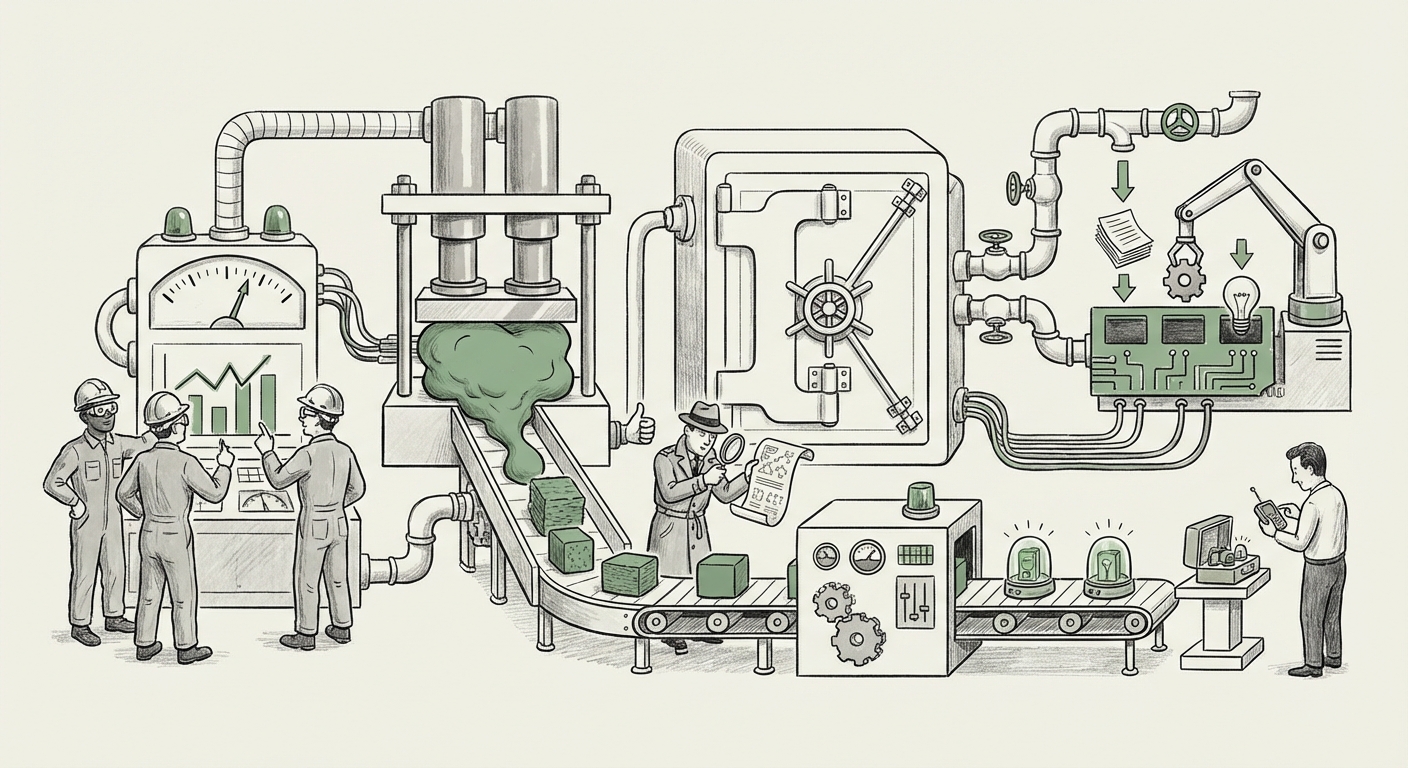

While proprietary models offer ease of use via APIs, the open-source ecosystem—driven by giants like Meta (Llama) and Mistral AI—promises control, data sovereignty, and significant long-term cost savings. However, adopting an open-source model for production is not as simple as downloading a file. It requires deep, strategic alignment across engineering, finance, and legal departments. To truly understand this transition, we must look beyond the initial feature set and examine four non-negotiable pillars of production readiness: **Benchmarking Rigor, Hardware Optimization, Licensing Certainty, and Architectural Integration.**

I. The Benchmark Mirage: Defining True Production Performance

In the fast-moving LLM landscape, a new "best" model seems to emerge every few weeks. The initial guide to choosing an LLM correctly notes the necessity of evaluating workload type. But how do we objectively measure if a model handles "summarization for legal documents" better than another? The answer lies in standardized, continuous benchmarking.

The Necessity of Standardized Testing

For business leaders, marketing claims about model quality can be misleading. A model that excels at creative writing might fail miserably at mathematical reasoning or adherence to safety guardrails. To cut through the noise, the industry relies on objective third-party evaluations. Think of the **Hugging Face Open LLM Leaderboard** as the equivalent of standardized testing for AI models—it provides a common yardstick for comparison across tasks like logic, common sense, and world knowledge.

Corroboration Point 1: Benchmarking as the North Star

The value of this leaderboard lies in its methodology, allowing engineers to cross-reference models against tasks relevant to their deployment goal. If your production workload is internal knowledge retrieval, you prioritize scores on specialized reasoning tasks over general conversational fluency.
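One way to put this cross-referencing into practice is to weight each benchmark category by how much it matters for the workload. The sketch below is illustrative only: the model names, task categories, scores, and weights are all hypothetical placeholders, not real leaderboard results.

```python
# Hypothetical benchmark scores (0-100). Replace with real leaderboard numbers.
SCORES = {
    "model-a": {"reasoning": 62.0, "knowledge": 70.0, "chat": 85.0},
    "model-b": {"reasoning": 74.0, "knowledge": 68.0, "chat": 71.0},
}

# Hypothetical weights for an internal knowledge-retrieval workload:
# reasoning matters most, conversational fluency least.
RETRIEVAL_WEIGHTS = {"reasoning": 0.6, "knowledge": 0.3, "chat": 0.1}


def weighted_score(scores, weights):
    """Weighted average of per-task scores, normalized by the total weight."""
    total_weight = sum(weights.values())
    return sum(scores[task] * w for task, w in weights.items()) / total_weight


def rank_models(all_scores, weights):
    """Return model names sorted from best to worst for the given weights."""
    return sorted(
        all_scores,
        key=lambda name: weighted_score(all_scores[name], weights),
        reverse=True,
    )


if __name__ == "__main__":
    for name in rank_models(SCORES, RETRIEVAL_WEIGHTS):
        print(name, round(weighted_score(SCORES[name], RETRIEVAL_WEIGHTS), 1))
```

With these made-up numbers, the model with the stronger reasoning score ranks first for the retrieval workload even though the other model is the better conversationalist. Changing the weights changes the winner, which is the point of tying evaluation to the deployment goal.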

🔗 Reference: Hugging Face Open LLM Leaderboard

What this means for the Future: We are moving toward specialized, tiered deployment. Instead of deploying one massive generalist model everywhere, future production systems will use smaller, high-performing, task-specific models validated by these leaderboards. This makes performance testing an ongoing MLOps function, not a one-time selection event.

II. The VRAM Wall: Taming Infrastructure Costs Through Optimization

The core tension in LLM deployment is often cost versus capability. A larger model (more parameters) typically delivers better performance, but the memory needed to hold its weights grows roughly in proportion to the parameter count. That translates into more, and more expensive, GPU memory (VRAM) for inference, the process of making the model generate an answer.

Quantization: Making Big Models Manageable

The biggest practical hurdle cited in production guides is infrastructure limits. This is where techniques such as quantization become essential. In simple terms, quantization is like compressing a high-resolution image file without losing too much visual quality. By reducing the precision of the model's weights (e.g., from 16-bit numbers to 4-bit numbers), engineers can shrink the memory needed for the weights by roughly a factor of four, at the cost of a small loss in accuracy.
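To make the idea concrete, here is a toy sketch of symmetric quantization with a single shared scale. It is a simplification written for illustration, not how any particular library implements it: production schemes typically use per-group scales and calibration data, and the function names here are made up.

```python
def quantize_symmetric(weights, bits=4):
    """Map floats to signed integers using one shared scale for the whole list.

    For 4 bits the integer range is [-7, 7]. This is a toy per-tensor scheme.
    """
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    original = [0.7, -0.42, 0.1, 0.0]
    q, scale = quantize_symmetric(original, bits=4)
    restored = dequantize(q, scale)
    for w, r in zip(original, restored):
        print(f"{w:+.3f} -> {r:+.3f} (error {abs(w - r):.3f})")
```

Each weight is stored as a small integer plus one shared scale, and the rounding error per weight is at most half the scale. That bounded error is the "visual quality" being traded for the smaller file.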

Corroboration Point 2: Engineering for Efficiency

Techniques like 4-bit quantization allow a model that might require two top-tier GPUs to run effectively on a single, more accessible card. This directly translates to massive cost savings for high-volume production environments and opens the door for deployment on less specialized hardware.
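The arithmetic behind that claim is simple enough to check by hand. The sketch below estimates weight memory as parameters times bits per weight, divided by eight bits per byte. The 70B model size and the 80 GB and 48 GB card sizes are illustrative assumptions, and the estimate covers weights only: the KV cache, activations, and runtime overhead add to it in practice.

```python
import math


def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate memory for model weights only, in gigabytes (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def gpus_needed(memory_gb, gpu_vram_gb):
    """Minimum number of cards whose combined VRAM can hold the weights."""
    return math.ceil(memory_gb / gpu_vram_gb)


if __name__ == "__main__":
    fp16 = weight_memory_gb(70, 16)  # illustrative 70B model at 16-bit
    int4 = weight_memory_gb(70, 4)   # the same model at 4-bit
    print(f"16-bit: {fp16} GB -> {gpus_needed(fp16, 80)} x 80 GB cards")
    print(f" 4-bit: {int4} GB -> {gpus_needed(int4, 48)} x 48 GB card")
```

Under these assumptions the 16-bit weights need 140 GB and therefore two 80 GB cards, while the 4-bit weights need 35 GB and fit on a single 48 GB card.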

🔗 Reference Context: The performance documentation for optimized inference frameworks such as vLLM highlights how software optimization can match or exceed the gains from modest hardware upgrades.

What this means for the Future: Cost optimization will drive AI adoption more than raw performance gains. The future architecture will feature hybrid deployment: using large, high-powered clusters for complex reasoning, but relying on heavily quantized, efficient models running on standard cloud instances or even on-premises servers for the bulk of the daily workload (roughly 80%, as a rule of thumb). This blend requires expertise in both GPU acceleration and low-level optimization tools.

III. The Legal Minefield: Licensing and Commercial Certainty

When an organization moves an LLM to production, it is implicitly committing to serving thousands or millions of end-users. At that scale, software licenses move from a technical curiosity to a core legal concern.

Open Source vs. Open Weight: A Crucial Distinction

Not all "open" models are created equal regarding commercial use. Some are released under permissive open-source licenses like Apache 2.0, which are familiar and generally safe for commercial integration. Others are better described as open-weight: the trained weights can be downloaded, but the license comes with complex usage policies or requires specific attribution that might conflict with proprietary internal systems or service agreements.

Corroboration Point 3: Navigating License Differences

Choosing between a truly open license (like Apache 2.0) and a more restrictive "community license" (often attached to early leading releases) is a strategic decision, because it determines how widely the model can be adopted. Organizations must verify that their intended use case, especially if it involves processing sensitive customer data or generating output that might be resold, does not violate the model's license or acceptable-use terms.
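Part of this verification can be automated as a simple gate in the deployment pipeline. The sketch below is a hypothetical policy, not legal advice: the pre-approved set, the component names, and the license label for the restricted model are placeholders that a real legal team would define.

```python
# Hypothetical policy: license identifiers the legal team has pre-approved.
PRE_APPROVED = {"apache-2.0", "mit"}


def license_gate(license_id):
    """Approve only pre-approved licenses; everything else goes to legal review."""
    if license_id.strip().lower() in PRE_APPROVED:
        return "approved"
    return "needs-legal-review"


def audit_stack(models):
    """Check every model in a multi-model stack, keyed by component name."""
    return {component: license_gate(lic) for component, lic in models.items()}


if __name__ == "__main__":
    stack = {
        "summarizer": "Apache-2.0",
        "chat": "custom-community-license",  # placeholder label
    }
    for component, status in audit_stack(stack).items():
        print(component, status)
```

The design choice here is to fail closed: any license not explicitly on the list is routed to a human reviewer rather than assumed safe.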

🔗 Value: This analysis ensures that the immediate cost savings of open source are not erased by future liability or the need for an emergency migration due to a licensing breach.

What this means for the Future: Licensing clarity will become a competitive differentiator. We will see AI middleware companies specializing solely in auditing model licenses and ensuring compliance across complex, multi-model production stacks. Businesses prioritizing long-term stability will gravitate toward models with proven, truly permissive licenses, even if they slightly lag in raw benchmark scores today.

IV. Beyond the Single Model: Orchestration and Agentic Workflows

A production LLM application is rarely just a single call to a single model. It’s an entire system. This system typically involves complex chains of operations, most notably Retrieval-Augmented Generation (RAG) and autonomous agent workflows.

Integrating the LLM into the Application Fabric

Choosing the right LLM is only step one. The true production challenge is how that model interacts with existing enterprise data, databases, and other microservices. This requires sophisticated orchestration.

Corroboration Point 4: Building the AI Application Layer

Frameworks like LangChain and LlamaIndex abstract away much of the complexity of chaining prompts, managing context windows, and connecting to vector databases. A model selected for production must perform reliably within these orchestration layers, especially when tasked with complex reasoning steps inherent in agentic behavior.
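To show what these frameworks are abstracting, here is a deliberately minimal RAG flow in plain Python. It does not use the LangChain or LlamaIndex APIs. The word-overlap retriever stands in for a vector database, and `generate` is a hypothetical callable standing in for whatever model is being served.

```python
def tokenize(text):
    """Lowercase whitespace tokenization; a stand-in for real embeddings."""
    return set(text.lower().split())


def retrieve(query, documents, k=2):
    """Return the k documents sharing the most words with the query."""
    query_tokens = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, context_docs):
    """Assemble retrieved context and the user question into one prompt."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


def answer(query, documents, generate, k=2):
    """Retrieve, build the prompt, then call the model (any callable)."""
    return generate(build_prompt(query, retrieve(query, documents, k)))


if __name__ == "__main__":
    docs = [
        "refund policy allows returns within 30 days",
        "office hours are 9 to 5",
        "shipping takes 3 days",
    ]
    # Echo the prompt instead of calling a real model.
    print(answer("what is the refund policy", docs, generate=lambda p: p, k=1))
```

Even in this toy version, the model is only one step in the chain. Retrieval quality and prompt assembly shape the result as much as the model does, which is why a candidate model should be tested inside the pipeline rather than in isolation.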

🔗 Reference: Robust deployment requires understanding these toolsets. See LangChain's documentation on production best practices.

What this means for the Future: The future product isn't the model; it's the **Agentic Pipeline**. The chosen LLM becomes a module within a larger, auditable workflow. If the chosen open-source model is difficult to integrate with a specific vector store or fails consistently in multi-step reasoning chains, its excellent raw benchmarks become irrelevant.

V. The Horizon: Shifting from Data Centers to the Edge

For decades, AI compute has been synonymous with massive data centers filled with high-end GPUs. However, the next wave of ubiquitous AI requires intelligence closer to the user—on phones, factory floors, and IoT devices. This concept, **Edge AI**, fundamentally changes the model selection criteria.

Smaller Models, Faster Decisions

The need for low-latency, offline, or highly secure processing forces a re-evaluation of model size. Today's leading models are too large for most edge devices. This creates a massive opening for smaller, highly efficient open-source models designed from the ground up for constrained environments.

Corroboration Point 5: Preparing for Edge Deployment

The rise of models specifically trained to be smaller (like Microsoft's Phi series or optimized 3B parameter models) shows a clear market signal: efficiency at the edge is the next frontier. Deployment on edge hardware (which might use specialized Neural Processing Units or NPUs instead of traditional GPUs) requires models built for those specific computational profiles.

🔗 Value: This forces an engineering perspective: Do we need the complexity of a 70B parameter model, or will a finely-tuned 3B model deliver 95% of the required performance at 1% of the infrastructure cost on specialized hardware?
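That question can be framed as a constrained selection: pick the smallest model that clears the quality bar and fits the device. The sketch below assumes hypothetical candidates and quality scores, and it counts weight memory only at a chosen bit width, so real deployments would need extra headroom.

```python
# Hypothetical candidates: (name, parameters in billions, task quality from 0 to 1).
CANDIDATES = [
    ("small-3b", 3, 0.86),
    ("mid-8b", 8, 0.90),
    ("large-70b", 70, 0.95),
]


def pick_smallest_adequate(candidates, min_quality, memory_budget_gb, bits_per_weight=4):
    """Return the smallest model meeting the quality bar and memory budget, or None."""
    for name, params_b, quality in sorted(candidates, key=lambda c: c[1]):
        weights_gb = params_b * bits_per_weight / 8  # weights only, no runtime overhead
        if quality >= min_quality and weights_gb <= memory_budget_gb:
            return name
    return None


if __name__ == "__main__":
    # An edge device with 4 GB available and a modest quality requirement.
    print(pick_smallest_adequate(CANDIDATES, min_quality=0.85, memory_budget_gb=4))
    # The same device with a stricter requirement: nothing qualifies.
    print(pick_smallest_adequate(CANDIDATES, min_quality=0.93, memory_budget_gb=4))
```

When the function returns nothing, the requirement and the hardware are incompatible, and the decision becomes a business one: relax the quality bar, fine-tune the smaller model, or move that workload off the edge.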

What this means for the Future: We will see a bifurcation in the open-source landscape. One branch will chase frontier performance (often demanding massive proprietary hardware), and the other will focus intensely on efficiency, quantization, and hardware-agnostic deployment suitable for billions of distributed devices. Businesses must decide early which path aligns with their core service delivery model.

Conclusion: Production Readiness is Holistic Strategy

The journey from open-source LLM experimentation to robust production deployment is a strategic enterprise undertaking, not just an engineering task. A practical guide to choosing the right model is insufficient without an understanding of the forces that determine whether that model operates successfully in production.

For organizations aiming to leverage the immense potential of open-source AI, the mandate is clear: **do not equate "open source" with "easy deployment."** Success requires a holistic strategy that:

- Validates performance using objective, standardized benchmarks.

- Aggressively optimizes inference through quantization to control ballooning cloud or hardware costs.

- Secures long-term operational freedom by rigorously checking commercial licensing terms.

- Plans for integration within complex agentic and RAG application architectures.

- Future-proofs choices by considering potential deployment on edge devices.

The open-source LLM community is democratizing access to powerful AI. But harnessing that power responsibly and profitably requires mastering the engineering and legal realities that underpin true, scalable production.