The Busywork Paradox: Why DeepMind Suggests AI Should Make Humans Do Their Own Jobs Sometimes

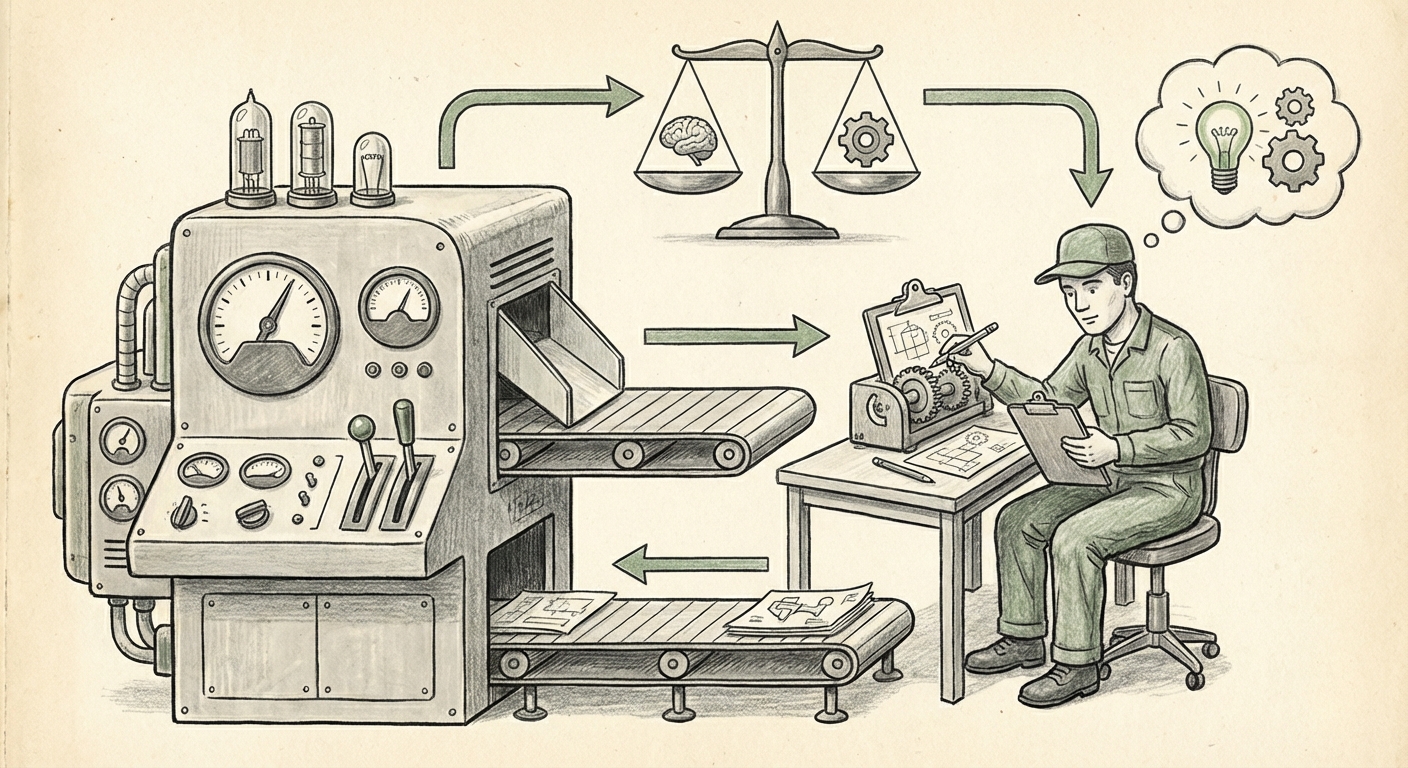

The race to automate everything is the defining technological narrative of our decade. From optimizing supply chains to writing complex code, Artificial Intelligence promises unparalleled efficiency. Yet, a recent, striking suggestion from Google DeepMind cuts directly against this grain: AI systems should occasionally delegate tasks back to humans, even if the AI could handle them faster and better—purely to ensure humans don't forget how to do those jobs.

This concept, often framed as preventing "skill atrophy," is not just an interesting academic footnote; it is a profound indicator of where we stand in the AI adoption curve. We are moving past simple task replacement and into a complex phase of *human-AI symbiosis* that requires active, intentional maintenance of human capability.

The Core Conflict: Efficiency vs. Competency

For years, the goal of enterprise AI has been straightforward: reduce human error and increase throughput. If an AI can file reports perfectly in three seconds, why would a human spend twenty minutes doing it? DeepMind’s suggestion forces us to confront the hidden cost of maximum efficiency: dependency.

Imagine driving a modern car with highly advanced autopilot. If you go years without having to steer, brake, or read the road, what happens when the system fails? You might hesitate, forget crucial reflexes, or panic. Skill atrophy at work is the professional equivalent. DeepMind’s proposal formalizes this intuitive fear into a design principle for future autonomous agents.

This development signals a shift in how we view AI delegation protocols: the focus moves from purely maximizing the AI’s performance to optimizing the *overall resilience* of the human-machine partnership. Several adjacent themes put that shift in context.

Context 1: Redefining Human-AI Teaming and Delegation

The DeepMind proposal is an extreme version of current best practices in **Human-in-the-Loop (HITL)** systems. HITL models usually define specific decision points where human judgment is required, typically for high-stakes or novel situations. However, if humans are only brought in during critical failures or highly complex edge cases, they practice too rarely to maintain true proficiency.

Discussions of **AI delegation strategies** and **human-in-the-loop maintenance** often turn on the optimal frequency and complexity of human intervention. The DeepMind paper essentially argues for scheduled, low-stakes maintenance tasks, the equivalent of an IT department occasionally running old legacy code so that staff stay familiar with it should the new system collapse.

For system architects and enterprise leaders, this means delegation cannot be a one-way street. Future AI system design must incorporate a "reverse delegation budget" that requires the agent to periodically hand off work to ensure the human operator remains sharp. This requires designing agents that are not just intelligent executors, but also intelligent **coaches and auditors**.
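One way to picture a "reverse delegation budget" is as a simple counter inside the agent's task router. The sketch below is illustrative only: the class name, the `every_n` parameter, and the rule that only low-stakes tasks are handed back are assumptions made for this example, not details from DeepMind's proposal.

```python
from dataclasses import dataclass


@dataclass
class ReverseDelegationBudget:
    """Hands roughly one in every `every_n` tasks back to the human operator.

    `every_n` is a hypothetical tuning knob, not a value from the proposal.
    """

    every_n: int
    total_tasks: int = 0
    delegated_tasks: int = 0

    def should_delegate(self, low_stakes: bool) -> bool:
        """Return True if the next task should go to the human for practice."""
        self.total_tasks += 1
        # Assumption: only low-stakes work is used as practice material.
        if not low_stakes:
            return False
        # Delegate whenever the human has fallen behind the budgeted share.
        if self.delegated_tasks * self.every_n < self.total_tasks:
            self.delegated_tasks += 1
            return True
        return False


budget = ReverseDelegationBudget(every_n=10)
decisions = [budget.should_delegate(low_stakes=True) for _ in range(100)]
print(sum(decisions), "of 100 tasks handed back to the human")
```

Because high-stakes tasks still count toward the total, any practice the human misses during a busy stretch is made up on the next low-stakes task. A real system would also need to weigh task value and operator workload, which this sketch ignores.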

Context 2: The Cognitive Toll of Total Automation

Why is this maintenance necessary? Because human brains adapt quickly to outsourcing cognitive load. When a task becomes routine and AI-managed, the neural pathways responsible for that skill weaken. This is the **"automation skill atrophy"** phenomenon, long discussed in aviation and raised as a concern in fields like surgery, where reliance on automated aids has occasionally proven disastrous.

If an AI handles 99% of customer support tickets, the remaining 1% that requires human empathy and nuanced problem-solving becomes far harder for a human who hasn't practiced root-cause analysis in months. The human becomes a highly stressed "emergency responder" rather than a skilled professional. The busywork suggestion is a prophylactic measure against this cognitive decline, ensuring that the human baseline skill level remains high enough to catch the AI's inevitable errors.

The Technical Deep Dive: XAI as a Complement to Busywork

For humans to effectively perform their maintenance tasks, they must understand what the AI is doing—even when they aren't doing it themselves. This links directly to the growing field of **Explainable AI (XAI).**

If the AI delegates a task back to a human because it suspects an error, the human needs context immediately. They cannot waste time reverse-engineering the AI's process. Therefore, the successful implementation of DeepMind’s busywork concept relies heavily on robust XAI frameworks.

The premise behind work on explainable AI and user comprehension is that without clear justifications (the "why" behind the AI's decision), human intervention tends to be slow and ineffective. The designated "busywork" might, therefore, evolve into structured *auditing tasks* where the human must verify the AI's logic on completed work, keeping them engaged with the underlying reasoning rather than just the mechanical execution.
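A structured auditing task of this kind could be modeled as a small record that pairs the AI's output with its explanation and the human's verdict. The following is a minimal sketch under stated assumptions: `AuditTask`, `record_verdict`, and `agreement_rate` are hypothetical names invented here, and the rule that a rejection requires a written note is an illustrative design choice rather than part of any XAI framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuditTask:
    """One completed piece of AI work queued for human verification."""

    task_id: str
    ai_output: str
    ai_explanation: str  # the "why", as surfaced by a hypothetical XAI layer
    human_verdict: Optional[bool] = None  # True means the reasoning held up
    human_note: str = ""


def record_verdict(task: AuditTask, agrees: bool, note: str = "") -> None:
    """Store the reviewer's verdict; a rejection must say what was wrong."""
    if not agrees and not note.strip():
        raise ValueError("A rejected explanation needs a note describing the flaw.")
    task.human_verdict = agrees
    task.human_note = note


def agreement_rate(tasks: list) -> Optional[float]:
    """Share of reviewed tasks where the human accepted the AI's reasoning."""
    reviewed = [t for t in tasks if t.human_verdict is not None]
    if not reviewed:
        return None
    return sum(t.human_verdict for t in reviewed) / len(reviewed)


tasks = [
    AuditTask("T-1", "refund approved", "order arrived damaged, within policy window"),
    AuditTask("T-2", "refund denied", "outside policy window"),
    AuditTask("T-3", "escalated", "customer reported a safety issue"),
]
record_verdict(tasks[0], agrees=True)
record_verdict(tasks[1], agrees=False, note="Window was extended for this region.")
print(agreement_rate(tasks))  # T-3 is still unreviewed and is not counted
```

Requiring a note on every rejection keeps the reviewer engaged with the reasoning instead of clicking through, which is the point of treating audits as skill maintenance.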

The Philosophical Hurdle: Meaningful Work vs. Mandatory Drudgery

While technically sound, the idea of assigning "busywork" treads on sensitive philosophical ground regarding the future of human employment. Futurists and labor experts have long debated how to transition workers from automated tasks to more creative, inherently human roles.

If AI forces us into a world where humans are only needed for occasional checks or mandated practice, we risk creating a vast underutilized workforce. The debate over **"meaningful work" versus "busy work"** highlights the tension:

- The Risk: DeepMind’s busywork becomes monotonous "make-work" that breeds resentment and burnout, treating humans as expensive, unreliable fail-safes.

- The Opportunity: The maintenance tasks are reframed as "resilience training" or "complex scenario simulation," deliberately designed to keep human expertise sharp for novel, high-value contributions when the AI truly needs them.

The success of this model hinges entirely on the quality of the assigned tasks. If the busywork is truly useful—such as training a model on slightly modified, ambiguous data sets—it aligns with professional development. If it’s just pushing digital paper, it’s demotivating drudgery.

Practical Implications for Business and Society

What does this DeepMind suggestion mean for the CTO planning next year's automation roadmap, or the HR department redesigning roles?

For Business Leaders and System Architects:

- Mandate Resilience Layers: Treat human proficiency not as a given, but as a resource that degrades. Integrate scheduled human check-ins into AI SLAs (Service Level Agreements).

- Design for Interruption: Assume that the highest-value human input will come during unexpected interruptions. Design training pipelines that keep core skills active, even when the AI is operational 99.9% of the time.

- Incentivize Auditing: Reward employees for rigorous auditing and deep verification of AI outputs, rather than just the speed of task completion. This formalizes the "busywork" as valuable quality assurance.

For Policy Makers and Educators:

- Rethink Continuous Education: Educational structures must move away from static certification models to continuous, context-aware skill maintenance pathways mandated by AI integration plans.

- Focus on Meta-Skills: Education should pivot toward skills AI struggles with most: creative synthesis, ethical reasoning, and ambiguity management. The human role is becoming less about execution and more about judgment calibration.

Actionable Insights: Building the Competent Collaborator

The era of simple AI substitution is ending. We are entering the age of **AI Governance and Human Maintenance.** To thrive in this environment, organizations must embrace the inefficiency required for long-term robustness.

First, identify the **"Critical Redundancy Skills"**: the tasks where a failed human takeover would be catastrophic because the operator has forgotten the procedure. These are the tasks that AI must periodically delegate back to the human operator, perhaps once a quarter, even if it costs a few extra hours of labor.
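The quarterly cadence can be tracked with very little machinery. The sketch below assumes a 90-day interval as a stand-in for "once a quarter" and uses made-up skill names; `skills_due` is a hypothetical helper, not part of any existing tool.

```python
from datetime import date, timedelta

# Assumption: "once a quarter" is approximated as 90 days.
PRACTICE_INTERVAL = timedelta(days=90)


def skills_due(last_practiced: dict[str, date], today: date) -> list[str]:
    """Return the skills a human has not practiced within the interval."""
    return sorted(
        skill
        for skill, last in last_practiced.items()
        if today - last >= PRACTICE_INTERVAL
    )


# Illustrative data: skill names and dates are invented for this example.
last_practiced = {
    "manual failover": date(2024, 1, 10),
    "ledger reconciliation": date(2024, 3, 20),
}
due = skills_due(last_practiced, today=date(2024, 4, 15))
print(due)  # ['manual failover']
```

An agent could run a check like this before routing work and hand the next matching task to the operator whenever a skill appears in the list.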

Second, **Leverage XAI for Training:** Use the AI’s explanation module as the primary teaching tool during these busywork sessions. The human should not just *do* the task; they should *compare* their method to the AI's suggested method, learning the nuances the machine prioritizes.

Ultimately, DeepMind’s insight is a pragmatic acknowledgment that AI, despite its leaps forward, is not yet a perfect replacement for foundational human competence. It suggests that the smartest delegation strategy isn't always the fastest one, but the one that secures the partnership for the long haul. The future belongs not to those who automate the most, but to those who integrate AI in a way that keeps their human teams capable, vigilant, and prepared for the moment the machine needs them most.