Blackwell Leaks and AI Showdowns: Why Deepseek's Next Model Threatens the US AI Hegemony

The global Artificial Intelligence race is no longer a steady marathon; it is a series of sudden, jarring sprints. While the spotlight consistently shines on the titans of Silicon Valley—OpenAI, Google, and Anthropic—a different kind of pressure is mounting from emerging global competitors. Recent reports suggest that **Deepseek**, a formidable player often operating under the radar of mainstream Western press, is preparing a major model release that has the incumbents looking over their shoulders.

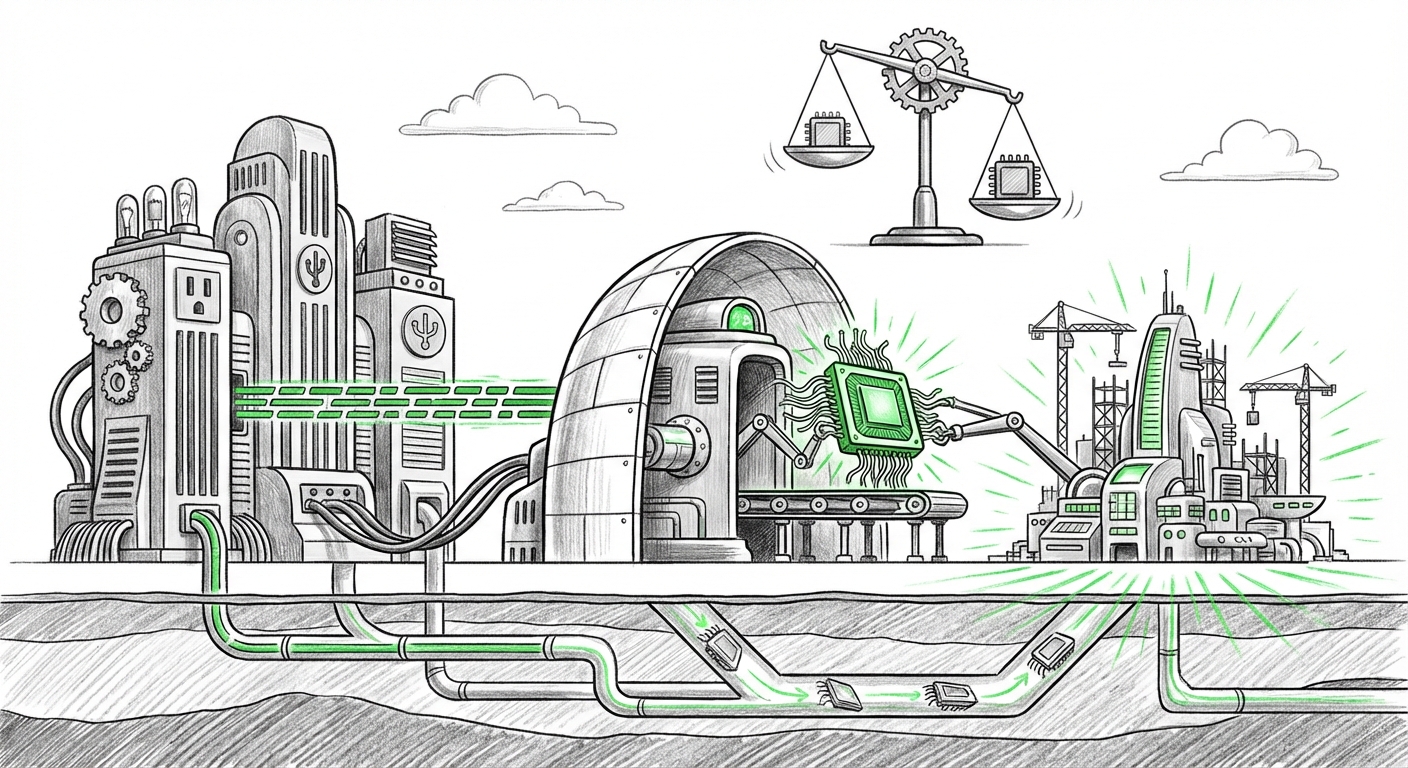

What makes this impending release truly seismic is the rumored foundation on which it is being built: Nvidia’s cutting-edge and tightly export-controlled **Blackwell GPUs**. If the reports are accurate, Deepseek has gained not only a leap in capability but also a potentially clandestine route around the geopolitical bottlenecks meant to keep this hardware out of its reach. This article analyzes the three core pillars of this emerging crisis: the hardware controversy, the performance threat, and the strategic implications for the future of AI innovation.

The Hardware Battlefield: The Blackwell Conundrum

To understand the fuss, we must first understand the hardware. Graphics Processing Units (GPUs), especially those made by Nvidia, are the workhorses of modern AI. The most powerful, such as the H100 and the newer Blackwell series, let companies train large language models (LLMs) faster and more efficiently than ever before. For the US government, these chips are strategic national assets.

The Shadow of Export Controls

The US Commerce Department has imposed strict export controls that restrict access to the most advanced chips, with the aim of slowing the AI development of certain foreign competitors, chief among them China. The Blackwell architecture, designed for next-generation speed, falls under these controls. That is why the suggestion that Deepseek trained its next model on these very chips is so significant. It implies one of two things:

- Circumvention: The entity has successfully navigated or bypassed complex international trade regulations to acquire this restricted, bleeding-edge hardware.

- Alternative Sourcing: There is a secondary, perhaps rapidly emerging, supply chain for high-end accelerators that is not fully controlled by US export mandates.

If Deepseek can train models on hardware generally considered off-limits to it, its pace of iteration could match or even outstrip that of US labs, which themselves compete for a limited supply of these chips. For the layperson, imagine a Formula 1 team that is banned from using the latest engine, yet quietly gets hold of the same engine its rivals race with. The handicap disappears, and the arms race accelerates dramatically.

Analyzing the geopolitical layer is critical here. Tech strategists should track reporting on how these chips are moving, particularly coverage that links the alleged Blackwell training runs to sanctions enforcement. That reporting exposes the direct tension between technological progress and national security policy.

The Performance Threat: Benchmarks That Demand Attention

Hardware only matters if the resulting model is good. The major labs are "bracing" not just because of the rumored chip access, but because Deepseek's previous releases have already proven remarkably competitive, especially among open-weight models. Comparing published benchmark results for Deepseek's LLMs against GPT-4 and Claude 3 reveals a clear pattern: rapid convergence.

Closing the Capability Gap

For a long time, the frontier models from OpenAI (GPT series) and Anthropic (Claude) maintained a significant lead in complex reasoning, coding, and nuanced instruction-following. However, models emerging from China, including those from Deepseek, have demonstrated an astonishing ability to quickly close these gaps, often achieving near-parity on standard industry tests.

If Deepseek's next release was in fact trained on a large cluster of Blackwell chips, it may be more than "good for an open model"; it could genuinely challenge the proprietary leaders on core intelligence metrics. For developers and engineers, this translates into a wider choice of powerful foundation models. If Deepseek’s model delivers 95% of GPT-4’s capability at a fraction of the inference cost, or under an open license, the economic incentive to switch becomes very strong.
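The switching argument is ultimately arithmetic. The sketch below compares two options on quality delivered per dollar; the option names, the 0.95 quality ratio, the per-token prices, and the monthly volume are all hypothetical placeholders rather than published figures, so substitute your own measured scores and current price sheets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    """A candidate model, scored relative to a reference model (hypothetical values)."""
    name: str
    relative_quality: float        # 1.0 = reference model; 0.95 = "95% of the capability"
    usd_per_million_tokens: float  # blended input/output price, assumed for illustration


def quality_per_dollar(option: ModelOption) -> float:
    """Quality points delivered per dollar spent on one million tokens."""
    return option.relative_quality / option.usd_per_million_tokens


def monthly_cost(option: ModelOption, tokens_per_month: float) -> float:
    """Inference spend for a given monthly token volume."""
    return option.usd_per_million_tokens * tokens_per_month / 1_000_000


# Hypothetical placeholders, not real vendor prices.
incumbent = ModelOption("incumbent", relative_quality=1.00, usd_per_million_tokens=10.0)
challenger = ModelOption("challenger", relative_quality=0.95, usd_per_million_tokens=1.0)

volume = 2_000_000_000  # two billion tokens per month (assumed workload)
for option in (incumbent, challenger):
    print(f"{option.name}: {quality_per_dollar(option):.2f} quality/$, "
          f"${monthly_cost(option, volume):,.0f}/month")
```

Under these assumed numbers the challenger delivers roughly 9.5 times more quality per dollar, the kind of gap that makes a 5% capability shortfall easy to accept for many workloads.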

This performance pressure forces established players to innovate faster, increasing the overall pace of AI research, even as they face supply constraints.

The Competitive Reaction: Market Disruption and Strategic Realignment

When the big labs feel threatened, the market feels it too. The anxiety noted in the initial reports reflects a genuine strategic shift. US-based labs have operated with a degree of certainty regarding their hardware access and data advantage. Deepseek’s potential breakthrough upsets this equilibrium.

The Shifting Balance of Power

The competitive climate is intensifying, as coverage of how US AI labs are responding to Chinese LLM competition and the resulting market disruption makes clear. This competition is forcing incumbents to:

- Accelerate Closed-Source Releases: They must continuously move the goalposts by releasing even larger or more specialized proprietary models to maintain differentiation.

- Focus on Ecosystem Lock-in: Strengthening platform advantages (e.g., Microsoft/Azure integration for OpenAI, Google Cloud ecosystem) becomes paramount to retain enterprise customers, even if the base model performance narrows.

- Re-evaluate Open-Source Strategy: Pressure from highly capable open models like Deepseek's may force these labs either to release better open models themselves or to risk ceding the developer community to competitors.

This dynamic is healthy for the technology ecosystem, but it introduces significant risk for early investors and enterprise adopters who placed massive bets on a few key providers. The market is demanding diversification in foundational AI capabilities.

Future Implications: Geopolitics, Democratization, and AI Cost

The alleged Deepseek scenario is more than a product launch; it is a flashpoint illustrating several core future trends in technology.

1. The Decoupling of AI Supply Chains

The reliance on a single geography (or set of friendly nations) for high-end compute is proving to be a strategic vulnerability. If non-US entities can successfully build frontier models using hardware obtained outside traditional Western distribution channels, the effectiveness of export controls diminishes. This will likely lead to even more aggressive measures regarding chip sales and may spur rapid investment in domestic, non-Nvidia hardware solutions across various governments and private labs.

2. The Velocity of Open vs. Closed Models

When high-performance models become widely accessible, particularly through open-weight releases, the democratization of AI accelerates. Small businesses, startups, and researchers who cannot afford heavy use of the GPT-4 API can instead deploy powerful models that run on their own hardware. This dramatically lowers the barrier to entry for AI-powered innovation in finance, healthcare, and localized industrial applications.

For the average person, this means better, cheaper, and more customized AI tools will appear faster. Think of it like software—once the operating system is free and capable, thousands of specialized applications bloom on top of it.

3. The End of the Compute Moat (Eventually)

The current AI race is largely a battle of compute access: who can afford the most racks of the latest chips? If Deepseek proves that strong performance can be achieved with less compute, or with restricted hardware it can obtain only in limited quantities, that suggests software optimization and novel training methodologies (the "secret sauce") are becoming as important as the sheer quantity of compute.

While compute remains king for now, breakthroughs in data efficiency, architectural design, or training algorithms can erode the advantage held by those with the largest budgets. This is the ultimate long-term implication: talent and ingenuity can overcome hardware supply imbalances.
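A back-of-the-envelope calculation shows why efficiency can substitute for raw hardware. A common rule of thumb puts the cost of training a dense transformer at roughly 6 * parameters * training tokens floating-point operations (FLOPs); dividing by the cluster's effective throughput gives wall-clock time. All inputs below are hypothetical, including the per-GPU peak figure, which is a round placeholder rather than a published Blackwell specification.

```python
SECONDS_PER_DAY = 86_400


def training_days(params: float, tokens: float, num_gpus: int,
                  peak_flops_per_gpu: float, utilization: float) -> float:
    """Rough wall-clock estimate for a single training run.

    Uses the common approximation that training a dense transformer costs
    about 6 * params * tokens FLOPs. `utilization` is the fraction of peak
    throughput the software stack actually achieves (0 < utilization <= 1).
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    total_flops = 6.0 * params * tokens
    cluster_flops_per_second = num_gpus * peak_flops_per_gpu * utilization
    return total_flops / cluster_flops_per_second / SECONDS_PER_DAY


# Hypothetical run: 100B parameters, 10T tokens, 10,000 GPUs at an assumed 1e15 FLOP/s peak.
baseline = training_days(1e11, 1e13, 10_000, 1e15, utilization=0.4)
better_software = training_days(1e11, 1e13, 10_000, 1e15, utilization=0.8)
twice_the_chips = training_days(1e11, 1e13, 20_000, 1e15, utilization=0.4)

print(f"baseline: {baseline:.1f} days")
print(f"2x utilization: {better_software:.1f} days")
print(f"2x GPUs: {twice_the_chips:.1f} days")
```

In this simplified model, doubling utilization through better kernels, parallelism, or scheduling shortens the run exactly as much as doubling the number of GPUs, which is the sense in which ingenuity can offset a hardware shortfall. Real training runs add overheads (hardware failures, checkpointing, data pipelines) that this sketch ignores.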

Actionable Insights for Businesses and Stakeholders

How should businesses react to this heightened competitive tension and the potential arrival of a new, powerful, globally sourced model?

For Enterprise Technology Leaders: Diversify Your AI Portfolio

Do not anchor your entire AI strategy to one vendor (OpenAI, Google, or Anthropic). The market is diversifying rapidly. Begin rigorous internal benchmarking now. If a Deepseek-class model offers comparable performance on your core tasks (e.g., summarization, customer service routing), establish the integration pathways needed to switch platforms quickly. Treat vendor lock-in as a significant business risk.
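Internal benchmarking does not need to start elaborate. The sketch below scores any number of candidate models on a small labeled set for a routing task. The two "models" are offline stand-ins (a keyword router and a constant guesser) so the example runs without network access; in practice each entry would wrap a call to a vendor's API. Exact-match scoring and the sample tickets are illustrative assumptions.

```python
from typing import Callable

# A "model" here is any function mapping a prompt to a text answer.
Model = Callable[[str], str]

# Hypothetical labeled cases for a customer-service routing task.
CASES: list[tuple[str, str]] = [
    ("My card was charged twice", "billing"),
    ("I can't log in to my account", "account"),
    ("Where is my parcel?", "shipping"),
]


def accuracy(model: Model, cases: list[tuple[str, str]]) -> float:
    """Fraction of cases where the model's answer exactly matches the label."""
    correct = sum(1 for prompt, label in cases if model(prompt).strip().lower() == label)
    return correct / len(cases)


def rank_models(models: dict[str, Model], cases: list[tuple[str, str]]) -> list[tuple[str, float]]:
    """Score every candidate on the same cases and sort best-first."""
    scores = {name: accuracy(model, cases) for name, model in models.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def keyword_router(prompt: str) -> str:
    """Offline stand-in for a model API call."""
    text = prompt.lower()
    if "charged" in text or "refund" in text:
        return "billing"
    if "log in" in text or "password" in text:
        return "account"
    return "shipping"


def always_billing(prompt: str) -> str:
    """A deliberately weak baseline."""
    return "billing"


for name, score in rank_models({"keyword_router": keyword_router,
                                "always_billing": always_billing}, CASES):
    print(f"{name}: {score:.0%}")
```

Because every candidate is scored on the same cases, adding a Deepseek-class model to the comparison is a one-line change to the dictionary.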

For AI Researchers and Developers: Embrace Interoperability

Focus on creating agents and applications that are model-agnostic. Develop code that can easily swap out the underlying LLM via standardized APIs or framework layers. The coming period will see rapid shifts in which model offers the best price-to-performance ratio; flexibility ensures you can always use the optimal tool for the job.
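One common way to keep an application model-agnostic is to code against a small interface and select the backend from configuration. The sketch below uses Python's `typing.Protocol` for that interface. `ChatModel`, `EchoModel`, the registry keys, and `summarize` are hypothetical names, and `EchoModel` merely echoes its prompt so the example runs offline; a real adapter would call a vendor SDK inside `complete`.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface the application depends on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Offline stand-in for a vendor adapter; a real one would call an API here."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


# Hypothetical backend names; swapping vendors means changing one config value.
REGISTRY: dict[str, ChatModel] = {
    "vendor_a": EchoModel("vendor_a"),
    "vendor_b": EchoModel("vendor_b"),
}


def get_model(name: str) -> ChatModel:
    """Look up a backend by the name stored in configuration."""
    try:
        return REGISTRY[name]
    except KeyError:
        raise ValueError(f"unknown model backend: {name!r}") from None


def summarize(text: str, model: ChatModel) -> str:
    """Application logic that never references a specific vendor."""
    return model.complete(f"Summarize: {text}")


print(summarize("Q3 revenue rose while costs fell.", get_model("vendor_b")))
```

Because `Protocol` uses structural typing, adapters do not have to inherit from `ChatModel`; any class with a matching `complete` method satisfies a static type checker.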

For Investors and Strategists: Look Beyond the US Giants

While the US giants remain critical, investment theses must now account for sophisticated global competition. Scrutinize which companies are demonstrating hardware agility, superior optimization techniques, and the ability to attract top global AI talent regardless of geopolitical boundaries. The next major platform winner may not be where you expect them to be.

The race is on, fueled by silicon that, if the reports hold, should never have reached these training labs. If Deepseek did use restricted technology, that signals a heightened level of commitment and capability, turning a competitive landscape into a genuine, multi-front war for AI supremacy. The winners will be those who can adapt fastest to the shifting hardware reality and the democratization of truly powerful intelligence.