Deepseek's Shadow: How Hardware Allegations and Emerging Models Threaten the AI Hegemony of Google and OpenAI

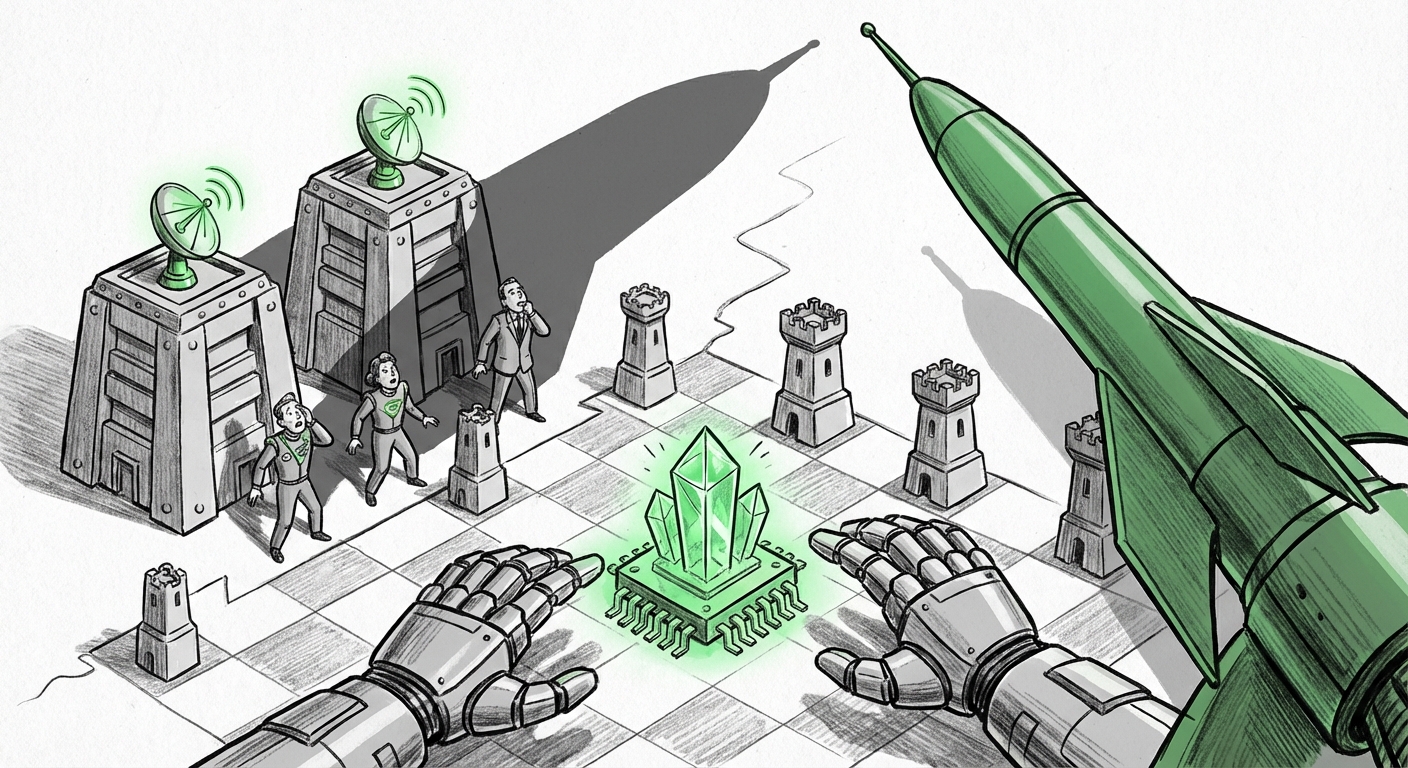

The race to build the world’s most powerful artificial intelligence is often framed as a duel among Silicon Valley giants: Google, OpenAI, and Anthropic. However, recent reports suggest that a significant tremor is coming from the East, centered on the Chinese AI lab Deepseek. The organization is said to be preparing a model release so potent that established Western labs are "bracing" for impact. What makes this anticipation so acute? It appears to hinge on allegations surrounding the very foundation of modern AI training: specialized, cutting-edge hardware.

This situation is not just about a faster chatbot; it’s a crucial convergence point where technological achievement meets geopolitical strategy. To truly understand the implications of Deepseek’s next move, we must move beyond the initial headlines and investigate the technical validation, the regulatory framework surrounding the hardware, and the resulting competitive dynamics.

The Crucible of Competition: Verifying Technical Prowess

In the world of foundation models, hype is cheap, but performance is currency. For Deepseek to genuinely "shake the market," its upcoming model must demonstrate superior general intelligence, efficiency, or multimodal capability compared to GPT-4 or Gemini Ultra. This is where independent verification becomes essential.

Analysts are watching closely for independent benchmark comparisons between Deepseek’s model and GPT-4. If the release places the model ahead of, or level with, current US leaders on standard tests such as MMLU (multitask knowledge), GSM8K (grade-school mathematics), or complex reasoning tasks, the competitive narrative shifts instantly. It would validate the massive investment pouring into non-Western AI labs.

Furthermore, given Deepseek’s history with efficient model architectures, any advance in its Mixture-of-Experts (MoE) design will be telling. If the lab is achieving elite performance not just through sheer scale (which requires massive, often restricted hardware) but through architectural innovation, such as improved MoE routing, it suggests a more sustainable and adaptable path to future breakthroughs, irrespective of immediate hardware access.
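To make the idea of MoE routing concrete, here is a minimal sketch of generic top-k gating in plain Python. It is an illustration only, not Deepseek's actual routing scheme: the function names, the toy scalar "experts", and the gate scores are all hypothetical stand-ins for learned neural network components.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_logits, k=2):
    """Return (expert_index, weight) pairs for the k highest-scoring experts.

    Weights are renormalized so that they sum to 1 over the selected experts.
    """
    probs = softmax(gate_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, gate_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_logits, k))

# Hypothetical toy experts: scalar functions standing in for feed-forward blocks.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]

# In a real model these scores would come from a learned gating layer.
gate_logits = [0.1, 2.0, 1.5, -1.0]

selected = route_top_k(gate_logits, k=2)
output = moe_forward(3.0, experts, gate_logits, k=2)
```

The point of the design is that only k of the experts run for each input, so compute per token grows far more slowly than the total parameter count. That is why routing quality matters so much when hardware is constrained.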

Implication for Developers and Researchers:

If performance claims hold true, developers can anticipate a rapid "commoditization" of high-end intelligence. The gap between "premium" and "open/accessible" models shrinks, allowing smaller enterprises to build powerful applications faster and cheaper. The focus shifts from *accessing* frontier intelligence to *applying* it creatively.

The Geopolitical Fault Line: The Blackwell Chip Allegations

The most volatile element of this story is the claim that the new model was trained using Nvidia’s *Blackwell* chips. This is significant because the Blackwell series represents the absolute cutting edge of AI computational power, and its export to entities in China is severely restricted by US government mandates.

US export controls on Nvidia’s Blackwell chips provide the necessary framework. These regulations are designed specifically to prevent foreign adversaries from leapfrogging US capabilities in high-performance computing, which is crucial for training the largest, most complex AI models. If Deepseek did use these processors, whether acquired directly or routed through intermediaries in other countries, the situation escalates from a simple technology competition into a clear geopolitical confrontation.

The allegation also forces a critical look at China's drive for semiconductor self-sufficiency. Whether the alleged use is an isolated incident or a symptom of a broader, more successful national strategy to circumvent export controls will shape the long-term technological balance. A successful circumvention would suggest that the US strategy of hardware throttling is failing to keep pace with China's aggressive domestic push for self-reliance.

Implication for Policy and Security:

For policymakers, this is a stress test for current export controls. It signals that the battleground is moving from securing the raw hardware to monitoring its deployment and indirect utilization. Investors must re-evaluate risk profiles associated with firms operating in this gray area, while national security experts will analyze the effectiveness of supply chain choke points.

The Reaction: How the Incumbents Are Bracing

When a challenger generates enough buzz to cause incumbents to "brace," it signals a tangible threat to market share, talent acquisition, and R&D timelines. We should look for evidence of this anxiety in how OpenAI, Anthropic, and Google respond to emerging Chinese AI models.

This reaction manifests in several ways:

- Talent Poaching and Retention: Increased spending on compensation and exclusivity agreements for top researchers.

- Speed of Deployment: Rushing slightly less-polished models to market to stay ahead of anticipated competitor announcements.

- Shifting Narrative: Emphasizing regulatory compliance and safety standards in public messaging, subtly casting competitors operating under different national rules as risky alternatives.

The fact that Google, OpenAI, and Anthropic are all reportedly on high alert suggests that Deepseek’s potential advancement is perceived as systemic, rather than niche. It implies that the new model might be targeting capabilities—perhaps superior reasoning in non-English languages or novel forms of efficiency—that directly threaten the core value propositions of the US market leaders.

Future Implications: Decentralization and the Era of Accessible Power

The anticipated Deepseek release, framed by hardware controversy, points toward several inevitable future trends in the AI ecosystem:

1. The End of AI Monopoly

For years, the sheer cost of building frontier models (tens of thousands of top-tier GPUs) created an almost impenetrable moat around a handful of companies. If Deepseek validates the idea that comparable intelligence can be achieved through faster iteration, architectural cleverness, or access to different supply chains, that moat erodes significantly. We are moving toward a world where the ability to innovate algorithmically matters as much as, if not more than, sheer capital expenditure.

2. Increased Hardware Specialization and Diversification

The dependence on a single supplier (Nvidia) for the most advanced AI chips is proving to be a massive strategic vulnerability. This situation will accelerate investments in alternatives:

- Western labs (Google with TPUs, Amazon with Trainium/Inferentia) will double down on their proprietary silicon.

- Emerging players, like Deepseek, will become masters of leveraging *any* available high-performance hardware, or innovating around limitations, creating more diverse, complex supply chains that are harder for any single government to regulate.

3. The Blurring Lines of National AI Strategy

The next generation of AI development will be inextricably linked to national industrial policy. When an AI model is rumored to be built on restricted hardware, the technology itself becomes a national asset or liability. Businesses operating internationally must be acutely aware of where their models are trained, what data they consume, and where they are deployed, as regulatory environments shift rapidly based on perceived technological parity or superiority between nations.

Actionable Insights for the Road Ahead

Regardless of the final benchmark scores, the mere *anticipation* of this release provides critical lessons for every organization leveraging AI:

For Business Leaders: Do not assume US dominance is permanent. Start rigorously testing and piloting models from diverse sources now. Look beyond the established names for efficiency gains that might be hiding in academically strong, but commercially less visible, competitors. Your long-term AI strategy must be vendor-agnostic and politically aware.

For Technical Teams: Focus efforts on optimization. If advanced training hardware becomes scarcer or more expensive due to policy friction, the ability to extract maximum performance from existing or slightly older hardware (through quantization, better inference engines, or MoE techniques) becomes a competitive advantage.
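As one illustration of the optimization techniques named above, the following is a minimal sketch of symmetric 8-bit weight quantization in plain Python. It assumes a single per-tensor scale and a hypothetical list of weights; production toolchains typically use per-channel scales, calibration data, and packed integer storage.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]

# Hypothetical weights, chosen only for illustration.
weights = [0.42, -1.27, 0.05, 0.9]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Worst-case rounding error; it is bounded by half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now needs one byte instead of four (relative to 32-bit floats), at the cost of a small, bounded rounding error. This is the basic trade that lets larger models fit on older or smaller accelerators.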

For Investors: Watch the hardware manufacturers closely. Any perceived leak or successful circumvention of export controls will create volatility in the semiconductor sector, signaling either a failure of policy enforcement or a triumph of non-US engineering ingenuity. The next major AI wave may be fueled by hardware sources you are not currently tracking.

The era of quiet, predictable iteration in frontier AI is over. Deepseek's rumored release serves as a potent reminder that breakthroughs can emerge from unexpected corners, driven by immense national will and the constant, often shadowy, pursuit of superior computation. The race is now officially a multi-front war, and the world’s leading labs are right to be bracing.