The LLM Orchestration Revolution: How Modular AI Services Are Reshaping Infrastructure

The landscape of Artificial Intelligence is undergoing a fundamental transformation. For years, the focus was on building the single biggest, most capable Large Language Model (LLM)—a monolithic digital brain. While models like GPT-4 and Claude continue to astound us with their general knowledge, the *industrialization* of AI requires something more precise, reliable, and cost-effective.

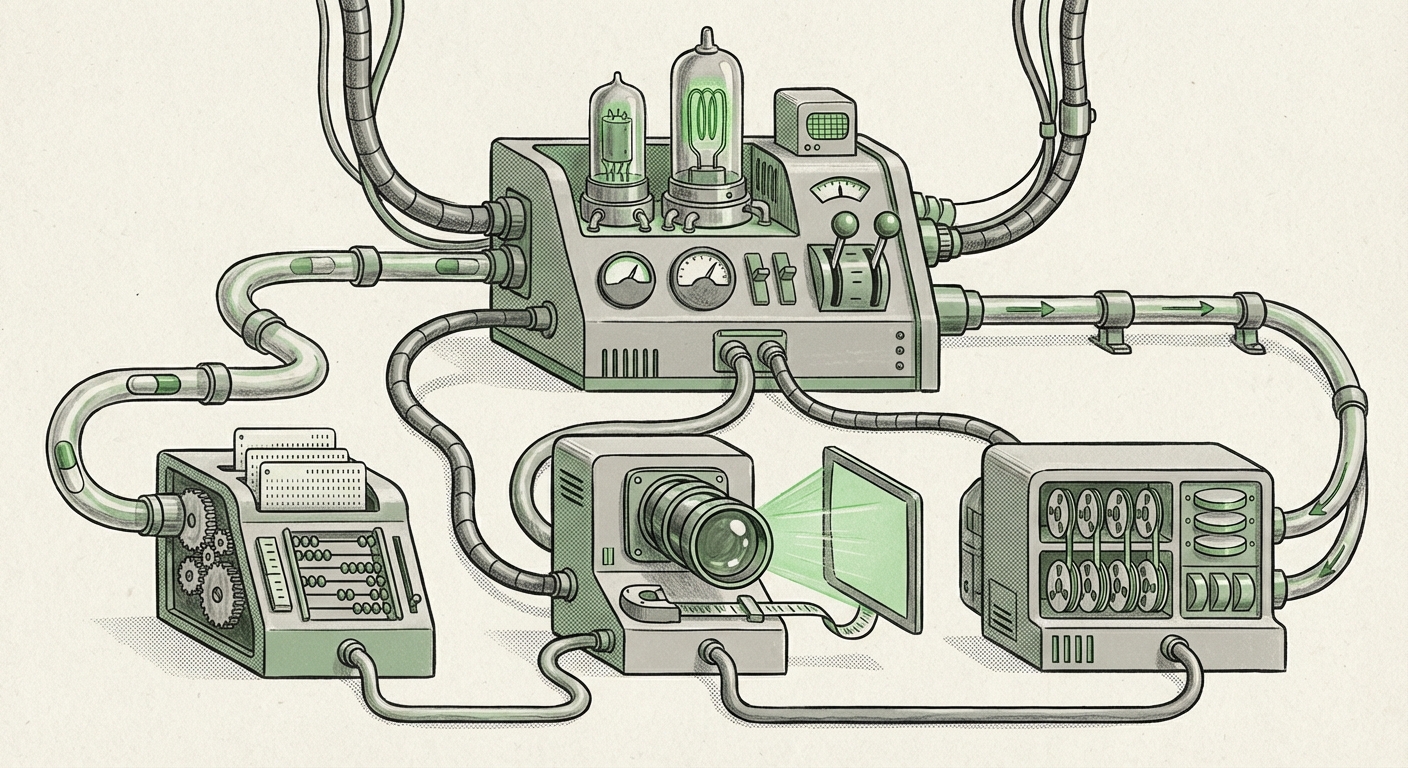

A critical new development, highlighted by platforms deploying specialized tools like Meta-Clustering Protocol (MCP) servers as callable endpoints, signals this shift. We are moving away from the isolated black box toward a sophisticated ecosystem where LLMs act as intelligent *orchestrators*, directing traffic to a fleet of specialized, high-performance microservices. This is the era of **Modular AI**.

The Birth of the AI Microservice: Beyond the Generalist

Imagine you hire a brilliant generalist consultant. They know a little about everything—marketing, law, coding, and accounting. If you need a highly complex tax optimization strategy, you probably wouldn't rely solely on the generalist; you’d want an expert CPA. In the AI world, LLMs are the brilliant generalists, but enterprises need expert CPAs.

Deploying specialized AI models (like the MCP server for deep data pattern recognition) as a simple API endpoint makes them instantly accessible. This treats specialized AI not as a research curiosity but as a reliable, deployable software component. This concept is crucial because:

- Precision Matters: Generalist LLMs often "hallucinate" or struggle with proprietary data structures. A specialized model trained only on clustering data can deliver more accurate, more easily verified results for that one task.

- Cost Efficiency: Running a massive foundational model for a simple task like "find related image clusters" is expensive. Calling a small, efficient, specialized model via a dedicated API is significantly cheaper and faster.

The Technical Bridge: Function Calling as the Universal Connector

How does the generalist LLM know *when* and *how* to call the specialized service? The answer lies in a capability known as **Function Calling** (or Tool Use, as some platforms call it).

Function Calling is the mechanism that lets an LLM signal that a user's request requires external computation or data access. When a user asks, "Analyze these customer segments and tell me the five most common purchasing behaviors," the LLM recognizes that it lacks the internal tools for deep analysis. Instead of answering directly, it generates a structured output (a formal instruction, typically JSON) that says, in effect, "Call the `MCP_Cluster_Analyzer` function with these specific customer inputs."

This architectural choice solidifies the modular trend. Looking at how these tools are being standardized across the industry, it becomes clear that this is the path forward for enterprise AI integration. Comparisons between OpenAI's function calling and the equivalent tool support in Google's Gemini show that the underlying principle is identical: LLMs must become proficient at delegation. This delegation framework transforms the LLM from a mere text generator into a powerful workflow engine.
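To make the delegation step concrete, here is a minimal sketch of what happens after the model emits its structured instruction. The JSON shape (`name` plus `arguments`), the `TOOLS` registry, and the body of `mcp_cluster_analyzer` are assumptions for illustration only; real providers wrap tool calls in their own response formats, and the actual clustering service would be a remote endpoint rather than a local function.

```python
import json


def mcp_cluster_analyzer(segments, top_k):
    """Hypothetical stand-in for the specialized service: ranks behaviors by frequency."""
    counts = {}
    for segment in segments:
        for behavior in segment["behaviors"]:
            counts[behavior] = counts.get(behavior, 0) + 1
    # Sort by frequency (descending), then by name, so the result is deterministic.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [name for name, _ in ranked[:top_k]]


# Registry mapping tool names the model may emit to local callables.
TOOLS = {"MCP_Cluster_Analyzer": mcp_cluster_analyzer}


def dispatch(tool_call_json):
    """Parse the model's structured output and invoke the named tool."""
    call = json.loads(tool_call_json)
    tool = TOOLS.get(call["name"])
    if tool is None:
        raise KeyError(f"unknown tool: {call['name']}")
    return tool(**call["arguments"])


# Assumed shape of the model's structured output for the example request.
model_output = json.dumps({
    "name": "MCP_Cluster_Analyzer",
    "arguments": {
        "segments": [
            {"id": "a", "behaviors": ["repeat_purchase", "coupon_use"]},
            {"id": "b", "behaviors": ["repeat_purchase"]},
        ],
        "top_k": 1,
    },
})
result = dispatch(model_output)
```

The important point is that the model never runs the analysis itself. It only names a tool and supplies arguments, and ordinary application code decides whether and how to execute the call.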

Implications for Developers

For developers, this means shifting focus. Instead of wrestling with prompt engineering to force a generalist model to approximate expert analysis, the task becomes designing, deploying, and documenting high-quality, secure API endpoints that the LLM can trust. The skill is now in crafting the *schema*—the detailed description of the tool the LLM needs to see—rather than just the prompt.
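As a rough illustration of what "crafting the schema" involves, the sketch below describes the clustering tool in a JSON-Schema-style dictionary. The field layout is an assumption modeled on the general pattern most function-calling APIs follow; the exact wrapper keys differ by provider, and the `top_k` parameter and the `undocumented_parameters` helper are hypothetical.

```python
import json

cluster_tool_schema = {
    "name": "MCP_Cluster_Analyzer",
    "description": (
        "Groups customer segments by purchasing behavior and returns the "
        "most common behaviors. Use for clustering questions only."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "segments": {
                "type": "array",
                "items": {"type": "object"},
                "description": "Customer segments to analyze.",
            },
            "top_k": {
                "type": "integer",
                "minimum": 1,
                "description": "How many behaviors to return.",
            },
        },
        "required": ["segments"],
    },
}


def undocumented_parameters(schema):
    """Return names of parameters that lack a description the model can read."""
    properties = schema["parameters"]["properties"]
    return [name for name, spec in properties.items() if not spec.get("description")]


missing = undocumented_parameters(cluster_tool_schema)
serialized = json.dumps(cluster_tool_schema, indent=2)
```

The descriptions are the only documentation the model sees, so a small lint step like `undocumented_parameters` can catch a silent gap before it turns into a misrouted call.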

The MLOps Challenge: Scaling the AI Ecosystem

If every organization is creating dozens of these specialized AI microservices, how do they ensure they run reliably, quickly, and securely? This brings us squarely to the evolution of MLOps (Machine Learning Operations).

Deploying a traditional web application as an API is well-understood. Deploying a specialized model designed for heavy computation (like the MCP server) and ensuring it can handle bursts of requests from hundreds of simultaneous LLM calls is a different beast entirely. This is where trends in scalable API serving become paramount.

Future-proof AI infrastructure must address:

- Low Latency Serving: The LLM waits for the tool's response. If the specialized API is slow, the entire interaction—and the user experience—fails. This drives adoption of optimized serving frameworks, GPU sharing, and edge deployment for smaller models.

- Version Control: If you update the clustering logic (the MCP model) to be 10% more accurate, you need a system that allows the LLM orchestrator to transition smoothly without breaking existing workflows.

- Security and Isolation: Each specialized service must be strictly sandboxed. If the LLM accidentally calls an internal database access tool instead of the public weather tool, the security breach potential is enormous.
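Two of the requirements above, version transitions and tool isolation, can be sketched with a small registry. Everything here (`Tool`, `ToolRegistry`, the `"public"` and `"internal"` scope labels) is hypothetical, and an allowlist check like this is only one layer: it does not replace real sandboxing at the network or process level.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Set, Tuple


@dataclass(frozen=True)
class Tool:
    name: str
    version: str
    visibility: str  # "public" or "internal" (hypothetical scope labels)
    handler: Callable[..., object]


class ToolRegistry:
    """Maps (name, version) to tools and enforces a per-caller allowlist."""

    def __init__(self) -> None:
        self._tools: Dict[Tuple[str, str], Tool] = {}
        self._default_version: Dict[str, str] = {}

    def register(self, tool: Tool, make_default: bool = False) -> None:
        self._tools[(tool.name, tool.version)] = tool
        # The first registered version stays the default until one is promoted.
        if make_default or tool.name not in self._default_version:
            self._default_version[tool.name] = tool.version

    def call(self, name: str, caller_scopes: Set[str],
             version: Optional[str] = None, **arguments):
        resolved = version or self._default_version.get(name)
        tool = self._tools.get((name, resolved))
        if tool is None:
            raise KeyError(f"no tool {name!r} at version {resolved!r}")
        # Deny by default: the caller must hold the tool's visibility scope.
        if tool.visibility not in caller_scopes:
            raise PermissionError(f"{name!r} is not exposed to this caller")
        return tool.handler(**arguments)


registry = ToolRegistry()
registry.register(Tool("cluster", "1.0", "public", lambda points: "clustered by v1.0"))
registry.register(Tool("cluster", "1.1", "public", lambda points: "clustered by v1.1"))
registry.register(Tool("db_query", "1.0", "internal", lambda sql: "rows"))

# Existing workflows keep the default version; new ones can pin the update.
stable_result = registry.call("cluster", {"public"}, points=[])
pinned_result = registry.call("cluster", {"public"}, version="1.1", points=[])

# A public-scoped orchestrator cannot reach the internal database tool.
try:
    registry.call("db_query", {"public"}, sql="SELECT 1")
    blocked = False
except PermissionError:
    blocked = True
```

Keeping the old version as the default until someone explicitly promotes the new one is one way to let the orchestrator transition without breaking existing workflows.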

The need to treat specialized models as robust, production-ready APIs is pushing MLOps tools to become more sophisticated in managing heterogeneous workloads—handling everything from massive, slow foundational models to tiny, fast inference tasks simultaneously.

The Strategic Balance: Specialized vs. General Purpose AI

The most profound implication of this modular shift is strategic. Businesses must now define a clear AI architecture policy: When do we use the Generalist, and when do we deploy a Specialist?

This strategic dilemma is widely recognized in the industry, prompting conversations about the long-term viability and role of different model sizes.

Why Specialization Will Thrive

For tasks requiring deep, repeatable, verifiable processes (fraud detection, precise financial modeling, medical imaging analysis, or proprietary data clustering), specialized models will dominate. They tend to be cheaper to run, easier to audit, and less prone to the unpredictable failures of general-purpose systems.

The generalist LLM serves as the perfect "front-end"—handling natural language understanding, intent classification, and workflow management. It determines the question, then hands the math or the proprietary lookup to the expert microservice.

The Future Role of the LLM

The future LLM, therefore, is less of a knowledge base and more of a cognitive hub—a **System Administrator for Intelligence**. Its value lies not just in what it knows, but in how effectively it can assemble the right tools from the available ecosystem to solve a novel problem.

Practical Implications and Actionable Insights

For technology leaders looking to capitalize on this modular AI paradigm, several actionable steps emerge:

1. Audit Your AI Portfolio for Modularity

Identify every proprietary, high-performing model your company currently uses. If any of them still run on legacy infrastructure or require manual steps, prioritize refactoring them into clean, well-documented REST APIs. Treat these internal models with the same rigor you would apply to first-class external services.
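As one possible starting point, the sketch below wraps an existing model function in a small JSON-over-HTTP endpoint using only the Python standard library. The `legacy_model_predict` function, the `/v1/predict` path, and the `features` field are all placeholders; a production service would more likely use a dedicated web framework and add authentication.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def legacy_model_predict(features):
    """Hypothetical stand-in for an existing in-house model."""
    return {"score": sum(features) / len(features)}


def handle_predict(raw_body):
    """Pure request logic: returns (HTTP status, JSON-serializable payload)."""
    try:
        features = json.loads(raw_body)["features"]
        if not features or not all(isinstance(x, (int, float)) for x in features):
            raise ValueError("features must be a non-empty list of numbers")
    except (KeyError, TypeError, ValueError) as error:
        # json.JSONDecodeError is a ValueError, so malformed JSON lands here too.
        return 400, {"error": str(error)}
    return 200, legacy_model_predict(features)


class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/v1/predict":
            status, payload = 404, {"error": "not found"}
        else:
            length = int(self.headers.get("Content-Length", 0))
            status, payload = handle_predict(self.rfile.read(length))
        data = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def make_server(port=8080):
    """Build (but do not start) the server; call serve_forever() on it to run."""
    return HTTPServer(("127.0.0.1", port), PredictHandler)
```

Separating `handle_predict` from the HTTP handler keeps the request logic testable without opening a socket, which also makes it easier to document the contract the LLM will rely on.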

2. Embrace Tool Definition Standards

Ensure your development teams are fluent in defining clear function schemas (API descriptions). The better the schema, the more reliably the LLM can invoke the service. This clarity reduces costly errors in orchestration.
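A clear schema also lets the orchestration code reject malformed calls before they reach the service. The validator below is a deliberately small sketch that checks only required fields and basic types; the schema contents and the `validate_arguments` helper are assumptions, and a real deployment would probably rely on a complete JSON Schema validator instead.

```python
# Maps JSON Schema type names to the Python types accepted for them.
TYPE_MAP = {
    "string": str,
    "integer": int,
    "number": (int, float),
    "boolean": bool,
    "array": list,
    "object": dict,
}

# Hypothetical parameter schema for the clustering tool.
cluster_parameters = {
    "type": "object",
    "properties": {
        "segments": {"type": "array"},
        "top_k": {"type": "integer"},
    },
    "required": ["segments"],
}


def validate_arguments(schema, arguments):
    """Return a list of problems; an empty list means the call looks valid."""
    problems = []
    properties = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in arguments:
            problems.append(f"missing required argument: {name}")
    for name, value in arguments.items():
        if name not in properties:
            problems.append(f"unexpected argument: {name}")
            continue
        type_name = properties[name].get("type")
        expected = TYPE_MAP.get(type_name)
        if expected is None:
            continue  # No recognized type constraint to check.
        # bool is a subclass of int in Python, so reject it for numeric fields.
        is_stray_bool = isinstance(value, bool) and type_name in ("integer", "number")
        if is_stray_bool or not isinstance(value, expected):
            problems.append(f"argument {name} should be {type_name}")
    return problems


good_call = validate_arguments(cluster_parameters, {"segments": [], "top_k": 5})
bad_call = validate_arguments(cluster_parameters, {"top_k": "five"})
```

Returning a list of problems, rather than raising on the first one, makes it possible to feed all the errors back to the model in a single corrective turn.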

3. Develop an Orchestration Layer Strategy

Decide whether you will use a third-party orchestration platform, build your own routing layer (perhaps using frameworks like LangChain or Semantic Kernel), or rely solely on the native function-calling features of foundational models. This layer must be robust enough to handle failures from downstream services gracefully.
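Whichever option you choose, graceful handling of downstream failures usually comes down to bounded retries plus a defined fallback. The following sketch shows that pattern in plain Python; `call_with_fallback`, its parameters, and the two example services are hypothetical, and it assumes each tool enforces its own request timeout.

```python
import time


def call_with_fallback(tool, arguments, retries=2, backoff_seconds=0.0, fallback=None):
    """Try a tool up to retries + 1 times, then return a structured failure."""
    last_error = None
    for attempt in range(retries + 1):
        try:
            return {"ok": True, "result": tool(**arguments), "attempts": attempt + 1}
        except Exception as error:  # Broad on purpose: any tool failure is retried.
            last_error = error
            if attempt < retries:
                # Exponential backoff between attempts.
                time.sleep(backoff_seconds * (2 ** attempt))
    return {
        "ok": False,
        "result": fallback,
        "error": str(last_error),
        "attempts": retries + 1,
    }


# Example 1: a service that fails twice and then recovers.
call_count = {"value": 0}


def flaky_cluster_service(points):
    call_count["value"] += 1
    if call_count["value"] < 3:
        raise ConnectionError("service unavailable")
    return {"clusters": len(points)}


# Example 2: a service that never responds in time.
def unreachable_service(points):
    raise TimeoutError("timed out")


recovered = call_with_fallback(flaky_cluster_service, {"points": [1, 2, 3]})
degraded = call_with_fallback(
    unreachable_service, {"points": []}, retries=1, fallback="unavailable"
)
```

Returning a structured failure instead of raising lets the orchestrating LLM see that a tool is unavailable and explain that to the user, rather than ending the whole interaction with an exception.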

4. Train for Delegation, Not Just Generation

When training internal teams on using modern AI, emphasize testing the LLM's ability to *choose the right tool* and *format the input correctly* for that tool, rather than simply testing its ability to write persuasive text.
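A delegation test can be as simple as a table of prompts with the tool and argument names each should produce. The harness below is a sketch under stated assumptions: `stub_model` is a keyword-matching placeholder for a real LLM call, and the test cases and the `get_weather` tool are invented for illustration.

```python
def evaluate_tool_selection(choose_tool, cases):
    """Score a tool-choosing callable on tool name and argument names."""
    results = []
    for case in cases:
        call = choose_tool(case["prompt"])
        results.append({
            "prompt": case["prompt"],
            "right_tool": call["name"] == case["expected_tool"],
            "right_arguments": set(call["arguments"]) == set(case["expected_arguments"]),
        })
    passed = sum(1 for r in results if r["right_tool"] and r["right_arguments"])
    return passed / len(results), results


def stub_model(prompt):
    """Placeholder for a real model call: routes on a single keyword."""
    if "cluster" in prompt.lower():
        return {"name": "MCP_Cluster_Analyzer", "arguments": {"segments": []}}
    return {"name": "get_weather", "arguments": {"city": "Paris"}}


cases = [
    {"prompt": "Cluster these customer segments",
     "expected_tool": "MCP_Cluster_Analyzer", "expected_arguments": ["segments"]},
    {"prompt": "What is the weather in Paris?",
     "expected_tool": "get_weather", "expected_arguments": ["city"]},
    {"prompt": "Group these images by similarity",
     "expected_tool": "MCP_Cluster_Analyzer", "expected_arguments": ["segments"]},
]

accuracy, results = evaluate_tool_selection(stub_model, cases)
misrouted = [r["prompt"] for r in results if not r["right_tool"]]
```

The third case is the interesting one: the request is a clustering task phrased without the word "cluster", which is exactly the kind of paraphrase a delegation test suite should cover.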

Conclusion: The Assembly Line of Intelligence

The shift towards deploying specialized AI components like MCP servers as callable API endpoints, unified by intelligent LLM orchestration, represents a maturity phase in artificial intelligence. It moves AI from a fascinating technology demo to an essential, predictable piece of enterprise infrastructure.

We are witnessing the assembly line of intelligence being built. Large, powerful foundational models provide the vision and control, while lean, specialized models provide the high-speed, precise labor. This modularity ensures that AI systems are not just scalable but also interpretable, cost-efficient, and deeply integrated into the specific workflows that drive business value. The future isn't about finding one perfect model; it's about mastering the art of connecting the perfect set of models.