The Classroom AI Land Grab: Why Google's Free Gemini Training for 6 Million Educators Signals the Future of Tech Infrastructure

The recent announcement that Google intends to provide free training on its Gemini AI to all 6 million U.S. educators is far more than a benevolent gesture toward public service. It is a calculated, high-stakes strategic maneuver in the ongoing technological arms race: a race to secure the foundational layer of the next generation of digital work and learning. For any technologist, business leader, or policymaker watching the trajectory of artificial intelligence, this move into the K-12 and higher education sectors is a bellwether for how AI will be embedded into the very fabric of American professional life.

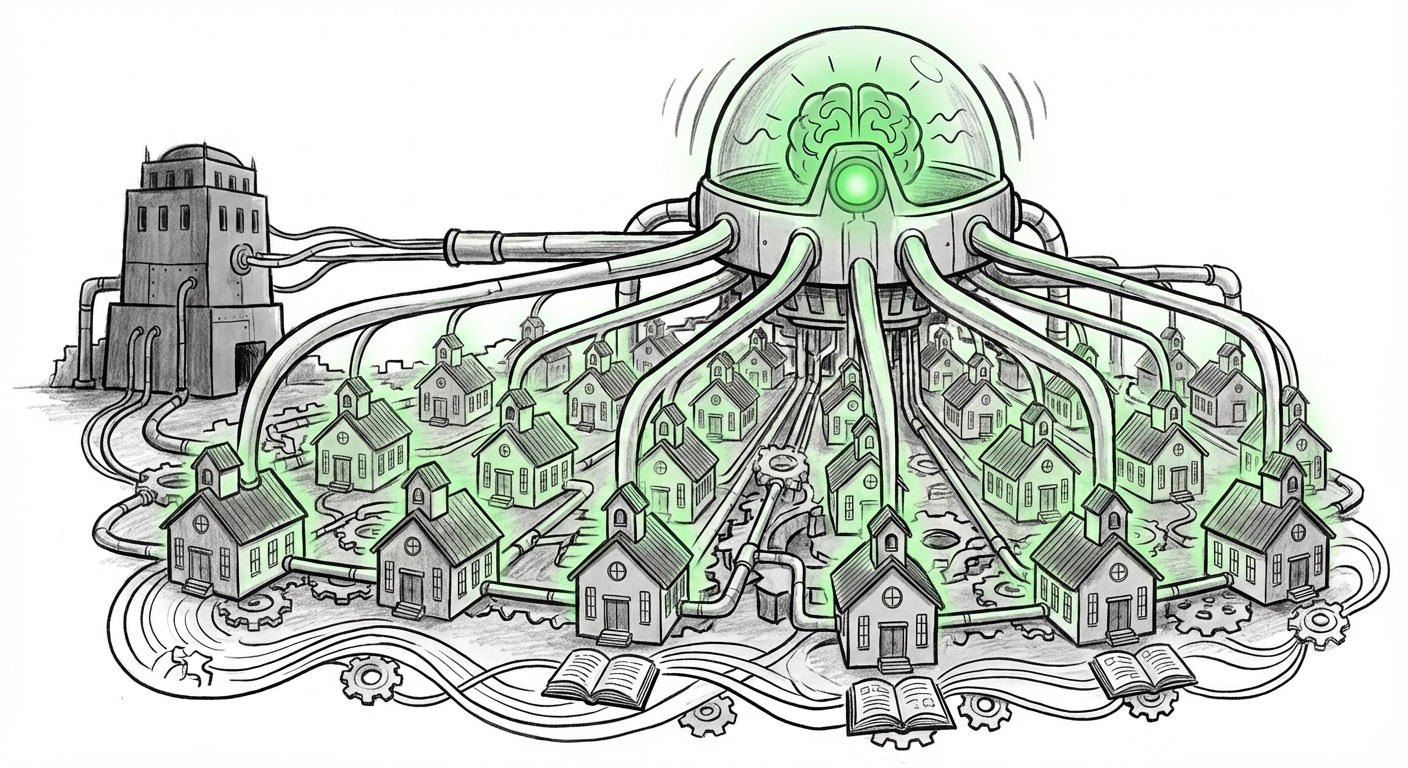

The Strategic Significance: Infrastructure, Not Just Software

When a company offers a massive, free educational initiative focused on its core technology, the logic is simple: adoption breeds dependency. Google is not just trying to sell software licenses; it is trying to install the operating system for how millions of professionals think about and execute their daily tasks. By familiarizing six million teachers, the gatekeepers of the next generation, with Gemini, Google is aiming to build a moat that competitors will find very hard to cross.

Imagine a teacher who spends years designing lesson plans, grading rubrics, and personalized study guides with Gemini. When the district later upgrades its enterprise cloud or productivity suite, switching away from a tool its staff know intimately becomes very costly in lost institutional knowledge and retraining time. This is the long game being played in the education vertical.

The Competitive Crucible: Google vs. Microsoft

This strategy is best understood through the lens of fierce competition. The education technology (EdTech) space has long been dominated by a duopoly: Google Workspace for Education and Microsoft 365 (with Azure underpinning Microsoft's services). Microsoft, which is now bringing Copilot into its education offerings, has historically held a strong incumbent advantage in many institutions thanks to established contracts and widespread familiarity with Office products.

Google's free Gemini push is a direct counter-offensive. It seeks to bypass procurement departments, at least initially, and win over the end users (the teachers) directly. If teachers come to rely on Gemini for their daily workflow, procurement teams are likely to follow. This shifts the focus from **who has the best enterprise contract** to **who has the most deeply integrated user experience** across the classroom.

Comparing the Google for Education and Microsoft Education strategies makes this dynamic clear. Both giants understand that the education market is sticky; once students and staff are trained on an ecosystem, migration is rare. Google is betting that the utility of Gemini *now* outweighs the convenience of existing Microsoft relationships.

Pedagogical Transformation: From Tool to Co-Pilot

For the 6 million educators targeted, the implications are immediate and profound. This isn't about learning to use a new piece of software; it’s about integrating a cognitive partner into teaching. This necessitates a rapid evolution in teacher skill sets, moving toward **AI literacy** rather than mere digital literacy.

Discussions of K-12 AI literacy standards and teacher preparedness point to the same conclusion: the biggest gap today is not access to AI but a pedagogical framework for its responsible use. Google's training initiative attempts to fill this vacuum by implicitly defining *how* Gemini should be used, from generating differentiated instruction materials to summarizing complex research papers for Advanced Placement (AP) courses.

For the average teacher, this means:

- Efficiency Gains: Dramatically reducing time spent on administrative tasks (drafting emails to parents, creating quiz variations).

- Personalization at Scale: The ability to create learning paths tailored to individual student needs, something previously impractical due to time constraints.

- Curriculum Evolution: Shifting classroom focus away from rote memorization (factual recall is something AI handles easily) toward critical thinking, prompt engineering, and verification of AI outputs.

If this training is effective, it could set the baseline for what counts as a "competent" teacher in the near future. Teachers who embrace it become AI-enabled; those who do not risk being left behind by the pace of technological change.

The Higher Hurdle: Ethical and Policy Implications

While K-12 adoption can be driven by rapid, centrally mandated training, the higher education sector presents a more complex challenge, as the ongoing policy debate over generative AI in higher education shows. Universities are fiercely protective of academic integrity and data sovereignty.

Google's push forces university IT directors and academic senates to move faster than they might prefer. The central tension revolves around **data control**. When teachers upload student work or sensitive internal data to a third-party LLM for analysis or enhancement, where does that data go? Is it used for future model training? FERPA (Family Educational Rights and Privacy Act) governs student education records at both K-12 schools and universities; K-12 districts must additionally satisfy COPPA (Children's Online Privacy Protection Act) for students under 13, while in higher education FERPA compliance is the primary barrier.

This leads to the crucial societal dimension: the ethical implications of large-scale AI training for public sector workers. Free training often means the user is the product. When tech giants flood a critical public sector like education with their tools, they gain immense influence over curriculum and data governance. Policymakers must ensure that this rapid integration does not lead to:

- Algorithmic Bias: If Gemini reflects biases present in its training data, embedding it across 6 million classrooms risks standardizing those biases across an entire generation of learning materials.

- Data Centralization Risk: Concentrating student interaction data with one or two major providers creates a national digital vulnerability.

Actionable Insights for the Future of AI Adoption

For organizations outside the classroom—businesses, government agencies, and even other large software providers—Google’s education strategy offers several key takeaways about successful AI deployment:

1. The Power of the Trojan Horse User

Businesses should recognize that grassroots adoption by end-users can often override top-down procurement strategies. Identify the most influential, technically savvy users in your organization (your "internal educators") and empower them first. If they become efficient and evangelical about a new AI tool, the rest of the enterprise will follow, making infrastructure investment decisions easier.

2. Standardization vs. Flexibility

Google aims for standardization (everyone using Gemini). While standardization drives efficiency and centralized support, organizations must build policies that allow for flexibility. As the resistance in higher education suggests, mandating a single AI tool across an entire institution can stifle innovation in specialized departments. The future requires ecosystems that support **hybrid AI deployment**, allowing teams to use the best specialized model while keeping core data within secure boundaries.

3. Training as the Ultimate Moat

The market is saturated with powerful AI models. The true differentiator is **user capability**. Companies that invest heavily in training their workforce not just on *what* the AI does, but on *how to think critically* about its outputs, will generate superior ROI. Google is betting that teachers proficient in Gemini will outperform colleagues working with a competitor's model they understand less well, even if the underlying technology is comparable.

Conclusion: Shaping the Next Digital Generation

Google's commitment to training 6 million U.S. educators could prove a pivotal moment in the history of educational technology. It hardens the battle lines in the AI platform war, turning the classroom into the next major frontier for cloud infrastructure dominance. If successful, Gemini will not just be a tool teachers use; it will become the cultural and cognitive baseline for how teaching and learning are conducted in the 21st century.

The implications stretch far beyond test scores. They touch on national digital literacy, data governance practices, and the competitive health of the entire U.S. tech landscape. We are witnessing the deliberate, large-scale installation of an AI mindset into the very institutions responsible for cultivating future workers and citizens. The next few years will reveal whether this strategic embedding leads to an educational renaissance or simply a consolidation of technological power.