The API Revolution: How Standardized Model Servers and LLM Function Calling Define Production AI

Artificial intelligence is maturing rapidly. We are moving past the novelty of large language models (LLMs) generating prose and entering the harder phase of operationalizing them: making them reliable, controllable, and useful inside complex business logic. A key point of convergence in this evolution is the shift toward treating specialized AI components, such as Model Context Protocol (MCP) servers, not as isolated experiments but as standardized, accessible services.

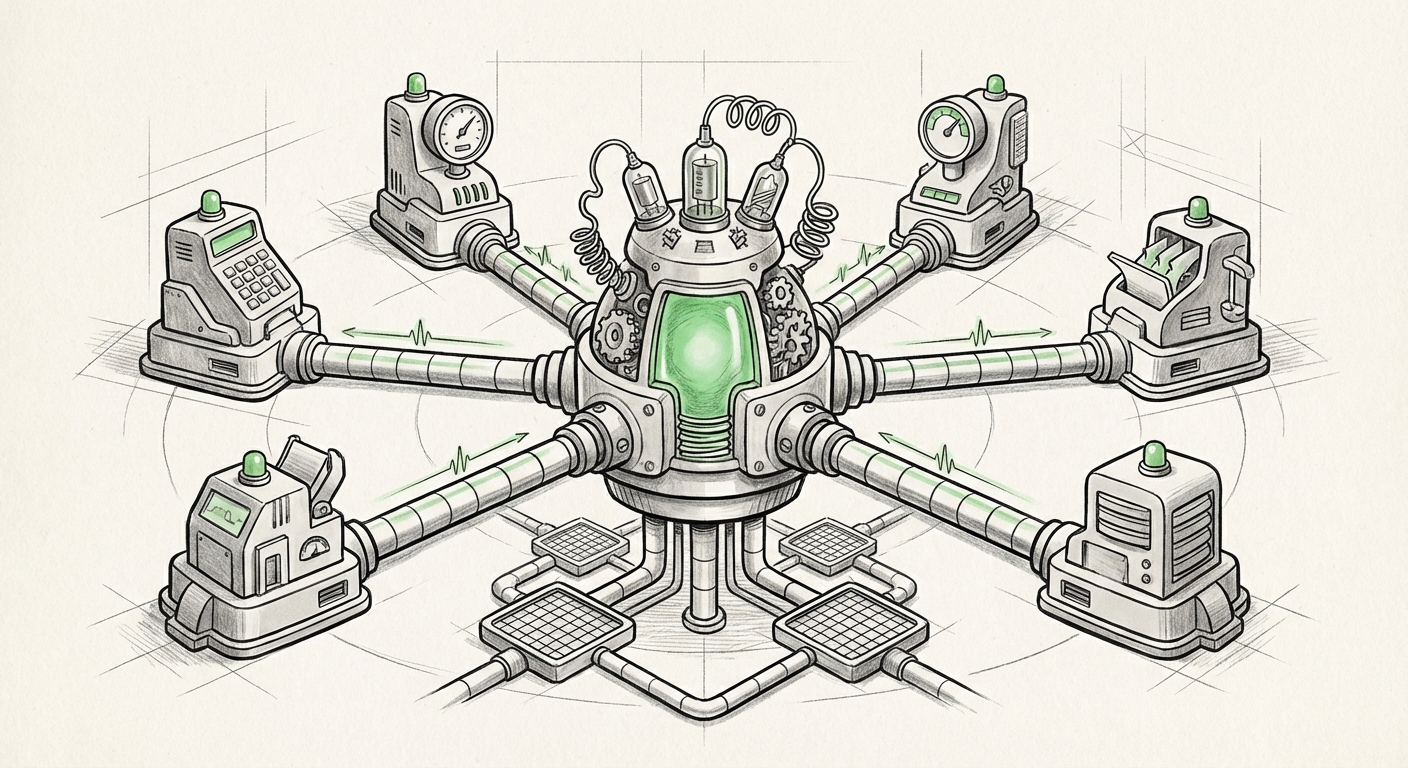

Recent efforts to deploy MCP servers directly as API endpoints, so that LLMs can reach them through function calling, highlight a powerful architectural pattern. It is a blueprint for the next generation of intelligent systems, in which an LLM acts as the conductor, orchestrating specialized, governed models that each perform a specific task.

The Great Convergence: Standardization Meets Extensibility

To fully grasp the significance of this trend, we must examine its three foundational pillars:

- LLM Extensibility (Function Calling): Giving the LLM the ability to actively use tools.

- Model Standardization (MCP): Ensuring specialized models speak a common, manageable language.

- Robust Infrastructure (API Serving): Treating AI components like any other enterprise service.

Imagine a super-smart assistant (the LLM). It can talk beautifully, but to actually do things—like run a specific financial forecasting model or validate a compliance document—it needs access to reliable tools. Function calling is the universal remote control that lets the assistant decide which tool to use and how to feed it information.

When specialized tools, such as those served by an MCP server (which publishes a set of tools, each with a name, a description, and an input schema, over a standard protocol), are exposed as a simple API endpoint, the LLM gains controlled access to those capabilities. This turns the LLM from a sophisticated text generator into a genuine workflow orchestrator.
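To make "a simple API endpoint" concrete, the sketch below builds the kind of message an orchestrator might send to an MCP server and reads the reply. It assumes the server speaks JSON-RPC 2.0 and exposes a `tools/call` method taking a tool `name` and an `arguments` object, returning a `content` list of typed parts, as described in the public MCP specification. The tool name `forecast_quarterly_revenue` and its arguments are hypothetical.

```python
import json
from itertools import count

# Monotonic request IDs so responses can be matched to requests (a JSON-RPC 2.0 requirement).
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


def extract_text(response_json: str) -> str:
    """Concatenate the text parts of a tools/call result, raising on errors."""
    response = json.loads(response_json)
    if "error" in response:
        # Protocol-level failure (unknown method, bad params, and so on).
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    if result.get("isError"):
        # The tool itself ran but reported a failure.
        raise RuntimeError("tool reported an error")
    return "".join(part["text"] for part in result["content"] if part["type"] == "text")


# Hypothetical tool and arguments, for illustration only.
print(build_tool_call("forecast_quarterly_revenue", {"region": "EMEA", "quarter": "2025-Q3"}))
```

Because every MCP tool is invoked through the same method and envelope, the orchestrator needs only these two helpers regardless of which model sits behind the tool.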

Pillar 1: Function Calling—The LLM’s New Toolkit

LLM function calling, or tool use, has moved rapidly from an experimental feature to an industry expectation. It addresses a core limitation of foundation models: they are excellent at general reasoning but lack access to real-time data and to proprietary, highly optimized algorithms. As discussions of tool-augmented generation note, the ability of models to interface with external systems is paramount for real-world utility (Hugging Face: Tool-Augmented Generation).

Function calling is essentially a structured dialogue. The developer describes the capabilities of the external API (e.g., "This API calculates quarterly risk scores") along with its required inputs and expected outputs. When a user prompts the LLM, the model decides whether that tool is needed. If it is, the model does not answer directly; instead, it generates a structured JSON call telling the system, "Please run the risk score API with these parameters." The system executes the call against the deployed server and feeds the result back to the LLM, which then generates the final, informed answer.
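The round trip described above can be sketched without any vendor SDK. In this minimal sketch the model's output is simulated as a JSON string, and the tool description uses the JSON Schema style common to function-calling APIs. The `quarterly_risk_score` function, its parameters, and its arithmetic are hypothetical placeholders, not a real risk model.

```python
import json

# Tool description the developer would send to the LLM alongside the user prompt.
RISK_TOOL = {
    "name": "quarterly_risk_score",
    "description": "Calculates the quarterly risk score for a customer account.",
    "parameters": {
        "type": "object",
        "properties": {
            "account_id": {"type": "string"},
            "quarter": {"type": "string"},
        },
        "required": ["account_id", "quarter"],
    },
}


def quarterly_risk_score(account_id: str, quarter: str) -> dict:
    """Hypothetical stand-in for the deployed risk-scoring service."""
    score = (sum(map(ord, account_id + quarter)) % 100) / 100  # placeholder arithmetic
    return {"account_id": account_id, "quarter": quarter, "score": score}


# Dispatch table: tool names the LLM may request, mapped to executable functions.
TOOLS = {"quarterly_risk_score": quarterly_risk_score}


def handle_model_output(model_output: str) -> dict:
    """Execute the tool call the model emitted and package the result for the next turn."""
    call = json.loads(model_output)
    fn = TOOLS.get(call["name"])
    if fn is None:
        content = json.dumps({"error": f"unknown tool {call['name']}"})
    else:
        content = json.dumps(fn(**call["arguments"]))
    # This message is appended to the conversation so the LLM can write its final answer.
    return {"role": "tool", "name": call["name"], "content": content}


# Simulated structured output from the model: "run the risk score API with these parameters".
simulated = '{"name": "quarterly_risk_score", "arguments": {"account_id": "A-42", "quarter": "2025-Q1"}}'
print(handle_model_output(simulated))
```

The dispatch table is the only place the application decides what the model is allowed to execute; an unknown tool name produces an error message for the model rather than an exception.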

For the Business Audience: This means your customer service chatbot is no longer guessing; it can accurately check inventory, process a refund, or generate a specific compliance report by calling the correct internal software component on demand.

Pillar 2: MLOps and the Standardization Imperative

The success of function calling hinges entirely on the reliability of the endpoint being called. This brings us to MLOps best practices. You cannot expose mission-critical logic to an LLM unless you trust the underlying infrastructure. Deploying a specialized model server, whether it's for fraud detection or dynamic pricing, requires robust serving infrastructure (AWS Machine Learning Blog).

This is where protocols like MCP become vital. In an environment where hundreds of specialized models may need to be deployed, updated, or swapped out quickly, a standardized protocol keeps consistent the way each capability is described, invoked, and returns its results. Whether a tool wraps a proprietary vision model or a fine-tuned financial predictor, if it is exposed through a common protocol (like MCP), the LLM orchestration layer does not need to learn a new integration pattern for every new tool.
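One way to picture the "no new integration pattern per tool" benefit: every tool, whatever model sits behind it, implements the same small interface, and the orchestrator only ever talks to that interface. The `SpecializedTool` base class and both tool classes below are hypothetical illustrations, not part of MCP or any library.

```python
from abc import ABC, abstractmethod


class SpecializedTool(ABC):
    """The single contract every tool exposes to the orchestration layer."""

    name: str
    description: str
    input_schema: dict

    @abstractmethod
    def call(self, arguments: dict) -> dict:
        """Run the underlying model and return a JSON-serializable result."""

    def describe(self) -> dict:
        # Uniform metadata the orchestrator can hand to the LLM as a tool definition.
        return {"name": self.name, "description": self.description,
                "input_schema": self.input_schema}


class DefectDetector(SpecializedTool):
    """Hypothetical wrapper around a proprietary vision model."""
    name = "detect_defects"
    description = "Flags defects in a product image."
    input_schema = {"type": "object", "properties": {"image_url": {"type": "string"}},
                    "required": ["image_url"]}

    def call(self, arguments: dict) -> dict:
        # Placeholder standing in for real model inference.
        return {"image_url": arguments["image_url"], "defects": []}


class RevenueForecaster(SpecializedTool):
    """Hypothetical wrapper around a fine-tuned financial predictor."""
    name = "forecast_revenue"
    description = "Forecasts next-quarter revenue from recent quarters."
    input_schema = {"type": "object",
                    "properties": {"history": {"type": "array", "items": {"type": "number"}}},
                    "required": ["history"]}

    def call(self, arguments: dict) -> dict:
        # Naive placeholder: average of the last four quarters.
        recent = arguments["history"][-4:]
        return {"forecast": sum(recent) / len(recent)}


# The orchestrator registers and invokes every tool the same way.
registry = {tool.name: tool for tool in (DefectDetector(), RevenueForecaster())}
print([tool.describe()["name"] for tool in registry.values()])
print(registry["forecast_revenue"].call({"history": [10.0, 12.0, 11.0, 13.0]}))
```

Adding a third model means writing one more subclass; the registry, the tool descriptions sent to the LLM, and the invocation path stay unchanged.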

For the Technical Audience: Standardization dramatically reduces integration friction. It allows MLOps teams to use uniform pipelines for serving, versioning, and monitoring, regardless of the model architecture underneath. This consistency is the bedrock of governance and auditable AI systems.

Pillar 3: The API Endpoint—Treating Models as Infrastructure

The final piece is the move to APIs. In the past, models often ran in bespoke environments or notebooks. To integrate effectively with enterprise software and LLM workflows, models must speak the most universal languages of modern computing: REST or gRPC APIs.

By deploying an MCP server as an API, developers signal that the specialized AI function is now a piece of infrastructure. It must have SLAs, logging, authentication, and scalability—all the properties we expect from any critical service, such as a database or a message queue. This architectural maturity is essential for achieving enterprise-grade AI adoption.
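As a rough illustration of what "treating a model as infrastructure" wraps around the model itself, here is a minimal WSGI endpoint built only on Python's standard library, with authentication, input validation, and request logging. The `X-API-Key` header, the key value, and the `score_transaction` placeholder model are assumptions for this sketch; a production service would add TLS, secret management, rate limiting, and a production-grade server.

```python
import io
import json
import logging
from typing import Optional
from wsgiref.util import setup_testing_defaults

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("model-endpoint")

API_KEYS = {"demo-key"}  # Hypothetical key; load real keys from a secret store.


def score_transaction(payload: dict) -> dict:
    """Placeholder standing in for a fraud-detection model."""
    return {"fraud_probability": 0.9 if payload.get("amount", 0) > 10_000 else 0.1}


def app(environ, start_response):
    """WSGI app: authenticate, parse JSON, run the model, log, return JSON."""
    def respond(status: str, body: dict):
        data = json.dumps(body).encode("utf-8")
        start_response(status, [("Content-Type", "application/json"),
                                ("Content-Length", str(len(data)))])
        return [data]

    if environ.get("HTTP_X_API_KEY") not in API_KEYS:
        log.warning("rejected request: missing or invalid API key")
        return respond("401 Unauthorized", {"error": "unauthorized"})
    if environ.get("REQUEST_METHOD") != "POST":
        return respond("405 Method Not Allowed", {"error": "POST required"})
    try:
        length = int(environ.get("CONTENT_LENGTH") or 0)
        payload = json.loads(environ["wsgi.input"].read(length) or b"{}")
    except ValueError:  # Covers a bad Content-Length and json.JSONDecodeError.
        return respond("400 Bad Request", {"error": "invalid JSON"})
    if not isinstance(payload, dict):
        return respond("400 Bad Request", {"error": "expected a JSON object"})

    result = score_transaction(payload)
    log.info("scored request path=%s result=%s", environ.get("PATH_INFO"), result)
    return respond("200 OK", result)


def call_in_process(body: bytes, api_key: Optional[str]) -> tuple:
    """Exercise the app without opening a socket, handy for smoke tests."""
    environ: dict = {}
    setup_testing_defaults(environ)
    environ.update({"REQUEST_METHOD": "POST", "CONTENT_LENGTH": str(len(body)),
                    "wsgi.input": io.BytesIO(body)})
    if api_key is not None:
        environ["HTTP_X_API_KEY"] = api_key
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    chunks = app(environ, start_response)
    return captured["status"], json.loads(b"".join(chunks))


print(call_in_process(b'{"amount": 50000}', "demo-key"))
```

To serve it locally for experimentation, pass `app` to `wsgiref.simple_server.make_server("127.0.0.1", 8000, app)` and call `serve_forever()` on the result.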

Future Implications: Building the Composable AI Enterprise

This architectural pattern—LLM orchestrating standardized, API-served tools—is not just an incremental improvement; it represents a fundamental shift toward composable AI.

1. Hyper-Personalization and Agentic Workflows

The combination of general reasoning (LLM) and specific execution (API Tools) enables truly agentic workflows. Future applications will see AI agents managing entire business processes autonomously, not just answering questions. For example, an LLM-powered procurement agent could:

- Read a request for a new vendor setup (LLM analysis).

- Call a specialized Compliance Model API (via MCP endpoint) to check geopolitical risk.

- Call a Legacy ERP API to verify budget codes.

- Call a Document Generation Model to draft the contract.
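The procurement steps above can be sketched as an ordered sequence of tool calls whose outputs gate later steps. In a real agent the LLM would choose each call; here the plan is fixed so the data flow is easy to follow, and the first step (the LLM reading the free-text request) is assumed to have already produced a structured `VendorRequest`. Every function (`check_geopolitical_risk`, `verify_budget_code`, `draft_contract`) is a hypothetical stub for the corresponding service.

```python
from dataclasses import dataclass, field


@dataclass
class VendorRequest:
    vendor_name: str
    country: str
    budget_code: str
    annual_value: float


@dataclass
class AgentTrace:
    """Records each tool call so the workflow can be reviewed afterward."""
    steps: list = field(default_factory=list)

    def record(self, tool: str, output: dict) -> dict:
        self.steps.append((tool, output))
        return output


def check_geopolitical_risk(country: str) -> dict:
    # Stub for the Compliance Model API exposed through an MCP endpoint.
    high_risk = {"Examplestan"}  # Hypothetical watch list.
    return {"country": country, "risk": "high" if country in high_risk else "low"}


def verify_budget_code(code: str, amount: float) -> dict:
    # Stub for the legacy ERP API.
    budgets = {"IT-2025": 250_000.0}  # Hypothetical remaining budget per code.
    return {"code": code, "approved": amount <= budgets.get(code, 0.0)}


def draft_contract(vendor: str, amount: float) -> dict:
    # Stub for the document-generation model.
    return {"document": f"Supply agreement with {vendor} for {amount:,.2f} per year."}


def run_procurement(request: VendorRequest) -> tuple:
    """Run the steps in order, stopping early when a check fails."""
    trace = AgentTrace()
    risk = trace.record("compliance", check_geopolitical_risk(request.country))
    if risk["risk"] == "high":
        return "escalate: compliance review required", trace
    budget = trace.record("erp", verify_budget_code(request.budget_code, request.annual_value))
    if not budget["approved"]:
        return "rejected: insufficient budget", trace
    contract = trace.record("docgen", draft_contract(request.vendor_name, request.annual_value))
    return contract["document"], trace


outcome, trace = run_procurement(VendorRequest("Acme Parts", "Norway", "IT-2025", 120_000))
print(outcome)
print([tool for tool, _ in trace.steps])
```

The early returns matter: a failed compliance or budget check stops the workflow before any contract is drafted, and the trace shows exactly which services were consulted.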

This transition from reactive chatbots to proactive, multi-step agents defines the next competitive frontier.

2. Enhanced Governance and Auditing

A critical concern in scaling AI is regulatory compliance and risk management. When an LLM answers purely from its own parameters, it can hallucinate, and its output is hard to audit. When the LLM instead invokes known, version-controlled, and governed APIs, each consequential step leaves a trace, and accountability improves considerably. Discussions of AI governance stress the need for trackable deployments (Forbes: The Critical Role of Governance in the Age of Generative AI).

If version `v2.1` of the MCP server produced a flawed risk assessment, you know exactly which deployed service caused the issue. Standardization (MCP) keeps the interface contract stable even as the underlying model evolves, which makes AI behavior more predictable and auditable.
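A minimal sketch of the trackable-deployment idea: wrap each tool invocation so that the service name, deployed version, a hash of the inputs, and the output are appended to a log. The record fields, the `risk-assessor` service name, and the placeholder model are assumptions for illustration; a real system would write these records to durable, tamper-evident storage rather than an in-memory list.

```python
import hashlib
import json
from datetime import datetime, timezone


def audited_call(log: list, service: str, version: str, fn, arguments: dict) -> dict:
    """Invoke a tool and append an audit record tying the output to a specific version."""
    canonical = json.dumps(arguments, sort_keys=True).encode("utf-8")
    output = fn(**arguments)
    log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "service": service,
        "version": version,
        "input_sha256": hashlib.sha256(canonical).hexdigest(),
        "output": output,
    })
    return output


def find_calls_by_version(log: list, service: str, version: str) -> list:
    """Answer the post-incident question: which results came from this deployment?"""
    return [r for r in log if r["service"] == service and r["version"] == version]


def assess_risk(exposure: float) -> dict:
    # Placeholder model.
    return {"risk": "high" if exposure > 1_000_000 else "low"}


audit_log: list = []
audited_call(audit_log, "risk-assessor", "v2.0", assess_risk, {"exposure": 500_000})
audited_call(audit_log, "risk-assessor", "v2.1", assess_risk, {"exposure": 2_000_000})
print(len(find_calls_by_version(audit_log, "risk-assessor", "v2.1")))
```

Hashing a canonical (key-sorted) serialization of the inputs lets investigators match records to requests without storing potentially sensitive arguments verbatim.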

3. The Shift in Model Deployment Strategy

While centralized cloud APIs offer scale and ease of management, specialized tasks sometimes demand proximity to the data or devices they serve. The need to balance centralized control against latency and data-sovereignty requirements will drive diversity in where these API endpoints live. Resources on decentralized deployment, such as running optimized LLM inference at the edge, show that the API remains the natural abstraction layer regardless of where the compute physically sits (NVIDIA: Deploying LLMs at the Edge).

Because the MCP interface is the same wherever the server runs, it can be deployed on-premise, in a private cloud, or even on specialized hardware near the point of action, all while communicating with the main LLM orchestrator through the same trusted API contract.
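Because the contract does not change with location, moving a tool between the cloud, an on-premise cluster, and an edge device can reduce to a configuration change. This sketch assumes a hypothetical deployment map keyed by tool name; the URLs and site names are placeholders, and the selection rule (prefer a site serving the caller's data-residency region, otherwise fall back to the cloud default) is just one possible policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    site: str       # e.g. "cloud", "on-prem", "edge"
    region: str     # data-residency region the site serves
    base_url: str   # placeholder endpoint; the API contract is identical at every site


# Hypothetical deployment map: one logical tool, several physical locations.
DEPLOYMENTS = {
    "compliance_check": [
        Deployment("cloud", "us", "https://tools.example.com/compliance"),
        Deployment("on-prem", "eu", "https://eu-dc.example.internal/compliance"),
        Deployment("edge", "eu-plant-7", "http://10.0.7.20:8080/compliance"),
    ],
}
DEFAULT_SITE = "cloud"


def resolve_endpoint(tool: str, caller_region: str) -> str:
    """Pick where to send a call; the orchestrator's request format never changes."""
    candidates = DEPLOYMENTS[tool]
    for deployment in candidates:
        if deployment.region == caller_region:
            return deployment.base_url
    # No regional match: fall back to the default (centralized) site.
    return next(d.base_url for d in candidates if d.site == DEFAULT_SITE)


print(resolve_endpoint("compliance_check", "eu"))
print(resolve_endpoint("compliance_check", "apac"))
```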

Actionable Insights for Technologists and Business Leaders

For organizations looking to leverage this architectural pattern, there are clear steps to take today:

For AI/ML and Software Architects: Prioritize Tool Definition Over Prompting

Invest time now in formally documenting your specialized models as callable functions, with clear schemas for input parameters and expected outputs. This "contract-first" approach means that as your LLM platform's function-calling support matures, your tools will be immediately plug-and-play. Make every specialized component speak HTTP/JSON.
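A contract-first definition can be as simple as a declared schema plus a check that runs before any model is invoked, so malformed arguments from the LLM are rejected with a clear message instead of reaching the model. To stay dependency-free, the sketch hand-rolls a tiny subset of JSON Schema (`required`, primitive `type`, and `additionalProperties: false`); a real project would more likely use a full validator such as the `jsonschema` package. The `inventory_lookup` contract is hypothetical.

```python
# Hypothetical contract for an inventory-lookup tool, written before any model code.
INVENTORY_LOOKUP = {
    "name": "inventory_lookup",
    "description": "Returns on-hand stock for a SKU at a warehouse.",
    "input_schema": {
        "type": "object",
        "properties": {
            "sku": {"type": "string"},
            "warehouse_id": {"type": "integer"},
        },
        "required": ["sku", "warehouse_id"],
        "additionalProperties": False,
    },
    "output_schema": {
        "type": "object",
        "properties": {"sku": {"type": "string"}, "on_hand": {"type": "integer"}},
        "required": ["sku", "on_hand"],
    },
}

# Mapping from the JSON Schema primitive types used here to Python types.
_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool, "object": dict}


def validate(arguments: dict, schema: dict) -> list:
    """Return a list of contract violations (an empty list means the call is acceptable)."""
    errors = []
    props = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in arguments:
            errors.append(f"missing required field '{name}'")
    for name, value in arguments.items():
        if name not in props:
            if schema.get("additionalProperties", True) is False:
                errors.append(f"unexpected field '{name}'")
            continue
        type_name = props[name]["type"]
        expected = _TYPES[type_name]
        # bool is a subclass of int in Python, so reject it unless a boolean is expected.
        if (isinstance(value, bool) and expected is not bool) or not isinstance(value, expected):
            errors.append(f"field '{name}' should be {type_name}")
    return errors


print(validate({"sku": "X-100", "warehouse_id": 3}, INVENTORY_LOOKUP["input_schema"]))
print(validate({"sku": 100, "color": "red"}, INVENTORY_LOOKUP["input_schema"]))
```

Returning every violation at once, rather than stopping at the first, gives the LLM enough feedback to correct its call in a single retry.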

For MLOps and Infrastructure Teams: Embrace Abstracted Serving

The primary goal of modern MLOps should be creating platform-agnostic serving wrappers. If your deployment pipeline relies heavily on proprietary configurations unique to one model type, you will struggle to feed models into generalized LLM orchestrators. Adopt containerization and API gateways that enforce authentication, rate limits, and timeouts in support of your service-level agreements, so that each model endpoint is reliable, scalable, and secure enough to be trusted by an autonomous LLM.
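A platform-agnostic serving wrapper can be sketched as a thin adapter that gives every model the same call signature, the same response envelope, and a latency budget, whatever framework sits underneath. The `ServedModel` class, the 0.5-second budget, and the two toy predict functions are assumptions for illustration. Note that a thread-pool timeout stops the caller from waiting but does not cancel the underlying work, which is one reason limits are usually enforced at the gateway or container level as well.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from typing import Any, Callable


class ServedModel:
    """Uniform wrapper: same request/response shape and latency budget for any model."""

    def __init__(self, name: str, version: str, predict: Callable[[dict], Any],
                 timeout_s: float = 0.5):
        self.name, self.version, self.predict = name, version, predict
        self.timeout_s = timeout_s
        self._pool = ThreadPoolExecutor(max_workers=4)

    def invoke(self, request: dict) -> dict:
        start = time.perf_counter()
        future = self._pool.submit(self.predict, request)
        try:
            result, status = future.result(timeout=self.timeout_s), "ok"
        except FutureTimeout:
            result, status = None, "timeout"
        except Exception as exc:  # Surface model errors in the same envelope.
            result, status = str(exc), "error"
        latency_ms = (time.perf_counter() - start) * 1000
        return {"model": self.name, "version": self.version, "status": status,
                "latency_ms": round(latency_ms, 1), "result": result}


# Two unrelated hypothetical "models" behind the same interface.
def price_model(req: dict) -> float:
    return round(req["base_price"] * 1.08, 2)


def slow_model(req: dict) -> str:
    time.sleep(1.0)  # Deliberately exceeds the 0.5 s budget.
    return "too late"


pricing = ServedModel("dynamic-pricing", "1.3.0", price_model)
print(pricing.invoke({"base_price": 100.0}))
print(ServedModel("slow", "0.1.0", slow_model).invoke({})["status"])
```

Because every response carries the model name, version, status, and latency, monitoring and SLA reporting can be built once and reused for every model the orchestrator calls.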

For Business Leaders: Identify High-Leverage Automation Opportunities

Don't just think about using LLMs for content creation. Look for processes that require complex, multi-step decision-making involving proprietary data or specialized calculations. These tasks—which require reasoning *and* accurate computation—are the sweet spot for tool-augmented LLMs powered by standardized APIs. Identifying these tasks early will determine where you gain the fastest ROI from this new infrastructure paradigm.

Conclusion: The Path to Intelligent Autonomy

Deploying MCP servers as reliable API endpoints, ready to be integrated through LLM function calling, is a blueprint for building the next wave of enterprise AI. It moves AI development from an exercise in creative prompting to an engineering discipline focused on robust system integration.

By layering standardization (MCP) onto extensibility (Function Calling) and grounding both in mature infrastructure practices (API Serving), we are creating systems that are not just smarter, but far more reliable, controllable, and capable of true, high-stakes operational autonomy. The future of AI is not one giant brain, but a distributed network of specialized, obedient tools orchestrated by a generalist conductor.