The Diffusion Revolution in Language: How Mercury 2 Shatters Sequential LLM Limits

For years, the world of Large Language Models (LLMs) has been dominated by a single paradigm: Transformer models that generate text autoregressively, one token at a time. This sequential dependency is intuitive for human language, but it has long been a primary bottleneck for speed and efficiency.

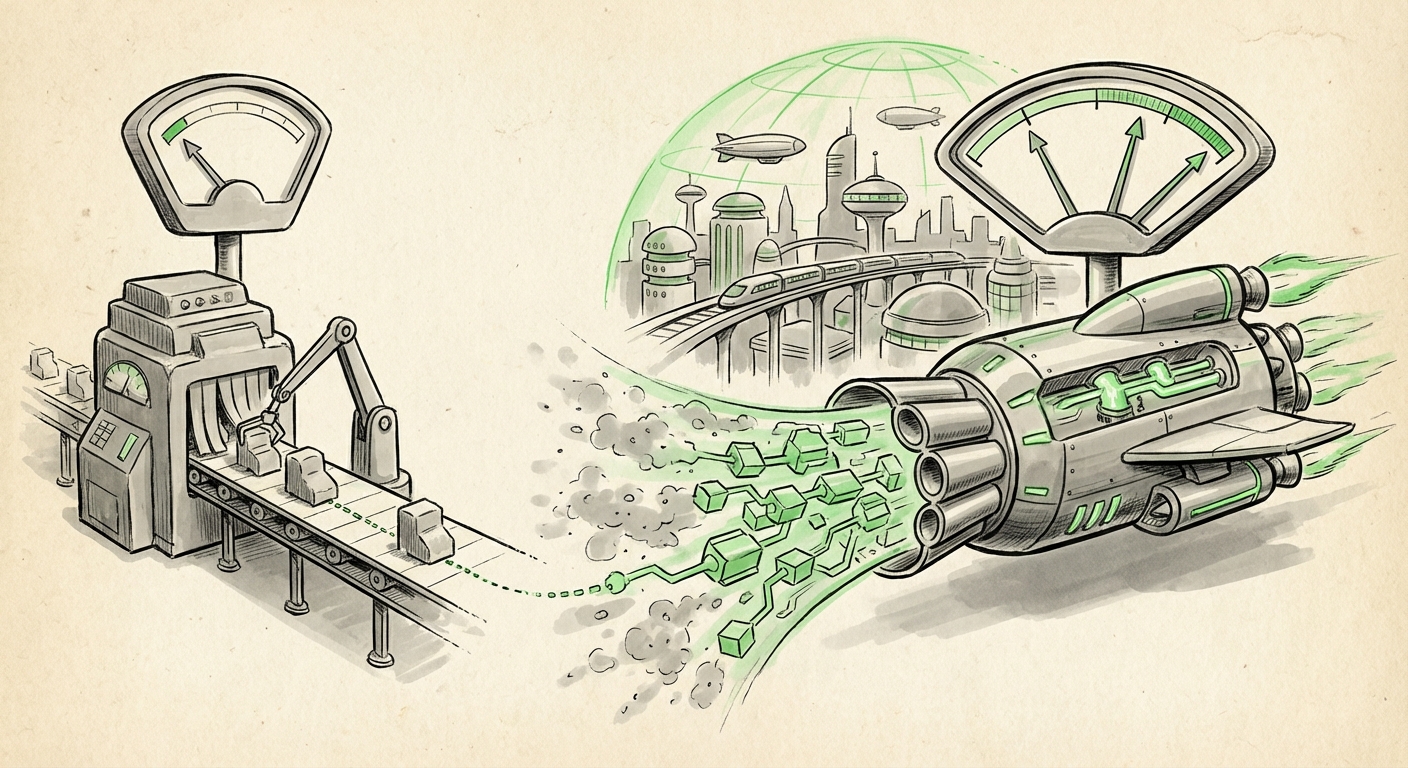

That paradigm is now facing its first major, production-ready challenger. Inception’s launch of Mercury 2, heralded as the first diffusion-based language reasoning model, signals a fundamental architectural pivot. By processing and refining entire passages in parallel, Mercury 2 is claimed to generate text more than five times faster than standard autoregressive models. This is not just an incremental update; it is potentially a structural overhaul of how we build, serve, and use generative AI.

The Great Divide: Sequential vs. Parallel AI

To appreciate the magnitude of Mercury 2’s claim, we must first understand the current standard. Traditional LLMs, like GPT or Llama, generate text sequentially, one token (a word or word fragment) at a time. If the model needs to write a 100-token answer, it must first generate token 1, then feed token 1 back in to generate token 2, and so on, 100 times. Each step is a full forward pass through the network that cannot begin until the previous one has finished, so response time grows with the length of the output. This dependency is especially costly during inference (when the model is actually running and answering questions).
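To make the sequential dependency concrete, here is a minimal Python sketch of greedy autoregressive decoding. `next_token` and `make_copy_model` are hypothetical stand-ins for a real model's forward pass; the point is only that the loop makes one sequential call per generated token, plus one for the end-of-sequence marker.

```python
from typing import Callable

# Hypothetical stand-in for one LLM forward pass: given all tokens so far,
# return the single next token.
NextTokenFn = Callable[[list[str]], str]


def autoregressive_generate(prompt: list[str], next_token: NextTokenFn,
                            max_new_tokens: int, eos: str = "<eos>") -> tuple[list[str], int]:
    """Greedy token-by-token decoding. Returns (generated tokens, forward passes used)."""
    context = list(prompt)
    output: list[str] = []
    passes = 0
    for _ in range(max_new_tokens):
        token = next_token(context)  # cannot start until the previous token exists
        passes += 1
        if token == eos:
            break
        context.append(token)
        output.append(token)
    return output, passes


def make_copy_model(target: list[str], prompt_len: int) -> NextTokenFn:
    """Toy 'model' that emits a fixed answer one token per call, then <eos>."""
    def next_token(context: list[str]) -> str:
        i = len(context) - prompt_len
        return target[i] if i < len(target) else "<eos>"
    return next_token


answer = ["the", "cat", "sat", "on", "the", "mat"]
out, passes = autoregressive_generate(["Q:"], make_copy_model(answer, 1), max_new_tokens=100)
print(out, passes)  # ['the', 'cat', 'sat', 'on', 'the', 'mat'] 7
```

Six tokens cost seven strictly sequential calls; a 1,000-token answer would cost about 1,000.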

Mercury 2 borrows its core concept from the image generation world. Diffusion models, popularized by tools like Stable Diffusion, don't paint a picture pixel by pixel. Instead, they start with pure noise and iteratively denoise it, refining the entire canvas simultaneously across many steps until a coherent image emerges. Mercury 2 applies the same idea to text: it starts from a noisy draft of the entire response and refines all of it in parallel over a series of steps.
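A toy Python sketch of such a denoising loop, assuming a masked ("absorbing-state") discrete diffusion sampler in which the "noise" is a special `[MASK]` token. This is a generic scheme from published masked-diffusion work, not Mercury 2's own sampler, whose details are not covered here. `toy_denoiser` is a hypothetical model that already knows the answer, so the example shows only the control flow: a fixed number of parallel refinement passes over the whole draft instead of one pass per token.

```python
from typing import Callable

MASK = "[MASK]"
# Hypothetical denoiser: given a partially masked draft, predict a
# (token, confidence) pair for EVERY position in one call.
Denoiser = Callable[[list[str]], list[tuple[str, float]]]


def diffusion_generate(length: int, denoise: Denoiser, steps: int) -> tuple[list[str], int]:
    """Masked-diffusion-style sampling: start fully masked and, on each step,
    commit the most confident predictions for a share of the masked positions."""
    draft = [MASK] * length  # the "pure noise" starting point
    passes = 0
    for step in range(steps):
        masked = [i for i, tok in enumerate(draft) if tok == MASK]
        if not masked:
            break
        preds = denoise(draft)  # one parallel pass over all positions
        passes += 1
        # Unmask ceil(remaining / steps_left) positions so the draft is
        # complete after the final step.
        k = -(-len(masked) // (steps - step))
        masked.sort(key=lambda i: preds[i][1], reverse=True)
        for i in masked[:k]:
            draft[i] = preds[i][0]
    return draft, passes


TARGET = ["the", "cat", "sat", "on", "the", "mat"]


def toy_denoiser(draft: list[str]) -> list[tuple[str, float]]:
    """Hypothetical denoiser that already knows TARGET; its confidence rises
    when a position's neighbours are already filled in (more context)."""
    preds = []
    for i, tok in enumerate(TARGET):
        filled = sum(1 for j in (i - 1, i + 1)
                     if 0 <= j < len(draft) and draft[j] != MASK)
        preds.append((tok, 0.5 + 0.2 * filled - 0.01 * i))
    return preds


text, passes = diffusion_generate(len(TARGET), toy_denoiser, steps=3)
print(text, passes)  # ['the', 'cat', 'sat', 'on', 'the', 'mat'] 3
```

The same six tokens now take three sequential passes, and the step count is a tunable parameter rather than a consequence of output length.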

Applying Diffusion to Discrete Language

Moving diffusion from continuous data (pixels) to discrete data (words or tokens) is non-trivial. You cannot add a small amount of Gaussian noise to a word, so discrete diffusion methods instead corrupt text, for example by masking or randomly replacing tokens, and train the model to reverse that corruption. As research into diffusion models for text generation shows, doing this in a way that preserves grammar and meaning is much harder than simply blurring an image. Mercury 2 suggests Inception has successfully navigated this complexity, transforming the task from "what word comes next?" to "how can I refine this entire chunk of text to better fit the prompt's intent?"
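A minimal sketch of the forward (noising) process for text, assuming the masking corruption used by absorbing-state discrete diffusion models. Other methods replace tokens with random vocabulary items instead, and Mercury 2's exact corruption process is not described here. The noise level `t` plays the role that the Gaussian noise level plays for images.

```python
import random

MASK = "[MASK]"


def corrupt(tokens: list[str], t: float, rng: random.Random) -> list[str]:
    """Forward process of masked discrete diffusion: replace each token
    independently with MASK with probability t (t=0: clean, t=1: all masked)."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be in [0, 1]")
    return [MASK if rng.random() < t else tok for tok in tokens]


rng = random.Random(0)
sentence = "diffusion models refine the whole sequence at once".split()
for t in (0.0, 0.3, 0.7, 1.0):
    print(t, corrupt(sentence, t, rng))
```

Training teaches the model to undo this corruption: given the prompt and a partially masked sequence, predict the original tokens at the masked positions.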

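For a technical audience, the key quantity is the number of sequential steps. Each forward pass of a diffusion model predicts every output position at once, so instead of waiting for the preceding token, the model refines the whole draft concurrently. The number of sequential passes then depends on the number of refinement steps rather than on the length of the output, which is the source of the reported 5x speedup.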
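The speedup argument is ultimately arithmetic about sequential passes. The sketch below uses a deliberately simple latency model (total time equals number of sequential passes times time per pass); the step count and per-pass timings are hypothetical, chosen only to show that a diffusion model can win even if each of its passes is more expensive.

```python
def sequential_passes(output_tokens: int, diffusion_steps: int) -> tuple[int, int]:
    """Sequential model calls needed: (autoregressive, diffusion)."""
    autoregressive = output_tokens  # one forward pass per generated token
    # A sampler that commits at least one token per step never needs
    # more steps than there are tokens.
    diffusion = min(diffusion_steps, output_tokens)
    return autoregressive, diffusion


def idealized_speedup(output_tokens: int, diffusion_steps: int,
                      ar_pass_ms: float, diffusion_pass_ms: float) -> float:
    """Latency ratio under the simple model: time = passes x time per pass.
    Ignores prompt processing, batching and hardware effects."""
    ar, diff = sequential_passes(output_tokens, diffusion_steps)
    return (ar * ar_pass_ms) / (diff * diffusion_pass_ms)


# Hypothetical numbers for illustration only (not Mercury 2 measurements):
# a diffusion pass is assumed 2.5x slower than a cached autoregressive step
# because it processes the whole draft at once.
print(sequential_passes(500, 64))             # (500, 64)
print(idealized_speedup(500, 64, 8.0, 20.0))  # 3.125
```

The ratio of output length to refinement steps drives the result: if a model needs nearly as many steps as tokens to keep quality up, the advantage disappears.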

The Battle for Inference Speed: Beyond Autoregression

The most immediate impact of Mercury 2 is on operational cost and latency. In the current AI landscape, inference (the actual serving of the model) is one of the largest ongoing cost drivers for companies deploying LLMs at scale. As we track the race for AI inference speed and efficiency, Mercury 2 offers a potentially disruptive architectural advantage over traditional methods.

Contextualizing the Performance Leap

While many companies are achieving speed boosts through hardware optimization, quantization (reducing the numerical precision of the model’s weights), or speculative decoding (a small draft model proposes several tokens that the large model then verifies in a single pass), Mercury 2 claims a fundamental structural advantage. Understanding this requires looking at prior attempts at parallel decoding for large language models. Many earlier non-autoregressive approaches struggled with output quality: because they predicted positions largely independently, their outputs often repeated or mismatched words and lost coherence.
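That quality problem has a simple illustration, often called the "multimodality problem" in the non-autoregressive generation literature: if each position picks its most likely token independently, the result can splice two valid answers into an invalid one. The reply probabilities below are hypothetical.

```python
from collections import defaultdict

# Hypothetical distribution over complete two-token replies.
REPLIES: dict[tuple[str, ...], float] = {
    ("thank", "you"): 0.40,
    ("many", "thanks"): 0.35,
    ("big", "thanks"): 0.25,
}


def joint_argmax(dist: dict[tuple[str, ...], float]) -> tuple[str, ...]:
    """Most likely complete reply: what a model capturing dependencies aims for."""
    return max(dist, key=dist.get)


def independent_argmax(dist: dict[tuple[str, ...], float]) -> tuple[str, ...]:
    """Pick each position's most likely token separately, as a naive
    non-autoregressive decoder that ignores dependencies between positions would."""
    length = len(next(iter(dist)))
    marginals = [defaultdict(float) for _ in range(length)]
    for reply, p in dist.items():
        for i, tok in enumerate(reply):
            marginals[i][tok] += p
    return tuple(max(m, key=m.get) for m in marginals)


print(joint_argmax(REPLIES))        # ('thank', 'you')
print(independent_argmax(REPLIES))  # ('thank', 'thanks')
```

Iterative refinement is one way around this: once an early step commits to "thank", later steps condition on it and prefer "you". Whether Mercury 2 relies on exactly this mechanism is not stated.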

If Mercury 2 maintains state-of-the-art quality while achieving this speed, it sets a new benchmark for infrastructure planners and CTOs. A 5x per-request speedup could translate into up to 5x more user throughput on existing GPU clusters, or a large reduction in cloud compute spending for high-volume applications, although the realized gain depends on batch sizes, hardware, and workload.

Reasoning Over Fluency: A Shift in Focus

Perhaps the most profound implication lies in the model’s designation: a "language reasoning model." The AI industry is increasingly recognizing a crucial difference between fluency (sounding human) and reasoning (solving complex, multi-step problems).

Traditional LLMs often use Chain-of-Thought (CoT) prompting to make a sequential model simulate step-by-step reasoning. However, this is still constrained by the sequential pipeline: once a token is emitted, it cannot be revised. Diffusion, by contrast, is iterative correction over the whole output; at every step the model sees the entire draft and can, in principle, change any part of it. When applied to reasoning, this suggests that Mercury 2 may be inherently better at maintaining logical consistency across complex inputs.
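A toy Python sketch of the "revise any position" idea, assuming a sampler that can re-mask low-confidence tokens and predict them again. Some published discrete diffusion samplers do this, many simpler ones never revisit a committed token, and Mercury 2's behaviour is not specified here. `toy_denoiser` is hypothetical: it fixes an article using the word that comes after it, the kind of correction a left-to-right decoder cannot make after the fact.

```python
from typing import Callable

MASK = "[MASK]"
# Hypothetical denoiser: returns a probability distribution over tokens
# for every position of the draft, computed in one parallel pass.
Denoiser = Callable[[list[str]], list[dict[str, float]]]


def refine_with_remasking(draft: list[str], denoise: Denoiser,
                          threshold: float = 0.5, rounds: int = 3) -> list[str]:
    """Re-mask tokens the model now doubts (anywhere, including early ones)
    and re-predict them using the full left and right context."""
    draft = list(draft)
    for _ in range(rounds):
        dists = denoise(draft)
        doubtful = [i for i, tok in enumerate(draft)
                    if dists[i].get(tok, 0.0) < threshold]
        if not doubtful:
            break
        for i in doubtful:
            draft[i] = MASK
        dists = denoise(draft)
        for i in doubtful:
            draft[i] = max(dists[i], key=dists[i].get)
    return draft


def toy_denoiser(draft: list[str]) -> list[dict[str, float]]:
    """Hypothetical: trusts every token except an article ('a', 'an' or a
    masked slot before a word), which it predicts from the FOLLOWING word."""
    dists = []
    for i, tok in enumerate(draft):
        nxt = draft[i + 1] if i + 1 < len(draft) else ""
        if tok in ("a", "an", MASK) and nxt and nxt != MASK:
            vowel = nxt[0].lower() in "aeiou"
            dists.append({"an": 0.9, "a": 0.1} if vowel else {"a": 0.9, "an": 0.1})
        else:
            dists.append({tok: 0.95})
    return dists


print(refine_with_remasking(["I", "ate", "a", "apple"], toy_denoiser))
# ['I', 'ate', 'an', 'apple']
```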

As analysts work to distinguish LLM reasoning from generation, diffusion may provide an architectural answer. If a model can refine its entire output against the underlying logical constraints simultaneously, it gains something like a global "check" mechanism, which could lead to higher fidelity in analytical tasks, code generation, and complex synthesis.

Future Implications: What Mercury 2 Means for AI Adoption

The transition from sequential to parallel processing in language models has deep implications across the technological and societal spectrum.

1. Real-Time Interaction and Agentic AI

For everyday users, the benefit is immediate responsiveness. Applications that require rapid back-and-forth—like customer service bots, real-time coding assistants, or interactive educational tools—will benefit immensely from sub-second response times, making the interaction feel truly instantaneous.

More importantly, this speed enables sophisticated Agentic AI. AI agents designed to perform complex tasks (e.g., research a market, book travel, manage software deployment) may chain together many model calls, sometimes thousands. If each call is 5x faster, the time spent waiting on the model shrinks fivefold: five minutes of inference becomes about one minute, while tool calls and network requests take as long as before. For inference-heavy agents, that reduction could unlock new tiers of automation.
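How much an agent benefits depends on how much of its wall-clock time is spent inside the model. Amdahl's law gives a quick estimate; the task length and the shares of time spent on inference below are hypothetical.

```python
def end_to_end_speedup(model_fraction: float, model_speedup: float) -> float:
    """Amdahl's law: overall speedup when only the model-inference share of an
    agent's wall-clock time gets faster (tool calls, network and I/O do not)."""
    if not 0.0 <= model_fraction <= 1.0:
        raise ValueError("model_fraction must be in [0, 1]")
    if model_speedup <= 0:
        raise ValueError("model_speedup must be positive")
    return 1.0 / ((1.0 - model_fraction) + model_fraction / model_speedup)


# Hypothetical five-minute (300 s) agent task with a 5x faster model:
for share in (1.0, 0.8, 0.5):
    s = end_to_end_speedup(share, 5.0)
    print(f"model share {share:.0%}: {s:.2f}x faster, {300 / s:.0f} s")
# model share 100%: 5.00x faster, 60 s
# model share 80%: 2.78x faster, 108 s
# model share 50%: 1.67x faster, 180 s
```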

2. Democratization of High-Performance Computing

Faster inference democratizes access to powerful AI. If a given hardware setup can run five times as many queries, the hardware cost per query falls to roughly one fifth, and with it the cost of serving complex reasoning tasks. This lowers the barrier for startups and smaller enterprises to integrate leading-edge reasoning capabilities without needing massive, dedicated GPU farms.
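A back-of-the-envelope sketch of that cost argument, assuming serving cost is proportional to GPU time and the GPU is fully utilised. The $2 per GPU-hour price and the throughput figures are hypothetical; real costs depend on batching, utilisation, and pricing.

```python
def cost_per_million_tokens(gpu_hour_usd: float, tokens_per_second: float) -> float:
    """Hardware cost to generate one million output tokens on one fully
    utilised GPU (assumes cost is proportional to GPU time)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return gpu_hour_usd / tokens_per_hour * 1_000_000


# Hypothetical price and throughput figures, for illustration only.
baseline = cost_per_million_tokens(gpu_hour_usd=2.0, tokens_per_second=1_000)
faster = cost_per_million_tokens(gpu_hour_usd=2.0, tokens_per_second=5_000)
print(f"${baseline:.3f} -> ${faster:.3f} per million tokens "
      f"({1 - faster / baseline:.0%} cheaper)")
# $0.556 -> $0.111 per million tokens (80% cheaper)
```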

3. The Evolution of Model Training

While Mercury 2 is currently discussed in the context of inference speed, the underlying diffusion principle could revolutionize training as well. If the refinement process is inherently more stable or requires fewer total "passes" through the data to reach convergence, training times—currently measured in months and millions of dollars—could see efficiencies we haven't yet imagined.
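For readers curious what such a model is trained on, here is a minimal sketch of a generic masked-diffusion training loss of the kind used in published diffusion language models such as MDLM and LLaDA. Mercury 2's own objective is not described here, so treat this as an assumption. With a linear masking schedule, the loss is the model's negative log-likelihood on masked positions, weighted by 1/t; the `Model` type and the two toy models are hypothetical.

```python
import math
import random
from typing import Callable

MASK = "[MASK]"
# Hypothetical model: maps a partially masked sequence to a probability
# distribution over the vocabulary at every position.
Model = Callable[[list[str]], list[dict[str, float]]]


def masked_diffusion_loss(x0: list[str], model: Model, rng: random.Random) -> float:
    """One-sample Monte Carlo estimate of a masked-diffusion loss with a linear
    schedule: draw t in (0, 1], mask each token with probability t, and score
    the model only on masked positions, weighted by 1/t (per-token average)."""
    if not x0:
        raise ValueError("x0 must be non-empty")
    t = 1.0 - rng.random()  # rng.random() is in [0, 1), so t is in (0, 1]
    xt = [MASK if rng.random() < t else tok for tok in x0]
    probs = model(xt)
    nll = 0.0
    for i, tok in enumerate(xt):
        if tok == MASK:
            p = max(probs[i].get(x0[i], 0.0), 1e-12)  # clamp to avoid log(0)
            nll -= math.log(p)
    return nll / (t * len(x0))


sentence = ["the", "cat", "sat"]


def oracle(xt: list[str]) -> list[dict[str, float]]:
    """Hypothetical perfect model: always puts probability 1 on the truth."""
    return [{tok: 1.0} for tok in sentence]


def uniform(xt: list[str]) -> list[dict[str, float]]:
    """Hypothetical clueless model: uniform over the three words."""
    return [{w: 1 / 3 for w in sentence} for _ in xt]


rng = random.Random(0)
print(masked_diffusion_loss(sentence, oracle, rng))   # 0.0
print(masked_diffusion_loss(sentence, uniform, rng))  # >= 0; > 0 if any token was masked
```

In published formulations, the expectation of this 1/t-weighted loss over t upper-bounds the per-token negative log-likelihood, which is what allows masked diffusion models to be compared with autoregressive ones on perplexity.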

Actionable Insights for Stakeholders

This development is a clear indicator that the LLM arms race is moving beyond simply scaling up (more data, bigger models) toward optimizing core architectural efficiency.

- For ML Engineers & Researchers: Begin actively studying diffusion theory as it applies to discrete sequence modeling. The assumption that autoregression is the only path to high-quality text is now being seriously challenged. Look closely at the denoising objectives used in published discrete diffusion language models, and at Mercury 2’s to the extent Inception discloses it.

- For Technology Leaders (CTOs/CIOs): Re-evaluate your long-term inference strategy. If Mercury 2’s speed claims hold up under independent third-party testing, significant investments made today in scaling infrastructure for sequential models could be outdated within 18 to 24 months. Begin testing whether your applications and serving stack could adopt a diffusion-based model, for example whether they depend on token-by-token streaming.

- For Product Managers: Focus development on latency-sensitive use cases. Features that were previously impractical because of slow model responses (e.g., hyper-personalized real-time content generation) may now be viable.

Conclusion: A New Horizon for Generative AI

Mercury 2 is more than a faster chatbot; it is a proof-of-concept that the sequential constraint of autoregressive decoding is not an immutable law of physics for language processing. By carrying diffusion techniques proven in image generation over to language reasoning, Inception has potentially opened a new frontier. If this parallel refinement paradigm proves scalable and stable, we may be looking at the end of an era in which speed and quality are an unavoidable trade-off. The future of AI will be defined not just by what the models *know*, but by how quickly they can turn that knowledge into coherent action.