The Era of Robust AI Agents: Why OpenAI's Speed and Voice Upgrades Signal Production Readiness

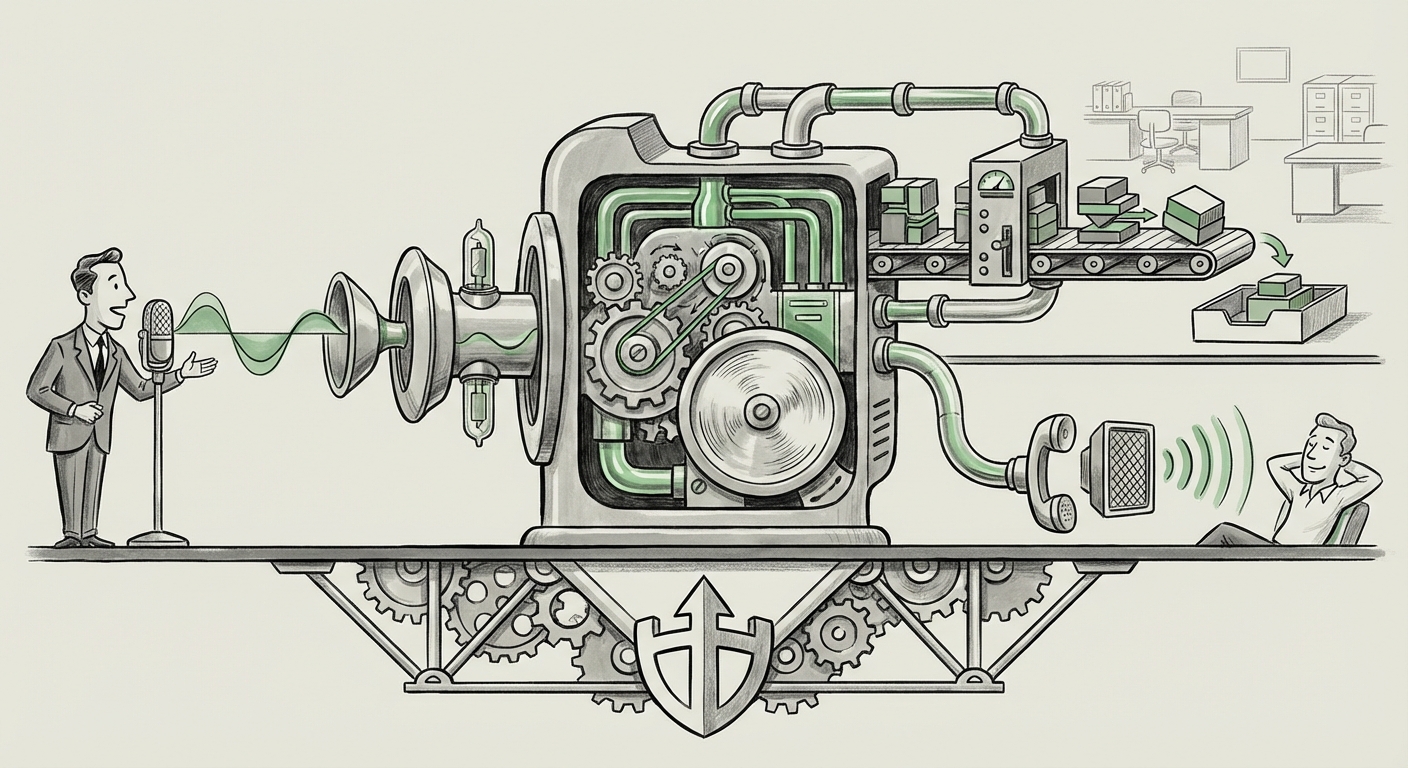

The recent announcement detailing OpenAI’s API upgrades is more than just a routine technical update; it’s a loud signal confirming the industry's most anticipated shift: the migration of large language models (LLMs) from fascinating novelty to mission-critical infrastructure. By specifically targeting improvements in voice reliability and agent speed, OpenAI is addressing the two primary pain points that have historically held back the widespread operationalization of advanced AI.

For years, AI demos were impressive—a model could write poetry or pass a bar exam. But putting that model into a system that has to talk to customers all day, or handle a sequence of complex tasks without failing, has been far trickier. The new upgrades directly tackle these real-world friction points, moving us firmly into the era of the Robust AI Agent.

The Pivot from Latency to Fluency: Voice Reliability Explained

Voice interaction is perhaps the most human way we interface with technology. Yet, early AI voice systems often felt clunky, robotic, or frustratingly slow. This failure wasn't usually the core LLM's fault; it was the pipeline—the series of steps required to turn your speech into text, process it with the AI, and turn the AI’s text response back into smooth, natural speech.

When we talk about voice reliability, we are talking about conquering the entire audio-processing stack. This means:

- Better ASR (Automatic Speech Recognition): The ability to transcribe speech accurately across accents, over background noise, and at a rapid pace, with far fewer errors.

- Reduced Jitter and Latency: Latency is the delay between speaking and the system reacting; jitter is how much that delay varies from turn to turn. Both need to be minimal, ideally with replies arriving in under 500 milliseconds, to maintain conversational rhythm.

- Cohesive TTS (Text-to-Speech): Generating output that sounds emotionally resonant and contextually appropriate, not like a monotone robot.
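To make the 500-millisecond target concrete, here is a minimal Python sketch of a per-turn latency budget. It assumes a strictly sequential pipeline in which each stage waits for the previous one to finish. The stage names and millisecond figures are hypothetical, and using the standard deviation of per-turn latency as the jitter measure is also an assumption. None of these numbers are measurements of any OpenAI API.

```python
import statistics
from dataclasses import dataclass

# Target cited in the text: ideally under 500 ms from end of speech to response.
TURN_BUDGET_MS = 500.0


@dataclass
class StageTiming:
    name: str
    latency_ms: float


def turn_latency_ms(stages: list[StageTiming]) -> float:
    """Total delay for one turn when ASR, LLM and TTS run strictly one after another."""
    return sum(stage.latency_ms for stage in stages)


def within_budget(stages: list[StageTiming], budget_ms: float = TURN_BUDGET_MS) -> bool:
    return turn_latency_ms(stages) <= budget_ms


def jitter_ms(turn_latencies: list[float]) -> float:
    """Assumed jitter measure: population standard deviation of per-turn latency."""
    return statistics.pstdev(turn_latencies)


# Hypothetical stage timings, for illustration only (not measured figures).
example_turn = [
    StageTiming("asr_transcription", 150.0),
    StageTiming("llm_response", 250.0),
    StageTiming("tts_synthesis", 120.0),
]

if __name__ == "__main__":
    total = turn_latency_ms(example_turn)
    print(f"turn latency: {total:.0f} ms, within budget: {within_budget(example_turn)}")
```

With these made-up numbers, the turn lands at 520 ms, just over the target. This is why real-time voice systems often stream partial results between stages instead of waiting for each one to finish completely.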

These improvements are transformative for sectors that rely on natural interaction. Imagine a customer service bot that doesn't sound like it’s reading from a script, or an in-car assistant that rarely mishears a crucial command. This reliability drastically lowers the user's cognitive load, making the AI feel truly helpful rather than merely functional.

Contextual Insight: The Technical Race for Real-Time Audio

When assessing the impact, we must look at how this stacks up against the competition. The push for sub-second voice responses is a clear battleground. If independent benchmarks of low-latency speech processing show OpenAI closing the gap or setting new standards, that would validate its decision to invest in foundational audio tooling rather than rely solely on third-party services for these critical components. Such vertical integration strengthens its overall platform offering.

Agent Speed: The Engine of Complex Automation

While voice is about *how* we interact, agent speed is about *what* the AI can accomplish in a sequence. An AI agent is an LLM given a goal and the tools it needs to break that goal into steps, execute them, evaluate the results, and try again if necessary. Think of it as an automated project manager.
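The loop of breaking a goal into steps, executing them, evaluating the results, and retrying can be sketched in a few lines of Python. The `plan` and `execute` callables are hypothetical stand-ins. In a real agent, each would typically be a model call or a tool invocation, and "evaluate" would involve more than a success flag. The toy planner and flaky executor exist only so the sketch runs offline.

```python
from typing import Callable

# Hypothetical signatures: a planner turns a goal into steps, and an executor
# runs one step and reports (succeeded, output). In a real agent both would
# usually be model or tool calls.
Planner = Callable[[str], list[str]]
Executor = Callable[[str], tuple[bool, str]]


def run_agent(goal: str, plan: Planner, execute: Executor, max_retries: int = 2) -> list[str]:
    """Break a goal into steps, execute each one, and retry a failed step."""
    results: list[str] = []
    for step in plan(goal):
        for _attempt in range(max_retries + 1):
            succeeded, output = execute(step)
            if succeeded:  # "evaluate": here just a success flag
                results.append(output)
                break
        else:  # loop finished without break: every attempt failed
            raise RuntimeError(f"step failed after {max_retries + 1} attempts: {step!r}")
    return results


# Toy stand-ins so the sketch runs without any external service.
def split_plan(goal: str) -> list[str]:
    return [part.strip() for part in goal.split(";") if part.strip()]


_failed_once: set[str] = set()


def flaky_execute(step: str) -> tuple[bool, str]:
    """Fails the first attempt of any step containing 'flaky', then succeeds."""
    if "flaky" in step and step not in _failed_once:
        _failed_once.add(step)
        return False, ""
    return True, f"done: {step}"


if __name__ == "__main__":
    print(run_agent("fetch data; flaky upload; write report", split_plan, flaky_execute))
```

Every retry is another model round trip. This is why per-call latency compounds so quickly in agentic workloads.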

In complex scenarios—like debugging code, planning a detailed itinerary, or managing enterprise workflows—an agent might need 5 to 10 sequential calls to the model. If each call takes several seconds, the total execution time balloons, rendering the agent unusable for anything requiring timely feedback.

OpenAI’s focus on API speed means these multi-step processes should now run noticeably faster, since every second saved per call is multiplied by the number of sequential calls. This efficiency directly affects scalability and cost-effectiveness: faster processing means higher throughput on the same server infrastructure, which can lead to lower costs per complex transaction for developers. This is the fundamental shift enabling truly operational agents.
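The arithmetic behind the last two paragraphs can be worked through in a short Python sketch. It assumes "several seconds" means 3 s per call, 10 sequential calls per run, 20 runs in flight at once, and a fixed, hypothetical $12 per hour for the infrastructure hosting the agents. Throughput is derived from Little's law (completed work per unit time equals work in flight divided by time per item).

```python
def agent_run_seconds(num_calls: int, seconds_per_call: float) -> float:
    """Wall-clock time for one agent run when each model call waits for the previous one."""
    return num_calls * seconds_per_call


def runs_per_hour(concurrent_runs: int, seconds_per_run: float) -> float:
    """Little's law (throughput = work in flight / time per item), expressed per hour."""
    return concurrent_runs * 3600.0 / seconds_per_run


def cost_per_run(hourly_cost: float, concurrent_runs: int, seconds_per_run: float) -> float:
    """Assumes a fixed hourly cost for the infrastructure that hosts the agents."""
    return hourly_cost / runs_per_hour(concurrent_runs, seconds_per_run)


if __name__ == "__main__":
    # Hypothetical inputs: 10 sequential calls, 20 runs in flight, $12/hour of capacity.
    for per_call in (3.0, 1.5):
        run_s = agent_run_seconds(10, per_call)
        print(f"{per_call} s/call -> {run_s:.0f} s/run, "
              f"{runs_per_hour(20, run_s):.0f} runs/h, ${cost_per_run(12.0, 20, run_s):.4f}/run")
```

Under these assumptions, halving per-call latency cuts a run from 30 s to 15 s and doubles throughput from 2,400 to 4,800 runs per hour, which halves the infrastructure cost per run. With per-token API pricing, the token bill itself would not change; the saving shows up in the capacity that sits waiting on those calls.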

The Clash with Orchestration Layers

The push for internal speed improvements is also a strategic move against the burgeoning ecosystem of open-source and proprietary agent frameworks, many of which are built specifically to manage and speed up multi-step reasoning chains. If the foundational model provider (OpenAI) makes its core API lightning-fast, it weakens the case for accepting the overhead and complexity these external orchestration layers introduce. For many simple to moderately complex tasks, developers may now opt to build the agent logic directly into their own prompt chains, bypassing external framework dependencies.
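For simpler cases, building the agent logic directly into a prompt chain can be as plain as a loop. The sketch below is an assumption about what such a chain might look like. It does not reproduce the API of OpenAI's SDK, LangChain, or AutoGen. `call_model` is a hypothetical injected function, and `echo_model` is an offline stand-in so the example runs without network access.

```python
from typing import Callable

# Hypothetical model client: takes a prompt string, returns the model's text.
# In practice this would wrap a real SDK call; it is injected so the chain
# logic stays independent of any framework.
ModelFn = Callable[[str], str]


def run_chain(user_input: str, templates: list[str], call_model: ModelFn) -> str:
    """Run prompts in order, feeding each step's output into the next template."""
    text = user_input
    for template in templates:
        text = call_model(template.format(input=text))
    return text


def echo_model(prompt: str) -> str:
    """Offline stand-in: tags the text after the first colon with the step name."""
    step, _, body = prompt.partition(":")
    return f"[{step}] {body.strip()}"


CHAIN = [
    "Extract the customer's request: {input}",
    "Draft a reply: {input}",
]

if __name__ == "__main__":
    print(run_chain("My invoice is wrong", CHAIN, echo_model))
```

The trade-off is that retries, branching, and multi-agent coordination, which frameworks provide out of the box, would have to be added by hand as the workflow grows.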

For the enterprise CTO, this means deciding whether to build bespoke agent logic relying on OpenAI’s high-speed core, or investing time in integrating and maintaining an external framework like LangChain or AutoGen for highly specialized, multi-agent collaboration.

The Broader Context: Competitive Pressures and Maturity

These upgrades do not occur in a technological vacuum. They are best read as a response to intense competition and to the growing demands of enterprise users who are now moving beyond pilot projects. Industry benchmarks comparing Anthropic’s Claude 3 Opus with OpenAI’s GPT-4 Turbo on agentic tasks point to a constant "arms race," not just for intelligence (context windows and reasoning quality) but for utility (speed and reliability).

When a competitor releases a model that excels in a particular area—say, advanced multi-tool usage—the pressure mounts for the market leader to ensure their core offering is not just smart, but also fast and dependable enough for industrial use.

Developer Trust as the Ultimate Metric

The most telling indicator of these upgrades' significance will be how developers running production systems react to them. Developers are the first line of defense against poor performance. If benchmark threads on platforms like Reddit or specialized technical blogs confirm measurable, real-world improvements in timeout rates, response handling, and cost per action, developer trust will solidify. Trust, in this context, means developers are willing to stake their application’s uptime on this new infrastructure.
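Benchmarks like these usually look at tail latency and slow-call rates, not just averages. Here is a minimal harness in that spirit. It assumes the API call of interest can be wrapped in a zero-argument function. It reports the median, a nearest-rank 95th percentile, and the share of calls slower than a latency budget you choose. The budget stands in for a client timeout; the sketch does not cancel slow calls.

```python
import math
import statistics
import time
from typing import Callable


def measure(call: Callable[[], object], runs: int) -> list[float]:
    """Wall-clock seconds for each of `runs` sequential invocations of `call`."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies: list[float], budget_s: float) -> dict[str, float]:
    """Median, nearest-rank p95, and the share of calls slower than budget_s (non-empty input)."""
    ordered = sorted(latencies)
    p95_rank = math.ceil(95 * len(ordered) / 100)  # nearest-rank percentile, 1-based
    return {
        "median_s": statistics.median(ordered),
        "p95_s": ordered[p95_rank - 1],
        "over_budget_rate": sum(x > budget_s for x in ordered) / len(ordered),
    }


if __name__ == "__main__":
    # Stand-in workload; replace with a real API call wrapped in a lambda.
    samples = measure(lambda: time.sleep(0.01), runs=20)
    print(summarize(samples, budget_s=0.05))
```

Keeping measurement separate from summarization lets the same `summarize` function be applied to latencies collected from production logs.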

This community validation is crucial. It moves the technology out of the realm of press releases and into the realm of documented, repeatable success in production environments, especially in high-stakes use cases.

Practical Implications: What This Means for Business and Society

The combination of fast, reliable voice and rapid agent execution fundamentally changes the economic and operational feasibility of deploying AI across major business functions.

1. Revolutionizing Customer Experience (CX)

The most immediate beneficiary is contact center automation. Slow, frustrating voice bots can give way to sophisticated conversational agents capable of handling nuanced, multi-step support requests in real time. An agent can now listen to a customer explain a complex billing issue, pull up relevant account details, process a payment adjustment, and confirm the change, all within a fluid conversation that feels human-like. This capability promises significant labor cost reductions alongside improved customer satisfaction.

2. Hyper-Efficient Knowledge Workers

For internal enterprise use, agents become true digital colleagues. Tasks that once required manually toggling between several software systems (e.g., Sales CRM, Inventory Database, Finance Ledger) can now be abstracted behind a fast, voice-enabled agent. A manager can simply ask, "Analyze Q3 sales projections for the European division against last year's actuals and flag any risks over 10%," and receive a synthesized report within seconds, delivered as text or summarized vocally.
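One reasonable design for a request like that is to have the agent hand the actual comparison to deterministic code, rather than having the model do the arithmetic itself. Here is a minimal sketch of such a tool. It assumes "risks over 10%" means a projection that deviates from last year's actual by more than 10% in either direction; a shortfall-only rule would be an equally plausible reading. The function name, region codes, and figures are hypothetical.

```python
def flag_variances(projected: dict[str, float], actual: dict[str, float],
                   threshold: float = 0.10) -> dict[str, float]:
    """Regions whose projection differs from last year's actual by more than `threshold`.

    Returns the relative change (0.2 == +20%) for each flagged region.
    """
    flagged: dict[str, float] = {}
    for region, value in projected.items():
        baseline = actual.get(region)
        if not baseline:  # missing or zero baseline: skip to avoid dividing by zero
            continue
        change = (value - baseline) / baseline
        if abs(change) > threshold:
            flagged[region] = round(change, 4)
    return flagged


# Hypothetical Q3 figures (in millions) for three European regions.
q3_projection = {"DE": 12.0, "FR": 9.5, "IT": 8.0}
q3_last_year = {"DE": 10.0, "FR": 10.0, "IT": 10.0}

if __name__ == "__main__":
    print(flag_variances(q3_projection, q3_last_year))
```

With these sample numbers, DE (+20%) and IT (-20%) are flagged, while FR (-5%) stays under the threshold. The agent's job then reduces to fetching the data, calling the tool, and narrating the result.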

3. Lowering the Bar for Real-Time Interaction

Reliable, low-latency voice opens up new modalities entirely. Think beyond customer service. We are looking at real-time, seamless translation for international business meetings, advanced accessibility tools for individuals with physical impairments, and more intuitive interfaces for complex machinery operation where hands-free interaction is paramount.

Actionable Insights for Moving Forward

For organizations looking to capitalize on this inflection point, the focus must shift from mere "experimentation" to "industrial deployment planning."

- Audit Your Voice Strategy: If your current AI roadmap involves voice, immediately re-evaluate vendor options based on these new latency standards. Prioritize providers who demonstrate end-to-end pipeline optimization, not just impressive LLM scores.

- Prototype Agentic Workflows: Identify complex, multi-step internal processes that currently suffer from manual handoffs. Build prototypes using the new, faster APIs to establish achievable performance benchmarks. Workflows that were previously too slow may now be viable.

- Evaluate Framework Dependency: Assess your current reliance on third-party agent orchestration tools. If your workflows are becoming simpler due to core API improvements, you might be able to streamline your tech stack, saving development time and reducing architectural complexity.

- Focus on Guardrails: As agents become faster and more capable, the need for robust safety guardrails (ensuring agents don't take harmful or unauthorized actions) becomes even more critical. Speed cannot come at the expense of security and adherence to business rules.
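A basic form of guardrail is a policy check that runs before any tool call the agent proposes. Here is a minimal sketch using the billing example from earlier. The tool names, the $100 adjustment cap, and the `ToolCall` shape are hypothetical; a production system would layer authentication, auditing, and human approval on top of a check like this.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical policy for a billing agent; tool names and the cap are illustrative.
ALLOWED_TOOLS = frozenset({"lookup_account", "adjust_payment", "send_confirmation"})
MAX_ADJUSTMENT = 100.0


@dataclass(frozen=True)
class ToolCall:
    tool: str
    amount: float = 0.0


class PolicyViolation(Exception):
    """Raised when an agent proposes an action outside its allowed scope."""


def check_call(call: ToolCall) -> None:
    if call.tool not in ALLOWED_TOOLS:
        raise PolicyViolation(f"tool not allowed: {call.tool}")
    if call.tool == "adjust_payment" and abs(call.amount) > MAX_ADJUSTMENT:
        raise PolicyViolation(f"adjustment {call.amount} exceeds cap {MAX_ADJUSTMENT}")


def guarded_execute(call: ToolCall, execute: Callable[[ToolCall], str]) -> str:
    """Validate the proposed call before any side effect happens."""
    check_call(call)
    return execute(call)
```

The check runs before `execute`, so a faster agent cannot act faster than its policy allows. A blocked call raises an exception that the surrounding loop can report back to the model or escalate to a human.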

The message from OpenAI’s latest API enhancements is clear: the building blocks for true, ubiquitous, and natural AI interaction are finally robust enough for the production floor. We are no longer waiting for the technology to catch up to our imagination; we are now tasked with designing the sophisticated applications that this newly reliable foundation can support.