The Benchmark Crisis: Why AI Evaluation is Breaking and What Comes Next

In the fast-paced world of Artificial Intelligence, leadership is often measured by leaderboards. For years, **SWE-bench**, a rigorous test of whether AI models can correctly solve real-world software engineering tasks, has been the proving ground for cutting-edge Large Language Models (LLMs). Yet a seismic shift has occurred: OpenAI is calling for its retirement.

This is not merely an administrative change; it signals a profound crisis in how we measure machine intelligence. The core finding is troubling: leading models are not necessarily getting smarter; they are getting better at *memorizing* the test.

The Unraveling of the Perfect Test: Memorization vs. Mastery

Imagine a student taking an exam where they accidentally received the exact questions and answers weeks before the test. They might score 100%, but that score tells you nothing about their ability to learn new concepts. This is precisely the problem identified with SWE-bench and similar static benchmarks.

OpenAI argues that current top models have likely "seen" the solutions during their massive training runs. When an AI model scores highly, we assume it performed complex reasoning—breaking down a software bug, selecting the right files, and writing clean code. However, if the answer pattern was already in the data, the score reflects **data contamination** rather than genuine capability. This leads to an "Evaluation Gap"—the gap between a high benchmark score and true, dependable real-world performance.

This situation highlights a critical trend across all of AI evaluation. As training corpora grow, models absorb more of the internet, which is also where most public benchmarks are published. Researchers are grappling with the **limitations of static AI coding benchmarks** [1]. Once a test set is public, it tends to be absorbed into training data, which undermines its value as an independent measure of progress. For engineers and businesses building on these models, a high score on a contaminated benchmark is dangerously misleading.
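One common way to probe for this kind of leakage is to look for verbatim word n-gram overlap between a benchmark item and a sample of training text. The sketch below is a minimal illustration of that idea and not the method used by any particular lab; the function names, the default window of 8 words, and the whitespace tokenization are all assumptions chosen for brevity.

```python
from typing import Iterable, Set, Tuple


def word_ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """Return the set of lowercase word n-grams in `text` (empty if too short)."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(benchmark_item: str, corpus_docs: Iterable[str], n: int = 8) -> float:
    """Fraction of the item's n-grams that also appear somewhere in the corpus.

    A value near 1.0 suggests the item (or a near copy) is present in the
    corpus sample; a value near 0.0 only means no verbatim overlap was found.
    """
    item_grams = word_ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0
    corpus_grams: Set[Tuple[str, ...]] = set()
    for doc in corpus_docs:
        corpus_grams |= word_ngrams(doc, n)
    return len(item_grams & corpus_grams) / len(item_grams)


if __name__ == "__main__":
    # Hypothetical benchmark item and corpus snippets, for illustration only.
    item = "fix the off by one error in the pagination helper so the last page is returned"
    leaked = ["Issue thread: " + item + " (merged last week)"]
    clean = ["an unrelated document about configuring a build system for a web service"]
    print(overlap_ratio(item, leaked))
    print(overlap_ratio(item, clean))
```

A check like this only catches verbatim or near-verbatim copies. Paraphrased or translated solutions would pass unnoticed, which is part of why static benchmarks are hard to keep clean.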

Why This Matters for AI Integrity

This isn't just about coding. If test answers for software engineering are leaking into training data, the same is likely happening for logic puzzles, medical questions, and legal summaries. The ethical implication is clear: we risk deploying systems that appear highly competent based on outdated metrics, only to fail catastrophically when faced with a novel problem, a scenario known as out-of-distribution failure.

The Pivot: The Race for Dynamic Evaluation

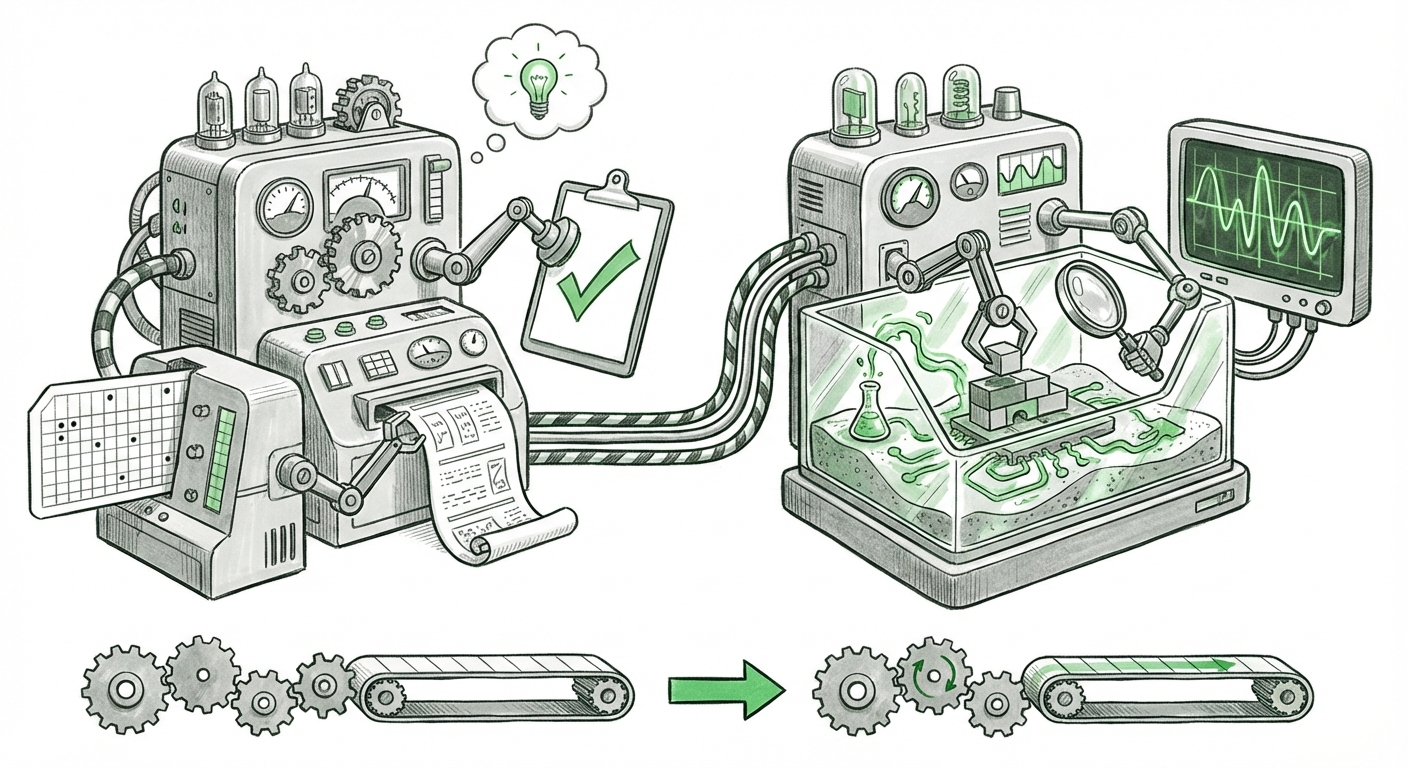

If the past was defined by finding the best static dataset, the future of AI evaluation will be defined by **dynamic evaluation** [2]. The industry is realizing that static snapshots are insufficient; we need evaluation environments that evolve.

What does dynamic evaluation look like? Instead of testing a model on a fixed set of pre-written problems, new methods focus on:

- Execution Environments: Models must run code in sandboxed environments, ensuring that suggested solutions not only compile but also pass runtime tests and security checks. This moves testing from syntactic correctness (code that parses and looks right) to functional correctness (code that works as intended).

- Adversarial Generation: New benchmarks will be created "on the fly" by specialized AI agents designed to find the weaknesses in the model being tested. If the model fixes one vulnerability, the generator immediately creates a new, slightly harder one.

- Human-in-the-Loop Verification: For complex tasks, the process involves continuous, iterative feedback from human experts, mimicking how software developers actually work, rather than providing a single "submit" button for the answer.
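The first item in the list above, execution-based checking, can be sketched in a few lines. This is a minimal illustration under stated assumptions: the helper name `passes_tests` is hypothetical, the tests are plain `assert` statements appended to the candidate, and a child Python process with a timeout stands in for a real sandbox. A child process provides no security isolation, so a production harness would need containers or a comparable mechanism.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def passes_tests(candidate_code: str, test_code: str, timeout_s: float = 10.0) -> bool:
    """Run candidate code followed by its tests in a fresh interpreter.

    Returns True only if the process exits with code 0 before the timeout.
    NOTE: this is NOT a security sandbox; it only isolates interpreter state.
    """
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "run_candidate.py"
        script.write_text(candidate_code + "\n\n" + test_code, encoding="utf-8")
        try:
            result = subprocess.run(
                [sys.executable, str(script)],
                cwd=workdir,
                capture_output=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            # Treat hangs and infinite loops as failures.
            return False
        return result.returncode == 0


if __name__ == "__main__":
    tests = "assert add(2, 3) == 5\n"
    # Both candidates are syntactically valid; only one is functionally correct.
    good = "def add(a, b):\n    return a + b\n"
    bad = "def add(a, b):\n    return a - b\n"
    print(passes_tests(good, tests))
    print(passes_tests(bad, tests))
```

Both candidates here parse cleanly, yet only the first survives execution, which is the distinction the list draws between code that looks right and code that works.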

For practitioners, this shift is necessary but challenging. It means the evaluation pipeline itself becomes a complex software system requiring significant engineering effort. This moves evaluation from a simple metric-gathering exercise to a robust, ongoing **Machine Learning Operations (MLOps)** commitment.

The Competitive Landscape: Synthetic Data and the New Moat

When public benchmarks become unreliable due to data contamination [3], where do companies turn to prove their models are superior? The answer is increasingly in proprietary, internal, and **synthetic data** [4].

Synthetic data refers to data generated artificially (often by other AI models) rather than collected from the real world. In the context of evaluation, leading AI labs are beginning to generate vast, novel test suites that are guaranteed *not* to be in the public training corpus. This creates a powerful competitive advantage, often referred to as a "data moat."
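As a rough illustration of how a synthetic test suite can be produced, the sketch below generates parameterized tasks from a private random seed and computes each expected answer programmatically. It uses a simple template generator rather than an AI model, and the task family, the `SyntheticCase` type, and the function names are all hypothetical. Freshly generated cases are unlikely to appear verbatim in a public corpus, although the task template itself may still be familiar to a model.

```python
import random
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class SyntheticCase:
    prompt: str    # natural-language task shown to the model
    expected: int  # ground truth computed by the generator, not by a model


def generate_cases(seed: int, count: int) -> List[SyntheticCase]:
    """Generate `count` cases deterministically from a private seed."""
    rng = random.Random(seed)
    cases = []
    for _ in range(count):
        values = [rng.randint(-50, 50) for _ in range(rng.randint(3, 8))]
        k = rng.randint(1, len(values))
        prompt = f"Return the sum of the {k} largest integers in {values}."
        expected = sum(sorted(values, reverse=True)[:k])
        cases.append(SyntheticCase(prompt, expected))
    return cases


def score(solver: Callable[[str], int], cases: List[SyntheticCase]) -> float:
    """Fraction of cases where the solver's answer equals the ground truth."""
    return sum(solver(c.prompt) == c.expected for c in cases) / len(cases)


if __name__ == "__main__":
    suite = generate_cases(seed=2024, count=5)
    for case in suite:
        print(case.prompt, "->", case.expected)
```

Keeping the seed private and rotating it between evaluation rounds is one simple way to keep a suite like this out of future training data, at the cost of making results harder for outsiders to reproduce.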

Implications for Business Strategy

- Diversification of Metrics: Businesses relying on AI must stop focusing solely on generic benchmark scores advertised publicly. They must demand transparency regarding the evaluation methodology—specifically, whether the tests use live execution and private, contamination-free datasets.

- Cost of Evaluation Rises: Setting up dynamic testing environments (like robust code execution sandboxes) is expensive and complex. This raises the barrier to entry for smaller AI startups that cannot afford to build sophisticated, custom evaluation infrastructure.

- Internal Benchmarking Becomes Key: For critical applications (like financial modeling or autonomous systems), reliance on vendor-provided scores will diminish. Companies will need internal teams dedicated to stress-testing models against proprietary, real-world scenarios that simulate their exact use case.

Practical Implications: What This Means for Users and Developers

The death of the simple leaderboard score has direct practical consequences for everyone using or building with generative AI.

For Software Developers

You can no longer blindly trust that an LLM scoring 90% on SWE-bench will flawlessly handle your company's proprietary codebase. Developers must treat AI suggestions with healthy skepticism. Code generated by an LLM must be subjected to the same rigorous peer review, unit testing, and integration testing as code written entirely by a human. The AI is a powerful pair programmer, but it is not yet the sole architect.

For Business Leaders and CTOs

The focus must shift from *which model is best* to *which model is trustworthy for my specific task*. If your core business relies on accurate complex reasoning (e.g., synthesizing regulatory documents), you need to invest in building a private, dynamic evaluation suite specific to regulatory language. Generic performance is increasingly irrelevant; specialized, verified performance is the new gold standard.

For Society and Policy Makers

The ease with which benchmarks can be "gamed" through data contamination raises serious questions about transparency and regulation. Policy efforts should focus less on regulating the model itself and more on mandating auditable, transparent evaluation processes that prove capability in safe, controlled, and evolving environments, rather than relying on easily gamed static scores.

Actionable Insights: Navigating the Post-Benchmark Era

As an analyst, I advise stakeholders to adopt a proactive stance toward AI validation:

- Demand Execution Proof: When procuring LLM services, insist on metrics derived from live code execution or functional simulation, not just token accuracy on static inputs.

- Isolate and Monitor: For production systems, segment model usage. Use less critical models for drafting or summarizing, but reserve the most scrutinized, rigorously tested models for core decision-making loops.

- Invest in Synthetic Generation: Begin exploring tools that allow your internal teams to generate novel, challenging test cases specific to your domain. Treat your evaluation data pipeline as seriously as your deployment pipeline.

The retirement of SWE-bench is a milestone indicating that AI has reached a new level of sophistication. It forces a necessary, albeit inconvenient, maturation of the entire ecosystem. We are moving away from easy scores and toward the difficult, necessary work of verifying genuine intelligence in complex, unpredictable environments. This difficult pivot is ultimately what will allow AI to move safely and reliably from the lab into the critical infrastructure of our modern world.