The End of Easy Scores: Why AI Coding Benchmarks Like SWE-bench Are Broken and What Comes Next

For years, the AI world has been engaged in a visible, high-stakes competition. Progress in large language models (LLMs) was often tracked by leaderboards: who could score highest on benchmarks testing math, reasoning, and, crucially, coding. **SWE-bench**, a benchmark that tests AI models on real-world GitHub issues, was a cornerstone of this contest, and strong scores on it were taken as a sign that models were finally ready to operate as capable software engineers.

However, a recent announcement from OpenAI has thrown this entire system into question. OpenAI argues that SWE-bench, the very yardstick many have used to measure success, is fundamentally flawed. If this critique holds, the industry has spent critical development cycles chasing phantom metrics. We are at an inflection point where the tools used to measure AI progress are being retired, forcing a necessary, if uncomfortable, reckoning with what "intelligence" truly means in an age of massive training datasets.

The Crisis of Contamination: Memorization vs. Meaningful Ability

Imagine studying for a big math exam by reading every single problem and its solution multiple times. If you score 100% on the test, did you learn the underlying math principles, or did you just memorize the answers? This is the central problem OpenAI has identified with SWE-bench.

The core critique boils down to two critical issues:

- Flawed Tasks: Many coding challenges within the benchmark are structured poorly. They contain errors or overly narrow constraints that cause even a correct AI-generated solution to be flagged as a failure. This means models are penalized for being right.

- Data Leakage (Memorization): The biggest problem is that leading LLMs, trained on the vast expanse of the public internet, have almost certainly ingested the solutions to these benchmark problems, or very similar ones, during their training phase.

As a result, a high SWE-bench score does not prove that a model can reason through a novel software engineering problem; it may only show that the model is excellent at retrieving and slightly rephrasing an existing solution. This is pattern matching, not true algorithmic ability.

A Broader Industry Concern: The Contamination Effect

This is not just an OpenAI concern; it reflects a systemic vulnerability facing all large-scale AI evaluation. As models and their training corpora grow (scaling laws reward training on ever more data), the chance that a training set has absorbed publicly available test problems and their solutions increases. This phenomenon, often called benchmark contamination, affects public benchmarks across the board.

When training data encompasses the test data, we cease measuring generalization and begin measuring data recall. This conflates memorization capacity with problem-solving intelligence.
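One common heuristic for spotting this kind of overlap is to check how many word-level n-grams of a benchmark item also appear in a training corpus. The sketch below is illustrative only: the function names, the default n-gram length of 8, and the in-memory corpus are assumptions, and it presumes access to the training text, which outside evaluators usually do not have.

```python
def ngrams(text: str, n: int = 8) -> set[tuple[str, ...]]:
    """Return the set of word-level n-grams in `text`."""
    tokens = text.split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(benchmark_item: str, corpus_docs: list[str], n: int = 8) -> float:
    """Fraction of the item's n-grams that also occur somewhere in the corpus.

    A value near 1.0 suggests the item (or a near copy) was seen in training;
    a value near 0.0 suggests it was not. The cutoff between the two is a
    judgment call, not a fixed standard.
    """
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        # Item is shorter than n tokens, so there is nothing to compare.
        return 0.0
    corpus_grams: set[tuple[str, ...]] = set()
    for doc in corpus_docs:
        corpus_grams |= ngrams(doc, n)
    return len(item_grams & corpus_grams) / len(item_grams)
```

Exact n-gram matching misses paraphrased or reformatted copies, so a low ratio is weak evidence of a clean benchmark rather than proof.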

We see echoes of this challenge in other complex domains. If an AI is tested on physics problems that were heavily featured in its training data, its apparent mastery is suspect. For business leaders and investors, this realization is vital: if performance metrics are inflated by memorization, the perceived reliability of these tools in high-stakes work, such as writing secure, production-ready code, is severely undermined. The same concern appears in adversarial security testing of LLMs, where reliance on known patterns can lead to predictable failures under attack.

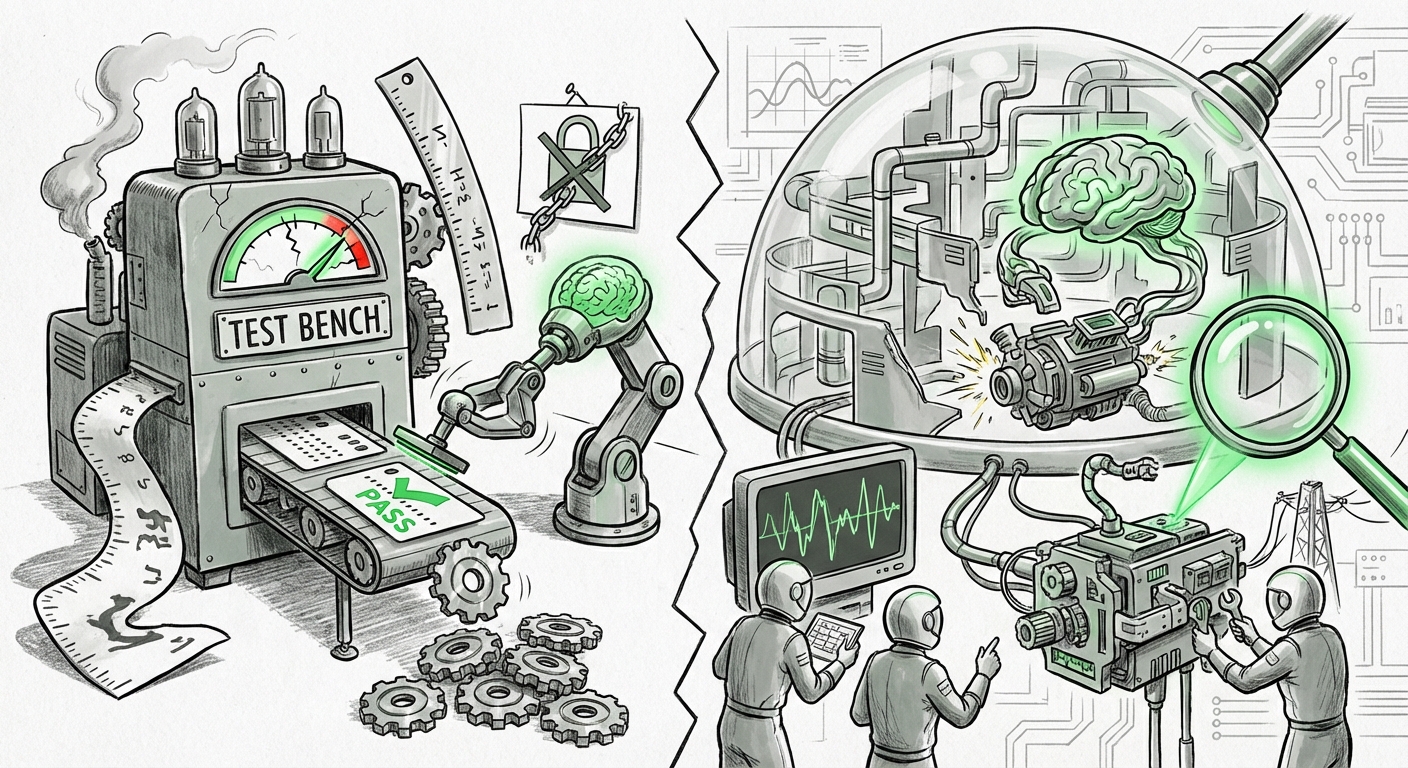

The Future of Evaluation: Moving Beyond Static Tests

If static, fixed benchmarks are failing, the AI community must pivot rapidly. The challenge now is to devise evaluation methods that are dynamic, interactive, and varied enough that memorizing past solutions offers little advantage.

1. Dynamic and Generative Benchmarking

The most promising direction involves moving toward dynamic benchmarking. Instead of testing against a fixed list of problems, future tests will likely involve generating novel scenarios or integrating the AI agent into an entirely new, sandboxed software environment. This means the AI must understand the *context* of a running application, not just the syntax of a single function.

This evolution is already being discussed in research circles. The goal is to create tests where the problem itself changes based on the AI’s previous action. For an engineer, this is how real work happens: you fix one bug, and that action reveals two new integration issues. The benchmark must mimic that iterative, multi-step complexity. This forces models to rely on true reasoning rather than recalling a solution for "Issue #452."
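As a rough illustration of the "generate novel scenarios" idea, the sketch below builds a fresh task from a random seed, with the expected answers computed from a reference implementation rather than stored in a public list. Everything here (`Task`, `make_task`, the toy list-slicing problem) is a hypothetical example, and it covers only task generation, not the multi-step, action-dependent environments described above.

```python
import random
from dataclasses import dataclass
from typing import Callable


@dataclass
class Task:
    prompt: str   # text shown to the model
    step: int     # hidden parameters, kept for grading and debugging
    offset: int
    check: Callable[[Callable[[list[int]], list[int]]], bool]


def make_task(seed: int) -> Task:
    """Build a task whose parameters and test cases depend on `seed`."""
    rng = random.Random(seed)
    step = rng.randint(2, 5)
    offset = rng.randint(0, 3)
    # Random test inputs, plus one fixed case so trivial answers always fail.
    cases = [[rng.randint(-50, 50) for _ in range(rng.randint(0, 12))]
             for _ in range(20)]
    cases.append(list(range(10)))

    def reference(xs: list[int]) -> list[int]:
        return xs[offset::step]

    def check(candidate: Callable[[list[int]], list[int]]) -> bool:
        # Pass a copy so a candidate cannot corrupt the stored cases.
        return all(candidate(list(case)) == reference(case) for case in cases)

    prompt = (f"Write f(xs) that returns every element of xs whose index is "
              f"{offset}, {offset + step}, {offset + 2 * step}, and so on.")
    return Task(prompt, step, offset, check)
```

Because the same seed always yields the same task, results stay reproducible, while a new seed yields a variant that cannot have appeared verbatim in any training set.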

2. The Shift to Acceptance Metrics

For enterprises adopting AI assistants like GitHub Copilot or similar internal tools, the focus must shift away from theoretical benchmark scores and toward practical, observable metrics:

- Code Review Acceptance Rate: How often does the AI-generated code pass human review on the first attempt?

- Security Vulnerability Introduction: Does the AI suggest insecure patterns, or does it proactively suggest security improvements?

- Integration Success Rate: Can the AI correctly modify existing, complex, proprietary codebases without breaking dependencies?

These real-world measures are harder to game and are tied more directly to productivity gains than leaderboard scores. They test the AI's ability to collaborate, not just to complete a snippet.
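The three metrics above are simple ratios once outcomes are logged per suggestion. The following is a minimal sketch, assuming a hypothetical internal log in which each AI-generated change is recorded with three boolean outcomes; the record fields and metric names are illustrative, not taken from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class SuggestionRecord:
    """One AI-generated change, as logged by a hypothetical internal tool."""
    passed_first_review: bool       # approved by a human without rework
    flagged_by_security_scan: bool  # a scanner or reviewer found a vulnerability
    integration_ok: bool            # merged without breaking the build or dependencies


def summarize(records: list[SuggestionRecord]) -> dict[str, float]:
    """Aggregate logged outcomes into the three rates discussed above."""
    total = len(records)
    if total == 0:
        # No data yet: report zeros rather than dividing by zero.
        return {
            "review_acceptance_rate": 0.0,
            "vulnerability_rate": 0.0,
            "integration_success_rate": 0.0,
        }
    return {
        "review_acceptance_rate": sum(r.passed_first_review for r in records) / total,
        "vulnerability_rate": sum(r.flagged_by_security_scan for r in records) / total,
        "integration_success_rate": sum(r.integration_ok for r in records) / total,
    }
```

In practice these rates are only comparable across tools when the underlying tasks and reviewers are similar, so they work best as trends within one team.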

3. Testing for Robustness and Alignment

When models rely on memorization, they often exhibit brittle behavior when faced with slightly altered inputs. True intelligence is robust. Future evaluation will place heavy emphasis on adversarial testing—trying to trick the model into producing unsafe, biased, or incorrect code by subtly changing the prompt or environment.

This is crucial for alignment. If we cannot trust a model to handle edge cases or adversarial prompts correctly, deploying it to manage critical infrastructure remains a massive liability. Benchmarks that test security robustness will become just as important as those testing functional correctness.
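One simple way to quantify brittleness is to compare a model's result on the original prompt with its average result on meaning-preserving variants of that prompt. The sketch below assumes a hypothetical `solve` callable wrapping the model and a `check` callable that grades its output; the toy "models" and perturbations are stand-ins for illustration.

```python
from typing import Callable, Sequence


def robustness_gap(
    solve: Callable[[str], str],
    check: Callable[[str], bool],
    prompt: str,
    perturbations: Sequence[Callable[[str], str]],
) -> float:
    """Score on the original prompt minus the mean score on perturbed prompts.

    0.0 means the perturbations changed nothing; 1.0 means the model passed
    the original prompt and failed every variant.
    """
    if not perturbations:
        return 0.0
    base = 1.0 if check(solve(prompt)) else 0.0
    perturbed = sum(check(solve(p(prompt))) for p in perturbations) / len(perturbations)
    return base - perturbed


# Toy stand-ins: one "model" recalls a single exact prompt, the other does not
# depend on surface wording at all.
MEMO = {"sum of 2 and 3?": "5"}


def memorizing_model(prompt: str) -> str:
    return MEMO.get(prompt, "")


def robust_model(prompt: str) -> str:
    return "5"


def is_correct(output: str) -> bool:
    return output == "5"


# Perturbations that keep the question's meaning but change its surface form.
PERTURBATIONS = [
    lambda s: "  " + s,
    lambda s: s.replace("sum of", "total of"),
]
```

A large gap on harmless rewordings is a hint that the original score came from recall rather than reasoning, though a real study would need many prompts and carefully validated perturbations.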

Implications for Technology Strategy and Investment

The potential collapse of existing coding benchmarks sends clear signals to various stakeholders:

For AI Developers and Researchers

The race is no longer about building bigger models; it’s about building *smarter evaluation systems*. The organizations that pioneer genuinely dynamic, leak-resistant evaluation frameworks will gain a major advantage in demonstrating the real utility of their next-generation models. Transparency about training data cutoffs and evaluation protocols will carry a premium.

For Enterprise Users and CTOs

Do not base your purchasing decisions solely on a vendor’s claimed benchmark ranking. If a vendor touts a 90% score on an old test, be skeptical. Instead, demand proof-of-concept trials using your company’s actual, proprietary codebases under realistic constraints. While the market adjusts to the weakness of old scores, buyers have an opportunity to negotiate terms based on performance measured in those trials.

For Investors and Analysts

The hype cycle driven by easily gamed metrics is due for a correction. Investors need to shift focus from vanity metrics (like parameter count or static benchmark leadership) toward evidence of sustainable, reliable, and safe deployment capabilities. The longevity of an AI company will depend on its ability to adapt to, and ideally define, the next generation of rigorous, dynamic testing protocols.

Actionable Insights: How to Navigate the Evaluation Shift

This moment requires action, not just analysis. To ensure your organization is leveraging AI capabilities accurately, consider these steps:

- Establish Internal Baselines: Immediately begin testing leading models against a small, curated set of your organization’s most critical and idiosyncratic coding tasks. This creates an internal "gold standard" that is far less exposed to public benchmark contamination, provided the tasks and their solutions stay private.

- Prioritize Contextual Understanding: Favor models that demonstrate deep understanding of large code contexts (multi-file dependencies, framework conventions) over those that excel at isolated function completion.

- Invest in Dynamic Sandboxes: Support the development and adoption of testing environments that dynamically generate tasks or simulate full integration pipelines. This is the future of reliable testing.

- Mandate Security Audits: Treat all AI-generated code as if it were written by a junior developer with a security oversight problem. Mandatory security scanning and peer review should become standard before any AI-assisted code hits production.
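An internal baseline of the kind described in the first step can start very small: a private set of tasks, each with its own tests, and a script that reports what fraction of a model's answers pass. The sketch below is one possible shape, assuming Python tasks whose tests are plain `assert` statements; `passes` and `pass_rate` are hypothetical helper names. Running code in a separate interpreter only isolates crashes and timeouts; it is not a security sandbox, so untrusted output should still run inside a container or VM.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def passes(candidate_src: str, test_src: str, timeout_s: float = 10.0) -> bool:
    """Run candidate code followed by its tests in a fresh interpreter.

    Returns True only if the process exits with status 0 within the timeout.
    """
    with tempfile.TemporaryDirectory() as tmp:
        script = Path(tmp) / "case.py"
        script.write_text(candidate_src + "\n\n" + test_src, encoding="utf-8")
        try:
            result = subprocess.run(
                [sys.executable, str(script)],
                capture_output=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            return False
    return result.returncode == 0


def pass_rate(solutions: dict[str, str], tests: dict[str, str]) -> float:
    """Fraction of internal tasks whose tests pass; missing answers count as failures."""
    if not tests:
        return 0.0
    passed = sum(
        passes(solutions.get(task_id, ""), test_src)
        for task_id, test_src in tests.items()
    )
    return passed / len(tests)
```

Keeping the task set and its tests out of public repositories is what protects this baseline from the contamination problem described earlier.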

Conclusion: The Necessary Pain of Maturation

The dismantling of SWE-bench, while potentially embarrassing for the industry, is ultimately a sign of AI maturation. We are moving from the easy wins of infancy—where simply showing *something* works is enough—to the rigorous demands of adulthood, where verifiable reliability and true generalization are non-negotiable.

When the ruler used to measure progress is found to be warped, you must discard it and forge a new one. The future of AI development depends not just on building smarter models, but on the collective wisdom to ask harder, more insightful questions about what those models can actually do when the training wheels come off.