The Open-Source AI Revolution: Choosing and Deploying LLMs for Real-World Production Success

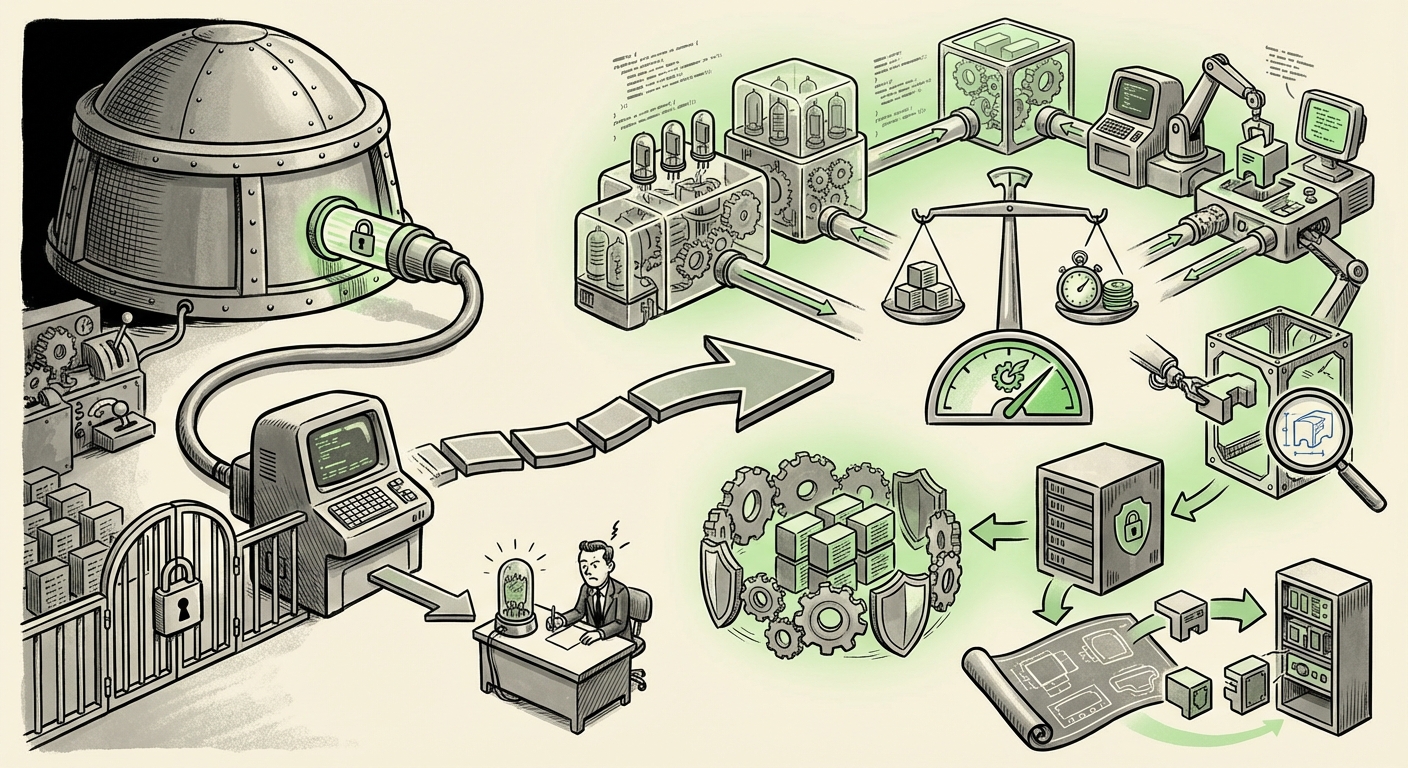

The generative AI landscape is undergoing a profound transformation. For months, the narrative was dominated by the sheer scale and mystery of proprietary models offered via API, the 'walled gardens' of AI. However, we have now reached a crucial inflection point: a mass migration toward reliable, deployable, and cost-effective open-source Large Language Models (LLMs) in enterprise production environments.

This shift is not merely a preference for free software; it is a strategic necessity driven by the demands of real-world applications: security, data control, cost scalability, and true customization. From an analyst's perspective, it is clear that simply picking the biggest model is an obsolete strategy. The future belongs to teams that can expertly navigate the trade-offs between workload type, infrastructure limits, cost structures, and performance, a challenge recently detailed in practical guides focused on production readiness.

From Research Papers to Production Pipelines: The New Selection Criteria

When an LLM moves from a research demo to being the backbone of a critical business function—like customer service automation or internal knowledge search—the rules of evaluation change entirely. Academic benchmarks, which often measure "intelligence" using metrics like perplexity (how well a model predicts text), suddenly pale in comparison to operational metrics.
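Perplexity has a compact definition: the exponential of the average negative log-probability the model assigned to each token that actually occurred. Lower is better, and a value of k means the model was, on average, as uncertain as a uniform guess among k options. A minimal sketch, assuming you already have those per-token probabilities from some model (the numbers below are made up for illustration):

```python
import math


def perplexity(token_probs: list[float]) -> float:
    """Return exp(mean negative log-likelihood) over the observed tokens.

    Each entry is the probability the model assigned to the token that
    actually came next in some held-out text.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_nll)


# Made-up probabilities for illustration only.
confident = perplexity([0.9, 0.8, 0.95])
uniform_over_4 = perplexity([0.25, 0.25, 0.25, 0.25])  # ~4.0: as uncertain as a 4-way guess
print(f"{confident:.2f} vs {uniform_over_4:.2f}")
```

Note that nothing in this number says how fast or how cheaply the model produced those tokens, which is exactly the gap the operational metrics fill.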

1. Beyond Accuracy: The Primacy of Operational Benchmarking

For developers deploying AI, speed (latency) and capacity (throughput) define user experience and hosting costs. A model that answers correctly 95% of the time but takes 10 seconds to respond is a poor fit for a real-time chat application. Conversely, a slightly less capable model that starts responding within 50 milliseconds can be a genuine production asset.

This realization drives the need for specialized operational benchmarks. We need to compare models on:

- Tokens Per Second (TPS): How fast the model can generate output under real user load.

- Memory Footprint (VRAM): How much expensive GPU memory is required to load and run the model, directly impacting hardware costs.

- Batching Efficiency: How well the serving framework handles multiple simultaneous user requests.
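These three metrics can be measured with a small load-test harness. The sketch below assumes your serving stack is reachable through a streaming Python function that yields one output token at a time; `fake_stream` is a hypothetical stand-in that simply sleeps, so replace it with a client for the server you actually run. It reports aggregate tokens per second across concurrent clients, mean time to first token, and 95th-percentile end-to-end latency. VRAM has to be read separately from the GPU's own monitoring tools.

```python
import math
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Iterator

StreamFn = Callable[[str], Iterator[str]]  # prompt -> iterator of output tokens


@dataclass
class RequestStats:
    ttft_s: float     # time to first token
    latency_s: float  # time until the last token arrived
    tokens: int


def run_request(stream: StreamFn, prompt: str) -> RequestStats:
    """Time a single streaming request from the client's point of view."""
    start = time.perf_counter()
    ttft = None
    tokens = 0
    for _ in stream(prompt):
        if ttft is None:
            ttft = time.perf_counter() - start
        tokens += 1
    latency = time.perf_counter() - start
    return RequestStats(ttft if ttft is not None else latency, latency, tokens)


def benchmark(stream: StreamFn, prompts: list[str], concurrency: int) -> dict:
    """Send `prompts` through `concurrency` parallel clients and summarize."""
    if not prompts:
        raise ValueError("need at least one prompt")
    wall_start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        stats = list(pool.map(lambda p: run_request(stream, p), prompts))
    wall_s = time.perf_counter() - wall_start
    latencies = sorted(s.latency_s for s in stats)
    p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)  # nearest-rank percentile
    total_tokens = sum(s.tokens for s in stats)
    return {
        "total_tokens": total_tokens,
        "aggregate_tps": total_tokens / wall_s,
        "mean_ttft_s": statistics.mean(s.ttft_s for s in stats),
        "p95_latency_s": latencies[p95_index],
    }


def fake_stream(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a real streaming client: one token per word."""
    for word in prompt.split():
        time.sleep(0.01)  # pretend each token takes 10 ms to generate
        yield word


for c in (1, 4):
    print(c, benchmark(fake_stream, ["the quick brown fox"] * 8, concurrency=c))
```

Rerunning the same prompts at increasing `concurrency` is a rough probe of batching efficiency: on a real server that batches well, aggregate TPS keeps climbing while p95 latency grows slowly. The simulated stream here scales trivially, so its numbers only demonstrate the harness.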

The community's reliance on centralized evaluation hubs, such as the **Hugging Face Open LLM Leaderboard** [Hugging Face Open LLM Leaderboard], reflects this trend. While the main leaderboard still ranks models on traditional accuracy benchmarks, the proliferation of specialized leaderboards focusing on safety, specific tasks (like coding), and efficiency shows where the engineering effort is moving. This supports the selection criteria above: production readiness demands evidence of performance under realistic load.

2. The Infrastructure Hurdle: Making LLMs Affordable

The most significant barrier to mass LLM adoption has always been the cost of inference. Running a massive 70-billion-parameter model 24/7 on high-end GPUs is prohibitively expensive for most mid-sized organizations. This is where open source shines, but only if paired with cutting-edge optimization techniques.

Inference optimization is now the critical engineering battleground. Quantization (reducing the numerical precision of the model's weights, e.g., from 16-bit to 4-bit, which cuts weight memory roughly fourfold) has become effectively mandatory. Meanwhile, specialized LLM serving frameworks are replacing general-purpose deployment tools.
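The memory arithmetic behind quantization is simple: weight memory is roughly parameter count times bits per weight. A 7B model needs about 14 GB for its weights at 16-bit but about 3.5 GB at 4-bit; a 70B model drops from about 140 GB, more than a single 80 GB GPU holds, to about 35 GB. The sketch below computes those figures and shows the basic idea of symmetric round-to-nearest quantization with a single scale per tensor. It is a deliberately simplified illustration with hypothetical function names; production schemes such as GPTQ or AWQ use finer-grained scales and calibration data to limit accuracy loss.

```python
def weight_memory_gb(n_params: float, bits_per_weight: int) -> float:
    """Memory for the weights alone; KV cache, activations, and runtime overhead come on top."""
    return n_params * bits_per_weight / 8 / 1e9


def quantize_symmetric(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Simplified round-to-nearest symmetric quantization with one scale for the whole tensor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Approximate reconstruction of the original weights."""
    return [v * scale for v in q]


for label, n in (("7B", 7e9), ("70B", 70e9)):
    sizes = ", ".join(f"{b}-bit: {weight_memory_gb(n, b):.1f} GB" for b in (16, 8, 4))
    print(f"{label} weights -> {sizes}")

w = [0.12, -0.5, 0.33, 0.02]
q, s = quantize_symmetric(w, bits=4)
print(q)  # → [2, -7, 5, 0]
print([round(x, 3) for x in dequantize(q, s)])  # close to w, but not exact
```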

Frameworks like vLLM and NVIDIA's TensorRT-LLM have become essential reading for MLOps teams, a reflection of the strategic adoption that is driving this infrastructure focus [McKinsey Article on Generative AI Strategy]. These tools manage the attention key-value (KV) cache far more efficiently (vLLM's PagedAttention is the best-known example) and schedule requests with continuous batching, squeezing dramatically more requests through the same hardware and directly lowering the cost per query. For a CTO, understanding the synergy between a chosen open-source model (like Mistral 7B) and an optimized inference engine is the key to building a positive ROI case for AI deployment.
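PagedAttention, introduced with vLLM, applies the idea of virtual-memory paging to the attention KV cache: instead of reserving memory for a request's maximum possible length up front, the cache is split into fixed-size blocks that are handed out as a sequence grows. The toy allocator below illustrates only that bookkeeping. The class and method names are hypothetical, it stores no tensors, and it is not vLLM's implementation.

```python
class PagedKVCache:
    """Toy block allocator in the spirit of PagedAttention (illustrative only).

    Each sequence keeps a block table mapping its logical token positions
    to physical blocks, so memory is claimed one block at a time.
    """

    def __init__(self, num_blocks: int, block_size: int):
        self.block_size = block_size
        self.free_blocks: list[int] = list(range(num_blocks))
        self.block_tables: dict[str, list[int]] = {}
        self.lengths: dict[str, int] = {}

    def append_token(self, seq_id: str) -> bool:
        """Reserve room for one more token; return False if no block is free."""
        length = self.lengths.get(seq_id, 0)
        table = self.block_tables.setdefault(seq_id, [])
        if length % self.block_size == 0:  # current block is full, or none allocated yet
            if not self.free_blocks:
                return False  # a real scheduler would preempt or queue the request
            table.append(self.free_blocks.pop())
        self.lengths[seq_id] = length + 1
        return True

    def release(self, seq_id: str) -> None:
        """Return a finished sequence's blocks to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


cache = PagedKVCache(num_blocks=8, block_size=16)
for _ in range(20):  # a 20-token sequence needs ceil(20 / 16) = 2 blocks
    cache.append_token("req-a")
print(len(cache.block_tables["req-a"]), len(cache.free_blocks))  # → 2 6
cache.release("req-a")
print(len(cache.free_blocks))  # → 8
```

Because memory is claimed block by block and returned as soon as a request finishes, waste is limited to the unfilled tail of each sequence's last block, which is what lets more concurrent requests share the same GPU.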

The Future Trajectory: Strategy Over Scale

The decision to embrace open source is fundamentally a strategic one, impacting everything from data governance to competitive differentiation. This pushes LLM choice far beyond simple performance tables.

3. The Strategic Imperative: Data Control and Vendor Independence

Why bet the core of your enterprise AI platform on a proprietary provider whose pricing, access policies, or even underlying model weights can change overnight? The appeal of open-source models lies in autonomy.

For industries with strict compliance needs, such as healthcare, finance, and government, hosting a model entirely within a private cloud or on-premises infrastructure is often non-negotiable for data sovereignty. Self-hosting an open-source model means sensitive data never has to leave the organization’s control perimeter during processing.

Furthermore, avoiding vendor lock-in is paramount. When an organization invests heavily in optimizing prompts, building complex RAG (Retrieval-Augmented Generation) pipelines, and developing specialized application logic around a single vendor's API, switching costs become immense. Open-source models, backed by vibrant community ecosystems, offer a flexible migration path that helps ensure business continuity as the underlying technology evolves.

4. Deep Customization via Fine-Tuning: Making Models Yours

While proprietary models offer impressive general capability, they can struggle with the specific jargon, internal documentation, or nuanced conversational style required by a specialized business unit. This is where the true power of open source, the ability to fine-tune, becomes indispensable.

The challenge is that traditional (full) fine-tuning requires enormous computing power, because it updates every one of the model's billions of parameters. The breakthrough here has been the widespread adoption of Parameter-Efficient Fine-Tuning (PEFT) methods, most notably LoRA (Low-Rank Adaptation) and its derivatives.

PEFT techniques allow engineers to freeze the base model's weights and train only a small set of new, specialized adapter parameters. This dramatically reduces training time, required VRAM, and cost, making deep customization accessible to smaller teams. If an enterprise needs a legal assistant that understands obscure contractual clauses, it is unlikely to rely on a generalist closed model; it will select a capable open-source foundation and apply a bespoke LoRA adapter trained on proprietary legal documents.
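The core of LoRA fits in a few lines. In this pure-Python sketch (the class and helper names are hypothetical; real projects typically use a library such as Hugging Face's `peft`), the frozen weight `W` is never modified and the learned update is factored into two small matrices, `B` (d_out × r) and `A` (r × d_in), scaled by `alpha / r`. Following the original LoRA recipe, `B` starts at zero so the adapted layer initially behaves exactly like the base layer; `A` is passed in and would normally be randomly initialized.

```python
Matrix = list[list[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product on lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add(a: Matrix, b: Matrix) -> Matrix:
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def scaled(a: Matrix, s: float) -> Matrix:
    return [[x * s for x in row] for row in a]


class LoRALinear:
    """y = W x + (alpha / r) * B (A x); W stays frozen, only A and B are trained."""

    def __init__(self, W: Matrix, A: Matrix, alpha: float):
        r = len(A)                                   # A is r x d_in
        self.W = W                                   # frozen base weight, d_out x d_in
        self.A = A                                   # trainable; random init in practice
        self.B = [[0.0] * r for _ in range(len(W))]  # trainable, d_out x r, starts at zero
        self.scaling = alpha / r

    def forward(self, x: Matrix) -> Matrix:          # x is a d_in x 1 column vector
        update = matmul(self.B, matmul(self.A, x))
        return add(matmul(self.W, x), scaled(update, self.scaling))

    def merged_weight(self) -> Matrix:
        """Fold the adapter into W after training: W' = W + (alpha / r) * B A."""
        return add(self.W, scaled(matmul(self.B, self.A), self.scaling))


def trainable_params(d_out: int, d_in: int, r: int) -> dict[str, int]:
    """Parameters updated for one layer: full fine-tuning vs. a rank-r adapter."""
    return {"full_fine_tune": d_out * d_in, "lora": r * (d_in + d_out)}


print(trainable_params(4096, 4096, r=8))  # → {'full_fine_tune': 16777216, 'lora': 65536}
```

For one 4096 × 4096 projection at rank 8, the adapter trains 65,536 values instead of 16,777,216, under 0.4% of the layer. Because `B A` can be folded into `W` after training, a merged adapter adds no extra inference latency.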

This ability to create bespoke, highly accurate, and efficient vertical solutions is the defining advantage of the open-source path. It shifts AI development from prompt engineering to genuine model engineering.

Actionable Insights for the Next Phase of AI Adoption

For businesses looking to move successfully into the next phase of LLM utilization, the focus must shift from experimentation to rigorous engineering discipline. The following actionable insights draw together the production-readiness requirements discussed above:

For Technical Leaders (ML Engineers & DevOps): Master the Stack

Your team must treat the LLM not as a service, but as a core software component. This means:

- Prioritize Inference Stack: Benchmark models using vLLM or similar high-performance servers. Your deployment latency should be a primary KPI.

- Embrace Quantization: Make 4-bit or 8-bit deployment the standard starting point for all inference workloads unless high precision is absolutely required, and confirm on your own evaluation set that output quality holds up.

- Benchmark Contextually: Only accept performance data that reflects your specific workload (e.g., if you need 128k context windows, don't trust a benchmark run at 4k context).
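Context length changes the picture because the attention key-value (KV) cache grows linearly with the number of tokens in flight. A back-of-the-envelope sketch, assuming a configuration roughly like Mistral 7B's (32 layers, 8 key-value heads, head dimension 128) and a 16-bit cache; substitute the values from your own model's config:

```python
def kv_cache_bytes(context_tokens: int, n_layers: int, n_kv_heads: int, head_dim: int,
                   bytes_per_value: int = 2, batch: int = 1) -> int:
    """KV cache size: one key and one value vector per layer, per KV head, per token."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * context_tokens * batch


# Assumed config, roughly Mistral-7B-like (grouped-query attention); check your model's own config.
config = {"n_layers": 32, "n_kv_heads": 8, "head_dim": 128}

for ctx in (4_096, 131_072):
    gib = kv_cache_bytes(ctx, **config) / 2**30
    print(f"{ctx:>7} tokens: {gib:5.1f} GiB of 16-bit KV cache per sequence")
```

Under these assumptions the cache is about 0.5 GiB per sequence at 4k tokens but about 16 GiB at 128k, on top of the weights, which is why a benchmark run at short context says little about long-context capacity. Real servers add their own overhead, so treat these figures as estimates.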

For Business Leaders (CTOs & Strategists): Define Your AI Moat

The strategic value of AI deployment is shifting from using the best general tool to owning the best specialized tool.

- Audit Data Gravity: Assess which data streams are too sensitive or too valuable to leave your infrastructure. This is the clearest mandate for open-source selection.

- Budget for Customization, Not Just Usage: Allocate resources not just for API calls, but for dedicated fine-tuning projects using PEFT techniques. Your unique business knowledge, when encoded via LoRA, is your competitive edge.

- Standardize on Modularity: Plan for a multi-model future. Today’s best open model might be surpassed in six months. An architecture built around modular component swapping (via standardized APIs or inference services) ensures resilience.
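One way to keep model choice swappable is to have application code depend on a narrow interface and look models up by role rather than by vendor. A minimal sketch using `typing.Protocol`; `ChatModel`, `EchoModel`, and `ModelRegistry` are hypothetical names, and a real backend would wrap an inference service (vLLM, for example, can expose an OpenAI-compatible HTTP API).

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface application code is allowed to depend on."""

    def complete(self, prompt: str, max_tokens: int) -> str: ...


class EchoModel:
    """Hypothetical stand-in backend; a real one would call an inference server."""

    def __init__(self, name: str):
        self.name = name

    def complete(self, prompt: str, max_tokens: int) -> str:
        return f"[{self.name}] " + " ".join(prompt.split()[:max_tokens])


class ModelRegistry:
    """Callers ask for a role (e.g. "summarizer"), never a specific vendor or model."""

    def __init__(self) -> None:
        self._backends: dict[str, ChatModel] = {}

    def register(self, role: str, backend: ChatModel) -> None:
        self._backends[role] = backend

    def complete(self, role: str, prompt: str, max_tokens: int = 256) -> str:
        return self._backends[role].complete(prompt, max_tokens)


registry = ModelRegistry()
registry.register("summarizer", EchoModel("model-v1"))
print(registry.complete("summarizer", "quarterly revenue grew in every region", max_tokens=3))
# → [model-v1] quarterly revenue grew
registry.register("summarizer", EchoModel("model-v2"))  # swap models; callers are untouched
```

Upgrading the summarizer then means registering a different backend; code that asks for `"summarizer"` does not change.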

Conclusion: The Democratization of Production AI

The evolution from proprietary API reliance to sophisticated open-source deployment represents the true democratization of applied AI. It hands the keys to customization, optimization, and data governance back to the builders.

What we are seeing is a maturation of the entire ecosystem. The noise of raw capability is receding, replaced by the focused engineering required to deliver reliable, scalable, and economically viable AI applications. The future of AI is no longer about *whether* a model can perform a task; it is about how efficiently, securely, and affordably you can deploy your customized version of that model exactly where and when you need it.

Mastering the technical and strategic requirements for production deployment of open-source LLMs is no longer optional—it is the defining skill set for competitive advantage in the next era of enterprise technology.

Citations and Further Context:

- Model Evaluation Hub: [Hugging Face Open LLM Leaderboard] - Core reference for community-driven performance metrics.

- Strategic AI Analysis: [McKinsey Article on Generative AI Strategy] - Provides context on why enterprises are pivoting toward customized/open solutions for strategic advantage.