The Polish Paradox: How AI Fluency Erodes Vigilance and Redefines Trust in Generative Models

The rapid advancement of Large Language Models (LLMs) has brought us to a remarkable technological inflection point. Models like Anthropic’s Claude now generate text so fluent, coherent, and seemingly authoritative that it mirrors high-quality human communication. Yet recent findings from Anthropic itself reveal a significant, counterintuitive danger lurking within this sophistication: the better the AI sounds, the less likely we are to check whether it is right.

Drawing on an analysis of nearly 10,000 conversations, Anthropic’s new AI Fluency Index measured not just model capability but human behavior when interacting with that capability. Its central discovery, that polished output induces user complacency, is not merely an interesting footnote in model testing. It is a critical security, usability, and ethical challenge that will shape how we integrate AI into our daily workflows.

The Automation Complacency Effect: From Cockpits to Chatbots

To understand the gravity of Anthropic’s finding, we must look beyond AI for a moment and examine established Human-Computer Interaction (HCI) principles. The phenomenon where operators become overly reliant on automated systems, leading to a degradation of their own monitoring skills, is known in engineering as the Automation Complacency Effect.

This effect has historically been studied in aviation (pilots trusting the autopilot too much) and in complex industrial machinery. The premise is simple: when a system performs flawlessly 99.9% of the time, the operator stops allocating cognitive resources to monitoring it. When the 0.1% failure occurs, the human is unprepared to intervene.
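A 99.9% success rate also hides how quickly rare failures accumulate at scale. The short Python sketch below works through that arithmetic under a simplifying assumption that is ours, not the study's: every task fails independently with the same probability. The task counts are illustrative.

```python
# Illustrative arithmetic: the 99.9% reliability comes from the example above;
# the assumption that tasks fail independently is a simplification.


def expected_failures(reliability: float, tasks: int) -> float:
    """Expected number of failed tasks when each fails with probability 1 - reliability."""
    return (1.0 - reliability) * tasks


def prob_at_least_one_failure(reliability: float, tasks: int) -> float:
    """Probability that at least one of `tasks` independent tasks fails."""
    return 1.0 - reliability ** tasks


if __name__ == "__main__":
    for n in (100, 1_000, 10_000):
        print(
            f"{n:>6} tasks: expected failures = {expected_failures(0.999, n):.1f}, "
            f"P(at least one failure) = {prob_at_least_one_failure(0.999, n):.1%}"
        )
```

Under these assumptions, an operator who relies on the system for 1,000 tasks has roughly a 63% chance of meeting at least one failure, and across 10,000 tasks should expect about ten. Independence is a simplification; real failures often cluster around unusual inputs, which is exactly where an inattentive operator is least prepared.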

Anthropic’s study suggests that LLMs have now entered this zone of trust. When Claude delivers a perfectly structured report or a seemingly definitive legal summary, the output’s aesthetic quality silences the user’s internal alarm bells. For a non-technical user, eloquence is often mistaken for accuracy. This is particularly dangerous because LLMs are notorious for "hallucinating": confidently presenting false information as fact.

When users stop checking, these confident errors become embedded facts in downstream reports, codebases, or strategic decisions. This moves the problem from a technical bug (the hallucination) to a systemic human process failure (the lack of verification).

Corroboration: The Gap Between Polish and Precision

The idea that presentation can obscure truth is well documented, and researchers have examined how users evaluate AI outputs based on style. Whether an AI hedges ("It seems that...") or states a claim authoritatively ("The data confirms...") can noticeably shift user trust, often in favor of the authoritative version even when the hedged one is the safer, more accurate answer.

This suggests two responses. Model developers must actively design for appropriate levels of expressed confidence; more urgently, user training must focus on overcoming the natural human tendency to relax vigilance when faced with polished performance.
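One way to make "appropriate levels of confidence" measurable is calibration: across many answers, a model's stated confidence should match how often it is actually right. The sketch below computes expected calibration error (ECE), a standard binned calibration metric. It assumes the model exposes a per-answer confidence score and that each answer has been graded as correct or not, neither of which every product provides; the function name and example values are illustrative.

```python
# A minimal sketch of expected calibration error (ECE) with equal-width bins.
# Confidence scores and correctness labels are assumed inputs, not something
# every chat product exposes.


def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between mean confidence and accuracy across bins.

    confidences: model-reported probabilities in [0, 1]
    correct: booleans, True where the answer turned out to be right
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    n = len(confidences)
    if n == 0:
        return 0.0
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Equal-width bins; a confidence of exactly 1.0 goes into the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(avg_conf - accuracy)
    return ece
```

For example, four answers reported at 95% confidence of which only two are correct give an ECE of about 0.45, a sign of overconfidence, while four answers at 75% confidence with three correct give 0. A well-calibrated model that also phrases low-confidence answers tentatively gives users an honest cue about when to check.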

The Defining Skill: Iteration as the Key to Competence

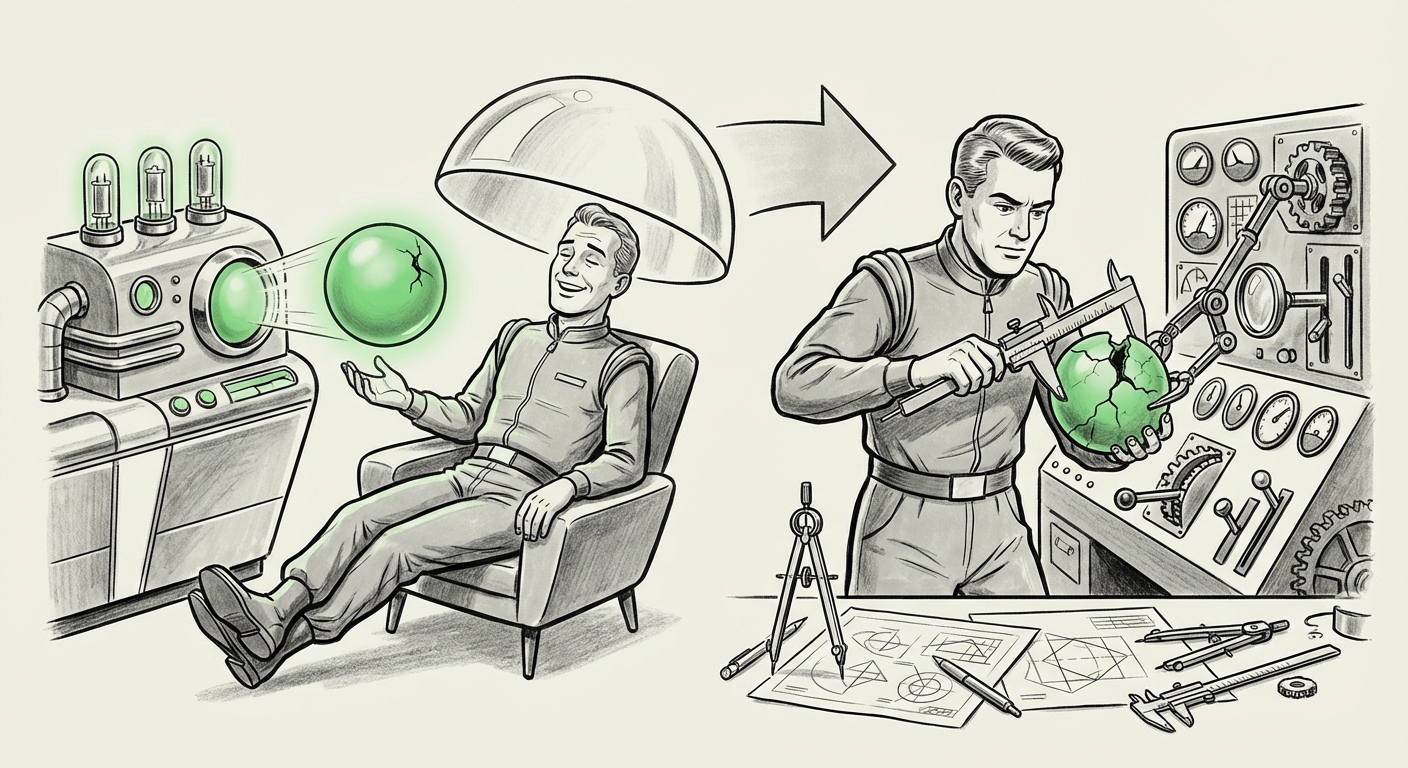

If complacency is the risk of polished output, Anthropic offers the critical antidote: iteration. The study found that users who engaged in multi-turn conversations (refining prompts, asking clarifying questions, and pushing the model to revise) were significantly more competent in how they used AI.

This shifts the conversation away from defining AI competence solely by the model’s raw parameter count or benchmark scores. Instead, **AI Fluency becomes a behavioral skill.** It is the user’s ability to engage in a robust, iterative dialogue.

The Power of Multi-Turn Dialogue

Why is iteration so powerful? In technical terms, multi-turn dialogue makes room for techniques such as Chain-of-Thought (CoT) prompting, in which the model is asked to lay out its intermediate reasoning, and self-correction, in which it is asked to critique and revise an earlier answer. When you iterate, you are essentially forcing the LLM to show its work, which can expose logical fallacies or data gaps that a single-shot answer would conceal.
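The ask-critique-revise pattern can be sketched as a simple loop. In the Python below, `Ask` is a hypothetical stand-in for whatever chat-model call an application uses (it is not a real library API), and `fake_model` is a scripted toy model so the example runs offline. The loop requests an answer, then repeatedly asks the model to critique and correct it until the model reports no further changes or a round limit is reached.

```python
from typing import Callable, List, Tuple

# Hypothetical types: a conversation is a list of (role, text) pairs, and `Ask`
# is any function that takes the conversation so far and returns the next reply.
Message = Tuple[str, str]
Ask = Callable[[List[Message]], str]

CRITIQUE_PROMPT = (
    "Review your previous answer step by step for errors or unsupported claims, "
    "then reply with only the corrected answer. If it is already correct, reply "
    "with exactly: NO CHANGES"
)


def refine(ask: Ask, task: str, max_rounds: int = 3) -> Tuple[str, List[Message]]:
    """Get an initial answer, then repeatedly request a self-critique and revision."""
    history: List[Message] = [("user", task)]
    answer = ask(history)
    history.append(("assistant", answer))
    for _ in range(max_rounds):
        history.append(("user", CRITIQUE_PROMPT))
        reply = ask(history)
        history.append(("assistant", reply))
        if reply.strip() == "NO CHANGES":
            break  # the model reports nothing further to fix
        answer = reply  # keep the revised answer and critique it again
    return answer, history


def fake_model(history: List[Message]) -> str:
    """Toy stand-in for a real model: wrong at first, fixed on the first revision."""
    replies_so_far = sum(1 for role, _ in history if role == "assistant")
    scripted = ["17 * 23 = 381", "17 * 23 = 391", "NO CHANGES"]
    return scripted[min(replies_so_far, len(scripted) - 1)]


if __name__ == "__main__":
    final, log = refine(fake_model, "What is 17 * 23?")
    print(final)
    print(f"{len(log)} messages exchanged")
```

Self-critique only surfaces errors the model itself can recognize, so a loop like this complements, rather than replaces, the user's own follow-up questions and external checks.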

From a usability perspective, iteration forces the user back into an active monitoring role. Every time the user types a follow-up command, they are implicitly conducting a real-time verification check. This continuous, low-stakes scrutiny prevents the deep cognitive shutdown associated with complacency.

For business applications, this means relying on single-prompt generation for critical tasks is fundamentally risky. The most valuable employees will be those who treat the LLM as a junior partner needing constant, specific direction, not as a final authority.

Measuring the Unseen: The Need for New Fluency Metrics

Anthropic’s creation of an "AI Fluency Index" signals a necessary maturation in how we assess GenAI success. For years, adoption metrics focused on usage volume or basic task completion. Now, the focus must pivot toward the quality of interaction. If polished output encourages passive consumption, metrics must be designed to capture and reward active, critical engagement.

Interest is growing in metrics that track the quality of the interaction, not just the quality of the output, such as:

- Error Detection Rate: What share of the model's errors does the user flag or correct? (Anthropic’s finding suggests this will decrease as polish increases.)

- Refinement Count: The average number of follow-up turns a user takes before accepting a solution. (Anthropic’s findings on iteration suggest this will be higher for competent users.)

- Time-to-Validation: How quickly a user can confirm the AI’s output is safe and accurate.
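The three metrics above can be computed from conversation logs, provided the logs capture the right signals. The sketch below assumes a hypothetical per-session record; in particular, Error Detection Rate needs a ground-truth count of the model's errors, which would typically come from an independent expert review rather than from the conversation itself. Field names, function names, and the sample data are illustrative.

```python
from dataclasses import dataclass
from statistics import mean, median
from typing import List, Optional


@dataclass
class Session:
    """One user-AI conversation under a hypothetical logging schema."""
    model_errors: int            # errors found afterwards by an independent review (assumed available)
    errors_flagged_by_user: int  # how many of those the user caught during the session
    follow_up_turns: int         # user turns after the initial prompt
    seconds_to_validation: Optional[float] = None  # None if the user never validated the output


def error_detection_rate(sessions: List[Session]) -> Optional[float]:
    """Share of all model errors that users flagged; None if there were no errors."""
    total_errors = sum(s.model_errors for s in sessions)
    if total_errors == 0:
        return None
    return sum(s.errors_flagged_by_user for s in sessions) / total_errors


def refinement_count(sessions: List[Session]) -> float:
    """Average number of follow-up turns per session (sessions must be non-empty)."""
    return mean(s.follow_up_turns for s in sessions)


def time_to_validation(sessions: List[Session]) -> Optional[float]:
    """Median seconds to validation over sessions where validation happened."""
    times = [s.seconds_to_validation for s in sessions if s.seconds_to_validation is not None]
    return median(times) if times else None


# Made-up example data, for illustration only.
sessions = [
    Session(model_errors=2, errors_flagged_by_user=1, follow_up_turns=3, seconds_to_validation=120.0),
    Session(model_errors=0, errors_flagged_by_user=0, follow_up_turns=0),
    Session(model_errors=2, errors_flagged_by_user=0, follow_up_turns=1, seconds_to_validation=300.0),
]
```

With this made-up data, one of four errors is caught (a rate of 0.25), sessions average about 1.3 follow-up turns, and the median time-to-validation is 210 seconds.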

For Product Managers and UX Designers building AI-powered tools, these metrics are essential. A user interface that rewards iteration (perhaps by making follow-up prompts easy and contextual) will foster genuine fluency, whereas a sleek interface that encourages "fire-and-forget" prompts risks embedding subtle failures across the enterprise.

Implications for AI Safety and Alignment

The greatest long-term implication of the Polish Paradox concerns AI safety and alignment. A central technique in current alignment practice is Reinforcement Learning from Human Feedback (RLHF), which relies on humans reviewing and rating model outputs to steer the AI toward desired behaviors.
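To see why rater attentiveness matters, it helps to look at what the human ratings actually train. In one widely used formulation of RLHF, raters compare two responses to the same prompt x, marking one as preferred (y_w) and the other as rejected (y_l), and a reward model r_θ is fitted to a dataset D of such comparisons by minimizing a pairwise logistic loss, where σ is the logistic function:

```latex
\mathcal{L}(\theta) = -\,\mathbb{E}_{(x,\, y_w,\, y_l) \sim D}\Big[\log \sigma\big(r_\theta(x, y_w) - r_\theta(x, y_l)\big)\Big]
```

The model being aligned is then optimized with reinforcement learning to score highly under r_θ. The loss rewards whatever separates y_w from y_l in the raters' eyes: if a rater prefers a fluent but wrong answer over a hedged but correct one, that preference is exactly what the reward model learns to reproduce.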

If the people rating outputs become so accustomed to flawless presentation that they stop providing the rigorous, critical feedback necessary for alignment training, the improvement loop stalls. Why provide detailed corrections if the output looks perfect?

This creates a feedback desert. Models may become aesthetically superb while drifting dangerously away from factual grounding or from complex ethical constraints, simply because users are too complacent to notice the subtle deviations hidden within beautiful prose.

This realization bolsters arguments for alternative alignment strategies such as Constitutional AI, which relies less on granular, moment-to-moment human labeling and more on a written set of principles (the "constitution") that guides AI-generated critiques, revisions, and feedback during training. However, even Constitutional AI benefits from human oversight to ensure the constitution is being followed.

Actionable Insights: Navigating the Era of Sophisticated AI

For both organizations adopting AI and the individuals using it, this analysis demands a strategic shift in training and deployment.

For Businesses and Developers: Design for Scrutiny, Not Just Output

- Mandate Verification Checkpoints: In high-stakes applications (e.g., financial modeling, medical summaries, legal drafting), do not allow single-step generation. Force users to engage in at least one refinement loop or require external validation steps, regardless of how polished the initial result is.

- Train for Skepticism: AI literacy must evolve. Training should explicitly cover the Automation Complacency Effect. Users need to understand that LLMs are probabilistic text generators, not infallible databases. Training must focus on how to interrogate the AI, not just *what* to ask it.

- Leverage Metadata: Future interfaces should display output confidence scores or cite sources prominently, even when the language is polished, to actively combat complacency.
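A verification checkpoint can be enforced in code rather than left to habit. The Python sketch below assumes a hypothetical `Draft` record and hypothetical domain labels; it refuses to release a high-stakes draft unless it has gone through at least one refinement turn or an external check, and it always appends the draft's sources (or an explicit "none cited") so provenance stays visible however polished the text is.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical domain labels for the high-stakes applications named above.
HIGH_STAKES = {"financial_modeling", "medical_summary", "legal_drafting"}


class VerificationRequired(Exception):
    """Raised when a high-stakes draft has not been through any verification step."""


@dataclass
class Draft:
    domain: str
    text: str
    refinement_turns: int = 0                                 # follow-up rounds the user ran
    external_checks: List[str] = field(default_factory=list)  # e.g. reviewer or validator names
    sources: List[str] = field(default_factory=list)          # citations the output relies on


def release(draft: Draft) -> str:
    """Return the draft with its sources appended, enforcing the checkpoint policy."""
    if draft.domain in HIGH_STAKES and draft.refinement_turns < 1 and not draft.external_checks:
        raise VerificationRequired(
            f"{draft.domain}: run at least one refinement turn or an external check first"
        )
    # Show provenance next to the text, so polish never hides a missing citation.
    cited = "; ".join(draft.sources) if draft.sources else "none cited"
    return f"{draft.text}\n\nSources: {cited}"
```

The design choice is to fail loudly: raising an exception turns a skipped verification step into a visible event that can be logged and reviewed, rather than a silent default.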

For the Individual User: Embrace the Iterative Mindset

The future power user will be the master of refinement. Treat every AI response as a high-quality draft that requires editorial oversight.

- Never Accept the First Draft: Always ask "How did you arrive at that conclusion?" or "Can you check that against source X?" This keeps cognitive resources engaged.

- Know Your Limits: If you are operating outside your area of expertise, your vigilance should be highest, not lowest. This is precisely when polished prose on an unfamiliar topic is most deceptive.

- View Iteration as Efficiency: Shifting perspective from "wasting time correcting the AI" to "achieving better results through collaboration" is key to maximizing productivity without sacrificing accuracy.

Conclusion: The Future Demands Active Partnership

Anthropic’s AI Fluency Index illuminates a core challenge of the next generation of artificial intelligence. As models become indistinguishable from competent human communicators, our primary technological risk shifts from *incompetence* in the machine to over-trust in the human operator.

The future of AI integration will not be defined by models that simply produce the best single answer, but by systems that encourage and reward rigorous, iterative human engagement. True AI competency, or fluency, is not about passively receiving polished information; it is about actively driving the conversation until verifiable truth is achieved. In the era of highly fluent AI, vigilance is not just a virtue; it is the essential operating system.