The World Model Revolution: How Sora's Physics Simulation Changes AI's Future

The launch of OpenAI’s Sora didn't just introduce a better video generator; it signaled a paradigm shift for the industry. For years, generative AI focused on producing self-contained artifacts: images, text, music. Sora feels fundamentally different. It suggests that the underlying models are beginning to grasp *how the world works*. This looks like more than sophisticated pattern matching; it hints at an early form of a true World Model.

To understand why this matters—why this is the "Sora Moment"—we must step back from the immediate visual spectacle and look at the foundational research and the competitive reaction that has since flooded the market. This analysis synthesizes context from foundational AI research, current industry dynamics, and the remaining technical challenges to frame what this means for the future of intelligent systems.

The Academic Genealogy: World Models Before the Hype

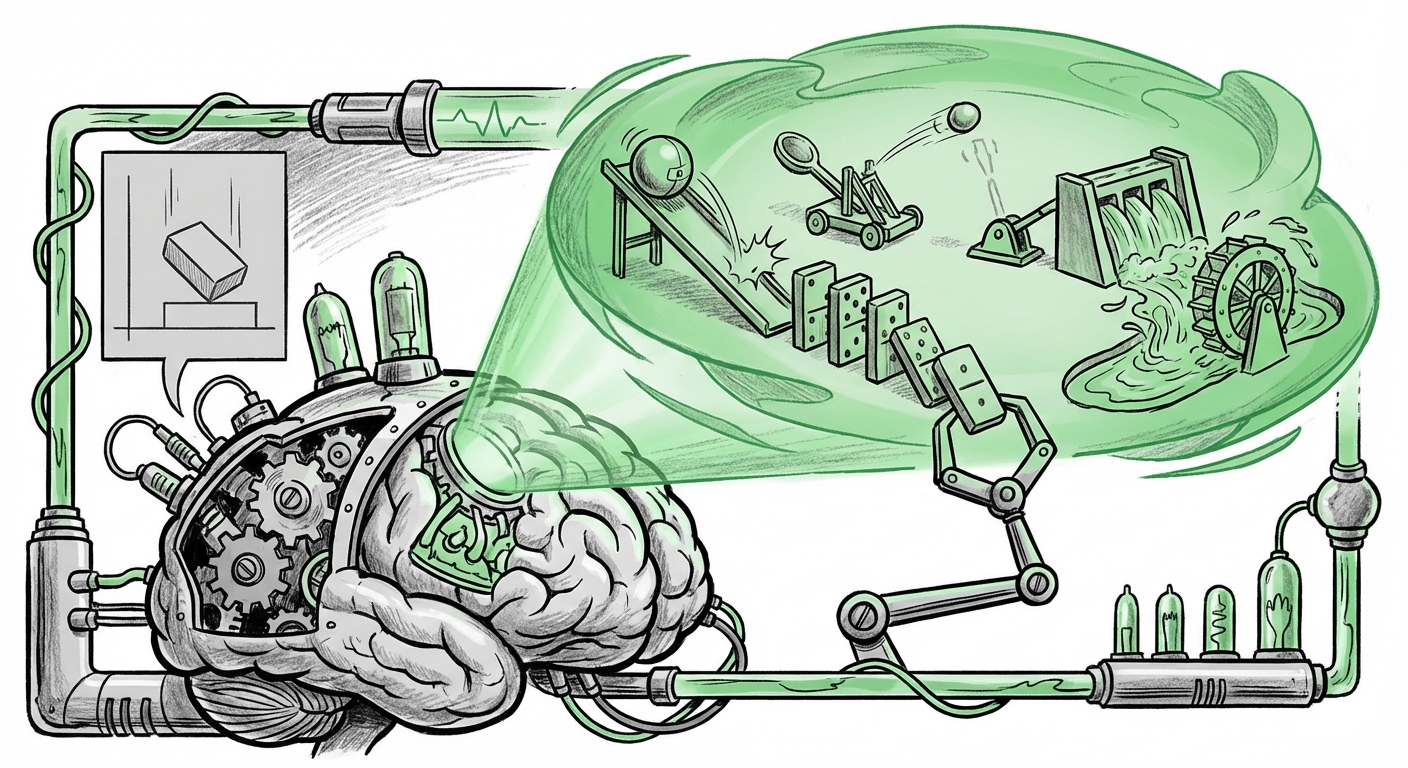

The idea that an Artificial General Intelligence (AGI) must first build an internal, predictive model of its environment is a long-standing hypothesis, championed by leaders in the field. This concept, often referred to as a "World Model," aims to give AI an intuitive understanding of physics, persistence, and cause-and-effect.

If you ask a child to drop a ball, they know it will fall, bounce predictably, and roll until friction stops it. They don't solve Newton's equations of motion; they rely on an intuitive model of physics learned from experience. Early attempts to build this digitally, such as Ha and Schmidhuber's 2018 "World Models" paper and related model-based reinforcement learning work at **DeepMind**, focused on training agents within simulated environments. The goal was for the agent to learn a compact representation of the world (the model) and then use that model to plan actions without constantly relying on real-world feedback.
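The "learn a model, then predict with it" idea can be illustrated with a toy sketch. This is not how Sora or any published world model is implemented; it assumes a one-dimensional falling ball, and the helper names (`simulate_drop`, `fit_acceleration`, `predict_fall_time`) are hypothetical. The "agent" only sees heights, estimates a constant acceleration from them, and then rolls its learned model forward for a drop it never observed.

```python
def simulate_drop(h0, g=9.81, dt=0.01, steps=30):
    """Stand-in 'environment': heights of a ball dropped from rest (no air drag)."""
    return [h0 - 0.5 * g * (i * dt) ** 2 for i in range(steps)]


def fit_acceleration(heights, dt):
    """Estimate a constant acceleration from second finite differences of height."""
    second_diffs = [
        heights[i + 1] - 2 * heights[i] + heights[i - 1]
        for i in range(1, len(heights) - 1)
    ]
    return sum(second_diffs) / len(second_diffs) / dt ** 2


def predict_fall_time(h0, accel, dt=0.001, max_time=60.0):
    """Roll the learned model forward until the ball reaches the ground."""
    h, v, t = h0, 0.0, 0.0
    while h > 0 and t < max_time:
        v += accel * dt   # update velocity with the learned acceleration
        h += v * dt       # update height with the new velocity
        t += dt
    return t


# The agent observes one short drop from 2 m...
observed = simulate_drop(h0=2.0)
learned_accel = fit_acceleration(observed, dt=0.01)

# ...and then predicts an unseen drop from 5 m without touching the environment.
fall_time = predict_fall_time(5.0, learned_accel)
print(f"learned acceleration: {learned_accel:.2f} m/s^2")
print(f"predicted fall time from 5 m: {fall_time:.2f} s")
```

A real world model replaces the single fitted number with a learned latent representation, but the division of labor is the same: observe, compress into a model, then predict without further real-world feedback.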

Sora appears to be the first large-scale demonstration that a transformer-based architecture, trained on vast quantities of video data, can implicitly develop such a model. The model isn't explicitly programmed with the rules of gravity or fluid dynamics; instead, it appears to have learned approximations of them by observing enormous amounts of video in which these laws consistently apply. When Sora generates a video where cloth drapes realistically or water splashes plausibly, it is showcasing an emergent grasp of these physical constraints.

For technical practitioners, this means that scale alone might be sufficient to produce rudimentary physical intuition, potentially removing the need for explicit, hand-coded physics simulators in many non-critical tasks.

The Competitive Validation: The Race to Reliable Simulation

A major indicator that the "Sora Moment" is real is the swift and powerful response from competitors. The generative AI space is characterized by rapid iteration, and when a competitor launches a comparable, high-fidelity tool, it is strong evidence that the technical breakthrough is legitimate and replicable.

The introduction of **Google’s Veo** serves precisely this purpose. It validates the industry consensus that video generation based on simulation capabilities—not just interpolating frames—is the next high-value frontier. The comparison between Sora and Veo highlights the current arms race:

- Fidelity and Coherence: Both models strive for realism, but the underlying challenge is maintaining object persistence and physical consistency across longer sequences.

- Prompt Understanding: The ability to translate detailed textual instructions ("a highly detailed documentary clip of a wolf running through a snowy field") into a temporally consistent 3D-like sequence suggests the model has built a usable spatial and temporal understanding.

This competitive validation sends a clear message to businesses: the era of static AI assets is ending. If your primary content needs involve visualizing dynamic processes—be it architectural walkthroughs, product prototyping, or cinematic pre-visualization—these simulation-capable models are no longer years away; they are here.

The Limits of Learned Physics: Where Approximation Meets Reality

While the results are breathtaking, any balanced analysis must acknowledge the remaining gaps between learned simulation and deterministic physics engines (like those used in professional game development or engineering software). Several friction points stand out.

Current diffusion and transformer models, no matter how large, behave largely as probabilistic interpolators. They excel at what they have seen, but they struggle when asked to reason about novel physical interactions or to conserve fundamental quantities:

- Conservation Laws: Can Sora reliably model the conservation of mass or energy in a complex reaction? If a glass shatters, do the resulting pieces always add up correctly, or does mass sometimes vanish or appear when the model loses track of the objects?

- Complex Materials: Simulating highly non-linear physics—such as the behavior of fluids, smoke, or elastic deformation under extreme stress—remains notoriously difficult for purely data-driven models. These scenarios require precise mathematical computation, not just visual prediction.

- Temporal Consistency Over Long Shots: While Sora handles short clips well, generating a continuous, minutes-long scene in which object identities and placement stay consistent remains a significant hurdle.

This is where the distinction between a "world model" and a "physics engine" becomes critical. Sora provides an astonishingly plausible *approximation* of physics derived from observation. A true physics engine, however, is built on axioms and rules, so its output obeys the equations it encodes, up to numerical error. The next major AI leap will likely involve blending these two approaches: using the world model for intuitive scene composition and feeding its predictions into smaller, specialized, rule-based physics modules for fidelity checks.
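One way to picture such a fidelity check is a small rule-based pass over the world model's output. The sketch below is an illustration only: it assumes the generative model (or a tracker running on its video) can emit a per-frame list of objects with estimated masses, which is a hypothetical interface, and the names `total_mass` and `find_mass_violations` are invented for this example.

```python
def total_mass(frame):
    """Sum the estimated masses of all objects tracked in one frame."""
    return sum(obj["mass"] for obj in frame)


def find_mass_violations(frames, rel_tol=0.02):
    """Return indices of frames whose total mass drifts from the first frame.

    rel_tol is the allowed relative deviation (2% here, an arbitrary choice).
    """
    reference = total_mass(frames[0])
    return [
        i
        for i, frame in enumerate(frames)
        if abs(total_mass(frame) - reference) > rel_tol * reference
    ]


# Hypothetical model output: a 300 g glass shatters into two shards,
# and the model briefly "loses track" of one shard in frame 2.
frames = [
    [{"id": "glass", "mass": 0.30}],
    [{"id": "shard_a", "mass": 0.18}, {"id": "shard_b", "mass": 0.12}],
    [{"id": "shard_a", "mass": 0.18}],
    [{"id": "shard_a", "mass": 0.18}, {"id": "shard_b", "mass": 0.12}],
]

violations = find_mass_violations(frames)
print("frames violating mass conservation:", violations)
```

In a hybrid pipeline, flagged frames could be regenerated or handed to a conventional physics engine; the check itself stays cheap and deterministic.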

Future Implications: Reshaping Industries Through Simulation

If we accept that AI is moving from generating content to generating *simulations*, the practical implications span nearly every sector:

1. Democratization of Design and Prototyping

For industrial designers, architects, and engineers, the cost and time associated with creating high-fidelity visual prototypes could plummet. Instead of spending weeks rendering a visualization of fluid flow around a new car part or hours building detailed virtual sets for a film, teams can iterate on concepts in minutes using text prompts. Engineering-grade validation still requires a proper solver, but faster visual iteration can accelerate the early stages of the product development lifecycle.

2. Training and Robotics (The True World Model Test)

The most significant impact of robust world models will likely be in training autonomous agents. Robots and self-driving systems need to learn in simulation before being deployed in the messy real world. If an AI can faithfully simulate a thousand variations of a dropped package, a crowded intersection, or a complex assembly line task, the resulting training data covers far more situations than real-world collection alone could.

This capability moves us closer to training AI agents that are not just reactive but *predictive*—agents that can look ahead, weigh outcomes based on their simulated understanding, and choose the safest, most efficient path.
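A minimal sketch of "look ahead, weigh outcomes, choose" follows. It assumes a one-dimensional vehicle approaching an obstacle, and it uses hand-written kinematics in `rollout` as a stand-in for a learned world model; the function names and the candidate braking values are hypothetical.

```python
def rollout(position, speed, brake, dt=0.1, horizon=50):
    """Simulate constant braking (m/s^2) and return the final (position, speed)."""
    for _ in range(horizon):
        speed = max(0.0, speed - brake * dt)  # speed cannot go negative
        position += speed * dt
    return position, speed


def choose_brake(position, speed, obstacle, candidates):
    """Pick the gentlest braking whose simulated outcome stops short of the obstacle.

    Falls back to the hardest available braking if no candidate is safe.
    """
    safe = []
    for brake in candidates:
        final_position, final_speed = rollout(position, speed, brake)
        if final_speed < 1e-6 and final_position < obstacle:
            safe.append(brake)
    return min(safe) if safe else max(candidates)


# Vehicle at 0 m moving at 10 m/s, obstacle at 20 m.
action = choose_brake(
    position=0.0,
    speed=10.0,
    obstacle=20.0,
    candidates=[1.0, 2.0, 3.0, 4.0, 5.0],
)
print("chosen braking (m/s^2):", action)
```

The agent never touches the real environment while deciding: every candidate is evaluated inside the model, which is why the quality of that model bounds the quality of the plan.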

3. Scientific Discovery and Hypothesis Generation

In chemistry and materials science, researchers constantly simulate molecular interactions. If a future AI world model could reliably simulate the interaction of novel compounds under various conditions (temperature, pressure), it could quickly filter out chemically impossible or unstable scenarios before costly wet-lab experiments are even planned. This would transform AI from a data analysis tool into a primary hypothesis generator.

Actionable Insights for Business Leaders

What should businesses do now that the simulation revolution has begun?

- Prioritize Temporal Data: If your workflow relies on visualizing sequences (training manuals, marketing videos, product demonstrations), begin auditing or building large, high-quality video datasets now. The next generation of models will likely be won by those trained on the best, most complex temporal data.

- Investigate Hybrid Systems: Do not discard existing simulation tools (like CAD or Unity). The immediate future involves hybrid pipelines where generative AI creates the scene and the intent, and traditional physics engines ensure the fidelity of critical elements.

- Re-evaluate Content Creation Budgets: Video production costs are likely to drop sharply. Businesses should assess how many internal marketing, training, and low-stakes creative needs can be moved entirely in-house using these new generative tools within the next 18 months.

The Road to Deterministic Intelligence

The "Sora Moment" is a profound realization: the vast, chaotic data stream of the real world, when processed at scale, pushes an intelligence to distill the underlying order, the physics of reality. While Sora is likely just the first, slightly wobbly step toward true understanding, it suggests that the path to AGI may involve building systems that mimic our own intuitive physics before they master pure mathematics.

The ultimate goal of world modeling is not just generating believable videos but building an AI that can reliably reason about the future consequences of actions. As these models overcome current limitations in conservation and material modeling, they will evolve from tools of artistic creation into the foundational simulators upon which the next generation of robotics, autonomous systems, and scientific breakthroughs is built. The world is about to get simulated, and the implications go far beyond special effects.