AI Video Generators Are Now Physics Engines: Decoding the World Model Shift

The release and demonstration of OpenAI’s Sora technology marked a seismic shift in the landscape of generative AI. For years, models like DALL-E and Midjourney excelled at creating stunning *images*: complex compositions that followed aesthetic rules. When Sora began generating coherent, minute-long video, however, something fundamentally different appeared to happen. It wasn't just producing a sequence of pictures; it seemed to *understand* the rules of the world it was depicting. This event, often dubbed "The Sora Moment," suggests that we are transitioning from generative pattern synthesizers to nascent World Models.

This development is not merely an incremental update to video quality; many observers see it as a conceptual leap toward Artificial General Intelligence (AGI). To grasp its magnitude, we must look beyond the surface-level visuals and examine the underlying mechanisms, the historical context, and the sweeping implications for industries from entertainment to robotics.

The Core Transformation: From Pixels to Physics

What separates a convincing short clip from a mere sequence of frames? Consistency. If a ball is thrown behind a couch in Frame 5, it must reappear on the other side in Frame 20, or remain hidden if its path keeps it behind the couch. If a glass is dropped, it should shatter realistically. Sora demonstrates an implicit, if imperfect, grasp of many of these concepts, which require an internal model of geometry, mass, and motion. OpenAI's own technical report acknowledges the limits: Sora does not yet accurately model some basic interactions, glass shattering among them.

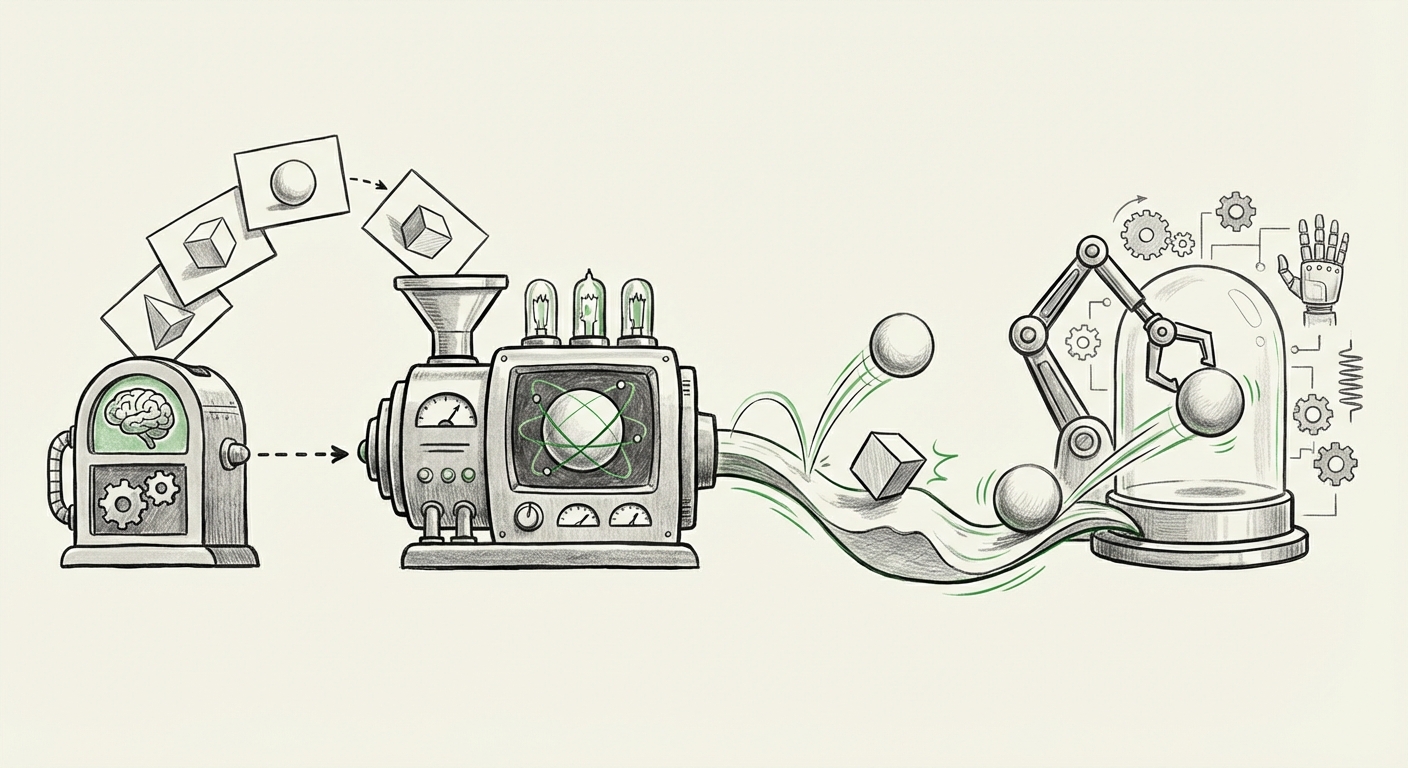
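The occlusion example above can be turned into a concrete consistency check. The sketch below is purely illustrative and rests on assumptions not in the original: idealized projectile motion with no air resistance or bounces, a 24 fps clip, positions already measured in metres, and an arbitrary 5 cm tolerance. The helper names `predict_position` and `reappearance_is_consistent` are hypothetical. Given where the ball was and how fast it was moving when it vanished, the check extrapolates where the ball should reappear and flags a generated clip that disagrees.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2 (assumed; no air resistance)
FPS = 24  # assumed frame rate of the clip


def predict_position(x0, y0, vx, vy, frames_elapsed, fps=FPS):
    """Extrapolate a projectile's (x, y) position after a number of frames."""
    t = frames_elapsed / fps
    x = x0 + vx * t
    y = y0 + vy * t - 0.5 * G * t * t
    return x, y


def reappearance_is_consistent(last_seen, velocity, hidden_frames, observed, tol=0.05):
    """Return True if the observed reappearance point matches the extrapolation.

    last_seen: (x, y) in metres at the frame where the ball was occluded.
    velocity: (vx, vy) in m/s at that frame.
    hidden_frames: number of frames the ball spent behind the occluder.
    observed: (x, y) where the generated video shows the ball reappearing.
    tol: allowed distance error in metres (an illustrative threshold).
    """
    px, py = predict_position(*last_seen, *velocity, hidden_frames)
    return math.dist((px, py), observed) <= tol


# Ball occluded at frame 5 and seen again at frame 20: 15 hidden frames.
expected = predict_position(1.0, 1.5, 2.0, 3.0, 15)
print(reappearance_is_consistent((1.0, 1.5), (2.0, 3.0), 15, expected))    # True
print(reappearance_is_consistent((1.0, 1.5), (2.0, 3.0), 15, (1.0, 1.5)))  # False
```

A check like this is one way to probe whether a generated clip respects basic physics; a real evaluation would first need an object tracker to extract positions from the frames, which is outside this sketch.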

This is the definition of a world model: an internal simulation of reality that allows an agent (in this case, the AI) to predict what will happen next based on current states. It’s the difference between remembering a recipe (pattern matching) and understanding *why* heat causes dough to rise (causality and simulation).
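To make the definition concrete, here is a minimal sketch of the interface a world model exposes: a function that maps the current state and an action to a predicted next state, plus a rollout that chains those predictions so an agent can "imagine" outcomes before acting. Everything here is hypothetical. `ToyWorldModel` hard-codes 1D Newtonian motion with friction where a learned model (Sora or otherwise) would approximate the transition from data, and `plan` is a deliberately naive search over constant forces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class State:
    position: float  # metres along a track
    velocity: float  # metres per second


class ToyWorldModel:
    """A hand-written stand-in for a learned world model.

    A learned model would approximate this transition function from data;
    here it is simply Newtonian motion with friction, stepped at a fixed dt.
    """

    def __init__(self, dt=0.1, friction=0.5):
        self.dt = dt
        self.friction = friction

    def step(self, state, force):
        """Predict the next state from the current state and an action (force on a 1 kg object)."""
        accel = force - self.friction * state.velocity
        velocity = state.velocity + accel * self.dt
        position = state.position + velocity * self.dt
        return State(position, velocity)

    def rollout(self, state, actions):
        """Imagine a whole trajectory without touching the real world."""
        trajectory = [state]
        for force in actions:
            state = self.step(state, force)
            trajectory.append(state)
        return trajectory


def plan(model, start, goal, candidate_forces, horizon=20):
    """Pick the constant force whose imagined outcome ends closest to the goal."""
    def final_error(force):
        end = model.rollout(start, [force] * horizon)[-1]
        return abs(end.position - goal)
    return min(candidate_forces, key=final_error)


model = ToyWorldModel()
best = plan(model, State(0.0, 0.0), goal=1.0, candidate_forces=[0.0, 0.5, 1.0, 2.0, 4.0])
print(best)
```

The planner never acts in the real world; it ranks actions purely by their imagined outcomes, which is the practical payoff of having an internal simulation.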

Grounding the Magic: Diffusion Models and Latent Space

How did we get here? The foundational technology remains the diffusion model, but its application has matured dramatically; OpenAI describes Sora as a diffusion transformer. Diffusion models are trained by gradually adding noise to training data (such as video clips) until it becomes pure static, and learning to reverse that process step by step, denoising it back into a coherent output. For video, the denoising must also stay consistent across the time dimension, so that every frame agrees with its neighbors.
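The noising half of that process has a well-known closed form in the standard DDPM formulation: a clean sample `x0` noised to step `t` is `sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps`, where `eps` is Gaussian noise. The sketch below assumes the linear schedule from the original DDPM paper (Sora's actual schedule and architecture are not public) and treats a toy 16-frame clip as one array noised at a single step `t`. It also shows that, given the exact noise, the clean clip can be recovered; a trained network's job is to predict that noise from the noisy input alone.

```python
import numpy as np

# Linear noise schedule from the original DDPM paper (an assumption here;
# Sora's actual schedule is not public).
T = 1000
betas = np.linspace(1e-4, 0.02, T)
alphas_bar = np.cumprod(1.0 - betas)  # fraction of signal variance kept after t steps


def add_noise(x0, t, rng):
    """Sample x_t ~ q(x_t | x_0) in closed form: sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps."""
    eps = rng.standard_normal(x0.shape)
    xt = np.sqrt(alphas_bar[t]) * x0 + np.sqrt(1.0 - alphas_bar[t]) * eps
    return xt, eps


def estimate_x0(xt, t, eps_pred):
    """Invert the forward step given a noise prediction (what the network is trained to output)."""
    return (xt - np.sqrt(1.0 - alphas_bar[t]) * eps_pred) / np.sqrt(alphas_bar[t])


rng = np.random.default_rng(0)
video = rng.uniform(-1.0, 1.0, size=(16, 8, 8))  # toy clip: 16 frames of 8x8 "pixels"

xt, eps = add_noise(video, t=T - 1, rng=rng)
# Close to 0: almost no signal survives, so x_t is essentially pure static.
print(f"signal kept at t=T-1: {np.sqrt(alphas_bar[-1]):.4f}")

# A perfect noise predictor would recover the clip exactly; a trained network only approximates eps.
recovered = estimate_x0(xt, T - 1, eps)
print(np.allclose(recovered, video))
```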

To manage this complexity, these models operate in a compressed, lower-dimensional representation known as latent space. This is where the model is thought to encode the fundamental 'concepts' of the world: not individual pixels, but object shapes, trajectories, and material properties. The argument made in several technical analyses of these models is that the training data is so vast and varied that the model is pushed to learn an efficient, rule-like compression of reality that also keeps computation tractable. That compression, surprisingly, appears to yield a rudimentary physics engine.
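OpenAI's Sora technical report describes this pipeline in two steps: a video compression network maps raw video into a lower-dimensional latent, which is then cut into "spacetime patches" that serve as the transformer's tokens. The sketch below shows only the second step with NumPy. The patch sizes, the latent shape, and the function name `to_spacetime_patches` are assumptions chosen for illustration, and the compression network itself is omitted.

```python
import numpy as np


def to_spacetime_patches(latent, pt=2, ph=4, pw=4):
    """Split a latent video of shape (T, H, W, C) into flattened spacetime patches.

    Returns an array of shape (num_patches, pt * ph * pw * C): one token per
    small block spanning pt frames and a ph x pw spatial window.
    """
    T, H, W, C = latent.shape
    assert T % pt == 0 and H % ph == 0 and W % pw == 0, "dimensions must divide evenly"
    x = latent.reshape(T // pt, pt, H // ph, ph, W // pw, pw, C)
    x = x.transpose(0, 2, 4, 1, 3, 5, 6)  # move the three block indices to the front
    return x.reshape(-1, pt * ph * pw * C)


# Hypothetical sizes: a 16-frame clip already compressed to a 32x32 grid with 4 channels.
latent = np.random.default_rng(0).standard_normal((16, 32, 32, 4))
tokens = to_spacetime_patches(latent)
print(tokens.shape)  # (512, 128)
```

Each token mixes information across neighboring frames as well as neighboring positions, which is one reason a model built on such tokens can learn motion rather than isolated stills.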

For the technical reader, the crucial takeaway is the emergence of implicit physical reasoning. Nobody explicitly programmed Newton’s laws into the model; it appears to have absorbed rough approximations of them from vast amounts of video.
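As a toy analogy for learning a law from observation rather than programming it, the sketch below recovers gravitational acceleration from noisy height measurements of a falling object by fitting a generic quadratic. This is not how Sora works internally, since it never fits explicit equations; the point is only that a regularity like g can be extracted from data without being written into the model. The data are synthetic, generated in the snippet itself with g = 9.81 m/s^2 and small Gaussian noise.

```python
import numpy as np

# Synthetic "observations": noisy heights of a dropped object sampled at 30 frames per second.
# g = 9.81 m/s^2 is used only to generate the data, never inside the fit.
rng = np.random.default_rng(42)
t = np.arange(30) / 30.0
true_g = 9.81
heights = 10.0 - 0.5 * true_g * t**2 + rng.normal(scale=0.01, size=t.shape)

# Fit h(t) = c2 * t^2 + c1 * t + c0 by least squares (np.polyfit returns the highest degree first).
c2, c1, c0 = np.polyfit(t, heights, deg=2)
estimated_g = -2.0 * c2  # since h(t) = h0 - (g / 2) * t^2
print(f"estimated g = {estimated_g:.2f} m/s^2")
```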

The AGI Connection: Validation from the Visionaries

The idea that intelligence depends on internal world models has long been championed by leading AI researchers, and Sora's success lends that school of thought powerful new support.

Researchers such as Yann LeCun have long argued that true intelligence requires an agent to build predictive models of its environment, although LeCun has also cautioned that generating raw pixels, as Sora does, is an inefficient route to such models. If an AI can accurately predict the outcome of physical actions in a generated video, it arguably possesses a basic form of object permanence and predictive capacity, both hallmarks of early cognitive ability.

This suggests that large models are advancing along two complementary tracks: Large Language Models (LLMs) capture the structure of human communication, while World Models (like Sora) capture the structure of physical reality. The next great frontier will be fusing these two capabilities, giving AI the ability to reason abstractly about the physical world it generates or inhabits.

Practical Revolution: Simulation, Robotics, and Synthetic Reality

The most immediate, tangible impact of video models acting as physics engines will be felt in sectors heavily reliant on accurate simulation and data generation.

The End of the Data Scarcity Wall for Robotics

Training physical robots is brutally expensive and time-consuming. A robot arm might need thousands of real-world attempts to learn how to grasp a novel object without damaging it. To cut that cost, engineers have long trained policies in dedicated, often imperfect physics simulators (such as MuJoCo or Unity) and then transferred them to real hardware, a process known as sim-to-real transfer.

Commentary on applying Sora-like models to robotics simulation is markedly enthusiastic. If a diffusion model can generate hyper-realistic, physically accurate video from text prompts describing a task ("A delicate ceramic vase being placed on a wobbling shelf"), that video could serve as high-quality synthetic training data, provided the relevant actions and outcomes can be extracted as labels. Such data could let robotic policies be trained largely in the model's latent space before they ever touch hardware, dramatically reducing the cost and time needed to deploy capable physical agents.

Higher-quality synthetic data directly narrows the "reality gap": the difference between how an agent performs in simulation and how it performs in the real world. Sora-level fidelity might close much of this gap for many manipulation tasks.

Next-Generation Media Production and Design

For creative industries, this evolution could eliminate entire workflows. Concept artists may prompt a fully animated sequence instead of sketching a mood board. Architectural firms can walk clients through buildings that do not yet exist, with lighting, shadows, and material interactions rendered convincingly by the model's implicit physics.

This democratizes high-fidelity simulation, moving it out of specialized engineering software and into accessible generative interfaces. The key insight here is that the model isn't just rendering; it's *designing* the dynamics.

The Shadow Side: Erosion of Trust and Regulatory Imperatives

With great generative power comes great societal risk. An AI that perfectly models the physical world is also the perfect engine for creating highly convincing falsehoods. This moves the deepfake problem from slightly uncanny video into the realm of near-perfect synthetic evidence.

Policy analysts warn that as realism approaches perfection, detection by the human eye becomes nearly impossible. Commentary tracking the regulatory challenges posed by synthetic media argues that Sora makes immediate action necessary.

If the world model can simulate a historical event or a corporate crisis with perfect photorealism, the burden shifts from the creator of the fake content to the infrastructure that must verify reality. This forces several critical considerations:

- Digital Provenance: The urgent need for cryptographic watermarking or content credentials to prove when and how media was created.

- Detection Arms Race: AI researchers must now devote significant resources to building detection models that can reliably spot the subtle, non-physical errors that may remain, or that can locate embedded provenance markers.

- Legal and Ethical Frameworks: Existing defamation, copyright, and election integrity laws are largely unprepared for convincingly synthesized reality.
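Content credentials work by cryptographically binding a hash of the media to claims about its origin, so that any later edit to either the media or the claims is detectable. The sketch below illustrates that binding with Python's standard library, under stated simplifications: real systems such as the C2PA standard use certificate-based public-key signatures and typically embed the manifest in the file, whereas this uses HMAC with a hypothetical shared key (`SECRET_KEY`), and the manifest fields and generator name are illustrative.

```python
import hashlib
import hmac
import json

# Illustrative only: real content-credential systems (for example C2PA) sign a manifest
# with certificate-based public-key signatures. HMAC with a shared secret is a
# stdlib-only stand-in here, and SECRET_KEY is a hypothetical placeholder.
SECRET_KEY = b"hypothetical-signing-key"


def make_manifest(media_bytes, generator, created_at):
    """Bind a hash of the media to claims about how and when it was made, then sign it."""
    claims = {
        "sha256": hashlib.sha256(media_bytes).hexdigest(),
        "generator": generator,
        "created_at": created_at,
    }
    payload = json.dumps(claims, sort_keys=True).encode()
    signature = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "signature": signature}


def verify(media_bytes, manifest):
    """Check that the manifest is untampered and describes exactly these bytes."""
    payload = json.dumps(manifest["claims"], sort_keys=True).encode()
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claims themselves were altered
    return manifest["claims"]["sha256"] == hashlib.sha256(media_bytes).hexdigest()


clip = b"placeholder video bytes"
manifest = make_manifest(clip, generator="example-video-model", created_at="2024-01-01T00:00:00Z")
print(verify(clip, manifest))              # True
print(verify(clip + b"edited", manifest))  # False: the media no longer matches its hash
```

Note what this does and does not prove: a valid manifest shows the media is unchanged since signing and who signed it, but media stripped of its manifest simply carries no claim either way.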

For businesses, this means investing not just in generative capabilities, but in verification tools. Trust, the foundational currency of digital interaction, is now under direct threat from technology that learns the structure of reality so well.

Actionable Insights for the Future

For leaders navigating this new era, the Sora Moment demands a strategic realignment. Here is what you need to prioritize:

For AI Researchers and Engineers:

- Dive into Latent Dynamics: Move beyond visual fidelity. Focus research on probing the latent space to extract explicit physical rules. Can we query the model to reveal its implicit 'assumptions' about mass or friction? Answering that question could unlock finer control.

- Hybrid Modeling: Explore integrating these powerful generative world models with classical, symbolic physics engines. Use the generative model for high-level context and the symbolic engine for rigorous collision detection.
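The division of labor in the hybrid approach can be sketched in a few lines. Here a generative model's output is represented by a hard-coded list of proposed poses (a hypothetical stand-in, since no real model is called), and a symbolic layer runs an exact axis-aligned bounding-box (AABB) test on each pose to find the first collision. The `Box` class and the helper names are illustrative, and the scene reuses the vase-and-shelf example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in 2D, from (x_min, y_min) to (x_max, y_max), in metres."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def moved_to(self, x, y):
        """Return a copy of this box with its lower-left corner at (x, y)."""
        return Box(x, y, x + (self.x_max - self.x_min), y + (self.y_max - self.y_min))


def overlaps(a, b):
    """Exact AABB overlap test (touching edges do not count): the rigorous symbolic check."""
    return a.x_min < b.x_max and b.x_min < a.x_max and a.y_min < b.y_max and b.y_min < a.y_max


def first_collision(obj, proposed_path, obstacles):
    """Return the index of the first proposed pose that collides, or None if the path is clean."""
    for i, (x, y) in enumerate(proposed_path):
        placed = obj.moved_to(x, y)
        if any(overlaps(placed, ob) for ob in obstacles):
            return i
    return None


# Hypothetical generative-model output: a 0.2 m x 0.3 m vase sliding toward a shelf post.
vase = Box(0.0, 0.0, 0.2, 0.3)
proposed = [(0.0, 1.0), (0.3, 1.0), (0.6, 1.0), (0.9, 1.0)]
shelf_post = Box(0.75, 0.0, 0.85, 2.0)

print(first_collision(vase, proposed, [shelf_post]))  # 2
```

What happens once a collision is found (rejecting the clip, re-sampling from the generative model, or handing the remaining frames to a classical engine) is a design choice this sketch leaves open.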

For Business Leaders and Investors:

- Re-evaluate Simulation Pipelines: If your business relies on simulation (manufacturing, autonomous vehicles, urban planning), investigate now how Sora-like architectures could augment, or in some cases replace, existing physics engines for rapid data creation.

- Prioritize Verification Infrastructure: Assume all incoming digital media is suspect. Allocate budget toward digital provenance tools, content authentication standards, and internal verification protocols immediately. This is a risk management necessity, not a speculative feature.

Conclusion: Architects of the Possible

The transition from generating static patterns to simulating dynamic reality marks the true beginning of AI as an *engineering tool* for reality itself. When AI models become implicit physics engines, they cease being just creative novelties and become fundamental infrastructure.

We are witnessing the birth of accessible, powerful world modeling. This technology will accelerate scientific discovery, revolutionize how we train autonomous systems, and change the economics of content creation. However, it simultaneously forces humanity to urgently confront the nature of evidence and truth in a world where seeing is no longer believing. The Sora Moment is less about the stunning video it creates, and more about the foundational understanding of reality it implies.