From Buzzword to Benchmark: How GPT-5.3 Instant Signals the Era of Reliable, Real-Time AI Utility

AI progress tends to arrive as a series of incremental releases that occasionally add up to a step change in capability. The recent release of OpenAI’s **GPT-5.3 Instant**, touted for smoother everyday conversations and significantly reduced hallucination during web search, is not just another version bump. It represents a critical inflection point: the industry is moving beyond demonstrating raw generative power and is now focused intensely on making AI a trustworthy, low-latency utility.

As technology analysts, we must look beyond the marketing gloss to understand the underlying engineering and market pressures driving this focus. GPT-5.3 Instant is a direct response to the two most significant bottlenecks preventing widespread, mission-critical AI adoption: the frustrating lag time of complex queries and the pervasive issue of "making things up."

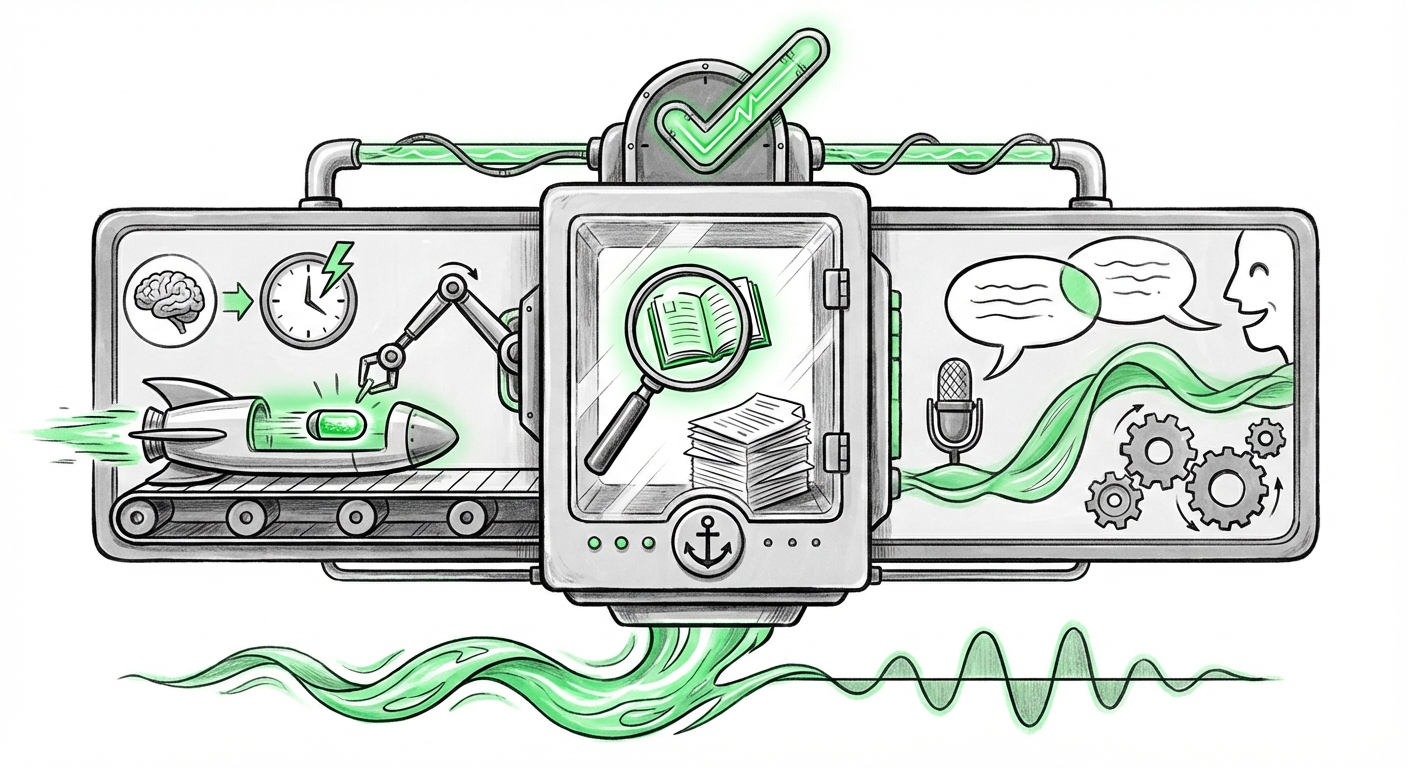

The Three Pillars of the Instant Revolution

The core value proposition of GPT-5.3 Instant rests on three intertwined advancements, each addressing a major pain point for developers and end-users alike:

- Speed/Latency ("Instant"): The pursuit of near-zero response time moves the experience from "talking to a sophisticated computer" to "having a real-time conversation."

- Factuality/Reliability ("Hallucinate Less"): Improving the grounding of information during Retrieval-Augmented Generation (RAG) makes the output actionable, not just interesting.

- Conversational Quality ("Smoother"): Refining dialogue management means the AI remembers context better, handles interruptions gracefully, and maintains complex, multi-turn reasoning threads.

Viewed against broader industry trends, these three pillars look like the primary battlegrounds for AI leadership.

Pillar 1: Winning the Latency War for Real-Time Interaction

In the early days of generative AI, speed was secondary to quality. Users accepted a few seconds of thinking time. Today, that latency is unacceptable, especially when AI is woven into live customer service, code debugging, or dynamic learning environments.

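The drive for "Instant" performance requires sophisticated engineering. Common techniques include model distillation (training a smaller model to imitate a larger one), quantization (storing weights at lower numeric precision), and Mixture-of-Experts (MoE) architectures, in which a router activates only a few expert sub-networks per token so that only a fraction of the parameters is used on each forward pass. A related but distinct pattern is model cascading, where a smaller model handles a request first and escalates to a larger one only when needed. Which of these GPT-5.3 Instant relies on is not something this analysis can confirm, but the engineering focus is an industry-wide trend: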

Corroboration Check: Our search queries pointed toward engineering discussions around **"LLM latency reduction"** and performance tuning for competitor models like Llama 3. This suggests that reducing time-to-first-token (the delay between sending a request and receiving the first piece of the response) is no longer a niche concern but a central engineering mandate across the industry. For AI Engineers and CTOs, this means deployment pipelines that prioritize efficient inference are becoming standard operating procedure.
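To make time-to-first-token concrete, here is a minimal sketch of how it could be measured against any streaming response. The `measure_stream` and `fake_stream` names are hypothetical, and `fake_stream` only simulates a model with sleeps; it is not a real API client.

```python
import time
from typing import Callable, Iterable, Iterator, NamedTuple


class StreamTiming(NamedTuple):
    ttft_s: float   # seconds from request start to the first token
    total_s: float  # seconds from request start to the last token
    tokens: int     # number of tokens received


def measure_stream(stream: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> StreamTiming:
    """Consume a token stream, recording when the first and last tokens arrive."""
    start = clock()
    first = None
    last = start
    count = 0
    for _ in stream:
        last = clock()
        if first is None:
            first = last
        count += 1
    ttft = (first - start) if first is not None else float("nan")
    return StreamTiming(ttft, last - start, count)


def fake_stream(n_tokens: int, first_delay_s: float, per_token_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model response."""
    time.sleep(first_delay_s)          # simulated queueing and prompt processing
    for i in range(n_tokens):
        if i:
            time.sleep(per_token_s)    # simulated per-token generation time
        yield f"tok{i}"


if __name__ == "__main__":
    timing = measure_stream(fake_stream(5, first_delay_s=0.05, per_token_s=0.01))
    print(f"TTFT: {timing.ttft_s * 1000:.0f} ms, total: {timing.total_s * 1000:.0f} ms")
```

Passing the clock in as a parameter is a design choice that keeps the measurement logic testable without real delays.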

Future Implication: Low latency fundamentally unlocks agentic behavior. If an AI must wait three seconds to check a database entry, it stalls the entire workflow. If it takes 100 milliseconds, it becomes a seamless background processor, allowing the human user to maintain their concentration and cognitive flow.
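The arithmetic behind this point is easy to check. The sketch below assumes a hypothetical agent task made of ten sequential calls, each of which must finish before the next begins; the 3-second and 100-millisecond figures come from the paragraph above, while the step count is only an illustration.

```python
STEPS = 10  # hypothetical number of sequential calls in one agent task


def sequential_latency_ms(steps: int, per_call_ms: int) -> int:
    """Total wait when each call blocks the next one."""
    return steps * per_call_ms


slow_ms = sequential_latency_ms(STEPS, 3000)  # 3 s per call
fast_ms = sequential_latency_ms(STEPS, 100)   # 100 ms per call

print(f"{STEPS} calls at 3 s each:    {slow_ms / 1000:.1f} s")
print(f"{STEPS} calls at 100 ms each: {fast_ms / 1000:.1f} s")
```

Under these assumptions the same task takes 30 seconds in one case and 1 second in the other, which is the difference between a workflow that stalls and one that stays in the background.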

Pillar 2: The Trust Deficit—Why Grounding is Everything

The Achilles' heel of all generative models has been hallucination—the confident assertion of falsehoods. When an LLM is used for summarizing current events or providing technical facts, an incorrect answer is worse than no answer at all. GPT-5.3 Instant's focus on improving search integration directly tackles this trust deficit.

This is where **Retrieval-Augmented Generation (RAG)** becomes paramount. The model isn't relying only on its internal, static training data; it's using web search as an external, verifiable "brain." The improvement isn't necessarily in the search algorithm itself, but in how the model interprets, synthesizes, and cites the retrieved documents.
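The general shape of RAG can be sketched in a few lines. This is a toy illustration, not a description of how GPT-5.3 Instant works internally: the documents, the word-overlap scoring, and the `retrieve` and `build_grounded_prompt` helpers are all assumptions made for the example, and a production system would typically use a search index or embeddings instead.

```python
import re
from typing import List, Set, Tuple

Doc = Tuple[str, str]  # (source label, text)

# Hypothetical retrieved documents.
DOCS: List[Doc] = [
    ("weather-site", "Paris forecast next week: mild, with light rain on Tuesday."),
    ("hotel-guide", "Three mid-priced hotels are within walking distance of the Louvre."),
    ("transit-blog", "The Paris Metro covers the city centre; parking near the Louvre is scarce."),
]


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: List[Doc], k: int = 2) -> List[Doc]:
    """Return the k documents sharing the most words with the query (toy scoring)."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d[1])), reverse=True)
    return ranked[:k]


def build_grounded_prompt(query: str, passages: List[Doc]) -> str:
    """Number each passage so the model can cite it as [n] in its answer."""
    numbered = "\n".join(
        f"[{i}] ({src}) {text}" for i, (src, text) in enumerate(passages, 1)
    )
    return (
        "Answer using only the sources below and cite them as [n]. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{numbered}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    question = "Is parking near the Louvre easy?"
    print(build_grounded_prompt(question, retrieve(question, DOCS)))
```

The numbered sources are what make an answer auditable: a reader can follow each `[n]` citation back to the passage it came from.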

Corroboration Check: The focus on search reliability places OpenAI directly in competition with Google, whose Gemini models are deeply integrated with Google Search. Articles contrasting **"Gemini vs OpenAI real-time search integration"** reveal that the market demands auditable answers. The platform that can consistently provide highly accurate, cited information drawn from the current web will win the high-stakes enterprise segment.

Business Implication: For Business Leaders and Investors, this is the key to adoption. As hallucinations decrease, costly human oversight ("the human in the loop") can be scaled back. That lowers operational expenditure and opens AI use cases in legal, medical documentation, and financial reporting, fields where accuracy is non-negotiable.

Pillar 3: The Ascent of the Conversational Agent

While speed and accuracy serve the *function* of the AI, conversational fluidity serves its *experience*. "Smoother everyday conversations" implies a significant improvement in context tracking, persona consistency, and the handling of ambiguity.

We are moving past the era of the single-turn prompt. Users want to ask follow-up questions, change their minds midway through a task, and have the AI maintain the thread without constantly repeating context. This capability is the foundation of the true **AI Agent**—an entity capable of persistent, goal-oriented dialogue.

Corroboration Check: Trend analyses on **"Next generation conversational AI agents"** show that industry focus has decisively shifted towards building systems with long-term memory and planning capabilities. GPT-5.3 Instant appears to be delivering the conversational polish necessary to make these agentic behaviors feel natural, rather than brittle and frustrating.

UX and Design Implications: For designers, this means AI interfaces will require less rigid scaffolding. Users can interact more naturally, expecting the system to infer intent from subtle cues, mimicking human interaction more closely.

Broader Implications: The Democratization of High-Fidelity AI

The convergence of low latency, high factuality, and smooth dialogue has a profound societal implication: **The friction in accessing high-quality information is rapidly approaching zero.**

The Enterprise Imperative: From Novelty to Necessity

For businesses, the release confirms that the AI upgrade cycle is accelerating. Companies using older models risk being left behind not just in feature set, but in fundamental reliability. When GPT-5.3 Instant provides an answer in real time, correctly grounded, and conversationally appropriate, it establishes a new expectation for productivity tools.

This drives the urgent need for businesses to audit their current LLM deployments and plan migration strategies. As highlighted in discussions on **"The business implications of reducing LLM hallucination,"** the financial and regulatory risks associated with unreliable AI are significant deterrents to scaling. Models that actively mitigate hallucination reduce enterprise legal exposure and build consumer confidence simultaneously.

The Future User Experience: Subtle, Pervasive Intelligence

Imagine using your digital assistant to plan a complex trip. In the past, you might have had to ask three separate questions: "What's the weather in Paris next week?" (Wait), "Find me three highly-rated hotels near the Louvre." (Wait), "Compare the pros and cons of renting a car vs. using public transport based on those hotel locations."

With a model emphasizing conversational fluency and speed, the exchange becomes:

User: "Plan my trip to Paris next week. I need a moderately priced hotel near the Louvre, and tell me if a car makes sense."

GPT-5.3 Instant (Instantly): *[Generates contextually relevant summaries of weather, hotels, and a concise pro/con analysis of transport options based on current local traffic data.]*

This transformation means AI stops being a feature you seek out and becomes the ambient intelligence layer underpinning every digital interaction.

Actionable Insights for Navigating the New Normal

For stakeholders looking to capitalize on this shift toward reliable, fast AI, here are three actionable areas:

- Prioritize RAG Quality Over Model Size: Bigger models are often better, but the improvement here appears to stem from better *integration* with external data. Businesses must invest heavily in cleaning, indexing, and structuring their proprietary data sources for optimal RAG performance. A fast model is only as good as the data it grounds itself on.

- Demand Benchmarks on Real-Time Tasks: When evaluating new models, do not accept marketing claims on latency alone. Demand performance data on complex, multi-step tasks that require immediate web grounding. The true measure of "Instant" is its performance under the strain of real-world complexity.

- Design for Agentic Flow: Begin redesigning user interfaces and operational workflows to allow for multi-turn, interrupted conversations. The goal should be to build systems that don't just answer questions but manage complex, ongoing tasks where the AI is a continuous partner, not a one-time tool.
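For the benchmarking recommendation above, a minimal sketch of a latency harness might look like the following. The `benchmark` and `percentile` helpers are hypothetical, and `task` stands in for whatever end-to-end, multi-step request a team actually wants to evaluate. Reporting tail percentiles rather than an average is the point: a model that is usually fast but occasionally slow will show it at p95.

```python
import time
from typing import Callable, Dict, List


def percentile(samples: List[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("percentile() needs at least one sample")
    ordered = sorted(samples)
    # Integer ceiling of pct * n / 100, which avoids floating-point rounding.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


def benchmark(task: Callable[[], None], runs: int = 20) -> Dict[str, float]:
    """Time repeated end-to-end runs of `task` and summarize the distribution."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        task()
        latencies.append(time.perf_counter() - start)
    return {
        "p50_s": percentile(latencies, 50),
        "p95_s": percentile(latencies, 95),
        "max_s": max(latencies),
    }


if __name__ == "__main__":
    # Placeholder task; replace with a real multi-step request to the model under test.
    print(benchmark(lambda: time.sleep(0.01), runs=10))
```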

The release of GPT-5.3 Instant is a clear signal that the AI arms race is maturing. The focus has shifted from simply building the biggest brain to engineering the most reliable, fastest, and most context-aware utility. This pivot from raw generation to trustworthy integration is the necessary prerequisite for AI to truly become the invisible, indispensable infrastructure of the modern world.