The $20 Billion AI Contradiction: How Anthropic’s Scale Defines the Future of Foundation Models

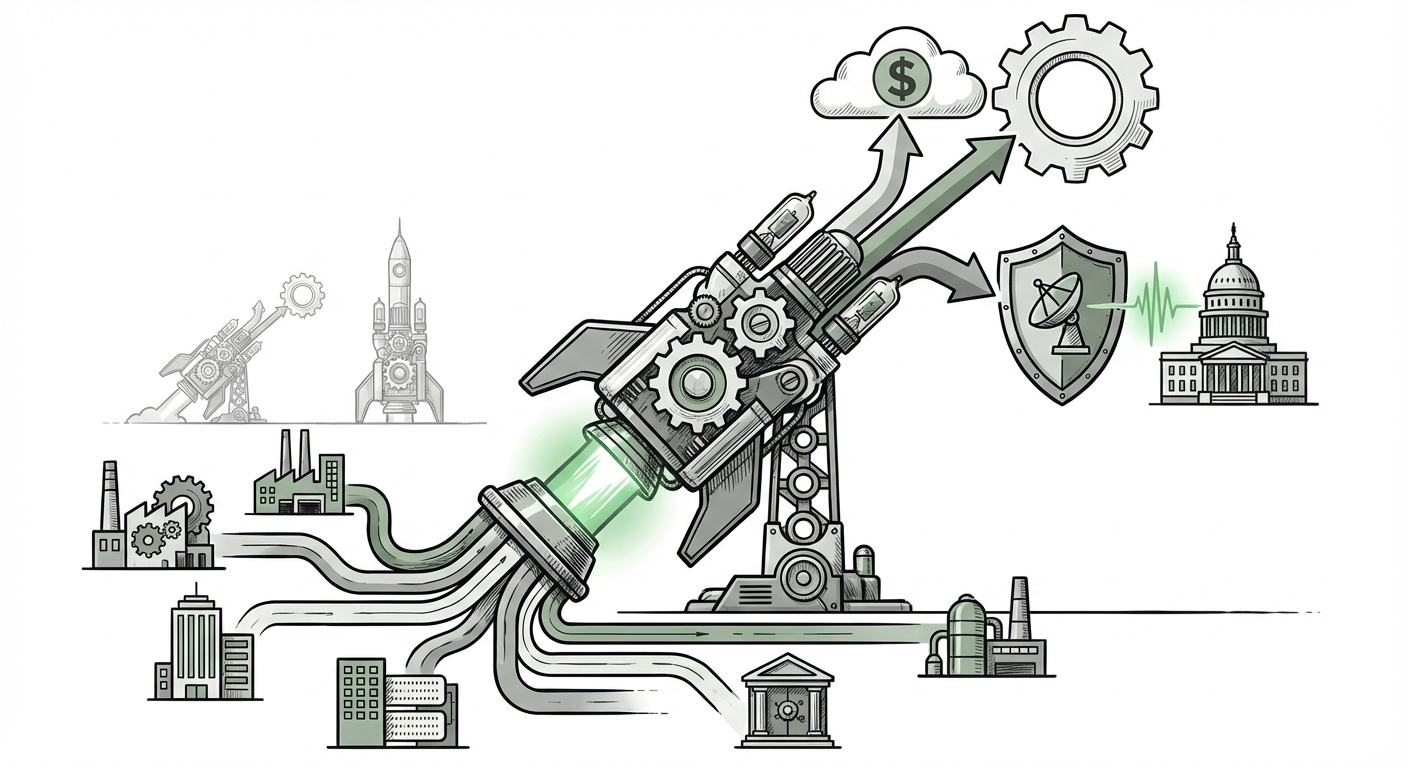

The technology sector is currently witnessing a transformation driven by generative artificial intelligence that rivals the early days of the internet. Amid the dizzying pace of model releases and capability leaps, one metric stands out as a true indicator of market maturation: revenue. A recent report suggests that Anthropic is nearing a $20 billion annual revenue run rate. If accurate, that figure is a powerful signal: it indicates that the abstract concept of foundation models has translated into concrete, massive commercial value.

However, this commercial success is not emerging in a vacuum. Anthropic is also navigating intense scrutiny regarding ethics, safety, and geopolitical alignment, epitomized by its reported friction with defense bodies like the Pentagon. This central tension, unprecedented commercial success set against deep philosophical and governmental friction, is the defining characteristic of the current AI landscape. To understand the future, we must dissect how this scale was achieved, who is fueling it, and what constraints are being placed on its trajectory.

The Commercial Conquest: Validating Scale in a Crowded Market

For AI models to be considered truly transformative, they must move beyond research labs and into the balance sheets of global corporations. Anthropic’s trajectory suggests they have successfully made this transition.

In any **AI foundation model market share comparison**, this revenue figure puts the "Big Three" race in context. The race isn't just about having the best model; it's about capturing the highest-value contracts. Anthropic, known for its safety-focused Claude models, appears to be showing that enterprise customers are willing to pay a premium for perceived reliability and responsible AI guardrails. The implication for the broader market is clear: **trust is a purchasable commodity.**

This supports the investment thesis behind foundation models more broadly. If one major player can command a multibillion-dollar run rate, it suggests the entire Total Addressable Market (TAM) for LLM services is vastly larger than previously estimated. For investors, this isn't just growth; it points to a recurring revenue stream derived from usage of a computational service rather than from one-off software licenses. Whether that revenue is also high-margin depends on compute costs.

What This Means for Competition:

The revenue race forces competitors like OpenAI and Google to accelerate their enterprise offerings. It shifts the narrative from pure performance benchmarks (like MMLU scores) to total customer value delivered, encompassing uptime, customization, and data privacy assurances. The winners will be those who can manage this complex triad of performance, price, and trust.

The Engine of Growth: Deep Enterprise Adoption

How does an organization jump to a $20 billion run rate so quickly? The answer lies in **enterprise adoption of generative AI large language models**. On this reading, the revenue isn't driven primarily by consumer chatbots; it is powered by mission-critical workflows inside Fortune 500 companies.

Enterprise adoption means moving past the "curiosity phase" (where small teams play with public APIs) into "production scaling." This involves integrating LLMs into core systems:

- Customer Service Augmentation: Handling complex tier-one support queries autonomously.

- Code Generation and Review: Integrating AI assistants directly into software development pipelines.

- Knowledge Management: Creating proprietary internal search and synthesis engines trained on company documents.

For CIOs and CTOs, these applications offer immediate, measurable returns on investment through efficiency gains. This is the most practical implication: AI is no longer an experimental tool; it is becoming foundational infrastructure. When companies commit to using a model like Claude for their internal data analysis, they build it into workflows that are costly to switch away from. That commits them to sustained API usage and creates the predictable revenue that underpins Anthropic’s valuation.

This trend suggests a future where specialized, commercially viable LLMs carve out specific enterprise niches, rather than one monolithic model dominating all tasks. Companies are demanding models optimized for legal text analysis, financial forecasting, or medical documentation—areas where Anthropic’s emphasis on safety provides a competitive edge over less constrained alternatives.

The Inevitable Friction: Safety, Ethics, and Geopolitics

The rapid commercialization of powerful general-purpose AI naturally collides with issues of national security and global stability. The mention of the "Pentagon feud" highlights the central ethical dilemma facing leading AI labs today:

How much power should be withheld from, or constrained for, powerful government and defense entities?

Anthropic has historically positioned itself as the "safety-first" alternative, building its public identity around responsible deployment. However, defense and intelligence agencies represent massive, high-value, and strategically important customers. When safety guardrails designed to prevent misuse or the generation of harmful content clash with a military customer’s need for raw capability or operational deployment, the result is friction.

This tension dictates the future regulatory landscape. If leading commercial entities cannot align with their own national security apparatuses, it creates a governance vacuum. It forces policymakers to decide whether AI development should be driven primarily by commercial demand (which favors speed and open access) or by state security interests (which favor control and restricted access). This struggle directly influences export controls, technology transfer policies, and the overall perceived trustworthiness of the technology in sensitive sectors.

Implications for AI Governance:

We are witnessing the early stages of AI alignment not just in the lab (training models to follow instructions), but in the boardroom (managing stakeholder expectations). The path forward for AI providers will involve creating tiered offerings: highly constrained versions for public use, moderately safe versions for general enterprise, and potentially, specialized, less-constrained versions available only to vetted government or research partners, subject to intense auditing.

The Hidden Constraint: The Infrastructure Cost Conundrum

A $20 billion revenue run rate is spectacular, but it must be viewed through the lens of cost. The foundation model industry is characterized by astronomical capital expenditure, primarily centered on sourcing and powering advanced GPUs (like those from Nvidia).

Analyzing the **cost of training and inference for leading LLMs** reveals the key barrier to entry and sustainability. Training a frontier model costs hundreds of millions, potentially billions, of dollars. More persistently, *inference*, the work of actually running the model every time a user sends a prompt, is a recurring, massive expense.

If Anthropic’s annualized revenue is near $20 billion, it is processing an enormous volume of tokens for paying customers, likely trillions or more. The operational expenditure (OpEx) tied to compute time is correspondingly large. This forces two major strategic considerations:

- Efficiency Race: The next frontier isn't just better models, but *cheaper* models. Companies that can halve their inference costs through specialized chip design, better quantization techniques, or improved algorithmic efficiency will secure a dramatic advantage in profitability, even if their revenue only matches that of competitors.

- Capital Demands: Sustaining a $20 billion revenue stream requires continued, reliable access to the most advanced hardware. This ties the success of AI leaders inextricably to the supply chains and pricing power of hardware manufacturers.

For businesses utilizing these models, understanding this dynamic is crucial. If the market leaders struggle to convert high revenue into high *profit* due to compute burdens, they may be forced to raise API prices, impacting the long-term ROI projections for enterprise AI projects.
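The relationship between revenue, token volume, and compute cost described above can be made concrete with a small back-of-the-envelope sketch. Every input below except the $20 billion run rate cited in this article is a hypothetical placeholder: the blended price and the inference cost per million tokens are assumptions chosen only to illustrate the arithmetic, not reported figures for Anthropic or any other vendor.

```python
# Back-of-the-envelope unit economics. All inputs except REVENUE are
# illustrative assumptions, not reported figures.

def implied_tokens(annual_revenue_usd: float, price_per_million_usd: float) -> float:
    """Tokens per year implied by revenue at a blended price per million tokens."""
    return annual_revenue_usd / price_per_million_usd * 1_000_000


def gross_margin(price_per_million_usd: float, cost_per_million_usd: float) -> float:
    """Fraction of each revenue dollar left after inference compute cost."""
    return (price_per_million_usd - cost_per_million_usd) / price_per_million_usd


REVENUE = 20e9   # annual run rate cited in the article (USD)
PRICE = 10.0     # hypothetical blended price, USD per million tokens
COST = 6.0       # hypothetical inference cost, USD per million tokens

tokens = implied_tokens(REVENUE, PRICE)
baseline = gross_margin(PRICE, COST)
halved = gross_margin(PRICE, COST / 2)  # the "halve inference costs" scenario

print(f"implied tokens per year: {tokens:.2e}")
print(f"gross margin at baseline cost: {baseline:.0%}")
print(f"gross margin with halved cost: {halved:.0%}")
```

Under these assumed inputs, revenue stays the same while halving the compute cost moves the margin from 40% to 70%. That is the sense in which efficiency, rather than revenue alone, determines profitability and future API pricing pressure.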

Actionable Insights for the Road Ahead

The current state of affairs—massive revenue meeting complex constraints—demands strategic navigation from both builders and consumers of AI technology.

For AI Developers and Startups:

- Focus on Unit Economics: Do not chase vanity metrics (parameter count, public attention) if they destroy your operational margin. Aggressively invest in inference optimization. The path to sustained success runs directly through compute efficiency.

- Strategic Alignment: Clearly define your safety boundaries and stick to them. If you choose a safety-forward posture (like Anthropic), market it as a premium feature to enterprises that prioritize compliance and risk mitigation over raw, unvetted capability.

For Enterprise Leaders (CIOs/CTOs):

- Diversify Your LLM Portfolio: Relying solely on one vendor, regardless of their current revenue success, is a massive operational risk. The geopolitical and ethical frictions that slow down one vendor can instantly disrupt your core workflows. Plan for multi-model integration.

- Build an AI Governance Framework Now: The "Pentagon feud" is a microcosm of the compliance challenges awaiting all large firms. Establish clear internal policies for data security, intellectual property handling, and acceptable model output *before* scaling deployment across departments.
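One way to read the "multi-model integration" advice is as a thin routing layer that tries vendors in order of preference and falls back when one fails. The sketch below is a minimal illustration under stated assumptions: `complete_with_fallback`, `primary_vendor`, and `secondary_vendor` are hypothetical names, and the two vendor functions are stand-ins that simulate an outage and a success rather than calls to any real SDK.

```python
from typing import Callable, Sequence

# A provider is any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider has raised an error."""


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try each provider in order; return (name, text) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # real code would catch vendor-specific errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real vendor clients.
def primary_vendor(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def secondary_vendor(prompt: str) -> str:
    return f"[secondary] summary of: {prompt}"


name, text = complete_with_fallback(
    "Q3 contract terms",
    [("primary", primary_vendor), ("secondary", secondary_vendor)],
)
print(name, text)
```

Keeping each vendor behind the same plain callable interface is a deliberate choice here: application code depends only on the router, so swapping or reordering vendors becomes a configuration change rather than a rewrite.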

Conclusion: The Dual Mandate of Modern AI

Anthropic’s reported run rate of nearly $20 billion is not just a success story; it is a thesis statement for the current era of artificial intelligence. It suggests that the market is willing to pay handsomely for powerful, adaptable intelligence.

However, the story is incomplete without acknowledging the countervailing forces: the fierce competition for market share, the intense capital requirements of infrastructure, and the necessary, ongoing ideological battles over safety and state power. The future of AI will not be decided by the fastest model, but by the company that best manages this dual mandate: achieving maximal commercial scale while successfully navigating the deep ethical, political, and economic constraints that come with wielding world-changing technology.