Anthropic's $20B Leap: Decoding the Future of Enterprise AI and Safety Conflicts

The pace of innovation in artificial intelligence is no longer measured in years, but in quarterly earnings reports. A recent report that Anthropic is approaching a **$20 billion annual revenue run rate** is more than a high-water mark for one company; it is a strong signal of the commercial maturation and financial gravity of frontier AI development worldwide.

This explosive growth, however, comes with complications, most notably friction with government bodies such as the Pentagon, a dispute widely described as the "Pentagon feud." To understand what this means for the future of AI, we must look beyond the headline number: at the structural forces underpinning this commercial success, and at the ethical and security hurdles that remain.

The Hyper-Commercialization of Intelligence: Beyond the Hype Cycle

For years, frontier AI research operated largely within academic and well-funded research labs. Today, the focus has decisively shifted to utility and scale. A $20 billion run rate, an annualized figure extrapolated from current revenue, places Anthropic firmly in the upper echelon of high-growth technology companies. This is not merely a successful startup; it reflects a fundamental shift in which highly capable language models (like Claude) are treated as essential, high-volume enterprise utilities.
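A run rate is simple arithmetic: one recent period's revenue multiplied by the number of such periods in a year. The sketch below assumes that convention; the report behind the $20 billion figure does not say which period was annualized, and the function name and inputs are hypothetical.

```python
def annualized_run_rate(period_revenue: float, periods_per_year: int = 12) -> float:
    """Extrapolate one period's revenue to a full year (12 for monthly, 4 for quarterly)."""
    return period_revenue * periods_per_year


# Hypothetical inputs, chosen only to show what a $20B run rate implies.
monthly_revenue = 20e9 / 12   # roughly $1.67B booked in a single month
quarterly_revenue = 5e9       # or $5B booked in a single quarter

print(f"${annualized_run_rate(monthly_revenue) / 1e9:.1f}B")       # prints $20.0B
print(f"${annualized_run_rate(quarterly_revenue, 4) / 1e9:.1f}B")  # prints $20.0B
```

Because a run rate extrapolates a single period, it amplifies both recent growth and short-term spikes; it is a snapshot of momentum, not audited annual revenue.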

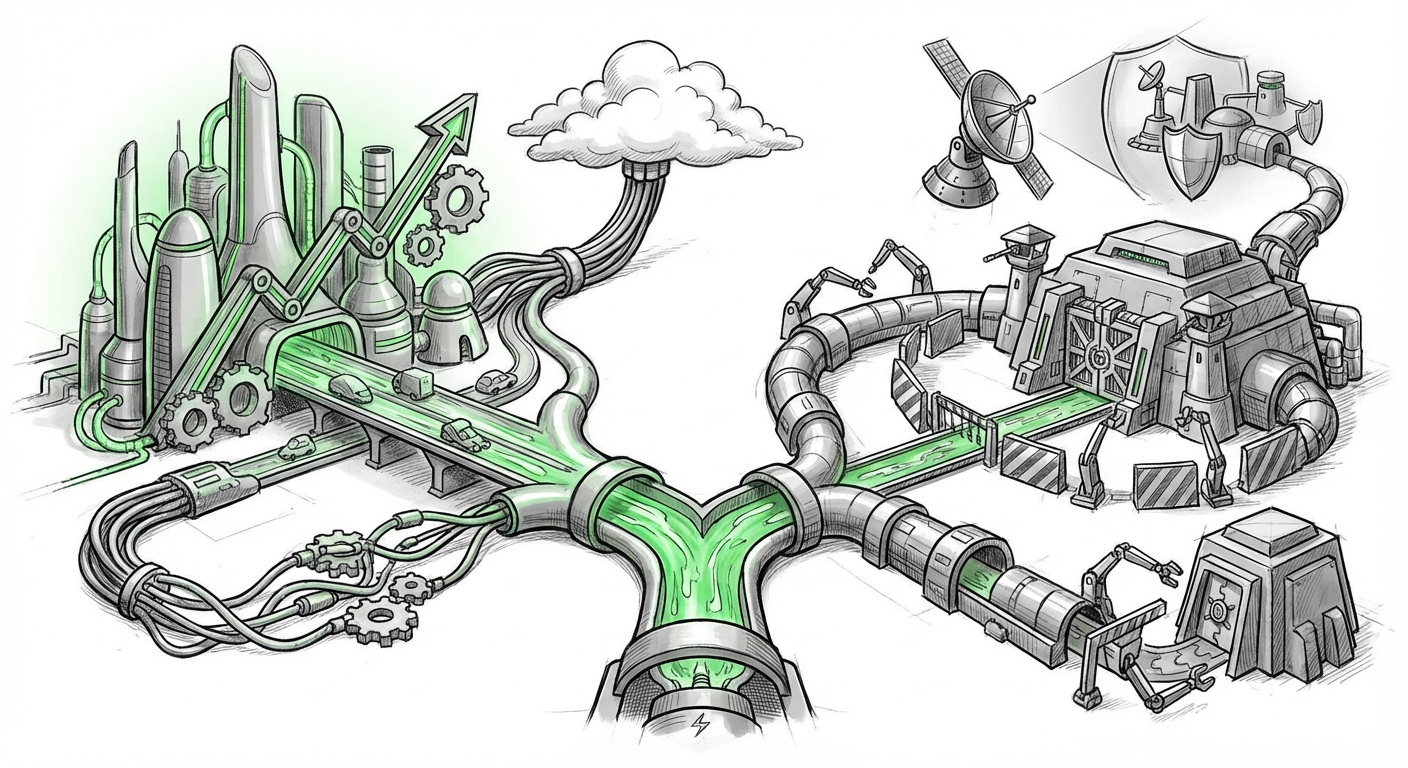

To put this figure in context, we must examine the market mechanics behind it. Our research into related trends suggests this revenue is closely linked to the **AI infrastructure spending of 2024**. Frontier labs like Anthropic have relied heavily on compute leased from giants such as Amazon Web Services (AWS) and Google Cloud Platform (GCP) rather than on data centers of their own. As a result, Anthropic's revenue both depends on and helps drive the massive capital expenditures of these cloud providers.

This dependency creates an interesting ecosystem:

- Cloud Leverage: AWS and Google are not just suppliers; they are strategic partners. Their large investments in Anthropic are bets that preferred access to cutting-edge, safety-aligned models will lock in future AI workloads and cement their position in the cloud market.

- The Compute Ceiling: Sustaining and growing beyond $20 billion hinges largely on securing cutting-edge accelerators, such as NVIDIA's H100 and B200 GPUs and cloud providers' custom chips like Google's TPUs and AWS Trainium. This scarcity means that the future leaders of AI are increasingly defined by their **capital access** and cloud partnership terms, not solely by algorithmic novelty.

For business leaders, the implication is clear: AI adoption is no longer optional; it is a core component of infrastructure strategy. If your competitors are rapidly integrating models backed by hundreds of millions of dollars in annual compute spend, your own technology budget must treat AI as a recurring infrastructure cost.

The Inevitable Friction: Safety, Ethics, and the State

Anthropic built its reputation, and much of its commercial appeal, around a commitment to "Constitutional AI," a training approach that uses an explicit set of written principles to steer models toward safer, more reliable behavior. This focus on safety has made Anthropic an attractive partner for risk-averse enterprises. However, the same framework creates significant friction with organizations whose missions prioritize speed, capability, and operational security above all else, such as national defense agencies.

The reported "Pentagon feud" is a microcosm of a much larger societal debate over the deployment of frontier AI. A look at **DoD AI strategy and safety concerns** reveals several built-in tensions:

- The Pace Mismatch: The defense sector operates on multi-year procurement cycles governed by security clearances and rigorous testing, while generative AI capabilities change from quarter to quarter. This structural mismatch slows adoption.

- The Alignment Problem: Defense requires models capable of objective, powerful analysis, sometimes involving ethically gray scenarios. Safety-focused models, designed to refuse harmful prompts, may be seen as liabilities in tactical or intelligence contexts where clear, direct answers are paramount.

- Data Sovereignty: Governments are extremely reluctant to run mission-critical tasks on models whose weights or training data might be partially controlled by outside commercial entities (like Amazon or Google).

This tension suggests a future where the AI market splits:

Sector A (Commercial): Driven by performance, cost-efficiency, and speed of deployment, accepting manageable levels of risk defined by commercial liability standards.

Sector B (Government/Defense): Requiring highly customized, often proprietary, or even air-gapped models built on foundations that can be audited and controlled internally, even if these models are slightly less capable than the commercial cutting edge.

Enterprise Adoption: When Safety Becomes a Selling Point

If the Pentagon is hesitant, why is Anthropic generating such massive commercial revenue? The answer lies in its **enterprise customer base**.

For industries like finance, healthcare, legal services, and customer relations, reputation and regulatory compliance are existential concerns. An unvetted model that "hallucinates" (produces confident but false output) can cost a company millions in fines or irreparably damage customer trust. Anthropic's deliberate positioning as the "responsible AI lab" translates into market share.

When enterprises vet AI solutions, they are looking for two things:

- Predictability: Claude’s structured output and refusal mechanisms make its behavior easier to specify, test, and write into Service Level Agreements (SLAs) than that of less constrained competitors.

- Brand Alignment: Deploying technology associated with high ethical standards reduces the reputational risk of a public AI failure.
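One way predictability shows up in practice is a strict output contract: the application asks the model for JSON in a fixed shape and rejects anything else before it reaches downstream systems. The following is a minimal sketch using only Python's standard library; the field names (`decision`, `confidence`) and the allowed labels are hypothetical, and it does not call any vendor API.

```python
import json

# Hypothetical contract: the model must answer with one of these labels
# plus a confidence score between 0 and 1.
ALLOWED_DECISIONS = {"approve", "escalate", "reject"}


def parse_model_output(raw: str) -> dict:
    """Validate a model reply against the contract; raise ValueError on any violation."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")

    decision = data.get("decision")
    if not isinstance(decision, str) or decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unexpected decision: {decision!r}")

    confidence = data.get("confidence")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError(f"confidence must be a number: {confidence!r}")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence out of range: {confidence!r}")

    return {"decision": decision, "confidence": float(confidence)}


print(parse_model_output('{"decision": "escalate", "confidence": 0.82}'))
```

A rejected reply can be retried or routed to a human reviewer; either way, malformed output never silently enters a business process.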

This phenomenon shows that for a significant portion of the global economy, safety is not a barrier to adoption; it is a premium feature driving adoption. Businesses are willing to pay more for models they trust not to compromise their core operations or regulatory standing.

Future Implications: The Bifurcation of AI Development

The dual reality of Anthropic's financial success and its regulatory friction paints a clear picture of where AI technology is headed over the next three to five years.

1. Infrastructure Wars Define Leadership

The cloud providers (AWS, GCP, Azure) are the new gatekeepers of AI. Their large investment terms mean that future revenue sharing and compute access will be dictated by exclusivity agreements. Smaller startups will struggle to find the massive, subsidized compute needed to train the next generation of models without binding themselves to one of the hyperscalers. This centralizes control over the deployment layer of AI.

2. Specialization Over Generalization

The days of a single general-purpose model serving all needs are waning. As models become commoditized through APIs, value shifts to specialized fine-tuning and domain expertise. We will see a surge in "AI Micro-Giants": companies with deep vertical knowledge that use general-purpose models as a base but differentiate through proprietary data and safety overlays tailored to specific industries (e.g., a model fine-tuned specifically for Japanese maritime law).

3. The Regulatory Chasm Widens

The gap between commercially acceptable risk and governmentally required security will continue to widen. We anticipate the emergence of:

- "GovCloud" Models: AI stacks physically isolated and vetted by federal agencies, likely trained on smaller, curated datasets rather than the entirety of the open internet.

- Auditing as a Service: New compliance industries will focus entirely on model transparency, bias testing, and adversarial robustness for enterprise clients who cannot afford the reputational hit of a major AI failure.

Actionable Insights for Business and Society

For those looking to navigate this evolving landscape, strategic action is required now:

For Business Leaders: Optimize Your Compute Strategy

Do not view your AI supplier merely as a vendor; view it as a long-term infrastructure partner. Understand the terms of your cloud agreement and how they affect your access to frontier models. Actionable Insight: Begin allocating capital not just for model API usage, but for the dedicated, high-throughput compute resources required to run private, fine-tuned versions of models on your own data, even if you start small.
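A first-pass way to size that allocation is a break-even estimate: at what monthly token volume does renting dedicated GPUs cost the same as paying per token through an API? The sketch below assumes flat per-token API pricing and a flat hourly GPU rate. Every price in it is a placeholder, not a real quote, and it ignores throughput limits, engineering staff, and the fact that many frontier models cannot be self-hosted at all.

```python
HOURS_PER_MONTH = 730  # 8,760 hours per year / 12, rounded


def monthly_api_cost(tokens_per_month: float, usd_per_million_tokens: float) -> float:
    """Pay-as-you-go cost for a month of API usage."""
    return tokens_per_month / 1_000_000 * usd_per_million_tokens


def monthly_dedicated_cost(gpu_count: int, usd_per_gpu_hour: float) -> float:
    """Cost of keeping a fixed pool of rented GPUs running all month."""
    return gpu_count * usd_per_gpu_hour * HOURS_PER_MONTH


def breakeven_tokens(gpu_count: int, usd_per_gpu_hour: float, usd_per_million_tokens: float) -> float:
    """Monthly token volume at which API spend equals the dedicated-compute bill."""
    return monthly_dedicated_cost(gpu_count, usd_per_gpu_hour) / usd_per_million_tokens * 1_000_000


# Placeholder figures for illustration only: 8 GPUs at $4/hour vs. $10 per million tokens.
threshold = breakeven_tokens(gpu_count=8, usd_per_gpu_hour=4.0, usd_per_million_tokens=10.0)
print(f"Break-even at about {threshold / 1e9:.2f}B tokens per month")
```

Above the threshold, dedicated capacity starts to pay for itself, provided the hardware can actually serve that volume; below it, API usage is cheaper.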

For Technologists and Engineers: Master the Governance Layer

Building the model is one challenge; governing its output is another. Proficiency in prompt engineering is quickly being superseded by proficiency in guardrail engineering. Techniques such as Retrieval-Augmented Generation (RAG), input/output filtering, and verification with smaller models will be crucial for deploying reliable systems.
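The pattern behind these techniques can be sketched as a thin wrapper around any model call: filter the input, generate, then let a separate check approve the output. This is a minimal sketch, not any vendor's guardrail API. `generate` and `verify` are hypothetical stand-ins for a production model client and a smaller verification model, and the single SSN-shaped regex is only an example of an input rule. In a RAG system, retrieval would happen inside `generate`, and `verify` could check that the answer is supported by the retrieved passages.

```python
import re
from typing import Callable

# Hypothetical input rule: block prompts containing something shaped like a US SSN.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
FALLBACK = "I can't help with that request."


def guarded_call(
    prompt: str,
    generate: Callable[[str], str],
    verify: Callable[[str, str], bool],
    fallback: str = FALLBACK,
) -> str:
    """Run input filtering, generation, and output verification in sequence."""
    # 1. Input filter: refuse prompts that contain sensitive identifiers.
    if SSN_PATTERN.search(prompt):
        return fallback
    # 2. Generation: any model client wrapped as a str -> str function.
    answer = generate(prompt)
    # 3. Output check: a second, cheaper model (or rule) approves or rejects the answer.
    if not verify(prompt, answer):
        return fallback
    return answer


# Stub components standing in for real models (hypothetical).
def fake_model(prompt: str) -> str:
    return "Refunds are processed within 5 business days."


def fake_verifier(prompt: str, answer: str) -> bool:
    return "refund" in answer.lower()


print(guarded_call("How long do refunds take?", fake_model, fake_verifier))
print(guarded_call("My SSN is 123-45-6789", fake_model, fake_verifier))
```

Keeping each stage a plain function makes it easy to swap in a stricter filter or a different verifier without touching the rest of the pipeline.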

For Policy Makers and Ethicists: Define "Acceptable Risk" Collaboratively

The current friction between safety labs and defense agencies must be resolved through clear, risk-tiered frameworks. Instead of broad prohibitions, policy should focus on defining acceptable risk thresholds for specific domains (e.g., low-risk customer service vs. high-risk weapons guidance). This requires continuous dialogue with the labs currently building the technology.
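A risk-tiered framework is, at its core, a lookup from domain to tier and from tier to required controls. The sketch below shows that structure; the tiers, domain assignments, and controls are all hypothetical and chosen only to mirror the examples in the text.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    ELEVATED = 2
    HIGH = 3


# Hypothetical domain assignments; a real framework would be set by policy makers.
DOMAIN_TIERS = {
    "customer_service": RiskTier.LOW,
    "medical_triage": RiskTier.ELEVATED,
    "weapons_guidance": RiskTier.HIGH,
}

# Hypothetical controls introduced at each tier; higher tiers inherit lower-tier controls.
CONTROLS = {
    RiskTier.LOW: ["usage logging"],
    RiskTier.ELEVATED: ["human review of outputs", "bias testing"],
    RiskTier.HIGH: ["independent audit", "air-gapped deployment"],
}


def required_controls(domain: str) -> list[str]:
    """Return every control required at or below the domain's tier."""
    # Unknown domains default to the strictest tier, so policy gaps fail closed.
    tier = DOMAIN_TIERS.get(domain, RiskTier.HIGH)
    return [control for t in RiskTier if t <= tier for control in CONTROLS[t]]


print(required_controls("customer_service"))
print(required_controls("weapons_guidance"))
```

Defaulting unlisted domains to the highest tier is a deliberate design choice: a new use case must be explicitly classified before it earns lighter oversight.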

Anthropic’s journey to a potential $20 billion run rate signals that the AI revolution has moved past proof-of-concept and entered the industrial age. The next phase will be defined not by who can build the smartest model, but by who can build the most trustworthy, efficiently accessible, and strategically aligned model for the specific needs of commerce and governance.