The $20 Billion AI Nexus: How Anthropic’s Surge Redefines Frontier Model Commercialization and Safety

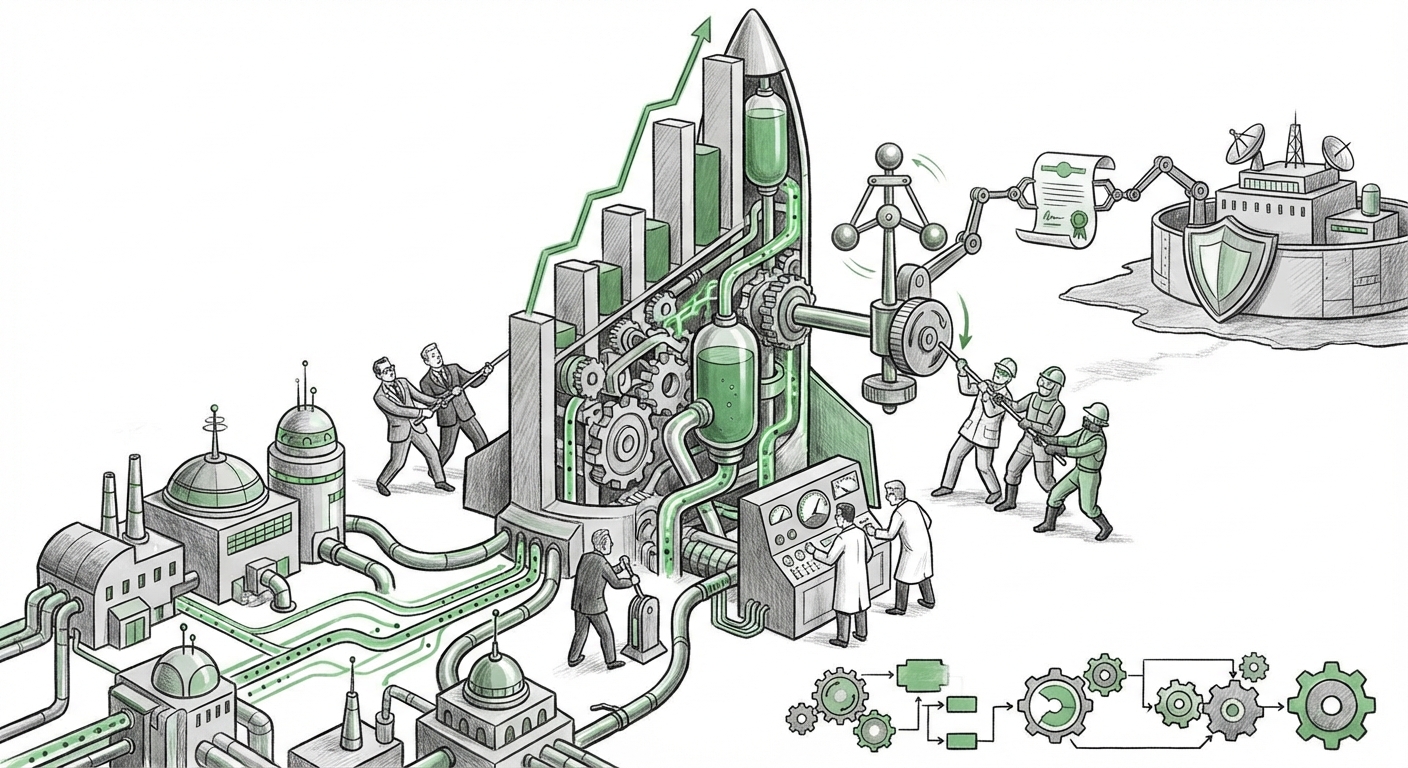

The technology sector is witnessing a gold rush fueled by artificial intelligence, but recent reports of Anthropic’s projected $20 billion annual revenue run rate suggest something more profound than simple hype. This figure, reported while the company navigates a high-stakes ethical dispute dubbed the "Pentagon feud," signals a critical inflection point: advanced, safety-conscious AI is not just scientifically feasible, it is becoming economically indispensable.

For the general observer, a $20 billion figure might seem abstract. A run rate annualizes recent revenue, so put simply, a figure of this size suggests that thousands of major businesses worldwide rely on Anthropic’s Claude models every day for core operations. This isn't a side project; this is an established utility. This analysis dives into what this commercial velocity means for the competitive landscape, the future of AI development, and the ongoing tug-of-war between speed and safety.

The Market Validation: Beyond the Hype Cycle

To appreciate the scale of Anthropic’s achievement, we must first look outward. Is this growth unique to one company, or does it reflect a sector-wide explosion? Forecasts of generative AI market growth suggest that Anthropic is riding a tidal wave, not creating a ripple.

The AI infrastructure and software market is projected to grow rapidly over the next five years. This suggests that the foundational technology has moved past the experimental phase and into the infrastructure layer of the global economy. When analysts forecast multi-trillion-dollar market potential for AI, a $20 billion revenue run rate for a single model provider speaks volumes about the immediate enterprise appetite for powerful LLMs.

The Competitive Clash: Quality Over Quantity

In the world of frontier AI, the primary rivalry often centers on OpenAI (backed by Microsoft) and Anthropic. Anthropic’s success suggests that its distinct product philosophy—often centered around Constitutional AI and enhanced safety guardrails—is finding fertile ground with paying customers.

When we look at detailed Claude 3 vs. GPT-4 performance benchmarks, the narrative shifts away from simply "who is bigger" to "who is better suited for specific tasks." If Anthropic’s models are proving superior in areas like complex reasoning, handling massive context windows, or maintaining lower hallucination rates in regulated industries, their revenue surge is simply the market rewarding superior technical execution for enterprise needs.

For CTOs and software architects, the choice between models is now less about brand recognition and more about the cost/benefit analysis of API performance metrics. Anthropic has clearly convinced a significant segment of the market that its models deliver superior value for mission-critical applications.

The Engine of Revenue: Deep Enterprise Integration

How does a company that started with a strong ethical focus translate that into a run rate of nearly $20 billion? The answer lies in deep, sticky enterprise adoption of Anthropic’s Claude models.

This scale of revenue rarely comes from small-time hobbyists or entry-level subscriptions. It is built on long-term Service Level Agreements (SLAs), high-volume API commitments, and integration into mission-critical internal workflows—from automated legal drafting and financial compliance checking to coding assistance for massive software projects. If a major bank or pharmaceutical company moves its core data analysis onto the Claude API, the switching cost becomes enormous.

This transition signifies that businesses are moving beyond simple chatbot experiments. They are embedding these models directly into the "plumbing" of their operations. This revenue is durable because it is tied to efficiency gains that directly impact the bottom line.

Practical Implications for Business Leaders:

- ROI is King: Businesses must move quickly to quantify the ROI of LLM deployments. High-cost API usage is only justified by measurable improvements in output quality or speed.

- Dual Sourcing Strategy: Relying on a single AI provider is risky. Companies should actively benchmark and integrate models from providers like Anthropic, OpenAI, and Google to ensure resilience and competitive pricing.

- Data Governance: Enterprises adopting these models must have robust data governance policies in place, especially concerning proprietary data sent through third-party APIs.
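The "ROI is King" point above can be made concrete with a small calculation. What follows is a minimal sketch, not a standard method: the `LlmDeployment` class, its field names, and every dollar and hour figure are hypothetical placeholders, and the benefit is assumed to come only from staff hours saved.

```python
from dataclasses import dataclass


@dataclass
class LlmDeployment:
    """Monthly figures for one LLM-backed workflow (all values hypothetical)."""

    api_cost_usd: float          # monthly API spend
    integration_cost_usd: float  # monthly share of engineering and maintenance
    hours_saved: float           # staff hours saved per month
    hourly_rate_usd: float       # loaded cost of one staff hour

    def monthly_benefit(self) -> float:
        # Assumption: benefit is measured purely as the value of hours saved.
        return self.hours_saved * self.hourly_rate_usd

    def monthly_cost(self) -> float:
        return self.api_cost_usd + self.integration_cost_usd

    def roi(self) -> float:
        """Return ROI as a fraction: (benefit - cost) / cost."""
        cost = self.monthly_cost()
        if cost <= 0:
            raise ValueError("total cost must be positive")
        return (self.monthly_benefit() - cost) / cost


# Illustrative numbers only; replace them with measured values.
example = LlmDeployment(
    api_cost_usd=12_000.0,
    integration_cost_usd=8_000.0,
    hours_saved=500.0,
    hourly_rate_usd=60.0,
)
print(f"ROI: {example.roi():.0%}")  # prints "ROI: 50%"
```

In practice the hard part is measuring the inputs, especially hours saved and quality changes, rather than the arithmetic itself.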

The Uncomfortable Truth: Safety, Geopolitics, and the Defense Divide

Perhaps the most fascinating element of Anthropic’s current success is its navigation of the ethical minefield, starkly highlighted by reports concerning the "Pentagon feud."

Anthropic was founded by former OpenAI researchers who prioritize AI alignment and safety, an approach the company frames as 'responsible scaling.' This focus puts them in direct conflict with the breakneck speed demanded by defense and intelligence agencies seeking immediate deployment of powerful tools. The reported controversy over Anthropic’s contract negotiations with the Department of Defense (DoD) reveals the central tension defining the next decade of AI:

The Speed vs. Safety Dilemma: On one side, governments and defense contractors demand the fastest, most capable AI to maintain technological superiority. On the other, companies like Anthropic argue that deploying systems approaching Artificial General Intelligence (AGI) requires rigorous, perhaps slow, testing to prevent catastrophic unintended consequences.

This friction, while seemingly slowing down government adoption, paradoxically builds consumer and enterprise trust. For businesses operating in highly regulated fields (like finance or healthcare), Anthropic’s perceived commitment to safety acts as a major differentiator. The company is effectively saying: "We build powerful tools, but we are willing to pause deployment until we are confident in their inherent safety alignment." This reputation may secure long-term, high-value enterprise contracts even if Anthropic temporarily loses out on specific defense budgets.

Future Implications: What This Revenue Spike Signifies

The trajectory toward a near-$20 billion revenue run rate for a single frontier AI company is not just a market trend; it is a structural shift in how technology companies are valued and built.

1. Acceleration of Model Specialization

We are moving past the era of a single monolithic "best" model. Anthropic’s success shows that customization and alignment matter. Future development will see providers focusing heavily on creating highly tuned versions of their base models for specific verticals (e.g., a "Claude for Pharma Research" or a "Claude for Financial Auditing"). Revenue will be driven by depth of integration, not just general capability.

2. The Rising Cost of Compute and Talent

Achieving this level of revenue requires immense investment in computing power (GPUs) and the world's most specialized engineering talent. This dynamic solidifies the AI industry into two tiers: the hyperscalers (who own the infrastructure) and the model developers (who buy or access that infrastructure). This barrier to entry means that the next wave of trillion-dollar AI companies will likely emerge from those who can secure unprecedented levels of capital—often through strategic partnerships with cloud giants.

3. The Regulatory Spotlight Will Intensify

When a company reaches this revenue bracket, regulatory scrutiny is guaranteed. Whether it is the U.S. government examining its defense posture or the EU scrutinizing data handling under the AI Act, the spotlight on Anthropic's governance, transparency, and safety protocols will only grow brighter. The market success of Anthropic may force regulators globally to create clearer rules of engagement for commercial AI deployment, using companies achieving this scale as case studies.

Actionable Insights for Navigating the New AI Reality

For technology leaders, investors, and policymakers, Anthropic’s ascent offers clear signals for forward planning:

- For Investors: Look beyond raw valuation multiples. Analyze the underlying enterprise consumption patterns (API calls, seat licenses). High recurring revenue tied to core business functions is the true indicator of long-term AI investment health, as demonstrated by Anthropic’s performance.

- For Product Developers: Stop treating AI as a feature and start treating it as a foundational layer. Begin intensive testing of models from different camps (Anthropic, OpenAI, Google) to understand which performs best for your latency, context length, and safety requirements.

- For Policymakers: The revenue figures suggest that innovation cannot realistically be stopped, only guided. Policy must pivot from trying to slow development to creating robust standards for deployment, data residency, and liability that allow safe commercialization to thrive. The tension between national security requirements and "Constitutional AI" principles must be explicitly addressed through clear frameworks.
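For product developers, the cross-provider testing recommended above might begin with a harness like the one below. It is a sketch under stated assumptions: each provider is modeled as a plain Python function from prompt to text, and `stub_fast` and `stub_flaky` are hypothetical stand-ins rather than real SDK calls. It measures only latency and failures; context length and safety behavior would need separate checks.

```python
import statistics
import time
from typing import Callable, Dict, List

# Assumption: a provider is any function that maps a prompt to completion text.
Provider = Callable[[str], str]


def benchmark(providers: Dict[str, Provider], prompts: List[str]) -> Dict[str, dict]:
    """Run every prompt through every provider, recording latency and failures."""
    results = {}
    for name, call in providers.items():
        latencies = []
        failures = 0
        for prompt in prompts:
            start = time.perf_counter()
            try:
                call(prompt)
            except Exception:
                # Count the failure and move on so one bad call does not end the run.
                failures += 1
                continue
            latencies.append(time.perf_counter() - start)
        results[name] = {
            "median_latency_s": statistics.median(latencies) if latencies else None,
            "completed": len(latencies),
            "failures": failures,
        }
    return results


# Hypothetical stubs; swap in thin wrappers around real provider clients.
def stub_fast(prompt: str) -> str:
    return prompt.upper()


def stub_flaky(prompt: str) -> str:
    if "fail" in prompt:
        raise RuntimeError("simulated provider error")
    return prompt[::-1]


report = benchmark(
    {"provider_a": stub_fast, "provider_b": stub_flaky},
    ["summarize this", "please fail"],
)
for name, stats in report.items():
    print(name, stats)
```

Keeping providers behind a single function signature also supports the dual-sourcing strategy discussed earlier, since switching vendors then means replacing one wrapper rather than rewriting application code.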

Anthropic’s projected financial might underscores a simple truth: the pursuit of safer, more capable AI is the dominant technological narrative of our time. The $20 billion mark is not an endpoint; it is merely the entrance fee for the next, far more complex and impactful phase of the AI revolution.